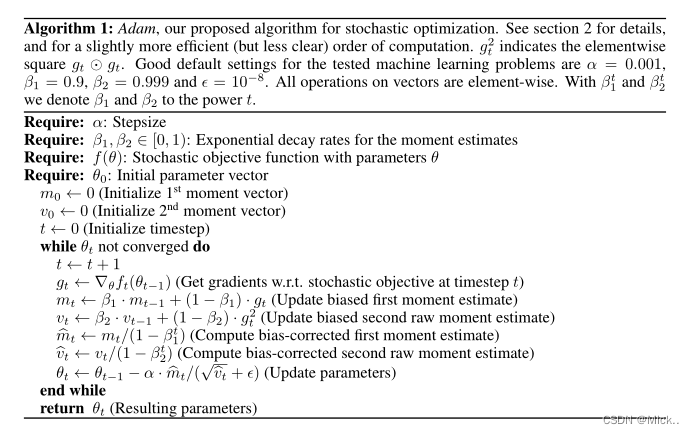

Adam: A method for stochastic optimization

Adam是通过梯度的一阶矩和二阶矩自适应的控制每个参数的学习率的大小。

adam的初始化

def __init__(self, params, lr=1e-3, betas=(0.9, 0.999), eps=1e-8,

weight_decay=0, amsgrad=False):

Args:

params (iterable): iterable of parameters to optimize or dicts defining

parameter groups

lr (float, optional): learning rate (default: 1e-3)

betas (Tuple[float, float], optional): coefficients used for computing

running averages of gradient and its square (default: (0.9, 0.999))

eps (float, optional): term added to the denominator to improve

numerical stability (default: 1e-8)

weight_decay (float, optional): weight decay (

本文转载自: https://blog.csdn.net/qq_40107571/article/details/126018026

版权归原作者 南妮儿 所有, 如有侵权,请联系我们删除。

版权归原作者 南妮儿 所有, 如有侵权,请联系我们删除。