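Bayesian Optimization (BO) is a powerful tool for hyperparameter tuning, but in practice it often suffers from slow convergence and high computational overhead. Running a standard library's BO out of the box can even do worse than a few extra rounds of random search.

To get real value out of BO, you have to work on the search strategy, on injecting prior knowledge, and on controlling compute cost. This article collects ten field-tested techniques that help the optimizer search "smarter", converge faster, and noticeably improve model iteration speed.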

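1. Bring in priors like a Bayesian expert

Never cold-start. An optimizer that begins with no clues at all burns a lot of compute just probing the boundaries of the search space. Since we usually have some domain knowledge about sensible hyperparameter ranges, or data from similar past experiments, we should put it to use.

Weak priors leave the optimizer wandering aimlessly through the search space, while strong priors collapse it quickly. In an expensive ML training loop, the quality of the priors directly determines how much GPU time you save.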

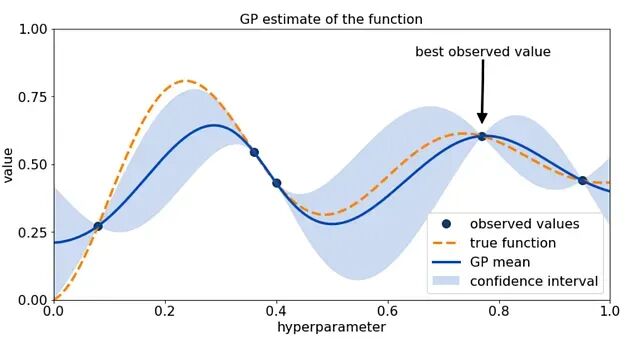

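So start with a tiny grid or random search (say 5 to 10 trials) and use the results, especially the best-performing points, as prior observations to initialize the Gaussian Process (GP).

Initializing the Gaussian Process with informed priors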

    import numpy as np
    from sklearn.gaussian_process import GaussianProcessRegressor
    from sklearn.gaussian_process.kernels import Matern
    from skopt import Optimizer

    # Step 1: Quick cheap search to build priors
    def objective(params):
        lr, depth = params
        return train_model(lr, depth)  # your training loop returning validation loss

    search_space = [
        (1e-4, 1e-1),  # learning rate
        (2, 10),       # depth
    ]

    # quick 8-run grid/random search
    initial_points = [
        (1e-4, 4), (1e-3, 4), (1e-2, 4),
        (1e-4, 8), (1e-3, 8), (1e-2, 8),
        (5e-3, 6), (8e-3, 10),
    ]
    initial_results = [objective(p) for p in initial_points]

    # Step 2: Build priors for Bayesian Optimization
    kernel = Matern(nu=2.5)
    gp = GaussianProcessRegressor(kernel=kernel, normalize_y=True)

    # Step 3: Initialize optimizer with priors
    opt = Optimizer(
        dimensions=search_space,
        base_estimator=gp,
        initial_point_generator="sobol",
    )

    # Feed prior observations
    for p, r in zip(initial_points, initial_results):
        opt.tell(list(p), r)

    # Step 4: Bayesian Optimization with informed priors
    for _ in range(30):
        next_params = opt.ask()
        score = objective(next_params)
        opt.tell(next_params, score)

    best_params = opt.get_result().x
    print("Best Params:", best_params)

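One Kaggle Grandmaster reportedly cut tuning rounds by 40% by reusing prior configurations from similar problems. Trading a handful of cheap evaluations for a faster Bayesian search is a good deal.

2. Adjust the acquisition function dynamically

Expected Improvement (EI) is the most commonly used acquisition function because it strikes a decent balance between exploration and exploitation. Late in the search, however, EI tends to become overly conservative and convergence stalls.

The search strategy should not be fixed. When the search gets stuck on a plateau, try switching acquisition functions on the fly: move to UCB (Upper Confidence Bound; for minimization, scikit-optimize exposes it as LCB, Lower Confidence Bound) when you want to close in on the optimum aggressively, and to PI (Probability of Improvement) early in the search or when the objective is noisy and you need to escape a local optimum.

Switching dynamically can break late-stage plateaus and cut down on the "garbage time" that does nothing for the model. Here is how to switch strategies based on convergence using `scikit-optimize`: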

    import numpy as np
    from skopt import Optimizer

    # Dummy expensive objective
    def objective(params):
        lr, depth = params
        return train_model(lr, depth)  # Replace with your actual training loop

    space = [(1e-4, 1e-1), (2, 10)]

    def make_optimizer(acq_func):
        return Optimizer(dimensions=space, base_estimator="GP", acq_func=acq_func)

    current_acq = "EI"  # initial acquisition function
    opt = make_optimizer(current_acq)

    def should_switch(iteration, recent_scores):
        # Simple heuristic: if the last 5 scores are nearly identical, switch mode
        return iteration > 10 and np.std(recent_scores[-5:]) < 1e-4

    xs, scores = [], []
    for i in range(40):
        # Dynamically pick acquisition function
        if should_switch(i, scores):
            # skopt minimizes, so its confidence-bound option is "LCB" (it has no "UCB").
            # LCB when the latest score beats the median, PI otherwise.
            new_acq = "LCB" if scores[-1] < np.median(scores) else "PI"
            if new_acq != current_acq:
                # Assigning opt.acq_func on a live Optimizer is not a documented way
                # to switch, so rebuild the optimizer and replay the history instead.
                opt = make_optimizer(new_acq)
                opt.tell(xs, scores)
                current_acq = new_acq

        x = opt.ask()
        y = objective(x)
        xs.append(x)
        scores.append(y)
        opt.tell(x, y)

    best_params = opt.get_result().x
    print("Best Params:", best_params)
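To make the difference between the three acquisition functions in this tip concrete, here is a minimal standard-library sketch of their closed forms for a minimization problem under a Gaussian posterior. The two candidates (`safe`, `risky`), the incumbent `y_best`, and the `kappa` default are made-up illustrative values, not numbers from the article.

```python
import math

def norm_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def norm_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, y_best, xi=0.0):
    """EI for minimization: expected amount by which a point beats y_best."""
    if sigma <= 0.0:
        return max(y_best - mu - xi, 0.0)
    z = (y_best - mu - xi) / sigma
    return (y_best - mu - xi) * norm_cdf(z) + sigma * norm_pdf(z)

def probability_of_improvement(mu, sigma, y_best, xi=0.0):
    """PI for minimization: probability that a point beats y_best."""
    if sigma <= 0.0:
        return 1.0 if mu < y_best - xi else 0.0
    return norm_cdf((y_best - mu - xi) / sigma)

def lower_confidence_bound(mu, sigma, kappa=1.96):
    """LCB for minimization: optimistic estimate, lower is more attractive."""
    return mu - kappa * sigma

# Hypothetical posterior (mean, std) at two candidate points
y_best = 0.30
safe = (0.29, 0.01)   # slightly better mean, very low uncertainty
risky = (0.35, 0.10)  # worse mean, high uncertainty

for name, (mu, sigma) in [("safe", safe), ("risky", risky)]:
    print(
        name,
        f"EI={expected_improvement(mu, sigma, y_best):.4f}",
        f"PI={probability_of_improvement(mu, sigma, y_best):.4f}",
        f"LCB={lower_confidence_bound(mu, sigma):.4f}",
    )
```

With these numbers, PI ranks the low-uncertainty candidate first, while EI and LCB both rank the high-uncertainty candidate first, which is worth keeping in mind when deciding which function to switch to.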

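3. Make good use of log transforms

Many hyperparameters (learning rate, regularization strength, batch size) span several orders of magnitude and effectively live on an exponential scale. That is a poor fit for a Gaussian Process, which, with a typical stationary kernel, assumes the function is smooth and varies at a similar rate everywhere in the space.

Searching in the raw space makes the optimizer waste much of its time fitting steep "cliffs". Applying a log transform to these parameters stretches the exponential scale into a linear one, so the optimizer runs on a "flat" playing field. This stabilizes the GP kernel and greatly reduces curvature; in practice it can often cut convergence time roughly in half.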

    import numpy as np
    from skopt import Optimizer
    from skopt.space import Real

    # Expensive training function
    def objective(params):
        log_lr, log_reg = params
        lr = 10 ** log_lr    # inverse log transform
        reg = 10 ** log_reg
        return train_model(lr, reg)  # replace with your actual training loop

    # Step 1: Define search space in log10 scale
    space = [
        Real(-5, -1, name="log_lr"),   # lr in [1e-5, 1e-1]
        Real(-6, -2, name="log_reg"),  # reg in [1e-6, 1e-2]
    ]

    # Step 2: Create optimizer with log-transformed space
    opt = Optimizer(
        dimensions=space,
        base_estimator="GP",
        acq_func="EI",
    )

    # Step 3: Run Bayesian Optimization entirely in log-space
    n_iters = 40
    scores = []
    for _ in range(n_iters):
        x = opt.ask()      # propose in log-space
        y = objective(x)   # evaluate in real-space
        opt.tell(x, y)
        scores.append(y)

    best_log_params = opt.get_result().x
    best_params = {
        "lr": 10 ** best_log_params[0],
        "reg": 10 ** best_log_params[1],
    }
    print("Best Params:", best_params)
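A quick way to see why the raw scale is a problem is to compare where samples land. The sketch below uses only the standard library and the same learning-rate range as above, `[1e-5, 1e-1]`; the helper names and the sample count are illustrative choices.

```python
import math
import random

def sample_linear(rng, low, high):
    # Uniform in the raw parameter space
    return rng.uniform(low, high)

def sample_log_uniform(rng, low, high):
    # Uniform in log10 space, then mapped back to the raw scale
    return 10 ** rng.uniform(math.log10(low), math.log10(high))

def fraction_in_top_decade(samples, high):
    # Share of samples that fall in [high / 10, high]
    return sum(1 for s in samples if s >= high / 10) / len(samples)

rng = random.Random(0)
low, high = 1e-5, 1e-1
linear = [sample_linear(rng, low, high) for _ in range(10_000)]
logged = [sample_log_uniform(rng, low, high) for _ in range(10_000)]

print(f"linear sampling, share in [1e-2, 1e-1]: {fraction_in_top_decade(linear, high):.1%}")
print(f"log sampling,    share in [1e-2, 1e-1]: {fraction_in_top_decade(logged, high):.1%}")
```

Uniform sampling on the raw scale puts roughly nine out of ten points in the top decade and almost never visits the three decades below `1e-2`, whereas sampling in log space gives each of the four decades about a quarter of the points.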

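4. Don't let BO fall into the nesting-doll trap (hyper-hypers)

Bayesian Optimization has hyperparameters of its own: kernel length scales, the noise term, prior variance, and so on. If you try to optimize those too, you end up in an endless recursion of tuning for the sake of tuning.

BO's internal hyperparameter optimization is sensitive and can easily lead to an overfit surrogate model or a wrong noise estimate. For industrial use, a more robust approach is to early-stop the GP's internal optimizer, or to initialize these hyper-hyperparameters directly from empirical values obtained through meta-learning. This makes the surrogate more stable and cheaper to update, and AutoML systems typically take this route rather than learning everything from scratch.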

    import numpy as np
    from skopt import Optimizer
    from sklearn.gaussian_process import GaussianProcessRegressor
    from sklearn.gaussian_process.kernels import Matern, WhiteKernel

    # Meta-learned priors from previous similar tasks
    meta_length_scale = 0.3
    meta_noise_level = 1e-3

    kernel = (
        Matern(length_scale=meta_length_scale, nu=2.5)
        + WhiteKernel(noise_level=meta_noise_level)
    )

    # Early-stop BO's own hyperparameter tuning
    gp = GaussianProcessRegressor(
        kernel=kernel,
        optimizer="fmin_l_bfgs_b",
        n_restarts_optimizer=0,  # Crucial: no random restarts, so no expensive hyper-hyper loops
        normalize_y=True,
    )

    # BO with a stable, meta-initialized GP
    opt = Optimizer(
        dimensions=[(1e-4, 1e-1), (2, 12)],
        base_estimator=gp,
        acq_func="EI",
    )

    def objective(params):
        lr, depth = params
        return train_model(lr, depth)  # your model's validation loss

    scores = []
    for _ in range(40):
        x = opt.ask()
        y = objective(x)
        opt.tell(x, y)
        scores.append(y)

    best_params = opt.get_result().x
    print("Best Params:", best_params)

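5. Penalize high-cost regions

Standard BO cares only about accuracy, not your electricity bill. Some parameter combinations (very large batch sizes, extremely deep networks, huge embedding dimensions) may bring only marginal gains while compute cost grows exponentially.

Left unchecked, BO can easily fixate on configurations that score slightly higher at a disproportionate cost. So modify the acquisition function to include a cost penalty: instead of looking at absolute performance, look at the performance gain per unit cost. Stanford's ML lab has reportedly noted that ignoring cost-awareness can lead to budget overruns of 37% or more.

Cost-aware acquisition function (Cost-Aware EI)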

    import numpy as np
    from skopt import Optimizer
    from skopt.acquisition import gaussian_ei

    # Objective returns BOTH validation loss and estimated training cost
    def objective(params):
        lr, depth = params
        val_loss = train_model(lr, depth)
        cost = estimate_cost(lr, depth)  # e.g., GPU hours or FLOPs proxy
        return val_loss, cost

    # Custom cost-aware EI: maximize EI / Cost
    def cost_aware_ei(model, X, y_opt, costs):
        raw_ei = gaussian_ei(X, model, y_opt=y_opt)
        normalized_costs = costs / np.max(costs)
        penalty = 1.0 / (1e-6 + normalized_costs)
        return raw_ei * penalty

    # Search space
    opt = Optimizer(
        dimensions=[(1e-4, 1e-1), (2, 20)],
        base_estimator="GP",
    )

    observed_losses = []
    observed_costs = []

    for _ in range(40):
        # Ask a batch of candidate points
        candidates = opt.ask(n_points=20)

        if opt.models:
            # A fitted surrogate exists: rank the candidates by cost-aware EI.
            # The surrogate is trained in skopt's transformed space, so transform first.
            X = np.array(opt.space.transform(candidates))
            # Cost of each candidate itself (not of past trials), from the cheap proxy
            cand_costs = np.array([estimate_cost(lr, depth) for lr, depth in candidates])
            cost_scores = cost_aware_ei(
                opt.models[-1],
                X,
                y_opt=np.min(observed_losses),
                costs=cand_costs,
            )
            # Pick best candidate under cost-awareness
            next_x = candidates[int(np.argmax(cost_scores))]
        else:
            # Still in the initial sampling phase: no model to score with yet
            next_x = candidates[0]

        loss, cost = objective(next_x)
        observed_losses.append(loss)
        observed_costs.append(cost)
        opt.tell(next_x, loss)

    best_params = opt.get_result().x
    print("Best Params (Cost-Aware):", best_params)
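The effect of dividing EI by cost is easiest to see on a toy example. The sketch below is standard-library only; the three candidates, their EI values, and their GPU-hour costs are invented purely for illustration.

```python
def cost_aware_score(ei, cost, max_cost):
    """EI divided by cost normalized into (0, 1]; higher is better."""
    return ei / (1e-6 + cost / max_cost)

# Hypothetical candidates: name -> (expected improvement, estimated GPU hours)
candidates = {
    "shallow": (0.010, 1.0),
    "medium": (0.015, 2.0),
    "deep": (0.020, 8.0),
}

max_cost = max(cost for _, cost in candidates.values())

best_by_ei = max(candidates, key=lambda name: candidates[name][0])
best_by_cost_aware = max(
    candidates,
    key=lambda name: cost_aware_score(*candidates[name], max_cost),
)

print("Plain EI picks:     ", best_by_ei)
print("Cost-aware EI picks:", best_by_cost_aware)
```

Plain EI chooses the most expensive configuration because it has the largest expected gain, while the cost-aware score prefers the cheap one, whose gain per GPU hour is several times higher.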

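6. Hybrid strategy: BO + random search

On noisy tasks (such as RL or deep learning training), BO is not bulletproof. The GP surrogate can be fooled by noise, become overconfident about the wrong region, and get stuck in a local optimum.

A bit of chaos helps here. Mixing roughly 10% random search into the BO loop breaks the surrogate's fixation and improves global coverage. It is a hybrid strategy that uses the diversity of randomness to compensate for BO's deterministic tendencies, and many large-scale AutoML systems use it by default.

Random-BO hybrid mode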

    import numpy as np
    from skopt import Optimizer
    from skopt.space import Real, Integer

    # Define search space
    space = [
        Real(1e-4, 1e-1, name="lr"),
        Integer(2, 12, name="depth"),
    ]

    # Expensive training loop
    def objective(params):
        lr, depth = params
        return train_model(lr, depth)  # your model's validation loss

    # BO Optimizer
    opt = Optimizer(
        dimensions=space,
        base_estimator="GP",
        acq_func="EI",
    )

    n_total = 50
    random_fraction = 0.10  # roughly 10% of trials are purely random
    rng = np.random.default_rng(0)
    results = []

    for i in range(n_total):
        if rng.random() < random_fraction:
            # ----- Random search step: ignore the surrogate -----
            seed = int(rng.integers(2**31 - 1))
            x = opt.space.rvs(n_samples=1, random_state=seed)[0]
        else:
            # ----- Bayesian Optimization step -----
            x = opt.ask()

        y = objective(x)
        results.append((x, y))

        # Tell BO about every evaluation, random or not (keeps history consistent)
        opt.tell(x, y)

    best_params = opt.get_result().x
    print("Best Params (Hybrid):", best_params)

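7. Parallelization: faking parallel computation

BO is inherently sequential, because each step depends on the posterior updated in the previous step. That is a handicap in multi-GPU environments. We can, however, "fake" parallelism.

Launch several independent BO instances with different random seeds or priors. Let them run independently, then pool their results into one master GP model and retrain it. This uses the parallel hardware and, through more diverse exploration, makes the final surrogate more robust. The approach is very common in NAS (Neural Architecture Search).

Multi-track parallel BO + result merging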

    from multiprocessing import Pool
    from skopt import Optimizer

    # Search space
    space = [(1e-4, 1e-1), (2, 10)]

    # Expensive objective
    def objective(params):
        lr, depth = params
        return train_model(lr, depth)

    # Create BO instances that differ only in their random seed
    def make_optimizer(seed):
        return Optimizer(
            dimensions=space,
            base_estimator="GP",
            acq_func="EI",
            random_state=seed,
        )

    # Run pseudo-parallel BO for N steps
    def run_parallel_steps(optimizers, steps=10):
        results = []
        with Pool(len(optimizers)) as pool:
            for _ in range(steps):
                # ask/tell stay in the parent process: a worker only receives a
                # pickled copy of an optimizer, so a tell() made there would be lost
                xs = [opt.ask() for opt in optimizers]
                # Only the expensive evaluations run in parallel
                ys = pool.map(objective, xs)
                for opt, x, y in zip(optimizers, xs, ys):
                    opt.tell(x, y)
                    results.append((x, y))
        return results

    if __name__ == "__main__":
        optimizers = [make_optimizer(seed) for seed in [0, 1, 2, 3]]  # 4 BO tracks

        # Step 1: parallel exploration
        parallel_results = run_parallel_steps(optimizers, steps=15)

        # Step 2: merge results into a master BO (one batch tell)
        master = make_optimizer(seed=99)
        master.tell(
            [x for x, _ in parallel_results],
            [y for _, y in parallel_results],
        )

        # Step 3: refine with unified BO
        for _ in range(30):
            x = master.ask()
            y = objective(x)
            master.tell(x, y)

        print("Best Params:", master.get_result().x)

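8. Handling non-numeric inputs

Gaussian Processes like continuous, smooth spaces, but real hyperparameters often include non-numeric variables (optimizer type such as Adam vs. SGD, activation function type, and so on). These discrete "jumps" violate the GP kernel's assumptions.

Feeding them to the GP as raw category IDs is wrong, because integer IDs impose an ordering and distances that do not exist. The right approach is one-hot encoding or an embedding: map the categorical variable into a continuous numeric space so BO can reason about the "distance" between categories, which restores the smoothness of the search space. In one BERT fine-tuning case, correctly encoding `adam_vs_sgd` alone reportedly brought a 15% performance gain.

Handling categorical hyperparameters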

    import numpy as np
    from skopt import Optimizer
    from sklearn.preprocessing import OneHotEncoder

    # --- Step 1: Prepare categorical encoder ---
    optimizers = np.array([["adam"], ["sgd"], ["adamw"]])
    # sparse_output requires scikit-learn >= 1.2
    enc = OneHotEncoder(sparse_output=False).fit(optimizers)
    # The encoder sorts categories, so use its own column order everywhere
    categories = [str(c) for c in enc.categories_[0]]

    def encode_category(cat_name):
        return enc.transform([[cat_name]])[0]  # returns a 3-dim one-hot vector

    # --- Step 2: Combined numeric + categorical search space ---
    # Continuous params: lr, dropout
    # Encoded categorical: optimizer
    space_dims = [
        (1e-5, 1e-2),  # learning rate
        (0.0, 0.5),    # dropout
        (0.0, 1.0),    # optimizer_onehot_dim1
        (0.0, 1.0),    # optimizer_onehot_dim2
        (0.0, 1.0),    # optimizer_onehot_dim3
    ]

    opt = Optimizer(
        dimensions=space_dims,
        base_estimator="GP",
        acq_func="EI",
    )

    # --- Step 3: Objective that decodes embedding back to category ---
    def decode_optimizer(vec):
        # Index into the encoder's column order so encode and decode agree
        return categories[int(np.argmax(vec))]

    def objective(params):
        lr, dropout, *opt_vec = params
        opt_name = decode_optimizer(opt_vec)
        return train_model(lr, dropout, optimizer=opt_name)

    # --- Step 4: Hybrid categorical-continuous BO loop ---
    for _ in range(40):
        x = opt.ask()

        # Snap encoded optimizer vector to nearest valid one-hot
        opt_vec = np.array(x[2:])
        snapped_vec = np.zeros_like(opt_vec)
        snapped_vec[np.argmax(opt_vec)] = 1.0

        clean_x = [x[0], x[1], *snapped_vec.tolist()]
        y = objective(clean_x)
        opt.tell(clean_x, y)

    best_params = opt.get_result().x
    print("Best Params:", best_params)
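Why raw category IDs mislead a distance-based surrogate can be checked with a few lines of arithmetic. The sketch below is standard-library only (`math.dist` needs Python 3.8 or later); the ID assignment is an arbitrary illustrative choice.

```python
import math
from itertools import combinations

categories = ["adam", "sgd", "adamw"]

def as_id(name):
    # Integer-ID encoding: a single coordinate, 0, 1, 2
    return [float(categories.index(name))]

def as_one_hot(name):
    # One-hot encoding: one coordinate per category
    return [1.0 if c == name else 0.0 for c in categories]

def pairwise_distances(encode):
    return {
        (a, b): math.dist(encode(a), encode(b))
        for a, b in combinations(categories, 2)
    }

id_dist = pairwise_distances(as_id)
one_hot_dist = pairwise_distances(as_one_hot)

for pair in id_dist:
    print(pair, f"id={id_dist[pair]:.3f}", f"one-hot={one_hot_dist[pair]:.3f}")
```

With integer IDs, "adam" ends up twice as far from "adamw" as from "sgd" purely because of the numbering, while one-hot vectors keep every pair of categories at the same distance.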

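9. Constrain unexplorable regions

Many hyperparameter combinations exist in theory but cannot run in practice: a `batch_size` larger than the dataset, or logical contradictions such as `num_layers < num_heads`. Without constraints, BO wastes a lot of time trying combinations that are bound to fail or be meaningless.

By defining constraints explicitly, or by returning a very large loss for invalid regions in the objective, you can force BO to steer clear of these "minefields". This significantly reduces the number of failed trials and can typically save 25-40% of search time.

Constraint-aware Bayesian Optimization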

    import numpy as np
    from skopt import gp_minimize
    from skopt.space import Integer, Real

    # Hyperparameter search space
    space = [
        Integer(8, 512, name="batch_size"),
        Integer(1, 12, name="num_layers"),
        Integer(1, 12, name="num_heads"),
        Real(1e-5, 1e-2, name="learning_rate", prior="log-uniform"),
    ]

    DATASET_SIZE = 12800  # number of training examples (illustrative value)

    # Define constraints
    def valid_config(params):
        batch_size, num_layers, num_heads, _ = params
        return (batch_size <= DATASET_SIZE) and (num_layers >= num_heads)

    # Wrapped objective that enforces constraints
    def objective(params):
        if not valid_config(params):
            # Penalize invalid regions so BO learns to avoid them
            return 10.0  # large synthetic loss

        # Fake expensive training loop
        batch_size, num_layers, num_heads, lr = params
        loss = (
            (num_layers - num_heads) * 0.1
            + np.log(batch_size) * 0.05
            + np.random.normal(0, 0.01)
            + lr * 5
        )
        return loss

    # Run constraint-aware BO
    result = gp_minimize(
        func=objective,
        dimensions=space,
        n_calls=40,
        n_initial_points=8,
        noise=1e-5,
    )

    print("Best hyperparameters:", result.x)
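To get a feel for how much of the space a rule like `num_layers >= num_heads` rules out, the grid from the example (both parameters ranging over 1 to 12) can simply be enumerated. This is a small standard-library sketch; `valid_config` here is a two-argument simplification of the function above.

```python
from itertools import product

def valid_config(num_layers, num_heads):
    # Same logical rule as in the example: heads must not exceed layers
    return num_layers >= num_heads

layers = range(1, 13)  # 1..12, as in the search space above
heads = range(1, 13)

pairs = list(product(layers, heads))
invalid = [p for p in pairs if not valid_config(*p)]
invalid_fraction = len(invalid) / len(pairs)

print(f"{len(invalid)} of {len(pairs)} (num_layers, num_heads) pairs are invalid "
      f"({invalid_fraction:.1%})")
```

Nearly half of the grid violates the rule, so an unconstrained optimizer sampling uniformly would spend a comparable share of its early trials on configurations that can never succeed.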

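10. Ensemble surrogate models

A single Gaussian Process is not always reliable. In high-dimensional spaces or with sparse data, a GP can "hallucinate" and report misleading confidence estimates.

A more robust approach is to ensemble several surrogates. You can maintain a GP, a Random Forest, and gradient-boosted trees (GBDT) side by side, or even a simple MLP, and decide the next search direction by voting or weighted averaging. This brings in the benefits of ensemble learning and reduces prediction variance. Mature frameworks such as Optuna make this kind of idea easy to try through a custom sampler.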

    import numpy as np
    import optuna
    from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
    from sklearn.gaussian_process import GaussianProcessRegressor

    # Build surrogate ensemble
    def build_surrogates():
        return [
            GaussianProcessRegressor(normalize_y=True),
            RandomForestRegressor(n_estimators=200),
            GradientBoostingRegressor(),
        ]

    # Train all surrogates on past trials
    def train_surrogates(surrogates, X, y):
        for s in surrogates:
            s.fit(X, y)

    # Aggregate predictions with a plain (unweighted) mean across surrogates
    def ensemble_predict(surrogates, X):
        # Only the GP supports return_std, so use the common predict(X) call
        preds = [s.predict(X) for s in surrogates]
        return np.mean(preds, axis=0)

    def objective(trial):
        # Hyperparameters (suggest_loguniform is deprecated in favor of log=True)
        lr = trial.suggest_float("lr", 1e-5, 1e-2, log=True)
        depth = trial.suggest_int("depth", 2, 8)
        # Fake expensive evaluation
        loss = (depth * 0.1) + (np.log1p(1 / lr) * 0.05) + np.random.normal(0, 0.02)
        return loss

    # Custom sampling strategy that ensembles surrogate predictions
    class EnsembleSampler(optuna.samplers.BaseSampler):
        def __init__(self, seed=None):
            self.surrogates = build_surrogates()
            self.random_sampler = optuna.samplers.RandomSampler(seed=seed)
            self.rng = np.random.default_rng(seed)

        def infer_relative_search_space(self, study, trial):
            return {}  # empty: every parameter goes through sample_independent

        def sample_relative(self, study, trial, search_space):
            return {}

        def sample_independent(self, study, trial, param_name, distribution):
            done = [
                t for t in study.get_trials(deepcopy=False)
                if t.state == optuna.trial.TrialState.COMPLETE
            ]

            # Warm-up phase: random sampling
            if len(done) < 15:
                return self.random_sampler.sample_independent(
                    study, trial, param_name, distribution
                )

            # Collect training data (lr on a log10 scale)
            X = np.array([[np.log10(t.params["lr"]), t.params["depth"]] for t in done])
            y = np.array([t.value for t in done])
            train_surrogates(self.surrogates, X, y)

            # Generate candidates for this parameter, holding the other one fixed
            if param_name == "lr":
                candidates = self.rng.uniform(
                    np.log10(distribution.low), np.log10(distribution.high), size=64
                )
                other = np.full(64, trial.params.get("depth", 5))
                Xcand = np.column_stack([candidates, other])
            else:
                candidates = self.rng.integers(
                    distribution.low, distribution.high + 1, size=64
                )
                other = np.full(64, np.log10(trial.params.get("lr", 1e-3)))
                Xcand = np.column_stack([other, candidates])

            # Predict surrogate losses and pick the best predicted candidate
            preds = ensemble_predict(self.surrogates, Xcand)
            best = candidates[int(np.argmin(preds))]
            if param_name == "lr":
                return float(np.clip(10 ** best, distribution.low, distribution.high))
            return int(best)

    # Run ensemble-driven BO
    study = optuna.create_study(sampler=EnsembleSampler(), direction="minimize")
    study.optimize(objective, n_trials=40)

    print("Best:", study.best_params)
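The listing above combines the surrogates with a plain mean. The "weighted averaging" variant mentioned in this tip could look like the following standard-library sketch, which weights each surrogate by the inverse of its validation error. The error values and per-model predictions are hypothetical, and inverse-error weighting is only one of several reasonable schemes.

```python
def inverse_error_weights(errors, eps=1e-12):
    """Turn per-model validation errors into weights that sum to 1."""
    inverse = [1.0 / (e + eps) for e in errors]
    total = sum(inverse)
    return [w / total for w in inverse]

def weighted_prediction(predictions, weights):
    """Weighted average of the surrogates' predictions for one candidate."""
    return sum(p * w for p, w in zip(predictions, weights))

# Hypothetical held-out errors for three surrogates (GP, RF, GBDT)
errors = [0.02, 0.04, 0.04]
weights = inverse_error_weights(errors)

# Hypothetical predicted losses from the same three surrogates for one candidate
predictions = [0.30, 0.40, 0.50]
combined = weighted_prediction(predictions, weights)
plain_mean = sum(predictions) / len(predictions)

print("weights:", [round(w, 3) for w in weights])
print(f"weighted prediction: {combined:.3f}, plain mean: {plain_mean:.3f}")
```

The surrogate with half the error of the others gets half of the total weight, so the combined estimate is pulled toward its prediction instead of sitting at the unweighted mean.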

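Summary

Calling an off-the-shelf library directly is rarely enough for complex industrial problems. At their core, all ten techniques bridge the gap between theoretical assumptions (smoothness, unlimited compute, homoscedastic noise) and engineering reality (budget limits, discrete parameters, failed trials).

In practice, do not treat Bayesian Optimization as a black box you cannot touch. It should be a deeply customizable component. Only when you design the search space carefully, adjust the acquisition strategy, and add the necessary constraints for your specific problem does Bayesian Optimization become an accelerator for model performance rather than a bottomless pit for GPU resources.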