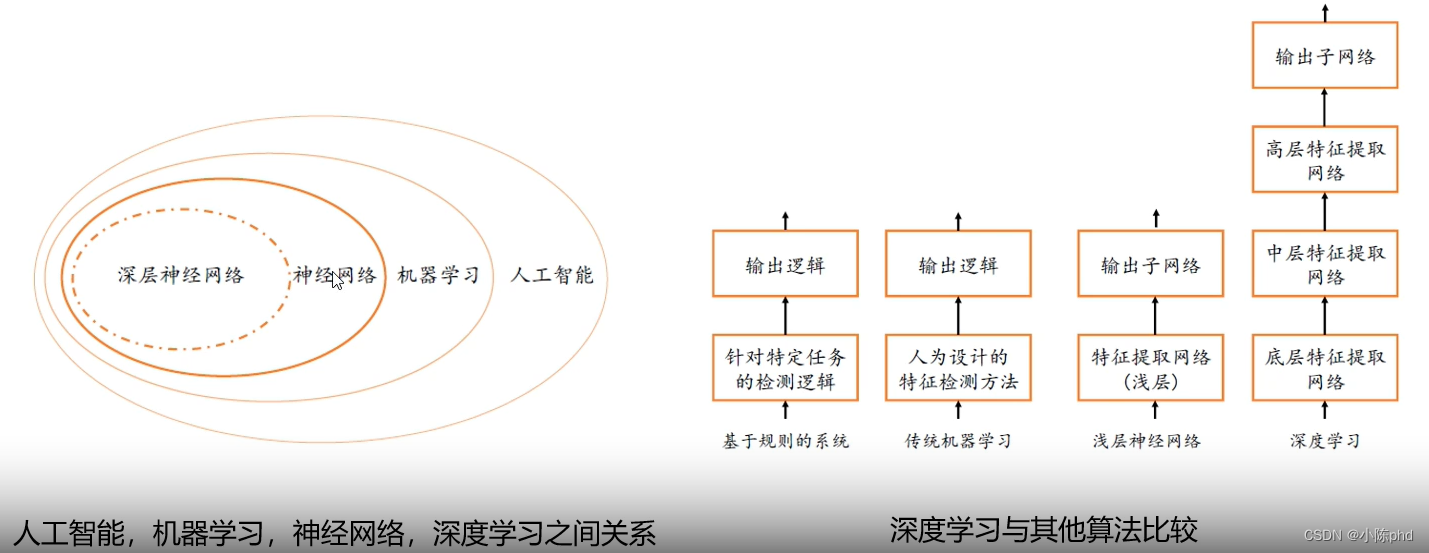

- Traditional machine learning: SVM, boosting, bagging, kNN

- Deep learning: CNNs (the most typical), GANs

Applications in seismic exploration

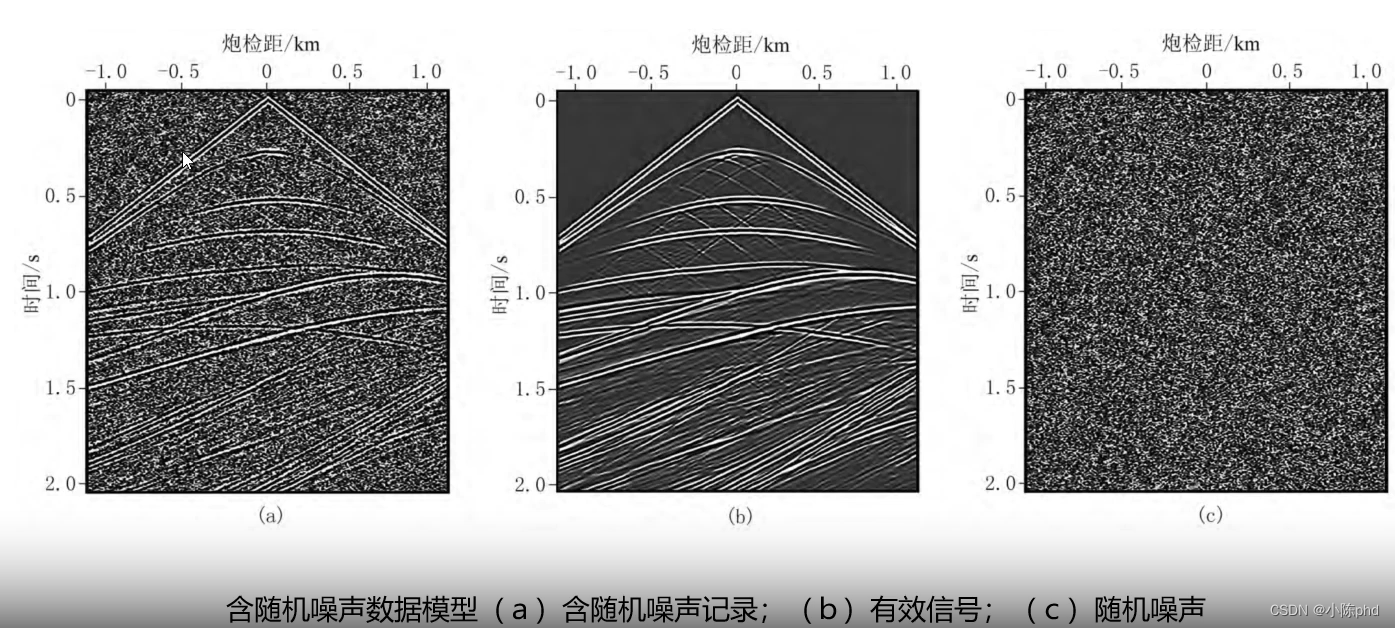

- Random-noise removal from prestack seismic data, separating the noise from the signal

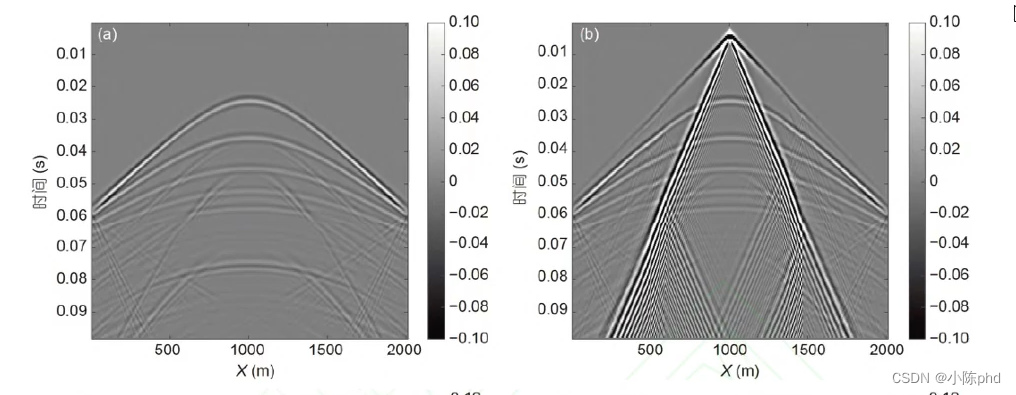

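- Surface-wave suppression: surface waves are strong interference in seismic exploration and greatly reduce the resolution and signal-to-noise ratio (SNR) of seismic records. As a data-driven method, deep learning can learn the difference between effective signal and noise from a large number of samples and adaptively build a deep neural network to suppress the noise.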

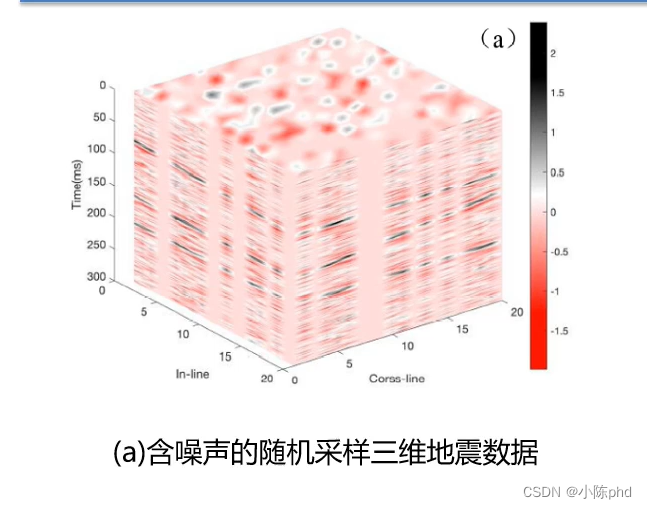

- Seismic data denoising and reconstruction

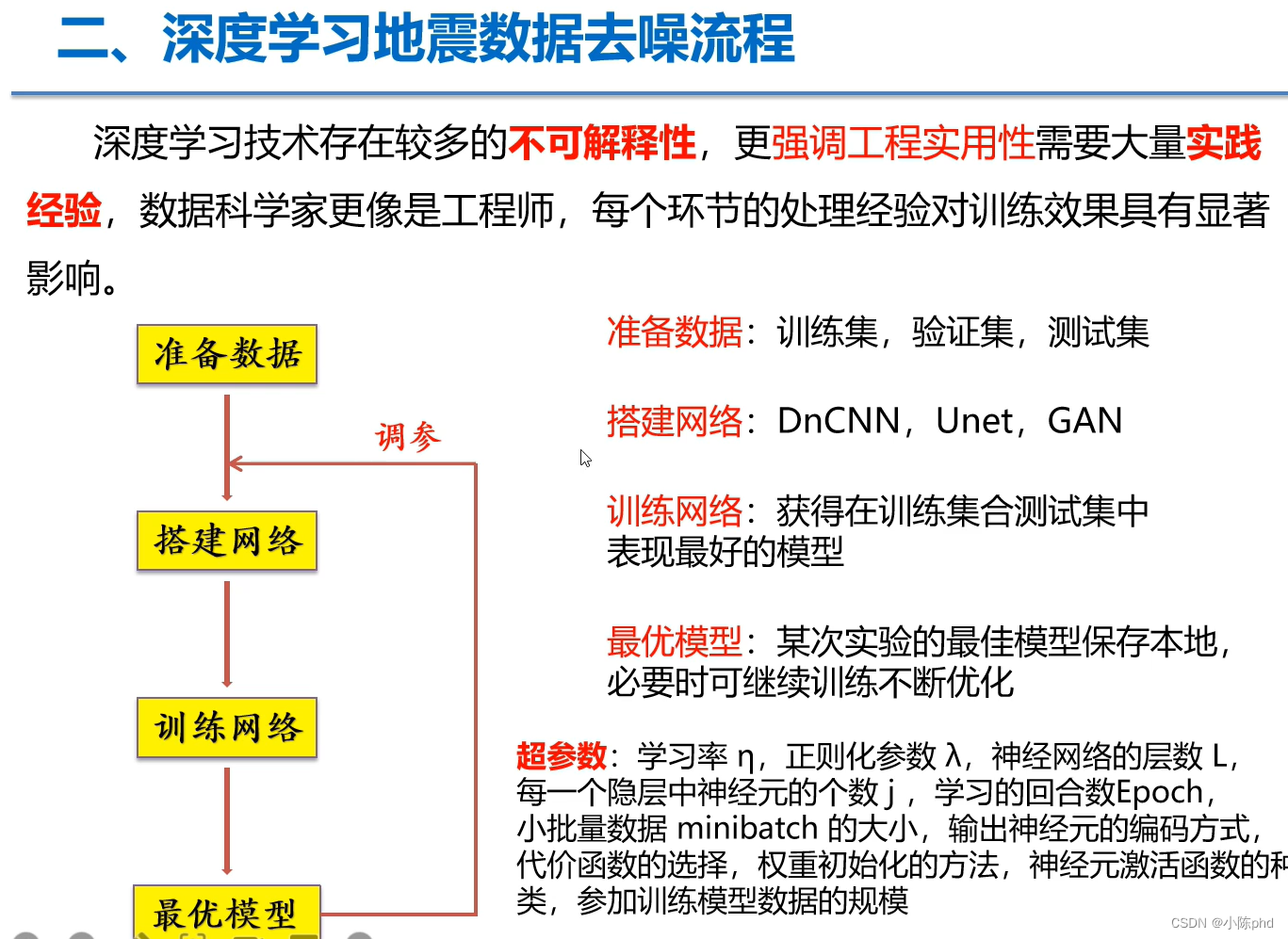

Denoising workflow

- The denoising workflow follows the conventional deep-learning workflow.

Data augmentation

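- For data augmentation, treat the seismic (surface-wave) data as 2D image data.

- Image-style augmentation methods can then be applied:

  - adding different types of noise

  - affine and perspective transforms, cropping

  - more advanced methods: mixup, DropBlock, mosaic, masking, etc.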
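A minimal NumPy sketch of the first two augmentation types above, assuming each training sample is a pair of 2D arrays (noisy gather, clean gather) shaped (time samples, traces). The function `augment_pair` and its parameters are illustrative, not from the original tutorial. The point it shows is that for paired denoising data every geometric transform (here a random crop and a flip of the trace axis) must be applied identically to input and label, while extra noise is added to the input only.

```python
import numpy as np


def augment_pair(noisy, clean, crop_shape, rng, noise_std=0.0, flip_prob=0.5):
    """Return an augmented (noisy, clean) pair of 2D gathers (time x traces).

    Geometric transforms are applied identically to both arrays; extra
    Gaussian noise (an illustrative noise type) goes into the input only,
    so the clean label stays unchanged.
    """
    assert noisy.shape == clean.shape
    n_t, n_x = noisy.shape
    h, w = crop_shape
    # random crop: the same window for input and label
    t0 = rng.integers(0, n_t - h + 1)
    x0 = rng.integers(0, n_x - w + 1)
    noisy = noisy[t0:t0 + h, x0:x0 + w]
    clean = clean[t0:t0 + h, x0:x0 + w]
    # random flip along the trace (spatial) axis only, never along time
    if rng.random() < flip_prob:
        noisy = noisy[:, ::-1]
        clean = clean[:, ::-1]
    # extra random noise is added to the input only
    if noise_std > 0:
        noisy = noisy + rng.normal(0.0, noise_std, size=noisy.shape)
    return np.ascontiguousarray(noisy), np.ascontiguousarray(clean)


rng = np.random.default_rng(0)
clean = rng.standard_normal((256, 128))
noisy = clean + 0.1 * rng.standard_normal(clean.shape)
x, y = augment_pair(noisy, clean, (128, 64), rng, noise_std=0.05)
print(x.shape, y.shape)  # (128, 64) (128, 64)
```

Mixup, DropBlock and the other advanced methods follow the same rule: whatever mixes or masks the input must be mirrored in the label, or the pair no longer describes the same wavefield.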

Model design

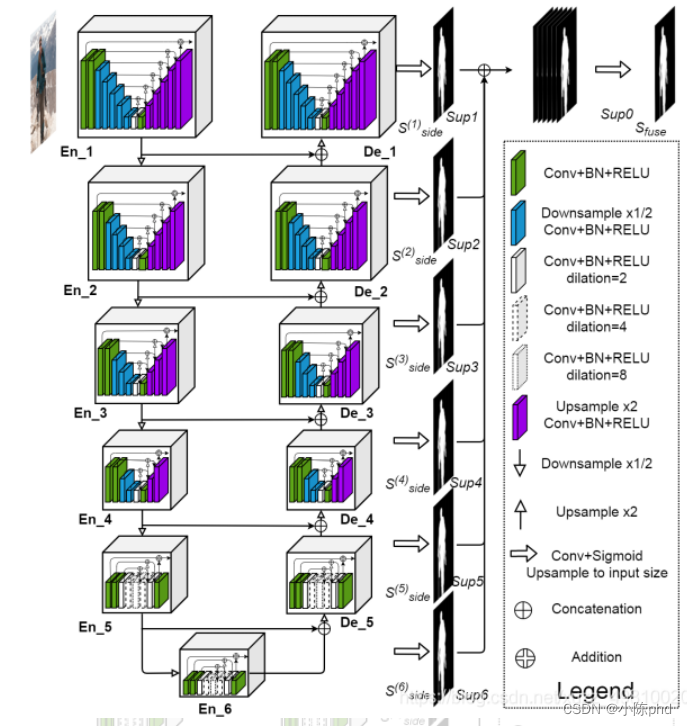

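- Model design starts with being clear about what you need. For noise removal, suitable model types are the following.

① Detection-style CNN models

Seismic data consist of nothing more than noise plus the true seismic signal we want: noisy data = true seismic signal + noise. The model's task can therefore be framed as detection: finding the seismic signal, or the noise, within the data.

Clean seismic data are comparatively easy to obtain and to label, so training pairs (noisy data, clean seismic data) can be built, the model is trained to find the noise, and the clean signal is recovered as noisy data - predicted noise. Labels: (noisy data, clean seismic data).

② Image-segmentation models

Split the denoising task into seismic signal + noise: classify the noisy data into these two parts, extract the seismic-signal part, and thereby obtain the true seismic data.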
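For the noise-prediction idea in ①, write $d$ for the noisy data, $s$ for the clean seismic data, $n$ for the noise and $f_\theta$ for the network (symbols introduced here for illustration). The summed squared error corresponds to the `nn.MSELoss(reduction='sum')` criterion in the training script later in this post, whose label is `batch_x - batch_y` and whose denoised output is `test_x - d0`:

$$
d = s + n,\qquad
\mathcal{L}(\theta) = \sum_{i}\left\| f_\theta(d_i) - (d_i - s_i) \right\|_2^2,\qquad
\hat{s} = d - f_\theta(d)
$$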

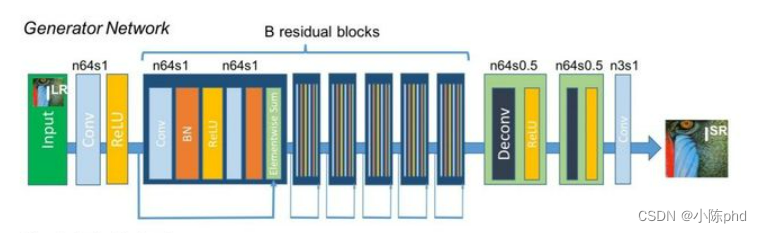

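③ Super-resolution methods (GAN)

Labels: (noisy data, clean seismic data)

Borrow the super-resolution idea for the denoising task and compute the denoised image directly: the generator takes the noisy image and outputs the denoised one. A typical example is SRGAN.

If the noise type in the seismic data can be identified, the CGAN idea can be used to make the noise type a controllable condition, so that denoising is targeted at a known noise type.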
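One common way to make a generator conditional, as in CGAN, is to feed the condition in alongside the data. A minimal NumPy sketch, assuming the noise type is an integer label that is turned into one-hot channel maps and stacked with the single-channel noisy batch in NCHW layout; the function name and the number of noise types are hypothetical. The generator's first convolution would then take `1 + num_types` input channels, and in a full CGAN the discriminator receives the same condition.

```python
import numpy as np


def conditional_input(noisy, noise_type, num_types):
    """Stack a noisy batch (N, 1, H, W) with one-hot noise-type maps.

    noise_type: int array of shape (N,), values in [0, num_types).
    Returns an array of shape (N, 1 + num_types, H, W).
    """
    n, _, h, w = noisy.shape
    one_hot = np.eye(num_types, dtype=noisy.dtype)[noise_type]  # (N, num_types)
    # broadcast each sample's one-hot vector over the spatial dimensions
    cond_maps = np.broadcast_to(one_hot[:, :, None, None], (n, num_types, h, w))
    return np.concatenate([noisy, cond_maps], axis=1)


noisy = np.random.default_rng(0).standard_normal((2, 1, 64, 32)).astype(np.float32)
x = conditional_input(noisy, np.array([0, 2]), num_types=3)
print(x.shape)  # (2, 4, 64, 32)
```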

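④ Unsupervised denoising (VAE family)

An encoder-decoder model compresses the raw data into a latent vector and then decodes that vector into the true seismic signal.

This approach was commonly used in the past and works reasonably well in practice, but its interpretability still needs further study.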
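In a VAE the encoder outputs a mean and a log-variance for the latent vector rather than a single vector, and training minimises a reconstruction term plus a KL term that keeps the latent distribution close to a standard normal. A minimal NumPy sketch of that loss and of the sampling step, assuming a diagonal Gaussian encoder and a squared-error reconstruction term; the function names are illustrative.

```python
import numpy as np


def vae_loss(x, x_rec, mu, logvar):
    """Reconstruction (sum of squared errors) + KL(q(z|x) || N(0, I)).

    mu, logvar: encoder outputs of shape (N, latent_dim).
    """
    rec = np.sum((x - x_rec) ** 2)
    # closed-form KL divergence between a diagonal Gaussian and N(0, I)
    kl = -0.5 * np.sum(1.0 + logvar - mu ** 2 - np.exp(logvar))
    return rec + kl


def reparameterize(mu, logvar, rng):
    """Sample z = mu + sigma * eps; in an autodiff framework this lets
    gradients flow through mu and logvar."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * logvar) * eps
```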

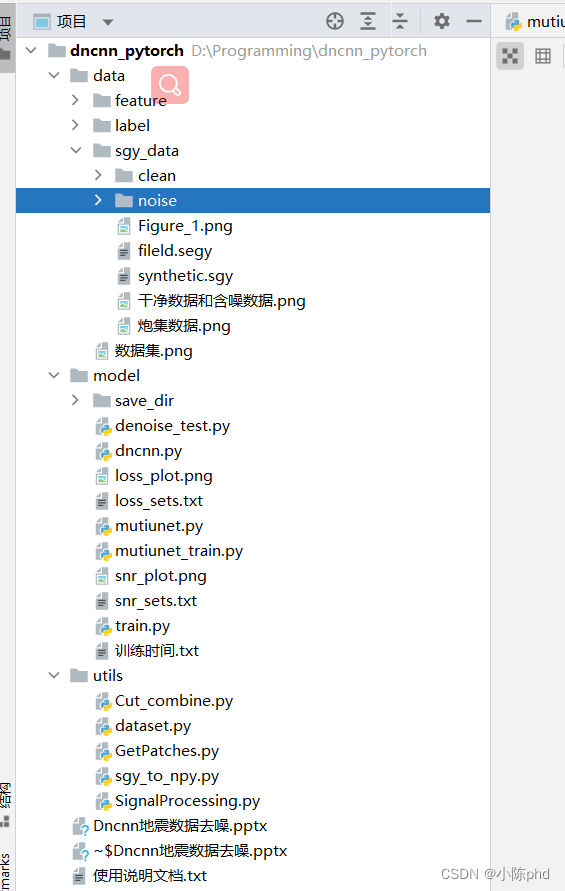

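Hands-on programming

The explanation here follows the tutorial by the bilibili uploader referenced below.

Reference: bilibili

- When processing seismic data, only the trace data need to be used; everything else follows the data-augmentation approach above.

- Data sampling principles:
  - Follow the i.i.d. (independent and identically distributed) principle: data from the same block can be used, but data from different areas should not be mixed.
  - Data quality matters more than data quantity: make sure the sampling quality is high; quantity is not the most important factor.
  - Labels can be built as (noisy data, clean seismic data) or as (noisy data, noise).
  - Cover the distribution evenly: include as many noise scenarios as possible so that the model is robust. Samples can be tailored to the specific block and its geological setting.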
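To cover noise levels evenly, one option is to synthesise training pairs by scaling a noise record to a chosen SNR before adding it to clean data; the same pair then yields either label form listed above. A minimal NumPy sketch, assuming SNR is defined as $10\log_{10}(\sum s^2 / \sum n^2)$ in dB; the function name and the SNR levels are illustrative.

```python
import numpy as np


def make_pair(clean, noise, snr_db):
    """Scale `noise` so the noisy data have the requested SNR (dB) w.r.t. `clean`.

    Returns (noisy, clean, noise_scaled); the label can be either `clean`
    or `noise_scaled` (= noisy - clean).
    """
    p_signal = np.sum(clean ** 2)
    p_noise = np.sum(noise ** 2)
    scale = np.sqrt(p_signal / (p_noise * 10.0 ** (snr_db / 10.0)))
    noise_scaled = scale * noise
    noisy = clean + noise_scaled
    return noisy, clean, noise_scaled


rng = np.random.default_rng(0)
clean = rng.standard_normal((128, 64))
base_noise = rng.standard_normal((128, 64))
# one pair per SNR level so that both weak and strong noise are represented
pairs = [make_pair(clean, base_noise, snr) for snr in (-5, 0, 5, 10)]
```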

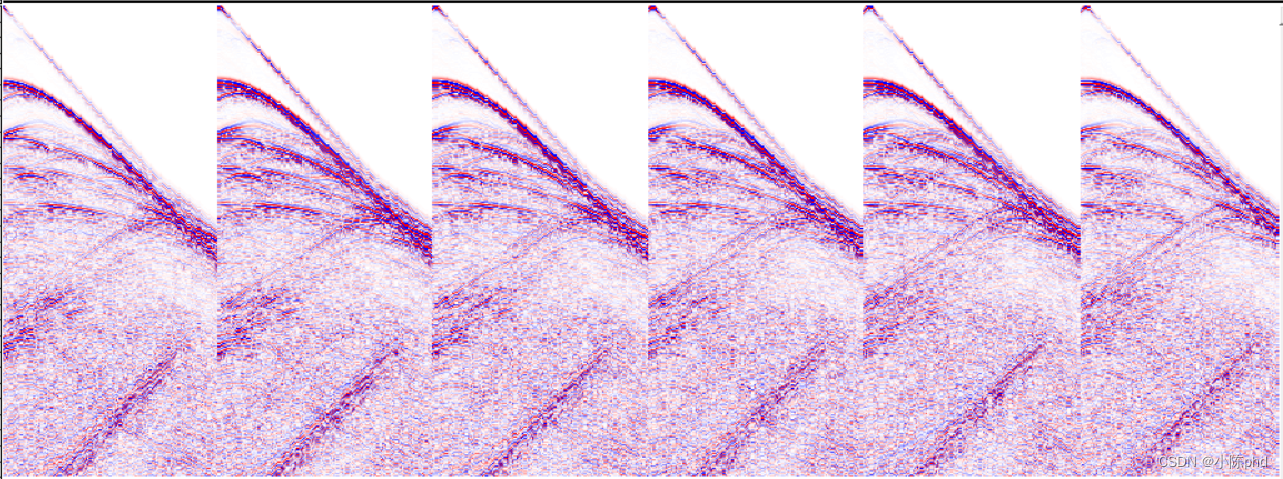

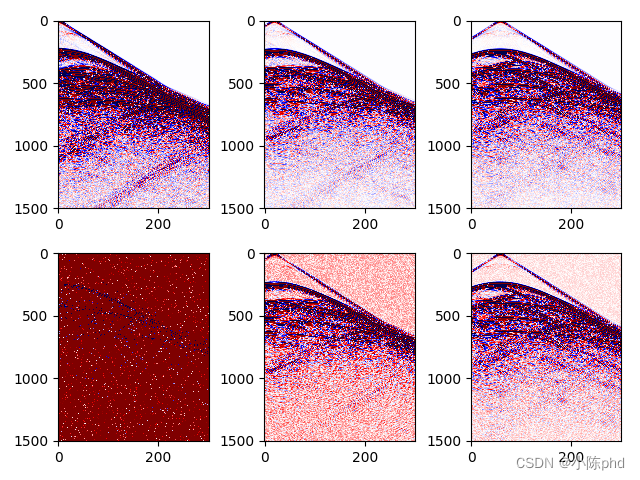

The data are shown below.

Clean data and noisy data

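- Model input and output: seismic data can be treated as a single-channel image, in NCHW layout (PyTorch, Paddle) or NHWC layout (TensorFlow). For a denoising task the output should in principle have the same shape as the input. Here the original uploader's code was modified to use U^2-Net for the denoising task.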
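As a concrete illustration of the two layouts, a single 2D gather of shape (time samples, traces) becomes a one-sample, one-channel batch as follows; the array sizes are arbitrary. In PyTorch the NCHW array can then be wrapped with `torch.from_numpy`.

```python
import numpy as np

gather = np.zeros((512, 128), dtype=np.float32)  # (time samples, traces)

# NCHW (PyTorch, Paddle): add batch and channel axes in front
x_nchw = gather[np.newaxis, np.newaxis, :, :]
# NHWC (TensorFlow): channel axis last
x_nhwc = np.transpose(x_nchw, (0, 2, 3, 1))

print(x_nchw.shape)  # (1, 1, 512, 128)
print(x_nhwc.shape)  # (1, 512, 128, 1)
```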

mutiunet.py

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class REBNCONV(nn.Module):
    """3x3 (optionally dilated) convolution -> BatchNorm -> ReLU."""

    def __init__(self, in_ch=3, out_ch=3, dirate=1):
        super(REBNCONV, self).__init__()

        self.conv_s1 = nn.Conv2d(in_ch, out_ch, 3, padding=1 * dirate, dilation=1 * dirate)
        self.bn_s1 = nn.BatchNorm2d(out_ch)
        self.relu_s1 = nn.ReLU(inplace=True)

    def forward(self, x):
        hx = x
        xout = self.relu_s1(self.bn_s1(self.conv_s1(hx)))
        return xout


## upsample tensor 'src' to have the same spatial size as tensor 'tar'
def _upsample_like(src, tar):
    # F.upsample is deprecated; F.interpolate with align_corners=False matches its behaviour
    src = F.interpolate(src, size=tar.shape[2:], mode='bilinear', align_corners=False)
    return src
```

```python
### RSU-7 ###
class RSU7(nn.Module):  # UNet07DRES(nn.Module):

    def __init__(self, in_ch=3, mid_ch=12, out_ch=3):
        super(RSU7, self).__init__()

        self.rebnconvin = REBNCONV(in_ch, out_ch, dirate=1)

        self.rebnconv1 = REBNCONV(out_ch, mid_ch, dirate=1)
        self.pool1 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv2 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool2 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv3 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool3 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv4 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool4 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv5 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool5 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv6 = REBNCONV(mid_ch, mid_ch, dirate=1)

        self.rebnconv7 = REBNCONV(mid_ch, mid_ch, dirate=2)

        self.rebnconv6d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv5d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv4d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv3d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv2d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv1d = REBNCONV(mid_ch * 2, out_ch, dirate=1)

    def forward(self, x):
        hx = x
        hxin = self.rebnconvin(hx)

        hx1 = self.rebnconv1(hxin)
        hx = self.pool1(hx1)

        hx2 = self.rebnconv2(hx)
        hx = self.pool2(hx2)

        hx3 = self.rebnconv3(hx)
        hx = self.pool3(hx3)

        hx4 = self.rebnconv4(hx)
        hx = self.pool4(hx4)

        hx5 = self.rebnconv5(hx)
        hx = self.pool5(hx5)

        hx6 = self.rebnconv6(hx)

        hx7 = self.rebnconv7(hx6)

        hx6d = self.rebnconv6d(torch.cat((hx7, hx6), 1))
        hx6dup = _upsample_like(hx6d, hx5)

        hx5d = self.rebnconv5d(torch.cat((hx6dup, hx5), 1))
        hx5dup = _upsample_like(hx5d, hx4)

        hx4d = self.rebnconv4d(torch.cat((hx5dup, hx4), 1))
        hx4dup = _upsample_like(hx4d, hx3)

        hx3d = self.rebnconv3d(torch.cat((hx4dup, hx3), 1))
        hx3dup = _upsample_like(hx3d, hx2)

        hx2d = self.rebnconv2d(torch.cat((hx3dup, hx2), 1))
        hx2dup = _upsample_like(hx2d, hx1)

        hx1d = self.rebnconv1d(torch.cat((hx2dup, hx1), 1))

        return hx1d + hxin
```

```python
### RSU-6 ###
class RSU6(nn.Module):  # UNet06DRES(nn.Module):

    def __init__(self, in_ch=3, mid_ch=12, out_ch=3):
        super(RSU6, self).__init__()

        self.rebnconvin = REBNCONV(in_ch, out_ch, dirate=1)

        self.rebnconv1 = REBNCONV(out_ch, mid_ch, dirate=1)
        self.pool1 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv2 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool2 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv3 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool3 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv4 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool4 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv5 = REBNCONV(mid_ch, mid_ch, dirate=1)

        self.rebnconv6 = REBNCONV(mid_ch, mid_ch, dirate=2)

        self.rebnconv5d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv4d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv3d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv2d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv1d = REBNCONV(mid_ch * 2, out_ch, dirate=1)

    def forward(self, x):
        hx = x
        hxin = self.rebnconvin(hx)

        hx1 = self.rebnconv1(hxin)
        hx = self.pool1(hx1)

        hx2 = self.rebnconv2(hx)
        hx = self.pool2(hx2)

        hx3 = self.rebnconv3(hx)
        hx = self.pool3(hx3)

        hx4 = self.rebnconv4(hx)
        hx = self.pool4(hx4)

        hx5 = self.rebnconv5(hx)

        hx6 = self.rebnconv6(hx5)

        hx5d = self.rebnconv5d(torch.cat((hx6, hx5), 1))
        hx5dup = _upsample_like(hx5d, hx4)

        hx4d = self.rebnconv4d(torch.cat((hx5dup, hx4), 1))
        hx4dup = _upsample_like(hx4d, hx3)

        hx3d = self.rebnconv3d(torch.cat((hx4dup, hx3), 1))
        hx3dup = _upsample_like(hx3d, hx2)

        hx2d = self.rebnconv2d(torch.cat((hx3dup, hx2), 1))
        hx2dup = _upsample_like(hx2d, hx1)

        hx1d = self.rebnconv1d(torch.cat((hx2dup, hx1), 1))

        return hx1d + hxin
```

```python
### RSU-5 ###
class RSU5(nn.Module):  # UNet05DRES(nn.Module):

    def __init__(self, in_ch=3, mid_ch=12, out_ch=3):
        super(RSU5, self).__init__()

        self.rebnconvin = REBNCONV(in_ch, out_ch, dirate=1)

        self.rebnconv1 = REBNCONV(out_ch, mid_ch, dirate=1)
        self.pool1 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv2 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool2 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv3 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool3 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv4 = REBNCONV(mid_ch, mid_ch, dirate=1)

        self.rebnconv5 = REBNCONV(mid_ch, mid_ch, dirate=2)

        self.rebnconv4d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv3d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv2d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv1d = REBNCONV(mid_ch * 2, out_ch, dirate=1)

    def forward(self, x):
        hx = x
        hxin = self.rebnconvin(hx)

        hx1 = self.rebnconv1(hxin)
        hx = self.pool1(hx1)

        hx2 = self.rebnconv2(hx)
        hx = self.pool2(hx2)

        hx3 = self.rebnconv3(hx)
        hx = self.pool3(hx3)

        hx4 = self.rebnconv4(hx)

        hx5 = self.rebnconv5(hx4)

        hx4d = self.rebnconv4d(torch.cat((hx5, hx4), 1))
        hx4dup = _upsample_like(hx4d, hx3)

        hx3d = self.rebnconv3d(torch.cat((hx4dup, hx3), 1))
        hx3dup = _upsample_like(hx3d, hx2)

        hx2d = self.rebnconv2d(torch.cat((hx3dup, hx2), 1))
        hx2dup = _upsample_like(hx2d, hx1)

        hx1d = self.rebnconv1d(torch.cat((hx2dup, hx1), 1))

        return hx1d + hxin
```

```python
### RSU-4 ###
class RSU4(nn.Module):  # UNet04DRES(nn.Module):

    def __init__(self, in_ch=3, mid_ch=12, out_ch=3):
        super(RSU4, self).__init__()

        self.rebnconvin = REBNCONV(in_ch, out_ch, dirate=1)

        self.rebnconv1 = REBNCONV(out_ch, mid_ch, dirate=1)
        self.pool1 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv2 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool2 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv3 = REBNCONV(mid_ch, mid_ch, dirate=1)

        self.rebnconv4 = REBNCONV(mid_ch, mid_ch, dirate=2)

        self.rebnconv3d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv2d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv1d = REBNCONV(mid_ch * 2, out_ch, dirate=1)

    def forward(self, x):
        hx = x
        hxin = self.rebnconvin(hx)

        hx1 = self.rebnconv1(hxin)
        hx = self.pool1(hx1)

        hx2 = self.rebnconv2(hx)
        hx = self.pool2(hx2)

        hx3 = self.rebnconv3(hx)

        hx4 = self.rebnconv4(hx3)

        hx3d = self.rebnconv3d(torch.cat((hx4, hx3), 1))
        hx3dup = _upsample_like(hx3d, hx2)

        hx2d = self.rebnconv2d(torch.cat((hx3dup, hx2), 1))
        hx2dup = _upsample_like(hx2d, hx1)

        hx1d = self.rebnconv1d(torch.cat((hx2dup, hx1), 1))

        return hx1d + hxin
```

```python
### RSU-4F ###
class RSU4F(nn.Module):  # UNet04FRES(nn.Module):

    def __init__(self, in_ch=3, mid_ch=12, out_ch=3):
        super(RSU4F, self).__init__()

        self.rebnconvin = REBNCONV(in_ch, out_ch, dirate=1)

        self.rebnconv1 = REBNCONV(out_ch, mid_ch, dirate=1)
        self.rebnconv2 = REBNCONV(mid_ch, mid_ch, dirate=2)
        self.rebnconv3 = REBNCONV(mid_ch, mid_ch, dirate=4)

        self.rebnconv4 = REBNCONV(mid_ch, mid_ch, dirate=8)

        self.rebnconv3d = REBNCONV(mid_ch * 2, mid_ch, dirate=4)
        self.rebnconv2d = REBNCONV(mid_ch * 2, mid_ch, dirate=2)
        self.rebnconv1d = REBNCONV(mid_ch * 2, out_ch, dirate=1)

    def forward(self, x):
        hx = x
        hxin = self.rebnconvin(hx)

        hx1 = self.rebnconv1(hxin)
        hx2 = self.rebnconv2(hx1)
        hx3 = self.rebnconv3(hx2)

        hx4 = self.rebnconv4(hx3)

        hx3d = self.rebnconv3d(torch.cat((hx4, hx3), 1))
        hx2d = self.rebnconv2d(torch.cat((hx3d, hx2), 1))
        hx1d = self.rebnconv1d(torch.cat((hx2d, hx1), 1))

        return hx1d + hxin
```

##### U^2-Net ####classU2NET(nn.Module):def__init__(self,in_ch=3,out_ch=1):super(U2NET,self).__init__()

self.stage1 = RSU7(in_ch,32,64)

self.pool12 = nn.MaxPool2d(2,stride=2,ceil_mode=True)

self.stage2 = RSU6(64,32,128)

self.pool23 = nn.MaxPool2d(2,stride=2,ceil_mode=True)

self.stage3 = RSU5(128,64,256)

self.pool34 = nn.MaxPool2d(2,stride=2,ceil_mode=True)

self.stage4 = RSU4(256,128,512)

self.pool45 = nn.MaxPool2d(2,stride=2,ceil_mode=True)

self.stage5 = RSU4F(512,256,512)

self.pool56 = nn.MaxPool2d(2,stride=2,ceil_mode=True)

self.stage6 = RSU4F(512,256,512)# decoder

self.stage5d = RSU4F(1024,256,512)

self.stage4d = RSU4(1024,128,256)

self.stage3d = RSU5(512,64,128)

self.stage2d = RSU6(256,32,64)

self.stage1d = RSU7(128,16,64)

self.side1 = nn.Conv2d(64,out_ch,3,padding=1)

self.side2 = nn.Conv2d(64,out_ch,3,padding=1)

self.side3 = nn.Conv2d(128,out_ch,3,padding=1)

self.side4 = nn.Conv2d(256,out_ch,3,padding=1)

self.side5 = nn.Conv2d(512,out_ch,3,padding=1)

self.side6 = nn.Conv2d(512,out_ch,3,padding=1)

self.outconv = nn.Conv2d(6,out_ch,1)defforward(self,x):

hx = x

#stage 1

hx1 = self.stage1(hx)

hx = self.pool12(hx1)#stage 2

hx2 = self.stage2(hx)

hx = self.pool23(hx2)#stage 3

hx3 = self.stage3(hx)

hx = self.pool34(hx3)#stage 4

hx4 = self.stage4(hx)

hx = self.pool45(hx4)#stage 5

hx5 = self.stage5(hx)

hx = self.pool56(hx5)#stage 6

hx6 = self.stage6(hx)

hx6up = _upsample_like(hx6,hx5)#-------------------- decoder --------------------

hx5d = self.stage5d(torch.cat((hx6up,hx5),1))

hx5dup = _upsample_like(hx5d,hx4)

hx4d = self.stage4d(torch.cat((hx5dup,hx4),1))

hx4dup = _upsample_like(hx4d,hx3)

hx3d = self.stage3d(torch.cat((hx4dup,hx3),1))

hx3dup = _upsample_like(hx3d,hx2)

hx2d = self.stage2d(torch.cat((hx3dup,hx2),1))

hx2dup = _upsample_like(hx2d,hx1)

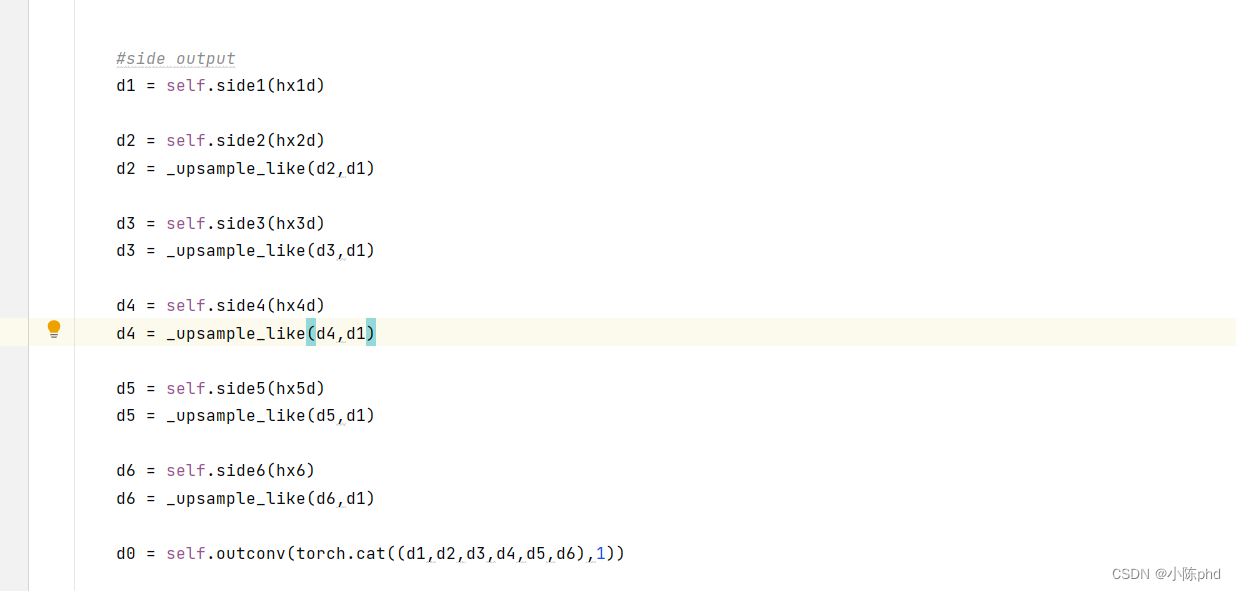

hx1d = self.stage1d(torch.cat((hx2dup,hx1),1))#side output

d1 = self.side1(hx1d)

d2 = self.side2(hx2d)

d2 = _upsample_like(d2,d1)

d3 = self.side3(hx3d)

d3 = _upsample_like(d3,d1)

d4 = self.side4(hx4d)

d4 = _upsample_like(d4,d1)

d5 = self.side5(hx5d)

d5 = _upsample_like(d5,d1)

d6 = self.side6(hx6)

d6 = _upsample_like(d6,d1)

d0 = self.outconv(torch.cat((d1,d2,d3,d4,d5,d6),1))return F.sigmoid(d0), F.sigmoid(d1), F.sigmoid(d2), F.sigmoid(d3), F.sigmoid(d4), F.sigmoid(d5), F.sigmoid(d6)### U^2-Net small ###classU2NETP(nn.Module):def__init__(self,in_ch=3,out_ch=1):super(U2NETP,self).__init__()

self.stage1 = RSU7(in_ch,16,64)

self.pool12 = nn.MaxPool2d(2,stride=2,ceil_mode=True)

self.stage2 = RSU6(64,16,64)

self.pool23 = nn.MaxPool2d(2,stride=2,ceil_mode=True)

self.stage3 = RSU5(64,16,64)

self.pool34 = nn.MaxPool2d(2,stride=2,ceil_mode=True)

self.stage4 = RSU4(64,16,64)

self.pool45 = nn.MaxPool2d(2,stride=2,ceil_mode=True)

self.stage5 = RSU4F(64,16,64)

self.pool56 = nn.MaxPool2d(2,stride=2,ceil_mode=True)

self.stage6 = RSU4F(64,16,64)# decoder

self.stage5d = RSU4F(128,16,64)

self.stage4d = RSU4(128,16,64)

self.stage3d = RSU5(128,16,64)

self.stage2d = RSU6(128,16,64)

self.stage1d = RSU7(128,16,64)

self.side1 = nn.Conv2d(64,out_ch,3,padding=1)

self.side2 = nn.Conv2d(64,out_ch,3,padding=1)

self.side3 = nn.Conv2d(64,out_ch,3,padding=1)

self.side4 = nn.Conv2d(64,out_ch,3,padding=1)

self.side5 = nn.Conv2d(64,out_ch,3,padding=1)

self.side6 = nn.Conv2d(64,out_ch,3,padding=1)

self.outconv = nn.Conv2d(6,out_ch,1)defforward(self,x):

hx = x

#stage 1

hx1 = self.stage1(hx)

hx = self.pool12(hx1)#stage 2

hx2 = self.stage2(hx)

hx = self.pool23(hx2)#stage 3

hx3 = self.stage3(hx)

hx = self.pool34(hx3)#stage 4

hx4 = self.stage4(hx)

hx = self.pool45(hx4)#stage 5

hx5 = self.stage5(hx)

hx = self.pool56(hx5)#stage 6

hx6 = self.stage6(hx)

hx6up = _upsample_like(hx6,hx5)#decoder

hx5d = self.stage5d(torch.cat((hx6up,hx5),1))

hx5dup = _upsample_like(hx5d,hx4)

hx4d = self.stage4d(torch.cat((hx5dup,hx4),1))

hx4dup = _upsample_like(hx4d,hx3)

hx3d = self.stage3d(torch.cat((hx4dup,hx3),1))

hx3dup = _upsample_like(hx3d,hx2)

hx2d = self.stage2d(torch.cat((hx3dup,hx2),1))

hx2dup = _upsample_like(hx2d,hx1)

hx1d = self.stage1d(torch.cat((hx2dup,hx1),1))#side output

d1 = self.side1(hx1d)

d2 = self.side2(hx2d)

d2 = _upsample_like(d2,d1)

d3 = self.side3(hx3d)

d3 = _upsample_like(d3,d1)

d4 = self.side4(hx4d)

d4 = _upsample_like(d4,d1)

d5 = self.side5(hx5d)

d5 = _upsample_like(d5,d1)

d6 = self.side6(hx6)

d6 = _upsample_like(d6,d1)

d0 = self.outconv(torch.cat((d1,d2,d3,d4,d5,d6),1))return d0,d1,d2,d3,d4,d5,d6
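RSU4F, used in the deepest stages, does not pool further; it stacks dilated 3x3 convolutions instead, which enlarges the receptive field without losing resolution. A small helper, assuming stride-1 convolutions as in `REBNCONV`, computes the receptive field of RSU4F's longest path (dilation rates 1, 1, 2, 4, 8, 4, 2, 1 from `rebnconvin` through `rebnconv1d`): each k x k layer with dilation d adds (k - 1) * d samples. The helper name is illustrative.

```python
def receptive_field(kernel_sizes, dilations):
    """Receptive field (in samples) of stacked stride-1 convolutions."""
    rf = 1
    for k, d in zip(kernel_sizes, dilations):
        rf += (k - 1) * d
    return rf


rsu4f_dilations = [1, 1, 2, 4, 8, 4, 2, 1]
print(receptive_field([3] * 8, rsu4f_dilations))  # 47
print(receptive_field([3] * 8, [1] * 8))          # 17
```

The same eight layers without dilation would see only 17 samples, which is why dilation is a cheap way to let each output sample depend on a wider part of the gather.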

训练网络如下:

mutiunet_train.py

# -*-coding:utf-8-*-"""

Created on 2022.3.31

programing language:python

@author:夜剑听雨

"""# from model.dncnn import DnCNNfrom model.mutiunet import U2NET

from utils.dataset import MyDataset

from utils.SignalProcessing import batch_snr

from torch import optim

import torch.nn as nn

import torch

import time

import numpy as np

import matplotlib.pyplot as plt

import os

# 选择设备,有cuda用cuda,没有就用cpu

device = torch.device('cuda'if torch.cuda.is_available()else'cpu')# 加载网络,图片单通道1,分类为1。

my_net = U2NET(1,1)# 将网络拷贝到设备中

my_net.to(device=device)# 指定特征和标签数据地址,加载数据集

train_path_x ="..\\data\\feature\\"

train_path_y ="..\\data\\label\\"# 划分数据集,训练集:验证集:测试集 = 8:1:1

full_dataset = MyDataset(train_path_x, train_path_y)

valida_size =int(len(full_dataset)*0.1)

train_size =len(full_dataset)- valida_size *2# 指定加载数据的batch_size

batch_size =32# 划分数据集

train_dataset, test_dataset, valida_dataset = torch.utils.data.random_split(full_dataset,[train_size, valida_size, valida_size])# 加载并且乱序训练数据集

train_loader = torch.utils.data.DataLoader(dataset=train_dataset, batch_size=batch_size, shuffle=True)# 加载并且乱序验证数据集

valida_loader = torch.utils.data.DataLoader(dataset=valida_dataset, batch_size=batch_size, shuffle=True)# 加载测试数据集,测试数据不需要乱序

test_loader = torch.utils.data.DataLoader(dataset=test_dataset, batch_size=batch_size, shuffle=False)# 定义优化方法

epochs =20# 设置训练次数

LR =0.001# 设置学习率

optimizer = optim.Adam(my_net.parameters(), lr=LR)# 定义损失函数

criterion = nn.MSELoss(reduction='sum')# reduction='sum'表示不除以batch_size

temp_sets1 =[]# 用于记录训练,验证集的loss,每一个epoch都做一次训练,验证

temp_sets2 =[]# # 用于记录测试集的SNR,去噪前和去噪后都要记录

start_time = time.strftime("1. %Y-%m-%d %H:%M:%S", time.localtime())# 开始时间ifnot os.path.exists(r"./save_dir/"):

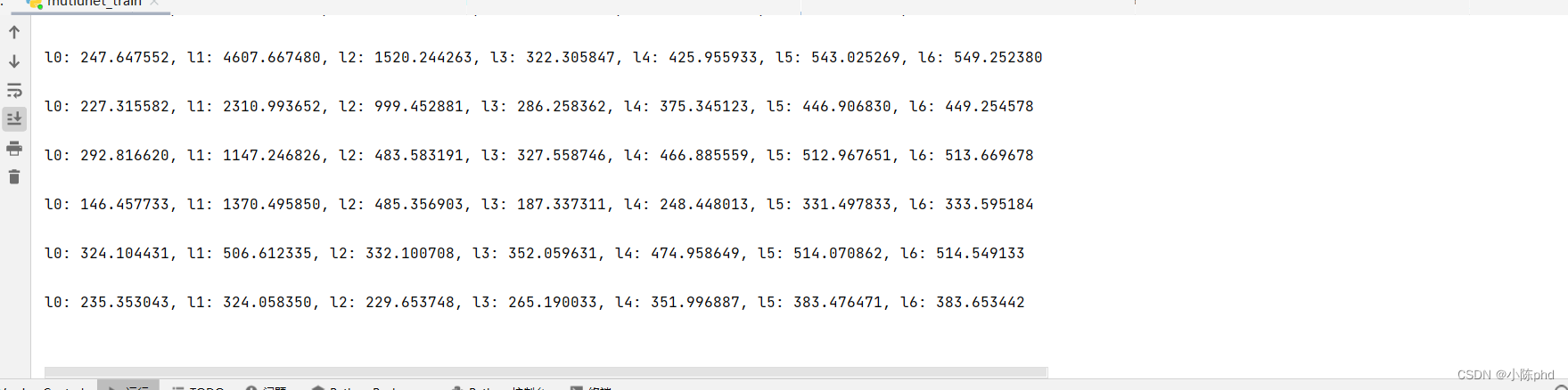

os.makedirs(r"./save_dir/")defmuti_bce_loss_fusion(d0, d1, d2, d3, d4, d5, d6, labels_v):

loss0 = criterion(d0, labels_v)

loss1 = criterion(d1, labels_v)

loss2 = criterion(d2, labels_v)

loss3 = criterion(d3, labels_v)

loss4 = criterion(d4, labels_v)

loss5 = criterion(d5, labels_v)

loss6 = criterion(d6, labels_v)

loss = loss0 + loss1 + loss2 + loss3 + loss4 + loss5 + loss6

print("l0: %3f, l1: %3f, l2: %3f, l3: %3f, l4: %3f, l5: %3f, l6: %3f\n"%(

loss0.item(), loss1.item(), loss2.item(), loss3.item(), loss4.item(), loss5.item(), loss6.item()))return loss0, loss

```python
# train, validate and test once per epoch
for epoch in range(epochs):
    # ---- train the network on the training set ----
    train_loss = 0.0
    my_net.train()  # enable training mode
    for batch_idx1, (batch_x, batch_y) in enumerate(train_loader, 0):  # counting starts at 0
        # move the data to the GPU
        batch_x = batch_x.to(device=device, dtype=torch.float32)
        batch_y = batch_y.to(device=device, dtype=torch.float32)
        d0, d1, d2, d3, d4, d5, d6 = my_net(batch_x)  # forward pass
        # compute the loss; the target is the noise (noisy - clean)
        _, loss1 = muti_bce_loss_fusion(d0, d1, d2, d3, d4, d5, d6, (batch_x - batch_y))
        # accumulate this epoch's loss; it is divided by the number of batches after the loop
        train_loss += loss1.item()
        optimizer.zero_grad()  # reset the gradients, equivalent to my_net.zero_grad()
        loss1.backward()  # backpropagate to get the gradient of every parameter
        optimizer.step()  # one gradient-descent parameter update
    train_loss = train_loss / (batch_idx1 + 1)  # mean loss of this epoch

    # ---- validate the network on the validation set ----
    my_net.eval()  # enable evaluation/test mode
    val_loss = 0.0
    for batch_idx2, (val_x, val_y) in enumerate(valida_loader, 0):
        # move the data to the GPU
        val_x = val_x.to(device=device, dtype=torch.float32)
        val_y = val_y.to(device=device, dtype=torch.float32)
        with torch.no_grad():  # no parameter update, so gradient tracking is disabled
            d0, d1, d2, d3, d4, d5, d6 = my_net(val_x)
            _, loss2 = muti_bce_loss_fusion(d0, d1, d2, d3, d4, d5, d6, (val_x - val_y))
        val_loss += loss2.item()  # accumulate; divided by the number of batches after the loop
    val_loss = val_loss / (batch_idx2 + 1)
    # store the training and validation losses
    loss_set = [train_loss, val_loss]
    temp_sets1.append(loss_set)
    # {:.4f} prints four decimal places
    print("epoch={}, training loss: {:.4f}, validation loss: {:.4f}".format(epoch + 1, train_loss, val_loss))

    # ---- test: the SNR averaged over batches is the evaluation metric ----
    snr_set1 = 0.0
    snr_set2 = 0.0
    for batch_idx3, (test_x, test_y) in enumerate(test_loader, 0):
        # move the data to the GPU
        test_x = test_x.to(device=device, dtype=torch.float32)
        test_y = test_y.to(device=device, dtype=torch.float32)
        with torch.no_grad():  # no parameter update, so gradient tracking is disabled
            d0, d1, d2, d3, d4, d5, d6 = my_net(test_x)  # the network predicts the noise
            # noisy data minus the predicted noise gives the denoised data
            clean_out = test_x - d0
        # SNR relative to the clean data, computed over the batch and averaged (divided by batch_size)
        SNR1 = batch_snr(test_x, test_y)  # SNR before denoising
        SNR2 = batch_snr(clean_out, test_y)  # SNR after denoising
        snr_set1 += SNR1
        snr_set2 += SNR2
    # average the accumulated SNR over the number of test batches
    snr_set1 = snr_set1 / (batch_idx3 + 1)
    snr_set2 = snr_set2 / (batch_idx3 + 1)
    # store the SNR before and after denoising
    snr_set = [snr_set1, snr_set2]
    temp_sets2.append(snr_set)
    print("epoch={}, mean SNR before denoising: {:.4f} dB, mean SNR after denoising: {:.4f} dB".format(
        epoch + 1, snr_set1, snr_set2))
    # save the model
    model_name = f'model_epoch{epoch + 1}'
    # saves the whole network (structure and parameters)
    torch.save(my_net, os.path.join('./save_dir', model_name + '.pth'))
```

```python
end_time = time.strftime("%Y-%m-%d %H:%M:%S", time.localtime())  # end time
# write the training start and end times ('训练时间' = 'training time') to a txt file in the current folder
with open('训练时间.txt', 'w', encoding='utf-8') as f:
    f.write(start_time + '\n')
    f.write(end_time + '\n')
print("Training started at {} >>>>>>>>>>>>>>>> finished at {}".format(start_time, end_time))  # print the time span

# temp_sets1 is a list of [train_loss, val_loss] pairs; convert it to an (epochs, 2) array to save as txt
loss_sets = []
for sets in temp_sets1:
    for i in range(2):
        loss_sets.append(sets[i])
loss_sets = np.array(loss_sets).reshape(-1, 2)  # shape (epochs, 2); -1 is inferred
# fmt sets the number format of the saved txt file
np.savetxt('loss_sets.txt', loss_sets, fmt='%.4f')

# same for temp_sets2 (SNR before and after denoising)
snr_sets = []
for sets in temp_sets2:
    for i in range(2):
        snr_sets.append(sets[i])
snr_sets = np.array(snr_sets).reshape(-1, 2)  # shape (epochs, 2)
np.savetxt('snr_sets.txt', snr_sets, fmt='%.4f')

# plot the loss curves
loss_lines = np.loadtxt('./loss_sets.txt')
# the loss was not divided by batch_size during training (reduction='sum'), which keeps the
# printed values from being too small to read; divide here for an approximate per-sample loss
train_line = loss_lines[:, 0] / batch_size
valida_line = loss_lines[:, 1] / batch_size
x1 = range(len(train_line))
fig1 = plt.figure()
plt.plot(x1, train_line, x1, valida_line)
plt.xlabel('epoch')
plt.ylabel('loss')
plt.legend(['train', 'valida'])
plt.tight_layout()  # apply the layout before saving so that it affects the saved file
plt.savefig('loss_plot.png', bbox_inches='tight')

# plot the SNR curves
snr_lines = np.loadtxt('./snr_sets.txt')
De_before = snr_lines[:, 0]
De_after = snr_lines[:, 1]
x2 = range(len(De_before))
fig2 = plt.figure()
plt.plot(x2, De_before, x2, De_after)
plt.xlabel('epoch')
plt.ylabel('SNR')
plt.legend(['noise', 'denoise'])
plt.tight_layout()
plt.savefig('snr_plot.png', bbox_inches='tight')

plt.show()
```

Training plots
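The script imports `batch_snr` from the author's `utils.SignalProcessing`, whose source is not shown in the post. A plausible NumPy stand-in, assuming the usual definition SNR = 10 log10(sum(s^2) / sum((s - s_hat)^2)) in dB, computed per sample and averaged over the batch, with the argument order `(estimate, clean)` used in the script; `batch_snr_np` is a hypothetical name and this is not the author's implementation.

```python
import numpy as np


def batch_snr_np(estimate, clean):
    """Mean SNR (dB) over a batch; both arrays have shape (N, ...)."""
    n = clean.shape[0]
    s = clean.reshape(n, -1)
    e = (clean - estimate).reshape(n, -1)
    snr = 10.0 * np.log10(np.sum(s ** 2, axis=1) / np.sum(e ** 2, axis=1))
    return float(np.mean(snr))
```

Because the SNR is computed per sample before averaging, a few very noisy gathers pull the mean down less than a single SNR over the whole batch would; either convention is defensible as long as the before and after values use the same one.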

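Directions for model optimization

- Attention and encoder-decoder models: if local noise is related to other spatial positions, it can be handled with attention or by enlarging the receptive field. The emphasis is on making the model perceive the seismic wavefield as a whole.

- Multi-scale models for complex noise: beyond targeting the noise that appears in the data, the semantic features of the seismic wavefield can also be used for denoising. The clean signal does not only emerge at the final output; the seismic data can be denoised at several scales.

- Diverse optimization objectives: for the same target, add suitable cost and evaluation functions, design dedicated loss functions, and so on. If the model underfits, activation functions with stronger nonlinearity can be tried; if it overfits, regularization, early stopping and learning-rate decay are the usual remedies. The right choice depends on the task.

- Diverse preprocessing: for relatively complex tasks the model alone may not be robust enough. Conventional algorithms, such as unsupervised methods or Fourier-domain denoising, can preprocess the data first, and training on preprocessed data can speed up convergence.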
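As an example of Fourier-domain preprocessing, each trace can be band-pass filtered by zeroing the frequencies outside a pass band. A minimal NumPy sketch with a hard (boxcar) mask; the function name, the sampling interval and the band limits are illustrative, and in practice a tapered mask is usually preferred to limit ringing. Noise that shares the signal's frequency band is not removed by such a filter.

```python
import numpy as np


def bandpass_traces(data, dt, f_low, f_high):
    """Zero-phase band-pass of every trace via the FFT.

    data: array of shape (time samples, traces); dt: sampling interval in seconds.
    """
    n_t = data.shape[0]
    spec = np.fft.rfft(data, axis=0)          # spectrum along the time axis
    freqs = np.fft.rfftfreq(n_t, d=dt)        # frequency (Hz) of each bin
    mask = (freqs >= f_low) & (freqs <= f_high)
    spec[~mask, :] = 0.0                      # real mask, so no phase shift
    return np.fft.irfft(spec, n=n_t, axis=0)


dt = 0.002  # 2 ms sampling
t = np.arange(1000) * dt
signal = np.sin(2 * np.pi * 25 * t)           # 25 Hz "signal"
noise = 0.5 * np.sin(2 * np.pi * 150 * t)     # 150 Hz "noise"
traces = np.stack([signal + noise, signal + noise], axis=1)  # (time, traces)
filtered = bandpass_traces(traces, dt, 5.0, 60.0)
```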

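Reference: bilibili, 基于深度学习的地震数据去噪处理 (Deep-learning-based seismic data denoising)

Anyone interested is welcome to get in touch and discuss the techniques.

My own field is well logging and numerical simulation of the electrical conductivity of digital rock.

Copyright belongs to the original author 小陈phd. In case of infringement, please contact us for removal.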