1. Introduction

MobileOne paper: https://arxiv.org/abs/2206.04040

MobileOne GitHub: https://github.com/apple/ml-mobileone

二、基本原理

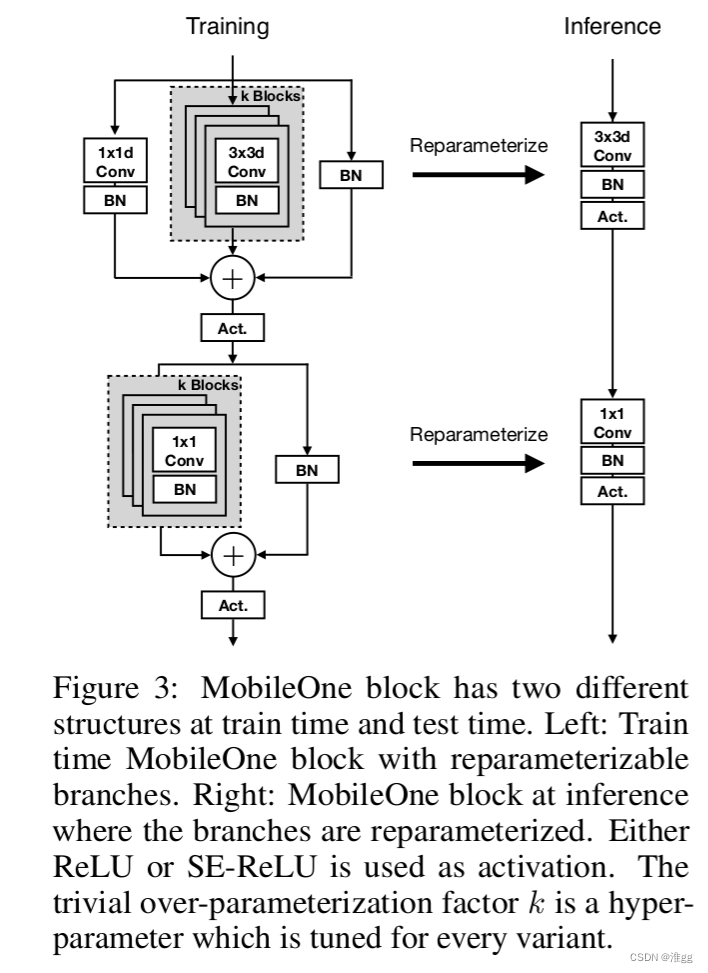

使用Reparameterize重参数化实现模型的轻量化,基本模块如下图所示。
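The arithmetic behind reparameterization can be sketched in a few lines. The example below is a minimal illustration in plain Python rather than PyTorch, reduced to a single channel and a 1x1 kernel so that every branch is just a scalar weight followed by BatchNorm. The branch values and the helper names `fold_bn` and `batch_norm` are hypothetical and chosen only for illustration.

```python
import math


def fold_bn(weight, gamma, beta, running_mean, running_var, eps=1e-5):
    """Fold inference-time BatchNorm into a preceding bias-free linear op.

    Returns (fused_weight, fused_bias) such that
    fused_weight * x + fused_bias == BN(weight * x).
    """
    std = math.sqrt(running_var + eps)
    return weight * gamma / std, beta - running_mean * gamma / std


def batch_norm(y, gamma, beta, running_mean, running_var, eps=1e-5):
    """Inference-time BatchNorm applied to a scalar activation."""
    return gamma * (y - running_mean) / math.sqrt(running_var + eps) + beta


# Two hypothetical training-time branches: (weight, gamma, beta, mean, var).
branches = [
    (0.8, 1.2, 0.1, 0.05, 0.9),
    (-0.3, 0.7, -0.2, -0.10, 1.5),
]
x = 2.5

# Training time: every branch is evaluated, then the outputs are summed.
multi_branch = sum(batch_norm(w * x, g, b, m, v) for w, g, b, m, v in branches)

# Inference time: fold each BN, then add the weights and the biases once.
fused = [fold_bn(w, g, b, m, v) for w, g, b, m, v in branches]
fused_weight = sum(fw for fw, _ in fused)
fused_bias = sum(fb for _, fb in fused)
single_branch = fused_weight * x + fused_bias

print(abs(multi_branch - single_branch) < 1e-9)
```

Because convolution and inference-time BatchNorm are both linear, the same folding applies per output channel to full convolution kernels.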

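3. Modification Method

Note: the code in this section follows the official implementation as closely as possible while integrating it into the YOLOv7 project.

3.1 Analysis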

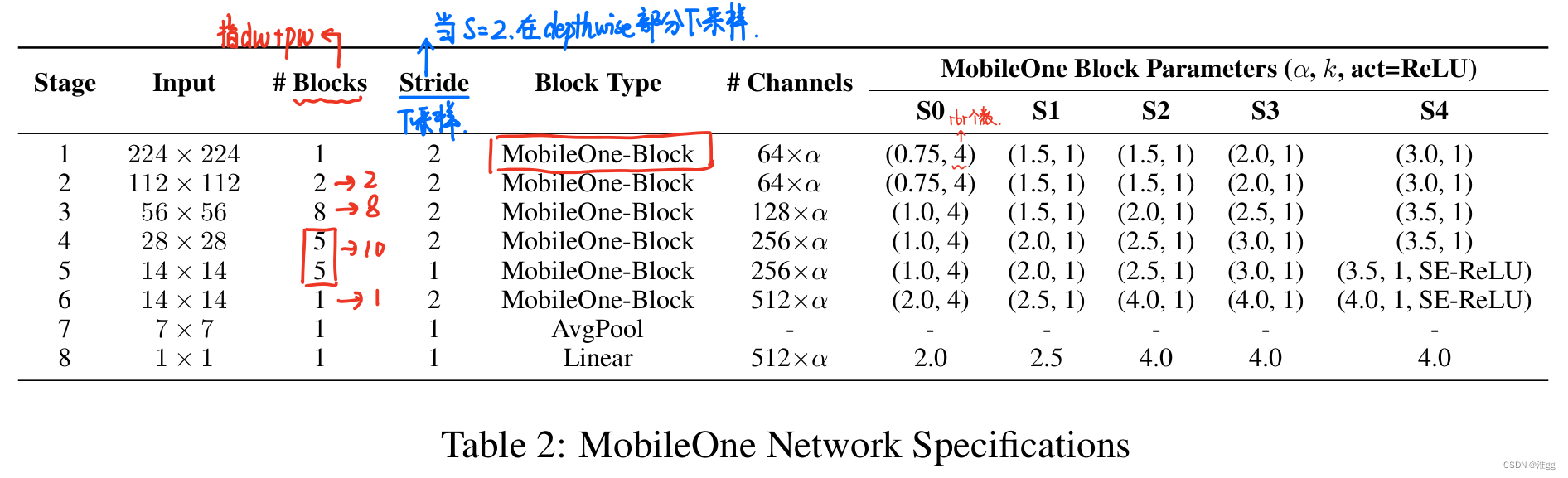

通过阅读MobileOne源码和结合论文中Table2可以发现以下两点:

(1)Table2中Block Type全写为MobileOne Block,但在源码中的Stage1和后面的Block是稍有不同的,因此在3.2改进YOLOv7时中使用MobileOne Block和MobileOne进行区分;

(2)源码将Stage4和Stage5写在了一起,因此在换Backbone时我们也写在一起,因此在yaml中会看到Stage1后面Blocks个数为【2,8,10,1】
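As a rough check on what the block counts [2, 8, 10, 1] imply for the feature maps, the sketch below walks through the downsampling. It assumes a stride-2 Stage 1 block and that only the first block of each later stage uses stride 2, as in `_make_stage` in the code of Section 3.2. The 640-pixel input size and the helper names are assumptions made for illustration.

```python
def stage_strides(num_blocks):
    """Per-block strides within one stage: only the first block downsamples."""
    return [2] + [1] * (num_blocks - 1)


def feature_map_sizes(input_size, blocks_per_stage):
    """Spatial size after the stride-2 Stage 1 block and after each later stage."""
    size = input_size // 2  # Stage 1: a single stride-2 block
    sizes = [size]
    for num_blocks in blocks_per_stage:
        for stride in stage_strides(num_blocks):
            size //= stride
        sizes.append(size)
    return sizes


blocks_per_stage = [2, 8, 10, 1]
sizes = feature_map_sizes(640, blocks_per_stage)  # 640 is an assumed input size
print(sizes)  # [320, 160, 80, 40, 20]
```

Under these assumptions the last three sizes (80, 40, 20) correspond to strides 8, 16 and 32, the P3, P4 and P5 levels that the detection head consumes.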

3.2 Implementation Steps

Step 1: Build the MobileOneBlock, MobileOne, and SEBlock modules and the reparameterization helper

Add the following code to models/common.py in the project:

```python
# ====MobileOne====#
# torch, torch.nn as nn and torch.nn.functional as F are expected to be imported already in common.py.
import copy as copy2  # aliased to avoid clashing with the copy already imported in common.py; used for MobileOne reparameterization
from typing import Optional, List, Tuple


class SEBlock(nn.Module):
    """ Squeeze and Excite module.

        https://arxiv.org/pdf/1709.01507.pdf
    """

    def __init__(self, in_channels: int, rd_ratio: float = 0.0625) -> None:
        """ Construct a Squeeze and Excite Module.

        :param in_channels: Number of input channels.
        :param rd_ratio: Input channel reduction ratio.
        """
        super(SEBlock, self).__init__()
        self.reduce = nn.Conv2d(in_channels=in_channels, out_channels=int(in_channels * rd_ratio),
                                kernel_size=1, stride=1, bias=True)
        self.expand = nn.Conv2d(in_channels=int(in_channels * rd_ratio), out_channels=in_channels,
                                kernel_size=1, stride=1, bias=True)

    def forward(self, inputs: torch.Tensor) -> torch.Tensor:
        """ Apply forward pass. """
        b, c, h, w = inputs.size()
        x = F.avg_pool2d(inputs, kernel_size=[h, w])
        x = self.reduce(x)
        x = F.relu(x)
        x = self.expand(x)
        x = torch.sigmoid(x)
        x = x.view(-1, c, 1, 1)
        return inputs * x


class MobileOneBlock(nn.Module):
    """ MobileOne building block. https://arxiv.org/pdf/2206.04040.pdf """

    def __init__(self, in_channels: int, out_channels: int, kernel_size: int, stride: int = 1,
                 padding: int = 0, dilation: int = 1, groups: int = 1, use_se: bool = False,
                 num_conv_branches: int = 1, inference_mode: bool = False) -> None:
        """ Construct a MobileOneBlock module.

        :param in_channels: Number of channels in the input.
        :param out_channels: Number of channels produced by the block.
        :param kernel_size: Size of the convolution kernel.
        :param stride: Stride size.
        :param padding: Zero-padding size.
        :param dilation: Kernel dilation factor.
        :param groups: Group number.
        :param inference_mode: If True, instantiates model in inference mode.
        :param use_se: Whether to use SE-ReLU activations.
        :param num_conv_branches: Number of linear conv branches.
        """
        super(MobileOneBlock, self).__init__()
        self.inference_mode = inference_mode
        self.groups = groups
        self.stride = stride
        self.kernel_size = kernel_size
        self.in_channels = in_channels
        self.out_channels = out_channels
        self.num_conv_branches = num_conv_branches  # e.g. 4

        # Check if SE-ReLU is requested
        if use_se:
            self.se = SEBlock(out_channels)
        else:
            self.se = nn.Identity()
        self.activation = nn.ReLU()

        if inference_mode:
            self.reparam_conv = nn.Conv2d(in_channels=in_channels, out_channels=out_channels,
                                          kernel_size=kernel_size, stride=stride, padding=padding,
                                          dilation=dilation, groups=groups, bias=True)
        else:
            # Re-parameterizable skip connection (BN only)
            self.rbr_skip = nn.BatchNorm2d(num_features=in_channels) \
                if out_channels == in_channels and stride == 1 else None

            # Re-parameterizable conv branches
            rbr_conv = list()
            for _ in range(self.num_conv_branches):
                rbr_conv.append(self._conv_bn(kernel_size=kernel_size, padding=padding))
            self.rbr_conv = nn.ModuleList(rbr_conv)

            # Re-parameterizable scale branch
            self.rbr_scale = None
            if kernel_size > 1:
                self.rbr_scale = self._conv_bn(kernel_size=1, padding=0)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        """ Apply forward pass. """
        # Inference mode forward pass.
        if self.inference_mode:
            return self.activation(self.se(self.reparam_conv(x)))

        # Multi-branched train-time forward pass.
        # Skip branch output
        identity_out = 0
        if self.rbr_skip is not None:
            identity_out = self.rbr_skip(x)

        # Scale branch output
        scale_out = 0
        if self.rbr_scale is not None:
            scale_out = self.rbr_scale(x)

        # Other branches
        out = scale_out + identity_out
        for ix in range(self.num_conv_branches):
            out += self.rbr_conv[ix](x)

        return self.activation(self.se(out))

    def reparameterize(self):
        """ Following works like `RepVGG: Making VGG-style ConvNets Great Again` -
        https://arxiv.org/pdf/2101.03697.pdf. We re-parameterize multi-branched
        architecture used at training time to obtain a plain CNN-like structure
        for inference.
        """
        if self.inference_mode:
            return
        kernel, bias = self._get_kernel_bias()
        self.reparam_conv = nn.Conv2d(in_channels=self.rbr_conv[0].conv.in_channels,
                                      out_channels=self.rbr_conv[0].conv.out_channels,
                                      kernel_size=self.rbr_conv[0].conv.kernel_size,
                                      stride=self.rbr_conv[0].conv.stride,
                                      padding=self.rbr_conv[0].conv.padding,
                                      dilation=self.rbr_conv[0].conv.dilation,
                                      groups=self.rbr_conv[0].conv.groups,
                                      bias=True)
        self.reparam_conv.weight.data = kernel
        self.reparam_conv.bias.data = bias

        # Delete un-used branches
        for para in self.parameters():
            para.detach_()
        self.__delattr__('rbr_conv')
        self.__delattr__('rbr_scale')
        if hasattr(self, 'rbr_skip'):
            self.__delattr__('rbr_skip')

        self.inference_mode = True

    def _get_kernel_bias(self) -> Tuple[torch.Tensor, torch.Tensor]:
        """ Method to obtain re-parameterized kernel and bias.
        Reference: https://github.com/DingXiaoH/RepVGG/blob/main/repvgg.py#L83

        :return: Tuple of (kernel, bias) after fusing branches.
        """
        # get weights and bias of scale branch
        kernel_scale = 0
        bias_scale = 0
        if self.rbr_scale is not None:
            kernel_scale, bias_scale = self._fuse_bn_tensor(self.rbr_scale)
            # Pad scale branch kernel to match conv branch kernel size.
            pad = self.kernel_size // 2
            kernel_scale = torch.nn.functional.pad(kernel_scale, [pad, pad, pad, pad])

        # get weights and bias of skip branch
        kernel_identity = 0
        bias_identity = 0
        if self.rbr_skip is not None:
            kernel_identity, bias_identity = self._fuse_bn_tensor(self.rbr_skip)

        # get weights and bias of conv branches
        kernel_conv = 0
        bias_conv = 0
        for ix in range(self.num_conv_branches):
            _kernel, _bias = self._fuse_bn_tensor(self.rbr_conv[ix])
            kernel_conv += _kernel
            bias_conv += _bias

        kernel_final = kernel_conv + kernel_scale + kernel_identity
        bias_final = bias_conv + bias_scale + bias_identity
        return kernel_final, bias_final

    def _fuse_bn_tensor(self, branch) -> Tuple[torch.Tensor, torch.Tensor]:
        """ Method to fuse batchnorm layer with preceding conv layer.
        Reference: https://github.com/DingXiaoH/RepVGG/blob/main/repvgg.py#L95

        :param branch: Conv-BN nn.Sequential, or a bare nn.BatchNorm2d (skip branch).
        :return: Tuple of (kernel, bias) after fusing batchnorm.
        """
        if isinstance(branch, nn.Sequential):
            kernel = branch.conv.weight
            running_mean = branch.bn.running_mean
            running_var = branch.bn.running_var
            gamma = branch.bn.weight
            beta = branch.bn.bias
            eps = branch.bn.eps
        else:
            assert isinstance(branch, nn.BatchNorm2d)
            if not hasattr(self, 'id_tensor'):
                # Build an identity kernel so the BN-only skip branch can be fused like a conv.
                input_dim = self.in_channels // self.groups
                kernel_value = torch.zeros((self.in_channels, input_dim, self.kernel_size, self.kernel_size),
                                           dtype=branch.weight.dtype, device=branch.weight.device)
                for i in range(self.in_channels):
                    kernel_value[i, i % input_dim, self.kernel_size // 2, self.kernel_size // 2] = 1
                self.id_tensor = kernel_value
            kernel = self.id_tensor
            running_mean = branch.running_mean
            running_var = branch.running_var
            gamma = branch.weight
            beta = branch.bias
            eps = branch.eps
        std = (running_var + eps).sqrt()
        t = (gamma / std).reshape(-1, 1, 1, 1)
        return kernel * t, beta - running_mean * gamma / std

    def _conv_bn(self, kernel_size: int, padding: int) -> nn.Sequential:
        """ Helper method to construct conv-batchnorm layers.

        :param kernel_size: Size of the convolution kernel.
        :param padding: Zero-padding size.
        :return: Conv-BN module.
        """
        mod_list = nn.Sequential()
        mod_list.add_module('conv', nn.Conv2d(in_channels=self.in_channels, out_channels=self.out_channels,
                                              kernel_size=kernel_size, stride=self.stride, padding=padding,
                                              groups=self.groups, bias=False))
        mod_list.add_module('bn', nn.BatchNorm2d(num_features=self.out_channels))
        return mod_list


class MobileOne(nn.Module):
    """ MobileOne Model https://arxiv.org/pdf/2206.04040.pdf

    In this YOLOv7 integration each MobileOne module builds a single stage.
    """

    def __init__(self,
                 in_channels, out_channels,
                 num_blocks_per_stage=2, num_conv_branches: int = 1,
                 use_se: bool = False, num_se: int = 0,
                 inference_mode: bool = False, ) -> None:
        """ Construct one MobileOne stage.

        :param in_channels: Number of channels in the input.
        :param out_channels: Number of channels produced by the stage.
        :param num_blocks_per_stage: Number of blocks in this stage.
        :param num_conv_branches: Number of linear conv branches.
        :param use_se: Whether to use SE-ReLU activations.
        :param num_se: Number of SE blocks in this stage (only used when use_se is True).
        :param inference_mode: If True, instantiates model in inference mode.
        """
        super().__init__()
        self.inference_mode = inference_mode
        self.use_se = use_se
        self.num_conv_branches = num_conv_branches
        self.stage = self._make_stage(in_channels, out_channels, num_blocks_per_stage,
                                      num_se_blocks=num_se if use_se else 0)

    # out_channels corresponds to `planes` (the output channels) in the official code
    def _make_stage(self, in_channels, out_channels, num_blocks: int, num_se_blocks: int) -> nn.Sequential:
        """ Build a stage of MobileOne model.

        :param in_channels: Number of input channels.
        :param out_channels: Number of output channels.
        :param num_blocks: Number of blocks in this stage.
        :param num_se_blocks: Number of SE blocks in this stage.
        :return: A stage of MobileOne model.
        """
        # Get strides for all layers
        strides = [2] + [1] * (num_blocks - 1)
        blocks = []
        for ix, stride in enumerate(strides):  # one iteration per block in the stage
            use_se = False
            if num_se_blocks > num_blocks:
                raise ValueError("Number of SE blocks cannot "
                                 "exceed number of layers.")
            if ix >= (num_blocks - num_se_blocks):
                use_se = True

            # Depthwise conv
            blocks.append(MobileOneBlock(in_channels=in_channels, out_channels=in_channels,
                                         kernel_size=3, stride=stride, padding=1, groups=in_channels,
                                         inference_mode=self.inference_mode, use_se=use_se,
                                         num_conv_branches=self.num_conv_branches))
            # Pointwise conv
            blocks.append(MobileOneBlock(in_channels=in_channels, out_channels=out_channels,
                                         kernel_size=1, stride=1, padding=0, groups=1,
                                         inference_mode=self.inference_mode, use_se=use_se,
                                         num_conv_branches=self.num_conv_branches))
            in_channels = out_channels
        return nn.Sequential(*blocks)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        """ Apply forward pass. """
        x = self.stage(x)
        return x


def reparameterize_model(model: torch.nn.Module) -> nn.Module:
    """ Method returns a model where a multi-branched structure
        used in training is re-parameterized into a single branch
        for inference.

    :param model: MobileOne model in train mode.
    :return: MobileOne model in inference mode.
    """
    # Avoid editing original graph
    model = copy2.deepcopy(model)
    for module in model.modules():
        if hasattr(module, 'reparameterize'):
            module.reparameterize()
    return model
```
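The kernel merging done in `_get_kernel_bias` can be checked by hand on a tiny case. The sketch below is a simplified, single-channel illustration in plain Python, not the PyTorch code above. It assumes BatchNorm has already been folded away, pads a 1x1 scale kernel to 3x3, represents the skip branch as a centred identity kernel, and compares the summed branch outputs with one convolution using the merged kernel. All helper names and kernel values are hypothetical.

```python
def conv2d_same(image, kernel):
    """Single-channel 2-D cross-correlation, stride 1, zero padding of k // 2."""
    k = len(kernel)
    pad = k // 2
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            acc = 0.0
            for di in range(k):
                for dj in range(k):
                    ii, jj = i + di - pad, j + dj - pad
                    if 0 <= ii < h and 0 <= jj < w:
                        acc += kernel[di][dj] * image[ii][jj]
            out[i][j] = acc
    return out


def pad_kernel(kernel, target):
    """Zero-pad a small square kernel to target x target, keeping it centred."""
    k = len(kernel)
    pad = (target - k) // 2
    padded = [[0.0] * target for _ in range(target)]
    for i in range(k):
        for j in range(k):
            padded[i + pad][j + pad] = kernel[i][j]
    return padded


def add(a, b):
    """Element-wise sum of two equally sized 2-D lists."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


image = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]
conv_kernel = [[0.1, 0.2, 0.3], [0.4, 0.5, 0.6], [0.7, 0.8, 0.9]]  # 3x3 conv branch
scale_kernel = [[2.0]]                                             # 1x1 scale branch
identity_kernel = pad_kernel([[1.0]], 3)                           # skip branch as a 3x3 kernel

# Training time: three branches evaluated separately, then summed.
multi = add(add(conv2d_same(image, conv_kernel), conv2d_same(image, scale_kernel)), image)

# Inference time: one 3x3 kernel holding the sum of all branch kernels.
merged_kernel = add(add(conv_kernel, pad_kernel(scale_kernel, 3)), identity_kernel)
single = conv2d_same(image, merged_kernel)

max_diff = max(abs(a - b) for ra, rb in zip(multi, single) for a, b in zip(ra, rb))
print(max_diff < 1e-9)
```

In the real block the same sum is taken over 4-D weight tensors after each branch's BatchNorm has been fused by `_fuse_bn_tensor`.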

Step 2: Add the MobileOne building blocks to parse_model in yolo.py

```python
        elif m in [MobileOneBlock, MobileOne]:
            c1, c2 = ch[f], args[0]
            args = [c1, c2, *args[1:]]
```
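To see what this branch does to the yaml arguments, here is a simplified stand-alone sketch of the rewriting. The `build_args` helper and the way `ch` is tracked are assumptions made for illustration, so this is not the actual `parse_model` implementation.

```python
def build_args(ch, f, args):
    """Mimic the added branch: prepend the input channel count to the yaml args."""
    c1, c2 = ch[f], args[0]
    return [c1, c2, *args[1:]], c2


ch = [3]  # assumed: the network input has 3 channels (RGB)

# Layer 0 in the yaml: [-1, 1, MobileOneBlock, [48, 3, 2, 1]]
block_args, c2 = build_args(ch, -1, [48, 3, 2, 1])
ch.append(c2)
print(block_args)  # [3, 48, 3, 2, 1] -> in_channels, out_channels, kernel_size, stride, padding

# Layer 1 in the yaml: [-1, 1, MobileOne, [48, 2, 4, False, 0]]
stage_args, c2 = build_args(ch, -1, [48, 2, 4, False, 0])
ch.append(c2)
print(stage_args)  # [48, 48, 2, 4, False, 0]
```

The rewritten list is then unpacked positionally into the module constructor, which is why the yaml only needs to specify the arguments from `out_channels` onward.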

Step 3: Create a new model file

This example replaces the backbone of yolov7-tiny with the ms0 model from MobileOne. The file is named yolov7-tiny-ms0.yaml.

```yaml
# parameters
nc: 3  # number of classes
depth_multiple: 1.0  # model depth multiple
width_multiple: 1.0  # layer channel multiple

# anchors
anchors:
  - [10,13, 16,30, 33,23]  # P3/8
  - [30,61, 62,45, 59,119]  # P4/16
  - [116,90, 156,198, 373,326]  # P5/32

# yolov7-tiny backbone
backbone:
  # [from, number, module, args] c2, k=1, s=1, p=None, g=1, act=True
  [[-1, 1, MobileOneBlock, [48, 3, 2, 1]],  # 0
   # MobileOne args: [out_channels, num_blocks, num_conv_branches, use_se, num_se, inference_mode]
   [-1, 1, MobileOne, [48, 2, 4, False, 0]],
   [-1, 1, MobileOne, [128, 8, 4, False, 0]],
   [-1, 1, MobileOne, [256, 10, 4, False, 0]],
   [-1, 1, MobileOne, [512, 1, 4, False, 0]],  # 4
  ]

# yolov7-tiny head
head:
  [[-1, 1, Conv, [256, 1, 1, None, 1, nn.LeakyReLU(0.1)]],
   [-2, 1, Conv, [256, 1, 1, None, 1, nn.LeakyReLU(0.1)]],
   [-1, 1, SP, [5]],
   [-2, 1, SP, [9]],
   [-3, 1, SP, [13]],
   [[-1, -2, -3, -4], 1, Concat, [1]],
   [-1, 1, Conv, [256, 1, 1, None, 1, nn.LeakyReLU(0.1)]],
   [[-1, -7], 1, Concat, [1]],
   [-1, 1, Conv, [256, 1, 1, None, 1, nn.LeakyReLU(0.1)]],  # 13

   [-1, 1, Conv, [128, 1, 1, None, 1, nn.LeakyReLU(0.1)]],
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],
   [3, 1, Conv, [128, 1, 1, None, 1, nn.LeakyReLU(0.1)]],  # route backbone P4
   [[-1, -2], 1, Concat, [1]],

   [-1, 1, Conv, [64, 1, 1, None, 1, nn.LeakyReLU(0.1)]],
   [-2, 1, Conv, [64, 1, 1, None, 1, nn.LeakyReLU(0.1)]],
   [-1, 1, Conv, [64, 3, 1, None, 1, nn.LeakyReLU(0.1)]],
   [-1, 1, Conv, [64, 3, 1, None, 1, nn.LeakyReLU(0.1)]],
   [[-1, -2, -3, -4], 1, Concat, [1]],
   [-1, 1, Conv, [128, 1, 1, None, 1, nn.LeakyReLU(0.1)]],  # 23

   [-1, 1, Conv, [64, 1, 1, None, 1, nn.LeakyReLU(0.1)]],
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],
   [2, 1, Conv, [64, 1, 1, None, 1, nn.LeakyReLU(0.1)]],
   [[-1, -2], 1, Concat, [1]],  # 27

   [-1, 1, Conv, [32, 1, 1, None, 1, nn.LeakyReLU(0.1)]],
   [-2, 1, Conv, [32, 1, 1, None, 1, nn.LeakyReLU(0.1)]],
   [-1, 1, Conv, [32, 3, 1, None, 1, nn.LeakyReLU(0.1)]],
   [-1, 1, Conv, [32, 3, 1, None, 1, nn.LeakyReLU(0.1)]],
   [[-1, -2, -3, -4], 1, Concat, [1]],
   [-1, 1, Conv, [64, 1, 1, None, 1, nn.LeakyReLU(0.1)]],  # 33

   [-1, 1, Conv, [128, 3, 2, None, 1, nn.LeakyReLU(0.1)]],
   [[-1, 23], 1, Concat, [1]],

   [-1, 1, Conv, [64, 1, 1, None, 1, nn.LeakyReLU(0.1)]],
   [-2, 1, Conv, [64, 1, 1, None, 1, nn.LeakyReLU(0.1)]],
   [-1, 1, Conv, [64, 3, 1, None, 1, nn.LeakyReLU(0.1)]],
   [-1, 1, Conv, [64, 3, 1, None, 1, nn.LeakyReLU(0.1)]],
   [[-1, -2, -3, -4], 1, Concat, [1]],
   [-1, 1, Conv, [128, 1, 1, None, 1, nn.LeakyReLU(0.1)]],  # 41

   [-1, 1, Conv, [256, 3, 2, None, 1, nn.LeakyReLU(0.1)]],
   [[-1, 13], 1, Concat, [1]],

   [-1, 1, Conv, [128, 1, 1, None, 1, nn.LeakyReLU(0.1)]],
   [-2, 1, Conv, [128, 1, 1, None, 1, nn.LeakyReLU(0.1)]],
   [-1, 1, Conv, [128, 3, 1, None, 1, nn.LeakyReLU(0.1)]],
   [-1, 1, Conv, [128, 3, 1, None, 1, nn.LeakyReLU(0.1)]],
   [[-1, -2, -3, -4], 1, Concat, [1]],
   [-1, 1, Conv, [256, 1, 1, None, 1, nn.LeakyReLU(0.1)]],  # 49

   [33, 1, Conv, [128, 3, 1, None, 1, nn.LeakyReLU(0.1)]],
   [41, 1, Conv, [256, 3, 1, None, 1, nn.LeakyReLU(0.1)]],
   [49, 1, Conv, [512, 3, 1, None, 1, nn.LeakyReLU(0.1)]],  # 52

   [[50, 51, 52], 1, IDetect, [nc, anchors]],  # Detect(P3, P4, P5)
  ]
```
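To get a feel for how much structure this backbone carries at training time, the sketch below counts the MobileOneBlocks and their branches for the four MobileOne stages in the yaml. It applies the construction rules from the Step 1 code: `num_conv_branches` conv branches per block, a scale branch when the kernel is larger than 1, and a skip branch when the channels match and the stride is 1. The helper names are hypothetical.

```python
def block_branches(in_channels, out_channels, kernel_size, stride, num_conv_branches):
    """Number of training-time branches in one MobileOneBlock."""
    scale = 1 if kernel_size > 1 else 0
    skip = 1 if (in_channels == out_channels and stride == 1) else 0
    return num_conv_branches + scale + skip


def stage_summary(in_channels, out_channels, num_blocks, num_conv_branches):
    """Return (MobileOneBlock count, training-time branch count) for one stage."""
    blocks, branches = 0, 0
    for stride in [2] + [1] * (num_blocks - 1):
        # Depthwise 3x3 block, then pointwise 1x1 block.
        branches += block_branches(in_channels, in_channels, 3, stride, num_conv_branches)
        branches += block_branches(in_channels, out_channels, 1, 1, num_conv_branches)
        blocks += 2
        in_channels = out_channels
    return blocks, branches


# (in_channels, out_channels, num_blocks, num_conv_branches) for backbone layers 1 to 4
backbone = [(48, 48, 2, 4), (48, 128, 8, 4), (128, 256, 10, 4), (256, 512, 1, 4)]
summary = [stage_summary(*cfg) for cfg in backbone]
total_blocks = sum(b for b, _ in summary)
total_branches = sum(br for _, br in summary)
print(summary)                       # [(4, 21), (16, 86), (20, 108), (2, 9)]
print(total_blocks, total_branches)  # 42 224
```

After reparameterization each MobileOneBlock holds a single convolution, so under these rules the 224 training-time branches collapse into 42 convolutions.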

Step 4: Reparameterize for inference

In the fuse method of the Model class in yolo.py, add a branch for MobileOne and MobileOneBlock:

```python
    def fuse(self):  # fuse model Conv2d() + BatchNorm2d() layers
        print('Fusing layers... ')
        for m in self.model.modules():
            if isinstance(m, RepConv):
                # print(f" fuse_repvgg_block")
                m.fuse_repvgg_block()
            elif isinstance(m, RepConv_OREPA):
                # print(f" switch_to_deploy")
                m.switch_to_deploy()
            # ====== added for MobileOne ======
            elif isinstance(m, (MobileOne, MobileOneBlock)) and hasattr(m, 'reparameterize'):
                m.reparameterize()
            # =================================
            elif type(m) is Conv and hasattr(m, 'bn'):
                m.conv = fuse_conv_and_bn(m.conv, m.bn)  # update conv
                delattr(m, 'bn')  # remove batchnorm
                m.forward = m.fuseforward  # update forward
            elif isinstance(m, (IDetect, IAuxDetect)):
                m.fuse()
                m.forward = m.fuseforward
        self.info()
        return self
```
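The dispatch pattern used here (walk every module and call `reparameterize` where it exists) can be tried without PyTorch. The toy classes below are hypothetical stand-ins, not the real MobileOneBlock or YOLOv7 Model. Each block holds a few scalar branch weights that collapse into one, and the check at the end confirms that the fused copy gives the same output while the original stays in training form.

```python
import copy


class ToyBlock:
    """Hypothetical stand-in for MobileOneBlock: scalar branches that can be merged."""

    def __init__(self, branch_weights):
        self.branch_weights = list(branch_weights)
        self.inference_mode = False

    def forward(self, x):
        if self.inference_mode:
            return self.fused_weight * x
        return sum(w * x for w in self.branch_weights)

    def reparameterize(self):
        if self.inference_mode:
            return
        self.fused_weight = sum(self.branch_weights)
        del self.branch_weights  # drop the unused training-time branches
        self.inference_mode = True


class ToyModel:
    """Hypothetical container exposing modules() like a torch.nn.Module would."""

    def __init__(self, blocks):
        self.blocks = blocks

    def modules(self):
        return iter(self.blocks)

    def forward(self, x):
        for block in self.blocks:
            x = block.forward(x)
        return x


def fuse_in_place(model):
    """Call reparameterize() on every module that provides it."""
    for m in model.modules():
        if hasattr(m, 'reparameterize'):
            m.reparameterize()
    return model


model = ToyModel([ToyBlock([0.5, 0.25]), ToyBlock([1.0, 2.0, 3.0])])
before = model.forward(2.0)
deployed = fuse_in_place(copy.deepcopy(model))  # copy first, as reparameterize_model does
after = deployed.forward(2.0)
print(abs(before - after) < 1e-12)
```

Note the design difference: the fuse method edits the model in place, whereas `reparameterize_model` works on a deep copy and leaves the training-time model untouched.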

Once the steps above are complete, training and testing can proceed as usual.

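4. Pretrained Weights

The pretrained weights provided here are based on the official MobileOne-ms0 pretrained weights and have been made compatible with the YOLOv7 project.

Link: https://github.com/uniquechow/YOLO_series_doc/tree/main/lightweight/MobileOne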

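If you have any other questions, feel free to get in touch by private message.

Copyright belongs to the original author, 淮gg. In case of infringement, please contact us for removal.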