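For the principles and details of DenseNet, see the previous post: "Classic Neural Network Papers Explained in Detail (6): DenseNet Study Notes (translation + close reading + code reproduction)".

Now let's reproduce the code.

DenseNet model overview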

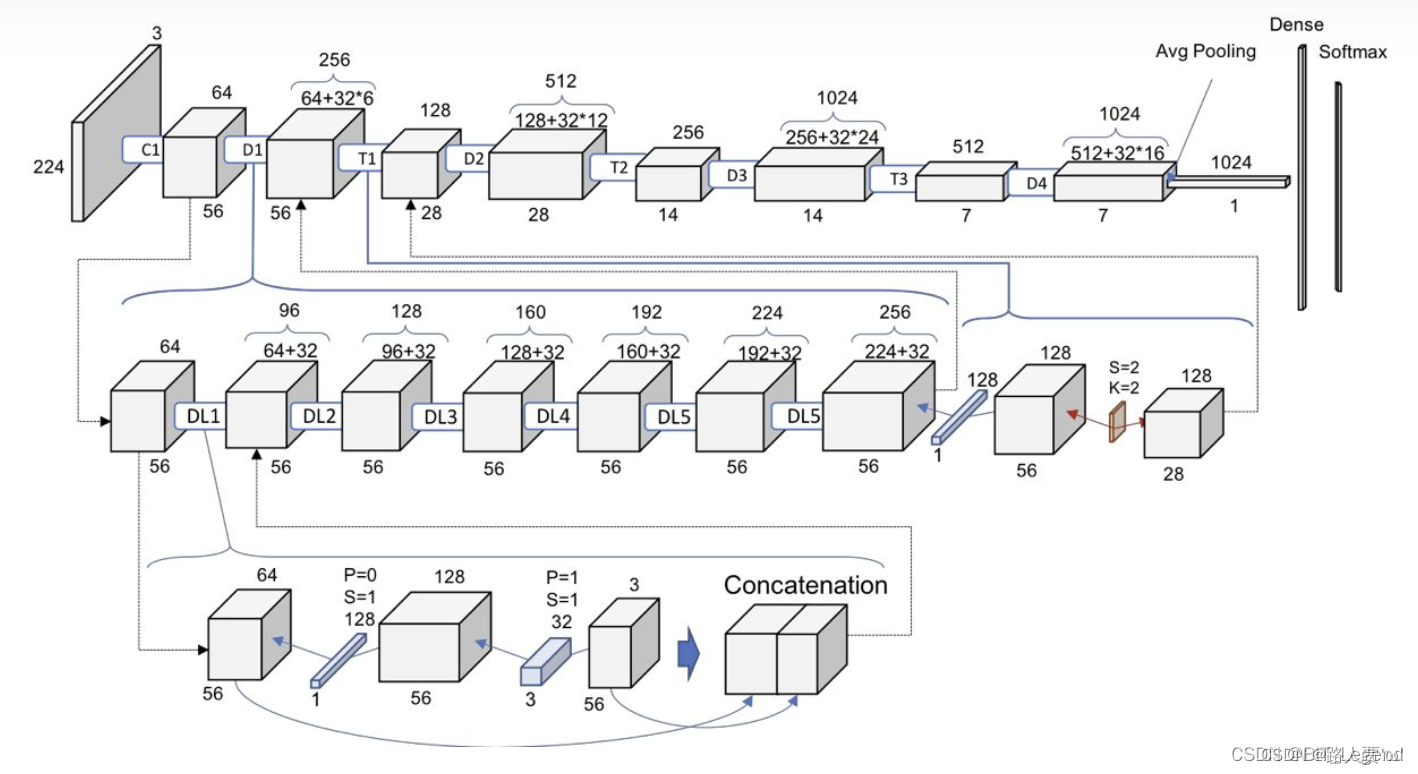

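The DenseNet model is built from three core structures: DenseLayer (the basic atomic unit of the model, which performs one round of feature extraction; third row of the figure), DenseBlock (the basic unit of dense connectivity; left part of the second row) and Transition (the transition unit between dense blocks; right part of the second row). Stacking these structures and appending a classification layer gives the complete model.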

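**DenseLayer** consists of BN + ReLU + 1×1 Conv + BN + ReLU + 3×3 Conv. Within a DenseBlock all layers have feature maps of the same spatial size, so they can be concatenated along the channel dimension. Every layer in every DenseBlock outputs k feature maps after its convolutions, i.e. the output has k channels (equivalently, the layer uses k convolution kernels). In DenseNet, k is called the growth rate and is a hyperparameter. A fairly small k (for example 12) is usually enough to get good performance.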

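**DenseBlock** is simply a stack of DenseLayers. The layers inside one DenseBlock are densely connected, and the spatial size of the feature maps stays constant inside the block: there is no stride-2 convolution and no pooling.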

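**Transition** consists of BN + ReLU + 1×1 Conv + 2×2 AvgPool. The 1×1 Conv reduces the number of channels, and the 2×2 AvgPool halves the spatial size of the feature maps. The Transition layer can also compress the model. If the preceding DenseBlock outputs m channels, the Transition layer can produce ⌊θm⌋ feature maps (through its convolution), where θ ∈ (0, 1] is the compression rate. With θ = 1 the number of feature maps is unchanged by the Transition layer, i.e. there is no compression. A network with θ < 1 is called DenseNet-C, and the paper uses θ = 0.5. A network that combines bottleneck DenseBlocks with Transition layers that have θ < 1 is called DenseNet-BC.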
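The compression rule ⌊θm⌋ is easy to check numerically. The snippet below is only an illustrative sketch and is not part of the original post; the helper name `transition_out_channels` is hypothetical.

```python
import math


def transition_out_channels(m: int, theta: float = 0.5) -> int:
    """Number of feature maps a Transition layer emits: floor(theta * m)."""
    if not 0.0 < theta <= 1.0:
        raise ValueError("theta must lie in (0, 1]")
    return math.floor(theta * m)


print(transition_out_channels(256, 0.5))  # 128
print(transition_out_channels(256, 1.0))  # 256, no compression
print(transition_out_channels(255, 0.5))  # 127, floor of 127.5
```

With θ = 0.5 and an even m this is the same as the integer division `m // 2` used for the Transition layers later in the code.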

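1. Building the initial convolution layer

The initial convolution layer consists of a 7×7 conv layer followed by a 3×3 max-pooling layer, both with stride 2.

Code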

'''------------- 1. Build the initial convolution layer -------------'''
import torch
import torch.nn as nn

# 7x7 conv (stride 2) + BN + ReLU, followed by a 3x3 max pool (stride 2)
def Conv1(in_planes, places, stride=2):
    return nn.Sequential(
        nn.Conv2d(in_channels=in_planes, out_channels=places, kernel_size=7, stride=stride, padding=3, bias=False),
        nn.BatchNorm2d(places),
        nn.ReLU(inplace=True),
        nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
    )
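To see what these two stride-2 layers do to the spatial size, the standard output-size formula for convolution and pooling (dilation 1, floor rounding) can be applied by hand. This is a small sketch for illustration; the helper name `conv_out_size` and the 224×224 input size are assumptions made for the example.

```python
def conv_out_size(n: int, kernel: int, stride: int, padding: int) -> int:
    """Output length along one spatial dimension (dilation 1, floor rounding)."""
    return (n + 2 * padding - kernel) // stride + 1


# 7x7 conv, stride 2, padding 3, applied to a 224x224 input
after_conv = conv_out_size(224, kernel=7, stride=2, padding=3)
# 3x3 max pool, stride 2, padding 1
after_pool = conv_out_size(after_conv, kernel=3, stride=2, padding=1)

print(after_conv)  # 112
print(after_pool)  # 56
```

So a 224×224 image enters the first DenseBlock as a 56×56 feature map.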

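2. Building the Dense Block module

2.1 Building the internal structure of the Dense Block

DenseLayer is the internal building unit of the Dense Block: BN + ReLU + 1×1 Conv + BN + ReLU + 3×3 Conv, with a dropout layer added at the end for use during training. It performs the feature extraction and controls how many feature maps a layer works with once the input has passed through the network; for example, the first may take 5 feature maps as input and the next one 4.

One thing that differs from other networks: although every DenseLayer applies the same feature-extraction function, each one takes the concatenation of all preceding feature maps as its input, so the input width is different for every layer. With growth_rate = 32, for instance, each layer's input has 32 more channels than the previous layer's. The block therefore has to define num_layers layers, each with a different number of input channels, which is the interesting part of this model.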
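The linear growth of the input width can be written down directly. The following is a minimal sketch rather than code from the post; `dense_block_in_channels` is a hypothetical helper, and the values 64 and 32 are the initial channel count and growth rate used later in this post.

```python
from typing import List


def dense_block_in_channels(in_channels: int, growth_rate: int, num_layers: int) -> List[int]:
    """Input channel count seen by each DenseLayer inside one DenseBlock."""
    return [in_channels + i * growth_rate for i in range(num_layers)]


widths = dense_block_in_channels(64, 32, 6)
print(widths)            # [64, 96, 128, 160, 192, 224]
print(widths[-1] + 32)   # 256 channels leave the block
```

These are the same widths that appear as the `BatchNorm2d` sizes of `denselayer1` to `denselayer6` in the printed model further down.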

Code

'''---(1) Build the internal structure of the Dense Block---'''
# BN + ReLU + 1x1 Conv + BN + ReLU + 3x3 Conv
class _DenseLayer(nn.Module):
    def __init__(self, inplace, growth_rate, bn_size, drop_rate=0):
        # inplace: number of input channels (unrelated to ReLU's inplace flag)
        super(_DenseLayer, self).__init__()
        self.drop_rate = drop_rate
        self.dense_layer = nn.Sequential(
            nn.BatchNorm2d(inplace),
            nn.ReLU(inplace=True),
            # growth_rate: how many feature maps one layer produces
            nn.Conv2d(in_channels=inplace, out_channels=bn_size * growth_rate, kernel_size=1, stride=1, padding=0, bias=False),
            nn.BatchNorm2d(bn_size * growth_rate),
            nn.ReLU(inplace=True),
            nn.Conv2d(in_channels=bn_size * growth_rate, out_channels=growth_rate, kernel_size=3, stride=1, padding=1, bias=False),
        )
        self.dropout = nn.Dropout(p=self.drop_rate)

    def forward(self, x):
        y = self.dense_layer(x)
        if self.drop_rate > 0:
            y = self.dropout(y)
        # concatenate the input with the new feature maps along the channel dimension
        return torch.cat([x, y], 1)
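The 1×1 convolution acts as a bottleneck: it caps the input of the 3×3 convolution at bn_size * growth_rate channels regardless of how wide the concatenated input has become. As a rough illustration (my own sketch, not from the post), the snippet below counts only convolution weights, ignoring BatchNorm parameters, and compares the bottleneck against a hypothetical layer that applies the 3×3 convolution directly to the input. The helper names are made up for this example.

```python
def direct_params(in_channels: int, growth_rate: int) -> int:
    """Weights of a single 3x3 conv applied straight to the input (no bias)."""
    return in_channels * growth_rate * 3 * 3


def bottleneck_params(in_channels: int, growth_rate: int, bn_size: int = 4) -> int:
    """Weights of the 1x1 conv plus the 3x3 conv used in _DenseLayer (no bias)."""
    mid = bn_size * growth_rate
    return in_channels * mid + mid * growth_rate * 3 * 3


for c in (64, 992):
    print(c, direct_params(c, 32), bottleneck_params(c, 32))
# 64 18432 45056
# 992 285696 163840
```

Under these assumptions the bottleneck costs more weights for the narrow first layer but clearly fewer once the input is wide, which is the case for most layers in the later blocks.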

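2.2 Building the Dense Block module

Next we implement the DenseBlock module, whose layers are densely connected (the number of input feature maps grows linearly).

Code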

'''---(2) Build the Dense Block module---'''
class DenseBlock(nn.Module):
    def __init__(self, num_layers, inplances, growth_rate, bn_size, drop_rate=0):
        super(DenseBlock, self).__init__()
        layers = []
        # each additional layer sees growth_rate more input channels than the previous one
        for i in range(num_layers):
            layers.append(_DenseLayer(inplances + i * growth_rate, growth_rate, bn_size, drop_rate))
        self.layers = nn.Sequential(*layers)

    def forward(self, x):
        return self.layers(x)

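3. Building the Transition module

The Transition module reduces model complexity. It consists of BN + ReLU + 1×1 Conv + 2×2 AvgPool. Every dense block increases the number of channels, and stacking too many of them would make the model overly complex, so the transition layer is used to keep complexity in check: a 1×1 convolution reduces the number of channels, and an average pooling layer with stride 2 halves the height and width.

Code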

'''------------- 3. Build the Transition layer -------------'''
# BN + ReLU + 1x1 Conv + 2x2 AvgPool
class _TransitionLayer(nn.Module):
    def __init__(self, inplace, plance):
        # inplace: number of input channels, plance: number of output channels
        super(_TransitionLayer, self).__init__()
        self.transition_layer = nn.Sequential(
            nn.BatchNorm2d(inplace),
            nn.ReLU(inplace=True),
            nn.Conv2d(in_channels=inplace, out_channels=plance, kernel_size=1, stride=1, padding=0, bias=False),
            nn.AvgPool2d(kernel_size=2, stride=2),
        )

    def forward(self, x):
        return self.transition_layer(x)

4. Assembling the DenseNet network

As shown in the figure below, DenseNet is mainly made up of several DenseBlocks.

Code

'''------------- 4. Assemble the DenseNet network -------------'''
class DenseNet(nn.Module):
    def __init__(self, init_channels=64, growth_rate=32, blocks=(6, 12, 24, 16), num_classes=10):
        super(DenseNet, self).__init__()
        bn_size = 4
        drop_rate = 0
        self.conv1 = Conv1(in_planes=3, places=init_channels)

        # the input width of the first block comes from the initial feature extraction
        num_features = init_channels

        # block 1 has 6 DenseLayers: DenseBlock(6, 64, 32, 4)
        self.layer1 = DenseBlock(num_layers=blocks[0], inplances=num_features, growth_rate=growth_rate, bn_size=bn_size, drop_rate=drop_rate)
        num_features = num_features + blocks[0] * growth_rate
        # transition 1: _TransitionLayer(256, 128)
        self.transition1 = _TransitionLayer(inplace=num_features, plance=num_features // 2)
        # num_features is halved, so block 2 receives num_features = 128
        num_features = num_features // 2

        # block 2 has 12 DenseLayers: DenseBlock(12, 128, 32, 4)
        self.layer2 = DenseBlock(num_layers=blocks[1], inplances=num_features, growth_rate=growth_rate, bn_size=bn_size, drop_rate=drop_rate)
        num_features = num_features + blocks[1] * growth_rate
        # transition 2: _TransitionLayer(512, 256)
        self.transition2 = _TransitionLayer(inplace=num_features, plance=num_features // 2)
        # num_features is halved, so block 3 receives num_features = 256
        num_features = num_features // 2

        # block 3 has 24 DenseLayers: DenseBlock(24, 256, 32, 4)
        self.layer3 = DenseBlock(num_layers=blocks[2], inplances=num_features, growth_rate=growth_rate, bn_size=bn_size, drop_rate=drop_rate)
        num_features = num_features + blocks[2] * growth_rate
        # transition 3: _TransitionLayer(1024, 512)
        self.transition3 = _TransitionLayer(inplace=num_features, plance=num_features // 2)
        # num_features is halved, so block 4 receives num_features = 512
        num_features = num_features // 2

        # block 4 has 16 DenseLayers: DenseBlock(16, 512, 32, 4)
        self.layer4 = DenseBlock(num_layers=blocks[3], inplances=num_features, growth_rate=growth_rate, bn_size=bn_size, drop_rate=drop_rate)
        num_features = num_features + blocks[3] * growth_rate

        # 7x7 average pool over the final feature map, then the classifier
        self.avgpool = nn.AvgPool2d(7, stride=1)
        self.fc = nn.Linear(num_features, num_classes)

    def forward(self, x):
        x = self.conv1(x)
        x = self.layer1(x)
        x = self.transition1(x)
        x = self.layer2(x)
        x = self.transition2(x)
        x = self.layer3(x)
        x = self.transition3(x)
        x = self.layer4(x)
        x = self.avgpool(x)
        # flatten to (batch, num_features) for the fully connected layer
        x = x.view(x.size(0), -1)
        x = self.fc(x)
        return x
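The channel counts quoted in the comments of the constructor can be reproduced without building the network. The sketch below is illustrative only; `trace_densenet` is a hypothetical helper, and the starting size of 56 assumes a 224×224 input that has already passed through the initial convolution and pooling layers.

```python
def trace_densenet(init_channels=64, growth_rate=32, blocks=(6, 12, 24, 16), size=56):
    """Return (stage, channels, spatial size) after each block and transition."""
    trace = []
    channels = init_channels
    for i, num_layers in enumerate(blocks, start=1):
        # every DenseLayer appends growth_rate channels to the running tensor
        channels += num_layers * growth_rate
        trace.append((f"block{i}", channels, size))
        if i < len(blocks):
            # the transition halves both the channel count and the spatial size
            channels //= 2
            size //= 2
            trace.append((f"transition{i}", channels, size))
    return trace


for stage in trace_densenet():
    print(stage)
# ('block1', 256, 56)
# ('transition1', 128, 28)
# ('block2', 512, 28)
# ('transition2', 256, 14)
# ('block3', 1024, 14)
# ('transition3', 512, 7)
# ('block4', 1024, 7)
```

The last entry explains the final layers: a 7×7 feature map with 1024 channels fits `nn.AvgPool2d(7)` and gives `nn.Linear` 1024 input features.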

5. Creating and testing the network model

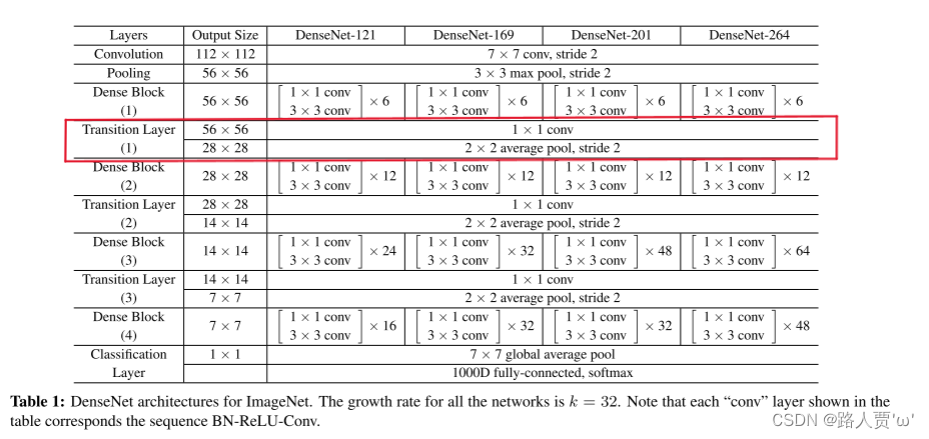

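5.1 Creating and printing the model: densenet121

Q: Where does the 121 in densenet121 come from?

The configuration has block_num = 4 with num_layer = (6, 12, 24, 16) DenseLayers per block, which gives 58 DenseLayers in total.

The code shows that each DenseLayer contains two convolutions. There are three _Transition layers with one convolution each, one convolution at the very start, and one fully connected layer at the end. In total: 58*2 + 3 + 1 + 1 = 121.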
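The same count can be written as a small function. This is a sketch for illustration and `densenet_depth` is a hypothetical helper; the second and third block configurations are not from this post but are, to my knowledge, the ones commonly given for DenseNet-169 and DenseNet-201.

```python
def densenet_depth(blocks):
    """Count weighted layers: convs in dense layers and transitions, stem conv, classifier."""
    dense_convs = 2 * sum(blocks)        # each DenseLayer has a 1x1 and a 3x3 conv
    transition_convs = len(blocks) - 1   # one 1x1 conv between consecutive blocks
    stem_conv = 1                        # the initial 7x7 conv
    classifier = 1                       # the final fully connected layer
    return dense_convs + transition_convs + stem_conv + classifier


print(densenet_depth((6, 12, 24, 16)))  # 121
print(densenet_depth((6, 12, 32, 32)))  # 169
print(densenet_depth((6, 12, 48, 32)))  # 201
```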

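Code

# DenseNet121 is not defined earlier in this post; this helper assumes it means
# the DenseNet class above with the (6, 12, 24, 16) block configuration.
def DenseNet121(num_classes=10):
    return DenseNet(init_channels=64, growth_rate=32, blocks=[6, 12, 24, 16], num_classes=num_classes)

if __name__ == '__main__':
    # model = torchvision.models.densenet121()
    model = DenseNet121()
    print(model)
    input = torch.randn(1, 3, 224, 224)
    out = model(input)
    print(out.shape)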

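The printed model is shown below. The module names in this listing (features, denseblock1, classifier) and its 1000-class classifier match torchvision's densenet121, i.e. the commented-out line above, rather than the DenseNet class defined in this post.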

DenseNet(

(features): Sequential(

(conv0): Conv2d(3, 64, kernel_size=(7, 7), stride=(2, 2), padding=(3, 3), bias=False)

(norm0): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu0): ReLU(inplace=True)

(pool0): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)

(denseblock1): _DenseBlock(

(denselayer1): _DenseLayer(

(norm1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(64, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer2): _DenseLayer(

(norm1): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(96, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer3): _DenseLayer(

(norm1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer4): _DenseLayer(

(norm1): BatchNorm2d(160, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(160, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer5): _DenseLayer(

(norm1): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(192, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer6): _DenseLayer(

(norm1): BatchNorm2d(224, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(224, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

)

(transition1): _Transition(

(norm): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(pool): AvgPool2d(kernel_size=2, stride=2, padding=0)

)

(denseblock2): _DenseBlock(

(denselayer1): _DenseLayer(

(norm1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer2): _DenseLayer(

(norm1): BatchNorm2d(160, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(160, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer3): _DenseLayer(

(norm1): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(192, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer4): _DenseLayer(

(norm1): BatchNorm2d(224, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(224, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer5): _DenseLayer(

(norm1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer6): _DenseLayer(

(norm1): BatchNorm2d(288, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(288, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer7): _DenseLayer(

(norm1): BatchNorm2d(320, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(320, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer8): _DenseLayer(

(norm1): BatchNorm2d(352, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(352, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer9): _DenseLayer(

(norm1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(384, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer10): _DenseLayer(

(norm1): BatchNorm2d(416, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(416, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer11): _DenseLayer(

(norm1): BatchNorm2d(448, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(448, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer12): _DenseLayer(

(norm1): BatchNorm2d(480, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(480, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

)

(transition2): _Transition(

(norm): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv): Conv2d(512, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(pool): AvgPool2d(kernel_size=2, stride=2, padding=0)

)

(denseblock3): _DenseBlock(

(denselayer1): _DenseLayer(

(norm1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer2): _DenseLayer(

(norm1): BatchNorm2d(288, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(288, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer3): _DenseLayer(

(norm1): BatchNorm2d(320, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(320, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer4): _DenseLayer(

(norm1): BatchNorm2d(352, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(352, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer5): _DenseLayer(

(norm1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(384, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer6): _DenseLayer(

(norm1): BatchNorm2d(416, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(416, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer7): _DenseLayer(

(norm1): BatchNorm2d(448, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(448, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer8): _DenseLayer(

(norm1): BatchNorm2d(480, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(480, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer9): _DenseLayer(

(norm1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(512, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer10): _DenseLayer(

(norm1): BatchNorm2d(544, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(544, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer11): _DenseLayer(

(norm1): BatchNorm2d(576, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(576, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer12): _DenseLayer(

(norm1): BatchNorm2d(608, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(608, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer13): _DenseLayer(

(norm1): BatchNorm2d(640, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(640, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer14): _DenseLayer(

(norm1): BatchNorm2d(672, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(672, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer15): _DenseLayer(

(norm1): BatchNorm2d(704, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(704, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer16): _DenseLayer(

(norm1): BatchNorm2d(736, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(736, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer17): _DenseLayer(

(norm1): BatchNorm2d(768, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(768, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer18): _DenseLayer(

(norm1): BatchNorm2d(800, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(800, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer19): _DenseLayer(

(norm1): BatchNorm2d(832, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(832, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer20): _DenseLayer(

(norm1): BatchNorm2d(864, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(864, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer21): _DenseLayer(

(norm1): BatchNorm2d(896, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(896, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer22): _DenseLayer(

(norm1): BatchNorm2d(928, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(928, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer23): _DenseLayer(

(norm1): BatchNorm2d(960, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(960, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer24): _DenseLayer(

(norm1): BatchNorm2d(992, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(992, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

)

(transition3): _Transition(

(norm): BatchNorm2d(1024, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv): Conv2d(1024, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(pool): AvgPool2d(kernel_size=2, stride=2, padding=0)

)

(denseblock4): _DenseBlock(

(denselayer1): _DenseLayer(

(norm1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(512, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer2): _DenseLayer(

(norm1): BatchNorm2d(544, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(544, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer3): _DenseLayer(

(norm1): BatchNorm2d(576, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(576, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer4): _DenseLayer(

(norm1): BatchNorm2d(608, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(608, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer5): _DenseLayer(

(norm1): BatchNorm2d(640, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(640, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer6): _DenseLayer(

(norm1): BatchNorm2d(672, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(672, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer7): _DenseLayer(

(norm1): BatchNorm2d(704, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(704, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer8): _DenseLayer(

(norm1): BatchNorm2d(736, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(736, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer9): _DenseLayer(

(norm1): BatchNorm2d(768, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(768, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer10): _DenseLayer(

(norm1): BatchNorm2d(800, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(800, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer11): _DenseLayer(

(norm1): BatchNorm2d(832, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(832, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer12): _DenseLayer(

(norm1): BatchNorm2d(864, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(864, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer13): _DenseLayer(

(norm1): BatchNorm2d(896, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(896, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer14): _DenseLayer(

(norm1): BatchNorm2d(928, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(928, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer15): _DenseLayer(

(norm1): BatchNorm2d(960, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(960, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

(denselayer16): _DenseLayer(

(norm1): BatchNorm2d(992, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu1): ReLU(inplace=True)

(conv1): Conv2d(992, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu2): ReLU(inplace=True)

(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

)

)

(norm5): BatchNorm2d(1024, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(classifier): Linear(in_features=1024, out_features=1000, bias=True)

)
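The input widths in the printout above follow a simple rule. With growth rate 32, the i-th layer of a block whose input has c0 channels receives c0 + (i - 1) * 32 channels, so a 16-layer block that starts at 512 channels ends at 1024, which is the width of norm5. A minimal sketch of that arithmetic in plain Python (the helper name `dense_block_input_channels` is hypothetical and not part of the original code):

```python
def dense_block_input_channels(in_channels, growth_rate, num_layers):
    """Return the input channel count of each dense layer, plus the block output."""
    # Layer i (0-based) sees the block input plus i earlier outputs of growth_rate channels
    inputs = [in_channels + i * growth_rate for i in range(num_layers)]
    # After the last layer, one more growth_rate-wide output has been concatenated
    output = in_channels + num_layers * growth_rate
    return inputs, output


inputs, output = dense_block_input_channels(in_channels=512, growth_rate=32, num_layers=16)
# denselayer10 input, denselayer16 input, block output
print(inputs[9], inputs[15], output)  # 800 992 1024
```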

5.2 Printing per-layer details of the model with torchsummary

Code

if __name__ == '__main__':
    # .cuda() requires a CUDA-capable GPU
    net = DenseNet(num_classes=10).cuda()
    summary(net, (3, 224, 224))

The printed summary is as follows

PyTorch Version: 1.12.0+cu113

Torchvision Version: 0.13.0+cu113

----------------------------------------------------------------

Layer (type) Output Shape Param #

================================================================

Conv2d-1 [-1, 64, 112, 112] 9,408

BatchNorm2d-2 [-1, 64, 112, 112] 128

ReLU-3 [-1, 64, 112, 112] 0

MaxPool2d-4 [-1, 64, 56, 56] 0

BatchNorm2d-5 [-1, 64, 56, 56] 128

ReLU-6 [-1, 64, 56, 56] 0

Conv2d-7 [-1, 128, 56, 56] 8,192

BatchNorm2d-8 [-1, 128, 56, 56] 256

ReLU-9 [-1, 128, 56, 56] 0

Conv2d-10 [-1, 32, 56, 56] 36,864

_DenseLayer-11 [-1, 96, 56, 56] 0

BatchNorm2d-12 [-1, 96, 56, 56] 192

ReLU-13 [-1, 96, 56, 56] 0

Conv2d-14 [-1, 128, 56, 56] 12,288

BatchNorm2d-15 [-1, 128, 56, 56] 256

ReLU-16 [-1, 128, 56, 56] 0

Conv2d-17 [-1, 32, 56, 56] 36,864

_DenseLayer-18 [-1, 128, 56, 56] 0

BatchNorm2d-19 [-1, 128, 56, 56] 256

ReLU-20 [-1, 128, 56, 56] 0

Conv2d-21 [-1, 128, 56, 56] 16,384

BatchNorm2d-22 [-1, 128, 56, 56] 256

ReLU-23 [-1, 128, 56, 56] 0

Conv2d-24 [-1, 32, 56, 56] 36,864

_DenseLayer-25 [-1, 160, 56, 56] 0

BatchNorm2d-26 [-1, 160, 56, 56] 320

ReLU-27 [-1, 160, 56, 56] 0

Conv2d-28 [-1, 128, 56, 56] 20,480

BatchNorm2d-29 [-1, 128, 56, 56] 256

ReLU-30 [-1, 128, 56, 56] 0

Conv2d-31 [-1, 32, 56, 56] 36,864

_DenseLayer-32 [-1, 192, 56, 56] 0

BatchNorm2d-33 [-1, 192, 56, 56] 384

ReLU-34 [-1, 192, 56, 56] 0

Conv2d-35 [-1, 128, 56, 56] 24,576

BatchNorm2d-36 [-1, 128, 56, 56] 256

ReLU-37 [-1, 128, 56, 56] 0

Conv2d-38 [-1, 32, 56, 56] 36,864

_DenseLayer-39 [-1, 224, 56, 56] 0

BatchNorm2d-40 [-1, 224, 56, 56] 448

ReLU-41 [-1, 224, 56, 56] 0

Conv2d-42 [-1, 128, 56, 56] 28,672

BatchNorm2d-43 [-1, 128, 56, 56] 256

ReLU-44 [-1, 128, 56, 56] 0

Conv2d-45 [-1, 32, 56, 56] 36,864

_DenseLayer-46 [-1, 256, 56, 56] 0

DenseBlock-47 [-1, 256, 56, 56] 0

BatchNorm2d-48 [-1, 256, 56, 56] 512

ReLU-49 [-1, 256, 56, 56] 0

Conv2d-50 [-1, 128, 56, 56] 32,768

AvgPool2d-51 [-1, 128, 28, 28] 0

_TransitionLayer-52 [-1, 128, 28, 28] 0

BatchNorm2d-53 [-1, 128, 28, 28] 256

ReLU-54 [-1, 128, 28, 28] 0

Conv2d-55 [-1, 128, 28, 28] 16,384

BatchNorm2d-56 [-1, 128, 28, 28] 256

ReLU-57 [-1, 128, 28, 28] 0

Conv2d-58 [-1, 32, 28, 28] 36,864

_DenseLayer-59 [-1, 160, 28, 28] 0

BatchNorm2d-60 [-1, 160, 28, 28] 320

ReLU-61 [-1, 160, 28, 28] 0

Conv2d-62 [-1, 128, 28, 28] 20,480

BatchNorm2d-63 [-1, 128, 28, 28] 256

ReLU-64 [-1, 128, 28, 28] 0

Conv2d-65 [-1, 32, 28, 28] 36,864

_DenseLayer-66 [-1, 192, 28, 28] 0

BatchNorm2d-67 [-1, 192, 28, 28] 384

ReLU-68 [-1, 192, 28, 28] 0

Conv2d-69 [-1, 128, 28, 28] 24,576

BatchNorm2d-70 [-1, 128, 28, 28] 256

ReLU-71 [-1, 128, 28, 28] 0

Conv2d-72 [-1, 32, 28, 28] 36,864

_DenseLayer-73 [-1, 224, 28, 28] 0

BatchNorm2d-74 [-1, 224, 28, 28] 448

ReLU-75 [-1, 224, 28, 28] 0

Conv2d-76 [-1, 128, 28, 28] 28,672

BatchNorm2d-77 [-1, 128, 28, 28] 256

ReLU-78 [-1, 128, 28, 28] 0

Conv2d-79 [-1, 32, 28, 28] 36,864

_DenseLayer-80 [-1, 256, 28, 28] 0

BatchNorm2d-81 [-1, 256, 28, 28] 512

ReLU-82 [-1, 256, 28, 28] 0

Conv2d-83 [-1, 128, 28, 28] 32,768

BatchNorm2d-84 [-1, 128, 28, 28] 256

ReLU-85 [-1, 128, 28, 28] 0

Conv2d-86 [-1, 32, 28, 28] 36,864

_DenseLayer-87 [-1, 288, 28, 28] 0

BatchNorm2d-88 [-1, 288, 28, 28] 576

ReLU-89 [-1, 288, 28, 28] 0

Conv2d-90 [-1, 128, 28, 28] 36,864

BatchNorm2d-91 [-1, 128, 28, 28] 256

ReLU-92 [-1, 128, 28, 28] 0

Conv2d-93 [-1, 32, 28, 28] 36,864

_DenseLayer-94 [-1, 320, 28, 28] 0

BatchNorm2d-95 [-1, 320, 28, 28] 640

ReLU-96 [-1, 320, 28, 28] 0

Conv2d-97 [-1, 128, 28, 28] 40,960

BatchNorm2d-98 [-1, 128, 28, 28] 256

ReLU-99 [-1, 128, 28, 28] 0

Conv2d-100 [-1, 32, 28, 28] 36,864

_DenseLayer-101 [-1, 352, 28, 28] 0

BatchNorm2d-102 [-1, 352, 28, 28] 704

ReLU-103 [-1, 352, 28, 28] 0

Conv2d-104 [-1, 128, 28, 28] 45,056

BatchNorm2d-105 [-1, 128, 28, 28] 256

ReLU-106 [-1, 128, 28, 28] 0

Conv2d-107 [-1, 32, 28, 28] 36,864

_DenseLayer-108 [-1, 384, 28, 28] 0

BatchNorm2d-109 [-1, 384, 28, 28] 768

ReLU-110 [-1, 384, 28, 28] 0

Conv2d-111 [-1, 128, 28, 28] 49,152

BatchNorm2d-112 [-1, 128, 28, 28] 256

ReLU-113 [-1, 128, 28, 28] 0

Conv2d-114 [-1, 32, 28, 28] 36,864

_DenseLayer-115 [-1, 416, 28, 28] 0

BatchNorm2d-116 [-1, 416, 28, 28] 832

ReLU-117 [-1, 416, 28, 28] 0

Conv2d-118 [-1, 128, 28, 28] 53,248

BatchNorm2d-119 [-1, 128, 28, 28] 256

ReLU-120 [-1, 128, 28, 28] 0

Conv2d-121 [-1, 32, 28, 28] 36,864

_DenseLayer-122 [-1, 448, 28, 28] 0

BatchNorm2d-123 [-1, 448, 28, 28] 896

ReLU-124 [-1, 448, 28, 28] 0

Conv2d-125 [-1, 128, 28, 28] 57,344

BatchNorm2d-126 [-1, 128, 28, 28] 256

ReLU-127 [-1, 128, 28, 28] 0

Conv2d-128 [-1, 32, 28, 28] 36,864

_DenseLayer-129 [-1, 480, 28, 28] 0

BatchNorm2d-130 [-1, 480, 28, 28] 960

ReLU-131 [-1, 480, 28, 28] 0

Conv2d-132 [-1, 128, 28, 28] 61,440

BatchNorm2d-133 [-1, 128, 28, 28] 256

ReLU-134 [-1, 128, 28, 28] 0

Conv2d-135 [-1, 32, 28, 28] 36,864

_DenseLayer-136 [-1, 512, 28, 28] 0

DenseBlock-137 [-1, 512, 28, 28] 0

BatchNorm2d-138 [-1, 512, 28, 28] 1,024

ReLU-139 [-1, 512, 28, 28] 0

Conv2d-140 [-1, 256, 28, 28] 131,072

AvgPool2d-141 [-1, 256, 14, 14] 0

_TransitionLayer-142 [-1, 256, 14, 14] 0

BatchNorm2d-143 [-1, 256, 14, 14] 512

ReLU-144 [-1, 256, 14, 14] 0

Conv2d-145 [-1, 128, 14, 14] 32,768

BatchNorm2d-146 [-1, 128, 14, 14] 256

ReLU-147 [-1, 128, 14, 14] 0

Conv2d-148 [-1, 32, 14, 14] 36,864

_DenseLayer-149 [-1, 288, 14, 14] 0

BatchNorm2d-150 [-1, 288, 14, 14] 576

ReLU-151 [-1, 288, 14, 14] 0

Conv2d-152 [-1, 128, 14, 14] 36,864

BatchNorm2d-153 [-1, 128, 14, 14] 256

ReLU-154 [-1, 128, 14, 14] 0

Conv2d-155 [-1, 32, 14, 14] 36,864

_DenseLayer-156 [-1, 320, 14, 14] 0

BatchNorm2d-157 [-1, 320, 14, 14] 640

ReLU-158 [-1, 320, 14, 14] 0

Conv2d-159 [-1, 128, 14, 14] 40,960

BatchNorm2d-160 [-1, 128, 14, 14] 256

ReLU-161 [-1, 128, 14, 14] 0

Conv2d-162 [-1, 32, 14, 14] 36,864

_DenseLayer-163 [-1, 352, 14, 14] 0

BatchNorm2d-164 [-1, 352, 14, 14] 704

ReLU-165 [-1, 352, 14, 14] 0

Conv2d-166 [-1, 128, 14, 14] 45,056

BatchNorm2d-167 [-1, 128, 14, 14] 256

ReLU-168 [-1, 128, 14, 14] 0

Conv2d-169 [-1, 32, 14, 14] 36,864

_DenseLayer-170 [-1, 384, 14, 14] 0

BatchNorm2d-171 [-1, 384, 14, 14] 768

ReLU-172 [-1, 384, 14, 14] 0

Conv2d-173 [-1, 128, 14, 14] 49,152

BatchNorm2d-174 [-1, 128, 14, 14] 256

ReLU-175 [-1, 128, 14, 14] 0

Conv2d-176 [-1, 32, 14, 14] 36,864

_DenseLayer-177 [-1, 416, 14, 14] 0

BatchNorm2d-178 [-1, 416, 14, 14] 832

ReLU-179 [-1, 416, 14, 14] 0

Conv2d-180 [-1, 128, 14, 14] 53,248

BatchNorm2d-181 [-1, 128, 14, 14] 256

ReLU-182 [-1, 128, 14, 14] 0

Conv2d-183 [-1, 32, 14, 14] 36,864

_DenseLayer-184 [-1, 448, 14, 14] 0

BatchNorm2d-185 [-1, 448, 14, 14] 896

ReLU-186 [-1, 448, 14, 14] 0

Conv2d-187 [-1, 128, 14, 14] 57,344

BatchNorm2d-188 [-1, 128, 14, 14] 256

ReLU-189 [-1, 128, 14, 14] 0

Conv2d-190 [-1, 32, 14, 14] 36,864

_DenseLayer-191 [-1, 480, 14, 14] 0

BatchNorm2d-192 [-1, 480, 14, 14] 960

ReLU-193 [-1, 480, 14, 14] 0

Conv2d-194 [-1, 128, 14, 14] 61,440

BatchNorm2d-195 [-1, 128, 14, 14] 256

ReLU-196 [-1, 128, 14, 14] 0

Conv2d-197 [-1, 32, 14, 14] 36,864

_DenseLayer-198 [-1, 512, 14, 14] 0

BatchNorm2d-199 [-1, 512, 14, 14] 1,024

ReLU-200 [-1, 512, 14, 14] 0

Conv2d-201 [-1, 128, 14, 14] 65,536

BatchNorm2d-202 [-1, 128, 14, 14] 256

ReLU-203 [-1, 128, 14, 14] 0

Conv2d-204 [-1, 32, 14, 14] 36,864

_DenseLayer-205 [-1, 544, 14, 14] 0

BatchNorm2d-206 [-1, 544, 14, 14] 1,088

ReLU-207 [-1, 544, 14, 14] 0

Conv2d-208 [-1, 128, 14, 14] 69,632

BatchNorm2d-209 [-1, 128, 14, 14] 256

ReLU-210 [-1, 128, 14, 14] 0

Conv2d-211 [-1, 32, 14, 14] 36,864

_DenseLayer-212 [-1, 576, 14, 14] 0

BatchNorm2d-213 [-1, 576, 14, 14] 1,152

ReLU-214 [-1, 576, 14, 14] 0

Conv2d-215 [-1, 128, 14, 14] 73,728

BatchNorm2d-216 [-1, 128, 14, 14] 256

ReLU-217 [-1, 128, 14, 14] 0

Conv2d-218 [-1, 32, 14, 14] 36,864

_DenseLayer-219 [-1, 608, 14, 14] 0

BatchNorm2d-220 [-1, 608, 14, 14] 1,216

ReLU-221 [-1, 608, 14, 14] 0

Conv2d-222 [-1, 128, 14, 14] 77,824

BatchNorm2d-223 [-1, 128, 14, 14] 256

ReLU-224 [-1, 128, 14, 14] 0

Conv2d-225 [-1, 32, 14, 14] 36,864

_DenseLayer-226 [-1, 640, 14, 14] 0

BatchNorm2d-227 [-1, 640, 14, 14] 1,280

ReLU-228 [-1, 640, 14, 14] 0

Conv2d-229 [-1, 128, 14, 14] 81,920

BatchNorm2d-230 [-1, 128, 14, 14] 256

ReLU-231 [-1, 128, 14, 14] 0

Conv2d-232 [-1, 32, 14, 14] 36,864

_DenseLayer-233 [-1, 672, 14, 14] 0

BatchNorm2d-234 [-1, 672, 14, 14] 1,344

ReLU-235 [-1, 672, 14, 14] 0

Conv2d-236 [-1, 128, 14, 14] 86,016

BatchNorm2d-237 [-1, 128, 14, 14] 256

ReLU-238 [-1, 128, 14, 14] 0

Conv2d-239 [-1, 32, 14, 14] 36,864

_DenseLayer-240 [-1, 704, 14, 14] 0

BatchNorm2d-241 [-1, 704, 14, 14] 1,408

ReLU-242 [-1, 704, 14, 14] 0

Conv2d-243 [-1, 128, 14, 14] 90,112

BatchNorm2d-244 [-1, 128, 14, 14] 256

ReLU-245 [-1, 128, 14, 14] 0

Conv2d-246 [-1, 32, 14, 14] 36,864

_DenseLayer-247 [-1, 736, 14, 14] 0

BatchNorm2d-248 [-1, 736, 14, 14] 1,472

ReLU-249 [-1, 736, 14, 14] 0

Conv2d-250 [-1, 128, 14, 14] 94,208

BatchNorm2d-251 [-1, 128, 14, 14] 256

ReLU-252 [-1, 128, 14, 14] 0

Conv2d-253 [-1, 32, 14, 14] 36,864

_DenseLayer-254 [-1, 768, 14, 14] 0

BatchNorm2d-255 [-1, 768, 14, 14] 1,536

ReLU-256 [-1, 768, 14, 14] 0

Conv2d-257 [-1, 128, 14, 14] 98,304

BatchNorm2d-258 [-1, 128, 14, 14] 256

ReLU-259 [-1, 128, 14, 14] 0

Conv2d-260 [-1, 32, 14, 14] 36,864

_DenseLayer-261 [-1, 800, 14, 14] 0

BatchNorm2d-262 [-1, 800, 14, 14] 1,600

ReLU-263 [-1, 800, 14, 14] 0

Conv2d-264 [-1, 128, 14, 14] 102,400

BatchNorm2d-265 [-1, 128, 14, 14] 256

ReLU-266 [-1, 128, 14, 14] 0

Conv2d-267 [-1, 32, 14, 14] 36,864

_DenseLayer-268 [-1, 832, 14, 14] 0

BatchNorm2d-269 [-1, 832, 14, 14] 1,664

ReLU-270 [-1, 832, 14, 14] 0

Conv2d-271 [-1, 128, 14, 14] 106,496

BatchNorm2d-272 [-1, 128, 14, 14] 256

ReLU-273 [-1, 128, 14, 14] 0

Conv2d-274 [-1, 32, 14, 14] 36,864

_DenseLayer-275 [-1, 864, 14, 14] 0

BatchNorm2d-276 [-1, 864, 14, 14] 1,728

ReLU-277 [-1, 864, 14, 14] 0

Conv2d-278 [-1, 128, 14, 14] 110,592

BatchNorm2d-279 [-1, 128, 14, 14] 256

ReLU-280 [-1, 128, 14, 14] 0

Conv2d-281 [-1, 32, 14, 14] 36,864

_DenseLayer-282 [-1, 896, 14, 14] 0

BatchNorm2d-283 [-1, 896, 14, 14] 1,792

ReLU-284 [-1, 896, 14, 14] 0

Conv2d-285 [-1, 128, 14, 14] 114,688

BatchNorm2d-286 [-1, 128, 14, 14] 256

ReLU-287 [-1, 128, 14, 14] 0

Conv2d-288 [-1, 32, 14, 14] 36,864

_DenseLayer-289 [-1, 928, 14, 14] 0

BatchNorm2d-290 [-1, 928, 14, 14] 1,856

ReLU-291 [-1, 928, 14, 14] 0

Conv2d-292 [-1, 128, 14, 14] 118,784

BatchNorm2d-293 [-1, 128, 14, 14] 256

ReLU-294 [-1, 128, 14, 14] 0

Conv2d-295 [-1, 32, 14, 14] 36,864

_DenseLayer-296 [-1, 960, 14, 14] 0

BatchNorm2d-297 [-1, 960, 14, 14] 1,920

ReLU-298 [-1, 960, 14, 14] 0

Conv2d-299 [-1, 128, 14, 14] 122,880

BatchNorm2d-300 [-1, 128, 14, 14] 256

ReLU-301 [-1, 128, 14, 14] 0

Conv2d-302 [-1, 32, 14, 14] 36,864

_DenseLayer-303 [-1, 992, 14, 14] 0

BatchNorm2d-304 [-1, 992, 14, 14] 1,984

ReLU-305 [-1, 992, 14, 14] 0

Conv2d-306 [-1, 128, 14, 14] 126,976

BatchNorm2d-307 [-1, 128, 14, 14] 256

ReLU-308 [-1, 128, 14, 14] 0

Conv2d-309 [-1, 32, 14, 14] 36,864

_DenseLayer-310 [-1, 1024, 14, 14] 0

DenseBlock-311 [-1, 1024, 14, 14] 0

BatchNorm2d-312 [-1, 1024, 14, 14] 2,048

ReLU-313 [-1, 1024, 14, 14] 0

Conv2d-314 [-1, 512, 14, 14] 524,288

AvgPool2d-315 [-1, 512, 7, 7] 0

_TransitionLayer-316 [-1, 512, 7, 7] 0

BatchNorm2d-317 [-1, 512, 7, 7] 1,024

ReLU-318 [-1, 512, 7, 7] 0

Conv2d-319 [-1, 128, 7, 7] 65,536

BatchNorm2d-320 [-1, 128, 7, 7] 256

ReLU-321 [-1, 128, 7, 7] 0

Conv2d-322 [-1, 32, 7, 7] 36,864

_DenseLayer-323 [-1, 544, 7, 7] 0

BatchNorm2d-324 [-1, 544, 7, 7] 1,088

ReLU-325 [-1, 544, 7, 7] 0

Conv2d-326 [-1, 128, 7, 7] 69,632

BatchNorm2d-327 [-1, 128, 7, 7] 256

ReLU-328 [-1, 128, 7, 7] 0

Conv2d-329 [-1, 32, 7, 7] 36,864

_DenseLayer-330 [-1, 576, 7, 7] 0

BatchNorm2d-331 [-1, 576, 7, 7] 1,152

ReLU-332 [-1, 576, 7, 7] 0

Conv2d-333 [-1, 128, 7, 7] 73,728

BatchNorm2d-334 [-1, 128, 7, 7] 256

ReLU-335 [-1, 128, 7, 7] 0

Conv2d-336 [-1, 32, 7, 7] 36,864

_DenseLayer-337 [-1, 608, 7, 7] 0

BatchNorm2d-338 [-1, 608, 7, 7] 1,216

ReLU-339 [-1, 608, 7, 7] 0

Conv2d-340 [-1, 128, 7, 7] 77,824

BatchNorm2d-341 [-1, 128, 7, 7] 256

ReLU-342 [-1, 128, 7, 7] 0

Conv2d-343 [-1, 32, 7, 7] 36,864

_DenseLayer-344 [-1, 640, 7, 7] 0

BatchNorm2d-345 [-1, 640, 7, 7] 1,280

ReLU-346 [-1, 640, 7, 7] 0

Conv2d-347 [-1, 128, 7, 7] 81,920

BatchNorm2d-348 [-1, 128, 7, 7] 256

ReLU-349 [-1, 128, 7, 7] 0

Conv2d-350 [-1, 32, 7, 7] 36,864

_DenseLayer-351 [-1, 672, 7, 7] 0

BatchNorm2d-352 [-1, 672, 7, 7] 1,344

ReLU-353 [-1, 672, 7, 7] 0

Conv2d-354 [-1, 128, 7, 7] 86,016

BatchNorm2d-355 [-1, 128, 7, 7] 256

ReLU-356 [-1, 128, 7, 7] 0

Conv2d-357 [-1, 32, 7, 7] 36,864

_DenseLayer-358 [-1, 704, 7, 7] 0

BatchNorm2d-359 [-1, 704, 7, 7] 1,408

ReLU-360 [-1, 704, 7, 7] 0

Conv2d-361 [-1, 128, 7, 7] 90,112

BatchNorm2d-362 [-1, 128, 7, 7] 256

ReLU-363 [-1, 128, 7, 7] 0

Conv2d-364 [-1, 32, 7, 7] 36,864

_DenseLayer-365 [-1, 736, 7, 7] 0

BatchNorm2d-366 [-1, 736, 7, 7] 1,472

ReLU-367 [-1, 736, 7, 7] 0

Conv2d-368 [-1, 128, 7, 7] 94,208

BatchNorm2d-369 [-1, 128, 7, 7] 256

ReLU-370 [-1, 128, 7, 7] 0

Conv2d-371 [-1, 32, 7, 7] 36,864

_DenseLayer-372 [-1, 768, 7, 7] 0

BatchNorm2d-373 [-1, 768, 7, 7] 1,536

ReLU-374 [-1, 768, 7, 7] 0

Conv2d-375 [-1, 128, 7, 7] 98,304

BatchNorm2d-376 [-1, 128, 7, 7] 256

ReLU-377 [-1, 128, 7, 7] 0

Conv2d-378 [-1, 32, 7, 7] 36,864

_DenseLayer-379 [-1, 800, 7, 7] 0

BatchNorm2d-380 [-1, 800, 7, 7] 1,600

ReLU-381 [-1, 800, 7, 7] 0

Conv2d-382 [-1, 128, 7, 7] 102,400

BatchNorm2d-383 [-1, 128, 7, 7] 256

ReLU-384 [-1, 128, 7, 7] 0

Conv2d-385 [-1, 32, 7, 7] 36,864

_DenseLayer-386 [-1, 832, 7, 7] 0

BatchNorm2d-387 [-1, 832, 7, 7] 1,664

ReLU-388 [-1, 832, 7, 7] 0

Conv2d-389 [-1, 128, 7, 7] 106,496

BatchNorm2d-390 [-1, 128, 7, 7] 256

ReLU-391 [-1, 128, 7, 7] 0

Conv2d-392 [-1, 32, 7, 7] 36,864

_DenseLayer-393 [-1, 864, 7, 7] 0

BatchNorm2d-394 [-1, 864, 7, 7] 1,728

ReLU-395 [-1, 864, 7, 7] 0

Conv2d-396 [-1, 128, 7, 7] 110,592

BatchNorm2d-397 [-1, 128, 7, 7] 256

ReLU-398 [-1, 128, 7, 7] 0

Conv2d-399 [-1, 32, 7, 7] 36,864

_DenseLayer-400 [-1, 896, 7, 7] 0

BatchNorm2d-401 [-1, 896, 7, 7] 1,792

ReLU-402 [-1, 896, 7, 7] 0

Conv2d-403 [-1, 128, 7, 7] 114,688

BatchNorm2d-404 [-1, 128, 7, 7] 256

ReLU-405 [-1, 128, 7, 7] 0

Conv2d-406 [-1, 32, 7, 7] 36,864

_DenseLayer-407 [-1, 928, 7, 7] 0

BatchNorm2d-408 [-1, 928, 7, 7] 1,856

ReLU-409 [-1, 928, 7, 7] 0

Conv2d-410 [-1, 128, 7, 7] 118,784

BatchNorm2d-411 [-1, 128, 7, 7] 256

ReLU-412 [-1, 128, 7, 7] 0

Conv2d-413 [-1, 32, 7, 7] 36,864

_DenseLayer-414 [-1, 960, 7, 7] 0

BatchNorm2d-415 [-1, 960, 7, 7] 1,920

ReLU-416 [-1, 960, 7, 7] 0

Conv2d-417 [-1, 128, 7, 7] 122,880

BatchNorm2d-418 [-1, 128, 7, 7] 256

ReLU-419 [-1, 128, 7, 7] 0

Conv2d-420 [-1, 32, 7, 7] 36,864

_DenseLayer-421 [-1, 992, 7, 7] 0

BatchNorm2d-422 [-1, 992, 7, 7] 1,984

ReLU-423 [-1, 992, 7, 7] 0

Conv2d-424 [-1, 128, 7, 7] 126,976

BatchNorm2d-425 [-1, 128, 7, 7] 256

ReLU-426 [-1, 128, 7, 7] 0

Conv2d-427 [-1, 32, 7, 7] 36,864

_DenseLayer-428 [-1, 1024, 7, 7] 0

DenseBlock-429 [-1, 1024, 7, 7] 0

AvgPool2d-430 [-1, 1024, 1, 1] 0

Linear-431 [-1, 10] 10,250

================================================================

Total params: 6,962,058

Trainable params: 6,962,058

Non-trainable params: 0

----------------------------------------------------------------

Input size (MB): 0.57

Forward/backward pass size (MB): 383.87

Params size (MB): 26.56

Estimated Total Size (MB): 411.01

----------------------------------------------------------------
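The totals in this summary can be cross-checked by hand. The sketch below is plain Python and assumes the layer layout of the complete code in this article: bias-free convolutions, two parameters per BatchNorm channel, bn_size = 4, and each transition halving the channel count. The helper name `count_params` is hypothetical.

```python
def count_params(blocks=(6, 12, 24, 16), init_channels=64, growth_rate=32,
                 bn_size=4, num_classes=10):
    """Count trainable parameters of the DenseNet layout used in this article."""
    bottleneck = bn_size * growth_rate
    # Stem: 7x7 conv on 3 input channels (no bias) + BatchNorm (weight and bias)
    total = 3 * init_channels * 7 * 7 + 2 * init_channels
    channels = init_channels
    for index, num_layers in enumerate(blocks):
        for _ in range(num_layers):
            total += 2 * channels                      # BatchNorm on the layer input
            total += channels * bottleneck             # 1x1 conv, no bias
            total += 2 * bottleneck                    # BatchNorm on the bottleneck
            total += bottleneck * growth_rate * 3 * 3  # 3x3 conv, no bias
            channels += growth_rate                    # concatenation widens the input
        if index < len(blocks) - 1:
            total += 2 * channels                      # transition BatchNorm
            total += channels * (channels // 2)        # transition 1x1 conv, no bias
            channels //= 2
    total += channels * num_classes + num_classes      # final Linear layer with bias
    return total


total = count_params()
print(total)                            # 6962058
print(round(total * 4 / 1024 ** 2, 2))  # 26.56 (size in MB at 4 bytes per parameter)
```

Both values agree with the "Total params" and "Params size (MB)" lines reported by torchsummary above.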

Complete code

import torch
import torch.nn as nn
import torchvision
# noinspection PyUnresolvedReferences
from torchsummary import summary

print("PyTorch Version: ", torch.__version__)
print("Torchvision Version: ", torchvision.__version__)

# Available DenseNet variants
__all__ = ['DenseNet121', 'DenseNet169', 'DenseNet201', 'DenseNet264']

'''------------- 1. Build the initial convolution layer -------------'''
def Conv1(in_planes, places, stride=2):
    # 7x7 conv (stride 2) -> BN -> ReLU -> 3x3 max pooling (stride 2)
    return nn.Sequential(
        nn.Conv2d(in_channels=in_planes, out_channels=places, kernel_size=7, stride=stride, padding=3, bias=False),
        nn.BatchNorm2d(places),
        nn.ReLU(inplace=True),
        nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
    )

'''------------- 2. Define the Dense Block module -------------'''
'''---(1) Build the inner structure of the Dense Block---'''
# BN + ReLU + 1x1 Conv + BN + ReLU + 3x3 Conv
class _DenseLayer(nn.Module):
    # Note: the `inplace` argument here is the number of input channels,
    # not the in-place flag of nn.ReLU
    def __init__(self, inplace, growth_rate, bn_size, drop_rate=0):
        super(_DenseLayer, self).__init__()
        self.drop_rate = drop_rate
        self.dense_layer = nn.Sequential(
            nn.BatchNorm2d(inplace),
            nn.ReLU(inplace=True),
            # growth_rate: how many feature maps each layer produces;
            # the 1x1 bottleneck first maps the input to bn_size * growth_rate channels
            nn.Conv2d(in_channels=inplace, out_channels=bn_size * growth_rate, kernel_size=1, stride=1, padding=0, bias=False),
            nn.BatchNorm2d(bn_size * growth_rate),
            nn.ReLU(inplace=True),
            nn.Conv2d(in_channels=bn_size * growth_rate, out_channels=growth_rate, kernel_size=3, stride=1, padding=1, bias=False),
        )
        self.dropout = nn.Dropout(p=self.drop_rate)

    def forward(self, x):
        y = self.dense_layer(x)
        if self.drop_rate > 0:
            y = self.dropout(y)
        # Dense connection: concatenate the input and the new features along the channel dimension
        return torch.cat([x, y], 1)

'''---(2) Build the Dense Block module---'''
class DenseBlock(nn.Module):
    def __init__(self, num_layers, inplances, growth_rate, bn_size, drop_rate=0):
        super(DenseBlock, self).__init__()
        layers = []
        # Each additional layer receives growth_rate more input feature maps than the previous one
        for i in range(num_layers):
            layers.append(_DenseLayer(inplances + i * growth_rate, growth_rate, bn_size, drop_rate))
        self.layers = nn.Sequential(*layers)

    def forward(self, x):
        return self.layers(x)

'''------------- 3. Build the Transition layer -------------'''
# BN + ReLU + 1x1 Conv + 2x2 Average Pooling
class _TransitionLayer(nn.Module):
    # inplace: number of input channels; plance: number of output channels
    def __init__(self, inplace, plance):
        super(_TransitionLayer, self).__init__()
        self.transition_layer = nn.Sequential(
            nn.BatchNorm2d(inplace),
            nn.ReLU(inplace=True),
            nn.Conv2d(in_channels=inplace, out_channels=plance, kernel_size=1, stride=1, padding=0, bias=False),
            nn.AvgPool2d(kernel_size=2, stride=2),
        )

    def forward(self, x):
        return self.transition_layer(x)

'''------------- 4. Build the DenseNet network -------------'''
class DenseNet(nn.Module):
    def __init__(self, init_channels=64, growth_rate=32, blocks=[6, 12, 24, 16], num_classes=10):
        super(DenseNet, self).__init__()
        bn_size = 4
        drop_rate = 0
        self.conv1 = Conv1(in_planes=3, places=init_channels)

        # The first DenseBlock takes its input channels from the initial convolution layer.
        # The concrete numbers in the comments below assume the default arguments.
        num_features = init_channels

        # DenseBlock 1 has 6 DenseLayers: DenseBlock(6, 64, 32, 4)
        self.layer1 = DenseBlock(num_layers=blocks[0], inplances=num_features, growth_rate=growth_rate, bn_size=bn_size, drop_rate=drop_rate)
        num_features = num_features + blocks[0] * growth_rate
        # Transition 1: _TransitionLayer(256, 128)
        self.transition1 = _TransitionLayer(inplace=num_features, plance=num_features // 2)
        # The transition halves num_features, so DenseBlock 2 receives num_features = 128
        num_features = num_features // 2

        # DenseBlock 2 has 12 DenseLayers: DenseBlock(12, 128, 32, 4)
        self.layer2 = DenseBlock(num_layers=blocks[1], inplances=num_features, growth_rate=growth_rate, bn_size=bn_size, drop_rate=drop_rate)
        num_features = num_features + blocks[1] * growth_rate
        # Transition 2: _TransitionLayer(512, 256)
        self.transition2 = _TransitionLayer(inplace=num_features, plance=num_features // 2)
        # The transition halves num_features, so DenseBlock 3 receives num_features = 256
        num_features = num_features // 2

        # DenseBlock 3 has 24 DenseLayers: DenseBlock(24, 256, 32, 4)
        self.layer3 = DenseBlock(num_layers=blocks[2], inplances=num_features, growth_rate=growth_rate, bn_size=bn_size, drop_rate=drop_rate)
        num_features = num_features + blocks[2] * growth_rate
        # Transition 3: _TransitionLayer(1024, 512)
        self.transition3 = _TransitionLayer(inplace=num_features, plance=num_features // 2)
        # The transition halves num_features, so DenseBlock 4 receives num_features = 512
        num_features = num_features // 2

        # DenseBlock 4 has 16 DenseLayers: DenseBlock(16, 512, 32, 4)
        self.layer4 = DenseBlock(num_layers=blocks[3], inplances=num_features, growth_rate=growth_rate, bn_size=bn_size, drop_rate=drop_rate)
        num_features = num_features + blocks[3] * growth_rate

        # 7x7 average pooling reduces the 7x7 feature map (for a 224x224 input) to 1x1
        self.avgpool = nn.AvgPool2d(7, stride=1)
        self.fc = nn.Linear(num_features, num_classes)

    def forward(self, x):
        x = self.conv1(x)
        x = self.layer1(x)
        x = self.transition1(x)
        x = self.layer2(x)
        x = self.transition2(x)
        x = self.layer3(x)
        x = self.transition3(x)
        x = self.layer4(x)
        x = self.avgpool(x)
        # Flatten to (batch_size, num_features) before the classifier
        x = x.view(x.size(0), -1)
        x = self.fc(x)
        return x

# The factory functions below differ only in the number of DenseLayers per block;
# they all use the default num_classes=10
def DenseNet121():
    return DenseNet(init_channels=64, growth_rate=32, blocks=[6, 12, 24, 16])

def DenseNet169():
    return DenseNet(init_channels=64, growth_rate=32, blocks=[6, 12, 32, 32])

def DenseNet201():
    return DenseNet(init_channels=64, growth_rate=32, blocks=[6, 12, 48, 32])

def DenseNet264():
    return DenseNet(init_channels=64, growth_rate=32, blocks=[6, 12, 64, 48])

'''
if __name__=='__main__':
    # model = torchvision.models.densenet121()
    model = DenseNet121()
    print(model)

    input = torch.randn(1, 3, 224, 224)
    out = model(input)
    print(out.shape)
'''

if __name__ == '__main__':
    net = DenseNet(num_classes=10).cuda()
    summary(net, (3, 224, 224))
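As a sanity check on the structure above, the channel count and spatial size after each stage can be traced without running the network. This is a minimal sketch in plain Python, assuming a square input and the kernel, stride, and padding values used in the complete code; the helper names `conv_out` and `trace_shapes` are hypothetical.

```python
def conv_out(size, kernel, stride, padding):
    """Output side length of a conv/pool layer (floor mode, no dilation)."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_shapes(input_size=224, init_channels=64, growth_rate=32,
                 blocks=(6, 12, 24, 16)):
    """Return (stage name, channels, side length) after each stage."""
    size = conv_out(input_size, kernel=7, stride=2, padding=3)  # 7x7 conv
    size = conv_out(size, kernel=3, stride=2, padding=1)        # 3x3 max pool
    channels = init_channels
    stages = [("conv1", channels, size)]
    for index, num_layers in enumerate(blocks, start=1):
        # A dense block adds growth_rate channels per layer and keeps the spatial size
        channels += num_layers * growth_rate
        stages.append((f"layer{index}", channels, size))
        if index < len(blocks):
            channels //= 2                                           # 1x1 conv halves channels
            size = conv_out(size, kernel=2, stride=2, padding=0)    # 2x2 average pool
            stages.append((f"transition{index}", channels, size))
    return stages


for name, channels, size in trace_shapes():
    print(f"{name:12s} {channels:5d} x {size} x {size}")
```

For a 224 x 224 input the last stage is 1024 x 7 x 7, which is why `nn.AvgPool2d(7, stride=1)` reduces it to 1 x 1 before the fully connected layer. With other input sizes the final feature map is no longer 7 x 7, so the flattened vector would not match `nn.Linear(num_features, num_classes)` unless the pooling is changed.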

Copyright belongs to the original author, 路人贾'ω'. If there is any infringement, please contact us for removal.