本文主要参考王树森老师的强化学习课程

1.A2C算法原理

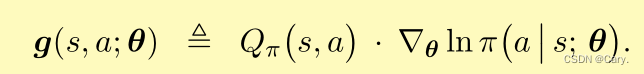

A2C算法是策略学习中比较经典的一个算法,是在 Barto 等人1983年提出的。我们知道策略梯度方法用策略梯度更新策略网络参数 θ,从而增大目标函数,即下面的随机梯度:

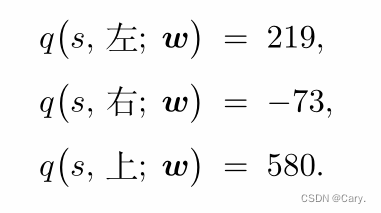

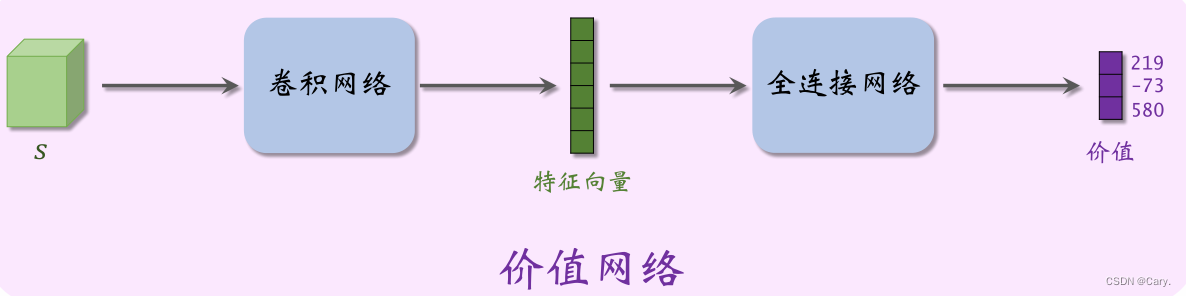

Actor-Critic 方法中用一个神经网络近似动作价值函数 Q π (s,a),这个神经网络叫做“价值网络”,记为 q(s,a;w),其中的 w 表示神经网络中可训练的参数。价值网络的输入是状态 s,输出是每个动作的价值。动作空间 A 中有多少种动作,那么价值网络的输出就是多少维的向量,向量每个元素对应一个动作。举个例子,动作空间是 A = {左,右,上},价值网络的输出是 :

神经网络可以采用以下结构:

虽然价值网络 q(s,a;w) 与DQN有相同的结构,但是两者的意义不同,训练算法也不同。、

- 价值网络是对动作价值函数 Q π (s,a) 的近似。而 DQN 则是对最优动作价值函数Q ⋆ (s,a) 的近似。

- 对价值网络的训练使用的是SARSA算法,它属于同策略,不能用经验回放。对DQN的训练使用的是 Q 学习算法,它属于异策略,可以用经验回放。

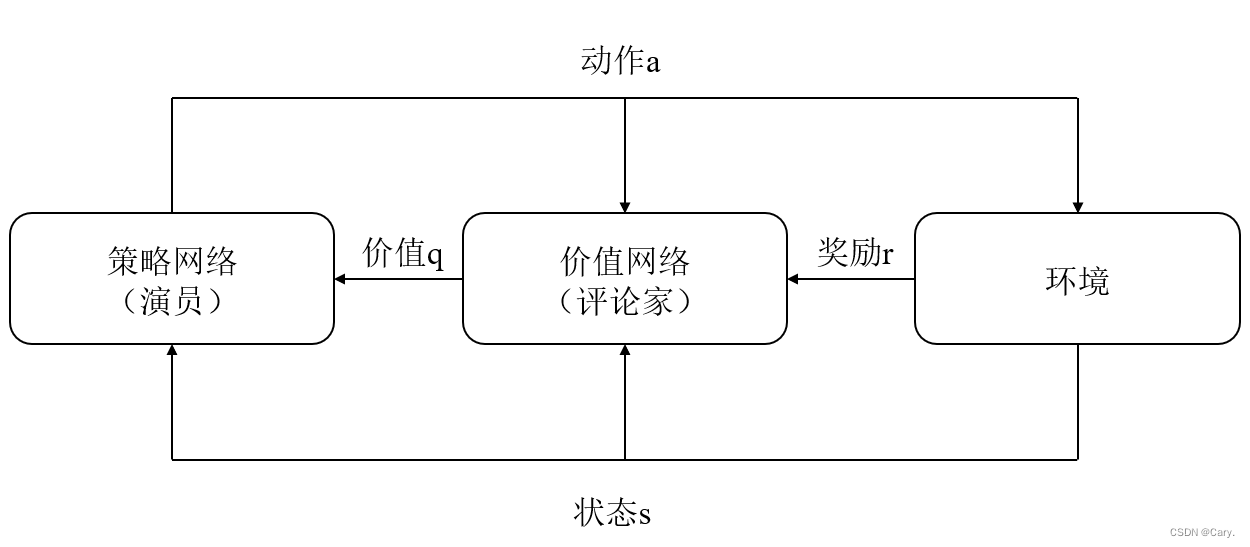

Actor-Critic 翻译成“演员—评论家”方法。策略网络 π(a|s;θ) 相当于演员,它基于状态 s 做出动作 a。价值网络 q(s,a;w) 相当于评论家,它给演员的表现打分,量化在状态 s的情况下做出动作 a 的好坏程度。策略网络(演员)和价值网络(评委)的关系如下图所示。

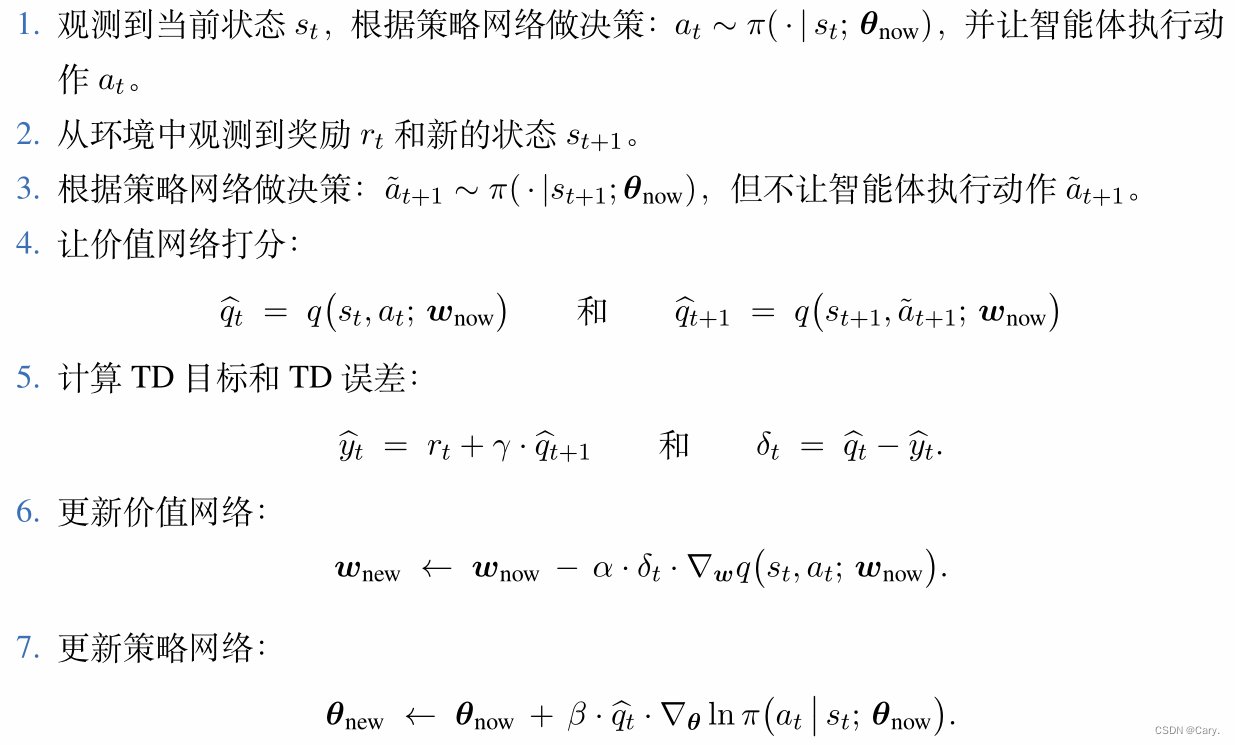

2. A2C算法训练流程

设当前策略网络参数是θnow ,价值网络参数是Wnow 。执行下面的步骤,将参数更新成 θnew 和 Wnew :

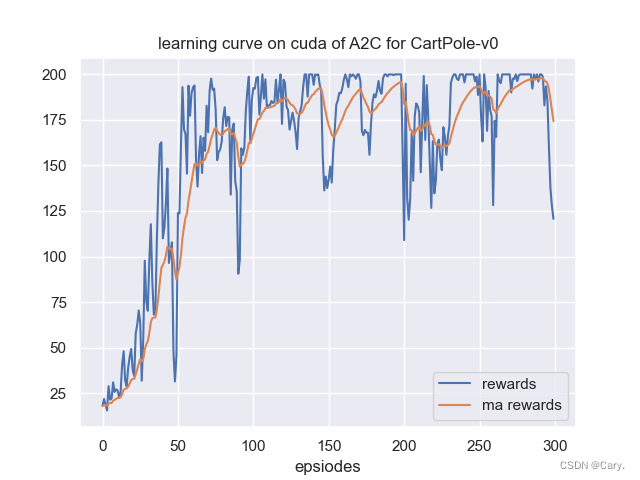

3.A2C代码实现

基于pytorch在gym基础环境中选择经典环境cartpole-v0倒立摆进行验证。

3.1 算法代码:

import torch.optim as optim

import torch.nn as nn

import torch.nn.functional as F

from torch.distributions import Categorical

class ActorCritic(nn.Module):

''' A2C网络模型,包含一个Actor和Critic

'''

def __init__(self, input_dim, output_dim, hidden_dim):

super(ActorCritic, self).__init__()

self.critic = nn.Sequential(

nn.Linear(input_dim, hidden_dim),

nn.ReLU(),

nn.Linear(hidden_dim, 1)

)

self.actor = nn.Sequential(

nn.Linear(input_dim, hidden_dim),

nn.ReLU(),

nn.Linear(hidden_dim, output_dim),

nn.Softmax(dim=1),

)

def forward(self, x):

value = self.critic(x)

probs = self.actor(x)

dist = Categorical(probs)

return dist, value

class A2C:

''' A2C算法

'''

def __init__(self,state_dim,action_dim,cfg) -> None:

self.gamma = cfg.gamma

self.device = cfg.device

self.model = ActorCritic(state_dim, action_dim, cfg.hidden_size).to(self.device)

self.optimizer = optim.Adam(self.model.parameters())

def compute_returns(self,next_value, rewards, masks):

R = next_value

returns = []

for step in reversed(range(len(rewards))):

R = rewards[step] + self.gamma * R * masks[step]

returns.insert(0, R)

return returns

3.2 实验代码:

import sys

import os

curr_path = os.path.dirname(os.path.abspath(__file__)) # 当前文件所在绝对路径

parent_path = os.path.dirname(curr_path) # 父路径

sys.path.append(parent_path) # 添加路径到系统路径

import gym

import numpy as np

import torch

import torch.optim as optim

import datetime

from common.multiprocessing_env import SubprocVecEnv

from a2c import ActorCritic

from common.utils import save_results, make_dir

from common.utils import plot_rewards

curr_time = datetime.datetime.now().strftime("%Y%m%d-%H%M%S") # 获取当前时间

algo_name = 'A2C' # 算法名称

env_name = 'CartPole-v0' # 环境名称

class A2CConfig:

def __init__(self) -> None:

self.algo_name = algo_name# 算法名称

self.env_name = env_name # 环境名称

self.n_envs = 8 # 异步的环境数目

self.gamma = 0.99 # 强化学习中的折扣因子

self.hidden_dim = 256

self.lr = 1e-3 # learning rate

self.max_frames = 30000

self.n_steps = 5

self.device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

class PlotConfig:

def __init__(self) -> None:

self.algo_name = algo_name # 算法名称

self.env_name = env_name # 环境名称

self.device = torch.device("cuda" if torch.cuda.is_available() else "cpu") # 检测GPU

self.result_path = curr_path+"/outputs/" + self.env_name + \

'/'+curr_time+'/results/' # 保存结果的路径

self.model_path = curr_path+"/outputs/" + self.env_name + \

'/'+curr_time+'/models/' # 保存模型的路径

self.save = True # 是否保存图片

def make_envs(env_name):

def _thunk():

env = gym.make(env_name)

env.seed(2)

return env

return _thunk

def ceshi_env(env,model,vis=False):

state = env.reset()

if vis: env.render()

done = False

total_reward = 0

while not done:

state = torch.FloatTensor(state).unsqueeze(0).to(cfg.device)

dist, _ = model(state)

next_state, reward, done, _ = env.step(dist.sample().cpu().numpy()[0])

state = next_state

if vis: env.render()

total_reward += reward

return total_reward

def compute_returns(next_value, rewards, masks, gamma=0.99):

R = next_value

returns = []

for step in reversed(range(len(rewards))):

R = rewards[step] + gamma * R * masks[step]

returns.insert(0, R)

return returns

def train(cfg,envs):

print('开始训练!')

print(f'环境:{cfg.env_name}, 算法:{cfg.algo_name}, 设备:{cfg.device}')

env = gym.make(cfg.env_name) # a single env

env.seed(10)

state_dim = envs.observation_space.shape[0]

action_dim = envs.action_space.n

model = ActorCritic(state_dim, action_dim, cfg.hidden_dim).to(cfg.device)

optimizer = optim.Adam(model.parameters())

frame_idx = 0

test_rewards = []

test_ma_rewards = []

state = envs.reset()

while frame_idx < cfg.max_frames:

log_probs = []

values = []

rewards = []

masks = []

entropy = 0

# rollout trajectory

for _ in range(cfg.n_steps):

state = torch.FloatTensor(state).to(cfg.device)

dist, value = model(state)

action = dist.sample()

next_state, reward, done, _ = envs.step(action.cpu().numpy())

log_prob = dist.log_prob(action)

entropy += dist.entropy().mean()

log_probs.append(log_prob)

values.append(value)

rewards.append(torch.FloatTensor(reward).unsqueeze(1).to(cfg.device))

masks.append(torch.FloatTensor(1 - done).unsqueeze(1).to(cfg.device))

state = next_state

frame_idx += 1

if frame_idx % 100 == 0:

test_reward = np.mean([ceshi_env(env,model) for _ in range(10)])

print(f"frame_idx:{frame_idx}, test_reward:{test_reward}")

test_rewards.append(test_reward)

if test_ma_rewards:

test_ma_rewards.append(0.9*test_ma_rewards[-1]+0.1*test_reward)

else:

test_ma_rewards.append(test_reward)

# plot(frame_idx, test_rewards)

next_state = torch.FloatTensor(next_state).to(cfg.device)

_, next_value = model(next_state)

returns = compute_returns(next_value, rewards, masks)

log_probs = torch.cat(log_probs)

returns = torch.cat(returns).detach()

values = torch.cat(values)

advantage = returns - values

actor_loss = -(log_probs * advantage.detach()).mean()

critic_loss = advantage.pow(2).mean()

loss = actor_loss + 0.5 * critic_loss - 0.001 * entropy

optimizer.zero_grad()

loss.backward()

optimizer.step()

print('完成训练!')

return test_rewards, test_ma_rewards

if __name__ == "__main__":

cfg = A2CConfig()

plot_cfg = PlotConfig()

envs = [make_envs(cfg.env_name) for i in range(cfg.n_envs)]

envs = SubprocVecEnv(envs)

# 训练

rewards,ma_rewards = train(cfg,envs)

make_dir(plot_cfg.result_path,plot_cfg.model_path)

save_results(rewards, ma_rewards, tag='train', path=plot_cfg.result_path) # 保存结果

plot_rewards(rewards, ma_rewards, plot_cfg, tag="train") # 画出结果

3.2 一些依赖的文件(common文件夹)

3.2.1 multiprocessing_env.py(来自 openai baseline,用于多线程环境)

# 该代码来自 openai baseline,用于多线程环境

# https://github.com/openai/baselines/tree/master/baselines/common/vec_env

import numpy as np

from multiprocessing import Process, Pipe

def worker(remote, parent_remote, env_fn_wrapper):

parent_remote.close()

env = env_fn_wrapper.x()

while True:

cmd, data = remote.recv()

if cmd == 'step':

ob, reward, done, info = env.step(data)

if done:

ob = env.reset()

remote.send((ob, reward, done, info))

elif cmd == 'reset':

ob = env.reset()

remote.send(ob)

elif cmd == 'reset_task':

ob = env.reset_task()

remote.send(ob)

elif cmd == 'close':

remote.close()

break

elif cmd == 'get_spaces':

remote.send((env.observation_space, env.action_space))

else:

raise NotImplementedError

class VecEnv(object):

"""

An abstract asynchronous, vectorized environment.

"""

def __init__(self, num_envs, observation_space, action_space):

self.num_envs = num_envs

self.observation_space = observation_space

self.action_space = action_space

def reset(self):

"""

Reset all the environments and return an array of

observations, or a tuple of observation arrays.

If step_async is still doing work, that work will

be cancelled and step_wait() should not be called

until step_async() is invoked again.

"""

pass

def step_async(self, actions):

"""

Tell all the environments to start taking a step

with the given actions.

Call step_wait() to get the results of the step.

You should not call this if a step_async run is

already pending.

"""

pass

def step_wait(self):

"""

Wait for the step taken with step_async().

Returns (obs, rews, dones, infos):

- obs: an array of observations, or a tuple of

arrays of observations.

- rews: an array of rewards

- dones: an array of "episode done" booleans

- infos: a sequence of info objects

"""

pass

def close(self):

"""

Clean up the environments' resources.

"""

pass

def step(self, actions):

self.step_async(actions)

return self.step_wait()

class CloudpickleWrapper(object):

"""

Uses cloudpickle to serialize contents (otherwise multiprocessing tries to use pickle)

"""

def __init__(self, x):

self.x = x

def __getstate__(self):

import cloudpickle

return cloudpickle.dumps(self.x)

def __setstate__(self, ob):

import pickle

self.x = pickle.loads(ob)

class SubprocVecEnv(VecEnv):

def __init__(self, env_fns, spaces=None):

"""

envs: list of gym environments to run in subprocesses

"""

self.waiting = False

self.closed = False

nenvs = len(env_fns)

self.nenvs = nenvs

self.remotes, self.work_remotes = zip(*[Pipe() for _ in range(nenvs)])

self.ps = [Process(target=worker, args=(work_remote, remote, CloudpickleWrapper(env_fn)))

for (work_remote, remote, env_fn) in zip(self.work_remotes, self.remotes, env_fns)]

for p in self.ps:

p.daemon = True # if the main process crashes, we should not cause things to hang

p.start()

for remote in self.work_remotes:

remote.close()

self.remotes[0].send(('get_spaces', None))

observation_space, action_space = self.remotes[0].recv()

VecEnv.__init__(self, len(env_fns), observation_space, action_space)

def step_async(self, actions):

for remote, action in zip(self.remotes, actions):

remote.send(('step', action))

self.waiting = True

def step_wait(self):

results = [remote.recv() for remote in self.remotes]

self.waiting = False

obs, rews, dones, infos = zip(*results)

return np.stack(obs), np.stack(rews), np.stack(dones), infos

def reset(self):

for remote in self.remotes:

remote.send(('reset', None))

return np.stack([remote.recv() for remote in self.remotes])

def reset_task(self):

for remote in self.remotes:

remote.send(('reset_task', None))

return np.stack([remote.recv() for remote in self.remotes])

def close(self):

if self.closed:

return

if self.waiting:

for remote in self.remotes:

remote.recv()

for remote in self.remotes:

remote.send(('close', None))

for p in self.ps:

p.join()

self.closed = True

def __len__(self):

return self.nenvs

3.2.2 utils.py(主要是文件创建与绘图函数)

import os

import numpy as np

from pathlib import Path

import matplotlib.pyplot as plt

# import seaborn as sns

from matplotlib.font_manager import FontProperties # 导入字体模块

def chinese_font():

''' 设置中文字体,注意需要根据自己电脑情况更改字体路径,否则还是默认的字体

'''

try:

font = FontProperties(

fname='/System/Library/Fonts/STHeiti Light.ttc', size=15) # fname系统字体路径,此处是mac的

except:

font = None

return font

def plot_rewards_cn(rewards, ma_rewards, plot_cfg, tag='train'):

''' 中文画图

'''

# sns.set()

plt.figure()

plt.title(u"{}环境下{}算法的学习曲线".format(plot_cfg.env_name,

plot_cfg.algo_name), fontproperties=chinese_font())

plt.xlabel(u'回合数', fontproperties=chinese_font())

plt.plot(rewards)

plt.plot(ma_rewards)

plt.legend((u'奖励', u'滑动平均奖励',), loc="best", prop=chinese_font())

if plot_cfg.save:

plt.savefig(plot_cfg.result_path+f"{tag}_rewards_curve_cn")

# plt.show()

def plot_rewards(rewards, ma_rewards, plot_cfg, tag='train'):

# sns.set()

plt.figure() # 创建一个图形实例,方便同时多画几个图

plt.title("learning curve on {} of {} for {}".format(

plot_cfg.device, plot_cfg.algo_name, plot_cfg.env_name))

plt.xlabel('epsiodes')

plt.plot(rewards, label='rewards')

plt.plot(ma_rewards, label='ma rewards')

plt.legend()

if plot_cfg.save:

plt.savefig(plot_cfg.result_path+"{}_rewards_curve".format(tag))

plt.show()

def plot_losses(losses, algo="DQN", save=True, path='./'):

# sns.set()

plt.figure()

plt.title("loss curve of {}".format(algo))

plt.xlabel('epsiodes')

plt.plot(losses, label='rewards')

plt.legend()

if save:

plt.savefig(path+"losses_curve")

plt.show()

def save_results(rewards, ma_rewards, tag='train', path='./results'):

''' 保存奖励

'''

np.save(path+'{}_rewards.npy'.format(tag), rewards)

np.save(path+'{}_ma_rewards.npy'.format(tag), ma_rewards)

print('结果保存完毕!')

def make_dir(*paths):

''' 创建文件夹

'''

for path in paths:

Path(path).mkdir(parents=True, exist_ok=True)

def del_empty_dir(*paths):

''' 删除目录下所有空文件夹

'''

for path in paths:

dirs = os.listdir(path)

for dir in dirs:

if not os.listdir(os.path.join(path, dir)):

os.removedirs(os.path.join(path, dir))

4 实验结果

版权归原作者 Cary. 所有, 如有侵权,请联系我们删除。