① Text-to-image basics:

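✔ Prompts: subject description, detail description, modifiers, art style, artist

✔ LoRA models: fine-grained control over a specific subject, style, or task

✔ ComfyUI: model fine-tuning, data preprocessing, image generation

✔ Reference-image control: OpenPose pose control, Canny edge-guided drawing, HED edge-guided drawing, MiDaS depth maps, color control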
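The prompt structure listed above (subject, details, modifiers, art style, artist) can be sketched as a small helper. This is only an illustration: `build_prompt` and its parameter names are hypothetical and are not part of Kolors or DiffSynth-Studio, and the comma-joined order is just one common convention.

```python
def build_prompt(subject, details=(), modifiers=(), style=None, artist=None):
    """Join the prompt parts in the order: subject, details, modifiers, style, artist."""
    parts = [subject, *details, *modifiers]
    if style:
        parts.append(style)
    if artist:
        parts.append(f"by {artist}")
    # Drop empty pieces and join the rest with commas
    return ", ".join(p.strip() for p in parts if p and p.strip())


prompt = build_prompt(
    subject="a girl with short purple hair",
    details=["sitting on a sofa at home", "chin resting on both hands"],
    modifiers=["highly detailed", "soft lighting"],
    style="anime style",
)
print(prompt)
```

Keeping the parts separate makes it easy to change one component (for example the style) while leaving the subject description untouched.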

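② About the baseline:

✔ Data-Juicer: a data processing and transformation tool that aims to simplify extracting, transforming, and loading data

✔ DiffSynth-Studio: a tool for efficiently fine-tuning large models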

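☆ Overall structure of the code:

1. Import libraries: the code first installs and imports the libraries it needs, including data-juicer and the fine-tuning tool DiffSynth-Studio

2. Dataset construction: download the dataset (AI-ModelScope/lowres_anime, cached under the kolors directory) and process it

3. Model fine-tuning: run the fine-tuning job, then load the trained model

4. Image generation: call the trained model to generate images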

☆ Code details

1. Environment setup

!pip install simple-aesthetics-predictor

!pip install -v -e data-juicer

!pip uninstall pytorch-lightning -y

!pip install peft lightning pandas torchvision

!pip install -e DiffSynth-Studio

2. Download the dataset

# Download the dataset
from modelscope.msdatasets import MsDataset

ds = MsDataset.load(
    'AI-ModelScope/lowres_anime',
    subset_name='default',
    split='train',
    cache_dir="/mnt/workspace/kolors/data"
)

import json, os
from data_juicer.utils.mm_utils import SpecialTokens
from tqdm import tqdm

# The relative "./data" paths assume the working directory is /mnt/workspace/kolors
os.makedirs("./data/lora_dataset/train", exist_ok=True)
os.makedirs("./data/data-juicer/input", exist_ok=True)
with open("./data/data-juicer/input/metadata.jsonl", "w") as f:
    for data_id, data in enumerate(tqdm(ds)):
        # Save each image as an RGB JPEG
        image = data["image"].convert("RGB")
        image.save(f"/mnt/workspace/kolors/data/lora_dataset/train/{data_id}.jpg")
        # One JSON record per line; "二次元" (anime style) is the caption for every image
        metadata = {"text": "二次元", "image": [f"/mnt/workspace/kolors/data/lora_dataset/train/{data_id}.jpg"]}
        f.write(json.dumps(metadata))
        f.write("\n")
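Before handing the file to data-juicer, it can help to check that every line of metadata.jsonl has the expected shape. The sketch below assumes only the record layout written above (a `text` string and an `image` list). `read_metadata` is a hypothetical helper, and the demo uses placeholder paths in a temporary directory rather than the real dataset.

```python
import json
import os
import tempfile


def read_metadata(path):
    """Return the records of a metadata.jsonl file, checking the expected keys."""
    records = []
    with open(path, "r", encoding="utf-8") as f:
        for line_no, line in enumerate(f, start=1):
            if not line.strip():
                continue  # tolerate blank lines
            record = json.loads(line)
            # Each record should carry a caption and a non-empty list of image paths
            if not isinstance(record.get("text"), str):
                raise ValueError(f"line {line_no}: missing 'text'")
            if not isinstance(record.get("image"), list) or not record["image"]:
                raise ValueError(f"line {line_no}: missing 'image' list")
            records.append(record)
    return records


# Tiny demo file in a temporary directory (the image paths are placeholders)
demo_dir = tempfile.mkdtemp()
demo_path = os.path.join(demo_dir, "metadata.jsonl")
with open(demo_path, "w", encoding="utf-8") as f:
    for i in range(3):
        f.write(json.dumps({"text": "二次元", "image": [f"train/{i}.jpg"]}) + "\n")

records = read_metadata(demo_path)
print(len(records), records[0])
```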

3. Process the dataset and save the results

data_juicer_config = """
# global parameters
project_name: 'data-process'
dataset_path: './data/data-juicer/input/metadata.jsonl'  # path to your dataset directory or file
np: 4  # number of subprocess to process your dataset

text_keys: 'text'
image_key: 'image'
image_special_token: '<__dj__image>'

export_path: './data/data-juicer/output/result.jsonl'

# process schedule
# a list of several process operators with their arguments
process:
    - image_shape_filter:
        min_width: 1024
        min_height: 1024
        any_or_all: any
    - image_aspect_ratio_filter:
        min_ratio: 0.5
        max_ratio: 2.0
        any_or_all: any
"""
with open("data/data-juicer/data_juicer_config.yaml", "w") as file:
    file.write(data_juicer_config.strip())

!dj-process --config data/data-juicer/data_juicer_config.yaml
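To make the effect of the two filters concrete, here is a plain-Python approximation of the rule they express for a single image. It is not data-juicer's implementation. `passes_filters` is a hypothetical name, and the aspect ratio is assumed to be width divided by height.

```python
def passes_filters(width, height, min_width=1024, min_height=1024,
                   min_ratio=0.5, max_ratio=2.0):
    """Approximate the config above: a size check, then an aspect-ratio check."""
    # image_shape_filter: both dimensions must reach the minimum
    if width < min_width or height < min_height:
        return False
    # image_aspect_ratio_filter: ratio assumed to be width / height
    ratio = width / height
    return min_ratio <= ratio <= max_ratio


sizes = [(1024, 1024), (512, 512), (4096, 1024), (1024, 2048)]
kept = [s for s in sizes if passes_filters(*s)]
print(kept)
```

With thresholds this strict, small images are dropped entirely, so it is worth checking how many records survive in result.jsonl before training.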

import pandas as pd
import os, json
from PIL import Image
from tqdm import tqdm

texts, file_names = [], []
os.makedirs("./data/lora_dataset_processed/train", exist_ok=True)
with open("./data/data-juicer/output/result.jsonl", "r") as file:
    for data_id, data in enumerate(tqdm(file.readlines())):
        data = json.loads(data)
        text = data["text"]
        texts.append(text)
        # Copy each image that passed the filters into the processed training folder
        image = Image.open(data["image"][0])
        image_path = f"./data/lora_dataset_processed/train/{data_id}.jpg"
        image.save(image_path)
        file_names.append(f"{data_id}.jpg")

# Write the file-name/caption table next to the images
data_frame = pd.DataFrame()
data_frame["file_name"] = file_names
data_frame["text"] = texts
data_frame.to_csv("./data/lora_dataset_processed/train/metadata.csv", index=False, encoding="utf-8-sig")
data_frame
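A quick consistency check on the processed folder can catch missing images before training starts. The sketch below assumes only the layout produced above: a metadata.csv with `file_name` and `text` columns sitting next to the images. `missing_images` is a hypothetical helper, and the demo runs on placeholder files in a temporary directory.

```python
import csv
import os
import tempfile


def missing_images(train_dir):
    """Return file names listed in metadata.csv that are absent from train_dir."""
    csv_path = os.path.join(train_dir, "metadata.csv")
    # utf-8-sig matches the encoding used when the CSV was written and strips the BOM
    with open(csv_path, "r", encoding="utf-8-sig", newline="") as f:
        rows = list(csv.DictReader(f))
    return [r["file_name"] for r in rows
            if not os.path.isfile(os.path.join(train_dir, r["file_name"]))]


# Demo with placeholder files: 0.jpg exists, 1.jpg does not
demo_dir = tempfile.mkdtemp()
open(os.path.join(demo_dir, "0.jpg"), "wb").close()
with open(os.path.join(demo_dir, "metadata.csv"), "w", encoding="utf-8-sig", newline="") as f:
    writer = csv.writer(f)
    writer.writerow(["file_name", "text"])
    writer.writerow(["0.jpg", "二次元"])
    writer.writerow(["1.jpg", "二次元"])

print(missing_images(demo_dir))
```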

4. LoRA fine-tuning

# Download the models
from diffsynth import download_models

download_models(["Kolors", "SDXL-vae-fp16-fix"])

# Train the model
import os

cmd = """
python DiffSynth-Studio/examples/train/kolors/train_kolors_lora.py \
  --pretrained_unet_path models/kolors/Kolors/unet/diffusion_pytorch_model.safetensors \
  --pretrained_text_encoder_path models/kolors/Kolors/text_encoder \
  --pretrained_fp16_vae_path models/sdxl-vae-fp16-fix/diffusion_pytorch_model.safetensors \
  --lora_rank 16 \
  --lora_alpha 4.0 \
  --dataset_path data/lora_dataset_processed \
  --output_path ./models \
  --max_epochs 1 \
  --center_crop \
  --use_gradient_checkpointing \
  --precision "16-mixed"
""".strip()

os.system(cmd)
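The `--lora_rank` and `--lora_alpha` flags are easier to reason about with a little arithmetic. The sketch below assumes the standard LoRA formulation, in which a frozen weight W is augmented by a low-rank update B·A scaled by alpha / rank (peft's default scaling). The 1280 x 1280 layer size is a made-up example, not a value read from the Kolors UNet.

```python
def lora_scaling(rank, alpha):
    """Scale applied to the low-rank update B @ A in the standard formulation."""
    return alpha / rank


def lora_param_count(d_in, d_out, rank):
    """Parameters added by one adapter: A is (rank x d_in), B is (d_out x rank)."""
    return rank * (d_in + d_out)


rank = 16
train_scale = lora_scaling(rank, 4.0)  # --lora_alpha 4.0 in the training command
print(train_scale)

# Hypothetical 1280 x 1280 projection layer, chosen only for illustration
full = 1280 * 1280
added = lora_param_count(1280, 1280, rank)
print(added, full, round(added / full, 4))
```

Under the same assumption, loading the adapter with `lora_alpha=2.0`, as the loading code in this note does, halves the scale relative to training, which is one way to soften the LoRA's influence on the output.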

5. Load the fine-tuned model

from diffsynth import ModelManager, SDXLImagePipeline
from peft import LoraConfig, inject_adapter_in_model
import torch


def load_lora(model, lora_rank, lora_alpha, lora_path):
    lora_config = LoraConfig(
        r=lora_rank,
        lora_alpha=lora_alpha,
        init_lora_weights="gaussian",
        target_modules=["to_q", "to_k", "to_v", "to_out"],
    )
    model = inject_adapter_in_model(lora_config, model)
    state_dict = torch.load(lora_path, map_location="cpu")
    model.load_state_dict(state_dict, strict=False)
    return model


# Load models
model_manager = ModelManager(torch_dtype=torch.float16, device="cuda",
                             file_path_list=[
                                 "models/kolors/Kolors/text_encoder",
                                 "models/kolors/Kolors/unet/diffusion_pytorch_model.safetensors",
                                 "models/kolors/Kolors/vae/diffusion_pytorch_model.safetensors"
                             ])
pipe = SDXLImagePipeline.from_model_manager(model_manager)

# Load LoRA
pipe.unet = load_lora(
    pipe.unet,
    lora_rank=16,  # This parameter should be consistent with that in your training script.
    lora_alpha=2.0,  # lora_alpha can control the weight of LoRA.
    lora_path="models/lightning_logs/version_0/checkpoints/epoch=0-step=500.ckpt"
)

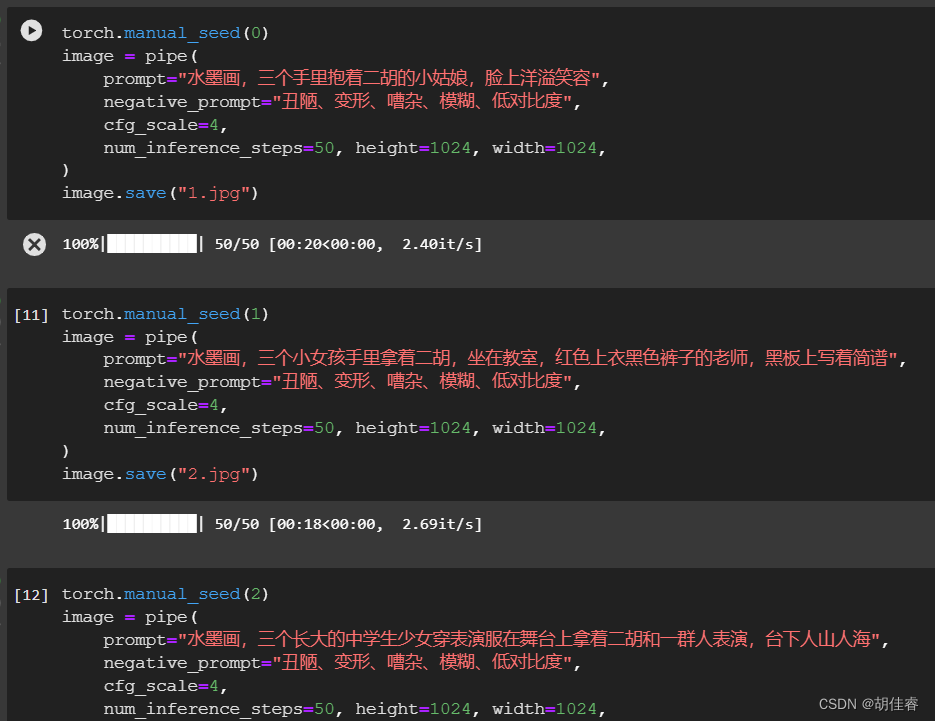
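The checkpoint path above is hard-coded, and both the version folder and the step number can differ between runs. A minimal sketch for locating the newest checkpoint follows, assuming the `lightning_logs/version_*/checkpoints/*.ckpt` layout seen in that path. `latest_checkpoint` is a hypothetical helper, and the demo builds a fake directory tree with empty files.

```python
import glob
import os
import tempfile


def latest_checkpoint(log_dir):
    """Return the most recently modified .ckpt under log_dir, or None if none exist."""
    pattern = os.path.join(log_dir, "version_*", "checkpoints", "*.ckpt")
    candidates = glob.glob(pattern)
    if not candidates:
        return None
    return max(candidates, key=os.path.getmtime)


# Demo: two fake runs with empty checkpoint files
root = tempfile.mkdtemp()
for version, mtime in [("version_0", 1_000), ("version_1", 2_000)]:
    ckpt_dir = os.path.join(root, version, "checkpoints")
    os.makedirs(ckpt_dir)
    path = os.path.join(ckpt_dir, "epoch=0-step=500.ckpt")
    open(path, "wb").close()
    os.utime(path, (mtime, mtime))  # fake modification times for the demo

print(latest_checkpoint(root))
```

In the notebook, the result of `latest_checkpoint("models/lightning_logs")` could be passed as `lora_path` in place of the literal string.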

6. Image generation

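torch.manual_seed(0)  # fix the seed so the result is reproducible
image = pipe(
    # Prompt: anime style, a little girl with short purple hair, sitting on the sofa at home,
    # chin resting on both hands, very bored, full body, pink dress
    prompt="二次元,一个紫色短发小女孩,在家中沙发上坐着,双手托着腮,很无聊,全身,粉色连衣裙",
    # Negative prompt: ugly, deformed, noisy, blurry, low contrast
    negative_prompt="丑陋、变形、嘈杂、模糊、低对比度",
    cfg_scale=4,
    num_inference_steps=50, height=1024, width=1024,
)
image.save("1.jpg")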

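③ Problems found in practice

Overly terse prompts produced rough images that lacked detail, and the art style was inconsistent from one image to the next. For example, the erhu was rendered in several different forms across the generated images.

Next time, the prompts should be more specific (facial features, expression, finger movements, the state and shape of objects, the overall setting, and so on), and the description of each character should stay consistent from prompt to prompt.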
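One simple way to keep a character consistent across a series of images is to write the character description once and reuse it verbatim in every prompt. The sketch below illustrates that idea only. `scene_prompts` is a hypothetical helper, and the example strings are made up.

```python
def scene_prompts(character, scenes):
    """Prefix every scene description with the same character description."""
    return [f"{character}, {scene}" for scene in scenes]


character = "anime style, a little girl with short purple hair, pink dress"
scenes = [
    "sitting on a sofa at home, chin resting on both hands, bored, full body",
    "walking in a garden, smiling, upper body",
]
prompts = scene_prompts(character, scenes)
for p in prompts:
    print(p)
```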

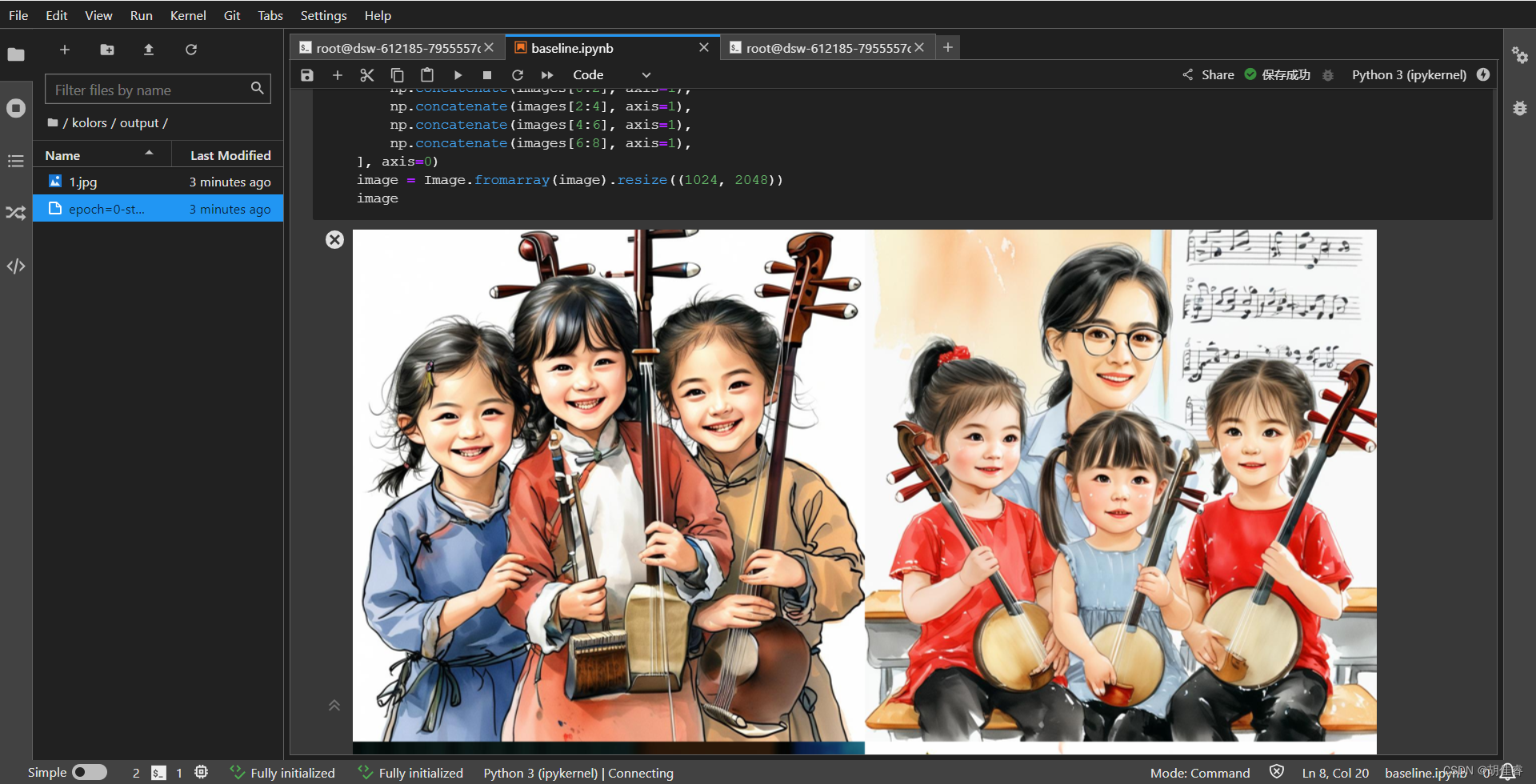

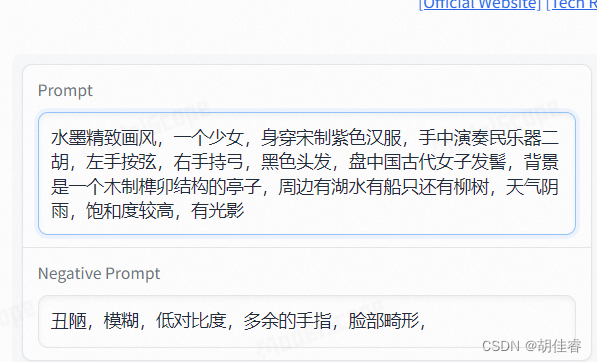

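④ Trying out ModelScope's popular text-to-image AI apps: Kolors text-to-image

App overview: Kolors (可图) is a text-to-image generation model open-sourced by Kuaishou. It has a deep understanding of both English and Chinese and can generate high-quality, photorealistic images.

Its output is described as close to Midjourney-v6 level, it accepts text inputs of up to 256 tokens, and, most importantly, it can render Chinese text.

Drawing on the lesson from the first baseline run, I made the description more detailed this time.

The result was indeed much better: the more detailed the description, the finer the resulting image.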

Copyright belongs to the original author, 胡佳睿. In case of infringement, please contact us for removal.