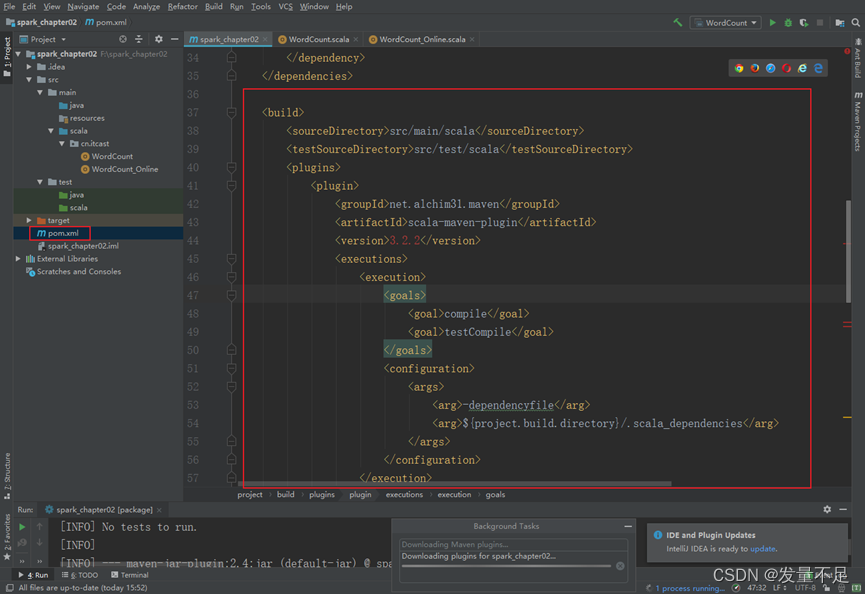

#添加打包插件

在pom.xml文件中添加所需插件

插入内容如下:

<build>

<sourceDirectory>src/main/scala</sourceDirectory>

<testSourceDirectory>src/test/scala</testSourceDirectory>

<plugins>

<plugin>

<groupId>net.alchim31.maven</groupId>

<artifactId>scala-maven-plugin</artifactId>

<version>3.2.2</version>

<executions>

<execution>

<goals>

<goal>compile</goal>

<goal>testCompile</goal>

</goals>

<configuration>

<args>

<arg>-dependencyfile</arg>

<arg>${project.build.directory}/.scala_dependencies</arg>

</args>

</configuration>

</execution>

</executions>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-shade-plugin</artifactId>

<version>2.4.3</version>

<executions>

<execution>

<phase>package</phase>

<goals>

<goal>shade</goal>

</goals>

<configuration>

<filters>

<filter>

<artifact>*:*</artifact>

<excludes>

<exclude>META-INF/*.SF</exclude>

<exclude>META-INF/*.DSA</exclude>

<exclude>META-INF/*.RSA</exclude>

</excludes>

</filter>

</filters>

<transformers>

<transformer implementation=

"org.apache.maven.plugins.shade.resource.ManifestResourceTransformer">

<mainClass></mainClass>

</transformer>

</transformers>

</configuration>

</execution>

</executions>

</plugin>

</plugins>

</build>

等待加载

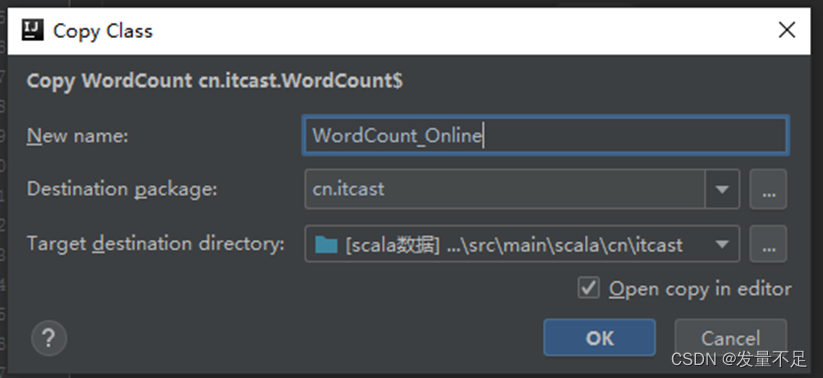

步骤1 将鼠标点在WordCount ,ctrl+c后ctrl+v复制,重新命名为WordCount_Online

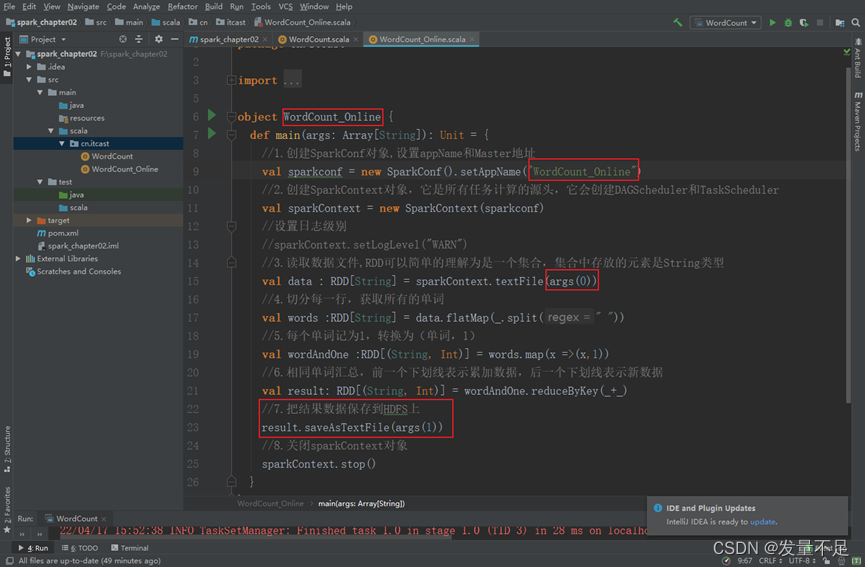

步骤2 修改代码

# 3.读取数据文件,RDD可以简单的理解为是一个集合,集合中存放的元素是String类型

val data : RDD[String] = sparkContext.textFile(args(0))

7.把结果数据保存到HDFS上

result.saveAsTextFile(args(1))

**# **修改以上这2行代码

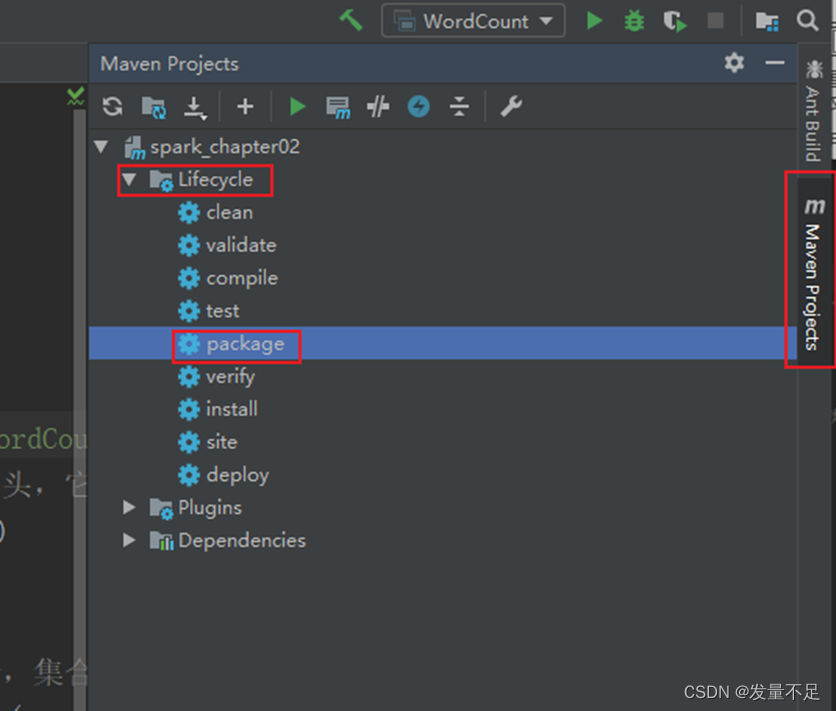

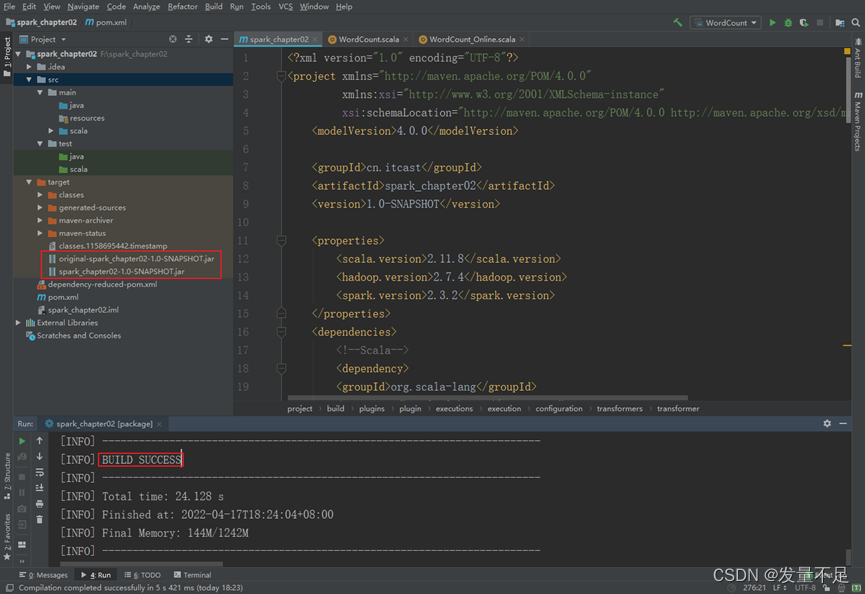

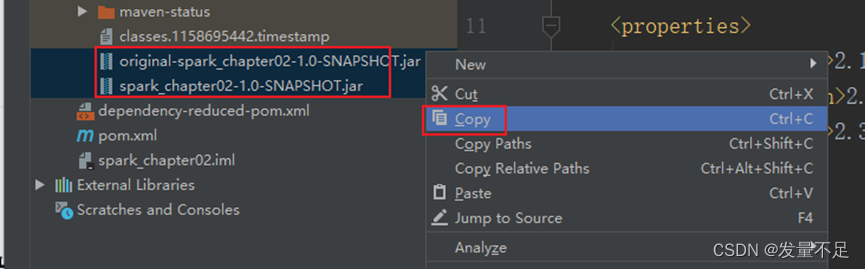

步骤3 点击右边【maven projects】—>双击【lifecycle】下的package,自动将项目打包成Jar包

打包成功标志: 显示BUILD SUCCESS,可以看到target目录下的2个jar包

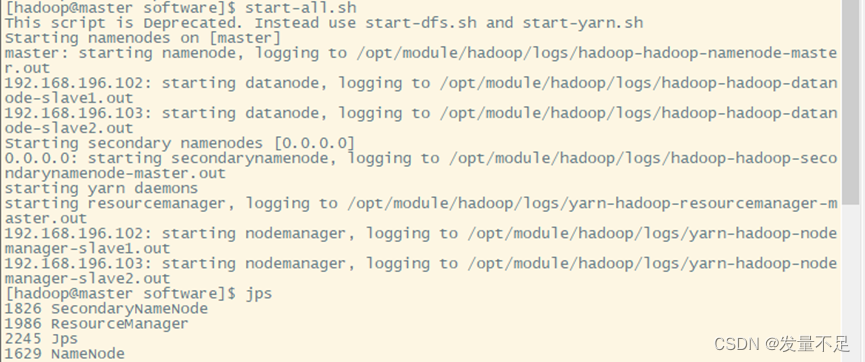

步骤4 启动Hadoop集群才能访问web页面

$ start-all.sh

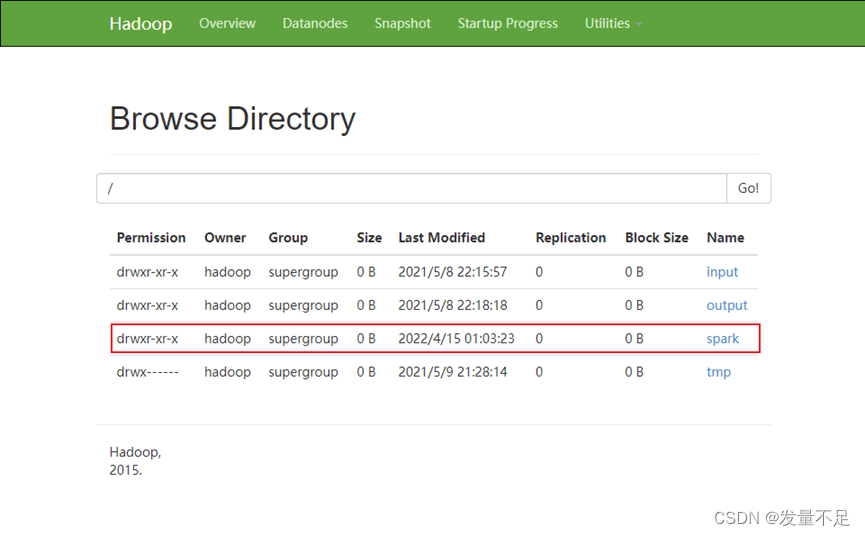

步骤5 访问192.168.196.101(master):50070 点击【utilities】—>【browse the file system】

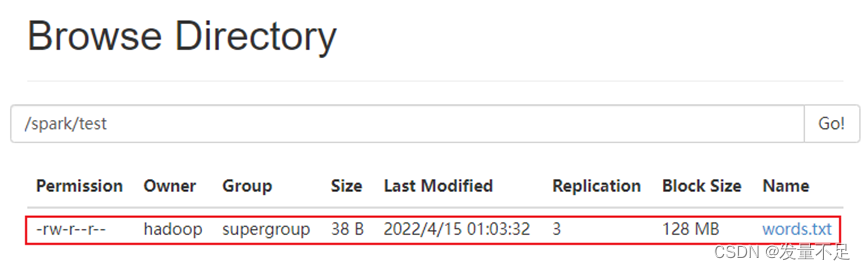

步骤6 点击【spark】—>【test】,可以看到words.txt

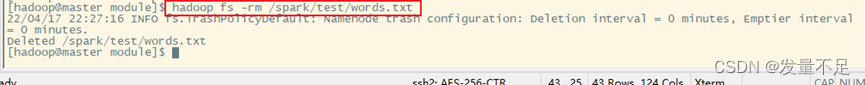

步骤7 将words.txt删除

$ hadoop fs -rm /spark/test/words.txt

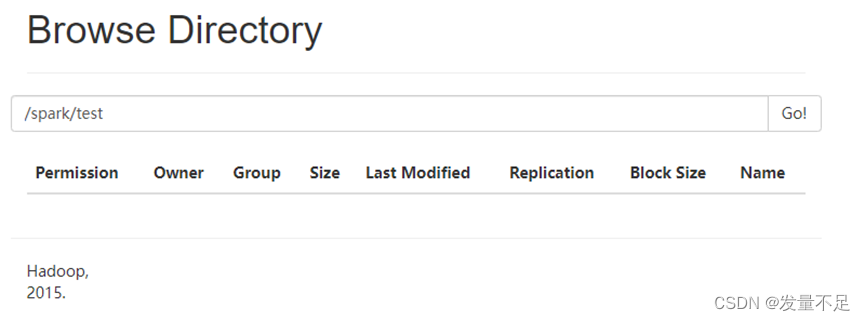

步骤8 刷新下页面。可以看到/spark/test路径下没有words.txt

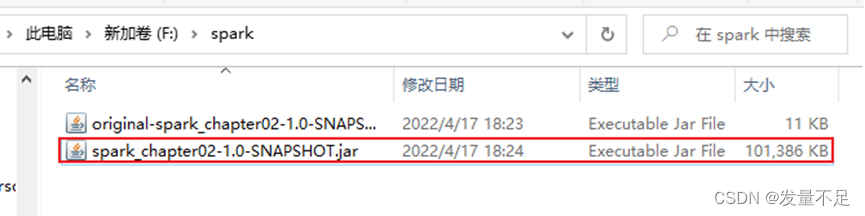

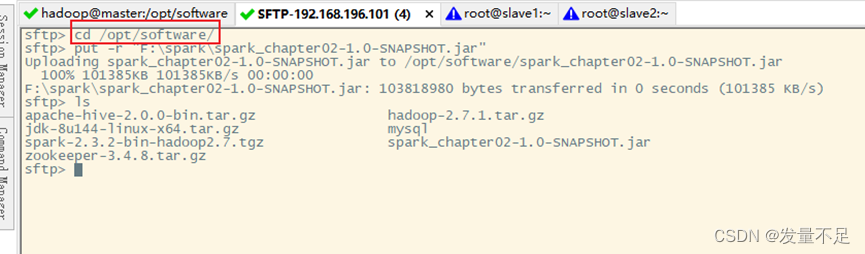

步骤9 Alt+p,切到/opt/software,把含有第3方jar的spark_chapter02-1.0-SNAPSHOT.jar包拉进

#先将解压的两个jar包复制出来

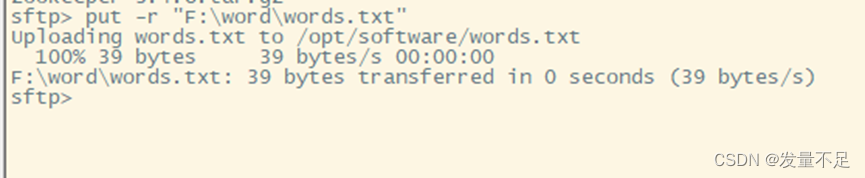

步骤10 也把F盘/word/words.txt直接拉进/opt/software

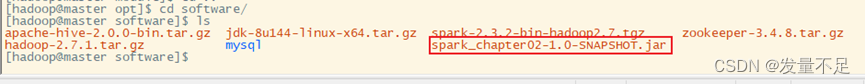

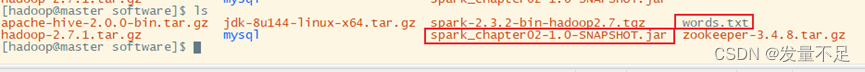

步骤11 查看有没有words.txt和spark_chapter02-1.0-SNAPSHOT.jar

步骤12 执行提交命令

**$***bin/spark-submit *

** --master spark://master:7077 **

** --executor-memory 1g **

** --total-executor-cores 1 **

**/opt/software/spark_chapter02-1.0-SNAPSHOT.jar **

**/spark/test/words.txt **

/spark/test/out

版权归原作者 发量不足 所有, 如有侵权,请联系我们删除。