文章目录

1、sparkthrift Server 启动命令

因为是测试命令,所以你需要和正式服务进行区别,不改变节点的情况下需要改变服务名称和服务端口。

sbin/start-thriftserver.sh \

--name spark_sql_thriftserver2 \

--master yarn --deploy-mode client \

--driver-cores 4 --driver-memory 8g \

--executor-cores 4 --executor-memory 6g \

--conf spark.scheduler.mode=FAIR \

--conf spark.hadoop.fs.hdfs.impl.disable.cache=true \

--conf spark.serializer=org.apache.spark.serializer.KryoSerializer \

--hiveconf hive.server2.thrift.bind.host=`hostname -i` \

--hiveconf hive.server2.thrift.port=10002

命令解析:

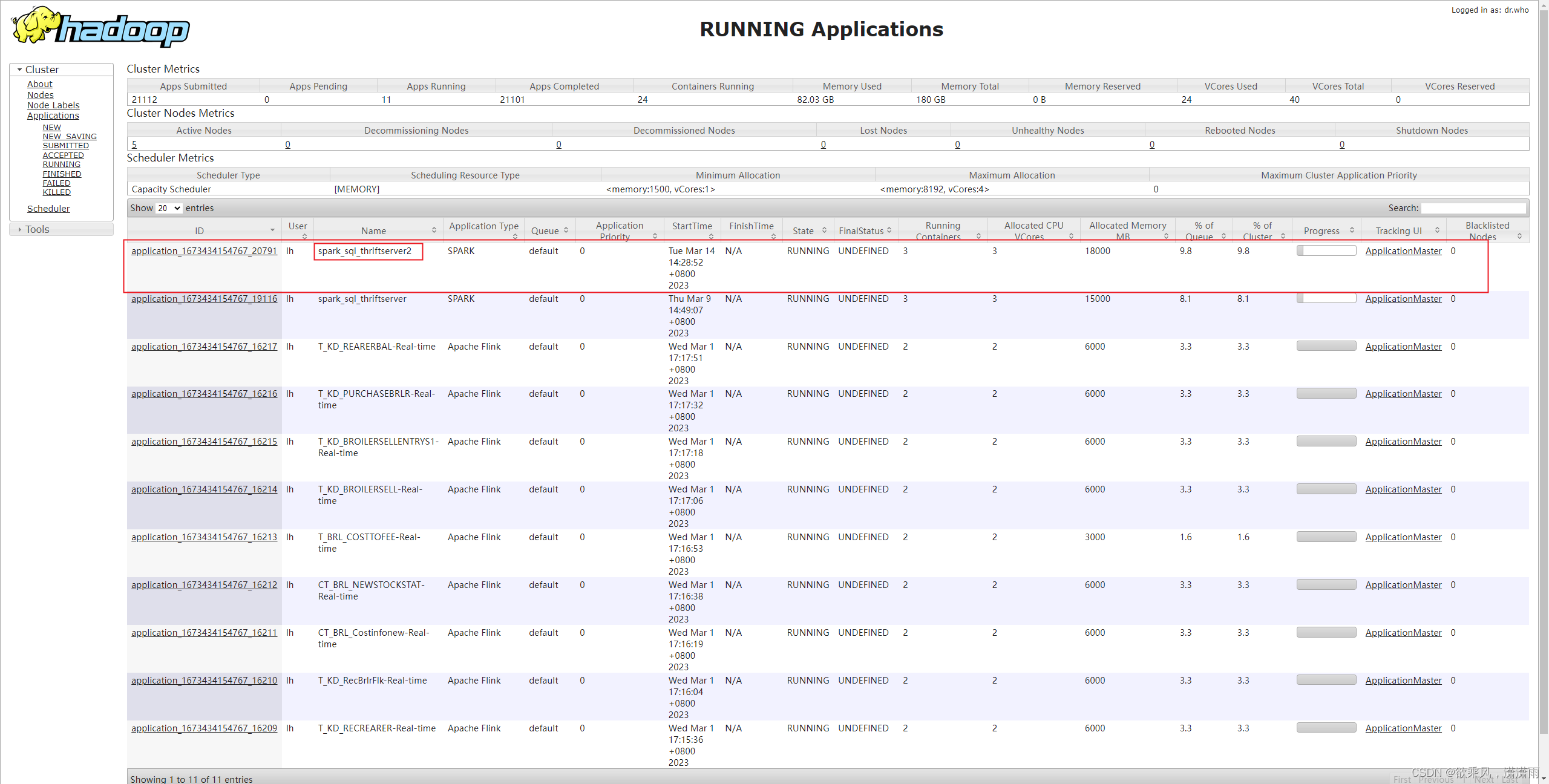

- –name spark_sql_thriftserver2 指定服务名称为 spark_sql_thriftserver2 效果如图:

- –master yarn --deploy-mode client 指定 spark Job 提交的运行模式为 yarn-client。 提交至 yarn 的运行模式有两种:yarn-client和yarn-cluster;yarn-client 的模式 driver 运行在本机,yarn-cluster 的模式 driver 提交给 yarn 进行指定,运行在 applicationMaster 所在节点。 因为 sparkthrift Server 是交互式的任务,需要固定节点和端口,所以只能使用 yarn-client 模式。

- –driver-cores 4 --driver-memory 8g 指定主程序运行的环境配置,这里需要考虑本机的资源进行合理配置,因为 sparkthrift Server 是常驻任务,并且提供连接查询数据,所以考虑给大一点,cpu给4个,内存给8g。

- –executor-cores 4 --executor-memory 6g 指定启动每个 executor 需要分配的资源,同样需要考虑yarn每个节点的剩余资源进行合理分配;cpu给4个,内存给6g。

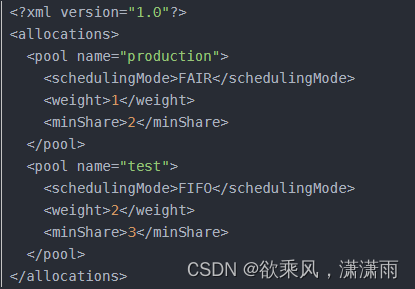

- –conf spark.scheduler.mode=FAIR 配置 spark 中 job 池的调度模式,该模式有两种:FIFO和FAIR。 FIFO:每个job没有优先级,不做任务调度,顺序执行。 FAIR:判断每个job的优先权重后,优先级高的,权重大的先调度执行。 当然每个池子都可以配置不同的模式(,权重,最小的资源使用量),参考如图:

- –conf spark.hadoop.fs.hdfs.impl.disable.cache=true 禁止该任务使用cache缓存。

- –conf spark.serializer=org.apache.spark.serializer.KryoSerializer 指定序列化类为Kryo,这个参数非必要,我测试的时候发现默认的序列化类就是这个。

- –hiveconf hive.server2.thrift.bind.host=

hostname -i指定提供查询的server2服务的地址:即本机ip即可。(hostname=>本机别称,hostname -i=>本机ip) - –hiveconf hive.server2.thrift.port=10002 指定提供查询的server2服务的端口为:10002。(注意查看一下该端口是否被其他服务占用,

lsof -i=>查询所有端口使用情况,lsof -i:端口=>查询某个端口的使用情况)

2、实际生产过程中的报错解决

2.1、Kryo serialization failed: Buffer overflow. Available: 0, required: 2428400. To avoid this, increase spark.kryoserializer.buffer.max value

查询一张300多万条数据的表时(查表直接用的是 select * from tablename),缓冲一段时间后报错:

Caused by:org.apache.spark.SparkException:Kryo serialization failed:Bufferoverflow. Available:0, required:2428400.To avoid this, increase spark.kryoserializer.buffer.max value. at

org.apache.spark.serializer.KryoSerializerInstance.serialize(KryoSerializer.scala:350) at

org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:393)...

报错信息提示:序列化的缓冲内存不足,需要扩大缓存内存的容量。

这里应该时 driver 解析 task 发送过来的数据,task 任务会将数据序列化后进行网络传输,driver 接收到数据流后对其反序列化解析数据,才能将数据实际呈现,至于是 写buffer 还是 读buffer 的内存不足这里不做深入。

直接根据其提示,配置相应的参数:

--conf spark.kryoserializer.buffer=64m //这个指定序列化的默认缓冲容量(这个配置可有可无,不影响)

--conf spark.kryoserializer.buffer.max=1024m //指定序列化的最大缓冲容量

2.2、java.lang.OutOfMemoryError: GC overhead limit exceeded

衔接2.1,解决了序列化缓冲的问题,查询同一张表,缓冲了很长一段时间后,任务失败,连同 sparkthrift 任务一同挂掉了。

查询 sparkthrift 任务的执行日志可见主要报错信息:

23/03/1411:18:29 WARN TransportChannelHandler:Exception in connection from /192.168.1.120:60792java.lang.OutOfMemoryError: GC overhead limit exceeded

at java.util.Arrays.copyOfRange(Arrays.java:3664)

at java.lang.String.<init>(String.java:207)

at java.lang.StringBuilder.toString(StringBuilder.java:407)

at java.lang.Class.getDeclaredMethod(Class.java:2130)

at java.io.ObjectStreamClass.getInheritableMethod(ObjectStreamClass.java:1611)

at java.io.ObjectStreamClass.access$2400(ObjectStreamClass.java:79)

at java.io.ObjectStreamClass$3.run(ObjectStreamClass.java:531)

at java.io.ObjectStreamClass$3.run(ObjectStreamClass.java:494)

at java.security.AccessController.doPrivileged(NativeMethod)

at java.io.ObjectStreamClass.<init>(ObjectStreamClass.java:494)

at java.io.ObjectStreamClass.lookup(ObjectStreamClass.java:391)

at java.io.ObjectStreamClass.initNonProxy(ObjectStreamClass.java:681)

at java.io.ObjectInputStream.readNonProxyDesc(ObjectInputStream.java:2001)

at java.io.ObjectInputStream.readClassDesc(ObjectInputStream.java:1848)

at java.io.ObjectInputStream.readOrdinaryObject(ObjectInputStream.java:2158)

at java.io.ObjectInputStream.readObject0(ObjectInputStream.java:1665)

at java.io.ObjectInputStream.defaultReadFields(ObjectInputStream.java:2403)

at java.io.ObjectInputStream.readSerialData(ObjectInputStream.java:2327)

at java.io.ObjectInputStream.readOrdinaryObject(ObjectInputStream.java:2185)

at java.io.ObjectInputStream.readObject0(ObjectInputStream.java:1665)

at java.io.ObjectInputStream.readObject(ObjectInputStream.java:501)

at java.io.ObjectInputStream.readObject(ObjectInputStream.java:459)

at org.apache.spark.serializer.JavaDeserializationStream.readObject(JavaSerializer.scala:76)

at org.apache.spark.serializer.JavaSerializerInstance.deserialize(JavaSerializer.scala:109)

at org.apache.spark.rpc.netty.NettyRpcEnv.$anonfun$deserialize$2(NettyRpcEnv.scala:299)

at org.apache.spark.rpc.netty.NettyRpcEnv$$Lambda$835/115143278.apply(UnknownSource)

at scala.util.DynamicVariable.withValue(DynamicVariable.scala:62)

at org.apache.spark.rpc.netty.NettyRpcEnv.deserialize(NettyRpcEnv.scala:352)

at org.apache.spark.rpc.netty.NettyRpcEnv.$anonfun$deserialize$1(NettyRpcEnv.scala:298)

at org.apache.spark.rpc.netty.NettyRpcEnv$$Lambda$834/1059022105.apply(UnknownSource)

at scala.util.DynamicVariable.withValue(DynamicVariable.scala:62)

at org.apache.spark.rpc.netty.NettyRpcEnv.deserialize(NettyRpcEnv.scala:298)

有报错信息可以得知是 GC 超限了。

复现问题的时候,任务执行期间,用 top 命令查询 任务资源消耗情况。发现 sparkthrift 任务内存使用情况比较稳定,稳定维持在20%以下,但是cpu的使用率却高达1200%以上。

同时可见报错信息主要来自于

java.io.ObjectInputStream

这个类,该类应该是 driver 端对数据流进行反序列化解析读取数据时调用的。

个人推测应该是 driver 端解析数据时,内存使用率爆满,但是并没有多余闲置的内存可以释放,所以 cpu 反复 GC 无果,最后报错 GC 超限了。

所以根据推断,我调整了扩大了 driver 端内存的容量:

--driver-cores 4 --driver-memory 12g //这个扩大了driver的内存大小,同时可以考虑调大cpu的数量,毕竟测试的时候cpu使用率都达到1200%以上了

2.3、Job aborted due to stage failure: Total size of serialized results of 7 tasks (1084.0 MiB) is bigger than spark.driver.maxResultSize (1024.0 MiB)

衔接2.2,GC超限问题解决后,查询同一张表,秒出报错信息:

SQL 错误:org.apache.hive.service.cli.HiveSQLException:Error running query:org.apache.spark.SparkException:Job aborted due tostage failure:Total size of serialized results of 7 tasks (1084.0MiB) is bigger than spark.driver.maxResultSize (1024.0MiB)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation.org$apache$spark$sql$hive$thriftserver$SparkExecuteStatementOperation$$execute(SparkExecuteStatementOperation.scala:361)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation$$anon$2$$anon$3.$anonfun$run$2(SparkExecuteStatementOperation.scala:263)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at org.apache.spark.sql.hive.thriftserver.SparkOperation.withLocalProperties(SparkOperation.scala:78)

at org.apache.spark.sql.hive.thriftserver.SparkOperation.withLocalProperties$(SparkOperation.scala:62)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation.withLocalProperties(SparkExecuteStatementOperation.scala:43)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation$$anon$2$$anon$3.run(SparkExecuteStatementOperation.scala:263)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation$$anon$2$$anon$3.run(SparkExecuteStatementOperation.scala:258)

at java.security.AccessController.doPrivileged(NativeMethod)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1746)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation$$anon$2.run(SparkExecuteStatementOperation.scala:272)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)Caused by:org.apache.spark.SparkException:Job aborted due tostage failure:Total size of serialized results of 7 tasks (1084.0MiB) is bigger than spark.driver.maxResultSize (1024.0MiB)

at org.apache.spark.scheduler.DAGScheduler.failJobAndIndependentStages(DAGScheduler.scala:2258)

at org.apache.spark.scheduler.DAGScheduler.$anonfun$abortStage$2(DAGScheduler.scala:2207)

at org.apache.spark.scheduler.DAGScheduler.$anonfun$abortStage$2$adapted(DAGScheduler.scala:2206)

at scala.collection.mutable.ResizableArray.foreach(ResizableArray.scala:62)

at scala.collection.mutable.ResizableArray.foreach$(ResizableArray.scala:55)

at scala.collection.mutable.ArrayBuffer.foreach(ArrayBuffer.scala:49)

at org.apache.spark.scheduler.DAGScheduler.abortStage(DAGScheduler.scala:2206)

at org.apache.spark.scheduler.DAGScheduler.$anonfun$handleTaskSetFailed$1(DAGScheduler.scala:1079)

at org.apache.spark.scheduler.DAGScheduler.$anonfun$handleTaskSetFailed$1$adapted(DAGScheduler.scala:1079)

at scala.Option.foreach(Option.scala:407)

at org.apache.spark.scheduler.DAGScheduler.handleTaskSetFailed(DAGScheduler.scala:1079)

at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.doOnReceive(DAGScheduler.scala:2445)

at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.onReceive(DAGScheduler.scala:2387)

at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.onReceive(DAGScheduler.scala:2376)

at org.apache.spark.util.EventLoop$$anon$1.run(EventLoop.scala:49)

at org.apache.spark.scheduler.DAGScheduler.runJob(DAGScheduler.scala:868)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:2196)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:2217)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:2236)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:2261)

at org.apache.spark.rdd.RDD.$anonfun$collect$1(RDD.scala:1030)

at org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:151)

at org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:112)

at org.apache.spark.rdd.RDD.withScope(RDD.scala:414)

at org.apache.spark.rdd.RDD.collect(RDD.scala:1029)

at org.apache.spark.sql.execution.SparkPlan.executeCollect(SparkPlan.scala:390)

at org.apache.spark.sql.Dataset.collectFromPlan(Dataset.scala:3696)

at org.apache.spark.sql.Dataset.$anonfun$collect$1(Dataset.scala:2965)

at org.apache.spark.sql.Dataset.$anonfun$withAction$1(Dataset.scala:3687)

at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$5(SQLExecution.scala:103)

at org.apache.spark.sql.execution.SQLExecution$.withSQLConfPropagated(SQLExecution.scala:163)

at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$1(SQLExecution.scala:90)

at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:775)

at org.apache.spark.sql.execution.SQLExecution$.withNewExecutionId(SQLExecution.scala:64)

at org.apache.spark.sql.Dataset.withAction(Dataset.scala:3685)

at org.apache.spark.sql.Dataset.collect(Dataset.scala:2965)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation.org$apache$spark$sql$hive$thriftserver$SparkExecuteStatementOperation$$execute(SparkExecuteStatementOperation.scala:334)...16 more

由报错信息可知:某个 task 返回给 driver 的结果集已经超出了默认的大小,那么直接调大结果集的最大容量即可。

一开始我扩大了一倍到2g,但是后来2g又不够了,再给3g,继续不够,得最终直接给到6g算了,反正资源充足。

--conf spark.driver.maxResultSize=6g //如果需要不限制结果集大小的话,直接该参数 =0 即可。

但是该参数应该并不适合无限制放大,其他博主的方案是要减小分区的数量(写spark程序时需要进行优化考虑),以减小最后 driver 端的内存压力。

2.4、java.lang.OutOfMemoryError: Java heap space

当我查询更大的表时(数据量7000W行),最终还是支持不住,报错了:

SQL 错误:org.apache.hive.service.cli.HiveSQLException:Error running query:java.lang.OutOfMemoryError:Java heap space

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation.org$apache$spark$sql$hive$thriftserver$SparkExecuteStatementOperation$$execute(SparkExecuteStatementOperation.scala:361)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation$$anon$2$$anon$3.$anonfun$run$2(SparkExecuteStatementOperation.scala:263)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at org.apache.spark.sql.hive.thriftserver.SparkOperation.withLocalProperties(SparkOperation.scala:78)

at org.apache.spark.sql.hive.thriftserver.SparkOperation.withLocalProperties$(SparkOperation.scala:62)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation.withLocalProperties(SparkExecuteStatementOperation.scala:43)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation$$anon$2$$anon$3.run(SparkExecuteStatementOperation.scala:263)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation$$anon$2$$anon$3.run(SparkExecuteStatementOperation.scala:258)

at java.security.AccessController.doPrivileged(NativeMethod)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1746)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation$$anon$2.run(SparkExecuteStatementOperation.scala:272)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)Caused by:java.lang.OutOfMemoryError:Java heap space

at org.apache.spark.sql.execution.SparkPlan$$anon$1.next(SparkPlan.scala:373)

at org.apache.spark.sql.execution.SparkPlan$$anon$1.next(SparkPlan.scala:369)

at scala.collection.Iterator.foreach(Iterator.scala:941)

at scala.collection.Iterator.foreach$(Iterator.scala:941)

at org.apache.spark.sql.execution.SparkPlan$$anon$1.foreach(SparkPlan.scala:369)

at org.apache.spark.sql.execution.SparkPlan.$anonfun$executeCollect$1(SparkPlan.scala:391)

at org.apache.spark.sql.execution.SparkPlan.$anonfun$executeCollect$1$adapted(SparkPlan.scala:390)

at org.apache.spark.sql.execution.SparkPlan$$Lambda$3241/463491470.apply(UnknownSource)

at scala.collection.IndexedSeqOptimized.foreach(IndexedSeqOptimized.scala:36)

at scala.collection.IndexedSeqOptimized.foreach$(IndexedSeqOptimized.scala:33)

at scala.collection.mutable.ArrayOps$ofRef.foreach(ArrayOps.scala:198)

at org.apache.spark.sql.execution.SparkPlan.executeCollect(SparkPlan.scala:390)

at org.apache.spark.sql.Dataset.collectFromPlan(Dataset.scala:3696)

at org.apache.spark.sql.Dataset.$anonfun$collect$1(Dataset.scala:2965)

at org.apache.spark.sql.Dataset$$Lambda$2425/999790851.apply(UnknownSource)

at org.apache.spark.sql.Dataset.$anonfun$withAction$1(Dataset.scala:3687)

at org.apache.spark.sql.Dataset$$Lambda$1881/1956032123.apply(UnknownSource)

at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$5(SQLExecution.scala:103)

at org.apache.spark.sql.execution.SQLExecution$$$Lambda$1889/493204045.apply(UnknownSource)

at org.apache.spark.sql.execution.SQLExecution$.withSQLConfPropagated(SQLExecution.scala:163)

at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$1(SQLExecution.scala:90)

at org.apache.spark.sql.execution.SQLExecution$$$Lambda$1882/1152849040.apply(UnknownSource)

at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:775)

at org.apache.spark.sql.execution.SQLExecution$.withNewExecutionId(SQLExecution.scala:64)

at org.apache.spark.sql.Dataset.withAction(Dataset.scala:3685)

at org.apache.spark.sql.Dataset.collect(Dataset.scala:2965)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation.org$apache$spark$sql$hive$thriftserver$SparkExecuteStatementOperation$$execute(SparkExecuteStatementOperation.scala:334)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation$$anon$2$$anon$3.$anonfun$run$2(SparkExecuteStatementOperation.scala:263)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation$$anon$2$$anon$3$$Lambda$2021/540239691.apply$mcV$sp(UnknownSource)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at org.apache.spark.sql.hive.thriftserver.SparkOperation.withLocalProperties(SparkOperation.scala:78)

at org.apache.spark.sql.hive.thriftserver.SparkOperation.withLocalProperties$(SparkOperation.scala:62)

这里总结一下,Spark 常见的两类 OOM 问题:Driver OOM 和 Executor OOM。

如果是 driver 端的 OOM,可以考虑减少分区的数量和扩大 driver 端的内存容量。

如果发生在 executor,可以通过增加分区数量,减少每个 executor 负载。但是此时,会增加 driver 的负载。所以,可能同时需要增加 driver 内存。定位问题时,一定要先判断是哪里出现了 OOM ,对症下药,才能事半功倍。

3、问题留言

最后,我怀疑,是不是 spark 不适合一次性返回这么大的数据量(select * from tablename 这种方式),毕竟这么大数据量都是要进行网络传输的,无论如何 driver 端的压力都会是巨大的。如果有大神知道,望留言解答,蟹蟹各位!

版权归原作者 欲乘风,潇潇雨 所有, 如有侵权,请联系我们删除。