目录

一、基本概念

(一)Hive概念

Hive是基于Hadoop的数据仓库解决方案,将结构化的数据文件映射为数据库表,Hive提供类sql的查询语言HQL(Hive Query Language),Hive让更多的人使用Hadoop。

Hive官网:https://hive.apache.org/

(二)优势和特点

- 提供了一个简单的优化模型

- HQL类SQL语法,简化MR开发

- 支持在不同的计算框架上运行

- 支持在HDFS和HBase上临时查询数据

- 支持用户自定义函数、格式

- 常用于ETL操作和BI

- 稳定可靠(真实生产环境)的批处理

- 有庞大活跃的社区

- MapReduce执行效率更快,Hive开发效率更快

(三)Hive元数据管理

记录数据仓库中模型的定义、各层级间的映射关系:

- Hive存储在关系数据库中,默认的Hive默认数据库是Derby,轻量级内嵌SQL数据库,Derby非常适合测试和演示,存储在metastore_db目录中,实际生产一般存储在MySql中,修改配置文件hive-site.xml。

- HCatalog:将Hive元数据共享给其他应用程序。

- Hive的数据存储在hdfs上,Hive的select语句交给mapreduce来操作,减少写mapreduce的操作。

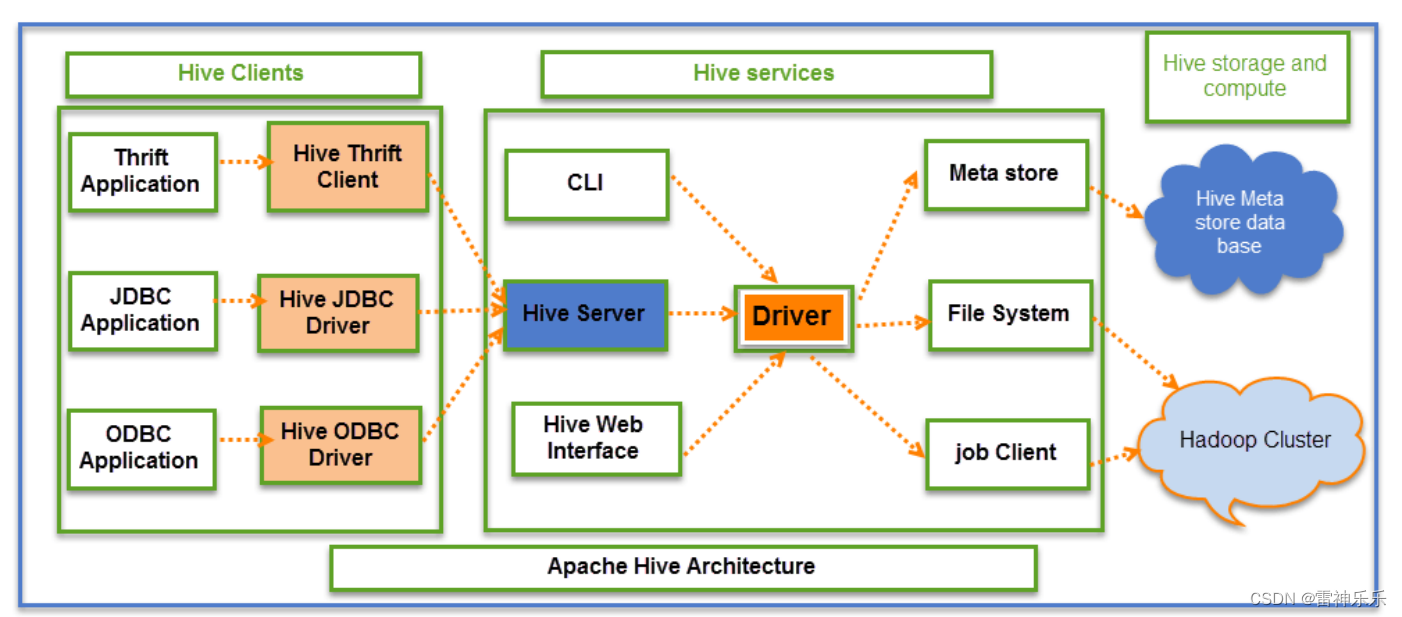

(四)Hive架构

Hive元数据存放在mysql中,表存放在hdfs中

(五)Hive Interface – 其他使用环境

1.Hive Web Interface

2.Hue (Cloudera)

3.Ambari Hive View (Hortonworks)

4.JDBC/ODBC(ETL工具,商业智能工具,集成开发环境)Informatica, Talend,Tableau, QlikView, Zeppelin,Oracle SQL Developer, DB Visualizer等。

二、Hive环境搭建

1.自动安装脚本

(解压、修改文件名、配置环境变量)

#! /bin/bash

echo 'auto install begining...'

# global var

hive=true

if [ "$hive" = true ];then

echo 'hive install set true'

echo 'setup apache-hive-3.1.2-bin.tar.gz'

tar -zxf /opt/install/apache-hive-3.1.2-bin.tar.gz -C /opt/soft/

mv /opt/soft/apache-hive-3.1.2-bin /opt/soft/hive312

sed -i '73a\export PATH=$PATH:$HIVE_HOME/bin' /etc/profile

sed -i '73a\export HIVE_HOME=/opt/soft/hive312' /etc/profile

sed -i '73a\# HIVE_HOME' /etc/profile

echo 'setup hive success!!!'

fi

2./opt/soft/hive312/conf目录下创建hive配置文件hive-site.xml

<?xml version="1.0" encoding="UTF-8" standalone="no"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/opt/soft/hive312/warehouse</value>

<description></description>

</property>

<property>

<name>hive.metastore.db.type</name>

<value>mysql</value>

<description></description>

</property>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://192.168.180.141:3306/hive147?createDatabaseIfNotExist=true</value>

<description></description>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.cj.jdbc.Driver</value>

<description></description>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

<description></description>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>root</value>

<description></description>

</property>

<property>

<name>hive.metastore.schema.verification</name>

<value>false</value>

<description>关闭schema验证</description>

</property>

<property>

<name>hive.cli.print.current.db</name>

<value>true</value>

<description>提示当前数据库名</description>

</property>

<property>

<name>hive.cli.print.header</name>

<value>true</value>

<description>查询输出时带列名一起输出</description>

</property>

</configuration>

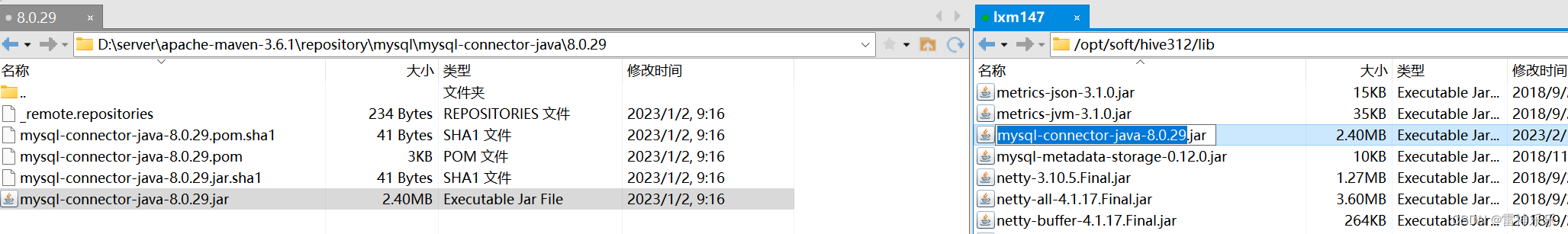

3.拷贝一个jar包到hive下面的lib目录下

4.删除hive的guava,拷贝hadoop下的guava

[root@lxm147 lib]# ls ./ | grep mysql-connector-java-8.0.29.jar

mysql-connector-java-8.0.29.jar

[root@lxm147 lib]# ls ./ | grep guava-19.0.jar

guava-19.0.jar

[root@lxm147 lib]# rm -f ./guava-19.0.jar

[root@lxm147 lib]# ls ./ | grep guava-19.0.jar

[root@lxm147 lib]# find /opt/soft/hadoop313/ -name guava*

/opt/soft/hadoop313/share/hadoop/common/lib/guava-27.0-jre.jar

/opt/soft/hadoop313/share/hadoop/hdfs/lib/guava-27.0-jre.jar

[root@lxm147 lib]# cp /opt/soft/hadoop313/share/hadoop/common/lib/guava-27.0-jre.jar ./

[root@lxm147 lib]# ls ./ | grep guava-27.0-jre.jar

guava-27.0-jre.jar

5.重启环境变量

source /etc/profile

6.启动hadoop服务

start-dfs.sh

start-yarn.sh

7.启动历史服务器

[root@lxm147 hive312]# mr-jobhistory-daemon.sh start historyserver

8.修改hive日志文件的存放位置

hive日志文件默认存放路径:/tmp/root/hive.log

修改hive-log4j2.properties.template文件

[root@lxm148 ~]# cd /opt/soft/hive312/conf/

[root@lxm148 conf]# mv ./hive-log4j2.properties.template ./hive-log4j2.properties

[root@lxm148 conf]# vim hive-log4j2.properties

# 修改第24行

property.hive.log.dir = /opt/soft/hive312/logs

9.HVM堆内存设置

[root@lxm148 conf]# pwd

/opt/module/hive312/conf

[root@lxm148 conf]# mv hive-env.sh.template hive-env.sh

# 将hive-env.sh其中的参数 export HADOOP_HEAPSIZE修改为2048

[root@lxm148 conf]# vim ./hive-env.sh

# The heap size of the jvm stared by hive shell script can be controlled via:

export HADOOP_HEAPSIZE=2048

10.首次连接Hive要初始化数据到mysql中

[root@lxm147 hive312]# schematool -dbType mysql -initSchema

**如果初始化出现错误,需要将mysql数据库中的hive147删除,然后hive再重新初始化 **

如果/tmp/root/目录下有hive.log,需要将其删除

11.启动hive的两种方法

第一种方法: 本地连接Hive

[root@lxm147 ~]# hive

which: no hbase in (/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/opt/soft/jdk180/bin:/opt/soft/hadoop313/bin:/opt/soft/hadoop313/sbin:/opt/soft/hadoop313/lib:/opt/soft/hive312/bin:/root/bin)

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/soft/hive312/lib/log4j-slf4j-impl-2.10.0.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/soft/hadoop313/share/hadoop/common/lib/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]

Hive Session ID = b02fa8fb-4597-4106-bc19-717baaf09932

Logging initialized using configuration in jar:file:/opt/soft/hive312/lib/hive-common-3.1.2.jar!/hive-log4j2.properties Async: true

Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

Hive Session ID = 8ad40a3f-3ac3-461f-80eb-f9c656aab10b

hive (default)> show databases;

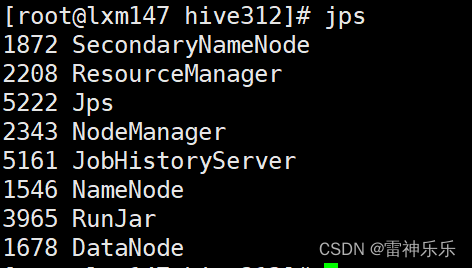

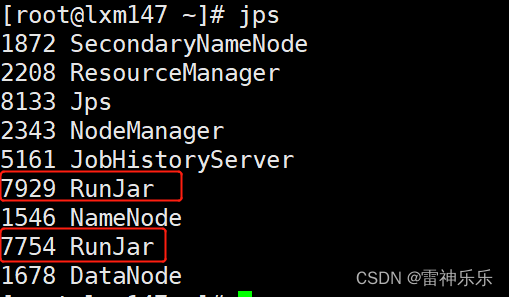

**Ctrl+c后RunJar就会退出 **

第二种:开启远程连接

# 先启动hiveserver2的服务

[root@lxm147 ~]# hive --service hiveserver2

# 再启动远程连接

[root@lxm147 ~]# beeline -u jdbc:hive2://192.168.180.147:10000

[root@lxm147 ~]# hive --service hiveserver2

which: no hbase in (/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/opt/soft/jdk180/bin:/opt/soft/hadoop313/bin:/opt/soft/hadoop313/sbin:/opt/soft/hadoop313/lib:/opt/soft/hive312/bin:/root/bin)

2023-02-17 08:49:45: Starting HiveServer2

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/soft/hive312/lib/log4j-slf4j-impl-2.10.0.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/soft/hadoop313/share/hadoop/common/lib/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]

Hive Session ID = 836e26fd-a569-429d-9d0c-e126ef369e04

2023-02-17 08:49:58,019 Log4j2-TF-2-AsyncLogger[AsyncContext@2471cca7]-1 ERROR Attempted to append to non-started appender query-routing

Hive Session ID = b3870d50-c9c2-46a5-bc08-dc17998ef08b

Hive Session ID = cc262716-a704-4eeb-8393-a34c4872cb61

Hive Session ID = 7cc18075-3afb-44d8-9ff2-b2400f0126ef

OK

[root@lxm147 ~]# beeline -u jdbc:hive2://192.168.180.147:10000

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/soft/hive312/lib/log4j-slf4j-impl-2.10.0.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/soft/hadoop313/share/hadoop/common/lib/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]

Connecting to jdbc:hive2://192.168.180.147:10000

Connected to: Apache Hive (version 3.1.2)

Driver: Hive JDBC (version 3.1.2)

Transaction isolation: TRANSACTION_REPEATABLE_READ

Beeline version 3.1.2 by Apache Hive

0: jdbc:hive2://192.168.180.147:10000>

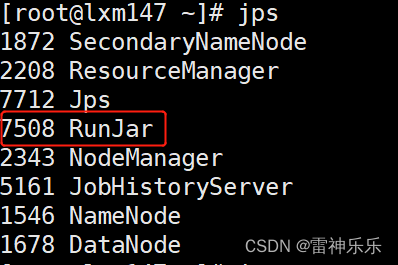

一个RunJar是hiveserver2,一个RunJar是beeline

必须先开启hiveservice2,才可以开启beeline

12.安装net-tools查看端口状态

[root@lxm147 ~]# yum -y install net-tools

[root@lxm147 ~]# netstat -nltp | grep 10000

tcp6 0 0 :::10000 :::* LISTEN 7754/java

13.赋予权限

[root@lxm147 ~]# hdfs dfs -chmod -R 777 /tmp

14.关闭所有hive后台运行下面的命令

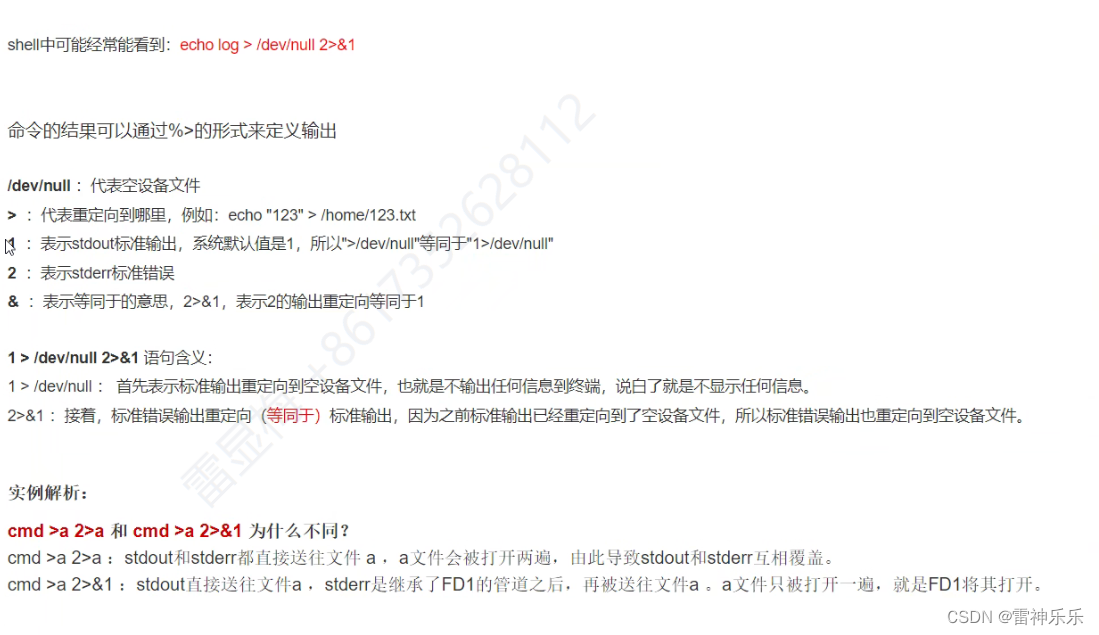

[root@lxm147 ~]# nohup hiveserver2 1>/dev/null 2>&1 &

[1] 3140

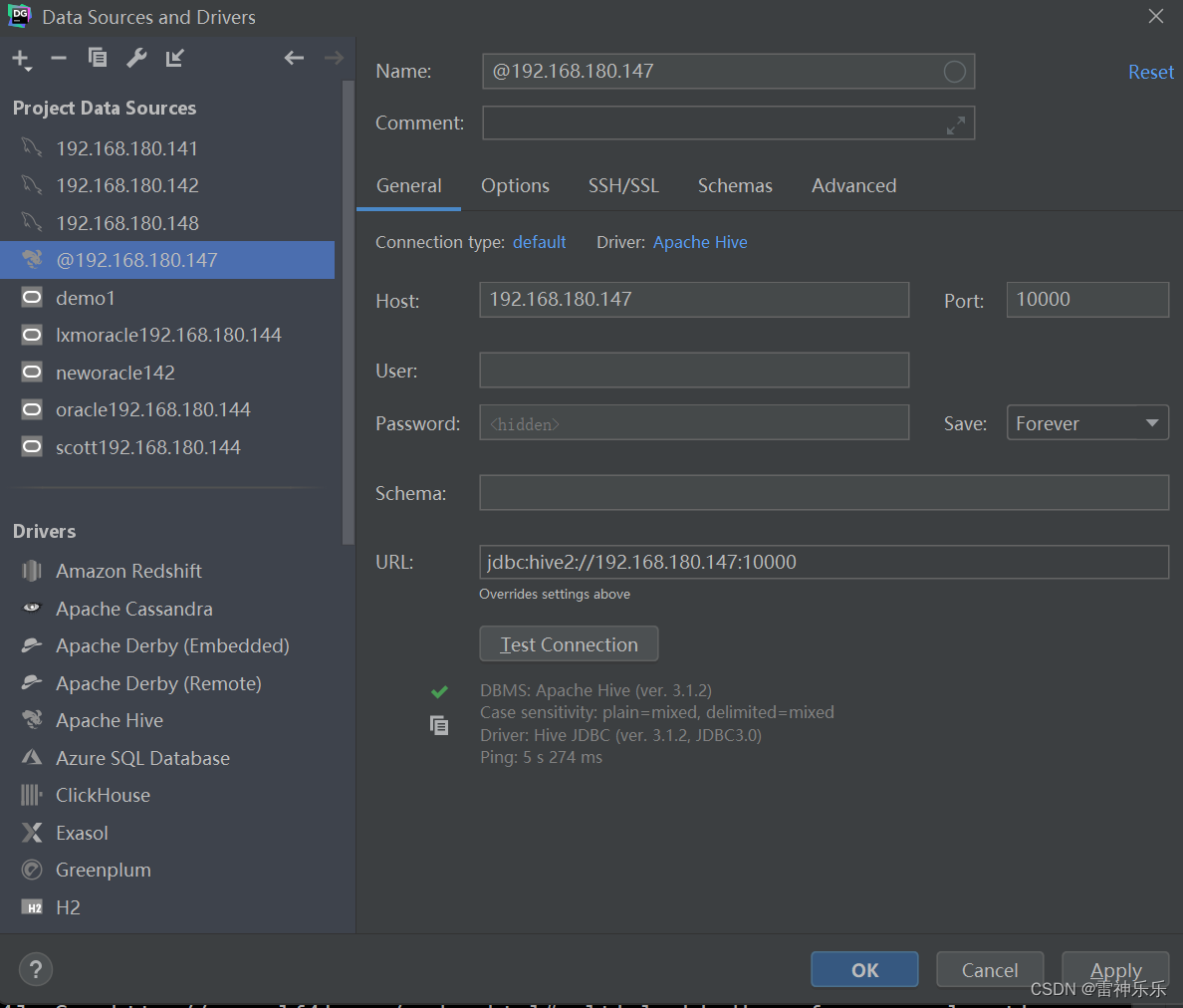

15.Datagrip远程连接

启动hive客户端:先启动hiveserver2(nohup hiveserver2 1>/dev/null 2>&1 &),然后datagrip才能连接

Hive元数据存放在mysql中,表存放在hdfs中

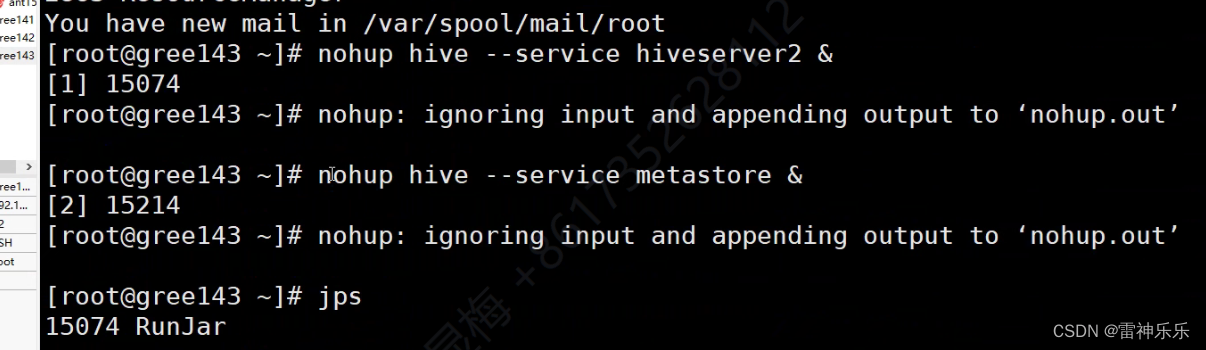

启动元数据服务

nohup hive --service hiveserver2 &

nohup hive --service metastore &

版权归原作者 雷神乐乐 所有, 如有侵权,请联系我们删除。