Installation and Deployment

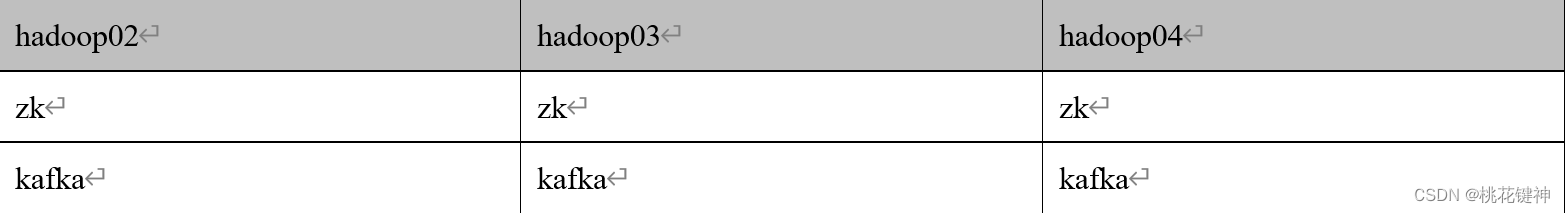

Cluster Planning

Cluster Deployment

- Official download page: http://kafka.apache.org/downloads.html

- Upload the installation package to the /opt/software directory on hadoop02

[atguigu@hadoop02 software]$ ll

-rw-rw-r--. 1 atguigu atguigu 86486610 Mar 10 12:33 kafka_2.12-3.0.0.tgz

- Extract the package into the /opt/module/ directory

[atguigu@hadoop02 software]$ tar -zxvf kafka_2.12-3.0.0.tgz -C /opt/module/

- Go to the /opt/module directory and rename the extracted directory to kafka

[atguigu@hadoop02 module]$ mv kafka_2.12-3.0.0 kafka

- Edit the configuration file server.properties in the config directory as follows

[atguigu@hadoop02 kafka]$ cd config/

[atguigu@hadoop02 config]$ vim server.properties

# Globally unique ID of this broker; it must be a number and must not be duplicated.

broker.id=02

# Number of threads that handle network requests

num.network.threads=3

# Number of threads that handle disk I/O

num.io.threads=8

# Send buffer size of the socket

socket.send.buffer.bytes=102400

# Receive buffer size of the socket

socket.receive.buffer.bytes=102400

# Maximum size of a single request that the socket server will accept

socket.request.max.bytes=104857600

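# Path where Kafka stores its logs (the data). The path does not need to exist in advance, Kafka creates it automatically. Multiple disk paths can be configured, separated by ","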

log.dirs=/opt/module/kafka/datas

# Default number of partitions for a newly created topic

num.partitions=1

# Number of threads per data directory used for log recovery at startup and flushing at shutdown

num.recovery.threads.per.data.dir=1

# Replication factor of the internal offsets topic (__consumer_offsets); set to 1 replica here

offsets.topic.replication.factor=1

# Maximum time a segment file is retained; segments older than this are deleted

log.retention.hours=168

# Maximum size of each segment file; the default is 1 GB

log.segment.bytes=1073741824

# Interval between checks for expired data; by default the check runs every 5 minutes

log.retention.check.interval.ms=300000

# ZooKeeper cluster connection string (a /kafka node is created under the ZooKeeper root for easier management)

zookeeper.connect=hadoop02:2181,hadoop03:2181,hadoop04:2181/kafka
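With this many comment lines, it can help to view only the settings that are actually in effect. The following is a minimal sketch and not part of the original tutorial; `effective_settings` and `get_setting` are hypothetical helper names introduced here for illustration.

```shell
#!/bin/bash
# Print only the effective key=value lines of a properties file,
# dropping blank lines and comment lines.
effective_settings() {
    local file="$1"
    grep -Ev '^[[:space:]]*(#|$)' "$file"
}

# Print the value of a single key (everything after the first "=").
get_setting() {
    local file="$1" key="$2"
    effective_settings "$file" |
        awk -F= -v k="$key" '$1 == k { print substr($0, length(k) + 2) }'
}
```

For example, `get_setting /opt/module/kafka/config/server.properties zookeeper.connect` would print the connection string configured above, including the /kafka suffix.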

- Configure the environment variables

[atguigu@hadoop02 kafka]$ sudo vim /etc/profile.d/my_env.sh

#KAFKA_HOME

export KAFKA_HOME=/opt/module/kafka

export PATH=$PATH:$KAFKA_HOME/bin

[atguigu@hadoop02 kafka]$ source /etc/profile

- Distribute the environment variable file and source it

[atguigu@hadoop02 kafka]$ xsync /etc/profile.d/my_env.sh

==================== hadoop02 ====================

sending incremental file list

sent 47 bytes received 12 bytes 39.33 bytes/sec

total size is 371 speedup is 6.29

==================== hadoop03 ====================

sending incremental file list

my_env.sh

rsync: mkstemp "/etc/profile.d/.my_env.sh.Sd7MUA" failed: Permission denied (13)

sent 465 bytes received 126 bytes 394.00 bytes/sec

total size is 371 speedup is 0.63

rsync error: some files/attrs were not transferred (see previous errors)(code 23) at main.c(1178)[sender=3.1.2]

==================== hadoop04 ====================

sending incremental file list

my_env.sh

rsync: mkstemp "/etc/profile.d/.my_env.sh.vb8jRj" failed: Permission denied (13)

sent 465 bytes received 126 bytes 1,182.00 bytes/sec

total size is 371 speedup is 0.63

rsync error: some files/attrs were not transferred (see previous errors)(code 23) at main.c(1178)[sender=3.1.2]

# At this point you might think that running it with sudo is enough, but is it really?

[atguigu@hadoop02 kafka]$ sudo xsync /etc/profile.d/my_env.sh

sudo: xsync: command not found

# The xsync script has to be copied to /usr/bin/ so that sudo (root) can find the xsync command

[atguigu@hadoop02 kafka]$ sudo cp /home/atguigu/bin/xsync /usr/bin/

[atguigu@hadoop02 kafka]$ sudo xsync /etc/profile.d/my_env.sh

# Run the source command on every node. If you do not have an xcall script, run source manually on each of the three nodes.

[atguigu@hadoop02 kafka]$ xcall source /etc/profile
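Copying the script into /usr/bin/ is one way around the error. The failure typically happens because sudo looks commands up in its own restricted search path (the `secure_path` setting in sudoers on many distributions) instead of the calling user's PATH. An alternative sketch, assuming xsync is on the current user's PATH, is to resolve the absolute path first and pass that to sudo. `resolve_cmd` and `sudo_user_cmd` are hypothetical helper names.

```shell
#!/bin/bash
# Resolve a command name to its location using the current user's PATH.
resolve_cmd() {
    command -v "$1"
}

# Hypothetical helper: run a user-local script through sudo by absolute path,
# so sudo's own search path no longer matters.
sudo_user_cmd() {
    local path
    path=$(resolve_cmd "$1") || { echo "not found: $1" >&2; return 1; }
    shift
    sudo "$path" "$@"
}

# Usage (assumes xsync is on the current user's PATH):
#   sudo_user_cmd xsync /etc/profile.d/my_env.sh
```

This avoids keeping a second copy of the script in /usr/bin/, at the cost of a slightly longer command.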

- Distribute the installation directory

[atguigu@hadoop02 module]$ xsync kafka/

- Change broker.id in the configuration file: on hadoop03 and hadoop04, edit server.properties and set broker.id=03 and broker.id=04 respectively

[atguigu@hadoop03 kafka]$ vim config/server.properties

broker.id=03

[atguigu@hadoop04 kafka]$ vim config/server.properties

broker.id=04
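Editing each file by hand works for three nodes. As a minimal sketch of how the same change could be scripted, the function below rewrites the broker.id line in place; `set_broker_id` is a hypothetical helper name, and the ssh usage in the comment assumes passwordless SSH between the nodes.

```shell
#!/bin/bash
# Replace the existing broker.id line in a server.properties file.
# A temporary file is used instead of "sed -i" so the sketch also works
# with non-GNU sed.
set_broker_id() {
    local file="$1" id="$2"
    sed "s/^broker\.id=.*/broker.id=${id}/" "$file" > "${file}.tmp" &&
        mv "${file}.tmp" "$file"
}

# Usage on the node itself (path taken from this article):
#   set_broker_id /opt/module/kafka/config/server.properties 03
# Or remotely, assuming passwordless SSH:
#   ssh hadoop03 "sed -i 's/^broker\.id=.*/broker.id=03/' /opt/module/kafka/config/server.properties"
```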

- Start the cluster

① Start the ZooKeeper cluster first

[atguigu@hadoop02 kafka]$ zk.sh start

② Start Kafka on the hadoop02, hadoop03, and hadoop04 nodes in turn

[atguigu@hadoop02 kafka]$ bin/kafka-server-start.sh -daemon config/server.properties

[atguigu@hadoop03 kafka]$ bin/kafka-server-start.sh -daemon config/server.properties

[atguigu@hadoop04 kafka]$ bin/kafka-server-start.sh -daemon config/server.properties
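Because the -daemon option returns immediately, a successful return does not by itself show that the broker is up. A minimal sketch of a polling helper follows; `wait_until` is a hypothetical name, and the `nc -z` probe in the usage comment is an assumption that netcat is installed (port 9092 is the port used by the commands later in this article).

```shell
#!/bin/bash
# Retry a command until it succeeds or the attempts run out.
#   $1 = maximum attempts, $2 = seconds to sleep between attempts,
#   remaining arguments = the command to run.
wait_until() {
    local tries="$1" delay="$2" n=0
    shift 2
    while [ "$n" -lt "$tries" ]; do
        "$@" && return 0
        n=$((n + 1))
        sleep "$delay"
    done
    return 1
}

# Hypothetical usage: wait up to 10 seconds for the broker port to open.
#   wait_until 10 1 nc -z hadoop02 9092 && echo "hadoop02 broker is listening"
```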

- Stop the cluster

[atguigu@hadoop02 kafka]$ bin/kafka-server-stop.sh

[atguigu@hadoop03 kafka]$ bin/kafka-server-stop.sh

[atguigu@hadoop04 kafka]$ bin/kafka-server-stop.sh

Kafka Cluster Start/Stop Script

- Write the script: in the /home/atguigu/bin directory, create a script file named kafka.sh:

#!/bin/bash
# Start or stop Kafka on every node of the cluster over SSH.
if (($# == 0)); then
    echo -e "Please provide an argument:\n start  start the Kafka cluster;\n stop   stop the Kafka cluster;\n" && exit
fi
case $1 in
"start")
    for host in hadoop03 hadoop02 hadoop04
    do
        echo "---------- $1 Kafka on $host ----------"
        ssh $host "/opt/module/kafka/bin/kafka-server-start.sh -daemon /opt/module/kafka/config/server.properties"
    done
    ;;
"stop")
    for host in hadoop03 hadoop02 hadoop04
    do
        echo "---------- $1 Kafka on $host ----------"
        ssh $host "/opt/module/kafka/bin/kafka-server-stop.sh"
    done
    ;;
*)
    echo -e "---------- Please provide a valid argument ----------\n"
    echo -e "start  start the Kafka cluster;\n stop   stop the Kafka cluster;\n" && exit
    ;;
esac

- Make the script file executable

[atguigu@hadoop02 bin]$ chmod +x kafka.sh
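Before running the script against real nodes, it can be useful to see which remote commands it would issue. The following dry-run sketch only prints the ssh commands instead of executing them; `kafka_remote_cmd` and `KAFKA_HOME_DIR` are hypothetical names, and the paths are the ones used in this article.

```shell
#!/bin/bash
# Install path used throughout this article.
KAFKA_HOME_DIR=/opt/module/kafka

# Print (do not run) the ssh command for one action on one host.
kafka_remote_cmd() {
    local action="$1" host="$2"
    case "$action" in
        start)
            echo "ssh $host \"$KAFKA_HOME_DIR/bin/kafka-server-start.sh -daemon $KAFKA_HOME_DIR/config/server.properties\""
            ;;
        stop)
            echo "ssh $host \"$KAFKA_HOME_DIR/bin/kafka-server-stop.sh\""
            ;;
        *)
            echo "unknown action: $action" >&2
            return 1
            ;;
    esac
}

# Show what a cluster-wide start would do.
for host in hadoop02 hadoop03 hadoop04; do
    kafka_remote_cmd start "$host"
done
```

Once the printed commands look right, the real script is invoked as `kafka.sh start` or `kafka.sh stop`.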

Kafka Command-Line Operations

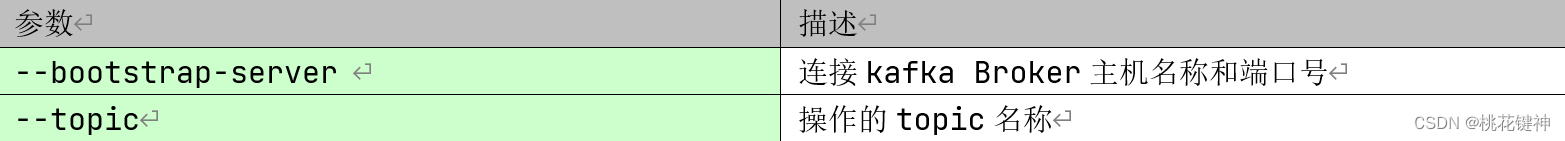

Topic Command-Line Operations

- View the parameters accepted by the topic command

[atguigu@hadoop02 kafka]$ bin/kafka-topics.sh

- The important parameters are as follows

- List all topics on the current server

[atguigu@hadoop02 kafka]$ bin/kafka-topics.sh --bootstrap-server hadoop02:9092 --list

- Create a topic named first

[atguigu@hadoop02 kafka]$ bin/kafka-topics.sh --bootstrap-server hadoop02:9092 --create --replication-factor 3 --partitions 1 --topic first

- View the details of the topic

[atguigu@hadoop02 kafka]$ bin/kafka-topics.sh --bootstrap-server hadoop02:9092 --describe --topic first

Topic: first TopicId: EVV4qHcSR_q0O8YyD32gFg PartitionCount: 1 ReplicationFactor: 3 Configs: segment.bytes=1073741824

Topic: first Partition: 0 Leader: 02 Replicas: 02,03,04 Isr: 02,03,04

- Change the number of partitions (note: the partition count can only be increased, never decreased)

[atguigu@hadoop02 kafka]$ bin/kafka-topics.sh --bootstrap-server hadoop02:9092 --alter --topic first --partitions 3
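Since the partition count can only grow, a script that alters topics may want to check the current count first. The following is a minimal sketch under the assumption that the --describe output has the header format shown in this article; `partition_count` and `can_increase_to` are hypothetical helper names.

```shell
#!/bin/bash
# Read `kafka-topics.sh --describe` output on stdin and print the
# PartitionCount value from the header line.
partition_count() {
    sed -n 's/.*PartitionCount: *\([0-9][0-9]*\).*/\1/p' | head -n 1
}

# Succeed only if the requested count is strictly greater than the current one.
can_increase_to() {
    local current="$1" requested="$2"
    [ "$requested" -gt "$current" ]
}

# Hypothetical usage:
#   current=$(bin/kafka-topics.sh --bootstrap-server hadoop02:9092 --describe --topic first | partition_count)
#   can_increase_to "$current" 3 && bin/kafka-topics.sh --bootstrap-server hadoop02:9092 --alter --topic first --partitions 3
```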

- View the details of the topic again

[atguigu@hadoop02 kafka]$ bin/kafka-topics.sh --bootstrap-server hadoop02:9092 --describe --topic first

Topic: first TopicId: EVV4qHcSR_q0O8YyD32gFg PartitionCount: 3 ReplicationFactor: 3 Configs: segment.bytes=1073741824

Topic: first Partition: 0 Leader: 02 Replicas: 02,03,04 Isr: 02,03,04

Topic: first Partition: 1 Leader: 03 Replicas: 03,04,02 Isr: 03,04,02

Topic: first Partition: 2 Leader: 04 Replicas: 04,02,03 Isr: 04,02,03
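In the output above, each partition line lists its leader broker, its replicas, and the in-sync replicas (Isr). As a minimal sketch of how to pull out just the partition-to-leader mapping, the function below scans each line for the `Partition:` and `Leader:` labels; `leaders_by_partition` is a hypothetical helper name, and the output format is assumed to match what is shown here.

```shell
#!/bin/bash
# Read `kafka-topics.sh --describe` output on stdin and print
# "<partition> <leader>" for every partition line.
leaders_by_partition() {
    awk '{
        p = ""; l = ""
        for (i = 1; i < NF; i++) {
            if ($i == "Partition:") p = $(i + 1)
            if ($i == "Leader:")    l = $(i + 1)
        }
        # The header line has no "Partition:" field, so it is skipped.
        if (p != "" && l != "") print p, l
    }'
}

# Hypothetical usage:
#   bin/kafka-topics.sh --bootstrap-server hadoop02:9092 --describe --topic first | leaders_by_partition
```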

- Delete the topic

[atguigu@hadoop02 kafka]$ bin/kafka-topics.sh --bootstrap-server hadoop02:9092 --delete --topic first

Producer Command-Line Operations

- View the parameters of the console producer

[atguigu@hadoop02 kafka]$ bin/kafka-console-producer.sh

- The important parameters are as follows:

- Produce messages

[atguigu@hadoop02 kafka]$ bin/kafka-console-producer.sh --bootstrap-server hadoop02:9092 --topic first

>hello world

>atguigu atguigu

Consumer Command-Line Operations

- View the parameters of the console consumer

[atguigu@hadoop02 kafka]$ bin/kafka-console-consumer.sh

- The important parameters are as follows:

- Consume messages

[atguigu@hadoop02 kafka]$ bin/kafka-console-consumer.sh --bootstrap-server hadoop02:9092 --topic first

- Consume from the beginning

[atguigu@hadoop02 kafka]$ bin/kafka-console-consumer.sh --bootstrap-server hadoop02:9092 --from-beginning --topic first

This article is reposted from: https://blog.csdn.net/weixin_50843918/article/details/128606111

Copyright belongs to the original author, 桃花键神. If there is any infringement, please contact us for removal.
