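Earlier we built a Hadoop cluster and a Spark cluster, and used containers to set up a Spark programming environment. In practice, though, development of a parallel program usually starts on a single machine, for example with Hadoop's single-node mode. Always having to spin up a container or a virtual machine and develop through VS Code remote access gets tiresome. Fortunately, both Hadoop and Spark support Windows, so let's set up a development environment on Windows.

Building that environment on Windows requires a helper program, winutils.exe, which has to be compiled against the Hadoop version you downloaded. Prebuilt exe files are hard to find online, and the veterans who have compiled one tend to host it on CSDN and charge a small fee. So let's compile it ourselves and learn something along the way.

I. Setting up the prerequisites

Many online guides only list which software to install without explaining why. So I followed Occam's razor: install as little as possible, then add one more tool each time I fell into a pit. That is how I worked out what each tool is for: Maven does not compile native code itself; it calls a set of external, cross-platform build tools through plugins, and when a plugin cannot find the tool it needs, the build fails with an error.

So every piece of software mentioned here, without exception, has to be installed.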

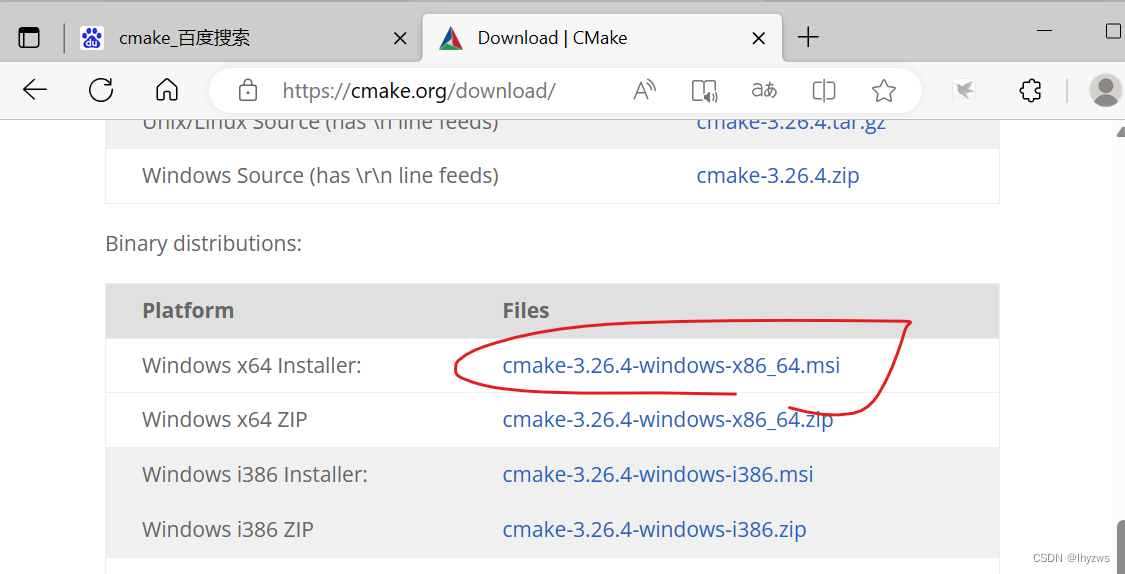

1. CMAKE

到官方网站Download | CMake下载安装包进行安装:

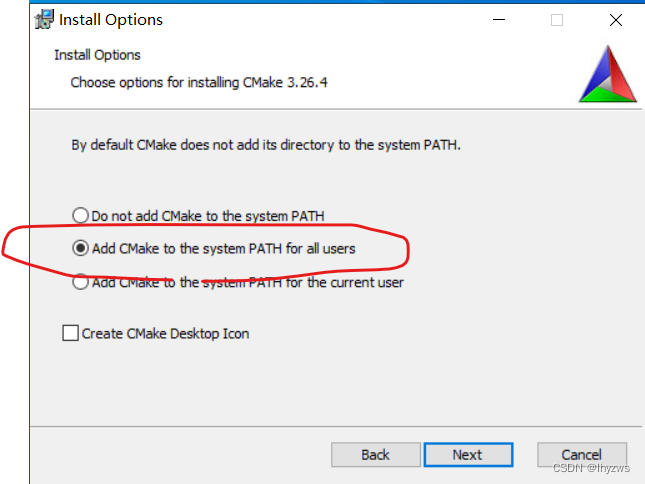

如果不打算手工修改环境变量的话,记得勾上这个:

成功安装,则可在命令行执行版本检查:

Microsoft Windows [版本 10.0.19044.1288]

(c) Microsoft Corporation。保留所有权利。

C:\Users\pig>cmake -version

cmake version 3.26.4

CMake suite maintained and supported by Kitware (kitware.com/cmake).

C:\Users\pig>

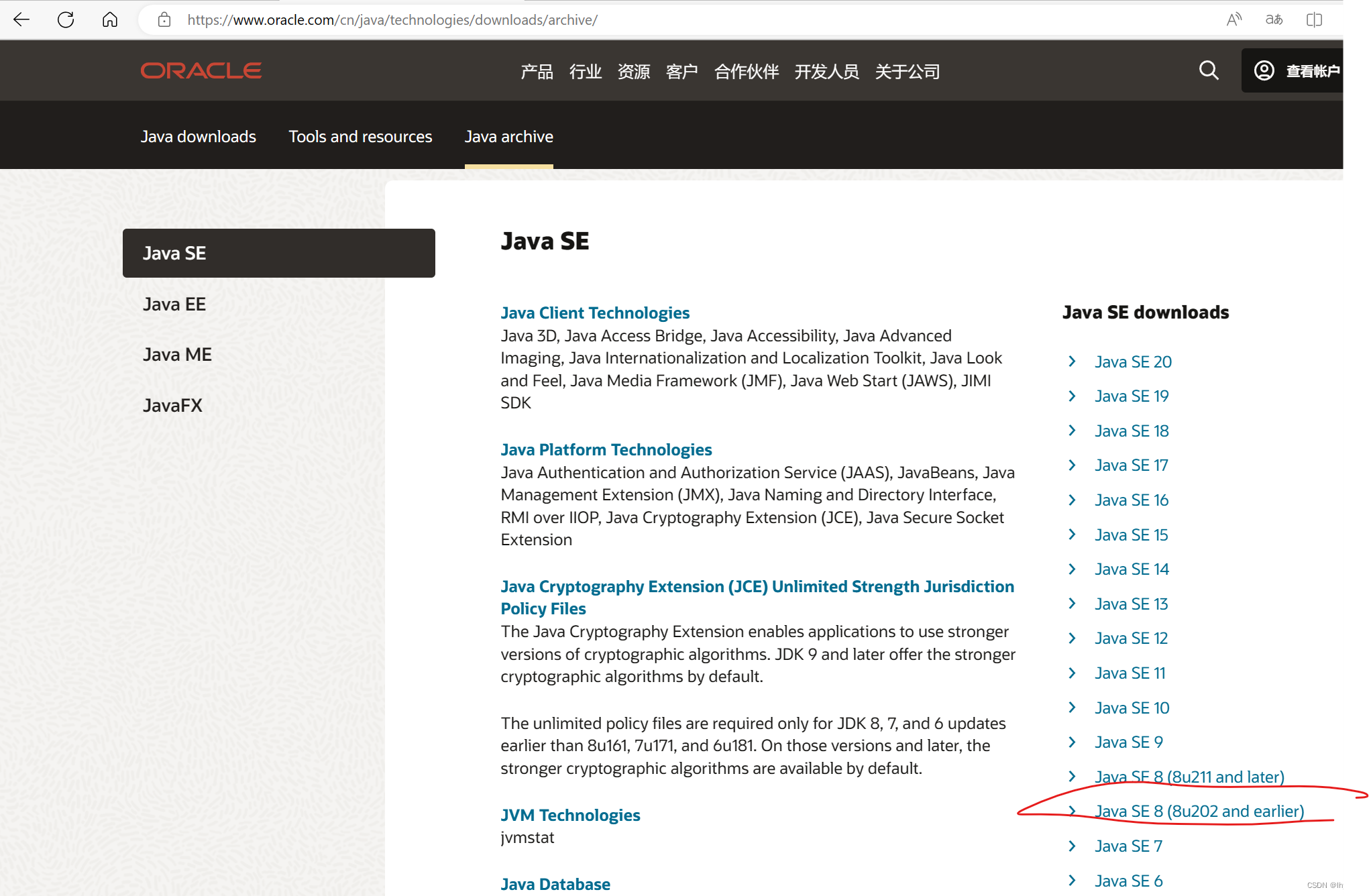

2. JDK 1.8

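Both Maven's configuration files and Hadoop's pom files default to JDK 1.8. We did get burned by 1.8 earlier and switched to 11, but here it is better to switch back. Otherwise some 1.8 setting tucked away in a corner that was not changed to 11 could make the build fail.

Download and install it:

After installation, you should be able to check the version number in cmd: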

Microsoft Windows [版本 10.0.19044.1288]

(c) Microsoft Corporation。保留所有权利。

C:\Users\pig>java -version

java version "1.8.0_202"

Java(TM) SE Runtime Environment (build 1.8.0_202-b08)

Java HotSpot(TM) 64-Bit Server VM (build 25.202-b08, mixed mode)

C:\Users\pig>

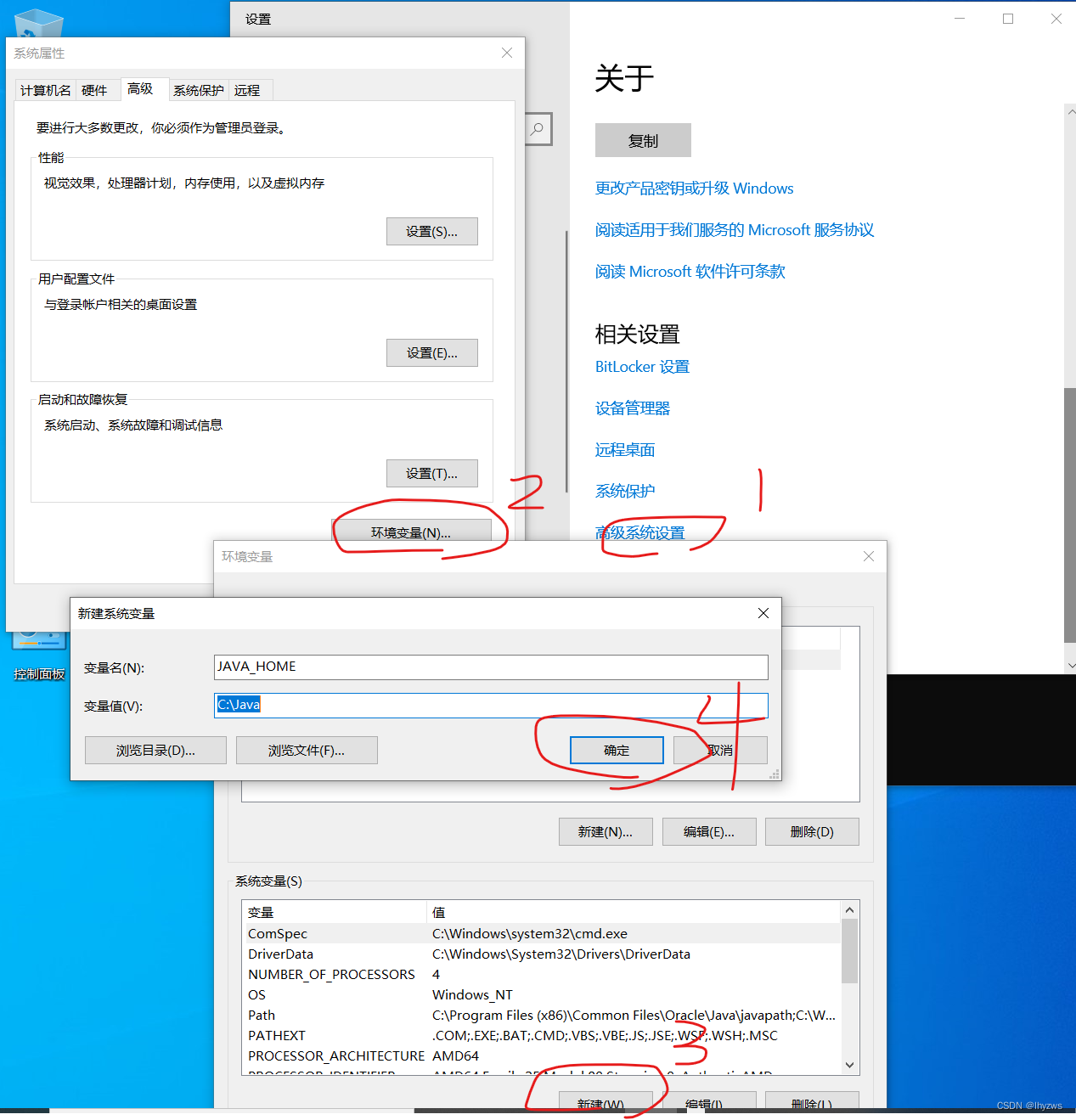

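Then, under Advanced system settings, set the environment variable JAVA_HOME to the installation directory. We changed the installation directory here; by default it would be under Program Files.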
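Because both the Maven configuration and the Hadoop poms expect JDK 1.8, it can be worth checking the version string before starting a long build. Below is a minimal Python sketch, not part of the original setup; the function names are made up for illustration, and it only inspects the quoted version string in text like the output shown above.

```python
import re
from typing import Optional, Tuple


def parse_java_version(version_output: str) -> Optional[Tuple[int, int]]:
    """Extract (major, minor) from the first quoted version string.

    `java -version` prints e.g. `java version "1.8.0_202"`; newer JDKs
    print e.g. `openjdk version "11.0.18"`.
    """
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if match is None:
        return None
    major = int(match.group(1))
    minor = int(match.group(2)) if match.group(2) else 0
    return major, minor


def is_java8(version_output: str) -> bool:
    """True when the output reports a 1.8.x (Java 8) runtime."""
    return parse_java_version(version_output) == (1, 8)
```

If you feed this from a subprocess call, note that `java -version` writes to stderr, so stderr is the stream to capture.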

3. Maven

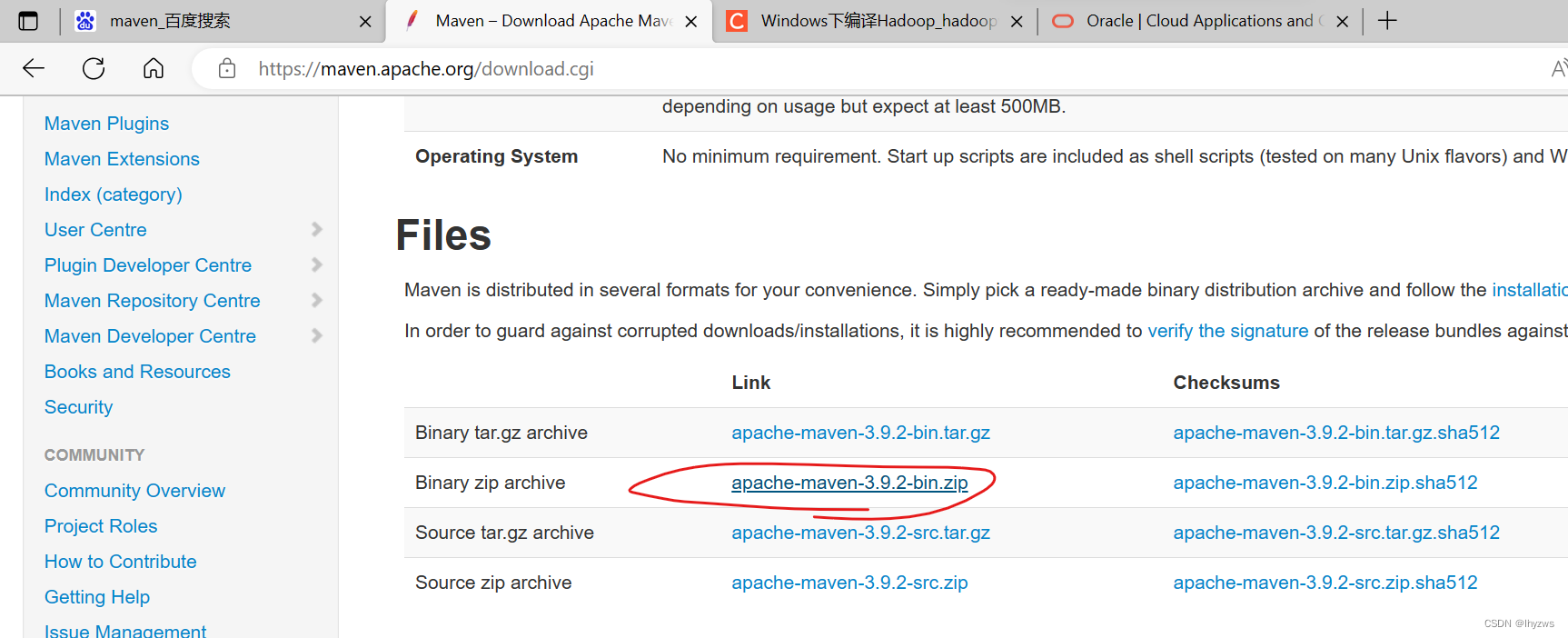

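Download the prebuilt binary zip from the official Maven site (Maven – Download Apache Maven) and simply unzip it on Windows.

(1) Set the environment variables

Maven needs two environment variables: PATH and MAVEN_HOME.

MAVEN_HOME should point to the directory where we unpacked Maven:

PATH needs the absolute path of the bin directory under the Maven directory added to it:

Once these are set, open a new cmd window and run mvn -v; you should see output like this: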

C:\Users\pig>mvn -v

Apache Maven 3.9.2 (c9616018c7a021c1c39be70fb2843d6f5f9b8a1c)

Maven home: C:\maven

Java version: 1.8.0_202, vendor: Oracle Corporation, runtime: C:\Java\jre

Default locale: zh_CN, platform encoding: GBK

OS name: "windows 10", version: "10.0", arch: "amd64", family: "windows"

C:\Users\pig>

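(2) Set the local repository and the Aliyun mirror

Edit the settings.xml file in the MAVEN_HOME/conf directory as follows:

**Set the local repository directory to c:/m2repo** and create that directory. By default the repository lives in the .m2 directory under the current user's home directory; I changed it for convenience.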

<!-- localRepository

| The path to the local repository maven will use to store artifacts.

|

| Default: ${user.home}/.m2/repository

<localRepository>/path/to/local/repo</localRepository>

-->

<localRepository>c:/m2repo</localRepository>

**Add the Aliyun mirror** so that downloads are much faster:

<mirrors>

<!-- mirror

| Specifies a repository mirror site to use instead of a given repository. The repository that

| this mirror serves has an ID that matches the mirrorOf element of this mirror. IDs are used

| for inheritance and direct lookup purposes, and must be unique across the set of mirrors.

|

<mirror>

<id>mirrorId</id>

<mirrorOf>repositoryId</mirrorOf>

<name>Human Readable Name for this Mirror.</name>

<url>http://my.repository.com/repo/path</url>

</mirror>

-->

<mirror>

<id>aliyunmaven</id>

<mirrorOf>*</mirrorOf>

<name>阿里云公共仓库</name>

<url>https://maven.aliyun.com/repository/public</url>

</mirror>

<mirror>

<id>maven-default-http-blocker</id>

<mirrorOf>external:http:*</mirrorOf>

<name>Pseudo repository to mirror external repositories initially using HTTP.</name>

<url>http://0.0.0.0/</url>

<blocked>true</blocked>

</mirror>

</mirrors>
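A typo in settings.xml is easy to miss, so here is a small, hedged Python sketch that reads a settings file back and reports the local repository and mirrors it actually declares. The function names are hypothetical; the parsing deliberately ignores XML namespaces so it does not depend on which settings schema version your Maven uses, and commented-out elements are skipped by the XML parser.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List, Optional


def _local(tag: str) -> str:
    """Drop an XML namespace prefix such as '{http://...}mirror'."""
    return tag.rsplit("}", 1)[-1]


def summarize_settings(xml_text: str) -> Dict[str, object]:
    """Return the localRepository and the list of mirrors in a settings.xml."""
    root = ET.fromstring(xml_text)
    local_repo: Optional[str] = None
    mirrors: List[Dict[str, str]] = []
    for elem in root.iter():
        name = _local(elem.tag)
        if name == "localRepository":
            local_repo = (elem.text or "").strip()
        elif name == "mirror":
            # Collect child elements such as id, mirrorOf, name and url.
            mirrors.append(
                {_local(child.tag): (child.text or "").strip() for child in elem}
            )
    return {"localRepository": local_repo, "mirrors": mirrors}
```

Calling `summarize_settings(open(path, encoding="utf-8").read())` on the edited file should show c:/m2repo and the aliyunmaven mirror if the edits above landed where intended.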

With the configuration in place, open a new cmd window and run mvn help:system:

C:\Users\pig>mvn help:system

[INFO] Scanning for projects...

Downloading from aliyunmaven: https://maven.aliyun.com/repository/public/org/apache/maven/plugins/maven-clean-plugin/3.2.0/maven-clean-plugin-3.2.0.pom

…………

…………

MAVEN_PROJECTBASEDIR=C:\Users\pig

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 13.828 s

[INFO] Finished at: 2023-06-10T21:02:29+08:00

[INFO] ------------------------------------------------------------------------

[WARNING]

[WARNING] Plugin validation issues were detected in 1 plugin(s)

[WARNING]

[WARNING] * org.apache.maven.plugins:maven-site-plugin:3.12.1

[WARNING]

[WARNING] For more or less details, use 'maven.plugin.validation' property with one of the values (case insensitive): [BRIEF, DEFAULT, VERBOSE]

[WARNING]

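If all goes well, mvn downloads some default dependencies, and the local repository directory you specified should no longer be empty:

4. Install Visual Studio

If your only reason for installing this heavyweight is to compile protobuf and get protoc.exe, you can skip it entirely by downloading a prebuilt executable instead (that is, by not compiling protobuf). If you do want to compile, install version 2010 or later. 2010 is the best choice, because 2019 brings a few extra problems of its own.

**However, if you also plan to go on and compile Hadoop, and would rather not see errors such as "Failed to execute goal org.codehaus.mojo:exec-maven-plugin:1.3.1:exec", Visual Studio still has to be installed, since Maven may use the exec plugin to call the Visual Studio compiler.**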

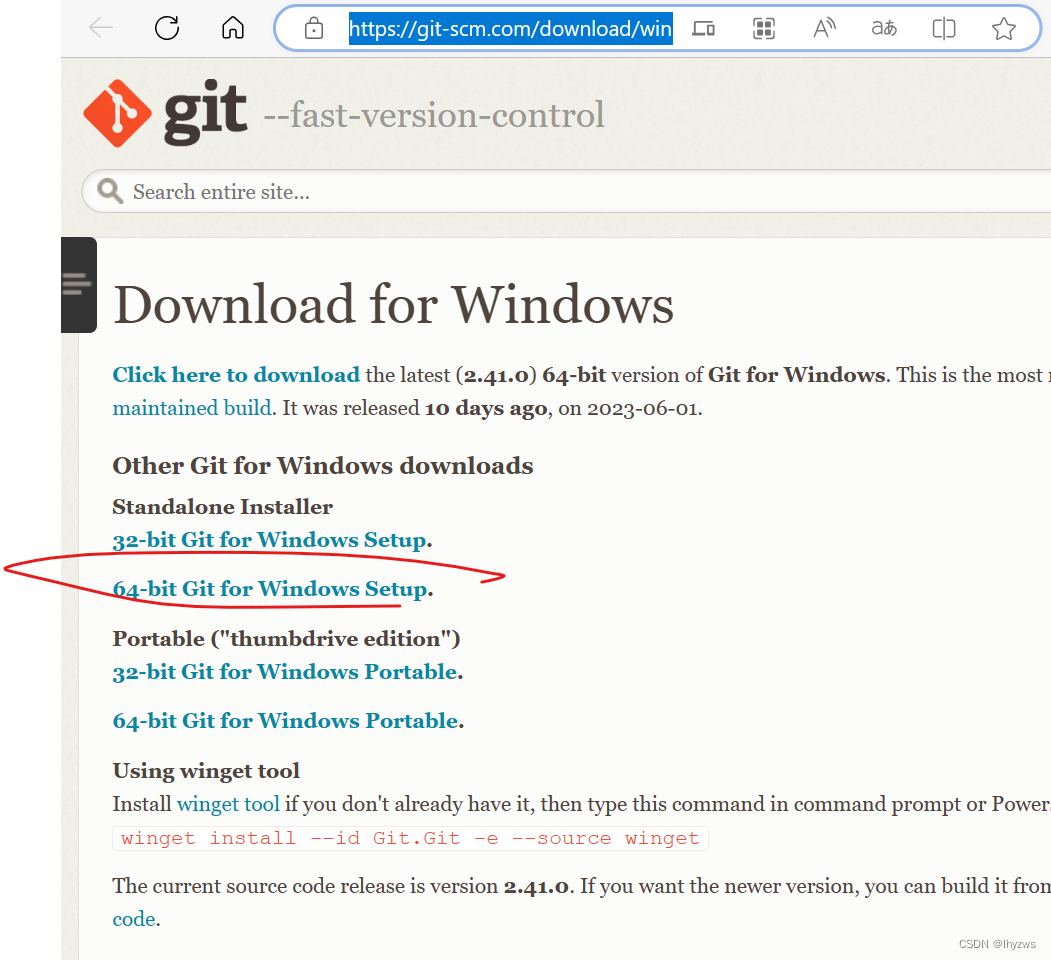

** 5. 安装Git**

安装git,除了可能会用到git clone或者git submodule update以外,最主要的作用,是使用Git中自带的git bash。因为后面在编译的时候,maven会使用ant插件运行sh脚本,这个需要bash支持。

下载的官网地址在Git - Downloading Package (git-scm.com)

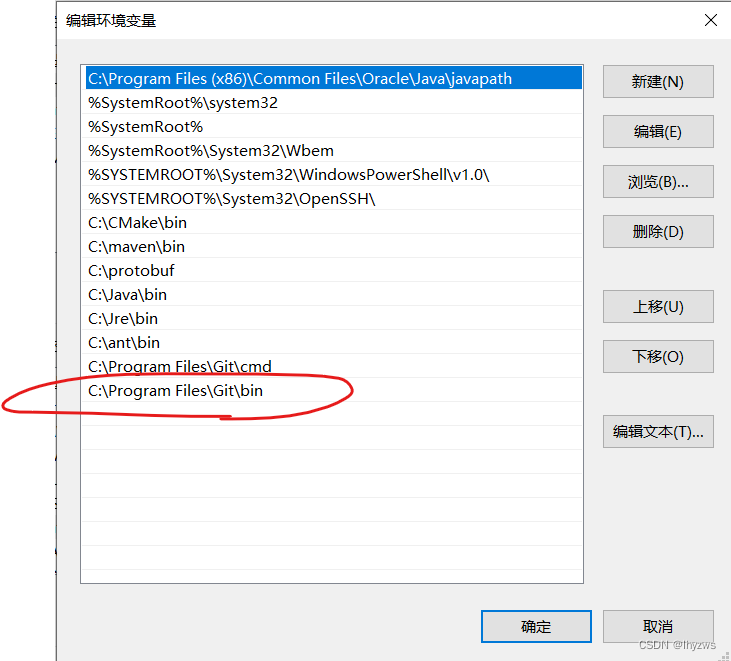

一路默认安装就好,但是默认条件下,加入环境变量PATH的是git-cmd目录,bash并不在这个目录中,

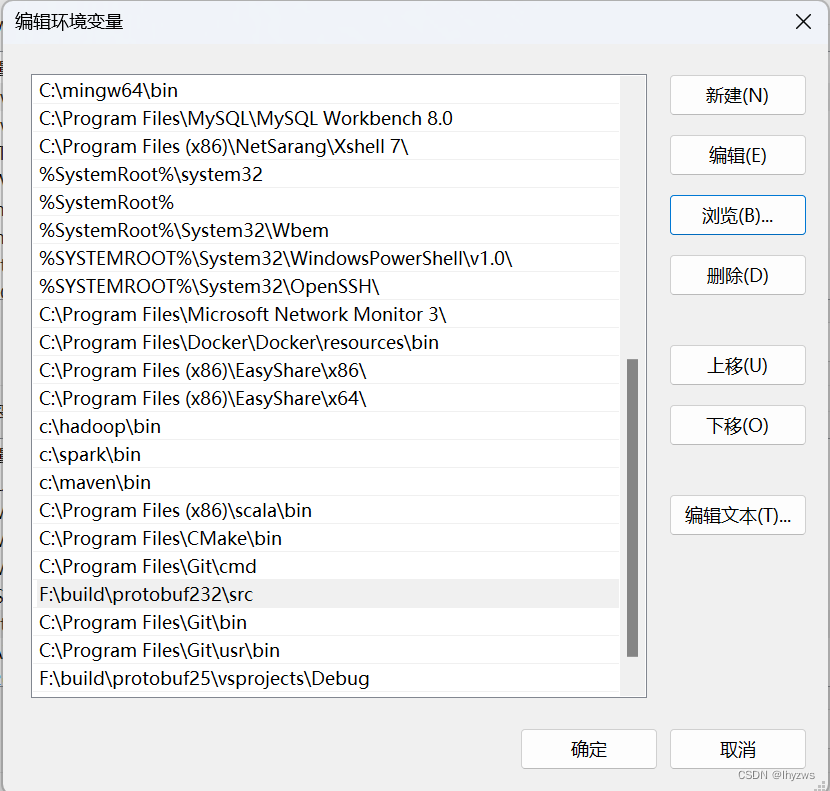

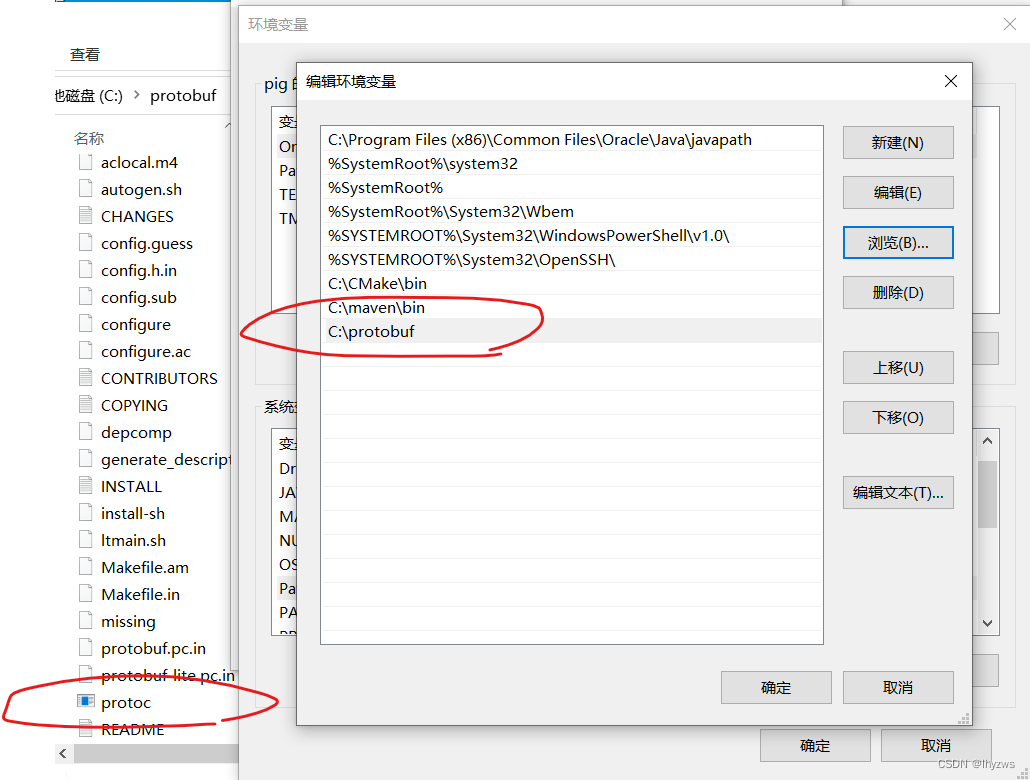

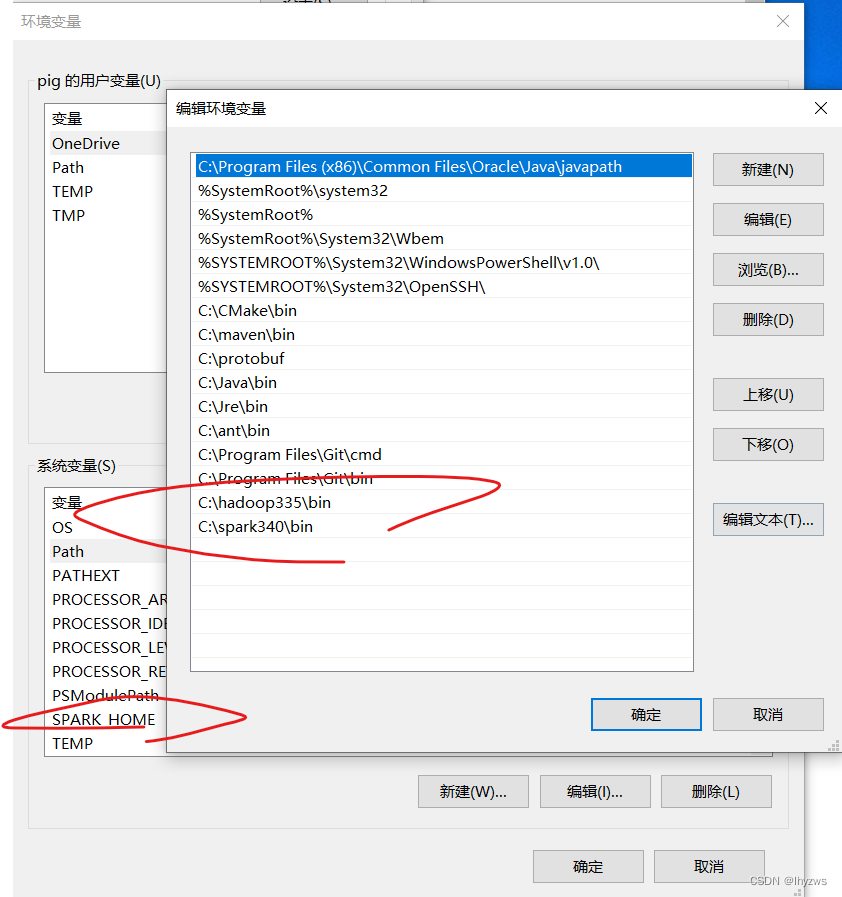

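This is what my PATH looked like when the build finally succeeded. You can ignore the ant entry above: I downloaded and installed Ant by hand to be safe, but it did not seem to make any difference, since Maven's antrun plugin appears to cover its role.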
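Since most of the failures later in this article come down to a tool that cannot be found on PATH, a quick check up front can save a few failed builds. The following Python sketch is only an illustration: the tool list is my reading of the prerequisites above, and `missing_tools` is a made-up helper name.

```python
import shutil
from typing import Callable, Iterable, List, Optional

# Tools this article relies on. msbuild is left out on purpose: it is
# normally only visible inside a Visual Studio command prompt.
REQUIRED_TOOLS = ["cmake", "java", "mvn", "git", "bash", "protoc"]


def missing_tools(
    tools: Iterable[str],
    which: Callable[[str], Optional[str]] = shutil.which,
) -> List[str]:
    """Return the tools that cannot be resolved through PATH."""
    return [tool for tool in tools if which(tool) is None]


if __name__ == "__main__":
    missing = missing_tools(REQUIRED_TOOLS)
    if missing:
        print("Not found on PATH:", ", ".join(missing))
    else:
        print("All required tools were found on PATH.")
```

The `which` parameter exists only so the lookup can be replaced in tests; in normal use the default `shutil.which` consults the real PATH.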

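II. Compiling protobuf

protobuf has to be compiled because the Hadoop build needs the protoc.exe compiler. If you want to avoid the trouble, you can simply download the prebuilt exe: https://github.com/protocolbuffers/protobuf/releases/download/v2.5.0/protoc-2.5.0-win32.zip.

Whether you compile from source or download the executable, **make sure it is version 2.5.0**. The Hadoop source build currently requires it, and any other version causes all kinds of baffling plugin execution errors. **Do not forget this!**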
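Given how much depends on the exact protoc version, a tiny guard can make the requirement explicit. This is a hedged Python sketch with hypothetical function names; it only parses text of the form printed by `protoc --version` and compares it with the 2.5.0 that this article asks for.

```python
import re
from typing import Optional

REQUIRED_PROTOC = "2.5.0"  # the version this article insists on for Hadoop


def protoc_version(output: str) -> Optional[str]:
    """Pull the version out of `protoc --version` output, e.g. 'libprotoc 2.5.0'."""
    match = re.search(r"libprotoc\s+(\d+(?:\.\d+)*)", output)
    return match.group(1) if match else None


def is_required_protoc(output: str, required: str = REQUIRED_PROTOC) -> bool:
    """True only when the reported version matches the required one exactly."""
    return protoc_version(output) == required
```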

1. 下载protobuf

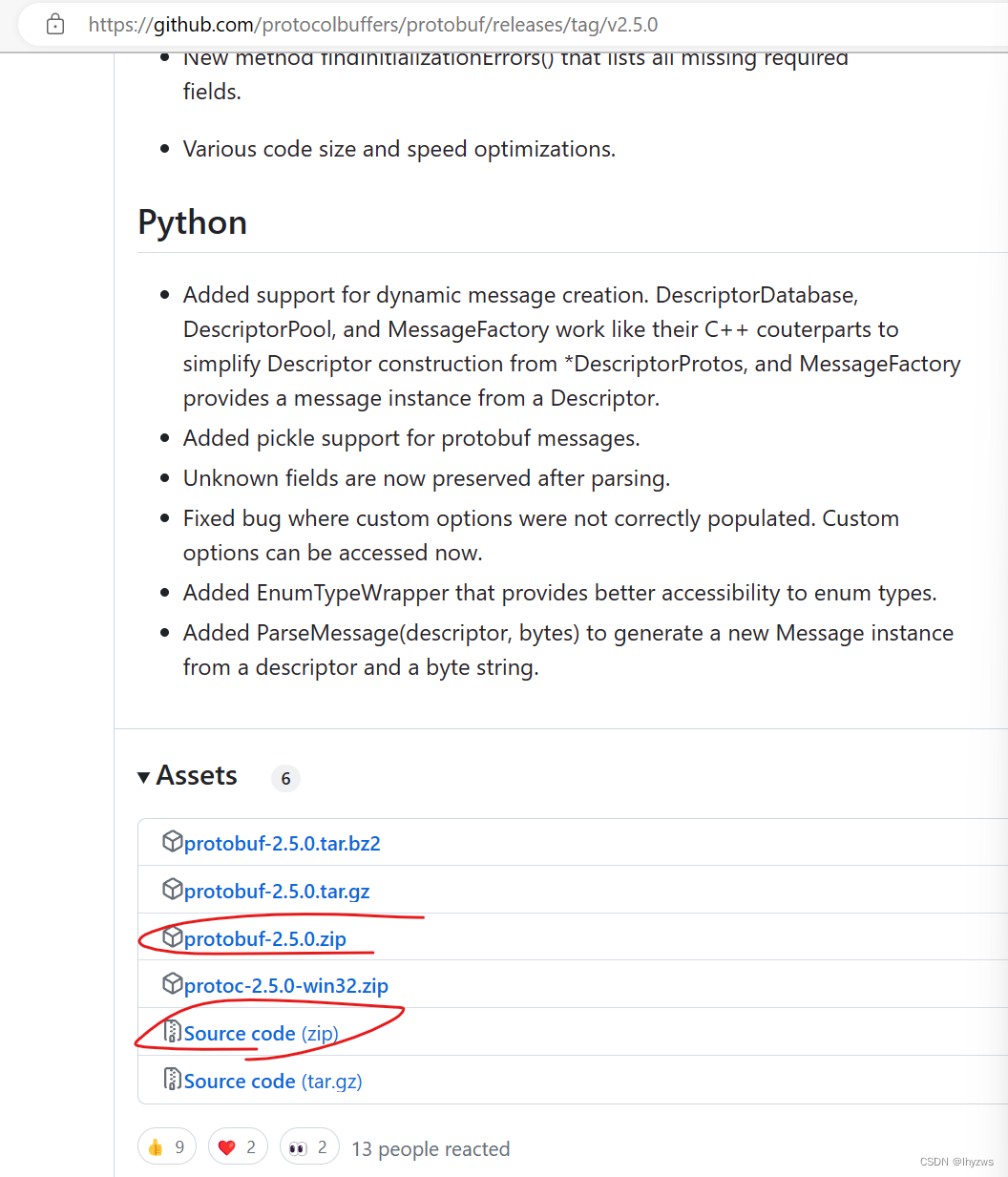

protobuf的下载地址在github上:Release Protocol Buffers v2.5.0 · protocolbuffers/protobuf · GitHub

因为魔法的缘故,打开这个网站需要碰运气,多刷几次就行:

两个红圈中的都是源代码,看起来连接不一样,实际下下来的压缩包完全一样。如果是要下可执行文件,中间那个就是,对应上面我们已经给出的链接。

2. 编译生成protoc

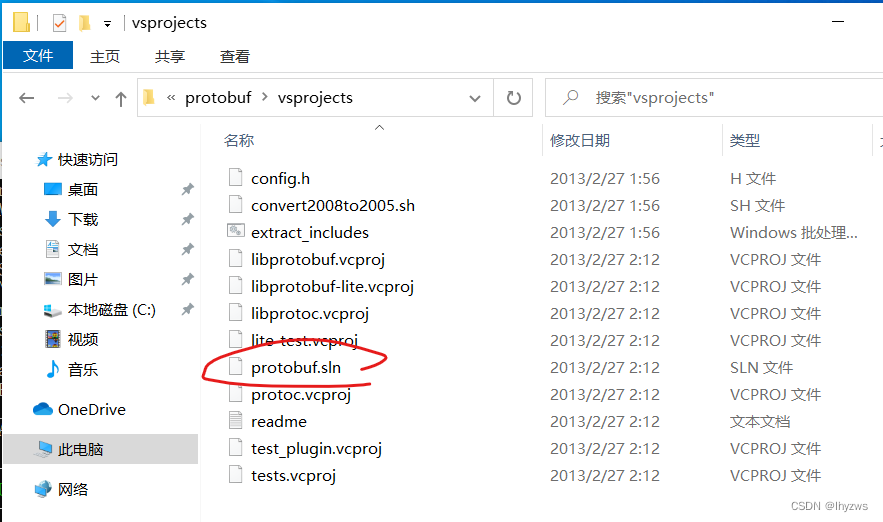

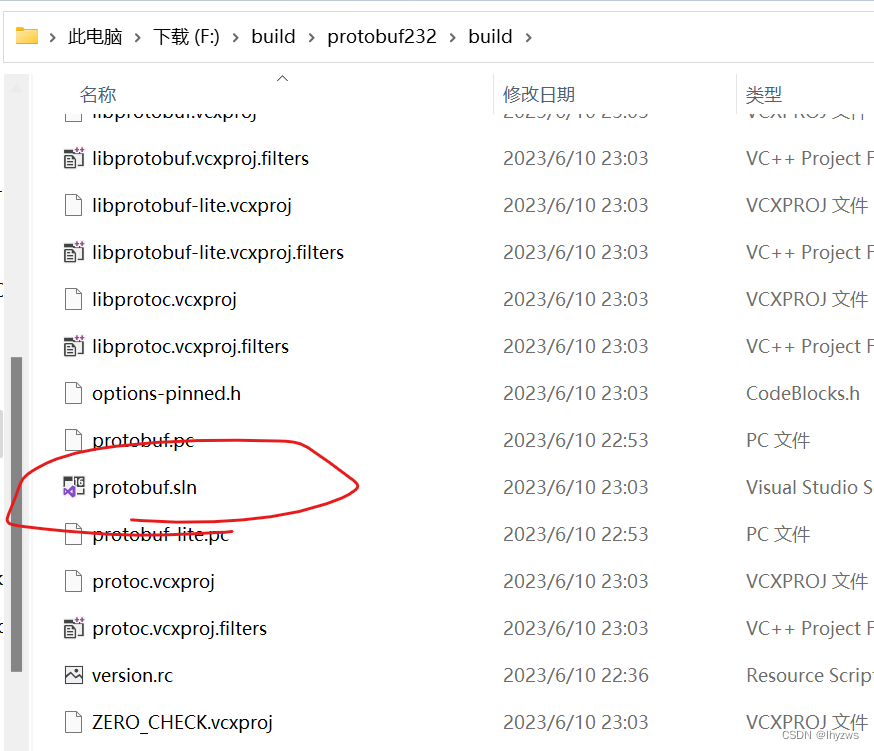

2. 2.5.0版本的protobuf编译起来十分简单,因为它提供了visual studio的工程文件sln,在protobuf\vsprojects目录下,使用VS Studio将其打开即可:

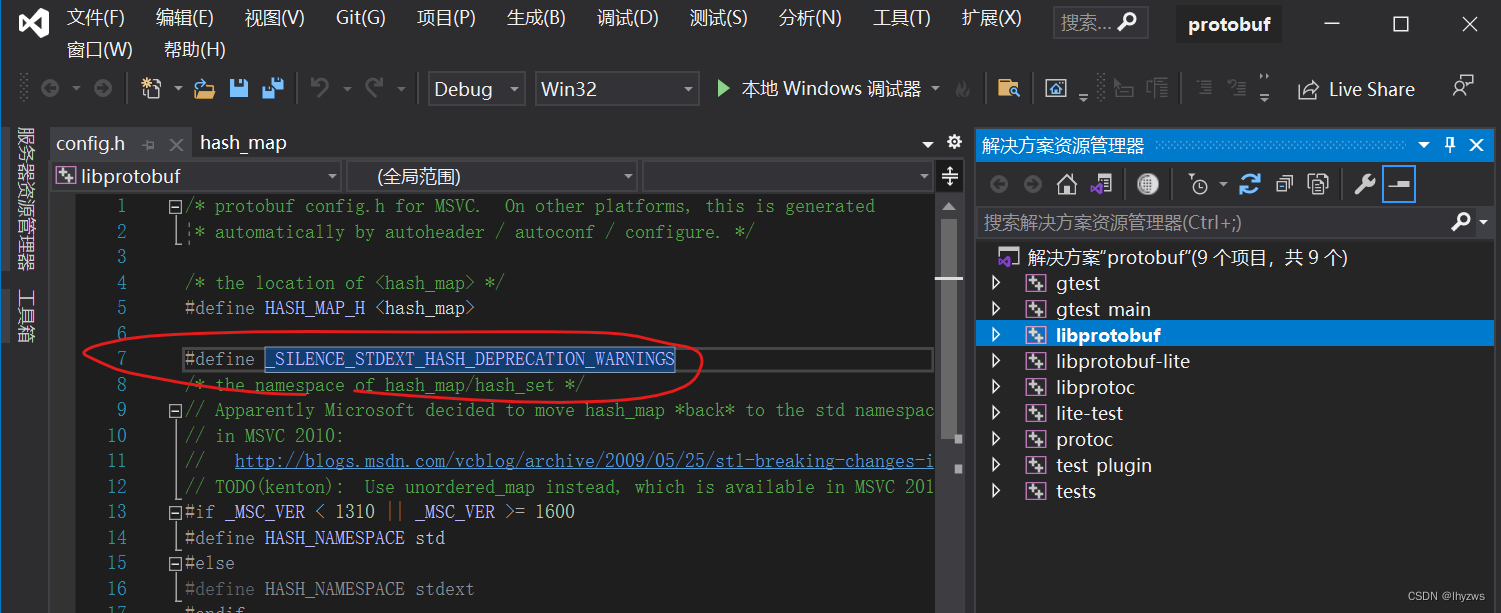

使用Visual Studio编译的过程就不赘述了,点几个按钮而已。 当然,如果使用的是VS 2019,需要注意以下这个问题:

在默认启动项libprotobuf的header文件加中,找到config.h文件,在其中添加一个宏定义

#define _SILENCE_STDEXT_HASH_DEPRECATION_WARNINGS

以避免因为版本问题出现hash_map错误

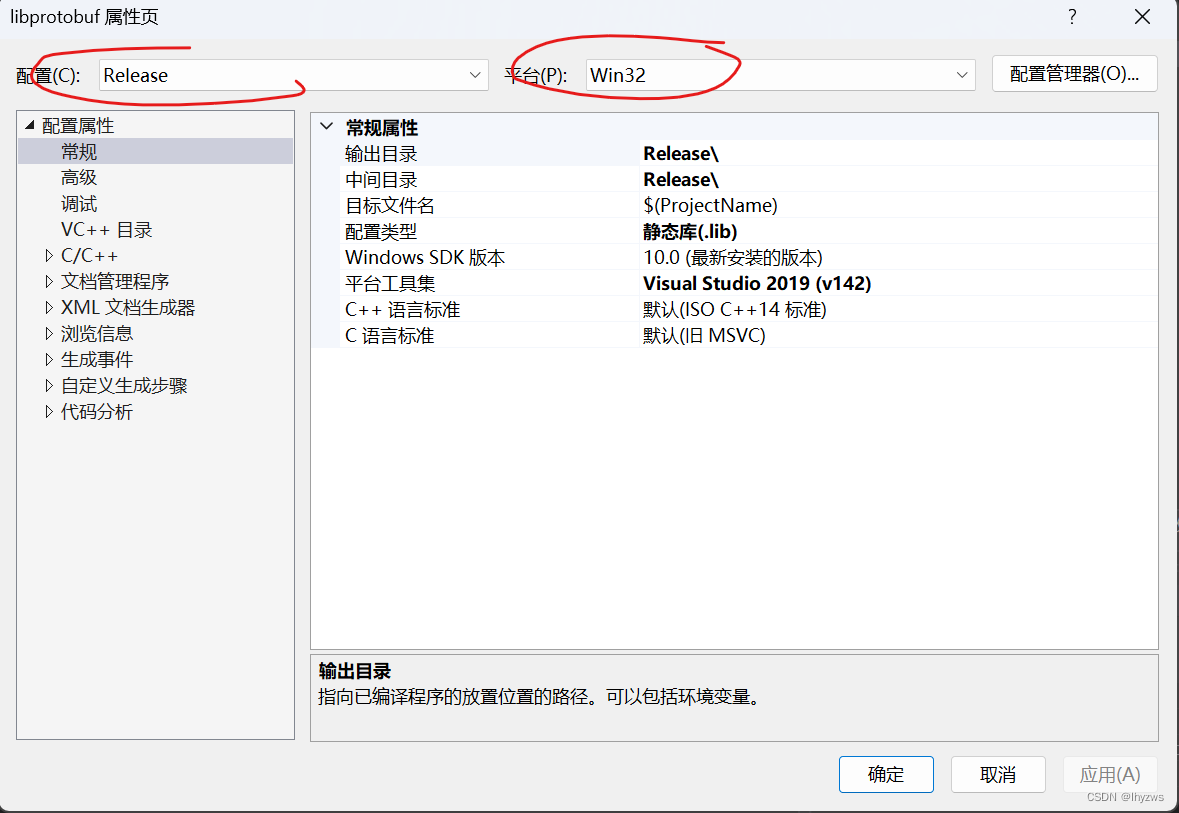

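Also, so that the code runs faster, open each project's property page and select the Release configuration and the Win32 platform:

In addition, to avoid obscure errors caused by build order, I recommend building the projects one at a time rather than building the whole solution at once. When I built everything in one go I got several hundred errors; building one project at a time went through smoothly.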

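3. The latest protobuf

It is not needed here, but since I fell into this pit earlier (I went straight for the latest protobuf, version 23), a few words on it. The latest protobuf no longer ships a vsprojects directory or .sln file; the project files have to be generated from the command line, or you can build with Ninja. All the details are in the README.md file under protobuf\cmake\.

(1) Install Git and update the submodules with it

This step is rarely needed and does not work well when you do try it, so I did not list it in the prerequisites section.

According to the official instructions, if the source was obtained with git clone, the submodules must be updated by running the update command in the protobuf directory; if you downloaded the zip or tar archive directly, this is not needed. The next step did run into incomplete submodules, but that can be solved by downloading them directly, so I won't go into it here.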

C:\protobuf> git submodule update --init --recursive

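(2) Generate the Visual Studio project files

According to the official instructions, cmake has to be run in the directory that contains CMakeLists.txt (that is, the root directory):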

PS F:\build\protobuf232> cmake -G "Visual Studio 16 2019" -A Win32 -B ./build -DCMAKE_INSTALL_PREFIX=./build/output -DCMAKE_CXX_STANDARD=17

CMake Warning:

Ignoring extra path from command line:

"./build/output"

-- Selecting Windows SDK version 10.0.19041.0 to target Windows 10.0.22621.

--

-- 23.2.0

-- Could NOT find ZLIB (missing: ZLIB_LIBRARY ZLIB_INCLUDE_DIR)

CMake Warning at cmake/abseil-cpp.cmake:28 (message):

protobuf_ABSL_PROVIDER is "module" but ABSL_ROOT_DIR is wrong

Call Stack (most recent call first):

CMakeLists.txt:339 (include)

CMake Error at third_party/utf8_range/CMakeLists.txt:31 (add_subdirectory):

The source directory

F:/build/protobuf232/third_party/abseil-cpp

does not contain a CMakeLists.txt file.

-- Configuring incomplete, errors occurred!

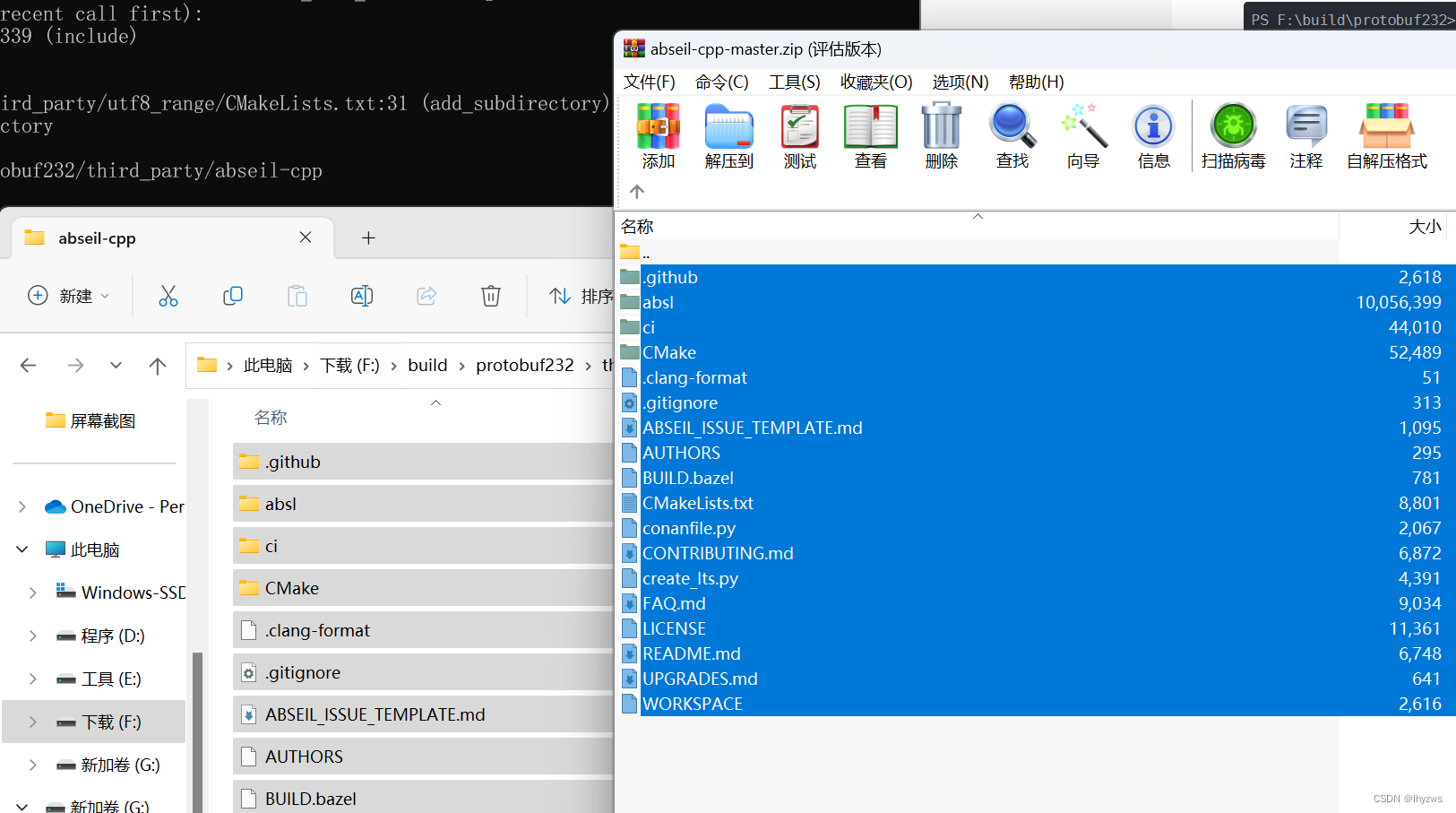

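However, the result is that the third-party abseil-cpp files are missing: the directory named in the message is empty. cmake also suggests updating this third-party module with git submodule update. Because of the "magic", this is rather unpredictable: I once managed to git clone protobuf 23.2, and once managed to download abseil-cpp through the submodule update, but when I tried to reproduce the process neither worked any more, and even fighting magic with magic did not help.

PS:

If it also complains that googletest is incomplete, don't hesitate: switch the tests off with -Dprotobuf_BUILD_TESTS=OFF: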

PS F:\build\protobuf232> cmake -G "Visual Studio 16 2019" -A Win32 -B ./build -DCMAKE_INSTALL_PREFIX=./build/output -DCMAKE_CXX_STANDARD=17 -Dprotobuf_BUILD_TESTS=OFF

**Download and fill in abseil-cpp**

Fortunately, this folder can be downloaded from GitHub - abseil/abseil-cpp: Abseil Common Libraries (C++):

Just copy it into the corresponding location:

Run it again:

PS F:\build\protobuf232> cmake -G "Visual Studio 16 2019" -A Win32 -B ./build -DCMAKE_INSTALL_PREFIX=./build/output -DCMAKE_CXX_STANDARD=17

CMake Warning:

Ignoring extra path from command line:

"./build/output"

-- Selecting Windows SDK version 10.0.19041.0 to target Windows 10.0.22621.

--

-- 23.2.0

-- Could NOT find ZLIB (missing: ZLIB_LIBRARY ZLIB_INCLUDE_DIR)

CMake Warning at third_party/abseil-cpp/CMakeLists.txt:72 (message):

A future Abseil release will default ABSL_PROPAGATE_CXX_STD to ON for CMake

3.8 and up. We recommend enabling this option to ensure your project still

builds correctly.

-- Performing Test ABSL_INTERNAL_AT_LEAST_CXX17

-- Performing Test ABSL_INTERNAL_AT_LEAST_CXX17 - Success

-- Configuring done (1.9s)

-- Generating done (1.7s)

-- Build files have been written to: F:/build/protobuf232/build

PS F:\build\protobuf232>

The project files for Visual Studio 2019 have now been generated.

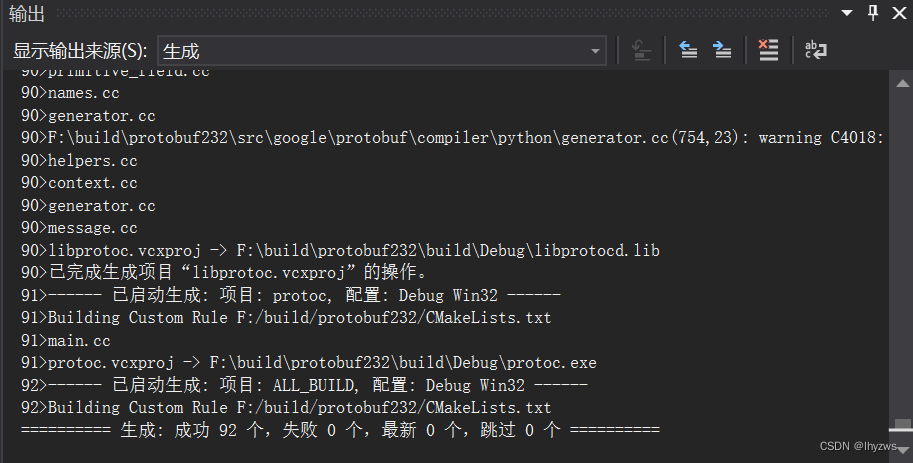

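(3) Build

Open it in VS2019, right-click ALL_BUILD and choose Build. Ignore the greyed-out entry in my screenshot; I had already clicked it.

After a nerve-racking stream of warnings, the build fortunately succeeded:

Look under the Debug directory: protoc has been generated. Run it to check the version: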

PS F:\build\protobuf232\build\Debug> ./protoc --version

libprotoc 23.2

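(4) Other methods

My first successful build did not follow the official method; I stumbled into a cmake + nmake approach instead. The difference is that cmake generates NMake makefiles rather than a .sln:

cmake -G "NMake Makefiles" -DCMAKE_BUILD_TYPE=Debug -Dprotobuf_BUILD_TESTS=OFF

Then run nmake && nmake install on the result. For details, see protobuf windows编译和安装_wx637304bacd051的技术博客_51CTO博客

(5) Go on to build the Java version of protobuf

Again using protobuf 23.2 as the example (2.5.0 works the same way, with fewer baffling problems). Following the official instructions, copy the protoc.exe just generated into the src subdirectory of the protobuf directory, and add that location to the PATH environment variable to make sure this is the version that gets executed.

Some people online say to copy it into system32 instead. This has to do with the order in which cmd on Windows searches for the executable behind a command: as far as I know, it looks in the current directory first and then walks the PATH entries in order, and the system directories are usually near the front of PATH (no guarantee; try it to be sure). Since the official instructions say to copy it into src, that should be enough; I added it to PATH just to be safe.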
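To make the search-order question concrete, here is a simplified Python model of how a bare command name gets resolved. It is an assumption-laden sketch rather than a description of cmd.exe internals: it assumes the current directory is tried first, then each PATH entry in order, with the PATHEXT extensions tried inside each directory. `resolve_command` and the `exists` callback are hypothetical names introduced for the example.

```python
import ntpath
from typing import Callable, Iterable, Optional, Sequence


def resolve_command(
    name: str,
    cwd: str,
    path_dirs: Iterable[str],
    exists: Callable[[str], bool],
    pathext: Sequence[str] = (".COM", ".EXE", ".BAT", ".CMD"),
) -> Optional[str]:
    """Simplified model of locating a bare command name on Windows.

    Assumption: the current directory is tried first, then each PATH
    entry in order; inside each directory the extensions are tried in
    the order given by pathext.
    """
    for directory in [cwd, *path_dirs]:
        for ext in pathext:
            candidate = ntpath.join(directory, name + ext.lower())
            if exists(candidate):
                return candidate
    return None
```

Under this model, a protoc.exe sitting in system32 wins only because system32 comes before the protobuf src directory in PATH, which is why putting the right directory on PATH (or running from it) also works.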

Then go to the java directory and run mvn test:

F:\build\protobuf232\java>mvn test

[INFO] Scanning for projects...

Downloading from aliyunmaven: https://maven.aliyun.com/repository/public/org/apache/felix/maven-bundle-plugin/3.0.1/maven-bundle-plugin-3.0.1.pom

………………

………………

[WARNING]

[ERROR] Failed to execute goal org.apache.maven.plugins:maven-antrun-plugin:3.0.0:run (generate-sources) on project protobuf-javalite: An Ant BuildException has occured: The following error occurred while executing this line:

[ERROR] F:\build\protobuf232\java\lite\generate-sources-build.xml:4: Execute failed: java.io.IOException: Cannot run program "F:\build\protobuf232\java\lite\..\..\protoc" (in directory "F:\build\protobuf232\java\lite"): CreateProcess error=2, 系统找不到指定的文 件。

[ERROR] around Ant part ...<ant antfile="generate-sources-build.xml" />... @ 4:49 in F:\build\protobuf232\java\lite\target\antrun\build-main.xml

[ERROR] -> [Help 1]

[ERROR]

[ERROR] To see the full stack trace of the errors, re-run Maven with the -e switch.

[ERROR] Re-run Maven using the -X switch to enable full debug logging.

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/MojoExecutionException

[ERROR]

然后出现如上错误。

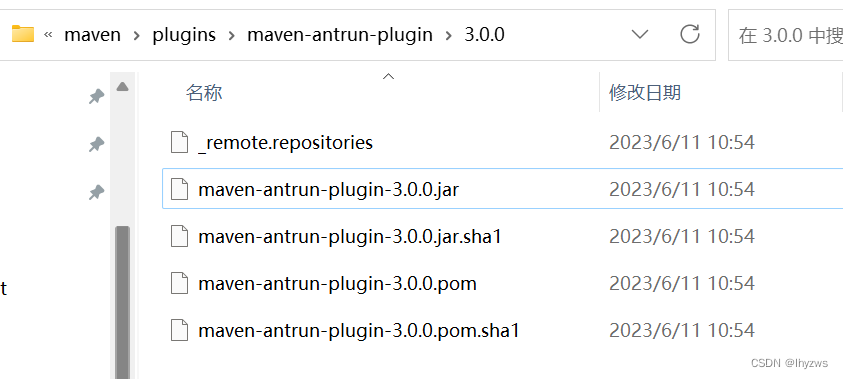

检查本地库,antrun-plugin好好的在那里,那就不是插件的问题

仔细看ERROR信息,实际的意思就是说再protobuf工作目录的根目录上,没有找到protoc.exe,这个真是……。**把src里面那个在根里再拷贝一份吧。**
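The path in the error message is easier to read once the `..` segments are collapsed. The short Python sketch below does just that with the values from the message; `resolve_relative` is a made-up helper, and `ntpath` is used so that Windows path rules apply regardless of where the snippet is run.

```python
import ntpath


def resolve_relative(base_dir: str, relative: str) -> str:
    """Join a Windows base directory with a relative path and collapse '..'."""
    return ntpath.normpath(ntpath.join(base_dir, relative))


if __name__ == "__main__":
    # Values taken from the error message above.
    print(resolve_relative(r"F:\build\protobuf232\java\lite", r"..\..\protoc"))
```

The result is F:\build\protobuf232\protoc, that is, the root of the source tree, which is where the build script expects to find protoc.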

Next, a new error shows up:

[ERROR] Failed to execute goal org.apache.maven.plugins:maven-compiler-plugin:3.6.1:testCompile (default-testCompile) on project protobuf-java: Compilation failure: Compilation failure:

[ERROR] /F:/build/protobuf232/java/core/src/test/java/com/google/protobuf/DescriptorsTest.java:[68,25] 找不到符号

[ERROR] 符号: 类 UnittestRetention

[ERROR] 位置: 程序包 protobuf_unittest

[ERROR] /F:/build/protobuf232/java/core/src/test/java/com/google/protobuf/DescriptorsTest.java:[572,27] 找不到符号

[ERROR] 符号: 变量 UnittestRetention

[ERROR] 位置: 类 com.google.protobuf.DescriptorsTest

[ERROR] /F:/build/protobuf232/java/core/src/test/java/com/google/protobuf/DescriptorsTest.java:[573,37] 找不到符号

[ERROR] 符号: 变量 UnittestRetention

[ERROR] 位置: 类 com.google.protobuf.DescriptorsTest

[ERROR] /F:/build/protobuf232/java/core/src/test/java/com/google/protobuf/DescriptorsTest.java:[574,37] 找不到符号

[ERROR] 符号: 变量 UnittestRetention

[ERROR] 位置: 类 com.google.protobuf.DescriptorsTest

[ERROR] /F:/build/protobuf232/java/core/src/test/java/com/google/protobuf/DescriptorsTest.java:[575,37] 找不到符号

[ERROR] 符号: 变量 UnittestRetention

[ERROR] 位置: 类 com.google.protobuf.DescriptorsTest

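The DescriptorsTest.java source file behind this error is under protobuf/java/core/src/test/java/com/google/protobuf/. The error is caused by import protobuf_unittest.UnittestRetention not being resolved, while the corresponding directory is F:\build\protobuf232\java\lite\target\test-classes\protobuf_unittest.

The problem is that this class cannot be found anywhere under src, and a Google search turns up nothing either. I don't know why.

**Fortunately it is only in the test code, so just skip compiling the tests.**

**Add -Dmaven.test.skip=true.**

**Run install directly, and that settles it.**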

F:\build\protobuf232\java>mvn install -Dmaven.test.skip=true

[INFO] Scanning for projects...

[INFO] ------------------------------------------------------------------------

[INFO] Reactor Build Order:

[INFO]

[INFO] Protocol Buffers [BOM] [pom]

[INFO] Protocol Buffers [Parent] [pom]

[INFO] Protocol Buffers [Lite] [bundle]

[INFO] Protocol Buffers [Core] [bundle]

[INFO] Protocol Buffers [Util] [bundle]

[INFO] Protocol Buffers [Kotlin-Core] [jar]

[INFO] Protocol Buffers [Kotlin-Lite] [jar]

[INFO]

[INFO] ------------------< com.google.protobuf:protobuf-bom >------------------

[INFO] Building Protocol Buffers [BOM] 3.23.2 [1/7]

[INFO] from bom\pom.xml

[INFO] --------------------------------[ pom ]---------------------------------

[INFO]

[INFO] --- install:3.1.0:install (default-install) @ protobuf-bom ---

[INFO] Installing F:\build\protobuf232\java\bom\pom.xml to

…………

…………

[INFO] Protocol Buffers [Kotlin-Lite] ..................... SUCCESS [ 9.266 s]

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 02:59 min

[INFO] Finished at: 2023-06-11T13:27:16+08:00

[INFO] ------------------------------------------------------------------------

[WARNING]

[WARNING] Plugin validation issues were detected in 9 plugin(s)

[WARNING]

[WARNING] * org.apache.maven.plugins:maven-jar-plugin:2.6

[WARNING] * org.apache.maven.plugins:maven-compiler-plugin:3.6.1

[WARNING] * org.apache.maven.plugins:maven-resources-plugin:3.1.0

[WARNING] * org.jetbrains.kotlin:kotlin-maven-plugin:1.6.0

[WARNING] * org.codehaus.mojo:build-helper-maven-plugin:1.10

[WARNING] * org.codehaus.mojo:animal-sniffer-maven-plugin:1.20

[WARNING] * org.apache.maven.plugins:maven-antrun-plugin:3.0.0

[WARNING] * org.apache.maven.plugins:maven-surefire-plugin:3.0.0-M5

[WARNING] * org.apache.felix:maven-bundle-plugin:3.0.1

[WARNING]

[WARNING] For more or less details, use 'maven.plugin.validation' property with one of the values (case insensitive): [BRIEF, DEFAULT, VERBOSE]

[WARNING]

F:\build\protobuf232\java>

Finally, run package:

F:\build\protobuf232\java>mvn package -Dmaven.test.skip=true

[INFO] Scanning for projects...

------------------------------------------------------------------------

………………

…………

……

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 02:55 min

[INFO] Finished at: 2023-06-11T13:32:50+08:00

[INFO] ------------------------------------------------------------------------

[WARNING]

[WARNING] Plugin validation issues were detected in 9 plugin(s)

[WARNING]

[WARNING] * org.apache.maven.plugins:maven-jar-plugin:2.6

[WARNING] * org.apache.maven.plugins:maven-compiler-plugin:3.6.1

[WARNING] * org.apache.maven.plugins:maven-resources-plugin:3.1.0

[WARNING] * org.jetbrains.kotlin:kotlin-maven-plugin:1.6.0

[WARNING] * org.codehaus.mojo:build-helper-maven-plugin:1.10

[WARNING] * org.codehaus.mojo:animal-sniffer-maven-plugin:1.20

[WARNING] * org.apache.maven.plugins:maven-antrun-plugin:3.0.0

[WARNING] * org.apache.maven.plugins:maven-surefire-plugin:3.0.0-M5

[WARNING] * org.apache.felix:maven-bundle-plugin:3.0.1

[WARNING]

[WARNING] For more or less details, use 'maven.plugin.validation' property with one of the values (case insensitive): [BRIEF, DEFAULT, VERBOSE]

[WARNING]

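I pasted the intermediate errors only to share how I worked through the problem. With different environments and different software versions you may hit all sorts of errors, but in general they come down to missing code, a missing dependency or a missing plugin: find out what is missing, then either supply it or work around it.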
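When a build log runs to thousands of lines, the first thing worth extracting is which plugin goal failed on which module. The following Python sketch is one possible way to do that, assuming the "Failed to execute goal ... on project ..." wording seen in the logs above; the function name and the returned dictionary keys are my own choices.

```python
import re
from typing import Dict, List

# Matches e.g. "Failed to execute goal group:artifact:version:goal (execution) on project name"
_FAILED_GOAL = re.compile(
    r"Failed to execute goal (?P<plugin>\S+?):(?P<version>[^:\s]+):(?P<goal>[^:\s]+)"
    r" \((?P<execution>[^)]+)\) on project (?P<project>[\w.\-]+)"
)


def failed_goals(log_text: str) -> List[Dict[str, str]]:
    """Return one entry per distinct failed plugin goal found in a Maven log."""
    results: List[Dict[str, str]] = []
    for match in _FAILED_GOAL.finditer(log_text):
        entry = match.groupdict()
        if entry not in results:  # Maven often repeats the same message
            results.append(entry)
    return results
```

For the antrun failure shown earlier, this yields the plugin org.apache.maven.plugins:maven-antrun-plugin, version 3.0.0, goal run, execution generate-sources and project protobuf-javalite, which is usually enough to know where to start looking.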

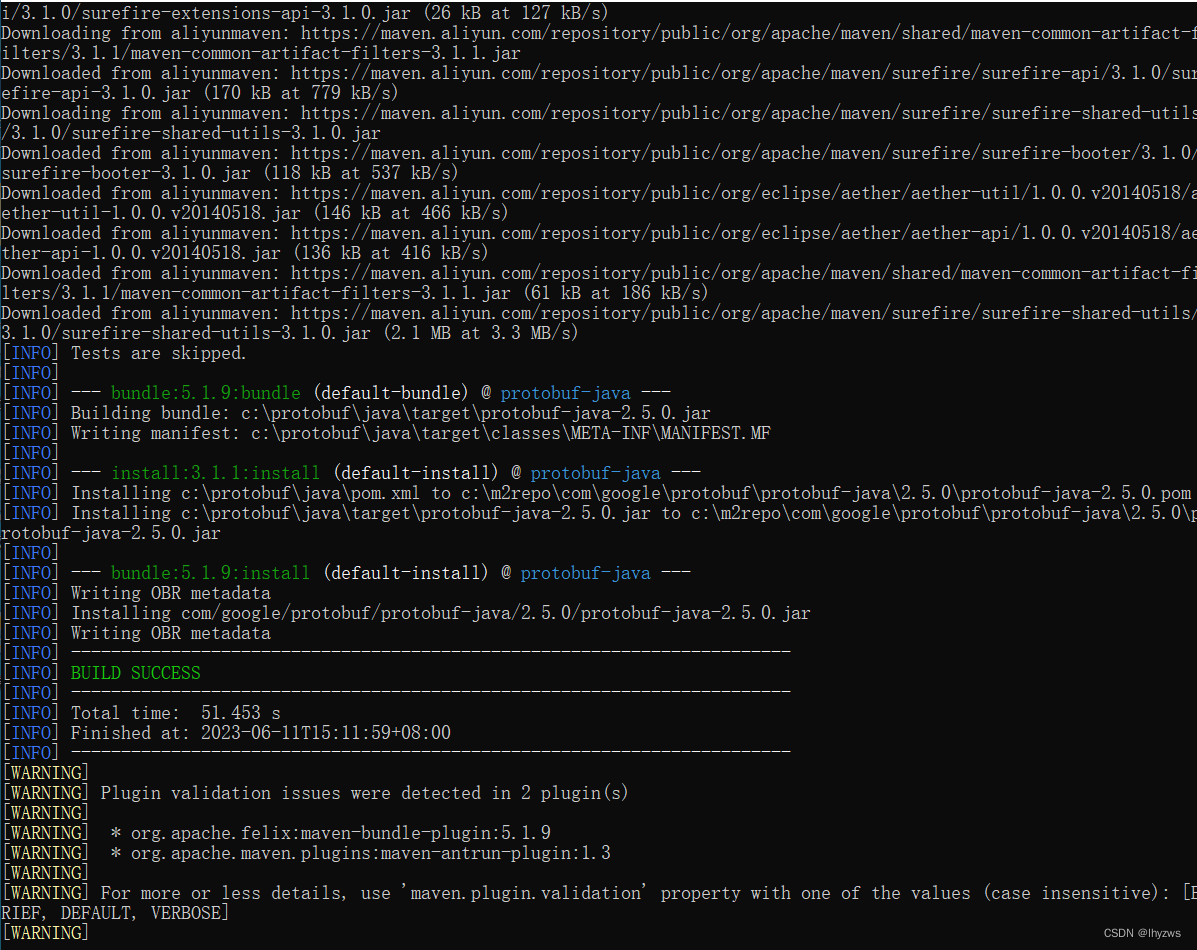

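(6) PS: Installing protobuf 2.5.0

Likewise, before compiling Hadoop we need to follow the official requirement: build protobuf 2.5.0 and install it into the local repository. The process is the same as for version 23.2 above. Here I use the directly downloaded protoc.exe 2.5.0 as the example.

As before, put protoc.exe into both the protobuf root directory and the src subdirectory (it must of course match the protobuf source version, 2.5.0), set the PATH, open a new cmd window and check: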

Microsoft Windows [版本 10.0.19044.1288]

(c) Microsoft Corporation。保留所有权利。

C:\Users\pig>protoc --version

libprotoc 2.5.0

C:\Users\pig>

Then go to the protobuf java directory and run mvn install directly:

Microsoft Windows [版本 10.0.19044.1288]

(c) Microsoft Corporation。保留所有权利。

C:\Users\pig>cd c:\protobuf

c:\protobuf>cd java

c:\protobuf\java>mvn install -Dmaven.test.skip=true

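And it passes in one go:

**(7) Other methods**

Visual Studio and Maven are not the only ways to build protobuf. As cross-platform software, protobuf supports many other build methods, each documented in detail in the README.md of the corresponding subdirectory.

For example, following https://github.com/protocolbuffers/protobuf/blob/main/src/README.md , it can be built with bazel on CentOS 7 (note that the package names in the output below carry the el8 suffix):

First install bazel:

**dnf install dnf-plugins-core**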

[root@pighost abseil-cpp]# yum install dnf-plugins-core -y

上次元数据过期检查:2:09:55 前,执行于 2023年06月03日 星期六 23时34分20秒。

软件包 dnf-plugins-core-4.0.21-11.el8.noarch 已安装。

依赖关系解决。

============================================================================================================================================

软件包 架构 版本 仓库 大小

============================================================================================================================================

升级:

dnf-plugins-core noarch 4.0.21-18.el8 baseos 75 k

python3-dnf-plugins-core noarch 4.0.21-18.el8 baseos 258 k

事务概要

============================================================================================================================================

升级 2 软件包

**dnf copr enable vbatts/bazel**

I did not actually run this one.

**yum install bazel5**

[root@pighost abseil-cpp]# yum install bazel5 -y

上次元数据过期检查:0:00:17 前,执行于 2023年06月04日 星期日 01时44分57秒。

依赖关系解决。

============================================================================================================================================

软件包 架构 版本 仓库 大小

============================================================================================================================================

安装:

bazel5 x86_64 5.4.0-0.el8 copr:copr.fedorainfracloud.org:vbatts:bazel 28 M

安装依赖关系:

copy-jdk-configs noarch 4.0-2.el8 appstream 31 k

java-11-openjdk x86_64 1:11.0.18.0.9-0.3.ea.el8 appstream 468 k

java-11-openjdk-devel x86_64 1:11.0.18.0.9-0.3.ea.el8 appstream 3.4 M

java-11-openjdk-headless x86_64 1:11.0.18.0.9-0.3.ea.el8 appstream 41 M

javapackages-filesystem noarch 5.3.0-1.module_el8.0.0+11+5b8c10bd appstream 30 k

lksctp-tools x86_64 1.0.18-3.el8 baseos 100 k

ttmkfdir x86_64 3.0.9-54.el8 appstream 62 k

tzdata-java noarch 2023c-1.el8 appstream 267 k

xorg-x11-fonts-Type1 noarch 7.5-19.el8 appstream 522 k

启用模块流:

javapackages-runtime 201801

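Then build by running the following in the protobuf root directory:

**bazel build :protoc :protobuf**

The output is placed in the bazel-bin directory.

III. Compiling Hadoop

Once protobuf 2.5.0 has been installed, we can start compiling Hadoop.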

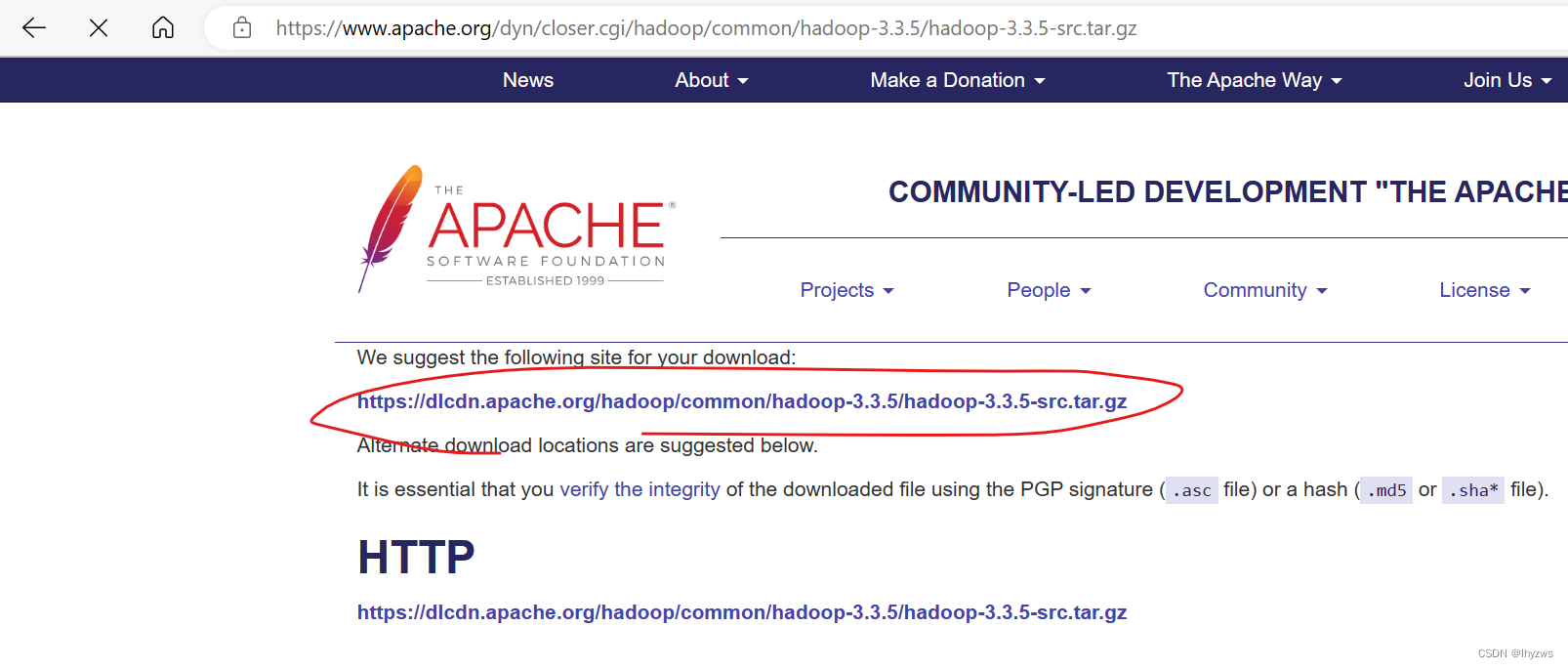

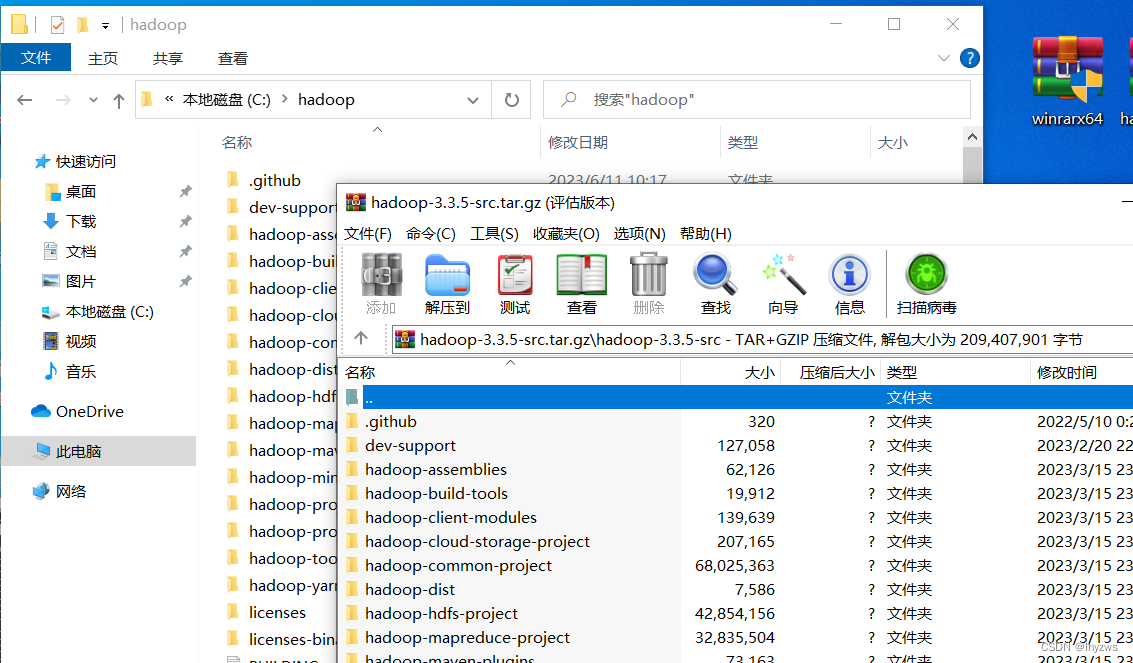

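1. Download the Hadoop source

Download the source package from the official site and unpack it into the directory you want.

I created a hadoop directory on drive C and unpacked the source into it. Note that the hadoop-yarn-project subdirectory at the end is very deep, so unpack the code as close to the drive root as possible. Otherwise, on my licensed Windows 11 Home, extraction fails because of the path length limit (in fact it seems impossible to avoid entirely, but fortunately we do not need anything from that subdirectory in the end, so I simply ignored it). On the unactivated Windows Pro that I downloaded, the problem does not occur.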
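To see ahead of time which files would hit the limit for a given target directory, the archive's relative paths can be checked against it. This Python sketch assumes the classic 260-character MAX_PATH limit is the one in play (I did not verify which limit the extraction tool actually enforces); `too_long` is a hypothetical helper.

```python
import ntpath
from typing import Iterable, List

MAX_PATH = 260  # classic Windows limit, counting the terminating NUL


def too_long(base_dir: str, relative_paths: Iterable[str], limit: int = MAX_PATH) -> List[str]:
    """Return the full paths that would not fit within the limit."""
    full_paths = (ntpath.join(base_dir, rel) for rel in relative_paths)
    # A path of length n needs n + 1 characters including the terminator.
    return [path for path in full_paths if len(path) + 1 > limit]
```

The relative paths can come from the archive listing (for example `zipfile.ZipFile(...).namelist()` or `tarfile.open(...).getnames()`); comparing the result for C:\hadoop against a deeper target shows how much the base directory matters.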

2. Compile directly

c:\hadoop>mvn package -Dmaven.test.skip=true

[INFO] Scanning for projects...

(1) Error caused by not installing VS2019

Going straight to package, after a while I got the following error:

[ERROR] Failed to execute goal org.codehaus.mojo:exec-maven-plugin:1.3.1:exec (convert-ms-winutils) on project hadoop-common: Command execution failed.: Process exited with an error: 1 (Exit value: 1) -> [Help 1]

[ERROR]

[ERROR] To see the full stack trace of the errors, re-run Maven with the -e switch.

[ERROR] Re-run Maven using the -X switch to enable full debug logging.

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/MojoExecutionException

[ERROR]

[ERROR] After correcting the problems, you can resume the build with the command

[ERROR] mvn <args> -rf :hadoop-common

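This error drives me to despair every time, because its causes are so varied. This time the cause was that I had been lazy and not installed Visual Studio in the virtual machine, so winutils could not be compiled.

So I downloaded and installed it. The whole point of the virtual machine was to avoid this kind of pain, and I did not escape it after all.

Running mvn package again from the Visual Studio command prompt got past winutils this time and produced winutils.exe. That is really all we need.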

(2) Error caused by not installing Git Bash

Moving on, though, we ran into a new error:

[ERROR] Failed to execute goal org.apache.maven.plugins:maven-antrun-plugin:1.7:run (common-test-bats-driver) on project hadoop-common: An Ant BuildException has occured: Execute failed: java.io.IOException: Cannot run program "bash" (in directory "C:\hadoop\hadoop-common-project\hadoop-common\src\test\scripts"): CreateProcess error=2, 系统找不到指定的文件。

[ERROR] around Ant part ...<exec failonerror="true" dir="src/test/scripts" executable="bash">... @ 4:69 in C:\hadoop\hadoop-common-project\hadoop-common\target\antrun\build-main.xml

[ERROR] -> [Help 1]

[ERROR]

[ERROR] To see the full stack trace of the errors, re-run Maven with the -e switch.

[ERROR] Re-run Maven using the -X switch to enable full debug logging.

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/MojoExecutionException

[ERROR]

[ERROR] After correcting the problems, you can resume the build with the command

[ERROR] mvn <args> -rf :hadoop-common

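This error occurs because Git Bash is not installed. According to the ERROR output, when Maven uses the antrun plugin to execute build-main.xml (which in turn uses bash to call the corresponding sh script), it cannot find the bash executable.

So remember to install Git Bash and set PATH correctly, so that the build can find git/bin/bash.

(3) Skip the less important parts

Moving on, we immediately hit another error: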

[ERROR] Failed to execute goal org.apache.maven.plugins:maven-antrun-plugin:1.7:run (make) on project hadoop-hdfs-native-client: An Ant BuildException has occured: exec returned: 1

[ERROR] around Ant part ...<exec failonerror="true" dir="C:\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target/native" executable="cmake">... @ 5:123 in C:\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\antrun\build-main.xml

[ERROR] -> [Help 1]

[ERROR]

[ERROR] To see the full stack trace of the errors, re-run Maven with the -e switch.

[ERROR] Re-run Maven using the -X switch to enable full debug logging.

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/MojoExecutionException

[ERROR]

[ERROR] After correcting the problems, you can resume the build with the command

[ERROR] mvn <args> -rf :hadoop-hdfs-native-client

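From the debug log, the cmake step fails to configure the project (the log below shows it cannot find OpenSSL), so no project files are generated, and msbuild then reports that the project file does not exist. (It looked to me as if this version were missing source files for this client.)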

Execute:Java13CommandLauncher: Executing 'cmake' with arguments:

'C:\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client/src/'

'-DGENERATED_JAVAH=C:\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target/native/javah'

'-DJVM_ARCH_DATA_MODEL=64'

'-DHADOOP_BUILD=1'

'-DREQUIRE_FUSE=false'

'-DREQUIRE_VALGRIND=false'

'-A'

'x64'

The ' characters around the executable and arguments are

not part of the command.

[exec] CMake Warning (dev) in CMakeLists.txt:

[exec] No project() command is present. The top-level CMakeLists.txt file must

[exec] contain a li-- Selecting Windows SDK version 10.0.19041.0 to target Windows 10.0.19044.

[exec] teral, direct call to the project() command. Add a line of

[exec] code such as

[exec]

[exec] project(ProjectName)

[exec]

[exec] near the top of the file, but after cmake_minimum_required().

[exec]

[exec] CMake is pretending there is a "project(Project)" command on the first

[exec] line.

[exec] This warning is for project developers. Use -Wno-dev to suppress it.

[exec]

[exec] CMake Warning (dev) in CMakeLists.txt:

[exec] cmake_minimum_required() should be called prior to this top-level project()

[exec] call. Please see the cmake-commands(7) manual for usage documentation of

[exec] both commands.

[exec] This warning is for project developers. Use -Wno-dev to suppress it.

[exec]

[exec] CUSTOM_OPENSSL_PREFIX =

[exec] -- Performing Test THREAD_LOCAL_SUPPORTED

[exec] Cannot find a usable OpenSSL library. OPENSSL_LIBRARY=OPENSSL_LIBRARY-NOTFOUND, OPENSSL_INCLUDE_DIR=OPENSSL_INCLUDE_DIR-NOTFOUND, CUSTOM_OPENSSL_LIB=, CUSTOM_OPENSSL_PREFIX=, CUSTOM_OPENSSL_INCLUDE=

[exec] CMake Deprecation Warning at main/native/libhdfspp/CMakeLists.txt:29 (cmake_minimum_required):

[exec] Compatibility with CMake < 2.8.12 will be removed from a future version of

[exec] CMake.

[exec]

[exec] Update the VERSION argument <min> value or use a ...<max> suffix to tell-- Performing Test THREAD_LOCAL_SUPPORTED - Success

[exec]

[exec] CMake that the project does not need compatibility with older versions.

[exec]

[exec]

[exec] -- Could NOT find Doxygen (missing: DOXYGEN_EXECUTABLE)

[exec] CMake Error at C:/CMake/share/cmake-3.26/Modules/FindPackageHandleStandardArgs.cmake:230 (message):

[exec] Could NOT find OpenSSL, tr-- Configuring incomplete, errors occurred!

[exec] y to set the path to OpenSSL root folder in the

[exec] system variable OPENSSL_ROOT_DIR (missing: OPENSSL_CRYPTO_LIBRARY

[exec] OPENSSL_INCLUDE_DIR)

[exec] Call Stack (most recent call first):

[exec] C:/CMake/share/cmake-3.26/Modules/FindPackageHandleStandardArgs.cmake:600 (_FPHSA_FAILURE_MESSAGE)

[exec] C:/CMake/share/cmake-3.26/Modules/FindOpenSSL.cmake:670 (find_package_handle_standard_args)

[exec] main/native/libhdfspp/CMakeLists.txt:44 (find_package)

[exec]

[exec]

[exec] Result: 1

[exec] Current OS is Windows 10

[exec] Executing 'msbuild' with arguments:

[exec] 'ALL_BUILD.vcxproj'

[exec] '/nologo'

[exec] '/p:Configuration=RelWithDebInfo'

[exec] '/p:LinkIncremental=false'

[exec]

[exec] The ' characters around the executable and arguments are

[exec] not part of the command.

Execute:Java13CommandLauncher: Executing 'msbuild' with arguments:

'ALL_BUILD.vcxproj'

'/nologo'

'/p:Configuration=RelWithDebInfo'

'/p:LinkIncremental=false'

The ' characters around the executable and arguments are

not part of the command.

[exec] MSBUILD : error MSB1009: 项目文件不存在。
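In an interleaved log like this one, the "Could NOT find" lines are the quickest clue to what cmake was missing. Here is a small Python sketch that collects them; it relies only on the message wording visible in the log above, and the function name is invented for the example.

```python
import re
from typing import List


def missing_cmake_packages(log_text: str) -> List[str]:
    """List package names from CMake 'Could NOT find <Package>' messages."""
    seen: List[str] = []
    for name in re.findall(r"Could NOT find (\w+)", log_text):
        if name not in seen:  # keep first-seen order, drop repeats
            seen.append(name)
    return seen
```

Not every hit is fatal: in the logs in this article, ZLIB and Doxygen are reported as not found without stopping the configuration, whereas the OpenSSL message is the one raised as a CMake Error.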

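Quite a lot is missing for this native-client, and several workarounds are needed to get past it. For details, see win10编译hadoop3.2.1_windows编译hadoop_冰上浮云的博客-CSDN博客. The simplest workaround, of course, is to resume the build from the next module: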

mvn package -Dmaven.test.skip=true -rf :hadoop-hdfs-nfs

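As long as this native-client does not affect anything we need, that is fine; I won't dig into the exact cause here.

I am not sure whether this is because we skipped the tests, though: the last time the build succeeded I used mvn test and did not run into anything like this.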
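Maven prints a resume hint ("mvn <args> -rf :module") whenever a reactor build fails, and the restart commands in this article follow that pattern. The Python sketch below pulls the module name out of such a hint and assembles a resume command; both function names are hypothetical, and the defaults simply mirror the flags used in this article.

```python
import re
from typing import List, Optional, Sequence


def resume_module(maven_output: str) -> Optional[str]:
    """Find the module named in Maven's 'mvn <args> -rf :module' hint."""
    match = re.search(r"-rf\s+:([\w.\-]+)", maven_output)
    return match.group(1) if match else None


def resume_command(
    module: str,
    goals: Sequence[str] = ("package",),
    skip_tests: bool = True,
) -> List[str]:
    """Build the argument list for resuming a reactor build at `module`."""
    command = ["mvn", *goals]
    if skip_tests:
        command.append("-Dmaven.test.skip=true")
    command += ["-rf", f":{module}"]
    return command
```

Keep in mind that the hint names the module that failed. Skipping it, as done above, means passing the next module in the reactor order (here hadoop-hdfs-nfs) instead.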

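(4) Midway through the build: a missing dependency, or a wrong path in the pom

One more thing first: if you run into duplicate jars, add clean to the mvn command. This may delete the directories we built by hand in (3); that is fine, because when the error from (3) comes up again you can rebuild them, drop clean and carry on.

In the end, mvn install and package need to be run together.

Then, at a step where success seemed within reach, I was told that ensure-jars-have-correct-contents.sh could not be found: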

[DEBUG] env: __VSCMD_PREINIT_PATH=C:\Program Files (x86)\Common Files\Oracle\Java\javapath;C:\Windows\system32;C:\Windows;C:\Windows\System32\Wbem;C:\Windows\System32\WindowsPowerShell\v1.0\;C:\Windows\System32\OpenSSH\;C:\CMake\bin;C:\maven\bin;C:\protobuf;C:\Java\bin;C:\Jre\bin;C:\ant\bin;C:\Program Files\Git\cmd;C:\Program Files\Git\bin;C:\hadoop335\bin;C:\spark340\bin;C:\Users\pig\AppData\Local\Microsoft\WindowsApps;

[DEBUG] env: __VSCMD_SCRIPT_ERR_COUNT=0

[DEBUG] Executing command line: [bash, ensure-jars-have-correct-contents.sh, c:\m2repo\org\apache\hadoop\hadoop-client-api\3.3.5\hadoop-client-api-3.3.5.jar;c:\m2repo\org\apache\hadoop\hadoop-client-runtime\3.3.5\hadoop-client-runtime-3.3.5.jar]

/usr/bin/bash: ensure-jars-have-correct-contents.sh: No such file or directory

[INFO] ------------------------------------------------------------------------

[INFO] Reactor Summary for Apache Hadoop Client Packaging Invariants 3.3.5:

[INFO]

[INFO] Apache Hadoop Client Packaging Invariants .......... FAILURE [ 2.375 s]

[INFO] Apache Hadoop Client Test Minicluster .............. SKIPPED

[INFO] Apache Hadoop Client Packaging Invariants for Test . SKIPPED

[INFO] Apache Hadoop Client Packaging Integration Tests ... SKIPPED

[INFO] Apache Hadoop Distribution ......................... SKIPPED

[INFO] Apache Hadoop Client Modules ....................... SKIPPED

[INFO] Apache Hadoop Tencent COS Support .................. SKIPPED

[INFO] Apache Hadoop Cloud Storage ........................ SKIPPED

[INFO] Apache Hadoop Cloud Storage Project ................ SKIPPED

[INFO] ------------------------------------------------------------------------

[INFO] BUILD FAILURE

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 4.406 s

[INFO] Finished at: 2023-06-11T22:10:37+08:00

[INFO] ------------------------------------------------------------------------

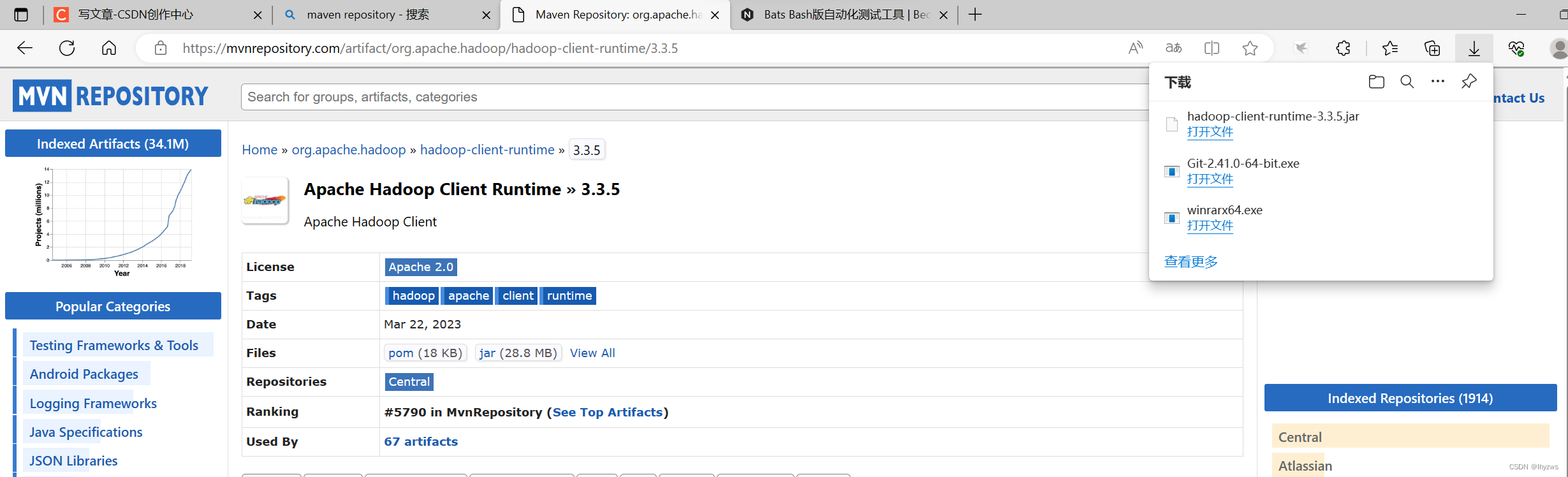

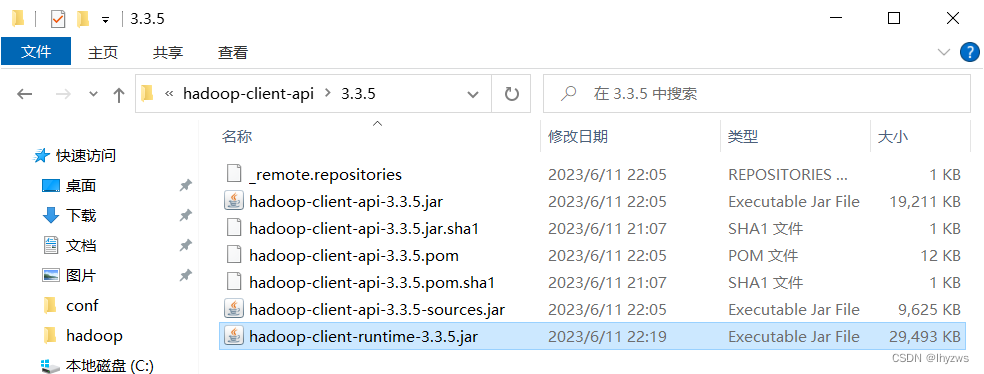

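Following the trail in the debug output, I found that the local repository was incomplete: next to C:\m2repo\org\apache\hadoop\hadoop-client-api\3.3.5, the dependency c:\m2repo\org\apache\hadoop\hadoop-client-runtime\3.3.5\hadoop-client-runtime-3.3.5.jar was missing:

Normally the jar files here can be declared in the pom and downloaded by Maven.

Since we were already midway through the build, changing the pom would have been a hassle, so I simply downloaded the jar and put it into the repository.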
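When placing a jar into the local repository by hand, the target path follows the standard Maven repository layout: the groupId split on dots, then the artifactId, then the version, then artifactId-version.jar. A short Python sketch of that mapping, with a made-up function name and `ntpath` used to get Windows separators:

```python
import ntpath


def local_repo_jar(repo_root: str, group_id: str, artifact_id: str, version: str) -> str:
    """Path of an artifact's jar under the standard Maven repository layout."""
    return ntpath.join(
        repo_root,
        *group_id.split("."),  # org.apache.hadoop -> org\apache\hadoop
        artifact_id,
        version,
        f"{artifact_id}-{version}.jar",
    )
```

For org.apache.hadoop:hadoop-client-runtime:3.3.5 under c:\m2repo this gives exactly the path named in the debug output above.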

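In addition, bash is given the relative path ensure-jars-have-correct-contents.sh here, but nothing states correctly what it is relative to (for example through an environment variable). So I found the pom file under hadoop\hadoop-client-modules\hadoop-client-check-invariants and changed the path to an absolute one: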

<executions>

<execution>

<id>check-jar-contents</id>

<phase>integration-test</phase>

<goals>

<goal>exec</goal>

</goals>

<configuration>

<executable>${shell-executable}</executable>

<workingDirectory>${project.build.testOutputDirectory}</workingDirectory>

<requiresOnline>false</requiresOnline>

<arguments>

<argument>C:\hadoop\hadoop-client-modules\hadoop-client-check-invariants\src\test\resources\ensure-jars-have-correct-contents.sh</argument>

<argument>${hadoop-client-artifacts}</argument>

</arguments>

</configuration>

</execution>

</executions>

(5) Jar conflicts during Shade packaging

[INFO] Apache Hadoop Client Test Minicluster .............. FAILURE [02:13 min]

[INFO] Apache Hadoop Client Packaging Invariants for Test . SKIPPED

[INFO] Apache Hadoop Client Packaging Integration Tests ... SKIPPED

[INFO] Apache Hadoop Distribution ......................... SKIPPED

[INFO] Apache Hadoop Client Modules ....................... SKIPPED

[INFO] Apache Hadoop Tencent COS Support .................. SKIPPED

[INFO] Apache Hadoop Cloud Storage ........................ SKIPPED

[INFO] Apache Hadoop Cloud Storage Project ................ SKIPPED

[INFO] ------------------------------------------------------------------------

[INFO] BUILD FAILURE

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 02:15 min

[INFO] Finished at: 2023-06-11T22:43:57+08:00

[INFO] ------------------------------------------------------------------------

[WARNING]

[WARNING] Plugin validation issues were detected in 9 plugin(s)

[WARNING]

[WARNING] * org.apache.maven.plugins:maven-jar-plugin:2.5

[WARNING] * org.apache.maven.plugins:maven-resources-plugin:2.6

[WARNING] * org.apache.maven.plugins:maven-site-plugin:3.9.1

[WARNING] * org.apache.maven.plugins:maven-surefire-plugin:3.0.0-M1

[WARNING] * org.apache.maven.plugins:maven-compiler-plugin:3.1

[WARNING] * org.apache.maven.plugins:maven-shade-plugin:3.2.1

[WARNING] * org.apache.maven.plugins:maven-antrun-plugin:1.7

[WARNING] * org.apache.maven.plugins:maven-enforcer-plugin:3.0.0-M1

[WARNING] * com.google.code.maven-replacer-plugin:replacer:1.5.3

[WARNING]

[WARNING] For more or less details, use 'maven.plugin.validation' property with one of the values (case insensitive): [BRIEF, DEFAULT, VERBOSE]

[WARNING]

[ERROR] Failed to execute goal org.apache.maven.plugins:maven-shade-plugin:3.2.1:shade (default) on project hadoop-client-minicluster: Error creating shaded jar: duplicate entry: META-INF/services/org.apache.hadoop.shaded.javax.websocket.ContainerProvider -> [Help 1]

org.apache.maven.lifecycle.LifecycleExecutionException: Failed to execute goal org.apache.maven.plugins:maven-shade-plugin:3.2.1:shade (default) on project hadoop-client-minicluster: Error creating shaded jar: duplicate entry: META-INF/services/org.apache.hadoop.shaded.javax.websocket.ContainerProvider

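This usually means that, during packaging, the shade plugin found the same entry in more than one place (here a META-INF/services file rather than a class).

Just clean in place and resume from the failed module: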

c:\hadoop>mvn clean install package -Dmaven.test.skip=true -rf :hadoop-client-minicluster
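If you are curious where the duplicate comes from before you rerun, a small script can list the jars that carry the entry. The following is only a sketch under assumptions: the function name `jars_containing` is made up for illustration, and because the name in the error message probably has a relocation prefix (`org.apache.hadoop.shaded.`) added by the shade plugin, it matches on the end of the entry name rather than the full name.

```python
import zipfile
from pathlib import Path


def jars_containing(root, entry_suffix):
    """Return the jars under root that hold an entry ending with entry_suffix.

    Hypothetical helper, not part of Maven or Hadoop.
    """
    hits = []
    for jar in sorted(Path(root).rglob("*.jar")):
        try:
            with zipfile.ZipFile(jar) as zf:
                # A jar is a zip archive, so its entries can be listed directly.
                if any(name.endswith(entry_suffix) for name in zf.namelist()):
                    hits.append(jar)
        except zipfile.BadZipFile:
            # Skip half-written or corrupt jars instead of aborting the scan.
            continue
    return hits
```

For example, `jars_containing(r"C:\hadoop\hadoop-client-modules", "javax.websocket.ContainerProvider")` would scan that module tree; the directory is an assumption, so point it at wherever your build output or local repository actually lives.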

(6) Build complete

[INFO] Reactor Summary for Apache Hadoop HDFS-NFS 3.3.5:

[INFO]

[INFO] Apache Hadoop HDFS-NFS ............................. SUCCESS [ 11.250 s]

[INFO] Apache Hadoop HDFS-RBF ............................. SUCCESS [ 10.765 s]

[INFO] Apache Hadoop HDFS Project ......................... SUCCESS [ 0.079 s]

[INFO] Apache Hadoop YARN ................................. SUCCESS [ 0.046 s]

[INFO] Apache Hadoop YARN API ............................. SUCCESS [ 19.828 s]

[INFO] Apache Hadoop YARN Common .......................... SUCCESS [ 23.548 s]

[INFO] Apache Hadoop YARN Server .......................... SUCCESS [ 0.046 s]

[INFO] Apache Hadoop YARN Server Common ................... SUCCESS [ 11.844 s]

[INFO] Apache Hadoop YARN NodeManager ..................... SUCCESS [ 19.625 s]

[INFO] Apache Hadoop YARN Web Proxy ....................... SUCCESS [ 1.156 s]

[INFO] Apache Hadoop YARN ApplicationHistoryService ....... SUCCESS [ 3.375 s]

[INFO] Apache Hadoop YARN Timeline Service ................ SUCCESS [ 2.282 s]

[INFO] Apache Hadoop YARN ResourceManager ................. SUCCESS [ 24.031 s]

[INFO] Apache Hadoop YARN Server Tests .................... SUCCESS [ 1.110 s]

[INFO] Apache Hadoop YARN Client .......................... SUCCESS [ 3.109 s]

[INFO] Apache Hadoop YARN SharedCacheManager .............. SUCCESS [ 0.672 s]

[INFO] Apache Hadoop YARN Timeline Plugin Storage ......... SUCCESS [ 0.797 s]

[INFO] Apache Hadoop YARN TimelineService HBase Backend ... SUCCESS [ 0.062 s]

[INFO] Apache Hadoop YARN TimelineService HBase Common .... SUCCESS [ 6.782 s]

[INFO] Apache Hadoop YARN TimelineService HBase Client .... SUCCESS [ 8.499 s]

[INFO] Apache Hadoop YARN TimelineService HBase Servers ... SUCCESS [ 0.047 s]

[INFO] Apache Hadoop YARN TimelineService HBase Server 1.2 SUCCESS [ 5.688 s]

[INFO] Apache Hadoop YARN TimelineService HBase tests ..... SUCCESS [ 7.468 s]

[INFO] Apache Hadoop YARN Router .......................... SUCCESS [ 1.219 s]

[INFO] Apache Hadoop YARN TimelineService DocumentStore ... SUCCESS [ 8.031 s]

[INFO] Apache Hadoop YARN Applications .................... SUCCESS [ 0.032 s]

[INFO] Apache Hadoop YARN DistributedShell ................ SUCCESS [ 0.875 s]

[INFO] Apache Hadoop YARN Unmanaged Am Launcher ........... SUCCESS [ 0.250 s]

[INFO] Apache Hadoop MapReduce Client ..................... SUCCESS [ 0.062 s]

[INFO] Apache Hadoop MapReduce Core ....................... SUCCESS [ 13.141 s]

[INFO] Apache Hadoop MapReduce Common ..................... SUCCESS [ 4.375 s]

[INFO] Apache Hadoop MapReduce Shuffle .................... SUCCESS [ 0.703 s]

[INFO] Apache Hadoop MapReduce App ........................ SUCCESS [ 4.562 s]

[INFO] Apache Hadoop MapReduce HistoryServer .............. SUCCESS [ 2.235 s]

[INFO] Apache Hadoop MapReduce JobClient .................. SUCCESS [ 2.531 s]

[INFO] Apache Hadoop Mini-Cluster ......................... SUCCESS [ 0.516 s]

[INFO] Apache Hadoop YARN Services ........................ SUCCESS [ 0.031 s]

[INFO] Apache Hadoop YARN Services Core ................... SUCCESS [ 5.344 s]

[INFO] Apache Hadoop YARN Services API .................... SUCCESS [ 0.968 s]

[INFO] Apache Hadoop YARN Application Catalog ............. SUCCESS [ 0.032 s]

[INFO] Apache Hadoop YARN Application Catalog Webapp ...... SUCCESS [01:52 min]

[INFO] Apache Hadoop YARN Application Catalog Docker Image SUCCESS [ 0.046 s]

[INFO] Apache Hadoop YARN Application MaWo ................ SUCCESS [ 0.063 s]

[INFO] Apache Hadoop YARN Application MaWo Core ........... SUCCESS [ 1.000 s]

[INFO] Apache Hadoop YARN Site ............................ SUCCESS [ 0.047 s]

[INFO] Apache Hadoop YARN Registry ........................ SUCCESS [ 0.078 s]

[INFO] Apache Hadoop YARN UI .............................. SUCCESS [ 0.031 s]

[INFO] Apache Hadoop YARN CSI ............................. SUCCESS [ 10.375 s]

[INFO] Apache Hadoop YARN Project ......................... SUCCESS [ 0.094 s]

[INFO] Apache Hadoop MapReduce HistoryServer Plugins ...... SUCCESS [ 0.203 s]

[INFO] Apache Hadoop MapReduce NativeTask ................. SUCCESS [ 1.266 s]

[INFO] Apache Hadoop MapReduce Uploader ................... SUCCESS [ 0.281 s]

[INFO] Apache Hadoop MapReduce Examples ................... SUCCESS [ 1.859 s]

[INFO] Apache Hadoop MapReduce ............................ SUCCESS [ 0.094 s]

[INFO] Apache Hadoop MapReduce Streaming .................. SUCCESS [ 2.266 s]

[INFO] Apache Hadoop Distributed Copy ..................... SUCCESS [ 2.031 s]

[INFO] Apache Hadoop Client Aggregator .................... SUCCESS [ 0.062 s]

[INFO] Apache Hadoop Dynamometer Workload Simulator ....... SUCCESS [ 0.657 s]

[INFO] Apache Hadoop Dynamometer Cluster Simulator ........ SUCCESS [ 1.234 s]

[INFO] Apache Hadoop Dynamometer Block Listing Generator .. SUCCESS [ 0.375 s]

[INFO] Apache Hadoop Dynamometer Dist ..................... SUCCESS [ 0.141 s]

[INFO] Apache Hadoop Dynamometer .......................... SUCCESS [ 0.031 s]

[INFO] Apache Hadoop Archives ............................. SUCCESS [ 0.328 s]

[INFO] Apache Hadoop Archive Logs ......................... SUCCESS [ 0.469 s]

[INFO] Apache Hadoop Rumen ................................ SUCCESS [ 2.391 s]

[INFO] Apache Hadoop Gridmix .............................. SUCCESS [ 1.687 s]

[INFO] Apache Hadoop Data Join ............................ SUCCESS [ 0.437 s]

[INFO] Apache Hadoop Extras ............................... SUCCESS [ 0.422 s]

[INFO] Apache Hadoop Pipes ................................ SUCCESS [ 0.032 s]

[INFO] Apache Hadoop Amazon Web Services support .......... SUCCESS [ 35.218 s]

[INFO] Apache Hadoop Kafka Library support ................ SUCCESS [ 1.938 s]

[INFO] Apache Hadoop Azure support ........................ SUCCESS [ 7.593 s]

[INFO] Apache Hadoop Aliyun OSS support ................... SUCCESS [ 2.688 s]

[INFO] Apache Hadoop Scheduler Load Simulator ............. SUCCESS [ 1.813 s]

[INFO] Apache Hadoop Resource Estimator Service ........... SUCCESS [ 1.640 s]

[INFO] Apache Hadoop Azure Data Lake support .............. SUCCESS [ 1.641 s]

[INFO] Apache Hadoop Image Generation Tool ................ SUCCESS [ 0.656 s]

[INFO] Apache Hadoop Tools Dist ........................... SUCCESS [ 0.141 s]

[INFO] Apache Hadoop OpenStack support .................... SUCCESS [ 0.062 s]

[INFO] Apache Hadoop Common Benchmark ..................... SUCCESS [ 10.490 s]

[INFO] Apache Hadoop Tools ................................ SUCCESS [ 0.031 s]

[INFO] Apache Hadoop Client API ........................... SUCCESS [ 59.321 s]

[INFO] Apache Hadoop Client Runtime ....................... SUCCESS [ 50.175 s]

[INFO] Apache Hadoop Client Packaging Invariants .......... SUCCESS [ 0.798 s]

[INFO] Apache Hadoop Client Test Minicluster .............. SUCCESS [01:12 min]

[INFO] Apache Hadoop Client Packaging Invariants for Test . SUCCESS [ 0.110 s]

[INFO] Apache Hadoop Client Packaging Integration Tests ... SUCCESS [ 0.515 s]

[INFO] Apache Hadoop Distribution ......................... SUCCESS [ 0.063 s]

[INFO] Apache Hadoop Client Modules ....................... SUCCESS [ 0.031 s]

[INFO] Apache Hadoop Tencent COS Support .................. SUCCESS [ 2.281 s]

[INFO] Apache Hadoop Cloud Storage ........................ SUCCESS [ 1.313 s]

[INFO] Apache Hadoop Cloud Storage Project ................ SUCCESS [ 0.031 s]

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 10:00 min

[INFO] Finished at: 2023-06-11T20:21:34+08:00

[INFO] ------------------------------------------------------------------------

[WARNING]

[WARNING] Plugin validation issues were detected in 22 plugin(s)

[WARNING]

[WARNING] * org.apache.maven.plugins:maven-assembly-plugin:2.4

[WARNING] * org.apache.maven.plugins:maven-resources-plugin:2.6

[WARNING] * org.codehaus.mojo:findbugs-maven-plugin:3.0.5

[WARNING] * com.github.eirslett:frontend-maven-plugin:1.11.2

[WARNING] * org.apache.maven.plugins:maven-compiler-plugin:3.1

[WARNING] * org.apache.maven.plugins:maven-war-plugin:3.1.0

[WARNING] * org.apache.maven.plugins:maven-shade-plugin:3.2.1

[WARNING] * com.github.klieber:phantomjs-maven-plugin:0.7

[WARNING] * com.google.code.maven-replacer-plugin:replacer:1.5.3

[WARNING] * org.apache.maven.plugins:maven-enforcer-plugin:3.0.0-M1

[WARNING] * org.apache.maven.plugins:maven-dependency-plugin:3.0.2

[WARNING] * org.apache.maven.plugins:maven-source-plugin:2.3

[WARNING] * org.codehaus.mojo:build-helper-maven-plugin:1.9

[WARNING] * org.apache.maven.plugins:maven-jar-plugin:2.5

[WARNING] * org.apache.avro:avro-maven-plugin:1.7.7

[WARNING] * org.codehaus.mojo:license-maven-plugin:1.10

[WARNING] * org.apache.maven.plugins:maven-site-plugin:3.9.1

[WARNING] * org.apache.maven.plugins:maven-surefire-plugin:3.0.0-M1

[WARNING] * org.xolstice.maven.plugins:protobuf-maven-plugin:0.5.1

[WARNING] * com.github.searls:jasmine-maven-plugin:2.1

[WARNING] * org.apache.maven.plugins:maven-antrun-plugin:1.7

[WARNING] * org.apache.hadoop:hadoop-maven-plugins:3.3.5

[WARNING]

[WARNING] For more or less details, use 'maven.plugin.validation' property with one of the values (case insensitive): [BRIEF, DEFAULT, VERBOSE]

[WARNING]

A successful build looks like the output above.

(7) Not done yet

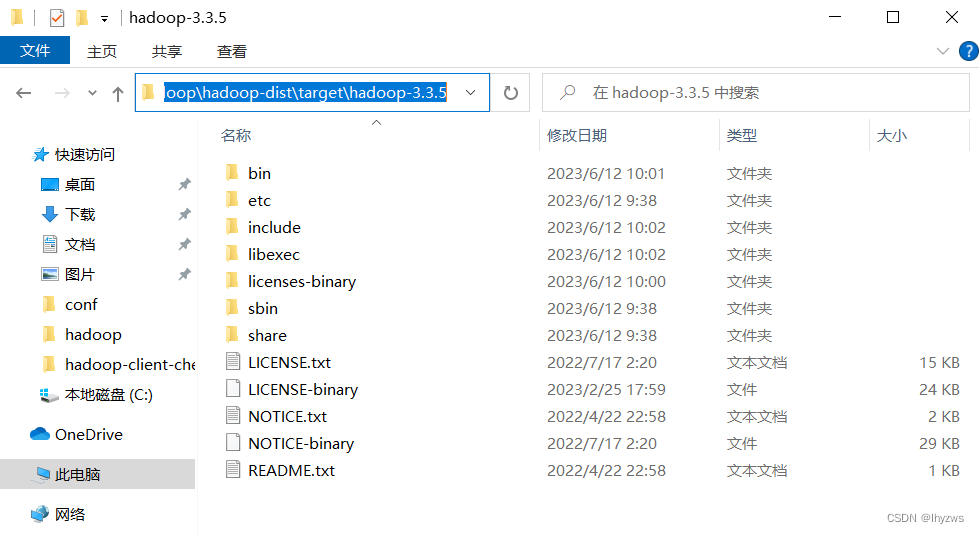

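Although the build succeeded, all we have so far is a big pile of jars. If the goal is to modify the Hadoop code and swap out the original jars, we can stop here. If the goal is our own Hadoop installation package, one more build pass is needed to produce the distribution.

Use the distribution build command: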

mvn package -Pdist -DskipTests -Dtar -Dmaven.javadoc.skip=true

or

mvn package -Pdist,native-win -DskipTests -Dtar -Dmaven.javadoc.skip=true

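Judging from the rather terse Maven Embedder - Maven CLI Options Reference (apache.org), I believe -Pdist and -Dtar are what do the work: -P activates a build profile and -D sets a property, so together they produce the distribution directory and a tar archive (presumably with different results on different build platforms). I did not dig any deeper; it works, and that is enough.

Only after this pass do we get a deployable Hadoop, under C:\hadoop\hadoop-dist\target\hadoop-3.3.5:

After finishing, I came across a fairly thorough article that is a good reference: Compile and Build Hadoop 3.2.1 on Windows 10 Guide (kontext.tech)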

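IV. Hadoop + Spark

Of course, if you don't fully trust the Hadoop you compiled yourself, you can simply download a prebuilt one from the official site. That is what we plan to do for Spark anyway.

Extract the binary archives downloaded from the official site onto the host:

(1) Set environment variables

Hadoop and Spark need three environment variables, JAVA_HOME, HADOOP_HOME and SPARK_HOME. PATH must also be updated so that commands such as hdfs and spark-shell can be called.

Rename the extracted directories before setting the variables, so that the paths contain no spaces, hyphens or other special characters.

In PATH, add the bin directories of Hadoop and Spark, since the commands we call live there. sbin mostly holds cluster management tools, so it can be left out.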

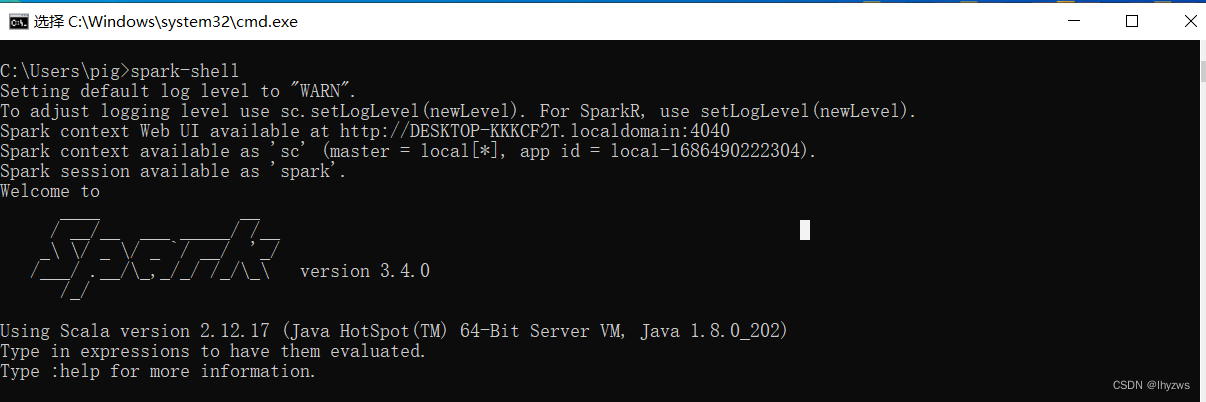
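To double-check these settings from a fresh cmd window, something like the following Python sketch may help. It is not an official tool: `check_env` is a hypothetical helper, and it only encodes the rules described above (three variables set, no spaces or hyphens in the Hadoop and Spark paths, both bin directories on PATH). It uses `ntpath` so that Windows path rules apply regardless of where the script runs.

```python
import ntpath


def _norm(path):
    # Compare Windows paths case-insensitively and without trailing slashes.
    return ntpath.normcase(ntpath.normpath(path))


def check_env(env):
    """Return a list of problems found in an environment mapping."""
    problems = []
    path_entries = {_norm(p) for p in env.get("PATH", "").split(";") if p.strip()}

    for name in ("JAVA_HOME", "HADOOP_HOME", "SPARK_HOME"):
        if not env.get(name):
            problems.append(f"{name} is not set")

    for name in ("HADOOP_HOME", "SPARK_HOME"):
        home = env.get(name)
        if not home:
            continue
        if " " in home or "-" in home:
            problems.append(f"{name} contains a space or hyphen: {home}")
        if _norm(ntpath.join(home, "bin")) not in path_entries:
            problems.append(f"{name}\\bin is not on PATH")
    return problems
```

Calling `check_env(os.environ)` (after `import os`) returns an empty list when everything matches the setup described here.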

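(2) Try it out

With that in place, run spark-shell directly. Two things stand out: at startup it complains that winutils.exe is missing, and at the end it cannot exit "cleanly" either.

If you only do interactive analysis and are not a perfectionist, this does no harm.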

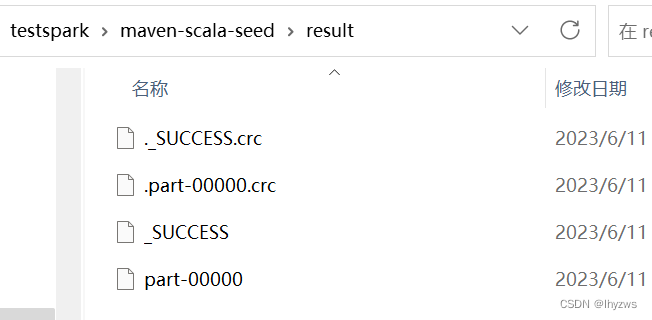

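When writing programs, though, it matters. After the following statement runs, you will find only an empty result directory, with no output in it. The cause is that the interface winutils is supposed to provide is missing.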

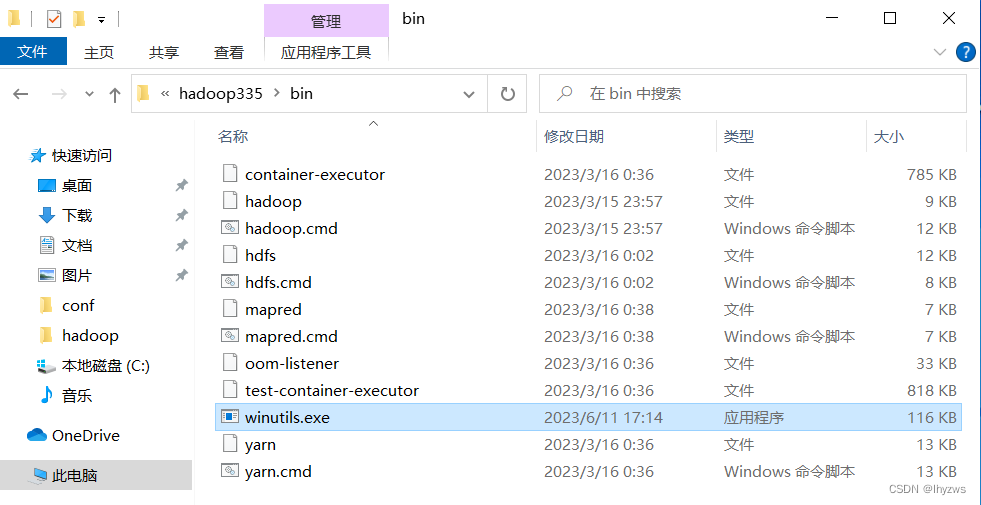
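A quick way to tell an empty result directory from a real one is to look for the files Spark normally leaves behind when it saves text output: `part-*` data files plus a `_SUCCESS` marker. The sketch below assumes the statement in the screenshot saves into a directory named result; `summarize_output` is a hypothetical helper name.

```python
from pathlib import Path


def summarize_output(result_dir):
    """Describe what a Spark output directory contains."""
    d = Path(result_dir)
    if not d.is_dir():
        return {"exists": False, "success_marker": False, "part_files": []}
    # Data files are named part-00000, part-00001, and so on.
    parts = sorted(p.name for p in d.glob("part-*") if p.is_file())
    return {
        "exists": True,
        "success_marker": (d / "_SUCCESS").is_file(),
        "part_files": parts,
    }
```

In the failing case described above, `summarize_output("result")` would report an existing directory with no part files.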

(3) Copy winutils

The fix is simple: copy the winutils we just built into Hadoop's bin directory. The generated file is under \hadoop\hadoop-common-project\hadoop-common\target\bin:
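Copying by hand in Explorer is fine. If you would rather script it, for instance because you rebuild often, a minimal Python sketch could look like this. The helper name `copy_winutils` is made up, and it copies only winutils.exe, as described above.

```python
import shutil
from pathlib import Path


def copy_winutils(build_bin, hadoop_home):
    """Copy winutils.exe from the build output into <hadoop_home>/bin."""
    src = Path(build_bin) / "winutils.exe"
    if not src.is_file():
        raise FileNotFoundError(f"winutils.exe not found in {build_bin}")
    dest_dir = Path(hadoop_home) / "bin"
    dest_dir.mkdir(parents=True, exist_ok=True)
    # copy2 keeps the file timestamps, which helps to tell builds apart later.
    return Path(shutil.copy2(src, dest_dir / src.name))
```

With the paths used in this post, the call would be `copy_winutils(r"C:\hadoop\hadoop-common-project\hadoop-common\target\bin", os.environ["HADOOP_HOME"])` after `import os`.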

Then open a new cmd window and run spark-shell:

The winutils problem is gone.

The computed result is generated as expected, too.

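V. Setting up Hadoop + Spark + VSCode

I have been writing all day and have run out of steam. Setting up VSCode involves no surprises; it works the same way as in the previous posts, so I won't repeat it here.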

Copyright belongs to the original author, lhyzws.