pom依赖添加hive-jdbc

根据实际情况添加依赖,主要看服务器hive版本

<dependency>

<groupId>org.apache.hive</groupId>

<artifactId>hive-jdbc</artifactId>

<version>2.1.1-cdh6.3.2</version>

<exclusions>

<exclusion>

<groupId>org.slf4j</groupId>

<artifactId>*</artifactId>

</exclusion>

<exclusion>

<groupId>org.apache.logging.log4j</groupId>

<artifactId>log4j-slf4j-impl</artifactId>

</exclusion>

<exclusion>

<artifactId>log4j</artifactId>

<groupId>log4j</groupId>

</exclusion>

<exclusion>

<artifactId>slf4j-log4j12</artifactId>

<groupId>org.slf4j</groupId>

</exclusion>

</exclusions>

</dependency>

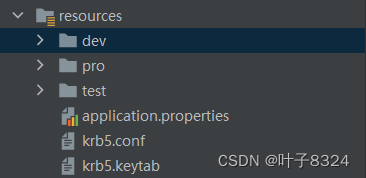

认证文件

配置文件krb5.conf,认证文件krb5.keytab,一般由服务器生成后获取

放到resources目录下

认证方法KerberosAuth.java

指定krb5配置文件:krb5.conf,根据实际情况替换

认证文件:krb5.keytab,根据实际情况替换

认证用户:hive,根据实际情况修改

这里是通过将配置文件和认证文件拷贝到临时目录进行认证,可以根据需要指定固定目录认证

public class KerberosAuth {

private static Logger log = LoggerFactory.getLogger(KerberosConnect.class);

// kerberos配置文件,从服务上获取

private static String krbConfig="krb5.conf";

// kerberos认证文件

private static String krbKeytab="krb5.keytab";

// kerberos认证用户

private static String krbUser="hive";

private static String tempDir;

public static void init(){

initkerberos();

}

public static void initkerberos() {

log.info("Kerberos 登陆验证");

try {

// java临时目录,window为C:\Users\登录用户\AppData\Local\Temp\,linux为/tmp,需要根据情况添加斜杠

String javaTempDir = System.getProperty("java.io.tmpdir");

// 将之前的临时认证文件删除

// deleteTempDir();

tempDir = (javaTempDir.lastIndexOf(File.separator)==javaTempDir.length()-1?javaTempDir:javaTempDir+File.separator)+"tomcat_"+System.currentTimeMillis();

String configPath = krbConfig.contains(File.separator) ? krbConfig : getTempPath(tempDir,krbConfig);

String keytabPath = krbKeytab.contains(File.separator) ? krbKeytab : getTempPath(tempDir,krbKeytab);

log.debug(configPath);

log.debug(keytabPath);

System.setProperty("java.security.krb5.conf", configPath);//设置krb配置文件路径,注意一定要放在Configuration前面,不然不生效

Configuration conf = new Configuration();

conf.set("hadoop.security.authentication", "Kerberos");//设置认证模式Kerberos

UserGroupInformation.setConfiguration(conf);

UserGroupInformation.loginUserFromKeytab(krbUser, keytabPath);//设置认证用户和krb认证文件路径

log.info("Kerberos 验证成功");

} catch (Exception e) {

log.error("Kerberos 验证失败", e);

}

}

/**

* 根据文件名称获取文件路径临时路径(解决jar包不支持获取resource下文件问题)

* @param tempPath 临时目录

* @param fileName 文件名称

* @return 文件临时路径

*/

public static String getTempPath(String tempPath, String fileName){

// ClassPathResource krbConfigRes = new ClassPathResource(fileName);

// String path = this.getClass().getClassLoader().getResource(fileName);

InputStream in = KerberosConnect.class.getResourceAsStream("/" + fileName);

String krbConfigFileTempPath = tempPath+File.separator+fileName;

File krbConfigFileTemp = new File(krbConfigFileTempPath);

File tempPathFile = new File(tempPath);

if (!tempPathFile.exists()) {

tempPathFile.mkdir();

}

try {

MyFileUtils.copyInputStreamToFile(in,krbConfigFileTemp);

} catch (Exception e) {

log.error("getTempPath",e);

}

return krbConfigFileTemp.getPath();

}

}

HiveUtils

kerberos认证需要在获取Connection 之前

而且jdb连接格式如下

jdbc:hive2://10...**:10000/test_db;principal=hive/hostname@HADOOP.COM

说明

principal:

hive/hostname:这里指定认证的hive的hostname @HADOOP.COM:realms和krb5.conf文件里一致即可

import com.gientech.schedule.config.KerberosConnect;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

import java.sql.*;

import java.util.*;

public class HiveUtils {

private static Logger logger = LoggerFactory.getLogger(HiveUtils.class.getName());

private static String driverName = "org.apache.hive.jdbc.HiveDriver";

private static String url = "jdbc:hive2://10.10.10.10:10000/test_db;principal=hive/[email protected]";//端口默认10000

/**

* 获取Connection

* @return conn

* @throws SQLException

* @throws ClassNotFoundException

*/

public static Connection getConnection() throws SQLException {

Connection conn = null;

try {

KerberosAuth.init();

conn = DriverManager.getConnection(url);

} catch (SQLException e) {

logger.info("获取数据库连接失败!");

throw e;

}

return conn;

}

// 创建数据库

public static void createDatabase(String databaseName) throws Exception {

String sql = "create database "+databaseName;

logger.info("Running: " + sql);

Connection conn = getConnection();

Statement stmt = conn.createStatement();

stmt.execute(sql);

closeConnection(conn);

closeStatement(stmt);

}

// 查询所有数据库

public static void showDatabases() throws Exception {

String sql = "show databases";

logger.info("Running: " + sql);

Connection conn = getConnection();

Statement stmt = conn.createStatement();

ResultSet rs = stmt.executeQuery(sql);

while (rs.next()) {

logger.info(rs.getString(1));

}

closeConnection(rs,stmt,conn);

}

/**

* 创建表(分割符为“,”)

* 如create table tableName(name string,sex string) row format delimited fields terminated by ','

* @param sql

* @throws Exception

*/

public static void createTable(String sql) throws Exception {

logger.info("Running: " + sql);

Connection conn = getConnection();

Statement stmt = conn.createStatement();

stmt.execute(sql);

closeConnection(conn);

closeStatement(stmt);

}

// 查询所有表

public static void showTables() throws Exception {

String sql = "show tables";

logger.info("Running: " + sql);

getConnection();

Connection conn = getConnection();

Statement stmt = conn.createStatement();

ResultSet rs = stmt.executeQuery(sql);

while (rs.next()) {

logger.info(rs.getString(1));

}

closeConnection(rs,stmt,conn);

}

// 查看表结构

public static void descTable(String tableName) throws Exception {

String sql = "desc formatted "+tableName;

logger.info("Running: " + sql);

Connection conn = getConnection();

Statement stmt = conn.createStatement();

ResultSet rs = stmt.executeQuery(sql);

while (rs.next()) {

logger.info(rs.getString(1) + "\t" + rs.getString(2));

}

closeConnection(rs,stmt,conn);

}

// 加载数据(请确保文件权限)

public static void loadData(String filePath,String tableName) throws Exception {

String sql = "load data inpath '" + filePath + "' into table tableName";

logger.info("Running: " + sql);

Connection conn = getConnection();

Statement stmt = conn.createStatement();

stmt.execute(sql);

closeConnection(conn);

closeStatement(stmt);

}

// 查询数据

public static void selectData(String sql) throws Exception {

logger.info("Running: " + sql);

Connection conn = getConnection();

Statement stmt = conn.createStatement();

ResultSet rs = stmt.executeQuery(sql);

rs = stmt.executeQuery(sql);

while (rs.next()) {

logger.info(rs.getString(1));

}

closeConnection(rs,stmt,conn);

}

// 删除数据库

public static void dropDatabase(String databaseName) throws Exception {

String sql = "drop database if exists "+databaseName;

logger.info("Running: " + sql);

Connection conn = getConnection();

Statement stmt = conn.createStatement();

stmt.execute(sql);

closeConnection(conn);

closeStatement(stmt);

}

// 删除数据库表

public static void deopTable(String tableName) throws Exception {

String sql = "drop table if exists "+tableName;

logger.info("Running: " + sql);

Connection conn = getConnection();

Statement stmt = conn.createStatement();

stmt.execute(sql);

closeConnection(conn);

closeStatement(stmt);

}

public static Map<String,Object> queryMapBySql(String sql){

//定义数据库连接

Connection conn = null;

//定义PreparedStatement对象

PreparedStatement ps = null;

//定义查询的结果集

ResultSet rs = null;

try {

conn = getConnection();

//定义执行的sql语句

ps = conn.prepareStatement(sql);

rs = ps.executeQuery();

return getMapFromResultSet(rs);

} catch (Exception e) {

logger.info("queryDataListBySql"+e.getMessage());

}finally {

closeConnection(rs,ps,conn);

}

return Collections.emptyMap();

}

/**

* 关闭ResultSet、Statement、Connection

*

* @param rs

* @param stmt

* @param con

*/

public static void closeConnection(ResultSet rs, Statement stmt, Connection con) {

closeResultSet(rs);

closeStatement(stmt);

closeConnection(con);

}

/**

* 关闭ResultSet

*

* @param rs

*/

public static void closeResultSet(ResultSet rs) {

if (rs != null) {

try {

rs.close();

} catch (SQLException e) {

logger.info(e.getMessage());

}

}

}

/**

* 关闭Statement

*

* @param stmt

*/

public static void closeStatement(Statement stmt) {

if (stmt != null) {

try {

stmt.close();

} catch (Exception e) {

logger.info(e.getMessage());

}

}

}

/**

* 关闭Connection

*

* @param con

*/

public static void closeConnection(Connection con) {

if (con != null) {

try {

con.close();

} catch (Exception e) {

logger.info(e.getMessage());

}

}

}

/**

* 将resultset结果转为sonObject

* @param rs ResultSet

* @return List

* @throws SQLException 异常

*/

public static Map<String,Object> getMapFromResultSet(ResultSet rs)

throws SQLException {

Map<String,Object> hm = new HashMap();

ResultSetMetaData rsmd = rs.getMetaData();

int count = rsmd.getColumnCount();// 获取列的数量

while(rs.next()) {

for (int i = 1; i <= count; i++) {

String key = rsmd.getColumnLabel(i);

Object value = rs.getObject(i);

hm.put(key, value);

}

}

return hm;

}

public static List<Map<String,Object>> queryListBySql(String sql){

//定义数据库连接

Connection conn = null;

//定义PreparedStatement对象

PreparedStatement ps = null;

//定义查询的结果集

ResultSet rs = null;

try {

conn = getConnection();

//定义执行的sql语句

ps = conn.prepareStatement(sql);

rs = ps.executeQuery();

return getListFromResultSet(rs);

} catch (Exception e) {

logger.info("queryDataListBySql"+e.getMessage());

}finally {

closeConnection(rs,ps,conn);

}

return Collections.emptyList();

}

/**

* 将resultset结果转为list

* @param rs ResultSet

* @return List

* @throws SQLException 异常

*/

private static List<Map<String,Object>> getListFromResultSet(ResultSet rs)

throws SQLException {

List<Map<String,Object>> results= new ArrayList<>();//结果数据

ResultSetMetaData metaData = rs.getMetaData(); // 获得列的结果

List<String> colNameList= new ArrayList<>();

int cols_len = metaData.getColumnCount(); // 获取总的列数

for (int i = 0; i < cols_len; i++) {

colNameList.add(metaData.getColumnName(i+1));

}

while (rs.next()) {

Map<String, Object> map= new HashMap<>();

for(int i=0;i<cols_len;i++){

String key=colNameList.get(i);

Object value=rs.getString(colNameList.get(i));

map.put(key, value);

}

results.add(map);

}

return results;

}

public static void main(String[] args) throws Exception {

String sql = "SELECT * FROM `t1` LIMIT 1";

List<Map<String, Object>> maps = queryListBySql(sql);

logger.info(maps.toString());

}

}

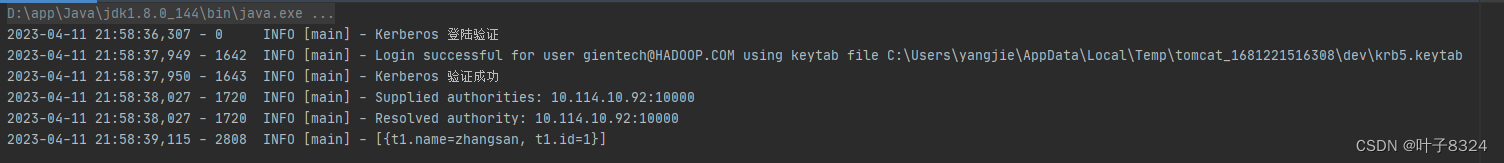

执行main方法查询效果如下

常见问题

1、Peer indicated failure: Unsupported mechanism type GSSAPI

hive服务中关闭了kerberos,但是连接中使用了kerberos认证,导致此错误

2、Unsupported mechanism type PLAIN

代表hive服务已经开启了kerberos,但是链接字符串未使用kerberos认证方式(principal=hive/hostname@HADOOP.COM),所以报错。

版权归原作者 叶子8324 所有, 如有侵权,请联系我们删除。