Efficient Data Analysis with Spark 01: Setting Up the IDEA Development Environment

📋Preface📋


✍This article is an original work by 红目香薰, first published on CSDN✍



Environment requirements

Operating system: Windows 10

IDE: IntelliJ IDEA 2020.1.3 x64

Maven version: 3.0.5

Environment setup

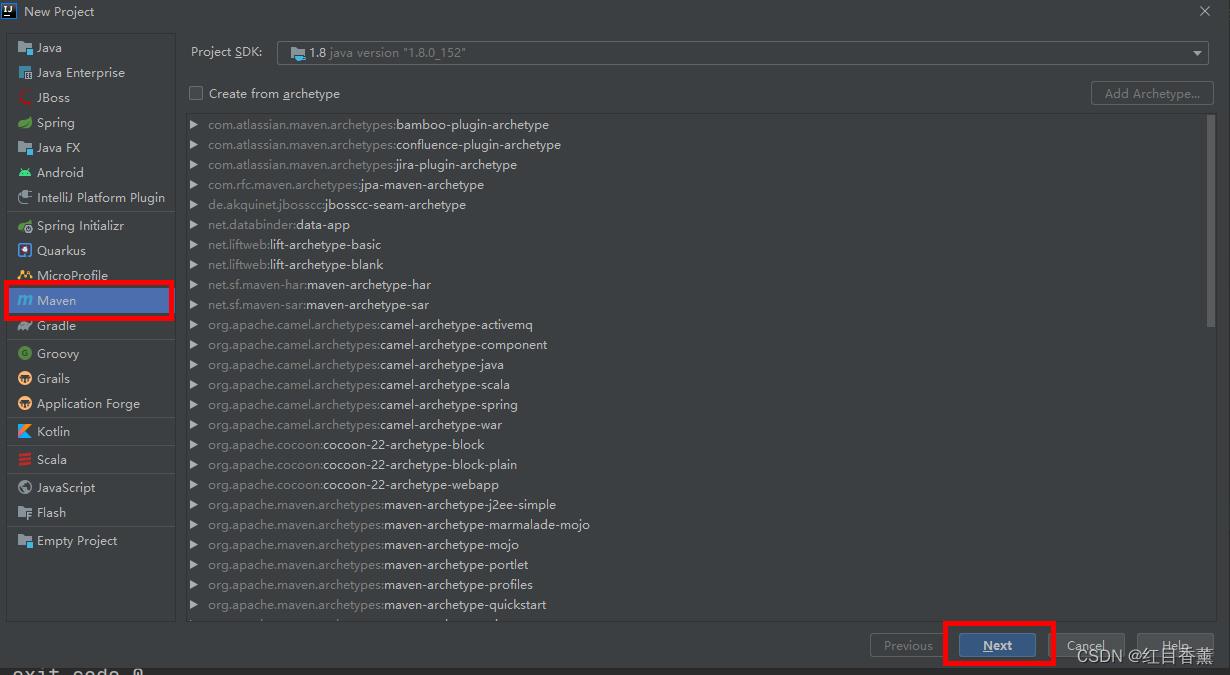

Create a Maven project

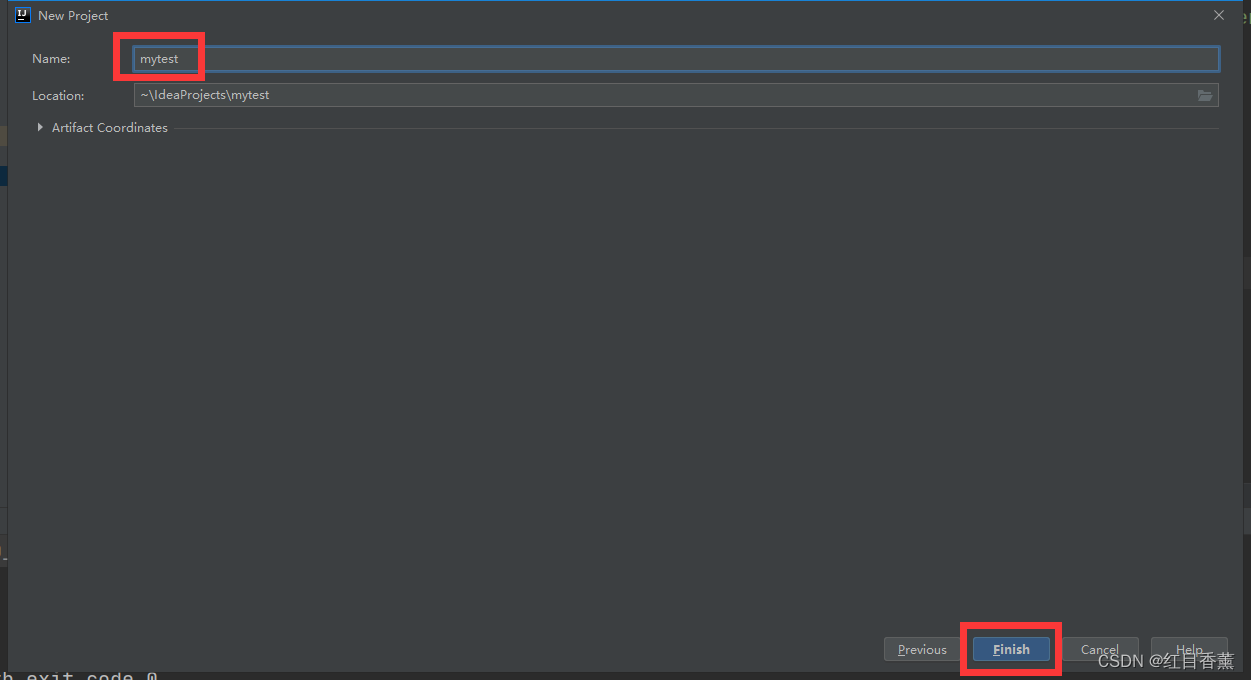

Give the project a name.

Any Maven version from 3.0 onward works.

The settings.xml below uses the Aliyun mirror. The local repository is located at D:\maven\repository.

<?xml version="1.0" encoding="UTF-8"?>
<settings xmlns="http://maven.apache.org/SETTINGS/1.0.0"
          xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
          xsi:schemaLocation="http://maven.apache.org/SETTINGS/1.0.0 http://maven.apache.org/xsd/settings-1.0.0.xsd">
  <localRepository>D:\maven\repository</localRepository>
  <pluginGroups>
  </pluginGroups>
  <proxies>
  </proxies>
  <servers>
  </servers>
  <mirrors>
    <!-- Aliyun mirror. Maven uses at most one mirror per repository, so only the
         first entry that matches central takes effect. -->
    <mirror>
      <id>alimaven</id>
      <name>aliyun maven</name>
      <url>http://maven.aliyun.com/nexus/content/repositories/central/</url>
      <mirrorOf>central</mirrorOf>
    </mirror>
    <!-- junit mirror address -->
    <mirror>
      <id>junit</id>
      <name>junit Address/</name>
      <url>http://jcenter.bintray.com/</url>
      <mirrorOf>central</mirrorOf>
    </mirror>
    <!-- Mirror ids must be unique, so this entry is renamed from the duplicate "alimaven" -->
    <mirror>
      <id>alimaven-public</id>
      <name>aliyun maven</name>
      <url>http://maven.aliyun.com/nexus/content/groups/public/</url>
      <mirrorOf>central</mirrorOf>
    </mirror>
  </mirrors>
  <profiles>
  </profiles>
</settings>

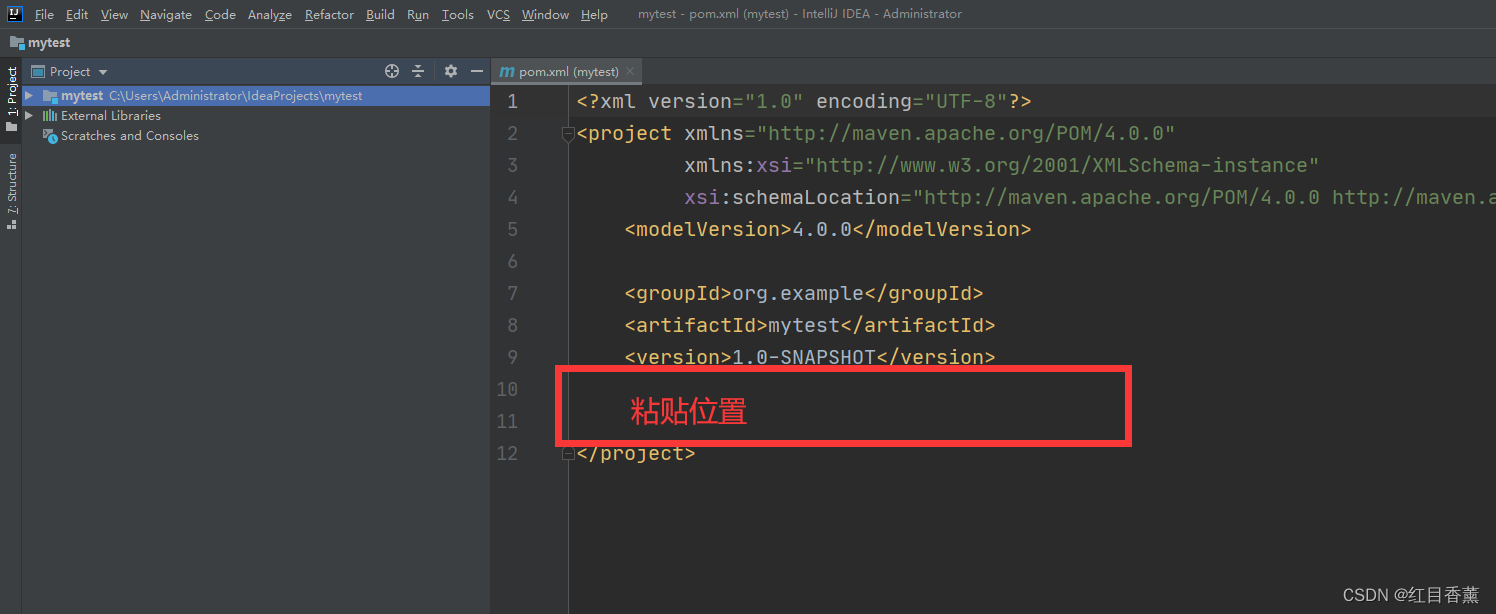

Dependencies required in pom.xml

<dependencies>
  <!-- https://mvnrepository.com/artifact/org.apache.spark/spark-core -->
  <dependency>
    <groupId>org.apache.spark</groupId>
    <artifactId>spark-core_2.13</artifactId>
    <version>3.3.0</version>
  </dependency>
  <!-- https://mvnrepository.com/artifact/org.apache.spark/spark-sql -->
  <dependency>
    <groupId>org.apache.spark</groupId>
    <artifactId>spark-sql_2.13</artifactId>
    <version>3.3.0</version>
    <scope>provided</scope>
  </dependency>
  <!-- https://mvnrepository.com/artifact/org.apache.spark/spark-streaming -->
  <dependency>
    <groupId>org.apache.spark</groupId>
    <artifactId>spark-streaming_2.13</artifactId>
    <version>3.3.0</version>
    <scope>provided</scope>
  </dependency>
  <!-- https://mvnrepository.com/artifact/org.apache.spark/spark-mllib -->
  <dependency>
    <groupId>org.apache.spark</groupId>
    <artifactId>spark-mllib_2.13</artifactId>
    <version>3.3.0</version>
    <scope>provided</scope>
  </dependency>
  <!-- https://mvnrepository.com/artifact/org.apache.spark/spark-hive -->
  <dependency>
    <groupId>org.apache.spark</groupId>
    <artifactId>spark-hive_2.13</artifactId>
    <version>3.3.0</version>
    <scope>provided</scope>
  </dependency>
</dependencies>

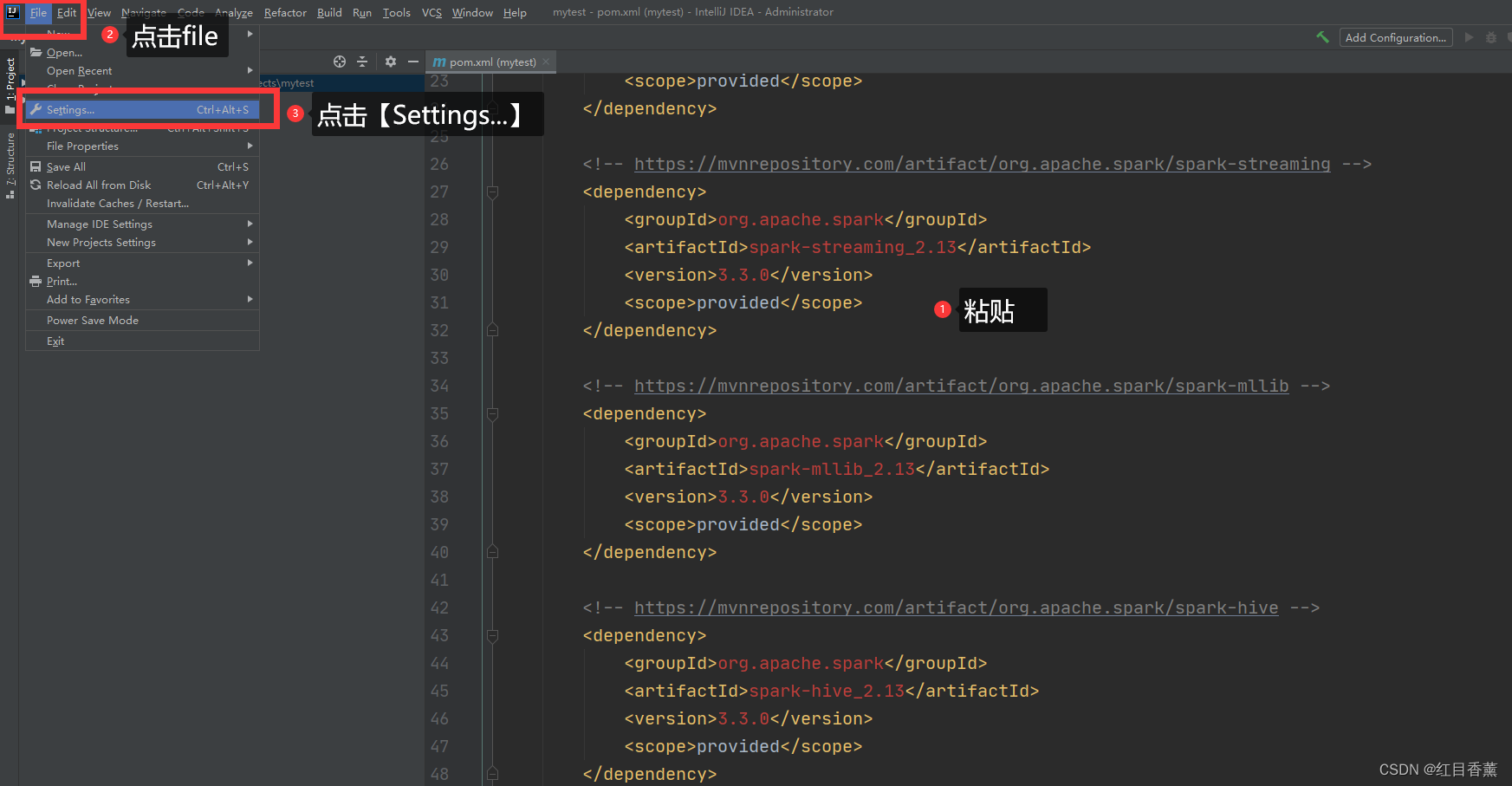

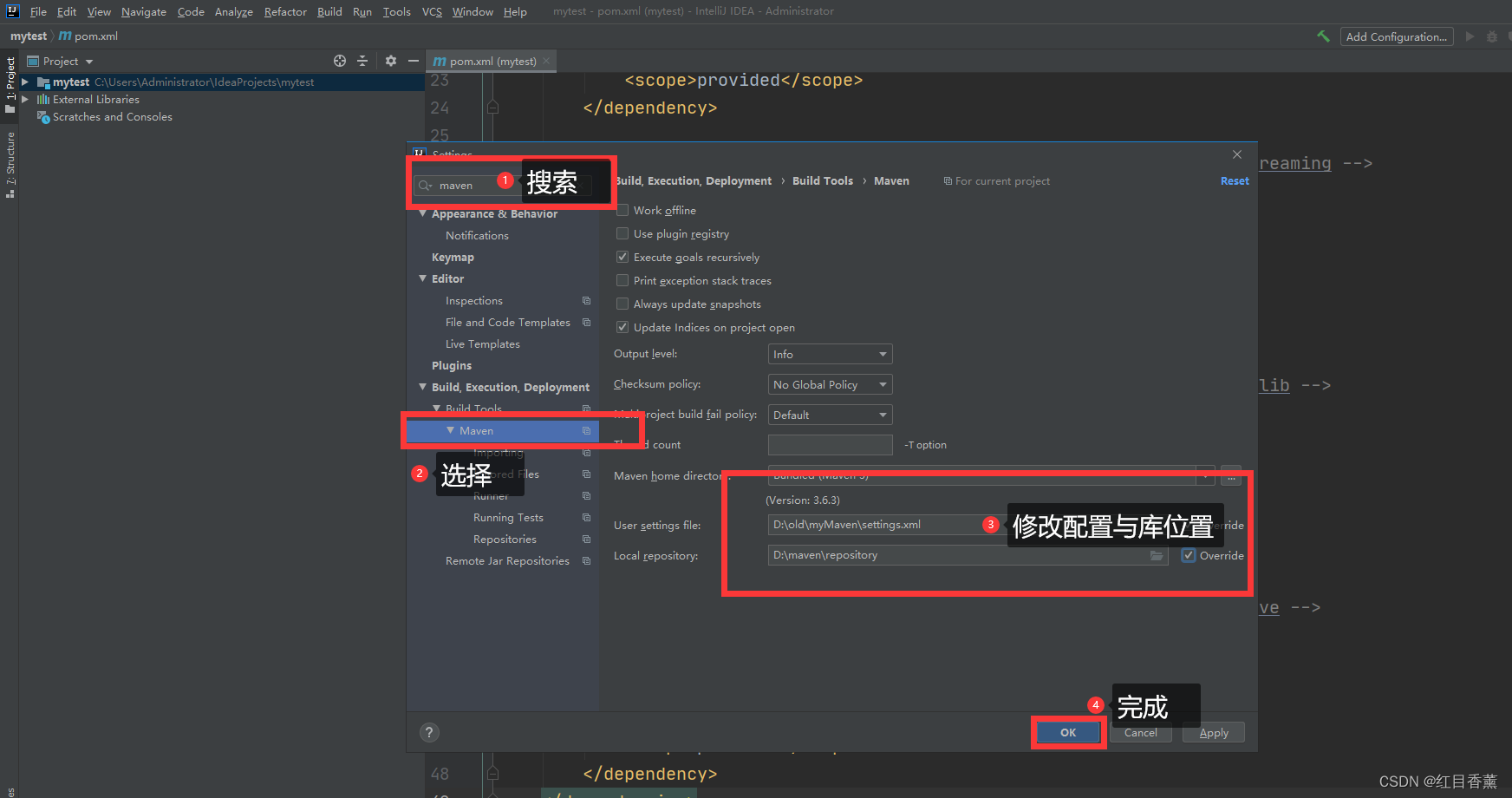
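Only spark-core is declared with the default scope in the pom.xml above; the other four artifacts use the provided scope, which is compiled against but is typically left off the runtime classpath when a program is launched from the IDE. The following is a minimal sketch for checking which Spark modules are actually visible at run time. The object name ClasspathCheck and the choice of one probe class per artifact are assumptions made for illustration, not part of the original project.

```scala
object ClasspathCheck {
  // True if the named class can be located by this class loader.
  // initialize = false: only look the class up, do not run its static initializers.
  def isOnClasspath(className: String): Boolean =
    try {
      Class.forName(className, false, getClass.getClassLoader)
      true
    } catch {
      case _: ClassNotFoundException | _: NoClassDefFoundError => false
    }

  def main(args: Array[String]): Unit = {
    // One representative class per artifact (illustrative choice)
    val probes = Seq(
      "spark-core"      -> "org.apache.spark.SparkContext",
      "spark-sql"       -> "org.apache.spark.sql.SparkSession",
      "spark-streaming" -> "org.apache.spark.streaming.StreamingContext",
      "spark-mllib"     -> "org.apache.spark.ml.Pipeline"
    )
    probes.foreach { case (artifact, cls) =>
      val status = if (isOnClasspath(cls)) "available" else "missing"
      println(s"$artifact: $status")
    }
  }
}
```

If a module that the code needs is reported as missing, one option is to remove the provided scope for local runs; another is to enable the run configuration option that includes provided-scope dependencies.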

Modify the Maven settings

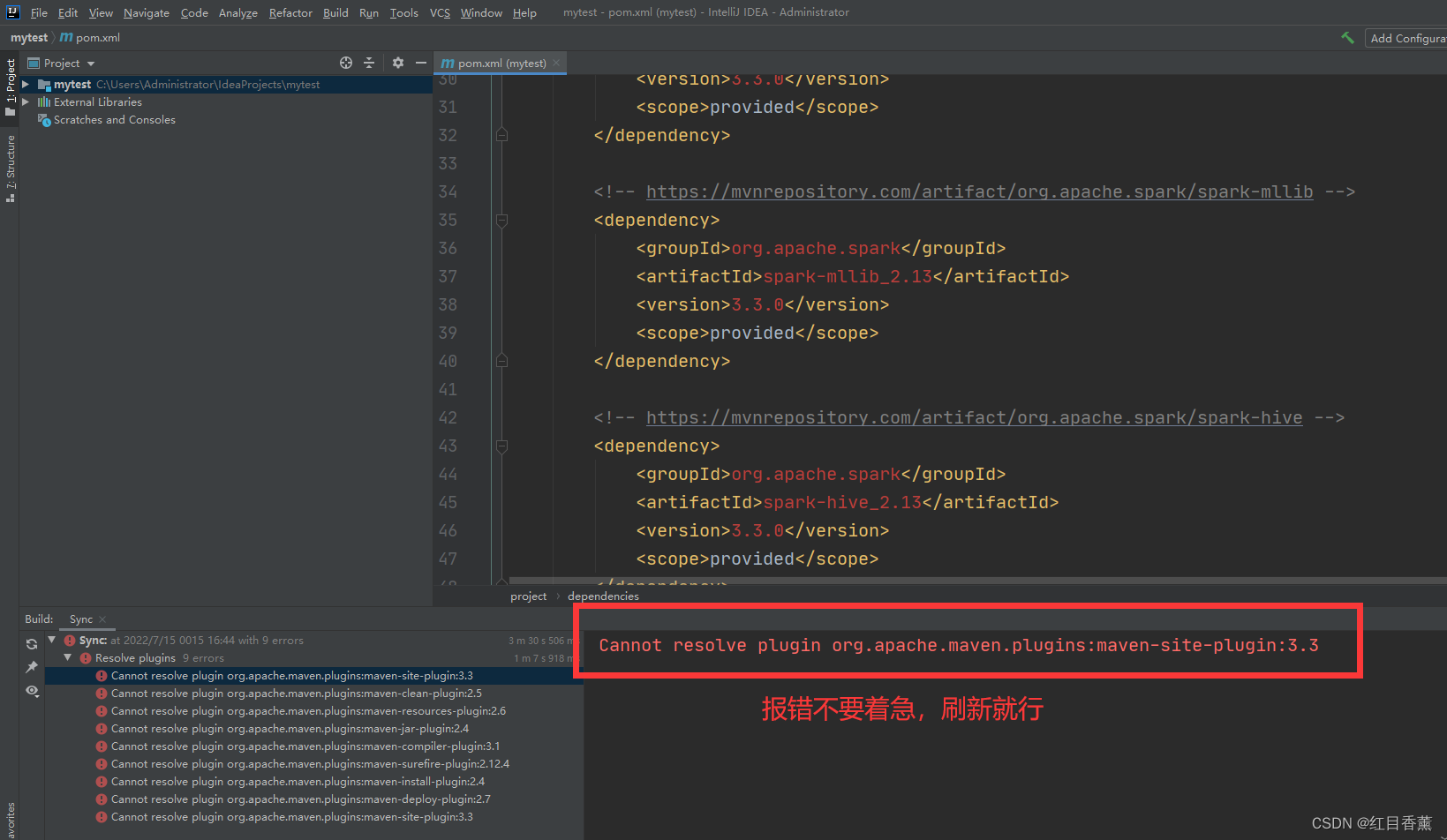

Error shown while the dependencies have not been imported yet:

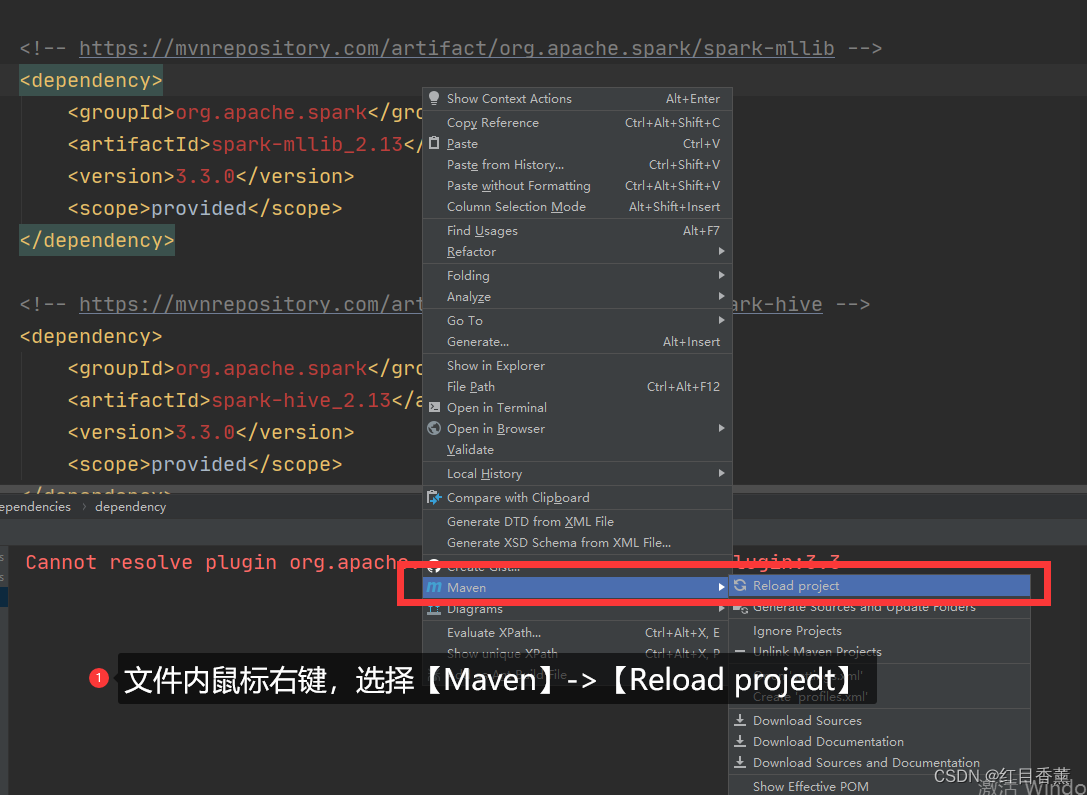

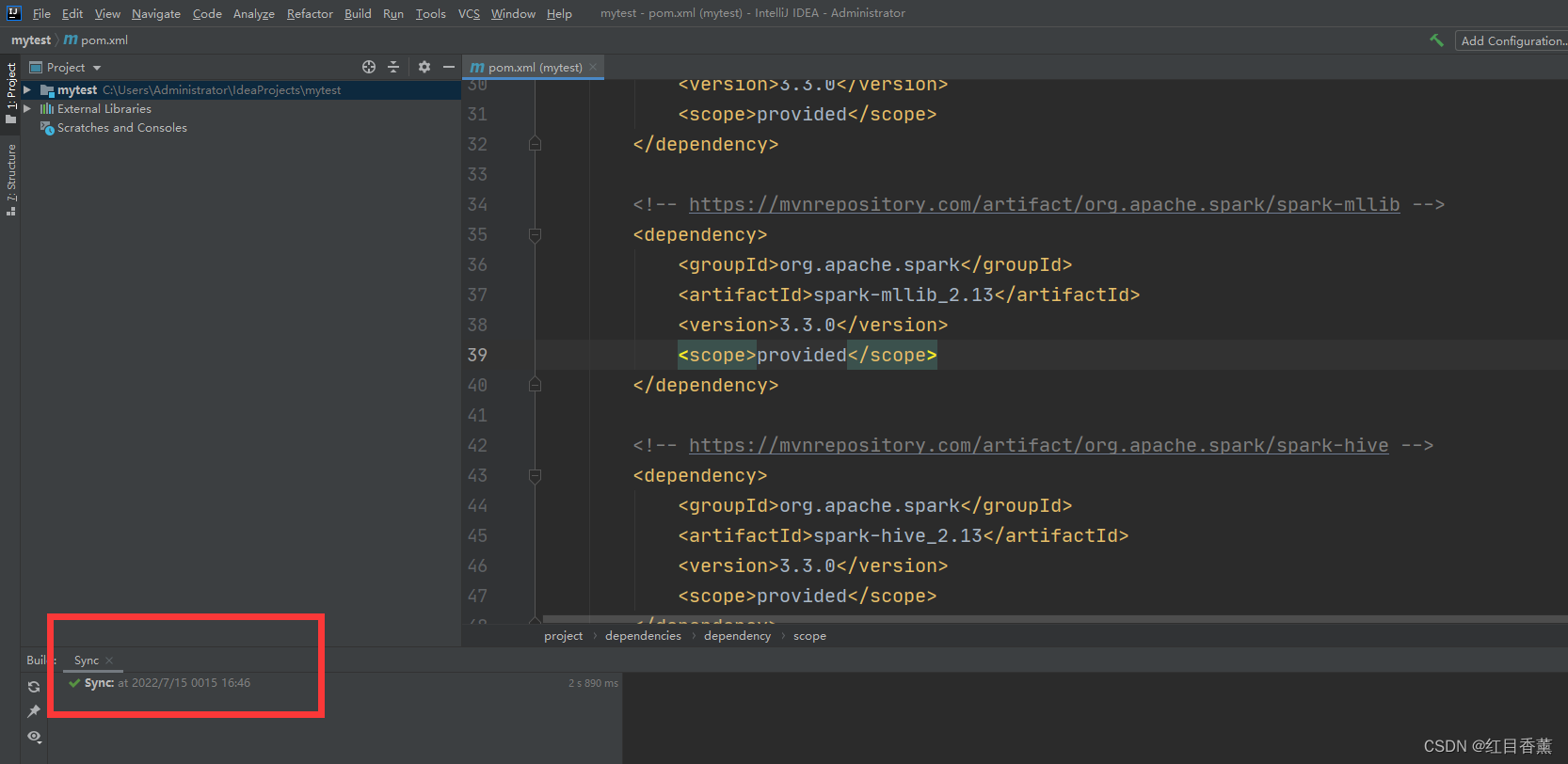

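Reload the Maven configuration

Download complete. The full set of packages is roughly 500 MB, so avoid downloading it over a metered mobile connection.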

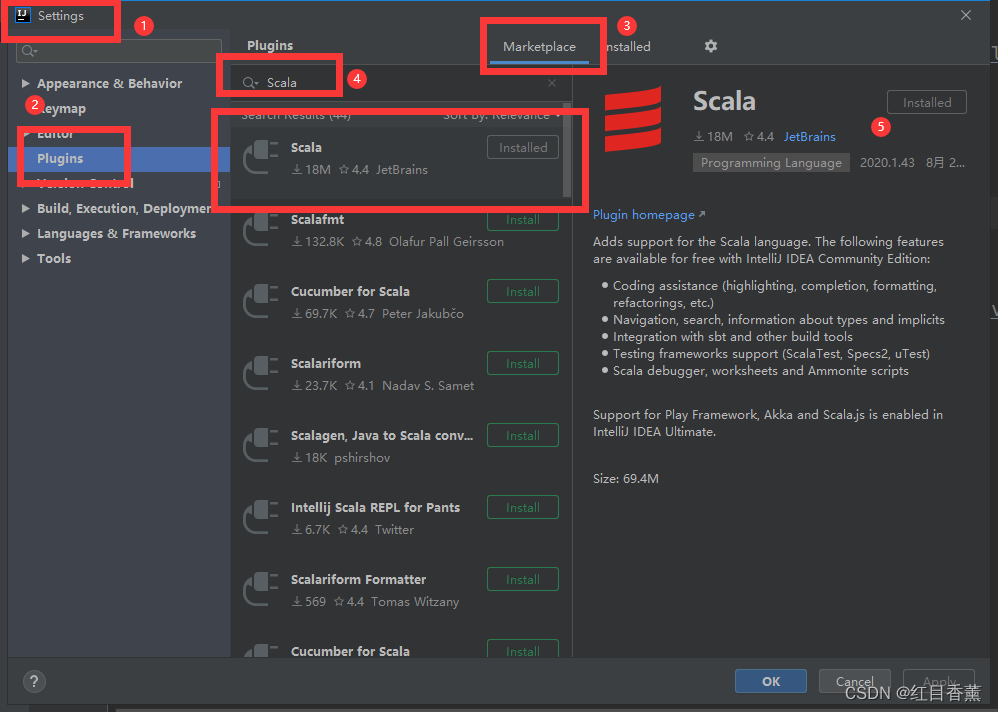

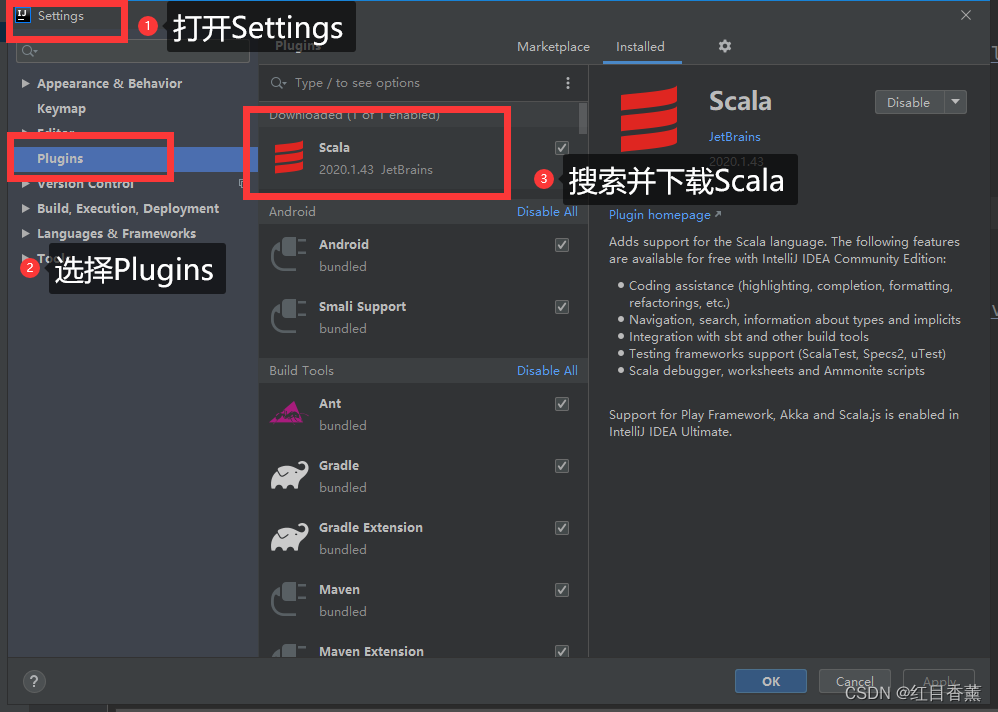

Install the Scala plugin

The Installed tab shows whether the plugin has finished installing.

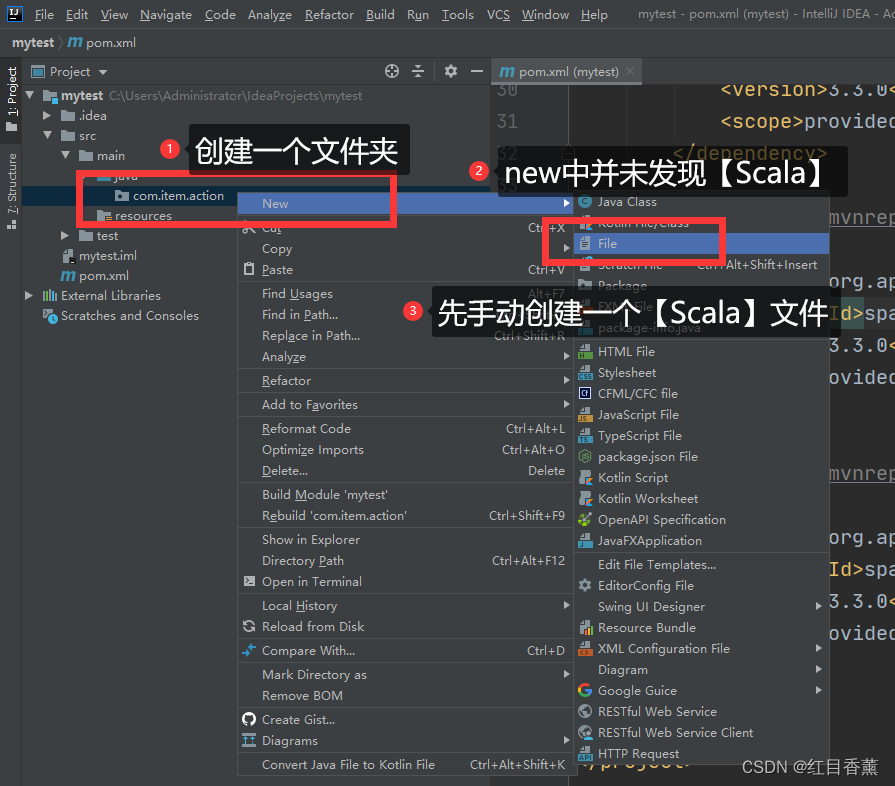

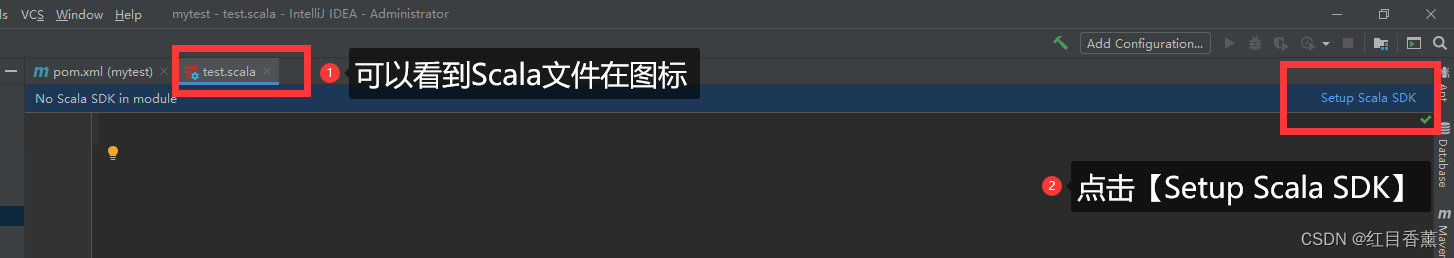

Create a Scala file

**Create the scala file manually**

Click to set up the Scala SDK.

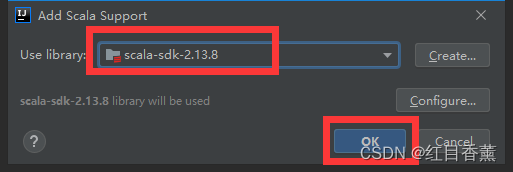

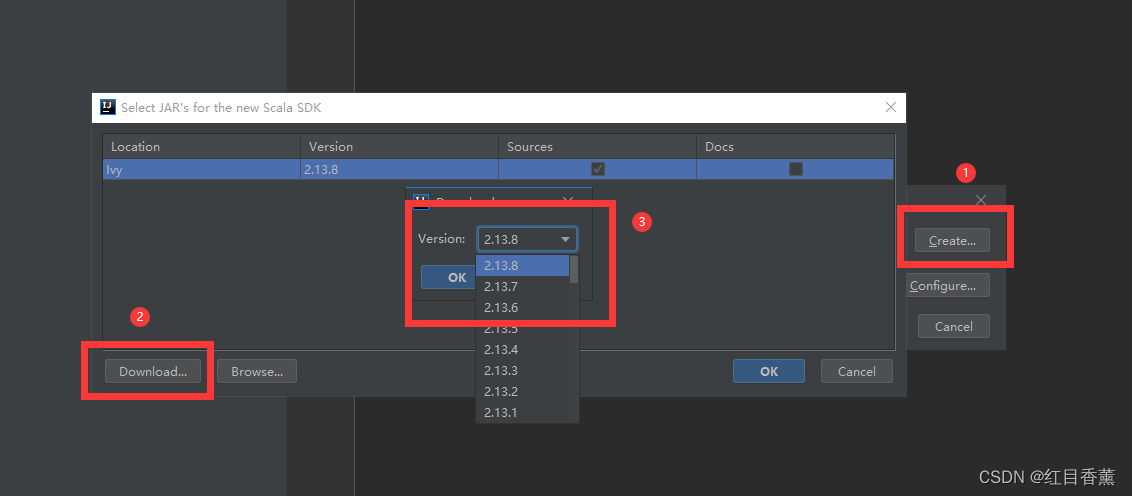

I already have version 2.13.8 installed here. If you do not have one, click Create to download it.

The download is fairly slow, so expect to wait a while.
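The Scala SDK chosen here should agree with the _2.13 suffix on the Spark artifacts in pom.xml, since that suffix names the Scala binary version the jars were built for. The following is a small sketch for confirming this from inside the project; the object name ScalaVersionCheck and the helper binaryVersion are hypothetical names introduced for illustration.

```scala
object ScalaVersionCheck {
  // Binary version = first two components of the full version, e.g. "2.13.8" -> "2.13"
  def binaryVersion(full: String): String =
    full.split('.').take(2).mkString(".")

  def main(args: Array[String]): Unit = {
    // Version of the Scala library that is actually on the classpath
    val running = scala.util.Properties.versionNumberString
    // Suffix of spark-core_2.13 in pom.xml
    val expected = "2.13"

    println(s"Scala $running (binary version ${binaryVersion(running)})")
    if (binaryVersion(running) != expected)
      println(s"Warning: the Spark artifacts are built for Scala $expected")
  }
}
```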

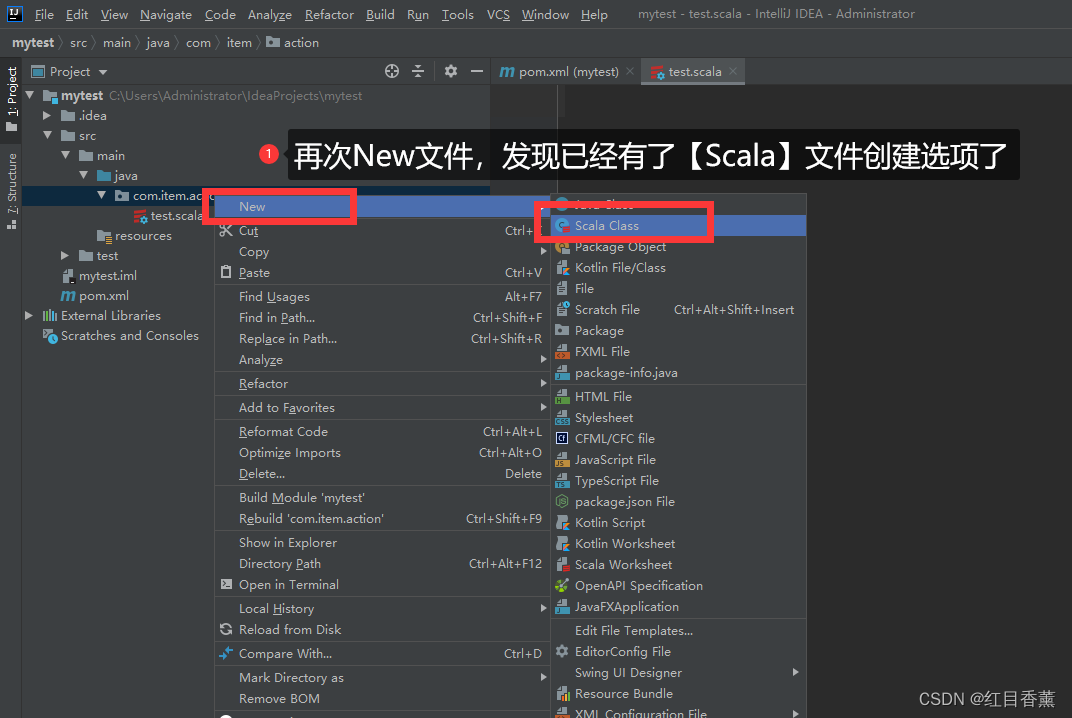

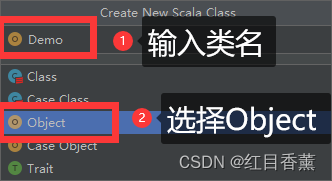

**A Scala class can now be created:**

By convention, Scala class names start with an uppercase letter.

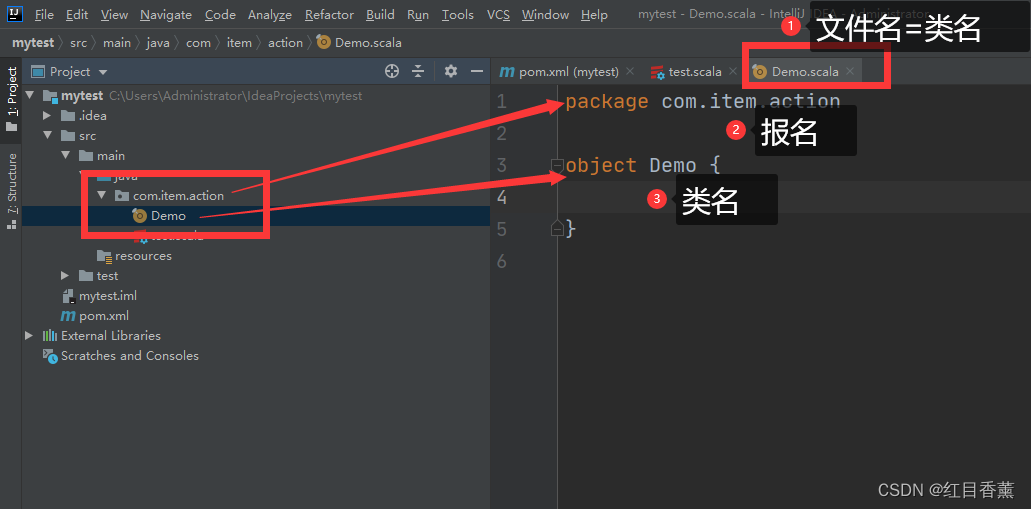

The class is created successfully.

Run the test file:

package com.item.action

import org.apache.spark.{SparkConf, SparkContext}

object Demo {

def main(args: Array[String]): Unit = {

// 创建 Spark 运行配置对象

val sparkConf = new SparkConf().setMaster("local[*]").setAppName("单词数量统计:")

// 创建 Spark 上下文环境对象(连接对象)

val sc = new SparkContext(sparkConf)

// 读取文件

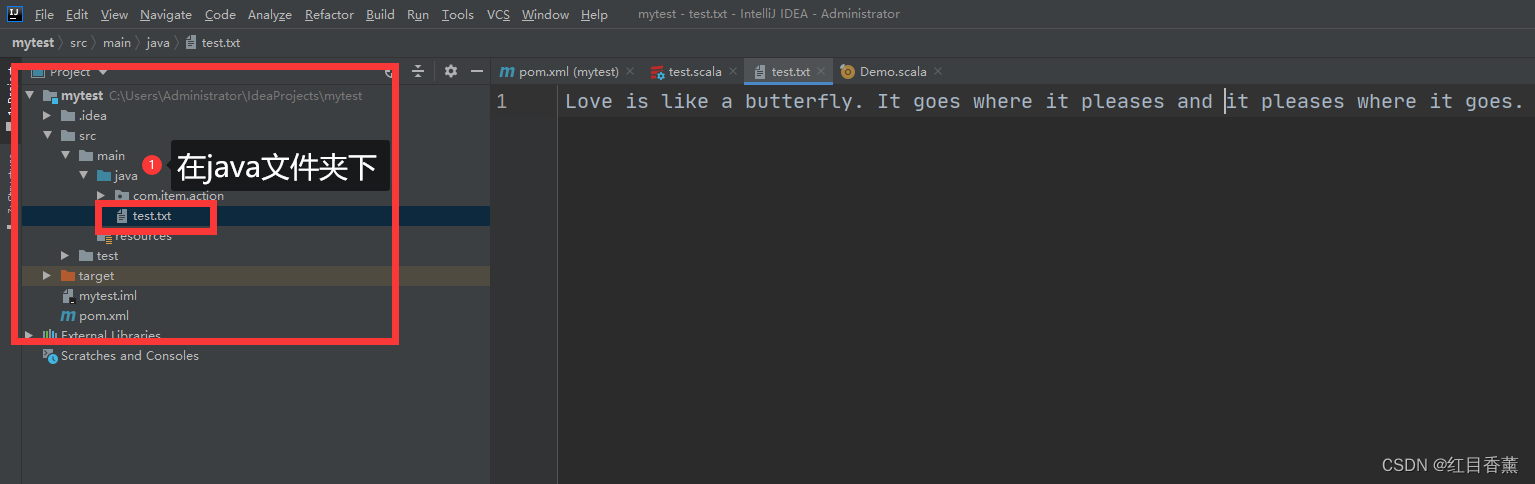

var input=sc.textFile("src/main/java/test.txt");

// 分词

var lines=input.flatMap(line=>line.split(" "))

// 分组计数

var count=lines.map(word=>(word,1)).reduceByKey{(x,y)=>x+y}

// 打印结果

count.foreach(println)

//关闭 Spark 连接

sc.stop()

}

}

Input file (test.txt):

Love is like a butterfly. It goes where it pleases and it pleases where it goes.

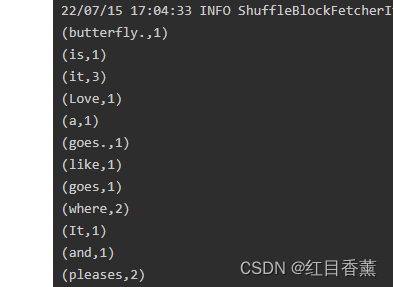
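To see what the three transformations in Demo do to this sentence, the same steps can be traced on an ordinary in-memory Scala collection, without Spark. This is only an illustrative sketch: the object name LocalWordCount is hypothetical, and groupMapReduce (available from Scala 2.13) stands in for the role reduceByKey plays on an RDD.

```scala
object LocalWordCount {
  // Same three steps as the Spark job, on an in-memory Seq instead of an RDD
  def countWords(lines: Seq[String]): Map[String, Int] =
    lines
      .flatMap(_.split(" "))             // split each line into words
      .map(word => (word, 1))            // pair every word with a count of 1
      .groupMapReduce(_._1)(_._2)(_ + _) // sum the counts per word

  def main(args: Array[String]): Unit = {
    val text = "Love is like a butterfly. It goes where it pleases and it pleases where it goes."
    // Sort by word so the printed order is stable
    countWords(Seq(text)).toSeq.sortBy(_._1).foreach(println)
  }
}
```

Because the split is on single spaces only, punctuation stays attached to a word and case is preserved, so "goes" and "goes." (and "It" and "it") are counted separately.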

**Printed output:**

C:\java\jdk\jdk1.8.0_152\bin\java.exe "-javaagent:C:\java\IDEA\IntelliJ IDEA 2020.1.3\lib\idea_rt.jar=57562:C:\java\IDEA\IntelliJ IDEA 2020.1.3\bin" -Dfile.encoding=UTF-8 -classpath ... com.item.action.Demo

Using Spark's default log4j profile: org/apache/spark/log4j2-defaults.properties

22/07/15 17:04:29 INFO SparkContext: Running Spark version 3.3.0

22/07/15 17:04:29 INFO ResourceUtils: ==============================================================

22/07/15 17:04:29 INFO ResourceUtils: No custom resources configured for spark.driver.

22/07/15 17:04:29 INFO ResourceUtils: ==============================================================

22/07/15 17:04:29 INFO SparkContext: Submitted application: 单词数量统计:

22/07/15 17:04:29 INFO ResourceProfile: Default ResourceProfile created, executor resources: Map(cores -> name: cores, amount: 1, script: , vendor: , memory -> name: memory, amount: 1024, script: , vendor: , offHeap -> name: offHeap, amount: 0, script: , vendor: ), task resources: Map(cpus -> name: cpus, amount: 1.0)

22/07/15 17:04:29 INFO ResourceProfile: Limiting resource is cpu

22/07/15 17:04:29 INFO ResourceProfileManager: Added ResourceProfile id: 0

22/07/15 17:04:29 INFO SecurityManager: Changing view acls to: Administrator

22/07/15 17:04:29 INFO SecurityManager: Changing modify acls to: Administrator

22/07/15 17:04:29 INFO SecurityManager: Changing view acls groups to:

22/07/15 17:04:29 INFO SecurityManager: Changing modify acls groups to:

22/07/15 17:04:29 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(Administrator); groups with view permissions: Set(); users with modify permissions: Set(Administrator); groups with modify permissions: Set()

22/07/15 17:04:30 INFO Utils: Successfully started service 'sparkDriver' on port 57604.

22/07/15 17:04:30 INFO SparkEnv: Registering MapOutputTracker

22/07/15 17:04:30 INFO SparkEnv: Registering BlockManagerMaster

22/07/15 17:04:30 INFO BlockManagerMasterEndpoint: Using org.apache.spark.storage.DefaultTopologyMapper for getting topology information

22/07/15 17:04:30 INFO BlockManagerMasterEndpoint: BlockManagerMasterEndpoint up

22/07/15 17:04:30 INFO SparkEnv: Registering BlockManagerMasterHeartbeat

22/07/15 17:04:30 INFO DiskBlockManager: Created local directory at C:\Users\Administrator\AppData\Local\Temp\blockmgr-f0d03bba-e54f-4c5a-81a6-edfdafbb455a

22/07/15 17:04:30 INFO MemoryStore: MemoryStore started with capacity 898.5 MiB

22/07/15 17:04:30 INFO SparkEnv: Registering OutputCommitCoordinator

22/07/15 17:04:30 INFO Utils: Successfully started service 'SparkUI' on port 4040.

22/07/15 17:04:30 INFO Executor: Starting executor ID driver on host 192.168.15.19

22/07/15 17:04:30 INFO Executor: Starting executor with user classpath (userClassPathFirst = false): ''

22/07/15 17:04:31 INFO Utils: Successfully started service 'org.apache.spark.network.netty.NettyBlockTransferService' on port 57647.

22/07/15 17:04:31 INFO NettyBlockTransferService: Server created on 192.168.15.19:57647

22/07/15 17:04:31 INFO BlockManager: Using org.apache.spark.storage.RandomBlockReplicationPolicy for block replication policy

22/07/15 17:04:31 INFO BlockManagerMaster: Registering BlockManager BlockManagerId(driver, 192.168.15.19, 57647, None)

22/07/15 17:04:31 INFO BlockManagerMasterEndpoint: Registering block manager 192.168.15.19:57647 with 898.5 MiB RAM, BlockManagerId(driver, 192.168.15.19, 57647, None)

22/07/15 17:04:31 INFO BlockManagerMaster: Registered BlockManager BlockManagerId(driver, 192.168.15.19, 57647, None)

22/07/15 17:04:31 INFO BlockManager: Initialized BlockManager: BlockManagerId(driver, 192.168.15.19, 57647, None)

22/07/15 17:04:31 INFO MemoryStore: Block broadcast_0 stored as values in memory (estimated size 358.0 KiB, free 898.2 MiB)

22/07/15 17:04:31 INFO MemoryStore: Block broadcast_0_piece0 stored as bytes in memory (estimated size 32.3 KiB, free 898.1 MiB)

22/07/15 17:04:31 INFO BlockManagerInfo: Added broadcast_0_piece0 in memory on 192.168.15.19:57647 (size: 32.3 KiB, free: 898.5 MiB)

22/07/15 17:04:31 INFO SparkContext: Created broadcast 0 from textFile at Demo.scala:12

22/07/15 17:04:31 INFO FileInputFormat: Total input files to process : 1

22/07/15 17:04:32 INFO SparkContext: Starting job: foreach at Demo.scala:18

22/07/15 17:04:32 INFO DAGScheduler: Registering RDD 3 (map at Demo.scala:16) as input to shuffle 0

22/07/15 17:04:32 INFO DAGScheduler: Got job 0 (foreach at Demo.scala:18) with 2 output partitions

22/07/15 17:04:32 INFO DAGScheduler: Final stage: ResultStage 1 (foreach at Demo.scala:18)

22/07/15 17:04:32 INFO DAGScheduler: Parents of final stage: List(ShuffleMapStage 0)

22/07/15 17:04:32 INFO DAGScheduler: Missing parents: List(ShuffleMapStage 0)

22/07/15 17:04:32 INFO DAGScheduler: Submitting ShuffleMapStage 0 (MapPartitionsRDD[3] at map at Demo.scala:16), which has no missing parents

22/07/15 17:04:32 INFO MemoryStore: Block broadcast_1 stored as values in memory (estimated size 7.0 KiB, free 898.1 MiB)

22/07/15 17:04:32 INFO MemoryStore: Block broadcast_1_piece0 stored as bytes in memory (estimated size 3.9 KiB, free 898.1 MiB)

22/07/15 17:04:32 INFO BlockManagerInfo: Added broadcast_1_piece0 in memory on 192.168.15.19:57647 (size: 3.9 KiB, free: 898.5 MiB)

22/07/15 17:04:32 INFO SparkContext: Created broadcast 1 from broadcast at DAGScheduler.scala:1513

22/07/15 17:04:32 INFO DAGScheduler: Submitting 2 missing tasks from ShuffleMapStage 0 (MapPartitionsRDD[3] at map at Demo.scala:16) (first 15 tasks are for partitions Vector(0, 1))

22/07/15 17:04:32 INFO TaskSchedulerImpl: Adding task set 0.0 with 2 tasks resource profile 0

22/07/15 17:04:32 INFO TaskSetManager: Starting task 0.0 in stage 0.0 (TID 0) (192.168.15.19, executor driver, partition 0, PROCESS_LOCAL, 7475 bytes) taskResourceAssignments Map()

22/07/15 17:04:32 INFO TaskSetManager: Starting task 1.0 in stage 0.0 (TID 1) (192.168.15.19, executor driver, partition 1, PROCESS_LOCAL, 7475 bytes) taskResourceAssignments Map()

22/07/15 17:04:32 INFO Executor: Running task 1.0 in stage 0.0 (TID 1)

22/07/15 17:04:32 INFO Executor: Running task 0.0 in stage 0.0 (TID 0)

22/07/15 17:04:32 INFO HadoopRDD: Input split: file:/C:/Users/Administrator/IdeaProjects/mytest/src/main/java/test.txt:40+40

22/07/15 17:04:32 INFO HadoopRDD: Input split: file:/C:/Users/Administrator/IdeaProjects/mytest/src/main/java/test.txt:0+40

22/07/15 17:04:33 INFO Executor: Finished task 0.0 in stage 0.0 (TID 0). 1432 bytes result sent to driver

22/07/15 17:04:33 INFO Executor: Finished task 1.0 in stage 0.0 (TID 1). 1303 bytes result sent to driver

22/07/15 17:04:33 INFO TaskSetManager: Finished task 1.0 in stage 0.0 (TID 1) in 672 ms on 192.168.15.19 (executor driver) (1/2)

22/07/15 17:04:33 INFO TaskSetManager: Finished task 0.0 in stage 0.0 (TID 0) in 687 ms on 192.168.15.19 (executor driver) (2/2)

22/07/15 17:04:33 INFO TaskSchedulerImpl: Removed TaskSet 0.0, whose tasks have all completed, from pool

22/07/15 17:04:33 INFO DAGScheduler: ShuffleMapStage 0 (map at Demo.scala:16) finished in 0.781 s

22/07/15 17:04:33 INFO DAGScheduler: looking for newly runnable stages

22/07/15 17:04:33 INFO DAGScheduler: running: HashSet()

22/07/15 17:04:33 INFO DAGScheduler: waiting: HashSet(ResultStage 1)

22/07/15 17:04:33 INFO DAGScheduler: failed: HashSet()

22/07/15 17:04:33 INFO DAGScheduler: Submitting ResultStage 1 (ShuffledRDD[4] at reduceByKey at Demo.scala:16), which has no missing parents

22/07/15 17:04:33 INFO MemoryStore: Block broadcast_2 stored as values in memory (estimated size 5.3 KiB, free 898.1 MiB)

22/07/15 17:04:33 INFO MemoryStore: Block broadcast_2_piece0 stored as bytes in memory (estimated size 3.0 KiB, free 898.1 MiB)

22/07/15 17:04:33 INFO BlockManagerInfo: Added broadcast_2_piece0 in memory on 192.168.15.19:57647 (size: 3.0 KiB, free: 898.5 MiB)

22/07/15 17:04:33 INFO SparkContext: Created broadcast 2 from broadcast at DAGScheduler.scala:1513

22/07/15 17:04:33 INFO DAGScheduler: Submitting 2 missing tasks from ResultStage 1 (ShuffledRDD[4] at reduceByKey at Demo.scala:16) (first 15 tasks are for partitions Vector(0, 1))

22/07/15 17:04:33 INFO TaskSchedulerImpl: Adding task set 1.0 with 2 tasks resource profile 0

22/07/15 17:04:33 INFO TaskSetManager: Starting task 0.0 in stage 1.0 (TID 2) (192.168.15.19, executor driver, partition 0, NODE_LOCAL, 7217 bytes) taskResourceAssignments Map()

22/07/15 17:04:33 INFO TaskSetManager: Starting task 1.0 in stage 1.0 (TID 3) (192.168.15.19, executor driver, partition 1, NODE_LOCAL, 7217 bytes) taskResourceAssignments Map()

22/07/15 17:04:33 INFO Executor: Running task 1.0 in stage 1.0 (TID 3)

22/07/15 17:04:33 INFO Executor: Running task 0.0 in stage 1.0 (TID 2)

22/07/15 17:04:33 INFO ShuffleBlockFetcherIterator: Getting 1 (72.0 B) non-empty blocks including 1 (72.0 B) local and 0 (0.0 B) host-local and 0 (0.0 B) push-merged-local and 0 (0.0 B) remote blocks

22/07/15 17:04:33 INFO ShuffleBlockFetcherIterator: Getting 1 (117.0 B) non-empty blocks including 1 (117.0 B) local and 0 (0.0 B) host-local and 0 (0.0 B) push-merged-local and 0 (0.0 B) remote blocks

22/07/15 17:04:33 INFO ShuffleBlockFetcherIterator: Started 0 remote fetches in 14 ms

22/07/15 17:04:33 INFO ShuffleBlockFetcherIterator: Started 0 remote fetches in 14 ms

(butterfly.,1)

(is,1)

(it,3)

(Love,1)

(a,1)

(goes.,1)

(like,1)

(goes,1)

(where,2)

(It,1)

(and,1)

(pleases,2)

22/07/15 17:04:33 INFO Executor: Finished task 0.0 in stage 1.0 (TID 2). 1321 bytes result sent to driver

22/07/15 17:04:33 INFO Executor: Finished task 1.0 in stage 1.0 (TID 3). 1321 bytes result sent to driver

22/07/15 17:04:33 INFO TaskSetManager: Finished task 0.0 in stage 1.0 (TID 2) in 78 ms on 192.168.15.19 (executor driver) (1/2)

22/07/15 17:04:33 INFO TaskSetManager: Finished task 1.0 in stage 1.0 (TID 3) in 62 ms on 192.168.15.19 (executor driver) (2/2)

22/07/15 17:04:33 INFO TaskSchedulerImpl: Removed TaskSet 1.0, whose tasks have all completed, from pool

22/07/15 17:04:33 INFO DAGScheduler: ResultStage 1 (foreach at Demo.scala:18) finished in 0.094 s

22/07/15 17:04:33 INFO DAGScheduler: Job 0 is finished. Cancelling potential speculative or zombie tasks for this job

22/07/15 17:04:33 INFO TaskSchedulerImpl: Killing all running tasks in stage 1: Stage finished

22/07/15 17:04:33 INFO DAGScheduler: Job 0 finished: foreach at Demo.scala:18, took 1.169723 s

22/07/15 17:04:33 INFO SparkUI: Stopped Spark web UI at http://192.168.15.19:4040

22/07/15 17:04:33 INFO MapOutputTrackerMasterEndpoint: MapOutputTrackerMasterEndpoint stopped!

22/07/15 17:04:33 INFO MemoryStore: MemoryStore cleared

22/07/15 17:04:33 INFO BlockManager: BlockManager stopped

22/07/15 17:04:33 INFO BlockManagerMaster: BlockManagerMaster stopped

22/07/15 17:04:33 INFO OutputCommitCoordinator$OutputCommitCoordinatorEndpoint: OutputCommitCoordinator stopped!

22/07/15 17:04:33 INFO SparkContext: Successfully stopped SparkContext

22/07/15 17:04:33 INFO ShutdownHookManager: Shutdown hook called

22/07/15 17:04:33 INFO ShutdownHookManager: Deleting directory C:\Users\Administrator\AppData\Local\Temp\spark-6624b247-1ac0-45f6-baa8-f271e26b2dc1

Process finished with exit code 0

The word counts appear in the printed output, which shows that the computation succeeded.
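In the run above the word counts are buried among INFO log lines, and punctuation and case split some words into separate entries. The following is a hedged variation on Demo that addresses both, assuming the same test.txt path and the spark-core dependency from pom.xml. The object name QuietWordCount, the app name, and the helper normalize are hypothetical names introduced for illustration.

```scala
import org.apache.spark.{SparkConf, SparkContext}

object QuietWordCount {
  // Lower-case a token and drop everything except the letters a-z, e.g. "goes." -> "goes"
  def normalize(token: String): String =
    token.toLowerCase.replaceAll("[^a-z]", "")

  def main(args: Array[String]): Unit = {
    val conf = new SparkConf().setMaster("local[*]").setAppName("QuietWordCount")
    val sc = new SparkContext(conf)
    try {
      // Hide INFO lines logged after this point; startup messages still appear
      sc.setLogLevel("WARN")

      val counts = sc.textFile("src/main/java/test.txt")
        .flatMap(_.split("\\s+"))
        .map(normalize)
        .filter(_.nonEmpty)
        .map(word => (word, 1))
        .reduceByKey(_ + _)

      // collect() brings the small result to the driver so it can be sorted
      // and printed in one place, most frequent words first
      counts.collect()
        .sortBy { case (word, n) => (-n, word) }
        .foreach { case (word, n) => println(s"$word\t$n") }
    } finally {
      // Stop the context even if the job fails
      sc.stop()
    }
  }
}
```

Collecting to the driver is reasonable here only because the result is tiny; for large outputs, writing the RDD to storage would be the safer choice.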
