Configuring a Spark Runtime Environment in IntelliJ IDEA

In IDEA, install the Scala plugin, create a Maven project, and add the Scala SDK to it.

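Prerequisite: Scala is already installed locally (a 2.11.x release, to match the `spark-core_2.11` dependency used in pom.xml).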

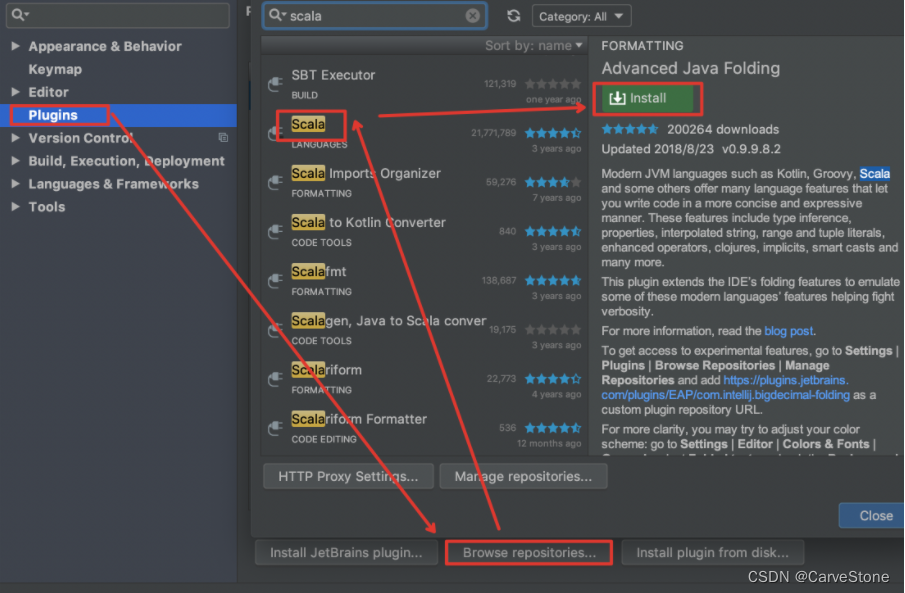

- Install the Scala plugin (online): Preferences (File -> Settings on Windows/Linux) -> Plugins -> Browse Repositories -> search for "scala" -> Install

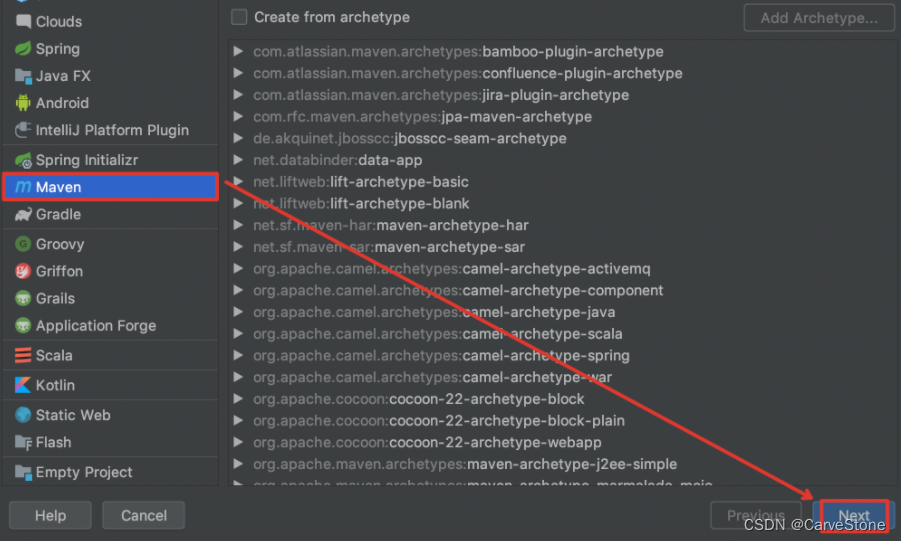

- Create a Maven project: File -> New -> Project… -> Maven -> Next

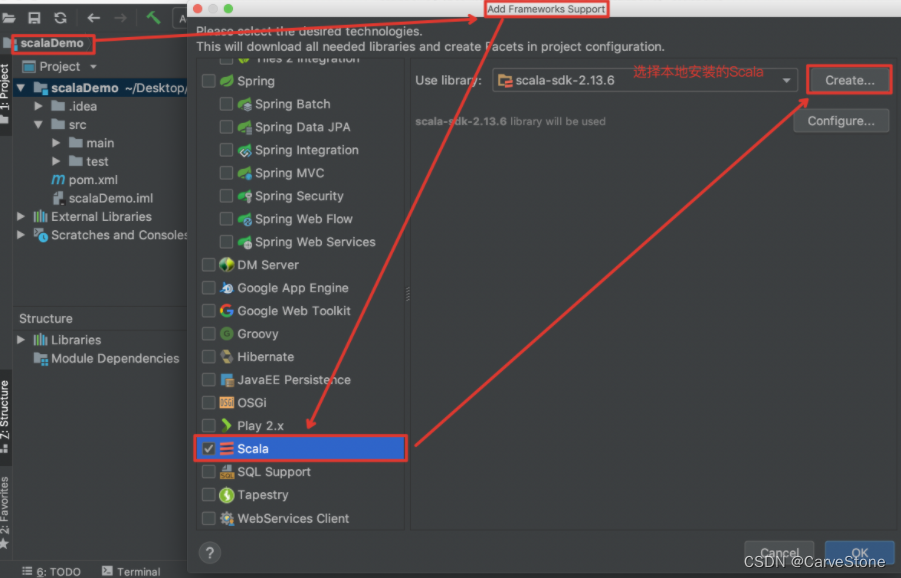

- Add the Scala framework: right-click the project -> Add Framework Support… -> select Scala (with the locally installed Scala SDK) -> click OK
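Because the Spark artifact in pom.xml is tied to a Scala binary version (the `_2.11` suffix), it can be worth confirming which Scala version the project actually runs with once the SDK is attached. The following is a minimal sketch: `ScalaVersionCheck` is a hypothetical name, the version is read from the standard library's `scala.util.Properties.versionNumberString`, and the expected value "2.11" is an assumption taken from the `spark-core_2.11` artifact.

```scala
// Hypothetical helper: checks the runtime Scala version against the
// Scala binary version expected by spark-core_2.11 (assumed "2.11").
object ScalaVersionCheck {

  // Reduce a full version string such as "2.11.12" to its binary version "2.11".
  def binaryVersion(full: String): String =
    full.split('.').take(2).mkString(".")

  def main(args: Array[String]): Unit = {
    // Version of the Scala standard library on the runtime classpath.
    val full = scala.util.Properties.versionNumberString
    // Assumption: mirrors the _2.11 suffix of spark-core_2.11 in pom.xml.
    val expected = "2.11"
    val actual = binaryVersion(full)
    if (actual == expected)
      println(s"OK: Scala $full matches spark-core_$expected")
    else
      println(s"Mismatch: Scala $full is binary $actual, but spark-core_$expected needs Scala $expected.x")
  }
}
```

Run it from IDEA with the green gutter icon next to `main`; a mismatch usually means either the SDK or the artifact suffix should be changed so both agree.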

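Next, add the required dependency JARs by configuring pom.xml. The `_2.11` suffix in `spark-core_2.11` is the Scala binary version the artifact was built for, so it must match the project's Scala SDK.

pom.xml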

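```xml
<dependencies>
    <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-core_2.11</artifactId>
        <version>2.4.7</version>
    </dependency>
    <!-- Caution: log4j-core 2.8.2 is affected by Log4Shell (CVE-2021-44228).
         log4j.properties below is read by the Log4j 1.x jar that spark-core pulls in. -->
    <dependency>
        <groupId>org.apache.logging.log4j</groupId>
        <artifactId>log4j-core</artifactId>
        <version>2.8.2</version>
    </dependency>
</dependencies>
```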
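To check that the dependency resolves and Spark starts inside IDEA, a small local-mode job can be run straight from the editor. This is a minimal sketch rather than part of the original setup: the object name `SparkSmokeTest`, the sample lines, and the `local[*]` master are illustrative assumptions. It uses only core RDD APIs (`SparkConf`, `SparkContext.parallelize`, `reduceByKey`) available in Spark 2.4.x. Spark 2.4.x is documented to run on Java 8, so a Java 8 project SDK is the safest choice.

```scala
import org.apache.spark.{SparkConf, SparkContext}

// Hypothetical smoke test for the spark-core_2.11 / 2.4.7 dependency.
object SparkSmokeTest {

  // Count word occurrences across the given lines using the RDD API.
  def wordCounts(sc: SparkContext, lines: Seq[String]): Map[String, Int] =
    sc.parallelize(lines)
      .flatMap(_.split("\\s+"))
      .filter(_.nonEmpty)          // drop empty tokens from leading whitespace
      .map(word => (word, 1))
      .reduceByKey(_ + _)          // pair-RDD operation, no extra import needed
      .collect()
      .toMap

  def main(args: Array[String]): Unit = {
    // "local[*]" runs Spark inside this JVM on all available cores,
    // so no cluster is required to verify the IDEA setup.
    val conf = new SparkConf().setAppName("SparkSmokeTest").setMaster("local[*]")
    val sc = new SparkContext(conf)
    try {
      val counts = wordCounts(sc, Seq("hello spark", "hello scala"))
      counts.toSeq.sortBy(_._1).foreach { case (word, n) => println(s"$word: $n") }
    } finally {
      sc.stop() // always release the local Spark context
    }
  }
}
```

With the dependency resolved, running `main` should print `hello: 2`, `scala: 1` and `spark: 1`, possibly interleaved with Spark's own log lines depending on the logging configuration.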

log4j.properties (place it in src/main/resources so it ends up on the classpath)

```properties
log4j.rootCategory=ERROR, console
log4j.appender.console=org.apache.log4j.ConsoleAppender
log4j.appender.console.target=System.err
log4j.appender.console.layout=org.apache.log4j.PatternLayout
log4j.appender.console.layout.ConversionPattern=%d{yy/MM/dd HH:mm:ss} %p %c{1}: %m%n

# Set the default spark-shell log level to ERROR. When running the spark-shell, the
# log level for this class is used to overwrite the root logger's log level, so that
# the user can have different defaults for the shell and regular Spark apps.
log4j.logger.org.apache.spark.repl.Main=ERROR

# Settings to quiet third party logs that are too verbose
log4j.logger.org.spark_project.jetty=ERROR
log4j.logger.org.spark_project.jetty.util.component.AbstractLifeCycle=ERROR
log4j.logger.org.apache.spark.repl.SparkIMain$exprTyper=ERROR
log4j.logger.org.apache.spark.repl.SparkILoop$SparkILoopInterpreter=ERROR
log4j.logger.org.apache.parquet=ERROR
log4j.logger.parquet=ERROR

# SPARK-9183: Settings to avoid annoying messages when looking up nonexistent
# UDFs in SparkSQL with Hive support
log4j.logger.org.apache.hadoop.hive.metastore.RetryingHMSHandler=FATAL
log4j.logger.org.apache.hadoop.hive.ql.exec.FunctionRegistry=ERROR
```
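If Spark's INFO output still floods the console, one common cause is that log4j.properties is not on the runtime classpath. The sketch below is a hypothetical helper (`Log4jConfigCheck` is an illustrative name) that asks the class loader for the file via the standard `ClassLoader.getResource`; it assumes the file sits at the classpath root, for example copied there from `src/main/resources`.

```scala
import java.net.URL

// Hypothetical helper: reports whether log4j.properties is visible
// at the classpath root of the running application.
object Log4jConfigCheck {

  // Look up a resource at the classpath root; None means it was not found.
  def findOnClasspath(name: String): Option[URL] =
    Option(getClass.getClassLoader.getResource(name))

  def main(args: Array[String]): Unit =
    findOnClasspath("log4j.properties") match {
      case Some(url) => println(s"log4j.properties found at $url")
      case None      => println("log4j.properties not found; Spark will fall back to its default log4j settings")
    }
}
```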

This article is reposted from: https://blog.csdn.net/weixin_44018458/article/details/128831642

Copyright belongs to the original author 码上行舟. In case of infringement, please contact us and it will be removed.
