Quick reference

- The official Spark docker image.

- Maintained by: openEuler CloudNative SIG.

- Where to get help: openEuler CloudNative SIG, openEuler.

Spark | openEuler

Current Spark docker images are built on openEuler. This repository is free to use and exempted from per-user rate limits.

Apache Spark is a multi-language engine for executing data engineering, data science, and machine learning on single-node machines or clusters. It provides high-level APIs in Scala, Java, Python, and R, and an optimized engine that supports general computation graphs for data analysis. It also supports a rich set of higher-level tools including Spark SQL for SQL and DataFrames, pandas API on Spark for pandas workloads, MLlib for machine learning, GraphX for graph processing, and Structured Streaming for stream processing.

Learn more on the Spark website.

Supported tags and respective Dockerfile links

The tag of each Spark docker image consists of the Spark version and the version of the base image. The details are as follows:

| Tags | Currently | Architectures |
|------|-----------|---------------|
| 3.3.1-22.03-lts | spark 3.3.1 on openEuler 22.03-LTS | amd64, arm64 |
| 3.3.2-22.03-lts | spark 3.3.2 on openEuler 22.03-LTS | amd64, arm64 |
| 3.4.0-22.03-lts | spark 3.4.0 on openEuler 22.03-LTS | amd64, arm64 |
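As a minimal sketch of the naming scheme, the following Python helpers join a Spark version and an openEuler base version into a tag and a full image reference. The names `image_tag`, `image_ref`, and `SUPPORTED_TAGS` are hypothetical and chosen only for illustration; the tag values themselves are the ones listed above.

```python
# Hypothetical helpers illustrating the "<spark version>-<base image version>" scheme.
# The set below mirrors the tags listed in this README.
SUPPORTED_TAGS = {"3.3.1-22.03-lts", "3.3.2-22.03-lts", "3.4.0-22.03-lts"}


def image_tag(spark_version, base_version):
    """Join the Spark version and the (lowercased) base image version with a hyphen."""
    return f"{spark_version}-{base_version.lower()}"


def image_ref(spark_version, base_version):
    """Return the full image reference, rejecting tags that are not listed."""
    tag = image_tag(spark_version, base_version)
    if tag not in SUPPORTED_TAGS:
        raise ValueError(f"unsupported tag: {tag}")
    return f"openeuler/spark:{tag}"


print(image_ref("3.4.0", "22.03-LTS"))  # openeuler/spark:3.4.0-22.03-lts
```

The resulting string is what replaces `openeuler/spark:{Tag}` in the commands in the Usage section.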

Usage

In this usage, users can select the corresponding {Tag} based on their requirements.

- Online Documentation

  You can find the latest Spark documentation, including a programming guide, on the project web page. This README file only contains basic setup instructions.

- Pull the

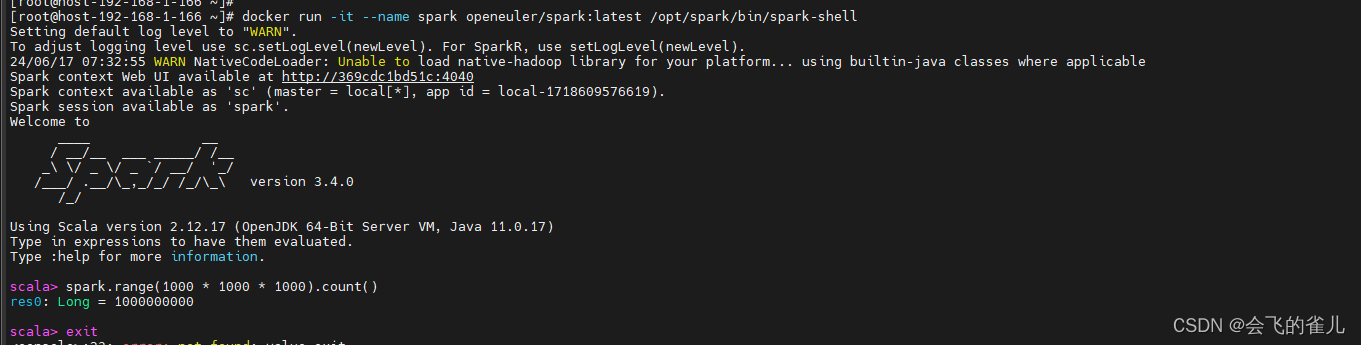

openeuler/redisimage from dockerdocker pull openeuler/spark:{Tag} - Interactive Scala Shell The easiest way to start using Spark is through the Scala shell:

docker run -it--name spark openeuler/spark:{Tag} /opt/spark/bin/spark-shellTry the following command, which should return 1,000,000,000:scala> spark.range(1000 * 1000 * 1000).count()

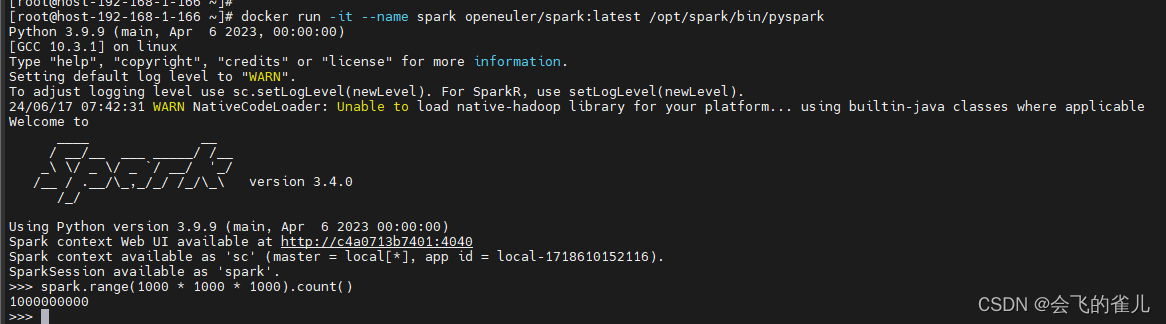

- Interactive Python Shell

  The easiest way to start using PySpark is through the Python shell:

      docker run -it --name spark openeuler/spark:{Tag} /opt/spark/bin/pyspark

  If a container named spark already exists from the Scala shell step, remove it first with docker rm spark or choose a different name.

  Then run the following command, which should also return 1,000,000,000:

      >>> spark.range(1000 * 1000 * 1000).count()

- Running Spark on Kubernetes

  See https://spark.apache.org/docs/latest/running-on-kubernetes.html.

- Configuration and environment variables

  See more in https://github.com/apache/spark-docker/blob/master/OVERVIEW.md#environment-variable.
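The two interactive-shell commands in the list above differ only in the final path. A minimal sketch, assuming Python 3.8 or later, assembles either command as an argument list. The helper name `shell_command` and the `SHELLS` mapping are hypothetical; the block only prints the command and does not invoke Docker.

```python
import shlex

# Paths to the interactive shells inside the image, as given in the usage steps.
SHELLS = {
    "scala": "/opt/spark/bin/spark-shell",
    "python": "/opt/spark/bin/pyspark",
}


def shell_command(tag, shell="scala", name="spark"):
    """Build the `docker run` argument list for the chosen interactive shell."""
    return [
        "docker", "run", "-it",
        "--name", name,
        f"openeuler/spark:{tag}",
        SHELLS[shell],
    ]


# Print the command for the Python shell instead of running it.
print(shlex.join(shell_command("3.4.0-22.03-lts", "python")))
# docker run -it --name spark openeuler/spark:3.4.0-22.03-lts /opt/spark/bin/pyspark
```

To actually start the shell, the list could be passed to `subprocess.run`, assuming Docker is installed and the image has been pulled. Passing a different `name` avoids a conflict when a container called `spark` already exists.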

Questions and answers

If you have any questions or want to use some special features, please submit an issue or a pull request on openeuler-docker-images.

Copyright belongs to the original author, 会飞的雀儿. In case of infringement, please contact us for removal.