Build environment preparation

| Software | Version |
| --- | --- |
| Hadoop | 3.3.2 |
| Hive | 3.1.2 |
| Spark | 3.3.1 |
| Flink | 1.14.5 |

1. Download and extract Hudi

cd /home/software

wget https://mirrors.tuna.tsinghua.edu.cn/apache/hudi/0.12.0/hudi-0.12.0.src.tgz --no-check-certificate

tar -xvf hudi-0.12.0.src.tgz -C /home
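Because the download above skips certificate checks with --no-check-certificate, it can be worth verifying the archive before building from it. The following is a minimal sketch, assuming GNU coreutils' `sha512sum` is available; `verify_sha512` is a hypothetical helper name, and the expected digest has to be taken from the official Apache release page (it is not given in this article).

```shell
# Hypothetical helper: succeed only if the SHA-512 digest of a file matches
# the expected hex string (assumes sha512sum from GNU coreutils is installed).
verify_sha512() {
  file="$1"
  expected="$2"
  actual=$(sha512sum "$file" | awk '{print $1}')
  [ "$actual" = "$expected" ]
}

# Usage (replace the placeholder with the digest published by Apache):
# verify_sha512 /home/software/hudi-0.12.0.src.tgz "<published sha512>" && echo "checksum OK"
```

The comparison is case-sensitive, so the published digest should be pasted in lowercase hex, which is the form `sha512sum` prints.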

2. Download and configure Maven

2.1 Download and extract Maven

cd /home/software

wget https://mirrors.tuna.tsinghua.edu.cn/apache/maven/maven-3/3.8.6/binaries/apache-maven-3.8.6-bin.tar.gz --no-check-certificate

tar -xvf apache-maven-3.8.6-bin.tar.gz -C /home

2.2 Add the environment variables to /etc/profile

vi /etc/profile

#MAVEN_HOME

export MAVEN_HOME=/home/apache-maven-3.8.6

export PATH=$PATH:$MAVEN_HOME/bin
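Changes to /etc/profile only apply to new login shells, or to the current shell after running `source /etc/profile`. As a small sanity check, the sketch below tests whether `MAVEN_HOME` points at a directory that really contains an executable `bin/mvn`; `check_maven_home` is a hypothetical helper name, not part of Maven.

```shell
# Hypothetical helper: check that a Maven home directory contains an executable bin/mvn.
check_maven_home() {
  home="$1"
  [ -n "$home" ] && [ -x "$home/bin/mvn" ]
}

if check_maven_home "${MAVEN_HOME:-}"; then
  echo "MAVEN_HOME looks valid: $MAVEN_HOME"
else
  echo "MAVEN_HOME is unset or has no executable bin/mvn; try: source /etc/profile"
fi
```

If the check passes, `mvn -v` should then print the Maven and Java versions in use.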

2.3 Switch to the Aliyun mirror

vi /home/apache-maven-3.8.6/conf/settings.xml

<!-- Add the Aliyun mirror -->

<mirror>

<id>nexus-aliyun</id>

<mirrorOf>central</mirrorOf>

<name>Nexus aliyun</name>

<url>https://maven.aliyun.com/nexus/content/groups/public</url>

</mirror>
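The `<mirror>` element only takes effect when it sits inside the `<mirrors>` section of settings.xml. The sketch below is a rough text-based check for that, under the assumption that each tag is on its own line; `mirror_is_registered` is a hypothetical helper, and it does not understand XML comments, so a mirror pasted inside a commented-out block would still be reported as present.

```shell
# Hypothetical helper: succeed if <id>ID</id> appears between <mirrors> and </mirrors>.
mirror_is_registered() {
  file="$1"
  id="$2"
  awk -v id="$id" '
    /<mirrors>/   { inside = 1 }
    /<\/mirrors>/ { inside = 0 }
    inside && index($0, "<id>" id "</id>") { found = 1 }
    END { exit (found ? 0 : 1) }
  ' "$file"
}

# Usage:
# mirror_is_registered /home/apache-maven-3.8.6/conf/settings.xml nexus-aliyun && echo "mirror found"
```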

3. Build Hudi

3.1 Modify the pom file

vim /home/hudi-0.12.0/pom.xml

Add a repository to speed up dependency downloads:

<repository>

<id>nexus-aliyun</id>

<name>nexus-aliyun</name>

<url>https://maven.aliyun.com/nexus/content/groups/public/</url>

<releases>

<enabled>true</enabled>

</releases>

<snapshots>

<enabled>false</enabled>

</snapshots>

</repository>

Change the versions of the dependent components:

<hadoop.version>3.3.2</hadoop.version>

<hive.version>3.1.2</hive.version>

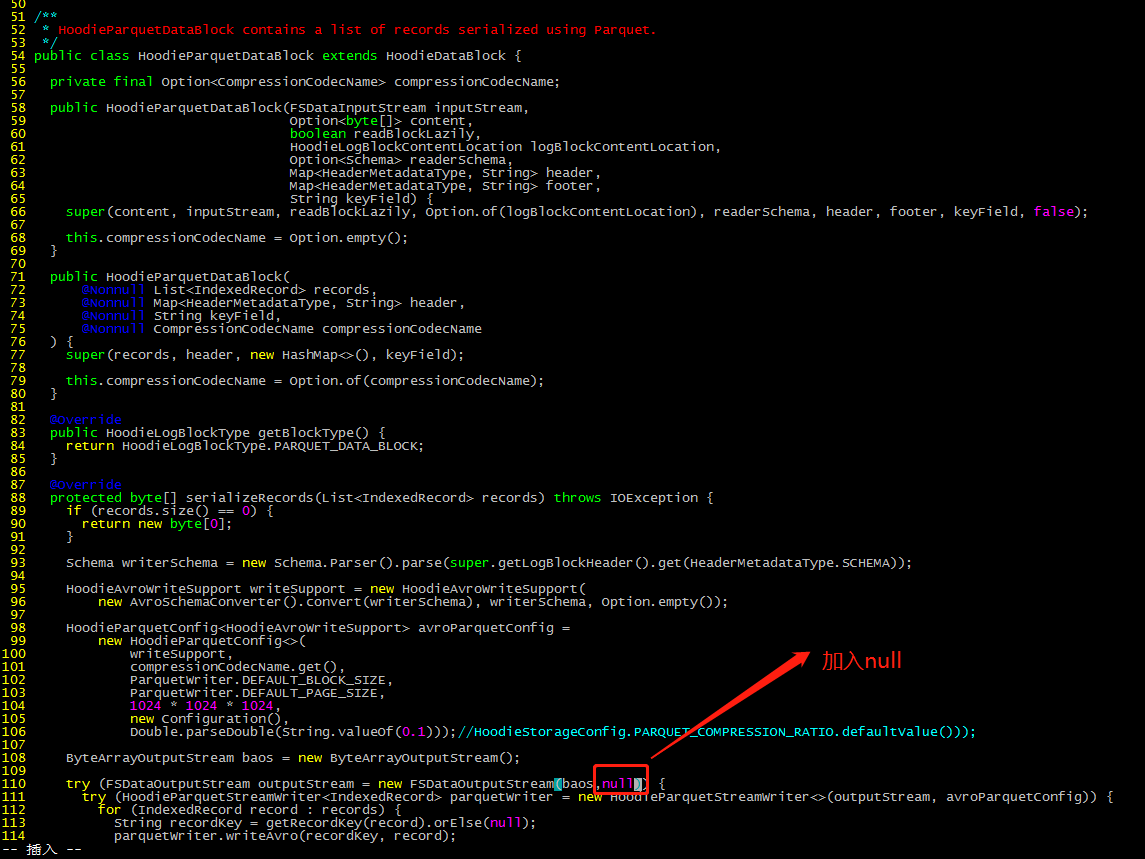
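The two version properties can also be changed without opening an editor. This is a minimal sketch, assuming each property appears in the pom as a single-line `<name>value</name>` element; `set_pom_property` is a hypothetical helper, and it rewrites every line that matches, so the result should be reviewed afterwards.

```shell
# Hypothetical helper: rewrite <name>...</name> in a pom to a new value.
# Goes through a scratch file so it does not rely on a particular sed -i dialect,
# and writes back with cat so the pom keeps its original permissions.
set_pom_property() {
  pom="$1"
  name="$2"
  value="$3"
  scratch=$(mktemp)
  sed "s|<$name>[^<]*</$name>|<$name>$value</$name>|" "$pom" > "$scratch" \
    && cat "$scratch" > "$pom"
  rm -f "$scratch"
}

# Usage:
# set_pom_property /home/hudi-0.12.0/pom.xml hadoop.version 3.3.2
# set_pom_property /home/hudi-0.12.0/pom.xml hive.version 3.1.2
```

The dot in a property name is treated as a regular-expression wildcard by `sed`, which is harmless for these two names but worth knowing before reusing the helper elsewhere.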

3.2 Modify the source code for Hadoop 3 compatibility

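Hudi depends on Hadoop 2 by default. To make it compatible with Hadoop 3, changing the version is not enough; the code in the following file also needs to be modified: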

vim /home/hudi-0.12.0/hudi-common/src/main/java/org/apache/hudi/common/table/log/block/HoodieParquetDataBlock.java

3.3 Manually install the Kafka dependencies

Download from: http://packages.confluent.io/archive/5.3/confluent-5.3.4-2.12.zip

After extracting the archive, locate the following jar files and upload them to the server hp5:

common-config-5.3.4.jar

common-utils-5.3.4.jar

kafka-avro-serializer-5.3.4.jar

kafka-schema-registry-client-5.3.4.jar

Install them into the local Maven repository:

mvn install:install-file -DgroupId=io.confluent -DartifactId=common-config -Dversion=5.3.4 -Dpackaging=jar -Dfile=./common-config-5.3.4.jar

mvn install:install-file -DgroupId=io.confluent -DartifactId=common-utils -Dversion=5.3.4 -Dpackaging=jar -Dfile=./common-utils-5.3.4.jar

mvn install:install-file -DgroupId=io.confluent -DartifactId=kafka-avro-serializer -Dversion=5.3.4 -Dpackaging=jar -Dfile=./kafka-avro-serializer-5.3.4.jar

mvn install:install-file -DgroupId=io.confluent -DartifactId=kafka-schema-registry-client -Dversion=5.3.4 -Dpackaging=jar -Dfile=./kafka-schema-registry-client-5.3.4.jar

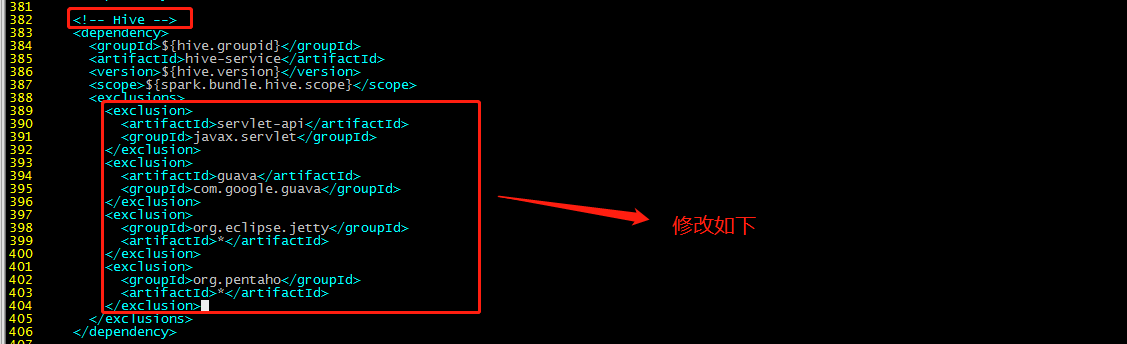
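The four install commands above differ only in the artifact name, so they can be generated in a loop. The sketch below only prints the commands, which makes it safe to run as a preview; `confluent_install_cmd` is a hypothetical helper name, and the jar files are assumed to be in the current directory, as in the commands above.

```shell
# Hypothetical helper: print the mvn install:install-file command for one Confluent jar.
confluent_install_cmd() {
  art="$1"
  ver="$2"
  printf 'mvn install:install-file -DgroupId=io.confluent -DartifactId=%s -Dversion=%s -Dpackaging=jar -Dfile=./%s-%s.jar\n' \
    "$art" "$ver" "$art" "$ver"
}

# Print one command per jar listed above.
for artifact in common-config common-utils kafka-avro-serializer kafka-schema-registry-client; do
  confluent_install_cmd "$artifact" 5.3.4
done
```

Once the printed commands look right, the output can be piped to `sh` to actually run them.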

3.4 Resolve the dependency conflict in the Spark module

With the Hive version changed to 3.1.2, Hive brings in Jetty 9.3.x, while Hudi itself uses Jetty 9.4.x, so the two conflict.

3.4.1 Modify the hudi-spark-bundle pom file

Exclude the lower-version Jetty and add the Jetty version specified by Hudi:

cp /home/hudi-0.12.0/packaging/hudi-spark-bundle/pom.xml /home/hudi-0.12.0/packaging/hudi-spark-bundle/pom.xml.bak

vim /home/hudi-0.12.0/packaging/hudi-spark-bundle/pom.xml

Hive dependency (line 382):

<exclusion>

<artifactId>guava</artifactId>

<groupId>com.google.guava</groupId>

</exclusion>

<exclusion>

<groupId>org.eclipse.jetty</groupId>

<artifactId>*</artifactId>

</exclusion>

<exclusion>

<groupId>org.pentaho</groupId>

<artifactId>*</artifactId>

</exclusion>

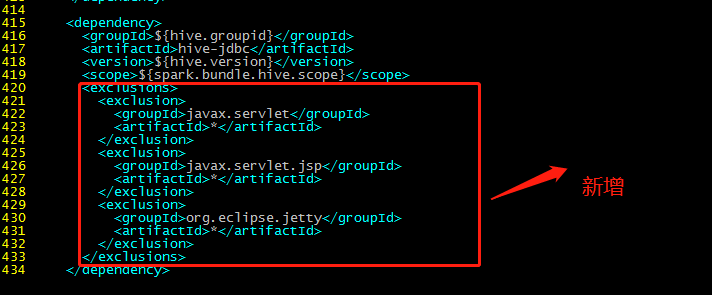

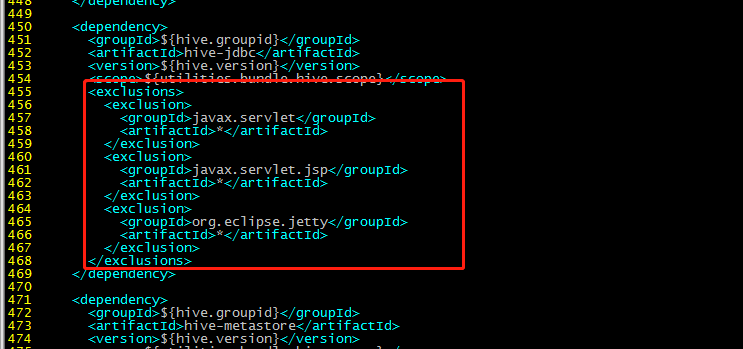

Line 415:

<exclusions>

<exclusion>

<groupId>javax.servlet</groupId>

<artifactId>*</artifactId>

</exclusion>

<exclusion>

<groupId>javax.servlet.jsp</groupId>

<artifactId>*</artifactId>

</exclusion>

<exclusion>

<groupId>org.eclipse.jetty</groupId>

<artifactId>*</artifactId>

</exclusion>

</exclusions>

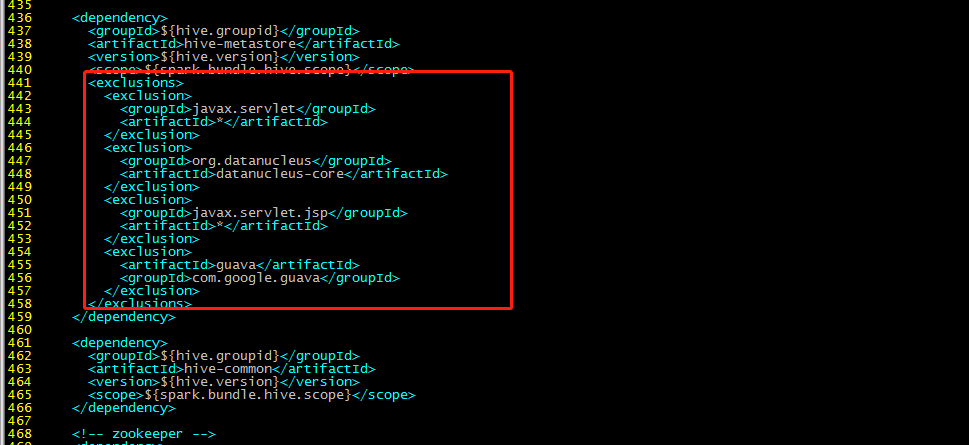

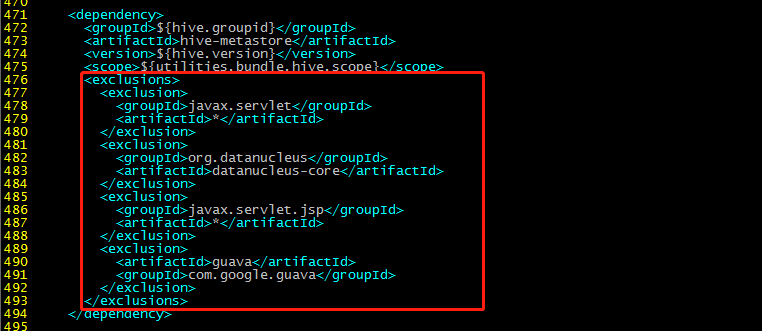

Line 436:

<exclusions>

<exclusion>

<groupId>javax.servlet</groupId>

<artifactId>*</artifactId>

</exclusion>

<exclusion>

<groupId>org.datanucleus</groupId>

<artifactId>datanucleus-core</artifactId>

</exclusion>

<exclusion>

<groupId>javax.servlet.jsp</groupId>

<artifactId>*</artifactId>

</exclusion>

<exclusion>

<artifactId>guava</artifactId>

<groupId>com.google.guava</groupId>

</exclusion>

</exclusions>

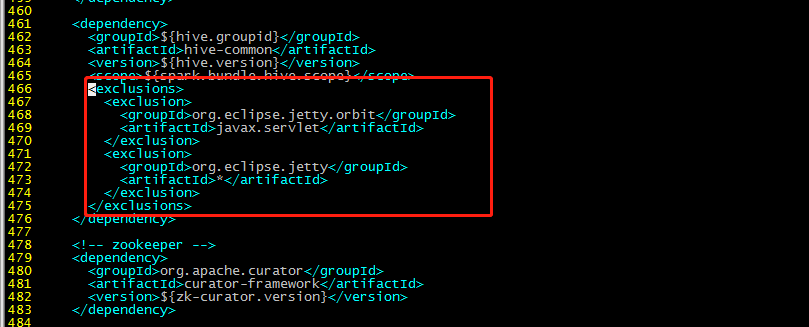

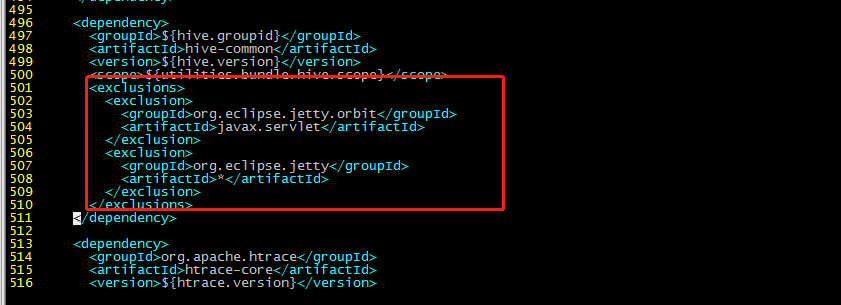

Line 461:

<exclusions>

<exclusion>

<groupId>org.eclipse.jetty.orbit</groupId>

<artifactId>javax.servlet</artifactId>

</exclusion>

<exclusion>

<groupId>org.eclipse.jetty</groupId>

<artifactId>*</artifactId>

</exclusion>

</exclusions>

Add the Jetty version configured by Hudi:

<!-- Add the Jetty version configured by Hudi -->

<dependency>

<groupId>org.eclipse.jetty</groupId>

<artifactId>jetty-server</artifactId>

<version>${jetty.version}</version>

</dependency>

<dependency>

<groupId>org.eclipse.jetty</groupId>

<artifactId>jetty-util</artifactId>

<version>${jetty.version}</version>

</dependency>

<dependency>

<groupId>org.eclipse.jetty</groupId>

<artifactId>jetty-webapp</artifactId>

<version>${jetty.version}</version>

</dependency>

<dependency>

<groupId>org.eclipse.jetty</groupId>

<artifactId>jetty-http</artifactId>

<version>${jetty.version}</version>

</dependency>
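Since the original pom was copied to pom.xml.bak before editing, the two files can be compared to confirm that the Jetty exclusions and dependencies really went in. This is a minimal sketch; `count_added_lines` is a hypothetical helper that counts lines present only in the edited file and containing a given text.

```shell
# Hypothetical helper: count lines added to the edited file (relative to the backup)
# that contain the given pattern. Prints 0 when nothing matches.
count_added_lines() {
  backup_file="$1"
  edited_file="$2"
  pattern="$3"
  diff "$backup_file" "$edited_file" | grep '^>' | grep -c -- "$pattern" || true
}

# Usage:
# cd /home/hudi-0.12.0/packaging/hudi-spark-bundle
# count_added_lines pom.xml.bak pom.xml '<groupId>org.eclipse.jetty</groupId>'
```

A result of 0 would suggest the edits were not saved, or were made in a different file.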

3.4.2 Modify the hudi-utilities-bundle pom file

Exclude the lower-version Jetty and add the Jetty version specified by Hudi:

vim /home/hudi-0.12.0/packaging/hudi-utilities-bundle/pom.xml

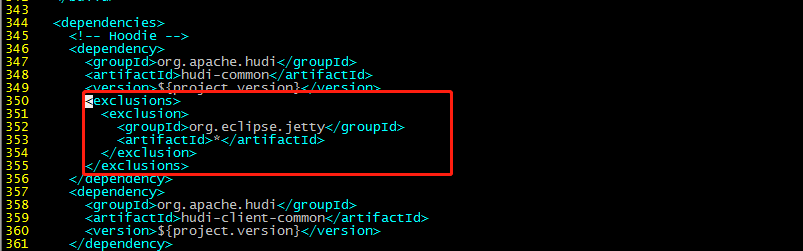

Around line 345:

<exclusions>

<exclusion>

<groupId>org.eclipse.jetty</groupId>

<artifactId>*</artifactId>

</exclusion>

</exclusions>

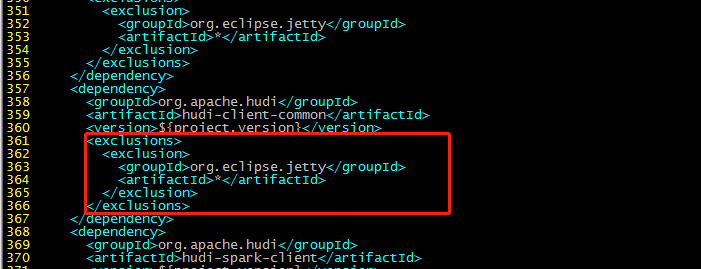

Line 357:

<exclusions>

<exclusion>

<groupId>org.eclipse.jetty</groupId>

<artifactId>*</artifactId>

</exclusion>

</exclusions>

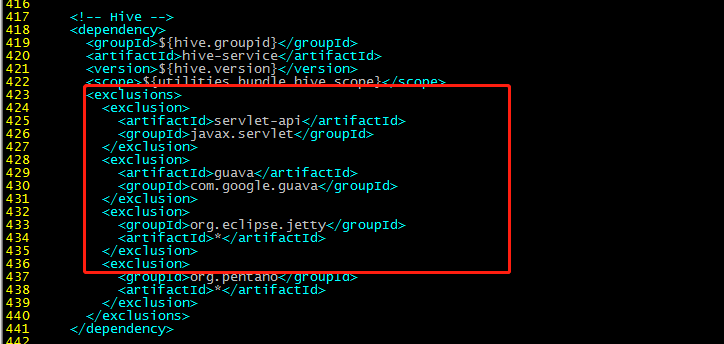

Around line 417:

<exclusions>

<exclusion>

<artifactId>servlet-api</artifactId>

<groupId>javax.servlet</groupId>

</exclusion>

<exclusion>

<artifactId>guava</artifactId>

<groupId>com.google.guava</groupId>

</exclusion>

<exclusion>

<groupId>org.eclipse.jetty</groupId>

<artifactId>*</artifactId>

</exclusion>

<exclusion>

<groupId>org.pentaho</groupId>

<artifactId>*</artifactId>

</exclusion>

</exclusions>

Line 450:

<exclusions>

<exclusion>

<groupId>javax.servlet</groupId>

<artifactId>*</artifactId>

</exclusion>

<exclusion>

<groupId>javax.servlet.jsp</groupId>

<artifactId>*</artifactId>

</exclusion>

<exclusion>

<groupId>org.eclipse.jetty</groupId>

<artifactId>*</artifactId>

</exclusion>

</exclusions>

Line 471:

<exclusions>

<exclusion>

<groupId>javax.servlet</groupId>

<artifactId>*</artifactId>

</exclusion>

<exclusion>

<groupId>org.datanucleus</groupId>

<artifactId>datanucleus-core</artifactId>

</exclusion>

<exclusion>

<groupId>javax.servlet.jsp</groupId>

<artifactId>*</artifactId>

</exclusion>

<exclusion>

<artifactId>guava</artifactId>

<groupId>com.google.guava</groupId>

</exclusion>

</exclusions>

Line 496:

<exclusions>

<exclusion>

<groupId>org.eclipse.jetty.orbit</groupId>

<artifactId>javax.servlet</artifactId>

</exclusion>

<exclusion>

<groupId>org.eclipse.jetty</groupId>

<artifactId>*</artifactId>

</exclusion>

</exclusions>

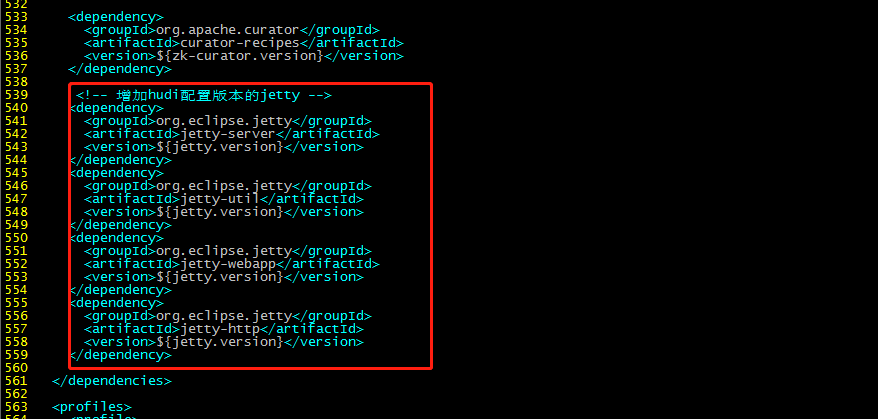

Add:

<!-- Add the Jetty version configured by Hudi -->

<dependency>

<groupId>org.eclipse.jetty</groupId>

<artifactId>jetty-server</artifactId>

<version>${jetty.version}</version>

</dependency>

<dependency>

<groupId>org.eclipse.jetty</groupId>

<artifactId>jetty-util</artifactId>

<version>${jetty.version}</version>

</dependency>

<dependency>

<groupId>org.eclipse.jetty</groupId>

<artifactId>jetty-webapp</artifactId>

<version>${jetty.version}</version>

</dependency>

<dependency>

<groupId>org.eclipse.jetty</groupId>

<artifactId>jetty-http</artifactId>

<version>${jetty.version}</version>

</dependency>

3.5 Build

cd /home/hudi-0.12.0

mvn clean package -DskipTests -Dspark3.3 -Dflink1.14 -Dscala-2.12 -Dhadoop.version=3.3.2 -Pflink-bundle-shade-hive3

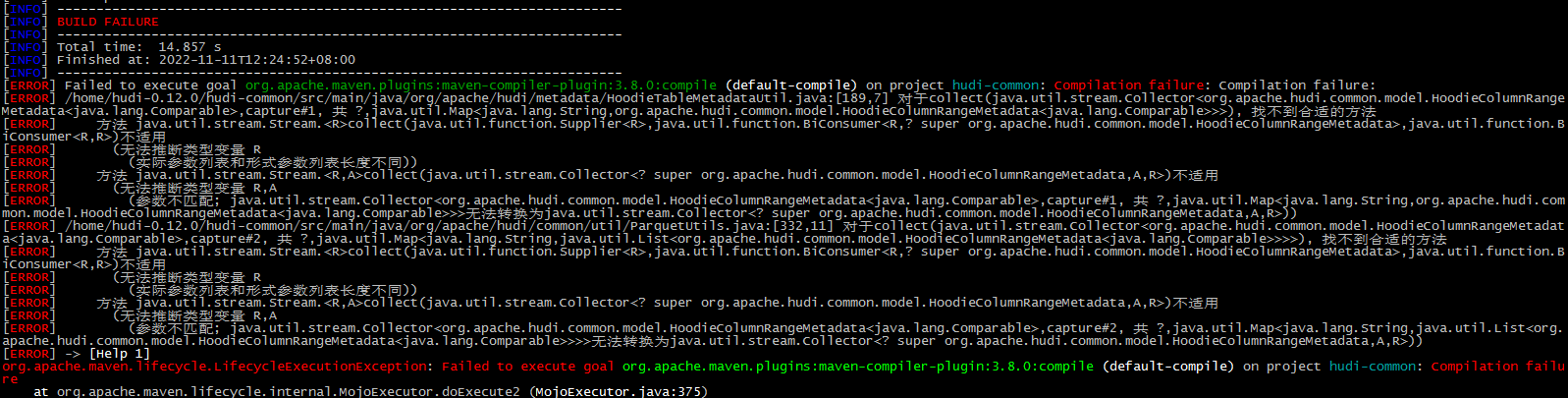

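Error:

I searched online for a long time without finding a fix for the error below. Then it occurred to me that I was building with OpenJDK 11; after switching back to JDK 8, the problem went away.

When installing Apache components, it is safer to stay on JDK 8. OpenJDK 11 has too many pitfalls.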

[ERROR] Failed to execute goal org.apache.maven.plugins:maven-compiler-plugin:3.8.0:compile (default-compile) on project hudi-common: Compilation failure: Compilation failure:

[ERROR] /home/hudi-0.12.0/hudi-common/src/main/java/org/apache/hudi/metadata/HoodieTableMetadataUtil.java:[189,7] no suitable method found for collect(java.util.stream.Collector<org.apache.hudi.common.model.HoodieColumnRangeMetadata<java.lang.Comparable>,capture#1 of ?,java.util.Map<java.lang.String,org.apache.hudi.common.model.HoodieColumnRangeMetadata<java.lang.Comparable>>>)

[ERROR] method java.util.stream.Stream.<R>collect(java.util.function.Supplier<R>,java.util.function.BiConsumer<R,? super org.apache.hudi.common.model.HoodieColumnRangeMetadata>,java.util.function.BiConsumer<R,R>) is not applicable

[ERROR] (cannot infer type-variable(s) R

[ERROR] (actual and formal argument lists differ in length))

[ERROR] method java.util.stream.Stream.<R,A>collect(java.util.stream.Collector<? super org.apache.hudi.common.model.HoodieColumnRangeMetadata,A,R>) is not applicable

[ERROR] (cannot infer type-variable(s) R,A

[ERROR] (argument mismatch; java.util.stream.Collector<org.apache.hudi.common.model.HoodieColumnRangeMetadata<java.lang.Comparable>,capture#1 of ?,java.util.Map<java.lang.String,org.apache.hudi.common.model.HoodieColumnRangeMetadata<java.lang.Comparable>>> cannot be converted to java.util.stream.Collector<? super org.apache.hudi.common.model.HoodieColumnRangeMetadata,A,R>))

[ERROR] /home/hudi-0.12.0/hudi-common/src/main/java/org/apache/hudi/common/util/ParquetUtils.java:[332,11] no suitable method found for collect(java.util.stream.Collector<org.apache.hudi.common.model.HoodieColumnRangeMetadata<java.lang.Comparable>,capture#2 of ?,java.util.Map<java.lang.String,java.util.List<org.apache.hudi.common.model.HoodieColumnRangeMetadata<java.lang.Comparable>>>>)

[ERROR] method java.util.stream.Stream.<R>collect(java.util.function.Supplier<R>,java.util.function.BiConsumer<R,? super org.apache.hudi.common.model.HoodieColumnRangeMetadata>,java.util.function.BiConsumer<R,R>) is not applicable

[ERROR] (cannot infer type-variable(s) R

[ERROR] (actual and formal argument lists differ in length))

[ERROR] method java.util.stream.Stream.<R,A>collect(java.util.stream.Collector<? super org.apache.hudi.common.model.HoodieColumnRangeMetadata,A,R>) is not applicable

[ERROR] (cannot infer type-variable(s) R,A

[ERROR] (argument mismatch; java.util.stream.Collector<org.apache.hudi.common.model.HoodieColumnRangeMetadata<java.lang.Comparable>,capture#2 of ?,java.util.Map<java.lang.String,java.util.List<org.apache.hudi.common.model.HoodieColumnRangeMetadata<java.lang.Comparable>>>> cannot be converted to java.util.stream.Collector<? super org.apache.hudi.common.model.HoodieColumnRangeMetadata,A,R>))

[ERROR] -> [Help 1]

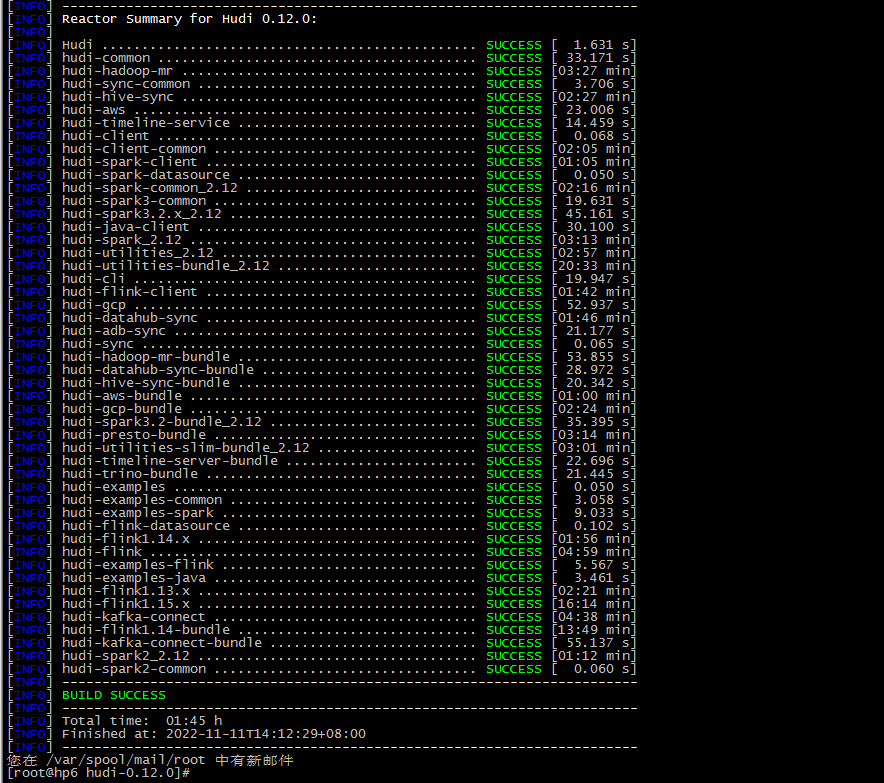
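Given that the failure above came down to the JDK version, a quick check before starting the long build can save time. The sketch below parses the version string reported by `java -version`; `java_major_version` is a hypothetical helper, and the parsing assumes the usual format with the version in double quotes on the first line.

```shell
# Hypothetical helper: extract the major Java version from a version string,
# e.g. "1.8.0_345" gives 8 and "11.0.16" gives 11.
java_major_version() {
  version="$1"
  case "$version" in
    1.*) echo "$version" | cut -d. -f2 ;;
    *)   echo "$version" | cut -d. -f1 ;;
  esac
}

# java -version writes to stderr; the version is the quoted token on the first line.
if command -v java >/dev/null 2>&1; then
  detected=$(java -version 2>&1 | head -n 1 | cut -d'"' -f2)
  major=$(java_major_version "$detected")
  if [ "$major" = "8" ]; then
    echo "JDK 8 detected"
  else
    echo "Detected Java '$detected'; the build in this article only succeeded on JDK 8"
  fi
fi
```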

Build succeeded:

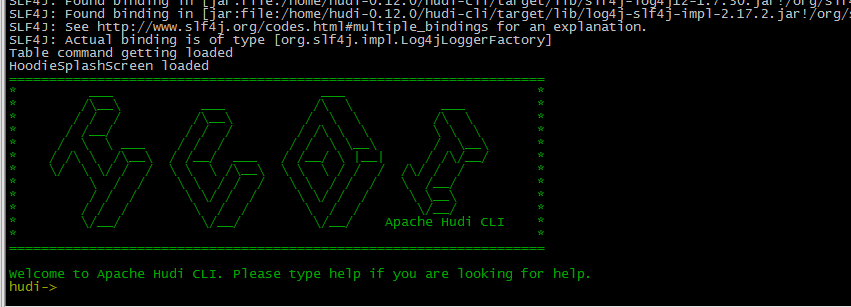

After the build succeeds, being able to start hudi-cli confirms that it worked:

cd /home/hudi-0.12.0/hudi-cli

./hudi-cli.sh

Related jar files:

After the build completes, the resulting packages are in the individual modules under the packaging directory:

For example, the Flink bundle for Hudi:
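A minimal sketch for locating the built bundles, assuming Maven's usual layout where each module writes its jar into its own target directory; `list_bundle_jars` is a hypothetical helper, and the file name pattern is an assumption rather than something taken from the Hudi build files.

```shell
# Hypothetical helper: list bundle jars found in any target/ directory below a root.
list_bundle_jars() {
  search_root="$1"
  find "$search_root" -type f -path '*/target/*' -name 'hudi-*bundle*.jar' | sort
}

# Usage:
# list_bundle_jars /home/hudi-0.12.0/packaging
```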

Copyright belongs to the original author, 只是甲. If there is any infringement, please contact us for removal.