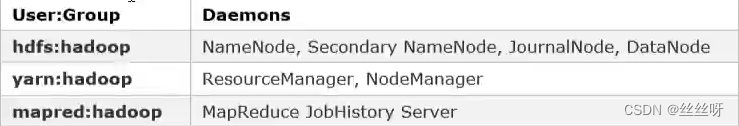

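1 Create Hadoop System Users

To enable Kerberos for Hadoop, each service needs its own system user, and each service must be started as that user. Create the following users and group on every node.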

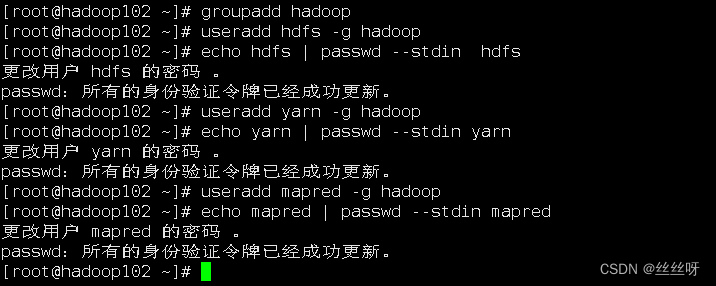

Create the hadoop group

[root@hadoop102 ~]# groupadd hadoop

Do the same on hadoop103 and hadoop104.

Create the users and set their passwords

[root@hadoop102 ~]# useradd hdfs -g hadoop

[root@hadoop102 ~]# echo hdfs | passwd --stdin hdfs

[root@hadoop102 ~]# useradd yarn -g hadoop

[root@hadoop102 ~]# echo yarn | passwd --stdin yarn

[root@hadoop102 ~]# useradd mapred -g hadoop

[root@hadoop102 ~]# echo mapred | passwd --stdin mapred

Do the same on hadoop103 and hadoop104.

2 Hadoop Kerberos Configuration
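Repeating these commands by hand on three nodes is error-prone. The following is a minimal dry-run sketch that only prints the commands for each node; the `ssh root@host` wrapper and the `gen_user_cmds` helper are assumptions for illustration and are not part of the original steps.

```shell
# Dry-run sketch: print the account-creation commands for every node.
# Host and user names come from this walkthrough; the ssh wrapper is an assumption.
HOSTS="hadoop102 hadoop103 hadoop104"
USERS="hdfs yarn mapred"

gen_user_cmds() {
    for host in $HOSTS; do
        echo "ssh root@$host groupadd hadoop"
        for user in $USERS; do
            echo "ssh root@$host useradd $user -g hadoop"
            echo "ssh root@$host 'echo $user | passwd --stdin $user'"
        done
    done
}

gen_user_cmds
```

Review the printed commands before running any of them. Setting each password equal to the user name, as this walkthrough does, is only reasonable on a lab cluster.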

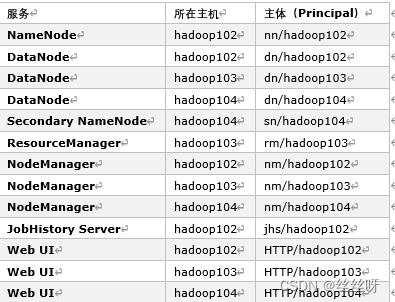

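2.1 Create Kerberos Principals for the Hadoop Services

Principals take the form ServiceName/HostName@REALM, for example dn/hadoop102@EXAMPLE.COM

**1.** The principals required by each service are as follows

**2.** Create the principals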
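To make the three parts of a principal concrete, here is a small sketch that builds one and splits it apart again. The helper names `make_principal` and `split_principal` are hypothetical and exist only for this illustration.

```shell
# Build a principal of the form ServiceName/HostName@REALM.
make_principal() {
    printf '%s/%s@%s\n' "$1" "$2" "$3"
}

# Split a principal back into its parts with POSIX parameter expansion.
split_principal() {
    principal=$1
    service=${principal%%/*}      # text before the first "/"
    rest=${principal#*/}          # text after the first "/"
    host=${rest%%@*}              # text before "@"
    realm=${rest#*@}              # text after "@"
    echo "service=$service host=$host realm=$realm"
}

make_principal dn hadoop102 EXAMPLE.COM
split_principal dn/hadoop102@EXAMPLE.COM
```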

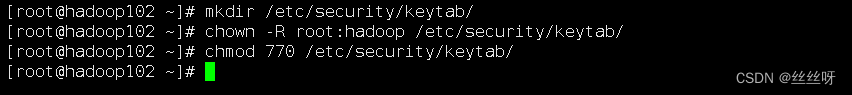

1) Create the keytab directory on all nodes

[root@hadoop102 ~]# mkdir /etc/security/keytab/

[root@hadoop102 ~]# chown -R root:hadoop /etc/security/keytab/

[root@hadoop102 ~]# chmod 770 /etc/security/keytab/

Do the same on hadoop103 and hadoop104.

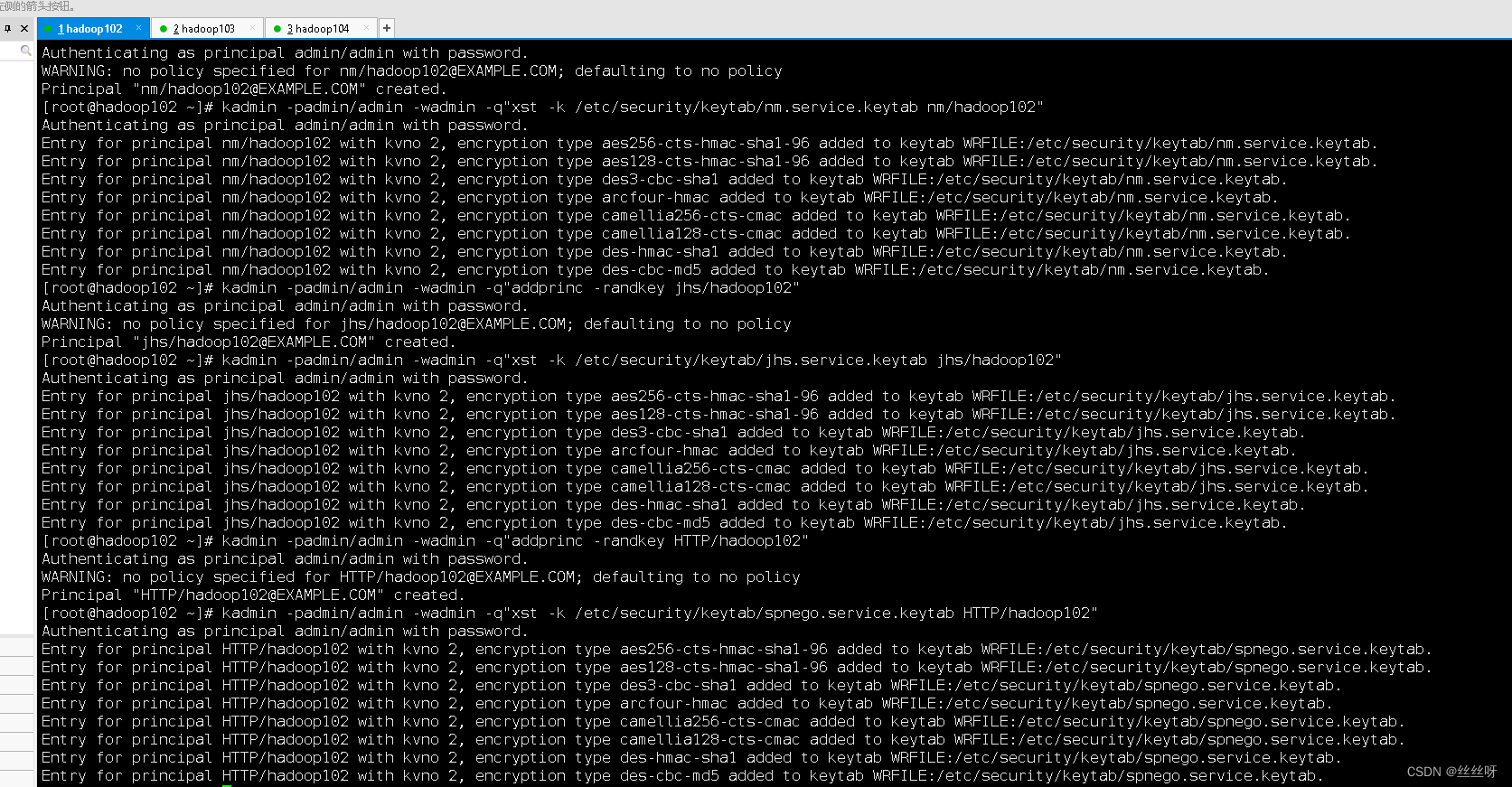

2) Run the following commands on hadoop102

NameNode (hadoop102)

[root@hadoop102 ~]# kadmin -padmin/admin -wadmin -q"addprinc -randkey nn/hadoop102"

[root@hadoop102 ~]# kadmin -padmin/admin -wadmin -q"xst -k /etc/security/keytab/nn.service.keytab nn/hadoop102"

DataNode (hadoop102)

[root@hadoop102 ~]# kadmin -padmin/admin -wadmin -q"addprinc -randkey dn/hadoop102"

[root@hadoop102 ~]# kadmin -padmin/admin -wadmin -q"xst -k /etc/security/keytab/dn.service.keytab dn/hadoop102"

NodeManager (hadoop102)

[root@hadoop102 ~]# kadmin -padmin/admin -wadmin -q"addprinc -randkey nm/hadoop102"

[root@hadoop102 ~]# kadmin -padmin/admin -wadmin -q"xst -k /etc/security/keytab/nm.service.keytab nm/hadoop102"

JobHistory Server (hadoop102)

[root@hadoop102 ~]# kadmin -padmin/admin -wadmin -q"addprinc -randkey jhs/hadoop102"

[root@hadoop102 ~]# kadmin -padmin/admin -wadmin -q"xst -k /etc/security/keytab/jhs.service.keytab jhs/hadoop102"

Web UI (hadoop102)

[root@hadoop102 ~]# kadmin -padmin/admin -wadmin -q"addprinc -randkey HTTP/hadoop102"

[root@hadoop102 ~]# kadmin -padmin/admin -wadmin -q"xst -k /etc/security/keytab/spnego.service.keytab HTTP/hadoop102"

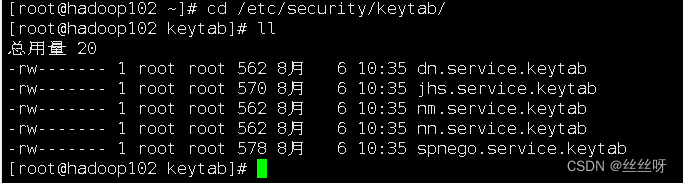

Check the result:

[root@hadoop102 ~]# cd /etc/security/keytab/

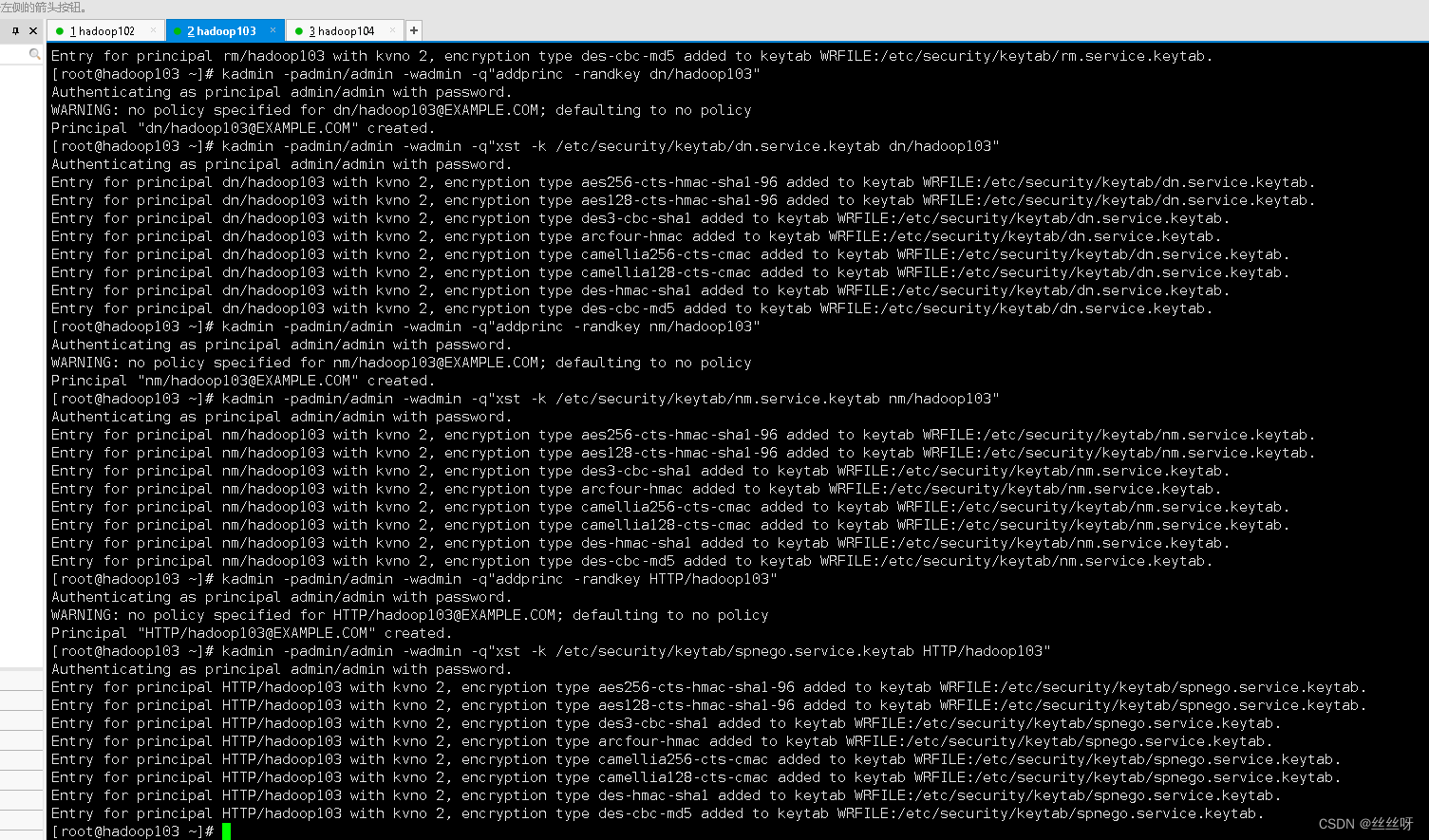

2) Run the following commands on hadoop103

ResourceManager (hadoop103)

[root@hadoop103 ~]# kadmin -padmin/admin -wadmin -q"addprinc -randkey rm/hadoop103"

[root@hadoop103 ~]# kadmin -padmin/admin -wadmin -q"xst -k /etc/security/keytab/rm.service.keytab rm/hadoop103"

DataNode (hadoop103)

[root@hadoop103 ~]# kadmin -padmin/admin -wadmin -q"addprinc -randkey dn/hadoop103"

[root@hadoop103 ~]# kadmin -padmin/admin -wadmin -q"xst -k /etc/security/keytab/dn.service.keytab dn/hadoop103"

NodeManager (hadoop103)

[root@hadoop103 ~]# kadmin -padmin/admin -wadmin -q"addprinc -randkey nm/hadoop103"

[root@hadoop103 ~]# kadmin -padmin/admin -wadmin -q"xst -k /etc/security/keytab/nm.service.keytab nm/hadoop103"

Web UI (hadoop103)

[root@hadoop103 ~]# kadmin -padmin/admin -wadmin -q"addprinc -randkey HTTP/hadoop103"

[root@hadoop103 ~]# kadmin -padmin/admin -wadmin -q"xst -k /etc/security/keytab/spnego.service.keytab HTTP/hadoop103"

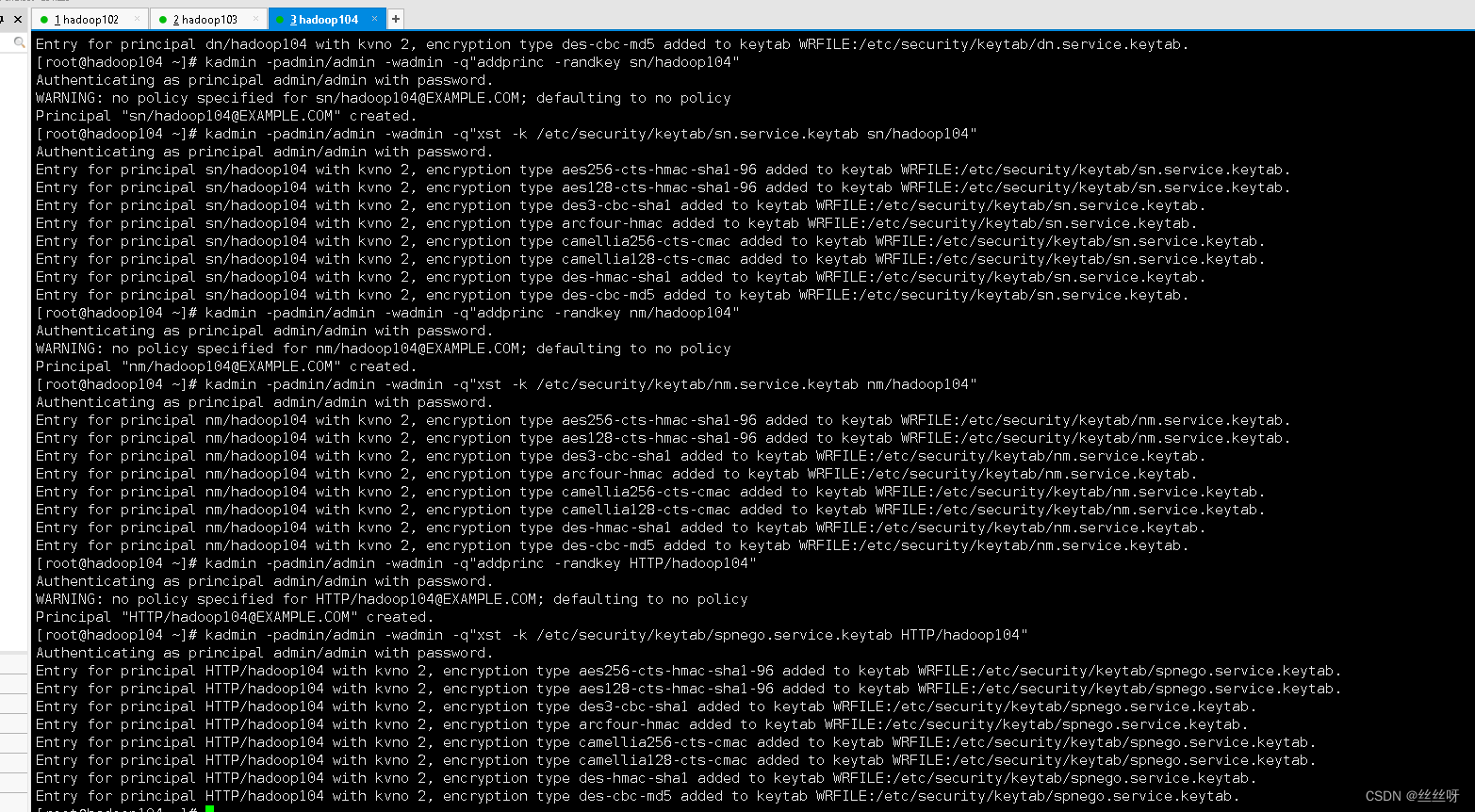

3) Run the following commands on hadoop104

DataNode (hadoop104)

[root@hadoop104 ~]# kadmin -padmin/admin -wadmin -q"addprinc -randkey dn/hadoop104"

[root@hadoop104 ~]# kadmin -padmin/admin -wadmin -q"xst -k /etc/security/keytab/dn.service.keytab dn/hadoop104"

Secondary NameNode (hadoop104)

[root@hadoop104 ~]# kadmin -padmin/admin -wadmin -q"addprinc -randkey sn/hadoop104"

[root@hadoop104 ~]# kadmin -padmin/admin -wadmin -q"xst -k /etc/security/keytab/sn.service.keytab sn/hadoop104"

NodeManager (hadoop104)

[root@hadoop104 ~]# kadmin -padmin/admin -wadmin -q"addprinc -randkey nm/hadoop104"

[root@hadoop104 ~]# kadmin -padmin/admin -wadmin -q"xst -k /etc/security/keytab/nm.service.keytab nm/hadoop104"

Web UI (hadoop104)

[root@hadoop104 ~]# kadmin -padmin/admin -wadmin -q"addprinc -randkey HTTP/hadoop104"

[root@hadoop104 ~]# kadmin -padmin/admin -wadmin -q"xst -k /etc/security/keytab/spnego.service.keytab HTTP/hadoop104"
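The per-host commands above follow one pattern: an `addprinc -randkey` followed by an `xst -k` for each service on that host, with the HTTP principal exported to `spnego.service.keytab`. The dry-run sketch below prints those commands from a small host-to-service table taken from this walkthrough; the helper names are hypothetical and nothing is executed.

```shell
# Dry-run sketch: print the kadmin commands for each host's services.
# The service layout mirrors this walkthrough; nothing is executed.
KEYTAB_DIR=/etc/security/keytab

services_for() {
    case "$1" in
        hadoop102) echo "nn dn nm jhs HTTP" ;;
        hadoop103) echo "rm dn nm HTTP" ;;
        hadoop104) echo "dn sn nm HTTP" ;;
    esac
}

# The HTTP principal goes to spnego.service.keytab; others use <service>.service.keytab.
keytab_for() {
    if [ "$1" = "HTTP" ]; then
        echo "$KEYTAB_DIR/spnego.service.keytab"
    else
        echo "$KEYTAB_DIR/$1.service.keytab"
    fi
}

gen_kadmin_cmds() {
    host=$1
    for svc in $(services_for "$host"); do
        echo "kadmin -padmin/admin -wadmin -q\"addprinc -randkey $svc/$host\""
        echo "kadmin -padmin/admin -wadmin -q\"xst -k $(keytab_for "$svc") $svc/$host\""
    done
}

for h in hadoop102 hadoop103 hadoop104; do
    echo "# run on $h"
    gen_kadmin_cmds "$h"
done
```

Note that passing the admin password with `-w` leaves it visible in shell history and process listings, so this form is best kept to test environments.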

*4. Change the owner and permissions of the keytab files on all nodes*

[root@hadoop102 ~]# chown -R root:hadoop /etc/security/keytab/

[root@hadoop102 ~]# chmod 660 /etc/security/keytab/*

Do the same on hadoop103 and hadoop104.

2.2 Modify the Hadoop Configuration Files
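After changing permissions on three nodes, it helps to confirm that every keytab really ended up with mode 660. The following sketch, which assumes GNU `stat` and uses a hypothetical `check_keytab_modes` helper, reports any `.keytab` file with a different mode. It is demonstrated on a throwaway directory rather than on `/etc/security/keytab`.

```shell
# Report keytab files whose mode is not 660.
# Uses GNU stat (-c '%a'), as shipped with coreutils on Linux.
check_keytab_modes() {
    dir=$1
    rc=0
    for f in "$dir"/*.keytab; do
        if [ ! -e "$f" ]; then continue; fi
        mode=$(stat -c '%a' "$f")
        if [ "$mode" != "660" ]; then
            echo "unexpected mode $mode: $f"
            rc=1
        fi
    done
    return $rc
}

# Demonstration on a scratch directory with one correct and one incorrect file.
demo=$(mktemp -d)
touch "$demo/nn.service.keytab" "$demo/dn.service.keytab"
chmod 660 "$demo/nn.service.keytab"
chmod 644 "$demo/dn.service.keytab"
check_keytab_modes "$demo" || echo "fix with: chmod 660 $demo/*.keytab"
```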

1.core-site.xml

[root@hadoop102 ~]# vim /opt/module/hadoop-3.1.3/etc/hadoop/core-site.xml

Add the following

<!-- Mechanism for mapping Kerberos principals to system users -->

<property>

<name>hadoop.security.auth_to_local.mechanism</name>

<value>MIT</value>

</property>

<!-- Rules for mapping Kerberos principals to system users -->

<property>

<name>hadoop.security.auth_to_local</name>

<value>

RULE:[2:$1/$2@$0]([ndj]n\/.*@EXAMPLE\.COM)s/.*/hdfs/

RULE:[2:$1/$2@$0]([rn]m\/.*@EXAMPLE\.COM)s/.*/yarn/

RULE:[2:$1/$2@$0](jhs\/.*@EXAMPLE\.COM)s/.*/mapred/

DEFAULT

</value>

</property>

<!-- Enable Kerberos authentication for the Hadoop cluster -->

<property>

<name>hadoop.security.authentication</name>

<value>kerberos</value>

</property>

<!-- Enable authorization management for the Hadoop cluster -->

<property>

<name>hadoop.security.authorization</name>

<value>true</value>

</property>

<!-- Set RPC communication within the Hadoop cluster to authentication-only mode -->

<property>

<name>hadoop.rpc.protection</name>

<value>authentication</value>

</property>
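The three `RULE` entries in `hadoop.security.auth_to_local` each pair a regular expression with a fixed replacement. The sketch below imitates their intent with anchored `grep -E` patterns so you can see which principal lands on which system user. It is an approximation for illustration, not Hadoop's own rule parser, and `map_principal` is a hypothetical helper.

```shell
# Emulate the three RULE entries with anchored extended regexes.
# This mimics the intent of the rules only; it is not Hadoop's own rule parser.
map_principal() {
    p=$1
    if   echo "$p" | grep -Eqx '[ndj]n/.*@EXAMPLE\.COM'; then echo hdfs
    elif echo "$p" | grep -Eqx '[rn]m/.*@EXAMPLE\.COM';  then echo yarn
    elif echo "$p" | grep -Eqx 'jhs/.*@EXAMPLE\.COM';    then echo mapred
    else echo "no RULE matched (falls through to DEFAULT)"
    fi
}

for p in nn/hadoop102@EXAMPLE.COM dn/hadoop103@EXAMPLE.COM \
         rm/hadoop103@EXAMPLE.COM nm/hadoop104@EXAMPLE.COM \
         jhs/hadoop102@EXAMPLE.COM sn/hadoop104@EXAMPLE.COM; do
    echo "$p -> $(map_principal "$p")"
done
```

In this emulation the `sn/...` principal matches none of the three patterns, because `[ndj]n` covers only `nn`, `dn` and `jn`. On a configured cluster, `hadoop kerbname <principal>` shows the mapping Hadoop itself computes, which is the authoritative check.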

2.hdfs-site.xml

[root@hadoop102 ~]# vim /opt/module/hadoop-3.1.3/etc/hadoop/hdfs-site.xml

Add the following

<!-- Accessing DataNode blocks requires Kerberos authentication -->

<property>

<name>dfs.block.access.token.enable</name>

<value>true</value>

</property>

<!-- Kerberos principal of the NameNode service; _HOST is resolved automatically to the host name of the node running the service -->

<property>

<name>dfs.namenode.kerberos.principal</name>

<value>nn/_HOST@EXAMPLE.COM</value>

</property>

<!-- Path to the keytab file of the NameNode service -->

<property>

<name>dfs.namenode.keytab.file</name>

<value>/etc/security/keytab/nn.service.keytab</value>

</property>

<!-- Path to the keytab file of the Secondary NameNode service -->

<property>

<name>dfs.secondary.namenode.keytab.file</name>

<value>/etc/security/keytab/sn.service.keytab</value>

</property>

<!-- Kerberos principal of the Secondary NameNode service -->

<property>

<name>dfs.secondary.namenode.kerberos.principal</name>

<value>sn/_HOST@EXAMPLE.COM</value>

</property>

<!-- Kerberos principal of the NameNode web service -->

<property>

<name>dfs.namenode.kerberos.internal.spnego.principal</name>

<value>HTTP/_HOST@EXAMPLE.COM</value>

</property>

<!-- Kerberos principal of the WebHDFS REST service -->

<property>

<name>dfs.web.authentication.kerberos.principal</name>

<value>HTTP/_HOST@EXAMPLE.COM</value>

</property>

<!-- Kerberos principal of the Secondary NameNode web UI service -->

<property>

<name>dfs.secondary.namenode.kerberos.internal.spnego.principal</name>

<value>HTTP/_HOST@EXAMPLE.COM</value>

</property>

<!-- Path to the keytab file for the Hadoop web UI -->

<property>

<name>dfs.web.authentication.kerberos.keytab</name>

<value>/etc/security/keytab/spnego.service.keytab</value>

</property>

<!-- Kerberos principal of the DataNode service -->

<property>

<name>dfs.datanode.kerberos.principal</name>

<value>dn/_HOST@EXAMPLE.COM</value>

</property>

<!-- Path to the keytab file of the DataNode service -->

<property>

<name>dfs.datanode.keytab.file</name>

<value>/etc/security/keytab/dn.service.keytab</value>

</property>

<!-- Serve the NameNode web UI over HTTPS -->

<property>

<name>dfs.http.policy</name>

<value>HTTPS_ONLY</value>

</property>

<!-- Set the DataNode data transfer protection policy to authentication-only mode -->

<property>

<name>dfs.data.transfer.protection</name>

<value>authentication</value>

</property>
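Because the same configuration files are distributed to every node, the principals use the `_HOST` placeholder instead of a fixed host name. The sketch below only illustrates the effect of that substitution; Hadoop performs it internally when a service starts, and `resolve_principal` is a hypothetical helper.

```shell
# Replace the _HOST placeholder with a concrete host name.
# Hadoop performs this substitution itself; this only shows the result.
resolve_principal() {
    pattern=$1
    host=$2
    echo "$pattern" | sed "s/_HOST/$host/"
}

resolve_principal 'dn/_HOST@EXAMPLE.COM' hadoop102
resolve_principal 'dn/_HOST@EXAMPLE.COM' hadoop103
```

This is why one `hdfs-site.xml` can serve all three DataNodes even though each has its own `dn/<host>` principal and keytab.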

3.yarn-site.xml

[root@hadoop102 ~]# vim /opt/module/hadoop-3.1.3/etc/hadoop/yarn-site.xml

Add the following

<!-- Kerberos principal of the ResourceManager service -->

<property>

<name>yarn.resourcemanager.principal</name>

<value>rm/_HOST@EXAMPLE.COM</value>

</property>

<!-- Keytab file of the ResourceManager service -->

<property>

<name>yarn.resourcemanager.keytab</name>

<value>/etc/security/keytab/rm.service.keytab</value>

</property>

<!-- Kerberos principal of the NodeManager service -->

<property>

<name>yarn.nodemanager.principal</name>

<value>nm/_HOST@EXAMPLE.COM</value>

</property>

<!-- Keytab file of the NodeManager service -->

<property>

<name>yarn.nodemanager.keytab</name>

<value>/etc/security/keytab/nm.service.keytab</value>

</property>

4.mapred-site.xml

[root@hadoop102 ~]# vim /opt/module/hadoop-3.1.3/etc/hadoop/mapred-site.xml

Add the following

<!-- Keytab file of the JobHistory Server -->

<property>

<name>mapreduce.jobhistory.keytab</name>

<value>/etc/security/keytab/jhs.service.keytab</value>

</property>

<!-- Kerberos principal of the JobHistory Server -->

<property>

<name>mapreduce.jobhistory.principal</name>

<value>jhs/_HOST@EXAMPLE.COM</value>

</property>

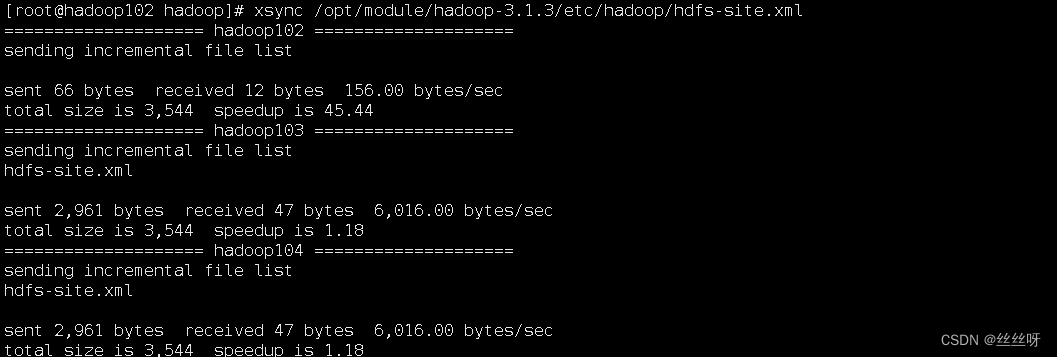

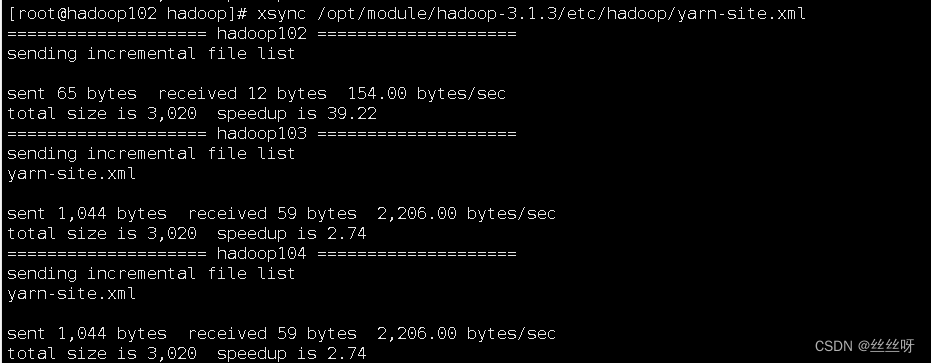

5. Distribute the modified configuration files

[root@hadoop102 ~]# xsync /opt/module/hadoop-3.1.3/etc/hadoop/core-site.xml

[root@hadoop102 ~]# xsync /opt/module/hadoop-3.1.3/etc/hadoop/hdfs-site.xml

[root@hadoop102 ~]# xsync /opt/module/hadoop-3.1.3/etc/hadoop/yarn-site.xml

[root@hadoop102 ~]# xsync /opt/module/hadoop-3.1.3/etc/hadoop/mapred-site.xml
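`xsync` is not a standard tool; it appears to be a site-specific helper that copies a path to the same location on the other cluster nodes. If you do not have it, the dry-run sketch below prints an equivalent set of `rsync` commands. The `rsync -av` invocation and the `gen_sync_cmds` helper are assumptions, not the author's script.

```shell
# Dry-run sketch of what an xsync-style helper does: copy a path to the same
# location on the other nodes. The rsync invocation is an assumption.
PEERS="hadoop103 hadoop104"

gen_sync_cmds() {
    for file in "$@"; do
        dir=$(dirname "$file")
        for host in $PEERS; do
            echo "rsync -av $file $host:$dir/"
        done
    done
}

CONF=/opt/module/hadoop-3.1.3/etc/hadoop
gen_sync_cmds "$CONF/core-site.xml" "$CONF/hdfs-site.xml" \
              "$CONF/yarn-site.xml" "$CONF/mapred-site.xml"
```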

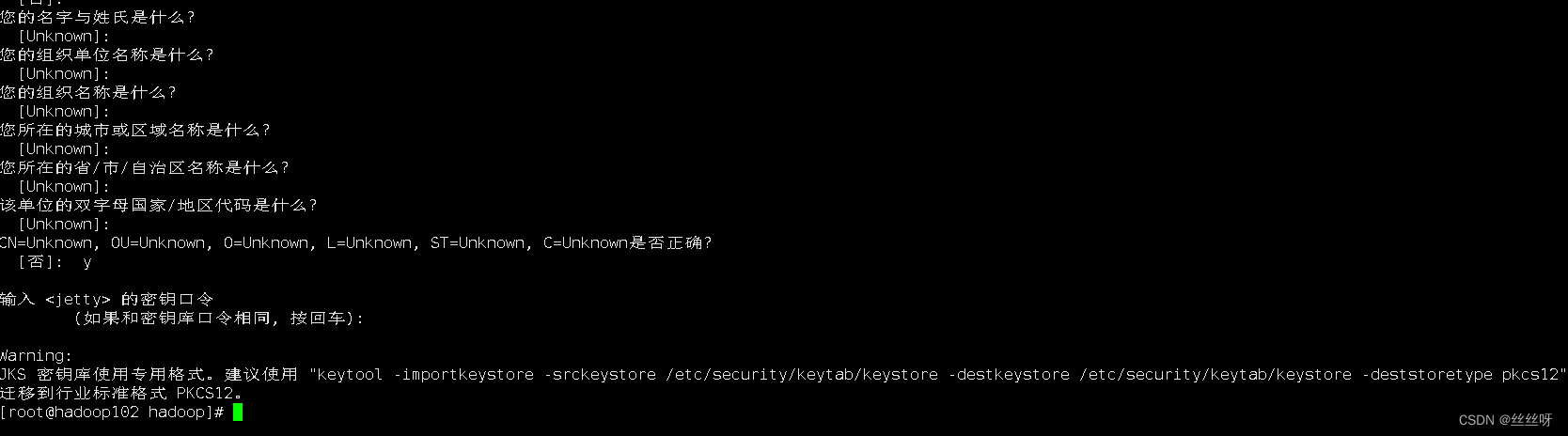

2.3 Configure HDFS to Use HTTPS

**1.** Generate a key pair

1) Generate the keystore, entering its password and the certificate details when prompted

[root@hadoop102 ~]# keytool -keystore /etc/security/keytab/keystore -alias jetty -genkey -keyalg RSA
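The command above prompts interactively for the keystore password and the certificate's distinguished name. For scripted setups, `keytool` also accepts these as options. The sketch below only prints such a command; the `-dname` value is a placeholder chosen for illustration, and the password shown is the one this walkthrough later places in ssl-server.xml.

```shell
# Print a non-interactive form of the key generation command.
# The -dname value is a placeholder; the password matches ssl-server.xml below.
KEYSTORE=/etc/security/keytab/keystore
STOREPASS=123456

gen_keytool_cmd() {
    echo "keytool -genkeypair -keystore $KEYSTORE -alias jetty -keyalg RSA" \
         "-storepass $STOREPASS -keypass $STOREPASS -dname \"CN=$1\""
}

gen_keytool_cmd hadoop102
```

Whatever passwords you choose here must match the `ssl.server.keystore.password` and `ssl.server.keystore.keypassword` values configured later, and a stronger password than `123456` is advisable outside a lab.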

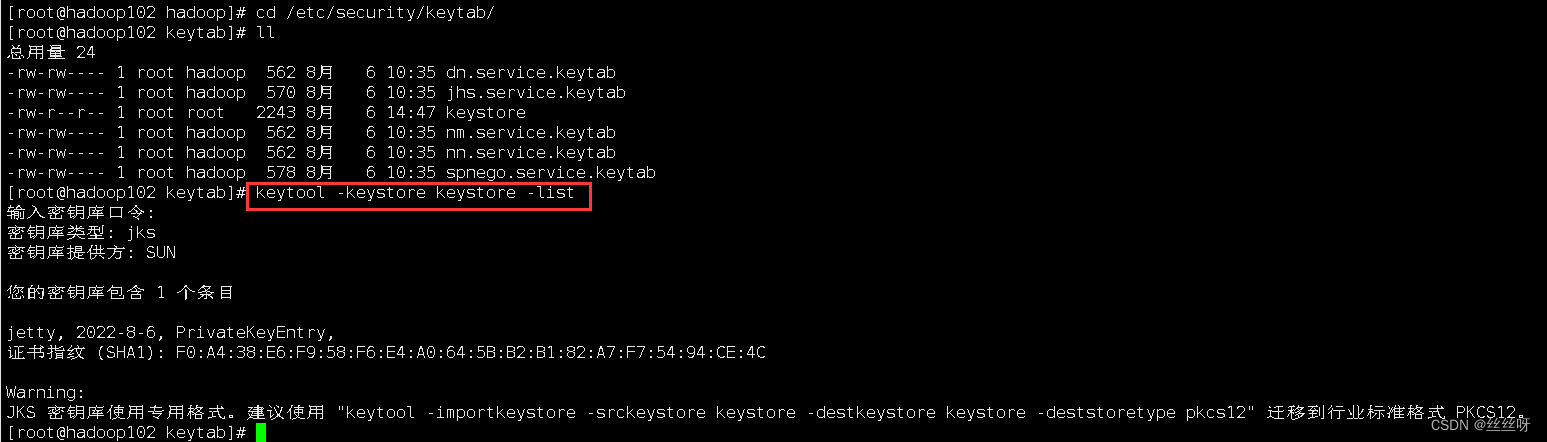

View the contents of the keystore

[root@hadoop102 keytab]# keytool -keystore keystore -list

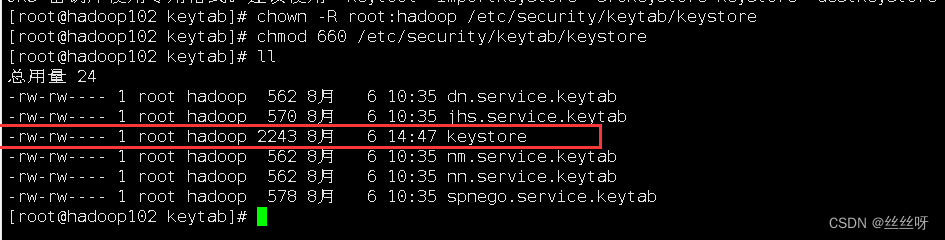

2) Change the owner and permissions of the keystore file

[root@hadoop102 ~]# chown -R root:hadoop /etc/security/keytab/keystore

[root@hadoop102 ~]# chmod 660 /etc/security/keytab/keystore

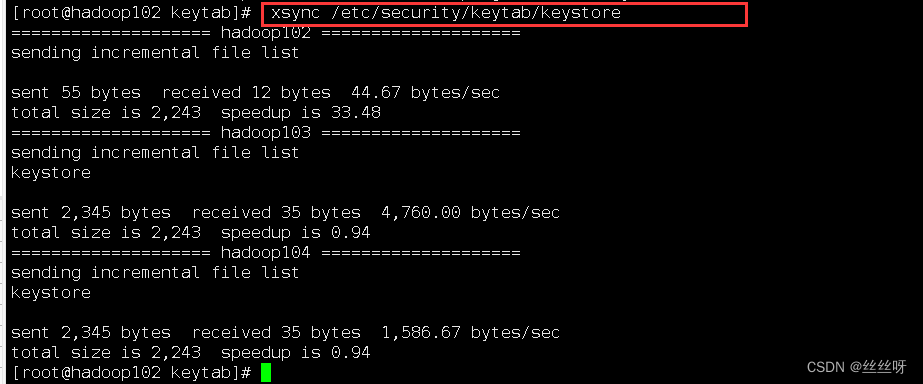

3) Distribute the keystore to the same path on every node in the cluster

[root@hadoop102 ~]# xsync /etc/security/keytab/keystore

4) Rename the Hadoop configuration file ssl-server.xml.example to ssl-server.xml, then edit it

[root@hadoop102 hadoop]# mv ssl-server.xml.example ssl-server.xml

[root@hadoop102 hadoop]# vim ssl-server.xml

Modify the following parameters

<!-- Path to the SSL keystore -->

<property>

<name>ssl.server.keystore.location</name>

<value>/etc/security/keytab/keystore</value>

</property>

<!-- Password of the SSL keystore -->

<property>

<name>ssl.server.keystore.password</name>

<value>123456</value>

</property>

<!-- Path to the SSL truststore -->

<property>

<name>ssl.server.truststore.location</name>

<value>/etc/security/keytab/keystore</value>

</property>

<!-- Password of the key inside the SSL keystore -->

<property>

<name>ssl.server.keystore.keypassword</name>

<value>123456</value>

</property>

<!-- Password of the SSL truststore -->

<property>

<name>ssl.server.truststore.password</name>

<value>123456</value>

</property>

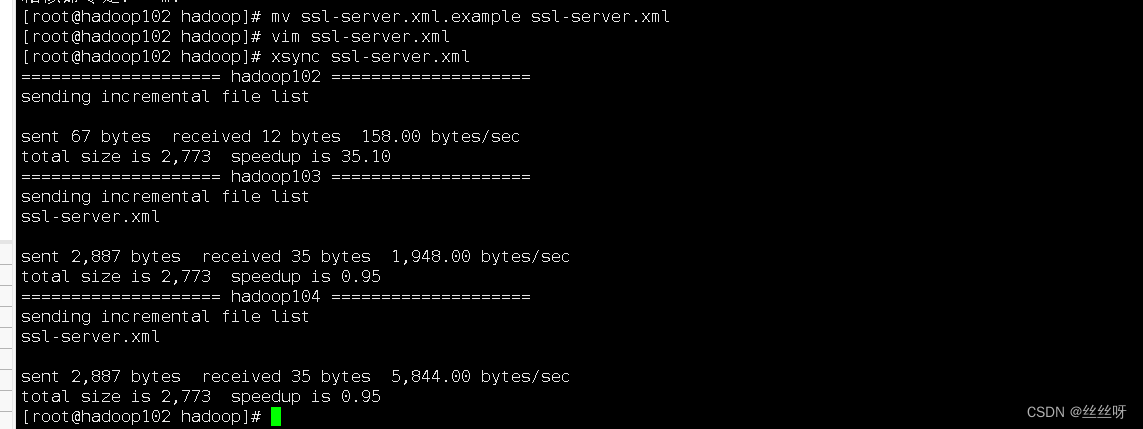

Distribute the file

[root@hadoop102 hadoop]# xsync ssl-server.xml

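2.4 Configure YARN to Use LinuxContainerExecutor

1) On all nodes, change the owner and permissions of container-executor. Its owner must be root, its group must be hadoop (the group of the yarn user that starts the NodeManager), and its permissions must be 6050. Its default path is $HADOOP_HOME/bin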

[root@hadoop102 hadoop]# cd /opt/module/hadoop-3.1.3/bin/

[root@hadoop102 bin]# ll

[root@hadoop102 ~]# chown root:hadoop /opt/module/hadoop-3.1.3/bin/container-executor

[root@hadoop102 ~]# chmod 6050 /opt/module/hadoop-3.1.3/bin/container-executor

Do the same on hadoop103 and hadoop104.
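The mode 6050 is easier to reason about once it is broken into its bits: the leading 6 sets both setuid and setgid, the owner and others get no permissions, and the group gets read and execute. The sketch below decodes any four-digit octal mode this way; `describe_mode` and `rwx` are hypothetical helpers written for this illustration.

```shell
# Turn a 3-bit permission value (0-7) into an rwx string.
rwx() {
    bits=$1
    r=-; w=-; x=-
    if [ $((bits & 4)) -ne 0 ]; then r=r; fi
    if [ $((bits & 2)) -ne 0 ]; then w=w; fi
    if [ $((bits & 1)) -ne 0 ]; then x=x; fi
    echo "$r$w$x"
}

# Decode a four-digit octal mode such as 6050 into special bits and rwx triplets.
describe_mode() {
    mode=$((0$1))                     # leading 0 makes the shell read it as octal
    setuid=no; setgid=no
    if [ $((mode & 04000)) -ne 0 ]; then setuid=yes; fi
    if [ $((mode & 02000)) -ne 0 ]; then setgid=yes; fi
    echo "setuid=$setuid setgid=$setgid" \
         "owner=$(rwx $(( (mode >> 6) & 7 )))" \
         "group=$(rwx $(( (mode >> 3) & 7 )))" \
         "other=$(rwx $(( mode & 7 )))"
}

describe_mode 6050
```

In practice this means only members of the hadoop group can run the binary, and it runs with root's effective user ID, which is what lets it launch containers as the submitting user. `ls -l` shows this mode as `---Sr-s---`.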

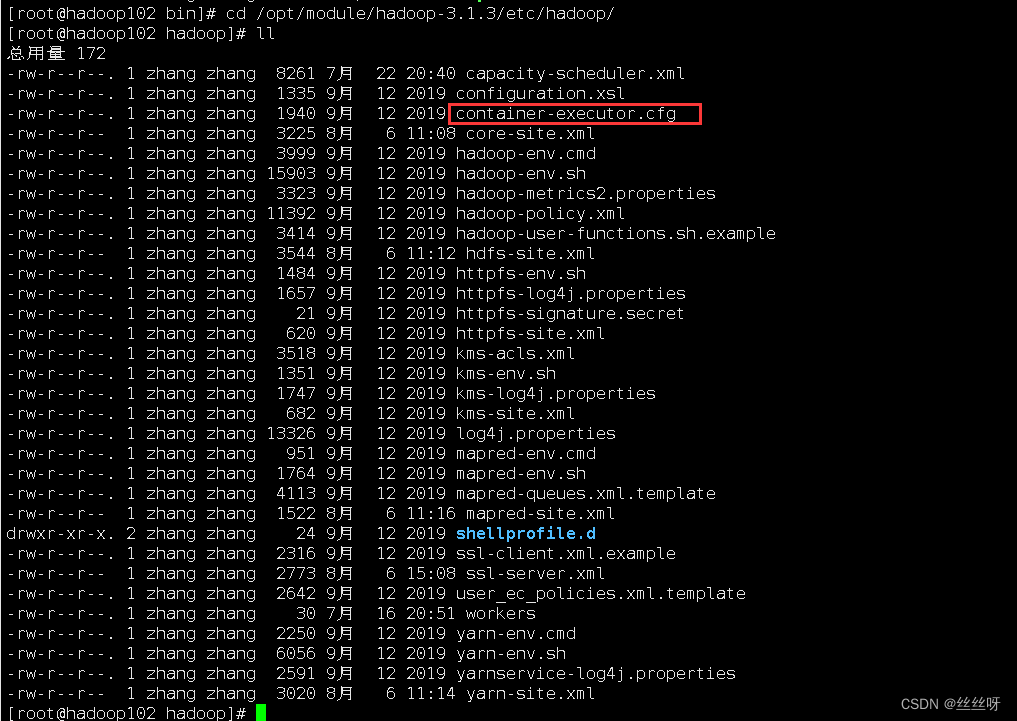

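2) On all nodes, change the owner and permissions of container-executor.cfg. The file and all of its parent directories must be owned by root, with group hadoop (the group of the yarn user that starts the NodeManager), and the file's permissions must be 400. Its default path is $HADOOP_HOME/etc/hadoop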

[root@hadoop102 bin]# cd /opt/module/hadoop-3.1.3/etc/hadoop/

[root@hadoop102 ~]# chown root:hadoop /opt/module/hadoop-3.1.3/etc/hadoop/container-executor.cfg

[root@hadoop102 ~]# chown root:hadoop /opt/module/hadoop-3.1.3/etc/hadoop

[root@hadoop102 ~]# chown root:hadoop /opt/module/hadoop-3.1.3/etc

[root@hadoop102 ~]# chown root:hadoop /opt/module/hadoop-3.1.3

[root@hadoop102 ~]# chown root:hadoop /opt/module

[root@hadoop102 ~]# chmod 400 /opt/module/hadoop-3.1.3/etc/hadoop/container-executor.cfg

Do the same on hadoop103 and hadoop104.

Check the result on hadoop102

3) Edit $HADOOP_HOME/etc/hadoop/container-executor.cfg
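Since the ownership requirement covers every parent directory, it is useful to list the whole chain rather than rely on memory. The sketch below prints a path and each of its ancestors up to `/`; `ancestors` is a hypothetical helper.

```shell
# List a path followed by each of its parent directories up to /.
ancestors() {
    p=$1
    while :; do
        echo "$p"
        if [ "$p" = "/" ]; then break; fi
        p=$(dirname "$p")
    done
}

ancestors /opt/module/hadoop-3.1.3/etc/hadoop/container-executor.cfg
```

On a real node, piping the output into GNU stat, for example `ancestors <path> | xargs stat -c '%U:%G %a %n'`, shows the owner, group and mode of every level at once.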

[root@hadoop102 opt]# cd /opt/module/hadoop-3.1.3/etc/hadoop/

[root@hadoop102 hadoop]# vim container-executor.cfg
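The file's contents are not reproduced in this walkthrough. As a rough guide, a configuration along the following lines is typical for this kind of setup. Every value here is an assumption to adapt, not the author's file, and the sketch writes to a scratch file rather than to the real location.

```shell
# Write an example container-executor.cfg to a scratch file for review.
# The values are assumptions for this three-user lab setup, not the author's file.
cfg=$(mktemp)
cat > "$cfg" <<'EOF'
yarn.nodemanager.linux-container-executor.group=hadoop
banned.users=hdfs,yarn,mapred
min.user.id=1000
allowed.system.users=
feature.tc.enabled=false
EOF

# The group must equal yarn.nodemanager.linux-container-executor.group in yarn-site.xml.
grep '^yarn.nodemanager.linux-container-executor.group=' "$cfg"
```

`banned.users` lists accounts that may not run containers, and `min.user.id` rejects users below that UID, which keeps system accounts from submitting jobs.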

4) Edit $HADOOP_HOME/etc/hadoop/yarn-site.xml

[root@hadoop102 ~]# vim $HADOOP_HOME/etc/hadoop/yarn-site.xml

Add the following

<!-- Have the NodeManager use LinuxContainerExecutor to manage containers -->

<property>

<name>yarn.nodemanager.container-executor.class</name>

<value>org.apache.hadoop.yarn.server.nodemanager.LinuxContainerExecutor</value>

</property>

<!-- Group of the user that starts the NodeManager -->

<property>

<name>yarn.nodemanager.linux-container-executor.group</name>

<value>hadoop</value>

</property>

<!-- Path to the LinuxContainerExecutor executable -->

<property>

<name>yarn.nodemanager.linux-container-executor.path</name>

<value>/opt/module/hadoop-3.1.3/bin/container-executor</value>

</property>

5) Distribute container-executor.cfg and yarn-site.xml

[root@hadoop102 ~]# xsync $HADOOP_HOME/etc/hadoop/container-executor.cfg

[root@hadoop102 ~]# xsync $HADOOP_HOME/etc/hadoop/yarn-site.xml

Copyright belongs to the original author, 丝丝呀. If there is any infringement, please contact us for removal.