This article uses Huawei Cloud as an example to test-deploy a Kubernetes cluster (v1.26.1) from binaries at low cost.

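Tip: when you use a public cloud for the first time, look out for new-user discounts and spend them on cloud servers; a server that you keep long-term can also be used for domain-name filing (ICP registration).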

1 Basic environment configuration

1.1 High-availability architecture

High availability for kube-apiserver is implemented with a virtual IP + keepalived + HAProxy.

[Architecture diagram: k8s-master-lb (virtual IP); Haproxy01, Haproxy02; k8s-master01, k8s-master02, k8s-master03]

1.2 Cluster plan

The Kubernetes nodes run on Huawei Cloud spot-priced instances; k8s-master-lb is implemented with a Huawei Cloud virtual IP (VIP).

| Host | IP address | Description | Components |
|---|---|---|---|
| master-lb | 10.0.10.10 | vip | |
| master01 | 10.0.10.11 | master01 | etcd, kube-apiserver, controller manager, scheduler; kube-proxy, kubelet; keepalived+haproxy; kubectl |
| master02 | 10.0.10.12 | master02 | etcd, kube-apiserver, controller manager, scheduler; kube-proxy, kubelet; keepalived+haproxy |
| master03 | 10.0.10.13 | master03 | etcd, kube-apiserver, controller manager, scheduler; kube-proxy, kubelet |
| node01 | 10.0.10.101 | node01 | kube-proxy, kubelet |

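1.3 Network ranges and versions

For version compatibility between Kubernetes, Docker, and related components, refer to the dependency list.

| Item | Value |
|---|---|
| OS version | CentOS 7.9 |
| Kernel | kernel-ml-4.19.12 |
| Kubernetes version | 1.26.1 |
| Docker version | 20.10.* |
| etcd version | 3.5.6 |
| Host subnet | 10.0.10.0/24 |
| Pod subnet | 172.16.0.0/12 |
| Service subnet | 192.168.0.0/16 |
| coredns | 1.9.4 |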
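The host, Pod, and Service ranges should not overlap, otherwise routing inside the cluster becomes ambiguous. The following is a minimal POSIX shell sketch that checks the three ranges from the plan; the function names `ip_to_int`, `cidr_range`, and `cidrs_overlap` are illustrative helpers and not part of any Kubernetes tooling.

```shell
# Convert a dotted-quad IPv4 address to an integer (runs in a subshell so IFS is not leaked).
ip_to_int() (
    IFS=.
    set -- $1
    echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
)

# Print "first last" (as integers) for a CIDR such as 10.0.10.0/24.
cidr_range() (
    base=$(ip_to_int "${1%/*}")
    prefix=${1#*/}
    mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
    echo "$(( base & mask )) $(( (base & mask) | (~mask & 0xFFFFFFFF) ))"
)

# Return 0 if the two CIDRs share at least one address, 1 otherwise.
cidrs_overlap() {
    set -- $(cidr_range "$1") $(cidr_range "$2")
    [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

# Report one pair of ranges.
report() {
    if cidrs_overlap "$1" "$2"; then
        echo "OVERLAP: $1 and $2"
    else
        echo "ok: $1 and $2"
    fi
}

report 10.0.10.0/24 172.16.0.0/12    # host vs Pod
report 10.0.10.0/24 192.168.0.0/16   # host vs Service
report 172.16.0.0/12 192.168.0.0/16  # Pod vs Service
```

If you change any of the ranges, rerun the three `report` calls before continuing.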

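2 Create the VPC and servers

2.1 Create the VPC and subnet

In the Huawei Cloud VPC console, click Create "Virtual Private Cloud" and create the VPC and its subnets in one step, using the ranges from the plan in the previous section.

2.2 Create the virtual IP

In the VPC console, select "Subnets" in the navigation bar. Click the subnet name, open the "IP Address Management" tab, and click "Assign Virtual IP Address". The IP address must match the cluster plan.

2.3 Buy the ECS servers

Reminder: when you use a public cloud for the first time, look out for new-user discounts and spend them on cloud servers; a server that you keep long-term can also be used for domain-name filing.

In the ECS console, buy Elastic Cloud Servers in spot-pricing mode. They are used only to test the cluster deployment: start them when needed, and while they are stopped you pay only for the disks.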

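- Quantity: 4 servers (3 masters + 1 worker node)

- Region: CN North-Beijing4

- Billing mode: spot pricing, about ¥0.13/h while running and ¥0.02/h while stopped

- Flavor: 1C4G or 2C4G, whichever type is cheapest

- Disk: High I/O

- Image: CentOS 7.9

- Elastic public IP: static BGP, billed by traffic, bandwidth 10, released with the instance

- Final purchase configuration (4 servers):

- After creation, change each server's IP address and hostname to match the cluster plan above

- Set the hostname

# Set the hostname on each server (use that server's own name)

hostnamectl set-hostname master01

# Reboot

reboot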

3 Server configuration

3.1 Configure hosts

cat >> /etc/hosts <<EOF

10.0.10.10 master-lb

10.0.10.11 master01

10.0.10.12 master02

10.0.10.13 master03

10.0.10.101 node01

EOF
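Appending with `cat >>` adds the same entries again every time the step is rerun. A minimal sketch of an idempotent alternative is shown below; `add_host_entry` and `sync_cluster_hosts` are hypothetical helper names, and the entries are the ones from the cluster plan.

```shell
# Append "IP NAME" to a hosts file only if NAME is not already mapped.
# $1 = hosts file, $2 = IP address, $3 = host name
add_host_entry() {
    if ! grep -Eq "^[^#]*[[:space:]]$3([[:space:]]|\$)" "$1" 2>/dev/null; then
        printf '%s %s\n' "$2" "$3" >> "$1"
    fi
}

# Add every node from the cluster plan to the given hosts file.
# $1 = hosts file
sync_cluster_hosts() {
    while read -r ip name; do
        add_host_entry "$1" "$ip" "$name"
    done <<'EOF'
10.0.10.10 master-lb
10.0.10.11 master01
10.0.10.12 master02
10.0.10.13 master03
10.0.10.101 node01
EOF
}

# Usage on a real node (as root): sync_cluster_hosts /etc/hosts
```

Running `sync_cluster_hosts` a second time leaves the file unchanged, so it is safe to include in a setup script that may be rerun.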

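3.2 Configure the Aliyun mirror

- Docker repository

# Install the required package: yum-utils provides yum-config-manager, which manages yum repositories

yum install -y yum-utils

# Add the Aliyun repository

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

3.3 Install required tools

yum install wget jq psmisc vim net-tools telnet yum-utils device-mapper-persistent-data lvm2 git -y

3.4 Passwordless SSH login (run on master01 only)

# Return to the home directory

cd ~

# Generate the key pair (press Enter at every prompt)

ssh-keygen -t rsa

# Copy the public key to every machine

for i in master01 master02 master03 node01; do ssh-copy-id -i ~/.ssh/id_rsa.pub $i; done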

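4 Runtime installation (containerd)

For Kubernetes versions below 1.24, either Docker or containerd can be used as the runtime. From 1.24 onward, dockershim has been removed from the kubelet, so use containerd. This article uses containerd.

Official Kubernetes container runtimes: installation guide

Kubernetes and Docker versions: dependency list

Docker offline packages: download page

- Install docker-ce 20.10 (in the docker-ce repository the containerd package is named containerd.io):

yum install docker-ce-20.10.* docker-ce-cli-20.10.* containerd.io -y

- Docker does not need to be started; only containerd has to be configured and started

- Configure the kernel modules containerd needs (a prerequisite for installation and configuration)

cat <<EOF | sudo tee /etc/modules-load.d/containerd.conf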

overlay

br_netfilter

EOF

- Load the modules

modprobe -- overlay &&

modprobe -- br_netfilter

- Configure the kernel parameters containerd needs

cat <<EOF | sudo tee /etc/sysctl.d/99-kubernetes-cri.conf

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

EOF

- Apply the kernel parameters

sysctl --system
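Before moving on, it can help to confirm that the sysctl file really contains the three settings. The sketch below only inspects the file written above (it does not query the running kernel); `check_cri_sysctl_conf` is a hypothetical helper name.

```shell
# Return 0 only if every required key is set to 1 in the given sysctl file.
# $1 = path to the sysctl configuration file
check_cri_sysctl_conf() {
    for key in net.bridge.bridge-nf-call-iptables \
               net.ipv4.ip_forward \
               net.bridge.bridge-nf-call-ip6tables; do
        # Split each line on "=" (with optional blanks) and compare key and value exactly.
        if ! awk -F'[ \t]*=[ \t]*' -v k="$key" \
                '$1 == k && $2 == 1 { ok = 1 } END { exit !ok }' "$1"; then
            echo "missing or not set to 1: $key" >&2
            return 1
        fi
    done
}

# Usage on a real node: check_cri_sysctl_conf /etc/sysctl.d/99-kubernetes-cri.conf
```

To check the live values as well, `sysctl net.ipv4.ip_forward` and `lsmod | grep br_netfilter` can be run on the node.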

- Generate containerd's configuration file

mkdir -p /etc/containerd

containerd config default | tee /etc/containerd/config.toml

- Edit the configuration file (use the systemd cgroup driver)

vim /etc/containerd/config.toml

---------------------------------------

# Switch containerd's cgroup driver to systemd: search for SystemdCgroup and set it to true

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc]

...

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]

SystemdCgroup = true

---------------------------------------

# Also change sandbox_image (the pause image) to an address that matches your version:

registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6
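The two manual edits can also be applied non-interactively, which is convenient when repeating the step on four machines. This is a sketch that assumes GNU sed (as shipped with CentOS) and a freshly generated default config in which `SystemdCgroup = false` and a `sandbox_image = ...` line are present; `patch_containerd_config` is a hypothetical helper name.

```shell
# Set SystemdCgroup to true and replace the sandbox (pause) image in a containerd config.
# $1 = path to config.toml, $2 = pause image reference
patch_containerd_config() {
    sed -i \
        -e 's/^\([[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*\)false/\1true/' \
        -e "s#^\([[:space:]]*sandbox_image[[:space:]]*=[[:space:]]*\).*#\1\"$2\"#" \
        "$1"
}

# Usage on a real node:
# patch_containerd_config /etc/containerd/config.toml \
#     registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6
```

Afterwards, `grep -E 'SystemdCgroup|sandbox_image' /etc/containerd/config.toml` shows whether both lines were changed.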

- Start containerd and enable it at boot

systemctl daemon-reload && systemctl enable --now containerd

- Point the crictl client at the runtime socket

cat > /etc/crictl.yaml <<EOF

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

timeout: 10

debug: false

EOF

- Test

systemctl status containerd.service

# List images

ctr images ls

# List containers

ctr container ls

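5 kube-apiserver high availability

To avoid the cost of a public-cloud load balancer, this article implements kube-apiserver high availability with a virtual IP + keepalived + HAProxy.

The high-availability architecture is shown in the figure below. To save machines, HAProxy is co-located on the master nodes.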

[Architecture diagram: k8s-master-lb (virtual IP); Haproxy01, Haproxy02; k8s-master01, k8s-master02, k8s-master03]

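- The virtual IP was created earlier; now bind the backend machines (master01, master02, master03) to it in the virtual IP console

- Install HAProxy and keepalived on the master nodes with yum

yum install keepalived haproxy -y

- Configure HAProxy (the configuration is identical on every machine)

Delete the default configuration and use the one below. Adjust the listening port 16443 and the backend addresses masterIP:6443 as needed.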

vim /etc/haproxy/haproxy.cfg

global

maxconn 2000

ulimit-n 16384

log 127.0.0.1 local0 err

stats timeout 30s

defaults

log global

mode http

option httplog

timeout connect 5000

timeout client 50000

timeout server 50000

timeout http-request 15s

timeout http-keep-alive 15s

frontend monitor-in

bind *:33305

mode http

option httplog

monitor-uri /monitor

frontend k8s-master

bind 0.0.0.0:16443

bind 127.0.0.1:16443

mode tcp

option tcplog

tcp-request inspect-delay 5s

default_backend k8s-master

backend k8s-master

mode tcp

option tcplog

option tcp-check

balance roundrobin

default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

server master01 10.0.10.11:6443 check

server master02 10.0.10.12:6443 check

server master03 10.0.10.13:6443 check

- Configure keepalived

Delete the default configuration and use the one below. Note that state, unicast_src_ip, priority, and unicast_peer differ on each node.

vim /etc/keepalived/keepalived.conf

global_defs {

router_id LVS_DEVEL

script_user root

enable_script_security

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 5

weight -5

fall 2

rise 1

}

vrrp_instance VI_1 {

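state MASTER # MASTER on the primary, BACKUP on the others

interface eth0

unicast_src_ip 10.0.10.11 # this node's own IP

virtual_router_id 52 # unique per VRRP group, identical on all nodes of the group

priority 100 # 100 on the primary, lower than 100 on the backups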

advert_int 1

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

unicast_peer {

10.0.10.12 # peer nodes

10.0.10.13

}

virtual_ipaddress {

10.0.10.10 # high-availability virtual IP

}

track_script {

chk_apiserver

}

}
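Because four fields differ per node, it is easy to copy the file and forget one of them. The sketch below derives the per-node values from a single list of masters. The backup priorities (90 and 80) are an assumption made for illustration; the article only requires them to be lower than 100. `keepalived_params` is a hypothetical helper name.

```shell
# name=IP pairs for the masters, primary first (taken from the cluster plan).
MASTERS="master01=10.0.10.11 master02=10.0.10.12 master03=10.0.10.13"

# Print the node-specific keepalived values for one master.
# $1 = node name
keepalived_params() {
    node=$1 idx=0 self_idx= self_ip= peers=
    for entry in $MASTERS; do
        name=${entry%%=*}
        ip=${entry#*=}
        if [ "$name" = "$node" ]; then
            self_idx=$idx
            self_ip=$ip
        else
            # Every other master is a unicast peer.
            peers="${peers:+$peers }$ip"
        fi
        idx=$((idx + 1))
    done
    [ -n "$self_ip" ] || { echo "unknown node: $node" >&2; return 1; }
    if [ "$self_idx" -eq 0 ]; then state=MASTER; else state=BACKUP; fi
    echo "state=$state"
    echo "priority=$((100 - self_idx * 10))"   # assumed scheme: 100, 90, 80
    echo "unicast_src_ip=$self_ip"
    echo "unicast_peer=$peers"
}

keepalived_params master02
```

The printed values can then be copied into the corresponding fields of /etc/keepalived/keepalived.conf on that node.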

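- Configure the keepalived health check

vim /etc/keepalived/check_apiserver.sh

#!/bin/bash
# Check up to three times whether haproxy is running; stop keepalived if it is not,
# so that the virtual IP moves to another node.
err=0
for k in $(seq 1 3); do
    check_code=$(pgrep haproxy)
    if [[ $check_code == "" ]]; then
        err=$(expr $err + 1)
        sleep 1
        continue
    else
        err=0
        break
    fi
done
if [[ $err != "0" ]]; then
    echo "systemctl stop keepalived"
    /usr/bin/systemctl stop keepalived
    exit 1
else
    exit 0
fi

# Make the script executable

chmod +x /etc/keepalived/check_apiserver.sh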

- Start haproxy and keepalived

systemctl daemon-reload &&

systemctl enable --now haproxy &&

systemctl enable --now keepalived

# Check the status

systemctl status haproxy

systemctl status keepalived

# systemctl restart keepalived && systemctl status keepalived

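- Test that keepalived works

ping 10.0.10.10 -c 4

# Run this test after kube-apiserver has been installed

telnet 10.0.10.10 16443

---

ip addr show

# Check on master01 that the virtual IP is bound
# After master01 is shut down, the virtual IP is taken over by master02
# After master01 boots again, the virtual IP moves back to master01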

6 Download the Kubernetes and etcd packages

6.1 kubernetes

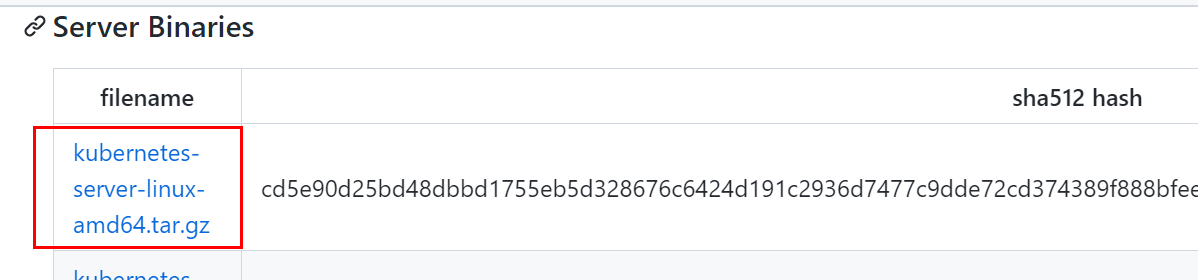

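- Download the Kubernetes package, version v1.26.1. Official release notes:

https://github.com/kubernetes/kubernetes/tree/master/CHANGELOG

Note: the Kubernetes package has also been uploaded to the author's document library as "k8s-1.26.1 binary deployment files (with plugins)".

# The server tarball contains all components

wget https://dl.k8s.io/v1.26.1/kubernetes-server-linux-amd64.tar.gz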

6.2 etcd

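etcd is an open-source project started by the CoreOS team in June 2013. Its goal is a highly available distributed key-value store. etcd uses the raft protocol as its consensus algorithm and is written in Go.

- Download the etcd package, version v3.5.6. Official release page:

https://github.com/etcd-io/etcd/releases

Note: the etcd package has also been uploaded to the author's document library as "k8s-1.26.1 binary deployment files (with plugins)".

wget https://github.com/etcd-io/etcd/releases/download/v3.5.6/etcd-v3.5.6-linux-amd64.tar.gz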

6.3 Extract and distribute

- Extract both archives

# Extract the Kubernetes binaries
tar -xf kubernetes-server-linux-amd64.tar.gz --strip-components=3 -C /usr/local/bin kubernetes/server/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy}

# Extract the etcd binaries
tar -zxvf etcd-v3.5.6-linux-amd64.tar.gz --strip-components=1 -C /usr/local/bin etcd-v3.5.6-linux-amd64/etcd{,ctl}

- Send the components to the other nodes

MasterNodes='master02 master03'
WorkNodes='node01'
for NODE in $MasterNodes; do
    echo $NODE
    scp /usr/local/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy} $NODE:/usr/local/bin/
    scp /usr/local/bin/etcd* $NODE:/usr/local/bin/
done
for NODE in $WorkNodes; do
    scp /usr/local/bin/kube{let,-proxy} $NODE:/usr/local/bin/
done

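7 Create certificates

The Kubernetes components use TLS certificates to encrypt their communication.

7.1 Download the certificate tool CFSSL

CFSSL is an open-source PKI/TLS toolkit from CloudFlare, written in Go. It includes a command-line tool and an HTTP API service for signing, verifying, and bundling TLS certificates. Working with etcd, Kubernetes, and similar components involves generating and using many certificates.

Note: the CFSSL binaries have also been uploaded to the author's document library as "k8s-1.26.1 binary deployment files (with plugins)".

wget "https://github.com/cloudflare/cfssl/releases/download/v1.6.1/cfssl_1.6.1_linux_amd64" -O /usr/local/bin/cfssl

wget "https://github.com/cloudflare/cfssl/releases/download/v1.6.1/cfssljson_1.6.1_linux_amd64" -O /usr/local/bin/cfssljson

# If the binaries were downloaded offline, move them into place instead

mv cfssl cfssljson /usr/local/bin/

chmod +x /usr/local/bin/cfssl*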

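7.2 etcd certificates

- Create the etcd certificate directory

mkdir -p /etc/etcd/ssl

cd /etc/etcd/ssl

- Create etcd-ca-csr.json and set the validity to 100 years

CN (Common Name): etcd/kube-apiserver extract this field from a certificate and use it as the user name of the request; browsers use it to verify that a website is legitimate, so it is usually a domain name.

O (Organization): etcd/kube-apiserver extract this field from a certificate and use it as the group the requesting user belongs to.

These two fields matter later when RBAC is enabled in Kubernetes, because permissions for roles such as kubelet and admin are based on them.

C (Country): country

ST (State): state or province

L (Locality): locality or city

O (Organization Name): organization or company name

OU (Organization Unit Name): organizational unit or department

cat > etcd-ca-csr.json <<EOF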

{

"CN": "etcd",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "etcd",

"OU": "Etcd Security"

}

],

"ca": {

"expiry": "876000h"

}

}

EOF
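The `expiry` value is given in hours, so it is worth checking that `876000h` really corresponds to the 100 years mentioned above. A one-line sketch of the arithmetic, assuming 365-day years; `hours_to_years` is a hypothetical helper name.

```shell
# Convert an expiry given in hours to whole years (365-day years).
hours_to_years() {
    echo $(( $1 / 24 / 365 ))
}

hours_to_years 876000   # 876000 / 24 = 36500 days, 36500 / 365 = 100 years
```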

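- Generate the etcd CA

# Initialize the CA; this produces etcd-ca-key.pem (private key) and etcd-ca.pem (certificate)

cfssl gencert -initca etcd-ca-csr.json | cfssljson -bare /etc/etcd/ssl/etcd-ca

# etcd-ca.csr      root certificate signing request
# etcd-ca-key.pem  root private key
# etcd-ca.pem      root certificate

# Check the certificate validity period

openssl x509 -in etcd-ca.pem -noout -text | grep ' Not '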

- Create ca-config.json

# Background: ca-config.json can define several profiles, each with its own expiry, usages, and other parameters; a profile is selected later when signing a certificate. This example has a single profile, kubernetes.

signing: the certificate can be used to sign other certificates; the generated CA certificate has CA=TRUE.

server auth: a client can use this CA to verify the certificate presented by a server.

client auth: a server can use this CA to verify the certificate presented by a client.

cat > ca-config.json <<EOF
{
  "signing": {
    "default": {
      "expiry": "876000h"
    },
    "profiles": {
      "kubernetes": {
        "usages": ["signing", "key encipherment", "server auth", "client auth"],
        "expiry": "876000h"
      }
    }
  }
}
EOF

- etcd-csr.json

cat > etcd-csr.json <<EOF
{
  "CN": "etcd",
  "hosts": [
    "127.0.0.1",
    "master01",
    "master02",
    "master03",
    "10.0.10.11",
    "10.0.10.12",
    "10.0.10.13"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Beijing",
      "L": "Beijing",
      "O": "etcd",
      "OU": "Etcd Security"
    }
  ]
}
EOF

- Sign the certificate

cfssl gencert \
  -ca=/etc/etcd/ssl/etcd-ca.pem \
  -ca-key=/etc/etcd/ssl/etcd-ca-key.pem \
  -config=ca-config.json \
  -profile=kubernetes \
  etcd-csr.json | cfssljson -bare /etc/etcd/ssl/etcd

- Copy the certificates to the other nodes

MasterNodes='master02 master03'
for NODE in $MasterNodes; do
    ssh $NODE "mkdir -p /etc/etcd/ssl"
    for FILE in etcd-ca-key.pem etcd-ca.pem etcd-key.pem etcd.pem; do
        scp /etc/etcd/ssl/${FILE} $NODE:/etc/etcd/ssl/${FILE}
    done
done

7.3 apiserver certificate

- Create the Kubernetes certificate directory

mkdir -p /etc/kubernetes/pki/

cd /etc/kubernetes/pki/

- Create ca-csr.json and set the validity to 100 years

cat > ca-csr.json <<EOF
{
  "CN": "kubernetes",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Beijing",
      "L": "Beijing",
      "O": "Kubernetes",
      "OU": "Kubernetes-manual"
    }
  ],
  "ca": {
    "expiry": "876000h"
  }
}
EOF

- Generate the Kubernetes CA

# Create the Kubernetes CA; this produces ca-key.pem (private key) and ca.pem (certificate)

cfssl gencert -initca ca-csr.json | cfssljson -bare /etc/kubernetes/pki/ca

# Check the certificate validity period

openssl x509 -in ca.pem -noout -text | grep ' Not '

- Create ca-config.json

cat > ca-config.json <<EOF
{
  "signing": {
    "default": {
      "expiry": "876000h"
    },
    "profiles": {
      "kubernetes": {
        "usages": ["signing", "key encipherment", "server auth", "client auth"],
        "expiry": "876000h"
      }
    }
  }
}
EOF

- apiserver-csr.json

The hosts list must contain:

the first IP of the Service subnet

the IPs of all masters

the load-balancer IP

cat > apiserver-csr.json <<EOF
{
  "CN": "kube-apiserver",
  "hosts": [
    "192.168.0.1",
    "127.0.0.1",
    "10.0.10.10",
    "10.0.10.11",
    "10.0.10.12",
    "10.0.10.13",
    "kubernetes",
    "kubernetes.default",
    "kubernetes.default.svc",
    "kubernetes.default.svc.cluster",
    "kubernetes.default.svc.cluster.local"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Beijing",
      "L": "Beijing",
      "O": "Kubernetes",
      "OU": "Kubernetes-manual"
    }
  ]
}
EOF

- Sign the certificate

cfssl gencert \
  -ca=/etc/kubernetes/pki/ca.pem \
  -ca-key=/etc/kubernetes/pki/ca-key.pem \
  -config=ca-config.json \
  -profile=kubernetes \
  apiserver-csr.json | cfssljson -bare /etc/kubernetes/pki/apiserver
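The first address of the Service subnet appears in the apiserver certificate because the built-in `kubernetes` Service is assigned that address. If you choose a different Service range, the value has to be recomputed. The sketch below computes the n-th address of a CIDR in POSIX shell; `nth_ip_of_cidr` is a hypothetical helper name.

```shell
# Print the address at offset N inside a CIDR (offset 1 = first usable address).
# $1 = CIDR such as 192.168.0.0/16, $2 = offset
nth_ip_of_cidr() (
    cidr=$1
    n=$2
    prefix=${cidr#*/}
    IFS=.
    set -- ${cidr%/*}
    base=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
    mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
    ip=$(( (base & mask) + n ))
    echo "$(( (ip >> 24) & 255 )).$(( (ip >> 16) & 255 )).$(( (ip >> 8) & 255 )).$(( ip & 255 ))"
)

nth_ip_of_cidr 192.168.0.0/16 1    # address used in the apiserver certificate hosts list
nth_ip_of_cidr 192.168.0.0/16 10   # address used as clusterDNS in the kubelet configuration
```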

7.4 apiserver aggregation (front-proxy) certificates

- front-proxy-ca-csr.json

cat > front-proxy-ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"ca": {

"expiry": "876000h"

}

}

EOF

- Create the CA for the apiserver aggregation certificates

cfssl gencert -initca front-proxy-ca-csr.json | cfssljson -bare /etc/kubernetes/pki/front-proxy-ca

cat > front-proxy-client-csr.json <<EOF
{
  "CN": "front-proxy-client",
  "key": {
    "algo": "rsa",
    "size": 2048
  }
}
EOF

- Sign the certificate

cfssl gencert \
  -ca=/etc/kubernetes/pki/front-proxy-ca.pem \
  -ca-key=/etc/kubernetes/pki/front-proxy-ca-key.pem \
  -config=ca-config.json \
  -profile=kubernetes \
  front-proxy-client-csr.json | cfssljson -bare /etc/kubernetes/pki/front-proxy-client

7.5 controller-manager certificate

cat > manager-csr.json <<EOF
{
  "CN": "system:kube-controller-manager",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Beijing",
      "L": "Beijing",
      "O": "system:kube-controller-manager",
      "OU": "Kubernetes-manual"
    }
  ]
}
EOF

- Sign the certificate

cfssl gencert \
  -ca=/etc/kubernetes/pki/ca.pem \
  -ca-key=/etc/kubernetes/pki/ca-key.pem \
  -config=ca-config.json \
  -profile=kubernetes \
  manager-csr.json | cfssljson -bare /etc/kubernetes/pki/controller-manager

- Configure controller-manager.kubeconfig (pointing at the VIP)

kubectl config set-cluster kubernetes \
  --certificate-authority=/etc/kubernetes/pki/ca.pem \
  --embed-certs=true \
  --server=https://10.0.10.10:16443 \
  --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

# Add a context entry

kubectl config set-context system:kube-controller-manager@kubernetes \
  --cluster=kubernetes \
  --user=system:kube-controller-manager \
  --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

# set-credentials adds a user entry

kubectl config set-credentials system:kube-controller-manager \
  --client-certificate=/etc/kubernetes/pki/controller-manager.pem \
  --client-key=/etc/kubernetes/pki/controller-manager-key.pem \
  --embed-certs=true \
  --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

# Make this context the default one

kubectl config use-context system:kube-controller-manager@kubernetes \
  --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig
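The controller-manager, scheduler, and admin kubeconfig files are all built from the same four `kubectl config` commands and differ only in the file name and user name. The sketch below prints those four commands for a given component so they can be reviewed before being run; `print_kubeconfig_cmds`, `API_SERVER`, and `PKI_DIR` are hypothetical names, and the flags are the ones used in this article.

```shell
API_SERVER=https://10.0.10.10:16443   # VIP and HAProxy port from the cluster plan
PKI_DIR=/etc/kubernetes/pki

# Print the four kubectl commands that build one kubeconfig.
# $1 = stem shared by the kubeconfig and certificate files (e.g. controller-manager)
# $2 = user name (e.g. system:kube-controller-manager)
print_kubeconfig_cmds() {
    kc=/etc/kubernetes/$1.kubeconfig
    cat <<EOF
kubectl config set-cluster kubernetes --certificate-authority=$PKI_DIR/ca.pem --embed-certs=true --server=$API_SERVER --kubeconfig=$kc
kubectl config set-credentials $2 --client-certificate=$PKI_DIR/$1.pem --client-key=$PKI_DIR/$1-key.pem --embed-certs=true --kubeconfig=$kc
kubectl config set-context $2@kubernetes --cluster=kubernetes --user=$2 --kubeconfig=$kc
kubectl config use-context $2@kubernetes --kubeconfig=$kc
EOF
}

print_kubeconfig_cmds controller-manager system:kube-controller-manager
```

Once the output looks right, it can be piped to `sh` on master01; the same function covers `scheduler system:kube-scheduler` and `admin kubernetes-admin`.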

7.6 scheduler certificate

cat > scheduler-csr.json <<EOF
{
  "CN": "system:kube-scheduler",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Beijing",
      "L": "Beijing",
      "O": "system:kube-scheduler",
      "OU": "Kubernetes-manual"
    }
  ]
}
EOF

- Sign the certificate

cfssl gencert \
  -ca=/etc/kubernetes/pki/ca.pem \
  -ca-key=/etc/kubernetes/pki/ca-key.pem \
  -config=ca-config.json \
  -profile=kubernetes \
  scheduler-csr.json | cfssljson -bare /etc/kubernetes/pki/scheduler

- Configure scheduler.kubeconfig

kubectl config set-cluster kubernetes \
  --certificate-authority=/etc/kubernetes/pki/ca.pem \
  --embed-certs=true \
  --server=https://10.0.10.10:16443 \
  --kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config set-credentials system:kube-scheduler \
  --client-certificate=/etc/kubernetes/pki/scheduler.pem \
  --client-key=/etc/kubernetes/pki/scheduler-key.pem \
  --embed-certs=true \
  --kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config set-context system:kube-scheduler@kubernetes \
  --cluster=kubernetes \
  --user=system:kube-scheduler \
  --kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config use-context system:kube-scheduler@kubernetes \
  --kubeconfig=/etc/kubernetes/scheduler.kubeconfig

7.7 admin certificate

- admin-csr.json

cat > admin-csr.json <<EOF
{
  "CN": "admin",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Beijing",
      "L": "Beijing",
      "O": "system:masters",
      "OU": "Kubernetes-manual"
    }
  ]
}
EOF

- Sign the certificate

cfssl gencert \
  -ca=/etc/kubernetes/pki/ca.pem \
  -ca-key=/etc/kubernetes/pki/ca-key.pem \
  -config=ca-config.json \
  -profile=kubernetes \
  admin-csr.json | cfssljson -bare /etc/kubernetes/pki/admin

- Configure admin.kubeconfig

kubectl config set-cluster kubernetes \
  --certificate-authority=/etc/kubernetes/pki/ca.pem \
  --embed-certs=true \
  --server=https://10.0.10.10:16443 \
  --kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config set-credentials kubernetes-admin \
  --client-certificate=/etc/kubernetes/pki/admin.pem \
  --client-key=/etc/kubernetes/pki/admin-key.pem \
  --embed-certs=true \
  --kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config set-context kubernetes-admin@kubernetes \
  --cluster=kubernetes \
  --user=kubernetes-admin \
  --kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config use-context kubernetes-admin@kubernetes \
  --kubeconfig=/etc/kubernetes/admin.kubeconfig

7.8 ServiceAccount Key

openssl genrsa -out /etc/kubernetes/pki/sa.key 2048

openssl rsa -in /etc/kubernetes/pki/sa.key -pubout -out /etc/kubernetes/pki/sa.pub

7.9 Send the certificates to the other nodes

for NODE in master02 master03; do
    ssh $NODE "mkdir -p /etc/kubernetes/pki"
    for FILE in $(ls /etc/kubernetes/pki | grep -v etcd); do
        scp /etc/kubernetes/pki/${FILE} $NODE:/etc/kubernetes/pki/${FILE}
    done
    for FILE in admin.kubeconfig controller-manager.kubeconfig scheduler.kubeconfig; do
        scp /etc/kubernetes/${FILE} $NODE:/etc/kubernetes/${FILE}
    done
done

- Count the files in the pki directory (31 expected)

ls /etc/kubernetes/pki/ | wc -l

8 Kubernetes system component configuration

8.1 etcd

- master01

vim /etc/etcd/etcd.config.yml

name: 'master01'
data-dir: /var/lib/etcd
wal-dir: /var/lib/etcd/wal
snapshot-count: 5000
heartbeat-interval: 100
election-timeout: 1000
quota-backend-bytes: 0
listen-peer-urls: 'https://10.0.10.11:2380'
listen-client-urls: 'https://10.0.10.11:2379,http://127.0.0.1:2379'
max-snapshots: 3
max-wals: 5
cors:
initial-advertise-peer-urls: 'https://10.0.10.11:2380'
advertise-client-urls: 'https://10.0.10.11:2379'
discovery:
discovery-fallback: 'proxy'
discovery-proxy:
discovery-srv:
initial-cluster: 'master01=https://10.0.10.11:2380,master02=https://10.0.10.12:2380,master03=https://10.0.10.13:2380'
initial-cluster-token: 'etcd-k8s-cluster'
initial-cluster-state: 'new'
strict-reconfig-check: false
enable-v2: true
enable-pprof: true
proxy: 'off'
proxy-failure-wait: 5000
proxy-refresh-interval: 30000
proxy-dial-timeout: 1000
proxy-write-timeout: 5000
proxy-read-timeout: 0
client-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
peer-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  peer-client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
debug: false
log-package-levels:
log-outputs: [default]
force-new-cluster: false

- On the other master nodes, change the name and the IP addresses to that node's own values

- Create the etcd service and start it

cat > /usr/lib/systemd/system/etcd.service <<EOF

[Unit]

Description=Etcd Service

Documentation=https://coreos.com/etcd/docs/latest/

After=network.target

[Service]

Type=notify

ExecStart=/usr/local/bin/etcd --config-file=/etc/etcd/etcd.config.yml

Restart=on-failure

RestartSec=10

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Alias=etcd3.service

EOF

- Certificate directory

mkdir -p /etc/kubernetes/pki/etcd

ln -s /etc/etcd/ssl/* /etc/kubernetes/pki/etcd/

systemctl daemon-reload

systemctl enable --now etcd

- Check the etcd status

export ETCDCTL_API=3

etcdctl --endpoints="10.0.10.11:2379,10.0.10.12:2379,10.0.10.13:2379" --cacert=/etc/kubernetes/pki/etcd/etcd-ca.pem --cert=/etc/kubernetes/pki/etcd/etcd.pem --key=/etc/kubernetes/pki/etcd/etcd-key.pem endpoint status --write-out=table

8.2 apiserver

- Create the required directories on all nodes

mkdir -p /etc/kubernetes/manifests/ /etc/systemd/system/kubelet.service.d /var/lib/kubelet /var/log/kubernetes

- master01

vim /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \

--v=2 \

--allow-privileged=true \

--bind-address=0.0.0.0 \

--secure-port=6443 \

--advertise-address=10.0.10.11 \

--service-cluster-ip-range=192.168.0.0/16 \

--service-node-port-range=30000-32767 \

--etcd-servers=https://10.0.10.11:2379,https://10.0.10.12:2379,https://10.0.10.13:2379 \

--etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \

--etcd-certfile=/etc/etcd/ssl/etcd.pem \

--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \

--client-ca-file=/etc/kubernetes/pki/ca.pem \

--tls-cert-file=/etc/kubernetes/pki/apiserver.pem \

--tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \

--kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \

--kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \

--service-account-key-file=/etc/kubernetes/pki/sa.pub \

--service-account-signing-key-file=/etc/kubernetes/pki/sa.key \

--service-account-issuer=https://kubernetes.default.svc.cluster.local \

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \

--authorization-mode=Node,RBAC \

--enable-bootstrap-token-auth=true \

--requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \

--proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \

--proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \

--requestheader-allowed-names=aggregator \

--requestheader-group-headers=X-Remote-Group \

--requestheader-extra-headers-prefix=X-Remote-Extra- \

--requestheader-username-headers=X-Remote-User

# --token-auth-file=/etc/kubernetes/token.csv

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

- On the other masters, change --advertise-address to that node's own IP

- Start the service

systemctl daemon-reload && systemctl enable --now kube-apiserver

8.3 kube-controller-manager

vim /usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-controller-manager \

--v=2 \

--root-ca-file=/etc/kubernetes/pki/ca.pem \

--cluster-signing-cert-file=/etc/kubernetes/pki/ca.pem \

--cluster-signing-key-file=/etc/kubernetes/pki/ca-key.pem \

--service-account-private-key-file=/etc/kubernetes/pki/sa.key \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig \

--feature-gates=LegacyServiceAccountTokenNoAutoGeneration=false \

--leader-elect=true \

--use-service-account-credentials=true \

--node-monitor-grace-period=40s \

--node-monitor-period=5s \

--pod-eviction-timeout=2m0s \

--controllers=*,bootstrapsigner,tokencleaner \

--allocate-node-cidrs=true \

--cluster-cidr=172.16.0.0/12 \

--requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \

--node-cidr-mask-size=24

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

- Start the service

systemctl daemon-reload && systemctl enable --now kube-controller-manager

- Check the status

systemctl status kube-controller-manager

8.4 Scheduler

vim /usr/lib/systemd/system/kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-scheduler \

--v=2 \

--leader-elect=true \

--authentication-kubeconfig=/etc/kubernetes/scheduler.kubeconfig \

--authorization-kubeconfig=/etc/kubernetes/scheduler.kubeconfig \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

systemctl daemon-reload && systemctl enable --now kube-scheduler

9 TLS Bootstrapping configuration

- Create the bootstrap kubeconfig on master01

kubectl config set-cluster kubernetes \
  --certificate-authority=/etc/kubernetes/pki/ca.pem \
  --embed-certs=true \
  --server=https://10.0.10.10:16443 \
  --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig

kubectl config set-credentials tls-bootstrap-token-user \
  --token=c8ad9c.2e4d610cf3e7426e \
  --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig

kubectl config set-context tls-bootstrap-token-user@kubernetes \
  --cluster=kubernetes \
  --user=tls-bootstrap-token-user \
  --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig

kubectl config use-context tls-bootstrap-token-user@kubernetes \
  --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig

cd /etc/kubernetes/

vim bootstrap.secret.yaml

apiVersion: v1
kind: Secret
metadata:
  name: bootstrap-token-c8ad9c
  namespace: kube-system
type: bootstrap.kubernetes.io/token
stringData:
  description: "The default bootstrap token generated by 'kubelet '."
  token-id: c8ad9c
  token-secret: 2e4d610cf3e7426e
  usage-bootstrap-authentication: "true"
  usage-bootstrap-signing: "true"
  auth-extra-groups: system:bootstrappers:default-node-token,system:bootstrappers:worker,system:bootstrappers:ingress
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubelet-bootstrap
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:node-bootstrapper
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: Group
  name: system:bootstrappers:default-node-token
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: node-autoapprove-bootstrap
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:certificates.k8s.io:certificatesigningrequests:nodeclient
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: Group
  name: system:bootstrappers:default-node-token
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: node-autoapprove-certificate-rotation
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:certificates.k8s.io:certificatesigningrequests:selfnodeclient
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: Group
  name: system:nodes
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:kube-apiserver-to-kubelet
rules:
- apiGroups:
  - ""
  resources:
  - nodes/proxy
  - nodes/stats
  - nodes/log
  - nodes/spec
  - nodes/metrics
  verbs:
  - "*"
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: system:kube-apiserver
  namespace: ""
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:kube-apiserver-to-kubelet
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: kube-apiserver

- Configure kubeconfig

mkdir -p /root/.kube ; cp /etc/kubernetes/admin.kubeconfig /root/.kube/config

- Query the cluster status

kubectl get cs

- Create the resources

kubectl create -f bootstrap.secret.yaml
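The token `c8ad9c.2e4d610cf3e7426e` used above is published in this article, so for anything beyond a throwaway test cluster you may want your own. A bootstrap token has the form `<token-id>.<token-secret>`, with 6 and 16 characters from `[a-z0-9]`. The sketch below generates and validates such a token in POSIX shell; `rand_hex`, `gen_bootstrap_token`, and `is_valid_bootstrap_token` are hypothetical helper names, and hex digits are used simply because they are a subset of the allowed characters.

```shell
# Print N random bytes as 2*N lowercase hex characters.
rand_hex() {
    od -An -N "$1" -tx1 /dev/urandom | tr -d ' \n'
}

# Generate a token of the form <6 chars>.<16 chars>.
gen_bootstrap_token() {
    echo "$(rand_hex 3).$(rand_hex 8)"
}

# Return 0 if $1 matches the bootstrap token format.
is_valid_bootstrap_token() {
    printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

token=$(gen_bootstrap_token)
is_valid_bootstrap_token "$token" && echo "generated token: $token"
```

If you generate a new token, the same value has to be used in three places: `--token` in the bootstrap kubeconfig, and `metadata.name` (`bootstrap-token-<token-id>`), `token-id`, and `token-secret` in bootstrap.secret.yaml.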

10 Node configuration

Note: the masters are also treated as nodes and run workloads.

10.1 Copy the certificates

cd /etc/kubernetes/
for NODE in master02 master03 node01; do
    ssh $NODE mkdir -p /etc/kubernetes/pki /etc/etcd/ssl
    for FILE in etcd-ca.pem etcd.pem etcd-key.pem; do
        scp /etc/etcd/ssl/$FILE $NODE:/etc/etcd/ssl/
    done
    for FILE in pki/ca.pem pki/ca-key.pem pki/front-proxy-ca.pem bootstrap-kubelet.kubeconfig; do
        scp /etc/kubernetes/$FILE $NODE:/etc/kubernetes/${FILE}
    done
done

10.2 kubelet configuration

- Create the required directories on all nodes

mkdir -p /var/lib/kubelet /var/log/kubernetes /etc/systemd/system/kubelet.service.d /etc/kubernetes/manifests/ /opt/cni/bin

- Configure the kubelet service

cat > /usr/lib/systemd/system/kubelet.service <<EOF

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/kubernetes/kubernetes

[Service]

ExecStart=/usr/local/bin/kubelet

Restart=always

StartLimitInterval=0

RestartSec=10

[Install]

WantedBy=multi-user.target

EOF

- 10-kubelet.conf (for containerd)

vim /etc/systemd/system/kubelet.service.d/10-kubelet.conf

[Service]

Environment="KUBELET_KUBECONFIG_ARGS=--bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig --kubeconfig=/etc/kubernetes/kubelet.kubeconfig"

Environment="KUBELET_SYSTEM_ARGS=--container-runtime=remote --runtime-request-timeout=15m --container-runtime-endpoint=unix:///run/containerd/containerd.sock --cgroup-driver=systemd"

Environment="KUBELET_CONFIG_ARGS=--config=/etc/kubernetes/kubelet-conf.yml"

Environment="KUBELET_EXTRA_ARGS=--node-labels=node.kubernetes.io/node='' "

ExecStart=

ExecStart=/usr/local/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_SYSTEM_ARGS $KUBELET_EXTRA_ARGS

vim /etc/kubernetes/kubelet-conf.yml

apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
address: 0.0.0.0
port: 10250
readOnlyPort: 10255
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 2m0s
    enabled: true
  x509:
    clientCAFile: /etc/kubernetes/pki/ca.pem
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 5m0s
    cacheUnauthorizedTTL: 30s
cgroupDriver: systemd
cgroupsPerQOS: true
clusterDNS:
- 192.168.0.10
clusterDomain: cluster.local
containerLogMaxFiles: 5
containerLogMaxSize: 10Mi
contentType: application/vnd.kubernetes.protobuf
cpuCFSQuota: true
cpuManagerPolicy: none
cpuManagerReconcilePeriod: 10s
enableControllerAttachDetach: true
enableDebuggingHandlers: true
enforceNodeAllocatable:
- pods
eventBurst: 10
eventRecordQPS: 5
evictionHard:
  imagefs.available: 15%
  memory.available: 100Mi
  nodefs.available: 10%
  nodefs.inodesFree: 5%
evictionPressureTransitionPeriod: 5m0s
failSwapOn: true
fileCheckFrequency: 20s
hairpinMode: promiscuous-bridge
healthzBindAddress: 127.0.0.1
healthzPort: 10248
httpCheckFrequency: 20s
imageGCHighThresholdPercent: 85
imageGCLowThresholdPercent: 80
imageMinimumGCAge: 2m0s
iptablesDropBit: 15
iptablesMasqueradeBit: 14
kubeAPIBurst: 10
kubeAPIQPS: 5
makeIPTablesUtilChains: true
maxOpenFiles: 1000000
maxPods: 110
nodeStatusUpdateFrequency: 10s
oomScoreAdj: -999
podPidsLimit: -1
registryBurst: 10
registryPullQPS: 5
resolvConf: /etc/resolv.conf
rotateCertificates: true
runtimeRequestTimeout: 2m0s
serializeImagePulls: true
staticPodPath: /etc/kubernetes/manifests
streamingConnectionIdleTimeout: 4h0m0s
syncFrequency: 1m0s
volumeStatsAggPeriod: 1m0s

systemctl daemon-reload && systemctl enable --now kubelet

10.3 kube-proxy configuration

- Run on master01

Note: since Kubernetes 1.24 a ServiceAccount no longer gets a token Secret created automatically, so on v1.26.1 the `.secrets[0].name` lookup below is likely to return an empty value. If it does, first create a Secret of type kubernetes.io/service-account-token for the kube-proxy ServiceAccount and read the token from that Secret.

kubectl -n kube-system create serviceaccount kube-proxy

kubectl create clusterrolebinding system:kube-proxy \
  --clusterrole system:node-proxier \
  --serviceaccount kube-system:kube-proxy

SECRET=$(kubectl -n kube-system get sa/kube-proxy \
  --output=jsonpath='{.secrets[0].name}')
JWT_TOKEN=$(kubectl -n kube-system get secret/$SECRET \
  --output=jsonpath='{.data.token}' | base64 -d)
PKI_DIR=/etc/kubernetes/pki
K8S_DIR=/etc/kubernetes

kubectl config set-cluster kubernetes \
  --certificate-authority=/etc/kubernetes/pki/ca.pem \
  --embed-certs=true \
  --server=https://10.0.10.10:16443 \
  --kubeconfig=${K8S_DIR}/kube-proxy.kubeconfig

kubectl config set-credentials kubernetes \
  --token=${JWT_TOKEN} \
  --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

kubectl config set-context kubernetes \
  --cluster=kubernetes \
  --user=kubernetes \
  --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

kubectl config use-context kubernetes --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

for NODE in master02 master03; do
  scp /etc/kubernetes/kube-proxy.kubeconfig $NODE:/etc/kubernetes/kube-proxy.kubeconfig
done

for NODE in node01; do
  scp /etc/kubernetes/kube-proxy.kubeconfig $NODE:/etc/kubernetes/kube-proxy.kubeconfig
done
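If the ServiceAccount has no token Secret (the default behaviour from Kubernetes 1.24 onward), one way to obtain a long-lived token is to create the Secret by hand. The sketch below only renders the manifest; the function name `render_sa_token_secret` and the Secret name `<serviceaccount>-token` are assumptions made for illustration and do not come from the original article.

```shell
# Render a Secret manifest that asks the control plane to populate a
# long-lived token for an existing ServiceAccount.
# $1: ServiceAccount name, $2: namespace.
# The "<serviceaccount>-token" Secret name is a hypothetical naming choice.
render_sa_token_secret() {
  sa="$1"
  ns="$2"
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: ${sa}-token
  namespace: ${ns}
  annotations:
    kubernetes.io/service-account.name: ${sa}
type: kubernetes.io/service-account-token
EOF
}

# Usage on master01 (not executed here):
#   render_sa_token_secret kube-proxy kube-system | kubectl apply -f -
#   JWT_TOKEN=$(kubectl -n kube-system get secret/kube-proxy-token \
#     --output=jsonpath='{.data.token}' | base64 -d)
```

With JWT_TOKEN set this way, the `kubectl config set-credentials` step can be used unchanged.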

- Add the kube-proxy configuration and service files on all nodes

cat > /usr/lib/systemd/system/kube-proxy.service <<EOF
[Unit]
Description=Kubernetes Kube Proxy
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-proxy \\
  --config=/etc/kubernetes/kube-proxy.yaml \\
  --v=2
Restart=always
RestartSec=10s

[Install]
WantedBy=multi-user.target
EOF

cat > /etc/kubernetes/kube-proxy.yaml << EOF
apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
clientConnection:
  acceptContentTypes: ""
  burst: 10
  contentType: application/vnd.kubernetes.protobuf
  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
  qps: 5
clusterCIDR: 172.16.0.0/12
configSyncPeriod: 15m0s
conntrack:
  max: null
  maxPerCore: 32768
  min: 131072
  tcpCloseWaitTimeout: 1h0m0s
  tcpEstablishedTimeout: 24h0m0s
enableProfiling: false
healthzBindAddress: 0.0.0.0:10256
hostnameOverride: ""
iptables:
  masqueradeAll: false
  masqueradeBit: 14
  minSyncPeriod: 0s
  syncPeriod: 30s
ipvs:
  masqueradeAll: true
  minSyncPeriod: 5s
  scheduler: "rr"
  syncPeriod: 30s
kind: KubeProxyConfiguration
metricsBindAddress: 127.0.0.1:10249
mode: "ipvs"
nodePortAddresses: null
oomScoreAdj: -999
portRange: ""
udpIdleTimeout: 250ms
EOF

systemctl daemon-reload && systemctl enable --now kube-proxy
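Once kube-proxy is running, the configured `mode: "ipvs"` can be checked against what the process actually uses, since kube-proxy may fall back to iptables when IPVS is unavailable on the host. This is a rough sketch that queries the `/proxyMode` path on the metrics address set above (127.0.0.1:10249); `assert_proxy_mode` is a hypothetical helper name.

```shell
# Succeed only if kube-proxy reports the expected proxy mode.
# $1: expected mode (default ipvs)
# $2: metrics address (default 127.0.0.1:10249, as in kube-proxy.yaml)
# assert_proxy_mode is a hypothetical helper, not a kube-proxy command.
assert_proxy_mode() {
  expected="${1:-ipvs}"
  addr="${2:-127.0.0.1:10249}"
  actual=$(curl -s "http://${addr}/proxyMode")
  if [ "$actual" = "$expected" ]; then
    echo "kube-proxy mode: $actual"
  else
    echo "expected $expected, got '${actual}'" >&2
    return 1
  fi
}

# Usage on each node (not executed here):
#   assert_proxy_mode ipvs
```

Because the metrics endpoint is bound to 127.0.0.1, the check has to run locally on each node.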

11 Plugin installation

11.1 Install kubectl command completion

Installing it on master01 (the control machine) is enough.

yum install -y bash-completion
source /usr/share/bash-completion/bash_completion
source <(kubectl completion bash)
echo "source <(kubectl completion bash)" >> ~/.bashrc

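11.2 Install Calico

Official address: Calico official installation guide

- Download the Calico deployment file

Tip: the Calico deployment file has also been packaged and uploaded to the document library: k8s-1.26.1 binary deployment files (with plugins)

curl https://projectcalico.docs.tigera.io/manifests/calico.yaml -o calico.yaml

- If the Pod CIDR is not 192.168.0.0/16, uncomment and modify CALICO_IPV4POOL_CIDR

- name: CALICO_IPV4POOL_CIDR
  value: "172.16.0.0/12"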
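The CIDR edit described above can also be scripted before applying the manifest. The sketch below assumes the downloaded calico.yaml still carries the two commented default lines `# - name: CALICO_IPV4POOL_CIDR` and `#   value: "192.168.0.0/16"`; if the manifest differs, the substitution simply leaves the file unchanged. `set_calico_pod_cidr` is a hypothetical helper name.

```shell
# Uncomment CALICO_IPV4POOL_CIDR in a Calico manifest and set the Pod CIDR.
# Assumes the manifest contains these two commented lines:
#   # - name: CALICO_IPV4POOL_CIDR
#   #   value: "192.168.0.0/16"
# $1: path to the manifest, $2: Pod CIDR to write.
set_calico_pod_cidr() {
  manifest="$1"
  cidr="$2"
  sed \
    -e 's|# - name: CALICO_IPV4POOL_CIDR|- name: CALICO_IPV4POOL_CIDR|' \
    -e "s|#   value: \"192.168.0.0/16\"|  value: \"${cidr}\"|" \
    "$manifest" > "$manifest.tmp" && mv "$manifest.tmp" "$manifest"
}

# Usage (not executed here):
#   set_calico_pod_cidr calico.yaml 172.16.0.0/12
#   grep -A1 CALICO_IPV4POOL_CIDR calico.yaml
```

Checking the result with grep before running `kubectl apply` confirms that both lines were uncommented and still line up with the neighbouring env entries.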

kubectl apply -f calico.yaml

- Check the deployment status

kubectl get pod -A -o wide -w

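11.3 Install CoreDNS

- Download the latest official deployment files (official GitHub repository)

Tip: the CoreDNS deployment files have also been packaged and uploaded to the document library: k8s-1.26.1 binary deployment files (with plugins)

git clone https://github.com/coredns/deployment.git
# Or manually download deploy.sh and coredns.yaml.sed from the deployment/kubernetes folder

- Get the clusterDNS IP

# The trailing 0 turns the kubernetes Service IP (192.168.0.1) into the cluster DNS IP (192.168.0.10)
CLUSTER_DNS_IP=`kubectl get svc | grep kubernetes | awk '{print $3}'`0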
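Appending a literal 0 only works when the kubernetes Service IP ends in .1. A slightly more explicit variant, shown here as a sketch, replaces the last octet with 10 instead; `dns_ip_from_service_ip` is a hypothetical helper name, and the convention that cluster DNS sits at .10 of the Service network is taken from the clusterDNS value used earlier in this guide.

```shell
# Derive the cluster DNS address by replacing the last octet of the
# kubernetes Service ClusterIP with 10 (x.y.z.1 becomes x.y.z.10).
# dns_ip_from_service_ip is a hypothetical helper name.
dns_ip_from_service_ip() {
  echo "${1%.*}.10"
}

# Usage (not executed here):
#   SVC_IP=$(kubectl get svc kubernetes -o jsonpath='{.spec.clusterIP}')
#   CLUSTER_DNS_IP=$(dns_ip_from_service_ip "$SVC_IP")
```

Whichever form is used, the result has to match the clusterDNS entry in kubelet-conf.yml, otherwise Pods will be pointed at an address that CoreDNS does not serve.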

- Fill it into the official deployment file

chmod +x deploy.sh
./deploy.sh -s -i ${CLUSTER_DNS_IP} > coredns.yaml

- Change replicas to 2, then deploy

kubectl apply -f coredns.yaml

11.4 Deploy Metrics

In recent Kubernetes versions, system resource metrics are collected by Metrics-server, which gathers the CPU and memory usage of nodes and Pods.

- Official address: Kubernetes Metrics Server

Tip: the Metrics deployment file has also been packaged and uploaded to the document library: k8s-1.26.1 binary deployment files (with plugins)

wget https://github.com/kubernetes-sigs/metrics-server/releases/latest/download/components.yaml

- Modify the arguments and the image

vim components.yaml

      - args:
        - --cert-dir=/tmp
        - --secure-port=4443
        - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname
        - --kubelet-use-node-status-port
        - --metric-resolution=15s
        - --kubelet-insecure-tls
        - --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem
        - --requestheader-username-headers=X-Remote-User
        - --requestheader-group-headers=X-Remote-Group
        - --requestheader-extra-headers-prefix=X-Remote-Extra-
        image: registry.cn-hangzhou.aliyuncs.com/google_containers/metrics-server:v0.6.2

- Mount the certificates

        volumeMounts:
        - mountPath: /tmp
          name: tmp-dir
        - name: ca-ssl
          mountPath: /etc/kubernetes/pki
      nodeSelector:
        kubernetes.io/os: linux
      priorityClassName: system-cluster-critical
      serviceAccountName: metrics-server
      volumes:
      - emptyDir: {}
        name: tmp-dir
      - name: ca-ssl
        hostPath:
          path: /etc/kubernetes/pki

- Deploy

kubectl apply -f components.yaml

- View the metrics

kubectl top nodes
kubectl top pod -A

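11.5 Deploy Dashboard

Dashboard displays the resources in the cluster, and can also be used to view Pod logs in real time and run commands inside containers.

Official address: latest official Kubernetes Dashboard deployment template

- Download the latest deployment template

Tip: the Dashboard deployment file has also been packaged and uploaded to the document library: k8s-1.26.1 binary deployment files (with plugins)

wget https://raw.githubusercontent.com/kubernetes/dashboard/master/charts/recommended.yaml

- Modify the Service as needed to expose the port through a NodePort

vim recommended.yaml

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  type: NodePort
  ports:
  - port: 443
    targetPort: 8443
    nodePort: 31443
  selector:
    k8s-app: kubernetes-dashboard

kubectl apply -f recommended.yaml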

- Create a user

vim admin.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kube-system

kubectl apply -f admin.yaml

- View the token

Note: on Kubernetes 1.24 and later no token Secret is created automatically for the ServiceAccount, so the lookup below may return nothing; in that case `kubectl -n kube-system create token admin-user` issues a token instead.

kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep admin-user | awk '{print $1}')
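On clusters where the admin-user ServiceAccount has no Secret, a time-limited token can be requested directly. The sketch below wraps `kubectl create token` (available in kubectl 1.24 and later); the function name `sa_login_token` and the default lifetime of 1h are assumptions chosen for illustration, and the API server may cap the lifetime it actually grants.

```shell
# Print a time-limited login token for a ServiceAccount.
# $1: ServiceAccount (default admin-user)
# $2: namespace (default kube-system)
# $3: requested token lifetime (default 1h, an illustrative choice)
# sa_login_token is a hypothetical helper name.
sa_login_token() {
  sa="${1:-admin-user}"
  ns="${2:-kube-system}"
  ttl="${3:-1h}"
  kubectl -n "$ns" create token "$sa" --duration="$ttl"
}

# Usage on master01 (not executed here):
#   sa_login_token admin-user kube-system 24h
```

The printed token is what gets pasted into the Dashboard login page; once it expires, running the function again issues a fresh one.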

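- In the public cloud security group console, add a security group rule that opens this NodePort so the Dashboard can be reached

- Access address:

https://<node-IP>:31443/ , choose the token login method and enter the token obtained above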

11.6 Cluster availability verification

- Deploy busybox

cat > busybox-deploy.yaml <<EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: busybox
spec:
  selector:
    matchLabels:
      app: busybox
  template:
    metadata:
      labels:
        app: busybox
    spec:
      containers:
      - name: busybox
        image: busybox:1.28 # nslookup has issues in 1.29 and later
        args:
        - /bin/sh
        - -c
        - sleep 10; touch /tmp/healthy; sleep 30000
        readinessProbe: # readiness probe
          exec:
            command:
            - cat
            - /tmp/healthy
          initialDelaySeconds: 10 # start the first probe after 10s
          periodSeconds: 5 # after the first probe, probe every 5s
EOF

kubectl apply -f busybox-deploy.yaml

- Verify

# A Pod can resolve a Service
busyboxpod=$(kubectl get pods -l app=busybox -o jsonpath='{.items[0].metadata.name}')
kubectl exec -it $busyboxpod -- nslookup kubernetes

# A Pod can resolve a Service in another namespace
kubectl exec -it $busyboxpod -- nslookup kube-dns.kube-system

# Every node must be able to reach the kubernetes Service on port 443 and the kube-dns Service on port 53
# Install telnet
yum install telnet -y
telnet 192.168.0.1 443
telnet 192.168.0.10 53

# Pods must be able to communicate with each other across namespaces and across machines
# Another option is to test CoreDNS resolution with dig, where 192.168.0.10 is the CoreDNS IP
yum -y install bind-utils
dig -t A kubernetes.default.svc.cluster.local. @192.168.0.10 +short
dig -t A kubernetes-dashboard.kubernetes-dashboard.svc.cluster.local. @192.168.0.10 +short
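The telnet checks above are interactive, which makes them awkward to repeat on every node. As a non-interactive alternative, the sketch below opens a TCP connection through bash's /dev/tcp pseudo-device; it assumes bash and the coreutils `timeout` command are installed, and `check_tcp` and `check_endpoints` are hypothetical helper names.

```shell
# Return 0 when a TCP connection to host:port succeeds within 3 seconds.
# Relies on bash's /dev/tcp pseudo-device and coreutils timeout.
check_tcp() {
  timeout 3 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

# Check a list of host:port targets, print one result line per target,
# and return non-zero if any target is unreachable.
check_endpoints() {
  rc=0
  for target in "$@"; do
    host="${target%:*}"
    port="${target##*:}"
    if check_tcp "$host" "$port"; then
      echo "ok   $target"
    else
      echo "FAIL $target"
      rc=1
    fi
  done
  return $rc
}

# Usage on each node (not executed here):
#   check_endpoints 192.168.0.1:443 192.168.0.10:53
```

This only covers TCP reachability, so the dig queries remain the way to confirm that CoreDNS actually answers.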

Copyright belongs to the original author, lc_1203. In case of infringement, please contact us for removal.