
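0 Preface

🔥 Hi everyone, this is the graduation project series from 丹成学长 (Senior Dancheng)!

🔥 Feel free to ask me any questions about your graduation project!

Over the past couple of years, schools have kept raising their requirements for graduation projects, and the difficulty keeps growing. A graduation project takes a lot of time and energy, and some topics would take even a professional teacher or a master's student a long time. So prepare early and deal with problems as soon as you spot them, rather than being caught off guard later and rushing through it.

To help everyone get through the graduation project smoothly and with as little effort as possible, I share high-quality graduation project ideas. Today's new project is

🚩 Mask-wearing detection based on YOLO

🥇 My overall rating of this topic (each item is out of 5):

- Difficulty: 4

- Workload: 4

- Novelty: 3

🧿 Topic selection guidance and project sharing: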

https://gitee.com/yaa-dc/BJH/blob/master/gg/cc/README.md

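1 Project Introduction

The global COVID-19 pandemic is still having an effect. Epidemic prevention and control in China has achieved good results and the vast majority of regions are at low risk, but some areas still see sporadic cases and local cluster outbreaks. Masks are mandatory in public service venues and key institutions such as airports, subway stations, and hospitals, so checking mask wearing has become a routine part of epidemic prevention. At present this check is mostly manual: on high-speed trains, for example, attendants patrol carriage by carriage reminding passengers to wear masks, and in high-risk places such as hospitals medical staff remind people to keep their masks on at all times. Manual checking is inefficient, and it is hard to spot incorrectly worn or missing masks in time. An epidemic-prevention system with mask recognition, built on deep-learning object detection, can greatly improve checking efficiency.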

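2 Algorithm Principles

2.1 Algorithm Overview

YOLOv5 is a single-stage object detection algorithm. It builds on YOLOv4 and adds several new ideas that improve both speed and accuracy. The main improvements are:

Input: several improvements are applied in the training stage, mainly Mosaic data augmentation, adaptive anchor box computation, and adaptive image scaling;

Backbone: it incorporates ideas from other detection algorithms, mainly the Focus structure and the CSP structure;

Neck: object detection networks usually insert some layers between the backbone and the final Head output layer, and YOLOv5 adds an FPN+PAN structure here;

Head: the anchor box mechanism of the output layer is the same as in YOLOv4; the main improvements are the GIOU_Loss loss function used in training and DIOU_nms for filtering predicted boxes.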
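To make the GIOU_Loss mentioned above more concrete, here is a minimal pure-Python sketch of Generalized IoU for two axis-aligned boxes. The function names `giou` and `giou_loss` and the `(x1, y1, x2, y2)` box layout are assumptions made for illustration; this is not the code used inside YOLOv5, which works on batched tensors.

```python
def giou(box_a, box_b):
    """Generalized IoU of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Intersection area (zero when the boxes do not overlap).
    iw = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    ih = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = iw * ih

    # Union area.
    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - inter
    iou = inter / union

    # Area of the smallest axis-aligned box that encloses both inputs.
    cw = max(ax2, bx2) - min(ax1, bx1)
    ch = max(ay2, by2) - min(ay1, by1)
    enclose = cw * ch

    # GIoU subtracts the fraction of the enclosing box not covered by the union.
    return iou - (enclose - union) / enclose


def giou_loss(box_a, box_b):
    """Loss form: 0 for identical boxes, approaching 2 for distant boxes."""
    return 1.0 - giou(box_a, box_b)
```

Unlike plain IoU, GIoU still changes when the two boxes do not overlap at all, so the loss keeps giving a useful signal in that case.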

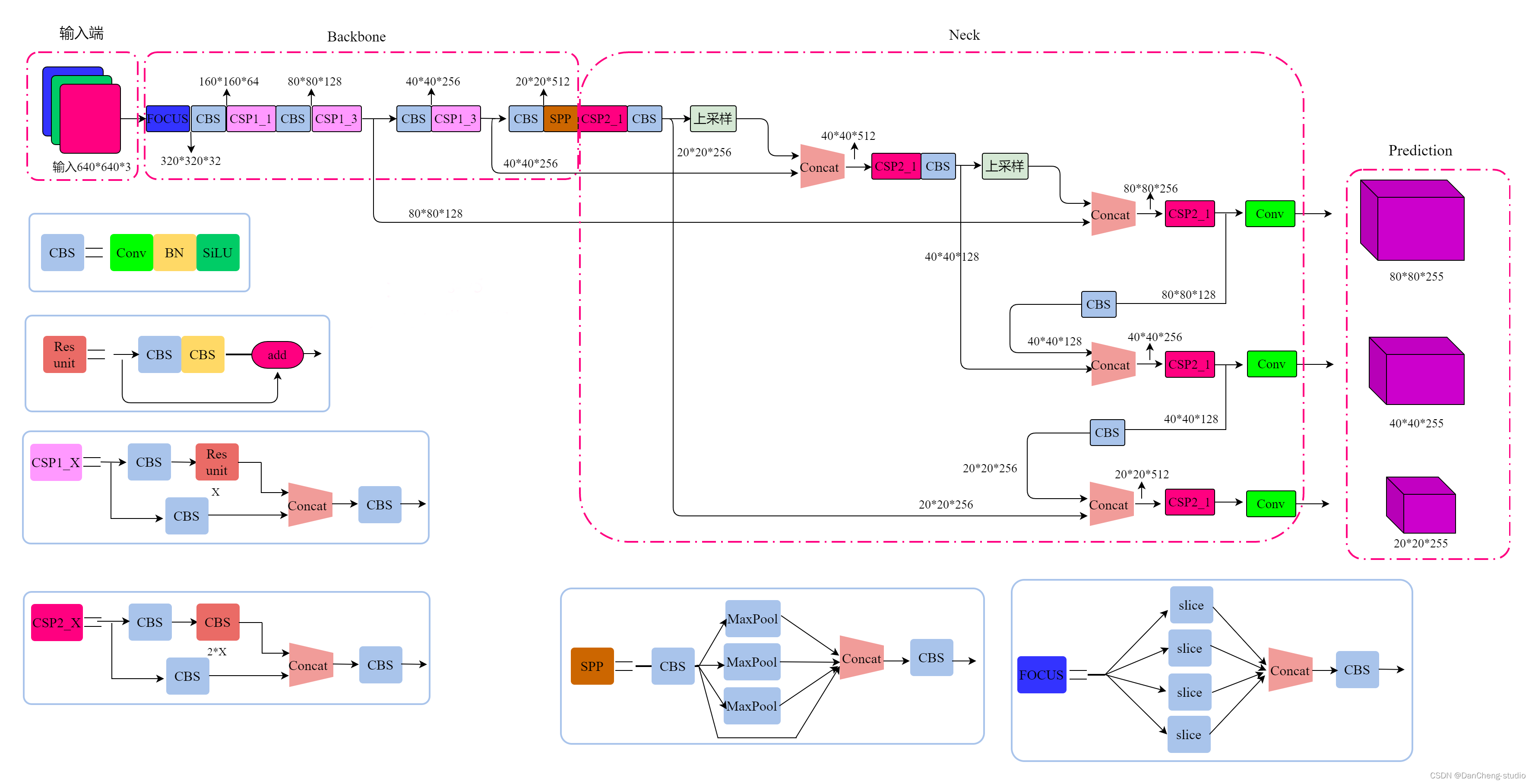

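2.2 Network Architecture

The figure above shows the overall block diagram of the YOLOv5 object detection algorithm. An object detection algorithm can usually be divided into four general modules: the input, the backbone, the Neck, and the Head output, which correspond to the four red modules in the figure. YOLOv5 comes in four versions: YOLOv5s, YOLOv5m, YOLOv5l, and YOLOv5x. This article focuses on YOLOv5s; the other versions deepen and widen the network on the basis of this one.

- Input: the input is the image fed to the network. The input size described here is 608*608 (note that the YOLOv5 code in Section 3 uses 640 as its default image size). This stage usually includes image preprocessing, that is, scaling the input image to the network input size and applying operations such as normalization. During training, YOLOv5 uses Mosaic data augmentation to improve training speed and accuracy, and it introduces adaptive anchor box computation and adaptive image scaling.

- Backbone: the backbone is usually taken from a well-performing classification network and is used to extract general-purpose feature representations. YOLOv5 uses the CSPDarknet53 structure together with the Focus structure as its backbone.

- Neck: the Neck usually sits between the backbone and the head, and it further improves the diversity and robustness of the features. Like YOLOv4, YOLOv5 uses the SPP module and the FPN+PAN module, but the implementation details differ somewhat.

- Head: the Head produces the detection results. The number of output branches varies between detection algorithms, but there is usually a classification branch and a regression branch. YOLOv5 uses GIOU_Loss in place of the Smooth L1 Loss function, which further improves detection accuracy.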
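The adaptive image scaling mentioned for the input stage can be sketched as follows. This is a simplified illustration of the idea (resize while keeping the aspect ratio, then pad only up to the next multiple of the network stride), not the repository's actual preprocessing function; the name `letterbox_shape` and its parameters are assumptions.

```python
def letterbox_shape(height, width, new_size=640, stride=32, auto=True):
    """Return ((resized_h, resized_w), (pad_h, pad_w)) for an input image.

    The image is scaled by a single ratio so that it fits inside a
    new_size x new_size square. With auto=True the padding is reduced to
    the minimum that makes both sides a multiple of `stride`.
    """
    ratio = min(new_size / height, new_size / width)
    resized_w, resized_h = round(width * ratio), round(height * ratio)

    # Padding needed to fill the full square.
    pad_w, pad_h = new_size - resized_w, new_size - resized_h
    if auto:
        # Keep only the remainder modulo the stride (minimal padding).
        pad_w, pad_h = pad_w % stride, pad_h % stride
    return (resized_h, resized_w), (pad_h, pad_w)


# A 1080x1920 frame is resized to 360x640; padding 24 rows gives 384x640.
print(letterbox_shape(1080, 1920))
```

Padding to a stride multiple instead of a full square means fewer wasted pixels at inference time, which is where the speed gain comes from.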

3 Key Code

# Model definition code from the YOLOv5 repository (https://github.com/ultralytics/yolov5).
# Names not defined here (LOGGER, check_version, check_anchor_order, make_divisible, scale_img,
# time_sync, thop, Conv, C3, AutoShape, ...) come from that repository's own modules.
import math
from copy import deepcopy
from pathlib import Path

import torch
import torch.nn as nn


class Detect(nn.Module):
    stride = None  # strides computed during build
    onnx_dynamic = False  # ONNX export parameter

    def __init__(self, nc=80, anchors=(), ch=(), inplace=True):  # detection layer
        super().__init__()
        self.nc = nc  # number of classes
        self.no = nc + 5  # number of outputs per anchor
        self.nl = len(anchors)  # number of detection layers
        self.na = len(anchors[0]) // 2  # number of anchors
        self.grid = [torch.zeros(1)] * self.nl  # init grid
        self.anchor_grid = [torch.zeros(1)] * self.nl  # init anchor grid
        self.register_buffer('anchors', torch.tensor(anchors).float().view(self.nl, -1, 2))  # shape(nl,na,2)
        self.m = nn.ModuleList(nn.Conv2d(x, self.no * self.na, 1) for x in ch)  # output conv
        self.inplace = inplace  # use in-place ops (e.g. slice assignment)

    def forward(self, x):
        z = []  # inference output
        for i in range(self.nl):
            x[i] = self.m[i](x[i])  # conv
            bs, _, ny, nx = x[i].shape  # x(bs,255,20,20) to x(bs,3,20,20,85)
            x[i] = x[i].view(bs, self.na, self.no, ny, nx).permute(0, 1, 3, 4, 2).contiguous()

            if not self.training:  # inference
                if self.onnx_dynamic or self.grid[i].shape[2:4] != x[i].shape[2:4]:
                    self.grid[i], self.anchor_grid[i] = self._make_grid(nx, ny, i)

                y = x[i].sigmoid()
                if self.inplace:
                    y[..., 0:2] = (y[..., 0:2] * 2 - 0.5 + self.grid[i]) * self.stride[i]  # xy
                    y[..., 2:4] = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i]  # wh
                else:  # for YOLOv5 on AWS Inferentia https://github.com/ultralytics/yolov5/pull/2953
                    xy = (y[..., 0:2] * 2 - 0.5 + self.grid[i]) * self.stride[i]  # xy
                    wh = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i]  # wh
                    y = torch.cat((xy, wh, y[..., 4:]), -1)
                z.append(y.view(bs, -1, self.no))

        return x if self.training else (torch.cat(z, 1), x)

    def _make_grid(self, nx=20, ny=20, i=0):
        d = self.anchors[i].device
        if check_version(torch.__version__, '1.10.0'):  # torch>=1.10.0 meshgrid workaround for torch>=0.7 compatibility
            yv, xv = torch.meshgrid([torch.arange(ny).to(d), torch.arange(nx).to(d)], indexing='ij')
        else:
            yv, xv = torch.meshgrid([torch.arange(ny).to(d), torch.arange(nx).to(d)])
        grid = torch.stack((xv, yv), 2).expand((1, self.na, ny, nx, 2)).float()
        anchor_grid = (self.anchors[i].clone() * self.stride[i]) \
            .view((1, self.na, 1, 1, 2)).expand((1, self.na, ny, nx, 2)).float()
        return grid, anchor_grid
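The box decoding inside `Detect.forward` can be followed on a single prediction without tensors. The sketch below reproduces the same two formulas, `(sigmoid * 2 - 0.5 + grid) * stride` for the center and `(sigmoid * 2) ** 2 * anchor` for the size, in plain Python. The helper names `sigmoid` and `decode_box` and the example numbers are hypothetical.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def decode_box(raw, cell_xy, anchor_wh, stride):
    """Decode one raw prediction into a pixel-space box (cx, cy, w, h).

    raw       -- (tx, ty, tw, th) network outputs before the sigmoid
    cell_xy   -- (column, row) index of the grid cell
    anchor_wh -- anchor width and height in pixels (anchor * stride)
    stride    -- downsampling factor of this detection layer
    """
    tx, ty, tw, th = raw
    gx, gy = cell_xy
    aw, ah = anchor_wh
    # Center: the offset lies in (-0.5, 1.5) cells relative to the cell corner.
    cx = (sigmoid(tx) * 2 - 0.5 + gx) * stride
    cy = (sigmoid(ty) * 2 - 0.5 + gy) * stride
    # Size: the scale factor lies in (0, 4) times the anchor size.
    w = (sigmoid(tw) * 2) ** 2 * aw
    h = (sigmoid(th) * 2) ** 2 * ah
    return cx, cy, w, h


# All-zero logits land on the cell center and reproduce the anchor size.
print(decode_box((0, 0, 0, 0), cell_xy=(3, 5), anchor_wh=(10, 13), stride=8))
```

Because the size factor is bounded by 4, a prediction can never grow without limit relative to its anchor, which tends to keep training stable.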

class Model(nn.Module):
    def __init__(self, cfg='yolov5s.yaml', ch=3, nc=None, anchors=None):  # model, input channels, number of classes
        super().__init__()
        if isinstance(cfg, dict):
            self.yaml = cfg  # model dict
        else:  # is *.yaml
            import yaml  # for torch hub
            self.yaml_file = Path(cfg).name
            with open(cfg, encoding='ascii', errors='ignore') as f:
                self.yaml = yaml.safe_load(f)  # model dict

        # Define model
        ch = self.yaml['ch'] = self.yaml.get('ch', ch)  # input channels
        if nc and nc != self.yaml['nc']:
            LOGGER.info(f"Overriding model.yaml nc={self.yaml['nc']} with nc={nc}")
            self.yaml['nc'] = nc  # override yaml value
        if anchors:
            LOGGER.info(f'Overriding model.yaml anchors with anchors={anchors}')
            self.yaml['anchors'] = round(anchors)  # override yaml value
        self.model, self.save = parse_model(deepcopy(self.yaml), ch=[ch])  # model, savelist
        self.names = [str(i) for i in range(self.yaml['nc'])]  # default names
        self.inplace = self.yaml.get('inplace', True)

        # Build strides, anchors
        m = self.model[-1]  # Detect()
        if isinstance(m, Detect):
            s = 256  # 2x min stride
            m.inplace = self.inplace
            m.stride = torch.tensor([s / x.shape[-2] for x in self.forward(torch.zeros(1, ch, s, s))])  # forward
            m.anchors /= m.stride.view(-1, 1, 1)
            check_anchor_order(m)
            self.stride = m.stride
            self._initialize_biases()  # only run once

        # Init weights, biases
        initialize_weights(self)
        self.info()
        LOGGER.info('')

    def forward(self, x, augment=False, profile=False, visualize=False):
        if augment:
            return self._forward_augment(x)  # augmented inference, None
        return self._forward_once(x, profile, visualize)  # single-scale inference, train

    def _forward_augment(self, x):
        img_size = x.shape[-2:]  # height, width
        s = [1, 0.83, 0.67]  # scales
        f = [None, 3, None]  # flips (2-ud, 3-lr)
        y = []  # outputs
        for si, fi in zip(s, f):
            xi = scale_img(x.flip(fi) if fi else x, si, gs=int(self.stride.max()))
            yi = self._forward_once(xi)[0]  # forward
            # cv2.imwrite(f'img_{si}.jpg', 255 * xi[0].cpu().numpy().transpose((1, 2, 0))[:, :, ::-1])  # save
            yi = self._descale_pred(yi, fi, si, img_size)
            y.append(yi)
        y = self._clip_augmented(y)  # clip augmented tails
        return torch.cat(y, 1), None  # augmented inference, train

    def _forward_once(self, x, profile=False, visualize=False):
        y, dt = [], []  # outputs
        for m in self.model:
            if m.f != -1:  # if not from previous layer
                x = y[m.f] if isinstance(m.f, int) else [x if j == -1 else y[j] for j in m.f]  # from earlier layers
            if profile:
                self._profile_one_layer(m, x, dt)
            x = m(x)  # run
            y.append(x if m.i in self.save else None)  # save output
            if visualize:
                feature_visualization(x, m.type, m.i, save_dir=visualize)
        return x

    def _descale_pred(self, p, flips, scale, img_size):
        # de-scale predictions following augmented inference (inverse operation)
        if self.inplace:
            p[..., :4] /= scale  # de-scale
            if flips == 2:
                p[..., 1] = img_size[0] - p[..., 1]  # de-flip ud
            elif flips == 3:
                p[..., 0] = img_size[1] - p[..., 0]  # de-flip lr
        else:
            x, y, wh = p[..., 0:1] / scale, p[..., 1:2] / scale, p[..., 2:4] / scale  # de-scale
            if flips == 2:
                y = img_size[0] - y  # de-flip ud
            elif flips == 3:
                x = img_size[1] - x  # de-flip lr
            p = torch.cat((x, y, wh, p[..., 4:]), -1)
        return p

    def _clip_augmented(self, y):
        # Clip YOLOv5 augmented inference tails
        nl = self.model[-1].nl  # number of detection layers (P3-P5)
        g = sum(4 ** x for x in range(nl))  # grid points
        e = 1  # exclude layer count
        i = (y[0].shape[1] // g) * sum(4 ** x for x in range(e))  # indices
        y[0] = y[0][:, :-i]  # large
        i = (y[-1].shape[1] // g) * sum(4 ** (nl - 1 - x) for x in range(e))  # indices
        y[-1] = y[-1][:, i:]  # small
        return y

    def _profile_one_layer(self, m, x, dt):
        c = isinstance(m, Detect)  # is final layer, copy input as inplace fix
        o = thop.profile(m, inputs=(x.copy() if c else x,), verbose=False)[0] / 1E9 * 2 if thop else 0  # FLOPs
        t = time_sync()
        for _ in range(10):
            m(x.copy() if c else x)
        dt.append((time_sync() - t) * 100)
        if m == self.model[0]:
            LOGGER.info(f"{'time (ms)':>10s}{'GFLOPs':>10s}{'params':>10s}{'module'}")
        LOGGER.info(f'{dt[-1]:10.2f}{o:10.2f}{m.np:10.0f}{m.type}')
        if c:
            LOGGER.info(f"{sum(dt):10.2f}{'-':>10s}{'-':>10s} Total")

    def _initialize_biases(self, cf=None):  # initialize biases into Detect(), cf is class frequency
        # https://arxiv.org/abs/1708.02002 section 3.3
        # cf = torch.bincount(torch.tensor(np.concatenate(dataset.labels, 0)[:, 0]).long(), minlength=nc) + 1.
        m = self.model[-1]  # Detect() module
        for mi, s in zip(m.m, m.stride):  # from
            b = mi.bias.view(m.na, -1)  # conv.bias(255) to (3,85)
            b.data[:, 4] += math.log(8 / (640 / s) ** 2)  # obj (8 objects per 640 image)
            b.data[:, 5:] += math.log(0.6 / (m.nc - 0.999999)) if cf is None else torch.log(cf / cf.sum())  # cls
            mi.bias = torch.nn.Parameter(b.view(-1), requires_grad=True)

    def _print_biases(self):
        m = self.model[-1]  # Detect() module
        for mi in m.m:  # from
            b = mi.bias.detach().view(m.na, -1).T  # conv.bias(255) to (3,85)
            LOGGER.info(
                ('%6g Conv2d.bias:' + '%10.3g' * 6) % (mi.weight.shape[1], *b[:5].mean(1).tolist(), b[5:].mean()))

    # def _print_weights(self):
    #     for m in self.model.modules():
    #         if type(m) is Bottleneck:
    #             LOGGER.info('%10.3g' % (m.w.detach().sigmoid() * 2))  # shortcut weights

    def fuse(self):  # fuse model Conv2d() + BatchNorm2d() layers
        LOGGER.info('Fusing layers... ')
        for m in self.model.modules():
            if isinstance(m, (Conv, DWConv)) and hasattr(m, 'bn'):
                m.conv = fuse_conv_and_bn(m.conv, m.bn)  # update conv
                delattr(m, 'bn')  # remove batchnorm
                m.forward = m.forward_fuse  # update forward
        self.info()
        return self

    def autoshape(self):  # add AutoShape module
        LOGGER.info('Adding AutoShape... ')
        m = AutoShape(self)  # wrap model
        copy_attr(m, self, include=('yaml', 'nc', 'hyp', 'names', 'stride'), exclude=())  # copy attributes
        return m

    def info(self, verbose=False, img_size=640):  # print model information
        model_info(self, verbose, img_size)

    def _apply(self, fn):
        # Apply to(), cpu(), cuda(), half() to model tensors that are not parameters or registered buffers
        self = super()._apply(fn)
        m = self.model[-1]  # Detect()
        if isinstance(m, Detect):
            m.stride = fn(m.stride)
            m.grid = list(map(fn, m.grid))
            if isinstance(m.anchor_grid, list):
                m.anchor_grid = list(map(fn, m.anchor_grid))
        return self
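The two constants added in `_initialize_biases` are easier to read with numbers plugged in. The sketch below recomputes only the increments from that method (the existing bias values they are added to are not modeled); the helper names are hypothetical, and the two-class example is an assumption for a mask / no-mask dataset.

```python
import math


def objectness_bias(stride, image_size=640, objects_per_image=8):
    """Increment added to the objectness bias of one detection layer."""
    cells = (image_size / stride) ** 2  # grid cells at this stride
    return math.log(objects_per_image / cells)


def class_bias(num_classes):
    """Increment added to every class bias when no class frequency is given."""
    return math.log(0.6 / (num_classes - 0.999999))


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


for stride in (8, 16, 32):
    b = objectness_bias(stride)
    # sigmoid(b) is the objectness this increment alone would produce.
    print(stride, round(b, 3), round(sigmoid(b), 5))

print(round(class_bias(2), 3))  # two classes, e.g. mask / no mask
```

The effect is that training starts from a prior that says objects are rare: at stride 8 a 640-pixel image has 6400 cells, so the increment alone corresponds to an objectness close to 8/6400.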

def parse_model(d, ch):  # model_dict, input_channels(3)
    LOGGER.info(f"\n{'':>3}{'from':>18}{'n':>3}{'params':>10}{'module':<40}{'arguments':<30}")
    anchors, nc, gd, gw = d['anchors'], d['nc'], d['depth_multiple'], d['width_multiple']
    na = (len(anchors[0]) // 2) if isinstance(anchors, list) else anchors  # number of anchors
    no = na * (nc + 5)  # number of outputs = anchors * (classes + 5)

    layers, save, c2 = [], [], ch[-1]  # layers, savelist, ch out
    for i, (f, n, m, args) in enumerate(d['backbone'] + d['head']):  # from, number, module, args
        m = eval(m) if isinstance(m, str) else m  # eval strings
        for j, a in enumerate(args):
            try:
                args[j] = eval(a) if isinstance(a, str) else a  # eval strings
            except NameError:
                pass

        n = n_ = max(round(n * gd), 1) if n > 1 else n  # depth gain
        if m in [Conv, GhostConv, Bottleneck, GhostBottleneck, SPP, SPPF, DWConv, MixConv2d, Focus, CrossConv,
                 BottleneckCSP, C3, C3TR, C3SPP, C3Ghost]:
            c1, c2 = ch[f], args[0]
            if c2 != no:  # if not output
                c2 = make_divisible(c2 * gw, 8)

            args = [c1, c2, *args[1:]]
            if m in [BottleneckCSP, C3, C3TR, C3Ghost]:
                args.insert(2, n)  # number of repeats
                n = 1
        elif m is nn.BatchNorm2d:
            args = [ch[f]]
        elif m is Concat:
            c2 = sum(ch[x] for x in f)
        elif m is Detect:
            args.append([ch[x] for x in f])
            if isinstance(args[1], int):  # number of anchors
                args[1] = [list(range(args[1] * 2))] * len(f)
        elif m is Contract:
            c2 = ch[f] * args[0] ** 2
        elif m is Expand:
            c2 = ch[f] // args[0] ** 2
        else:
            c2 = ch[f]

        m_ = nn.Sequential(*(m(*args) for _ in range(n))) if n > 1 else m(*args)  # module
        t = str(m)[8:-2].replace('__main__.', '')  # module type
        np = sum(x.numel() for x in m_.parameters())  # number params
        m_.i, m_.f, m_.type, m_.np = i, f, t, np  # attach index, 'from' index, type, number params
        LOGGER.info(f'{i:>3}{str(f):>18}{n_:>3}{np:10.0f}{t:<40}{str(args):<30}')  # print
        save.extend(x % i for x in ([f] if isinstance(f, int) else f) if x != -1)  # append to savelist
        layers.append(m_)
        if i == 0:
            ch = []
        ch.append(c2)
    return nn.Sequential(*layers), sorted(save)
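The "depth gain" and width scaling lines in `parse_model` are what turn one architecture description into the s/m/l/x variants. The sketch below isolates those two lines. `make_divisible` is re-implemented here as an assumption about what the repository helper does (round up to a multiple of the divisor), `scale_layer` is a hypothetical name, and the multiples 0.33 / 0.50 are the values I recall from the yolov5s configuration, so check them against your own `yolov5s.yaml`.

```python
import math


def make_divisible(x, divisor):
    """Round x up to the nearest multiple of divisor (assumed helper behavior)."""
    return math.ceil(x / divisor) * divisor


def scale_layer(channels, repeats, depth_multiple, width_multiple):
    """Apply the depth and width multiples to one layer entry of the config."""
    # Depth: only layers repeated more than once are scaled, never below 1.
    n = max(round(repeats * depth_multiple), 1) if repeats > 1 else repeats
    # Width: scale the output channels and keep them a multiple of 8.
    c = make_divisible(channels * width_multiple, 8)
    return c, n


# A config entry with 1024 channels repeated 9 times, scaled with the
# multiples assumed for YOLOv5s and for an unscaled model.
print(scale_layer(1024, 9, depth_multiple=0.33, width_multiple=0.50))
print(scale_layer(1024, 9, depth_multiple=1.0, width_multiple=1.0))
```

Keeping channel counts at multiples of 8 is a common choice because it tends to map well onto GPU kernels.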

4 数据集

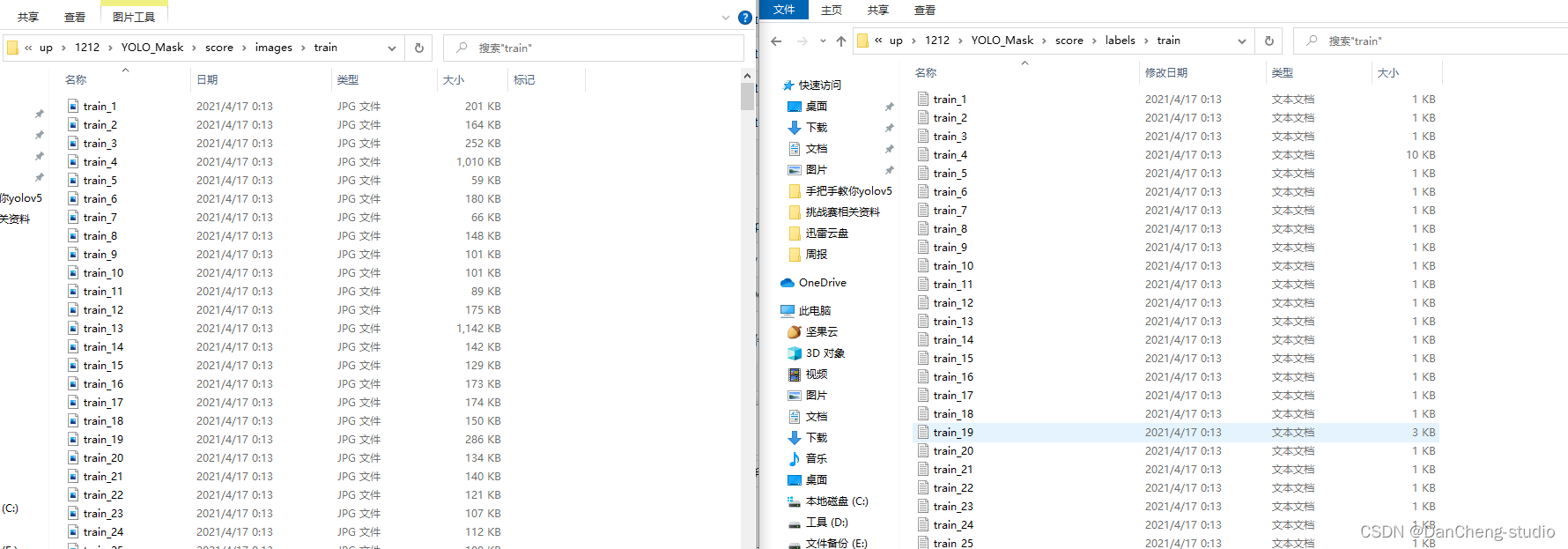

大家可采用公开标注好的数据集。如果为了更深入的学习也可自己标注,但过程相对比较繁琐,麻烦。

以下简单介绍数据标注的相关方法,数据标注这里推荐的软件是labelimg,学长以火灾数据集为例!

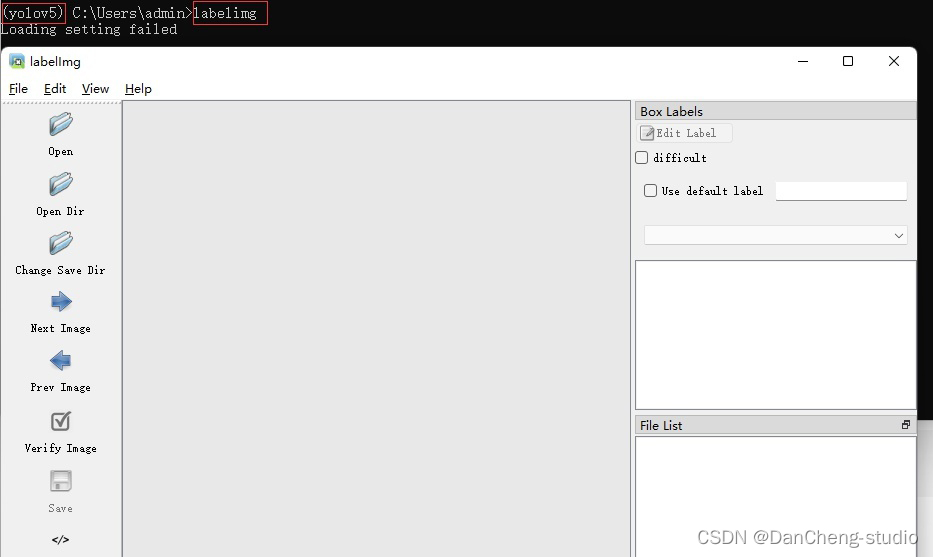

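4.1 Installation

Install it with pip:

pip install labelimg

4.2 Opening the tool

Type labelimg on the command line to open it.

Then open the folder that contains the images you want to annotate.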

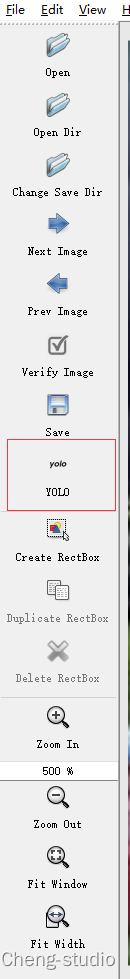

4.3 Selecting the YOLO annotation format

Click the area in the red box to switch the annotation format. We need the YOLO format, so switch to yolo.

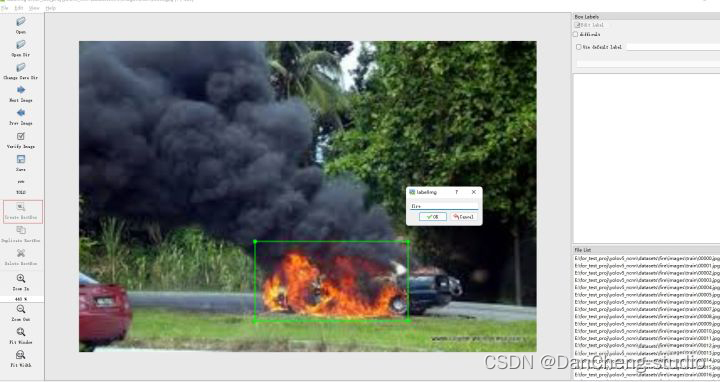

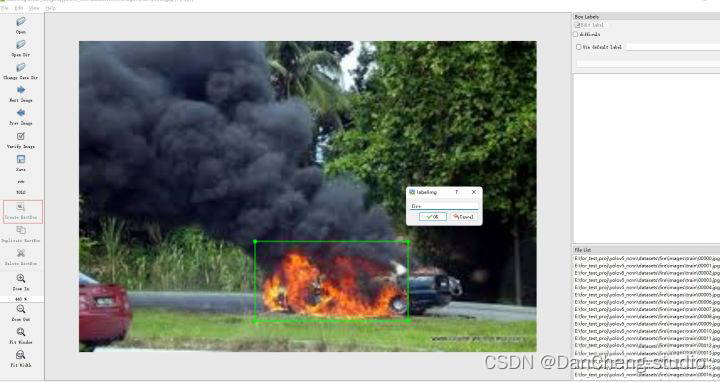

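4.4 Labeling

Click Create RectBox -> drag the mouse to draw a box around the target -> enter the label -> click OK.

Note: to delete a target, right-click its region and choose delete.

4.5 Saving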

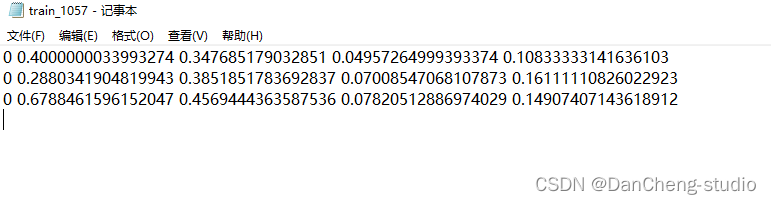

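Click save to save the txt file.

Open an annotation file and you will see content like the following. Each line of the txt file describes one target, with fields separated by spaces: the class id of the target, followed by the normalized center x coordinate, center y coordinate, and the width w and height h of the bounding box.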
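As an illustration of that format, the sketch below converts one label line back into pixel coordinates. The function name `yolo_line_to_pixels` and the sample line are made up for this example; real lines come from the txt files that labelimg writes.

```python
def yolo_line_to_pixels(line, img_w, img_h):
    """Convert 'class xc yc w h' (normalized) into (class_id, (x1, y1, x2, y2)) in pixels."""
    cls, xc, yc, w, h = line.split()
    # Undo the normalization: x and w scale with image width, y and h with height.
    xc, w = float(xc) * img_w, float(w) * img_w
    yc, h = float(yc) * img_h, float(h) * img_h
    # Convert center/size to top-left and bottom-right corners.
    return int(cls), (xc - w / 2, yc - h / 2, xc + w / 2, yc + h / 2)


# Hypothetical label line for a 640x480 image.
print(yolo_line_to_pixels("0 0.5 0.5 0.25 0.5", img_w=640, img_h=480))
```

Because the coordinates are normalized, the same label file stays valid if the image is later resized.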

5 Training

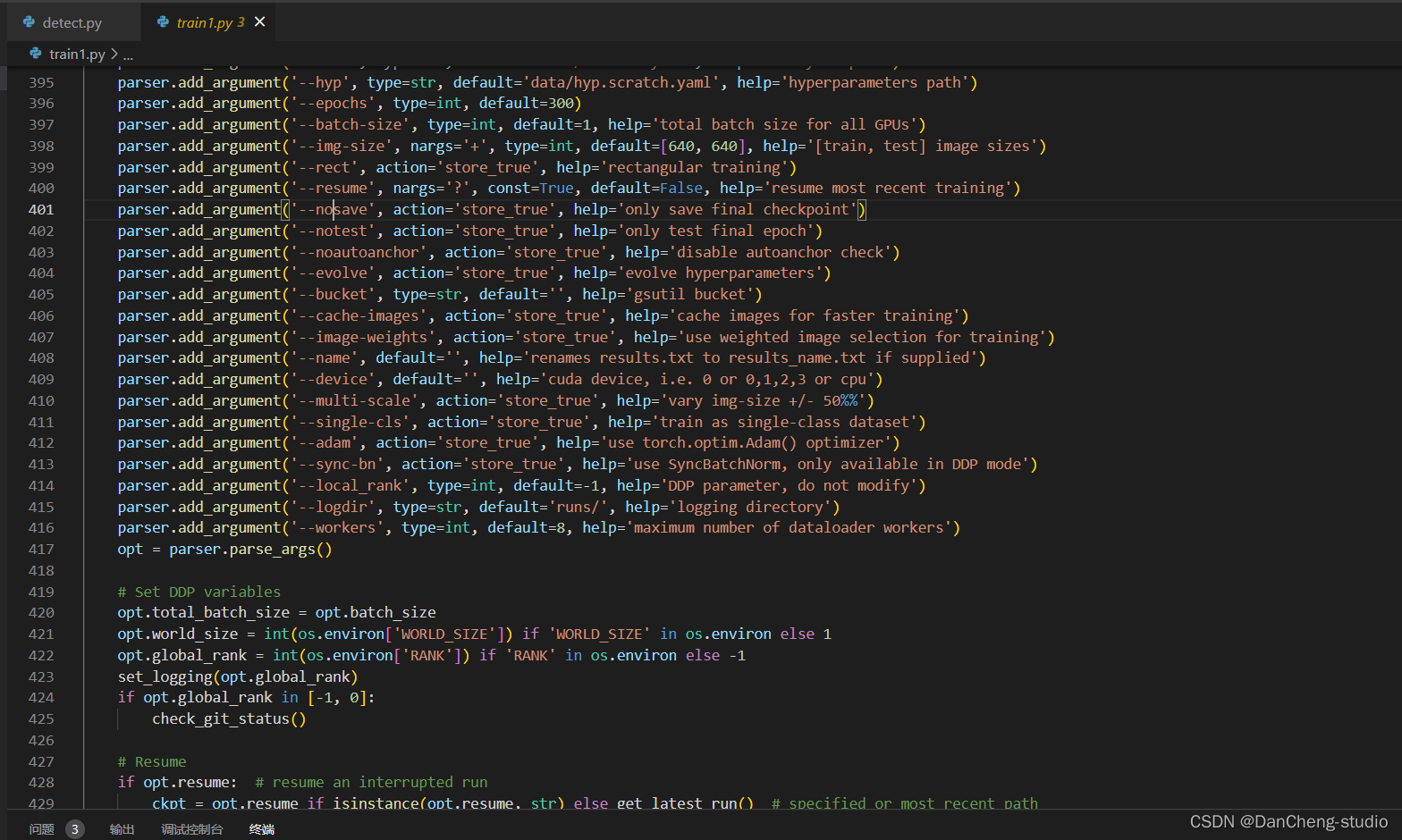

Modify the weights, cfg, data, epochs, batch_size, imgsz, device, workers and related parameters in train.py.
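Instead of editing the defaults inside train.py, the same parameters can usually be passed on the command line. The sketch below only assembles such a command as a list. The flag spellings follow the argparse options of the YOLOv5 train.py as I recall them, so confirm them with `python train.py --help` for your copy; `data/mask.yaml` and all of the values are hypothetical.

```python
import sys


def build_train_command(weights, cfg, data, epochs, batch_size, imgsz, device, workers):
    """Assemble a train.py invocation as an argument list for subprocess.run."""
    return [
        sys.executable, "train.py",
        "--weights", weights,            # pretrained checkpoint to start from
        "--cfg", cfg,                    # model architecture yaml
        "--data", data,                  # dataset yaml (paths, class count, names)
        "--epochs", str(epochs),
        "--batch-size", str(batch_size),
        "--imgsz", str(imgsz),           # training image size
        "--device", str(device),         # GPU index, or "cpu"
        "--workers", str(workers),       # dataloader worker processes
    ]


cmd = build_train_command(
    weights="yolov5s.pt",
    cfg="models/yolov5s.yaml",
    data="data/mask.yaml",  # hypothetical dataset description for the mask data
    epochs=100, batch_size=16, imgsz=640, device=0, workers=4,
)
print(" ".join(cmd))
# To launch from the repository root: subprocess.run(cmd, check=True)
```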

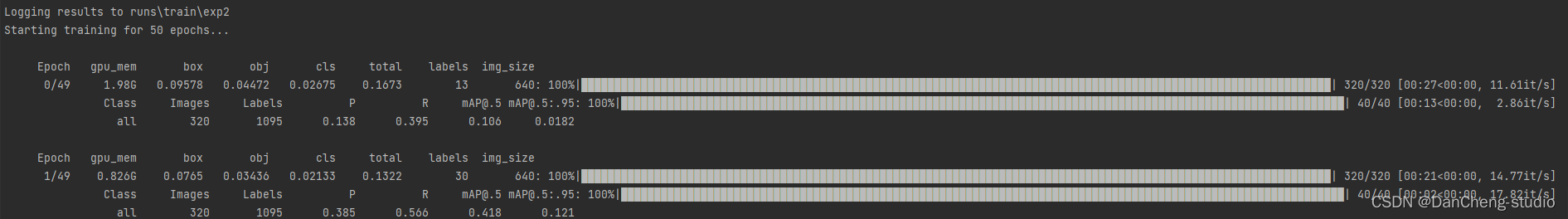

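Once the training script starts successfully, it prints the following information to the command line. After that, just wait for training to finish.

6 Results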

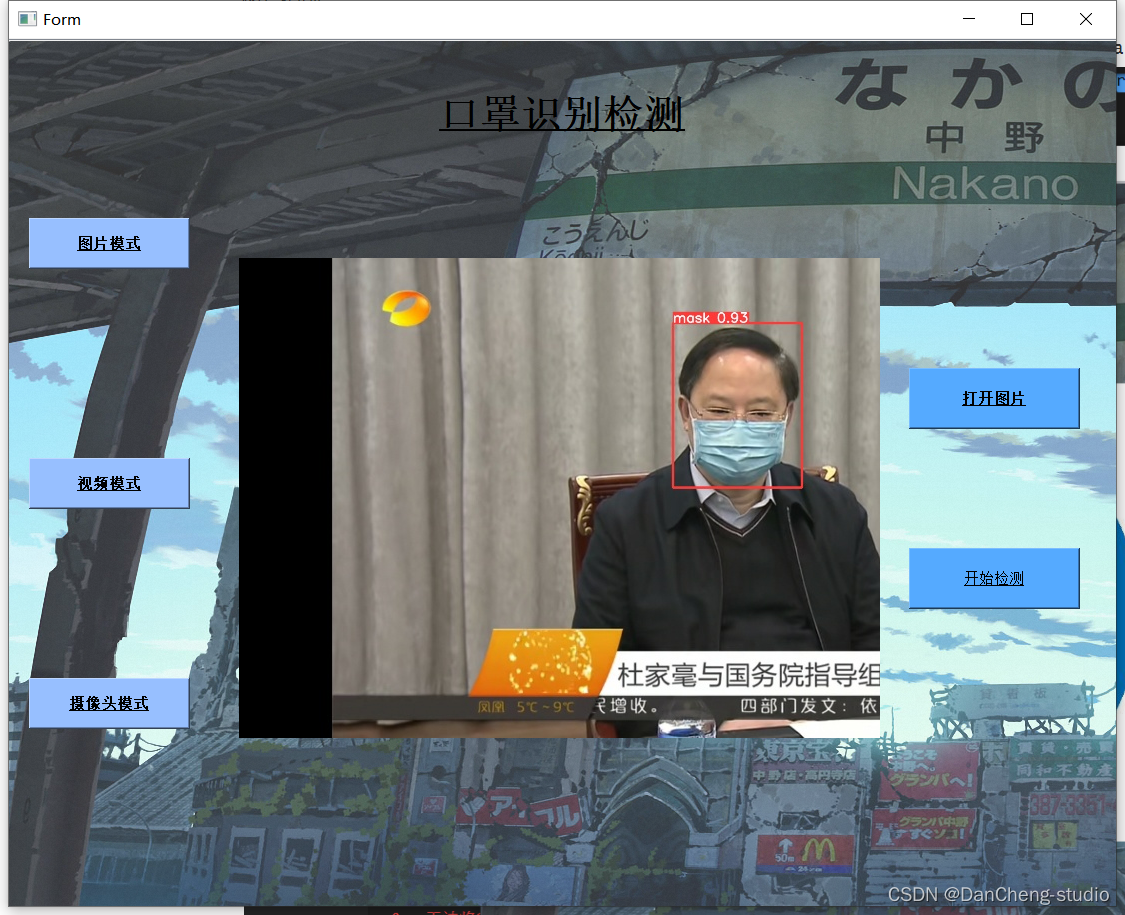

6.1 A simple GUI with PyQt

from PyQt5 import QtCore, QtGui, QtWidgets


class Ui_Win_mask(object):
    def setupUi(self, Win_mask):
        Win_mask.setObjectName("Win_mask")
        Win_mask.resize(1107, 868)
        Win_mask.setStyleSheet("QString qstrStylesheet = \"background-color:rgb(43, 43, 255)\";\n"
                               "ui.pushButton->setStyleSheet(qstrStylesheet);")

        # Left-hand frame holding three buttons
        self.frame = QtWidgets.QFrame(Win_mask)
        self.frame.setGeometry(QtCore.QRect(10, 140, 201, 701))
        self.frame.setFrameShape(QtWidgets.QFrame.StyledPanel)
        self.frame.setFrameShadow(QtWidgets.QFrame.Raised)
        self.frame.setObjectName("frame")
        self.pushButton = QtWidgets.QPushButton(self.frame)
        self.pushButton.setGeometry(QtCore.QRect(10, 40, 161, 51))
        font = QtGui.QFont()
        font.setBold(True)
        font.setUnderline(True)
        font.setWeight(75)
        self.pushButton.setFont(font)
        self.pushButton.setStyleSheet("QPushButton{background-color:rgb(151, 191, 255);}")
        self.pushButton.setObjectName("pushButton")
        self.pushButton_2 = QtWidgets.QPushButton(self.frame)
        self.pushButton_2.setGeometry(QtCore.QRect(10, 280, 161, 51))
        font = QtGui.QFont()
        font.setBold(True)
        font.setUnderline(True)
        font.setWeight(75)
        self.pushButton_2.setFont(font)
        self.pushButton_2.setStyleSheet("QPushButton{background-color:rgb(151, 191, 255);}")
        self.pushButton_2.setObjectName("pushButton_2")
        self.pushButton_3 = QtWidgets.QPushButton(self.frame)
        self.pushButton_3.setGeometry(QtCore.QRect(10, 500, 161, 51))
        font = QtGui.QFont()
        font.setBold(True)
        font.setUnderline(True)
        font.setWeight(75)
        font.setStrikeOut(False)
        self.pushButton_3.setFont(font)
        self.pushButton_3.setStyleSheet("QPushButton{background-color:rgb(151, 191, 255);}")
        self.pushButton_3.setObjectName("pushButton_3")

        # Right-hand frame holding the stacked pages
        self.frame_2 = QtWidgets.QFrame(Win_mask)
        self.frame_2.setGeometry(QtCore.QRect(230, 110, 1031, 861))
        self.frame_2.setStyleSheet("")
        self.frame_2.setFrameShape(QtWidgets.QFrame.StyledPanel)
        self.frame_2.setFrameShadow(QtWidgets.QFrame.Raised)
        self.frame_2.setObjectName("frame_2")
        self.show_picture_page = QtWidgets.QStackedWidget(self.frame_2)
        self.show_picture_page.setGeometry(QtCore.QRect(-10, 0, 871, 731))
        font = QtGui.QFont()
        font.setBold(True)
        font.setWeight(75)
        self.show_picture_page.setFont(font)
        self.show_picture_page.setObjectName("show_picture_page")

        # Page 0: photo
        self.photo = QtWidgets.QWidget()
        self.photo.setObjectName("photo")
        self.label = QtWidgets.QLabel(self.photo)
        self.label.setGeometry(QtCore.QRect(10, 30, 641, 641))
        font = QtGui.QFont()
        font.setFamily("Arial")
        font.setPointSize(36)
        self.label.setFont(font)
        self.label.setText("")
        self.label.setPixmap(QtGui.QPixmap("./images/UI/up.jpeg"))
        self.label.setObjectName("label")
        self.pushButton_4 = QtWidgets.QPushButton(self.photo)
        self.pushButton_4.setGeometry(QtCore.QRect(680, 220, 171, 61))
        font = QtGui.QFont()
        font.setBold(True)
        font.setUnderline(True)
        font.setWeight(75)
        self.pushButton_4.setFont(font)
        self.pushButton_4.setStyleSheet("QPushButton{background-color:rgb(85, 170, 255);}")
        self.pushButton_4.setObjectName("pushButton_4")
        self.pushButton_5 = QtWidgets.QPushButton(self.photo)
        self.pushButton_5.setGeometry(QtCore.QRect(680, 400, 171, 61))
        font = QtGui.QFont()
        font.setUnderline(True)
        self.pushButton_5.setFont(font)
        self.pushButton_5.setStyleSheet("QPushButton{background-color:rgb(85, 170, 255);}")
        self.pushButton_5.setObjectName("pushButton_5")
        self.show_picture_page.addWidget(self.photo)

        # Page 1: videos
        self.videos = QtWidgets.QWidget()
        self.videos.setObjectName("videos")
        self.vid_img = QtWidgets.QLabel(self.videos)
        self.vid_img.setGeometry(QtCore.QRect(10, 30, 640, 640))
        font = QtGui.QFont()
        font.setFamily("Arial")
        font.setPointSize(36)
        self.vid_img.setFont(font)
        self.vid_img.setText("")
        self.vid_img.setPixmap(QtGui.QPixmap("./images/UI/up.jpeg"))
        self.vid_img.setObjectName("vid_img")
        self.mp4_detection_btn = QtWidgets.QPushButton(self.videos)
        self.mp4_detection_btn.setGeometry(QtCore.QRect(680, 220, 171, 61))
        font = QtGui.QFont()
        font.setBold(True)
        font.setUnderline(True)
        font.setWeight(75)
        self.mp4_detection_btn.setFont(font)
        self.mp4_detection_btn.setStyleSheet("QPushButton{background-color:rgb(85, 170, 255);}")
        self.mp4_detection_btn.setObjectName("mp4_detection_btn")
        self.vid_stop_btn = QtWidgets.QPushButton(self.videos)
        self.vid_stop_btn.setGeometry(QtCore.QRect(680, 400, 171, 61))
        font = QtGui.QFont()
        font.setBold(True)
        font.setUnderline(True)
        font.setWeight(75)
        self.vid_stop_btn.setFont(font)
        self.vid_stop_btn.setStyleSheet("QPushButton{background-color:rgb(85, 170, 255);}")
        self.vid_stop_btn.setObjectName("vid_stop_btn")
        self.show_picture_page.addWidget(self.videos)

        # Page 2: camera
        self.camera = QtWidgets.QWidget()
        self.camera.setObjectName("camera")
        self.webcam_detection_btn = QtWidgets.QPushButton(self.camera)
        self.webcam_detection_btn.setGeometry(QtCore.QRect(680, 220, 171, 61))
        self.webcam_detection_btn.setBaseSize(QtCore.QSize(2, 2))
        font = QtGui.QFont()
        font.setBold(True)
        font.setUnderline(True)
        font.setWeight(75)
        self.webcam_detection_btn.setFont(font)
        self.webcam_detection_btn.setStyleSheet("QPushButton{background-color:rgb(85, 170, 255);}")
        self.webcam_detection_btn.setObjectName("webcam_detection_btn")
        self.cam_img = QtWidgets.QLabel(self.camera)
        self.cam_img.setGeometry(QtCore.QRect(10, 30, 640, 640))
        font = QtGui.QFont()
        font.setFamily("Arial")
        font.setPointSize(36)
        self.cam_img.setFont(font)
        self.cam_img.setText("")
        self.cam_img.setPixmap(QtGui.QPixmap("./images/UI/up.jpeg"))
        self.cam_img.setObjectName("cam_img")
        self.vid_stop_btn_cma = QtWidgets.QPushButton(self.camera)
        self.vid_stop_btn_cma.setGeometry(QtCore.QRect(680, 400, 171, 61))
        font = QtGui.QFont()
        font.setBold(True)
        font.setUnderline(True)
        font.setWeight(75)
        self.vid_stop_btn_cma.setFont(font)
        self.vid_stop_btn_cma.setStyleSheet("QPushButton{background-color:rgb(85, 170, 255);}")
        self.vid_stop_btn_cma.setObjectName("vid_stop_btn_cma")
        self.show_picture_page.addWidget(self.camera)

        # Title label
        self.label_2 = QtWidgets.QLabel(Win_mask)
        self.label_2.setGeometry(QtCore.QRect(430, 40, 251, 71))
        font = QtGui.QFont()
        font.setPointSize(24)
        font.setBold(True)
        font.setItalic(False)
        font.setUnderline(True)
        font.setWeight(75)
        self.label_2.setFont(font)
        self.label_2.setStyleSheet("Font{background-color:rgb(85, 170, 255);}")
        self.label_2.setObjectName("label_2")

        # Background list view; it is raised first so the other widgets end up on top of it
        self.listView = QtWidgets.QListView(Win_mask)
        self.listView.setGeometry(QtCore.QRect(-5, 1, 1121, 871))
        self.listView.setStyleSheet(" \n"
                                    "background-image: url(:/bg.png);")
        self.listView.setObjectName("listView")
        self.listView.raise_()
        self.frame.raise_()
        self.frame_2.raise_()
        self.label_2.raise_()

        self.retranslateUi(Win_mask)  # retranslateUi is not shown in this excerpt
        self.show_picture_page.setCurrentIndex(0)
        QtCore.QMetaObject.connectSlotsByName(Win_mask)

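6.2 Image detection results

6.3 Video detection results

6.4 Real-time webcam detection

7 Finally

Copyright belongs to the original author caxiou. If there is any infringement, please contact us for removal.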