hadoop集群搭建

hadoop摘要

Hadoop 是一个开源的分布式存储和计算框架,旨在处理大规模数据集并提供高可靠性、高性能的数据处理能力。它主要包括以下几个核心组件:

- Hadoop 分布式文件系统(HDFS):HDFS 是 Hadoop 的分布式文件存储系统,用于存储大规模数据,并通过数据的副本和自动故障恢复机制来提供高可靠性和容错性。

- YARN(Yet Another Resource Negotiator):YARN 是 Hadoop 的资源管理平台,用于调度和管理集群中的计算资源,支持多种数据处理框架的并行计算,如 MapReduce、Spark 等。

- MapReduce:MapReduce 是 Hadoop 最初提出的一种分布式计算编程模型,用于并行处理大规模数据集。它将计算任务分解为 Map 和 Reduce 两个阶段,充分利用集群的计算资源进行并行计算。

- Hadoop 生态系统:除了上述核心组件外,Hadoop 还包括了一系列相关项目和工具,如 HBase(分布式列存数据库)、Hive(数据仓库基础设施)、Spark(快速通用的集群计算系统)等,构成了完整的大数据处理生态系统。

总的来说,Hadoop 提供了一套强大的工具和框架,使得用户能够在分布式环境下高效地存储、处理和分析大规模数据,是大数据领域的重要基础设施之一。

基础环境

规划

hadoop001hadoop02hadoop003HDFSNameNodeDataNodeSecondaryNameNode DateNodeYARNNodeManagerResourceManager NodeManagerNodeManager

关闭防火墙 安全模块

systemctl disable --now firewalld

#关闭并且禁止防火墙自启动

setenforce 0#关闭增强模块sed-i's/SELINUX=enforcing/SELINUX=permissive/g' /etc/selinux/config

#禁止自启动

添加主机地址映射

cat>> /etc/hosts <<lxf

192.168.200.41 hadoop001

192.168.200.42 hadoop002

192.168.200.43 hadoop003

lxf

传递软件包

#最好创建一个独立目录存放软件包#[root@hadoop001 ~]# mkdir -p /export/{data,servers,software}[root@hadoop001 ~]# tree /export/

/export/

├── data #存放数据

├── servers #安装服务

└── software #存放服务的软件包3 directories, 0 files

[root@hadoop001 ~]#

配置ssh免密

master 和两台slave都要配置免密

#在hadoop001节点[root@hadoop001 ~]#[root@hadoop001 ~]# ssh-keygen -t rsa [root@hadoop001 ~]#

Generating public/private rsa key pair.

Enter fileinwhich to save the key (/root/.ssh/id_rsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:2AY6p3kFWziXFmXH7e/PHgrAVZ9H7hkCWAtCc19UwZw root@hadoop001

The key's randomart image is:

+---[RSA 2048]----+

| .+.+=+o=+o+|

| .+=o.* oE.|

| = = + o +o|

| . @. . o.+|

| o + So o.|

| = o . .|

| o . . o |

| . . ..o|

| . .=|

+----[SHA256]-----+

[root@hadoop001 ~]#

[root@hadoop001 ~]# hosts=("hadoop001" "hadoop002" "hadoop003"); for host in "${hosts[@]}"; do echo "将公钥分发到 $host"; ssh-copy-id $host; done

将公钥分发到 hadoop001

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/root/.ssh/id_rsa.pub"

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@hadoop001's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'hadoop001'"

and check to make sure that only the key(s) you wanted were added.

将公钥分发到 hadoop002

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/root/.ssh/id_rsa.pub"

The authenticity of host'hadoop002 (192.168.200.42)' can't be established.

ECDSA key fingerprint is SHA256:ADFjDGD2MxgCqL5fQuWhn+0T5drPiTXERvlMiu/QXjA.

ECDSA key fingerprint is MD5:d2:2b:06:cb:13:48:e0:87:d7:f3:87:8b:2c:56:e4:da.

Are you sure you want to continue connecting (yes/no)? yes

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@hadoop002's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'hadoop002'"

and check to make sure that only the key(s) you wanted were added.

将公钥分发到 hadoop003

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/root/.ssh/id_rsa.pub"

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@hadoop003's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'hadoop003'"

and check to make sure that only the key(s) you wanted were added.

[root@hadoop001 ~]#[root@hadoop001 ~]# #在hadoop002和hadoop003重复操作 使得三台节点可以互相通信

安装服务

安装java hadoop

[root@hadoop001]# tree -L 2 /export/

/export/

├── data #因为还没有启动服务所以没有数据文件

├── servers

│ ├── hadoop-3.3.6

│ └── jdk1.8.0_221

└── software

├── hadoop-3.3.6-aarch64.tar.gz

└── jdk-8u221-linux-x64.tar.gz

5 directories, 2 files

[root@hadoop001 export]# #同样在另外两个节点分别解压到对应目录

配置环境变量

#/etc/profile.d/my_env.sh 我们可以专门为hadoop设置一个环境变量文件夹用作修改,而不去直接修改/etc/profile文件,这样在系统启动时或者用户登录时会自动加载这些环境变量。[root@hadoop001 ~]# cat >> /etc/profile.d/my_env.sh << lxf >#JAVA_HOME>exportJAVA_HOME=/export/servers/jdk1.8.0_221

>exportPATH=$PATH:$JAVA_HOME/bin

>#HADOOP_HOME>exportHADOOP_HOME=/export/servers/hadoop-3.3.6

>exportPATH=$PATH:$HADOOP_HOME/bin

>exportPATH=$PATH:$HADOOP_HOME/sbin

> lxf

[root@hadoop001 ~]# source /etc/profile[root@hadoop001 ~]# echo $HADOOP_HOME

/export/servers/hadoop-3.3.6

[root@hadoop001 ~]# echo $JAVA_HOME

/export/servers/jdk1.8.0_221

[root@hadoop001 ~]# #测试环境变量[root@hadoop001 ~]# hadoop version

Hadoop 3.3.6

Source code repository https://github.com/apache/hadoop.git -r 1be78238728da9266a4f88195058f08fd012bf9c

Compiled by ubuntu on 2023-06-18T23:15Z

Compiled on platform linux-aarch_64

Compiled with protoc 3.7.1

From source with checksum 5652179ad55f76cb287d9c633bb53bbd

This command was run using /export/servers/hadoop-3.3.6/share/hadoop/common/hadoop-common-3.3.6.jar

[root@hadoop001 ~]# java -version java version "1.8.0_221"

Java(TM) SE Runtime Environment (build 1.8.0_221-b11)

Java HotSpot(TM)64-Bit Server VM (build 25.221-b11, mixed mode)[root@hadoop001 ~]# #分发环境配置文件scp /etc/profile.d/my_env.sh hadoop002:/etc/profile.d/my_env.sh

scp /etc/profile.d/my_env.sh hadoop003:/etc/profile.d/my_env.sh

#分发完成后注意测试环境变量是否成功配置

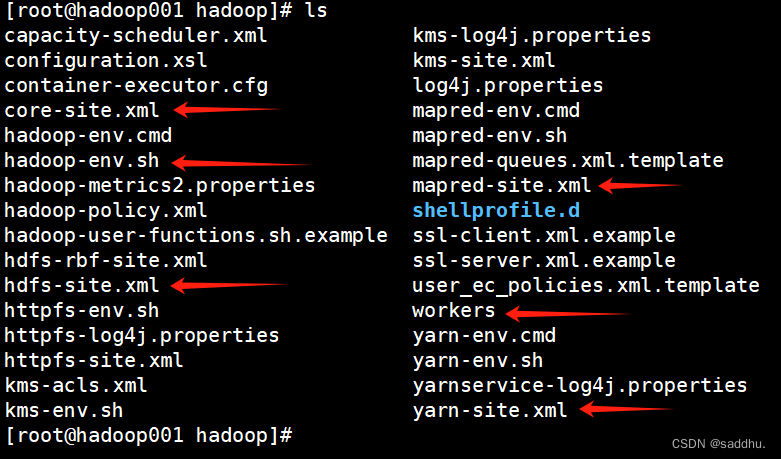

修改Hadoop配置文件

[root@hadoop001 hadoop]# pwd

/export/servers/hadoop-3.3.6/etc/hadoop

[root@hadoop001 hadoop]# grep '^export' hadoop-env.shexportJAVA_HOME=/export/servers/jdk1.8.0_221

exportHDFS_NAMENODE_USER="root"exportHDFS_DATANODE_USER="root"exportHDFS_SECONDARYNAMENODE_USER="root"exportYARN_RESOURCEMANAGER_USER="root"exportYARN_NODEMANAGER_USER="root"exportHADOOP_OS_TYPE=${HADOOP_OS_TYPE:-$(uname -s)}[root@hadoop001 hadoop]#

修改core-site.xml文件

<configuration><property><name>fs.defaultFS</name><value>hdfs://hadoop001:9000</value></property><property><name>hadoop.tmp.dir</name><value>/export/data/hadoop/tmp</value></property></configuration>

修改hdfs-site.xml文件

<configuration><property><name>dfs.relication</name><value>2</value></property><property><name>dfs.namenode.name.dir</name><value>/export/data/hadoop/name</value></property><property><name>dfs.namenode.secondary.http-address</name><value>hadoop002:50090</value></property><property><name>dfs.namenode.data.dir</name><value>/export/data/hadoop/data</value></property></configuration>

修改mapred-site.xml文件

<configuration><property><name>mapreduce.framework.name</name><value>yarn</value></property></configuration>

修改yarn-site.xml文件

<configuration><!-- Site specific YARN configuration properties --><property><name>yarn.nodemanager.aux-services</name><value>mapreduce_shuffle</value></property><property><name>yarn.resourcemanager.hostname</name><value>hadoop001</value></property></configuration>

修改workers文件

[root@hadoop001 hadoop]# cat >> workers << lxf > hadoop001

> hadoop002

> hadoop003

> lxf

[root@hadoop001 hadoop]#

向集群分发配置文件

#开始分发#向hadoop002scp /export/servers/hadoop-3.3.6/etc/hadoop hadoop002:/export/servers/hadoop-3.3.6/etc/hadoop

#向hadoop003scp /export/servers/hadoop-3.3.6/etc/hadoop hadoop002:/export/servers/hadoop-3.3.6/etc/hadoop

hadoop集群

hadoop集群文件系统初始化

Hadoop集群的初始化是非常重要的,它确保了集群的各个组件在启动时处于正确的状态,并且能够正确地协调彼此的工作。在第一次启动Hadoop集群时,初始化是必需的,具体原因如下:

- 文件系统初始化:Hadoop的分布式文件系统(HDFS)需要在启动时进行初始化,这包括创建初始的目录结构、设置权限和准备必要的元数据等操作。

- 元数据准备:Hadoop的各个组件(比如NameNode、ResourceManager等)需要进行元数据的准备工作,包括创建必要的数据库表、清理日志文件等。

- 配置检查:初始化过程还会对各个节点的配置进行检查,确保配置的正确性和一致性,以避免在后续运行中出现问题。

- 启动必要的服务:在初始化过程中,Hadoop会启动各个必要的服务,比如NameNode、DataNode、ResourceManager、NodeManager等,以确保集群的核心组件都能够正常运行。

#在启动hadoop集群之前,需要对主节点hadoop001进行格式化[root@hadoop001 ~]# hadoop namenode -format.......

......

启动

分布启动

启动hdfs

[root@hadoop001 ~]# start-dfs.sh

启动yarn

[root@hadoop001 ~]# start-yarn.sh

集群一键启动

[root@hadoop001 ~]# start-all.sh

关闭

分布关闭

关闭hdfs

[root@hadoop001 ~]# stop-dfs.sh

启动yarn

[root@hadoop001 ~]# stop-yarn.sh

集群一键启动

[root@hadoop001 ~]# stop-all.sh

测试

查看hadoop的WebUI

成功访问

可以在C:\Windows\System32\drivers\etc\hosts下面修改hosts主机地址映射然后在宿主机的浏览器中就可以使用主机名:端口号去访问

如果没有设置就只能使用ip:端口号去访问

关闭hdfs

[root@hadoop001 ~]# stop-dfs.sh

启动yarn

[root@hadoop001 ~]# stop-yarn.sh

集群一键启动

[root@hadoop001 ~]# stop-all.sh

测试

查看hadoop的WebUI

成功访问

可以在C:\Windows\System32\drivers\etc\hosts下面修改hosts主机地址映射然后在宿主机的浏览器中就可以使用主机名:端口号去访问

如果没有设置就只能使用ip:端口号去访问

版权归原作者 saddhu. 所有, 如有侵权,请联系我们删除。