目录

jdk下载地址(tar.gz结尾的)

Java Downloads | Oracle

hadoop下载地址(tar.gz结尾的)

apache-hadoop-core-hadoop-3.3.6安装包下载_开源镜像站-阿里云 (aliyun.com)

安装虚拟机

CentOS-7安装教程-CSDN博客

linux命令不熟悉的可以看这篇

linux常用命令之大数据平台搭建版-CSDN博客

切换为命令行模式

linux图形化界面和字符界面的转换_linux图形界面切换到字符界面命令-CSDN博客

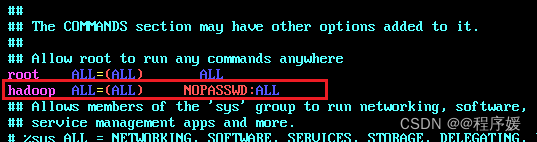

为hadoop用户添加权限

vim /etc/sudoers

关闭防火墙

注:(root用户)

systemctl stop firewalld 关闭

systemctl disable firewalld 取消开机自启动

systemctl status firewalld 检查是否已关闭

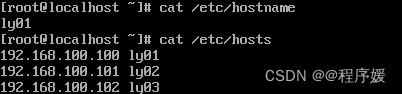

修改主机名以及ip地址映射

主机名根据自己需要修改,ip地址后的就是映射的主机名

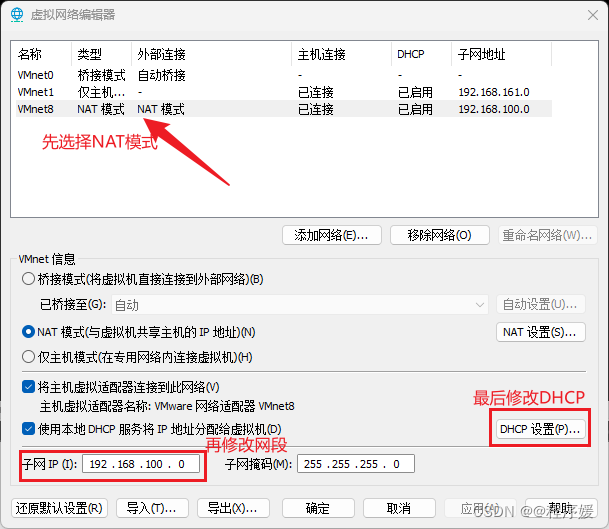

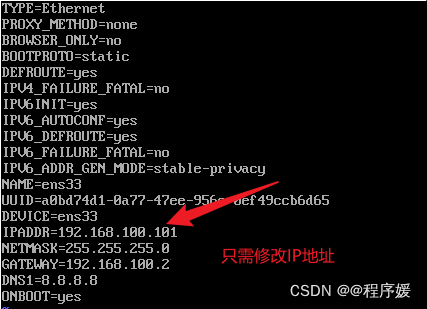

配置静态IP

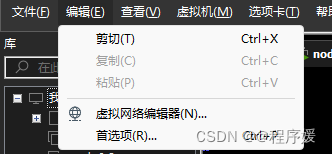

点击虚拟网络编辑器,将网段修改为我们需要的网段

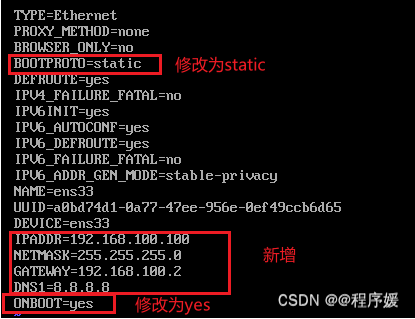

再修改配置文件/etc/sysconf ig/network-scripts/ifcfg-ens33

vim /etc/sysconf ig/network-scripts/ifcfg-ens33

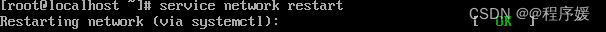

重启网络服务:service network restart

然后重启reboot (主机名用配置文件修改需要重启才会生效)

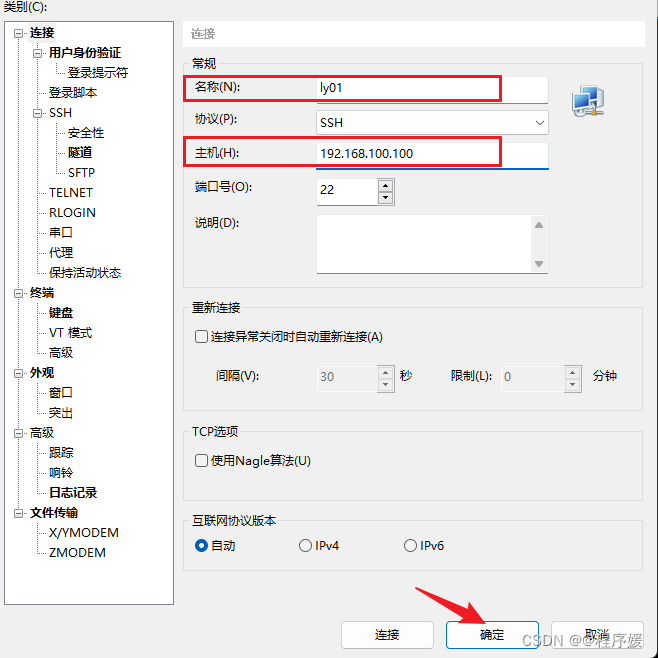

连接xshell ,以hadoop用户登录

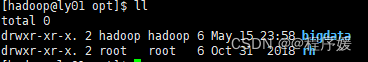

创建目录并将该文件夹权限赋予hadoop用户

[hadoop@ly01 ~]$ sudo mkdir /opt/bigdata

[hadoop@ly01 ~]$ sudo chown hadoop:hadoop /opt/bigdata

切换到该目录

[hadoop@ly01 ~]$ cd /opt/bigdata/

安装配置jdk

卸载OpenJDK、安装新版JDK、配置JDK

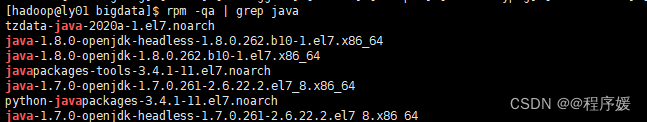

先用rpm -qa | grep java查看java-openjdk版本

根据上述情况,卸载1.7.0、1.8.0即可,不同镜像会略有不同

sudo rpm -e --nodeps java-1.7.0-openjdk-1.7.0.261-2.6.22.2.el7_8.x86_64

sudo rpm -e --nodeps java-1.7.0-openjdk-headless-1.7.0.261-2.6.22.2.el7_8.x86_64

sudo rpm -e --nodeps java-1.8.0-openjdk-1.8.0.262.b10-1.el7.x86_64

sudo rpm -e --nodeps java-1.8.0-openjdk-headless-1.8.0.262.b10-1.el7.x86_64

rz上传jdk文件

[hadoop@ly01 bigdata]$ rz

[hadoop@ly01 bigdata]$ ll

total 135512

-rw-r--r--. 1 hadoop hadoop 138762230 Jul 28 2023 jdk-8u361-linux-x64.tar.gz

解压并重命名为jdk

[hadoop@ly01 bigdata]$ tar -zxvf jdk-8u361-linux-x64.tar.gz

[hadoop@ly01 bigdata]$ mv jdk1.8.0_361 jdk

[hadoop@ly01 bigdata]$ ll

total 135516

drwxrwxr-x. 8 hadoop hadoop 4096 May 16 00:05 jdk

配置环境变量

vim /etc/profile

在最后添加以下内容

export JAVA_HOME=/opt/bigdata/jdk

export PATH=$PATH:$JAVA_HOME/bin

使修改后配置文件生效

[hadoop@ly01 bigdata]$ source /etc/profile

检查是否安装成功

[hadoop@ly01 bigdata]$ java -version

java version "1.8.0_361"

Java(TM) SE Runtime Environment (build 1.8.0_361-b09)

Java HotSpot(TM) 64-Bit Server VM (build 25.361-b09, mixed mode)

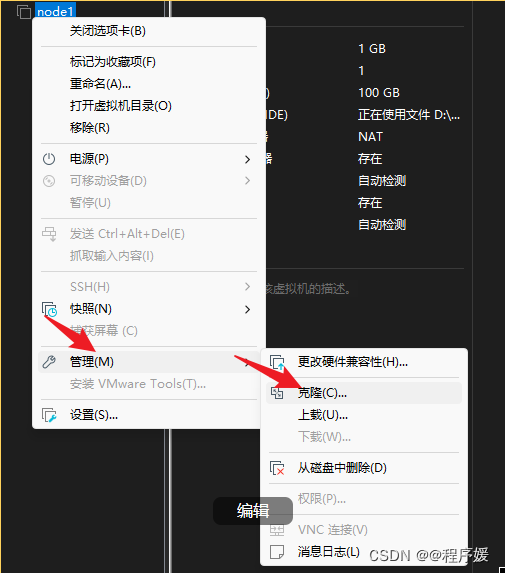

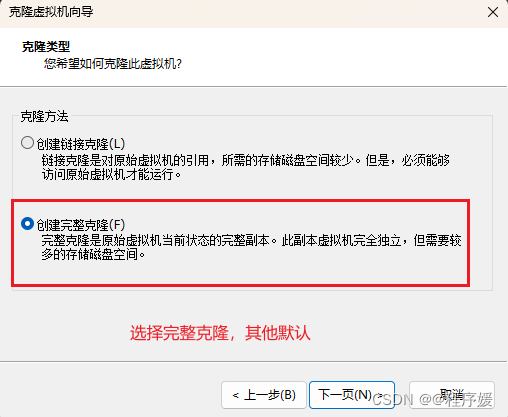

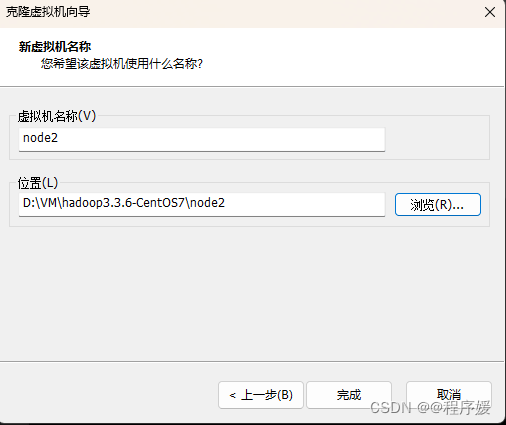

关闭虚拟机,克隆其他两个节点

修改主机名和ip地址

节点2和3都需修改,修改之后重启reboot

**sudo vim /etc/hostname **

vim /etc/sysconf ig/network-scripts/ifcfg-ens33

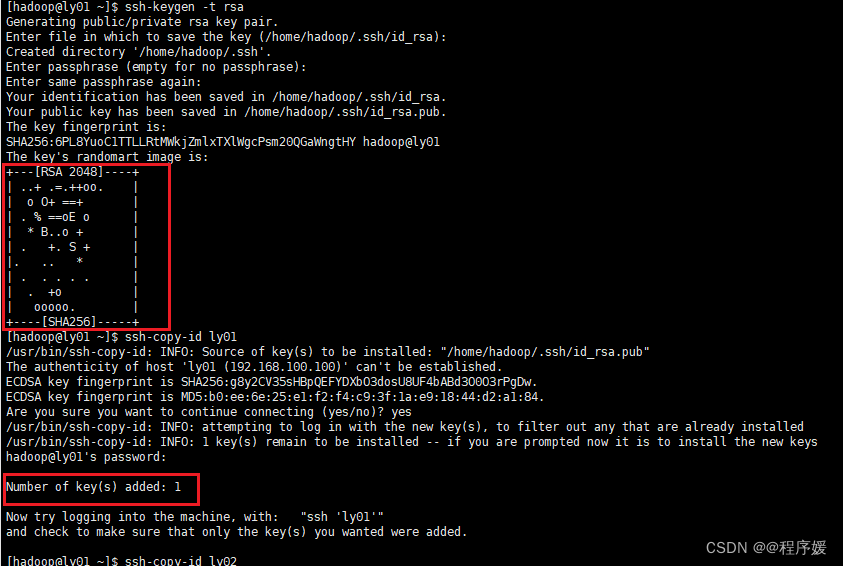

配置免密登录

重启之后通过xshell连接三个节点,均以hadoop用户登录

在每个节点都执行以下命令

ssh-keygen -t rsa (连续三次回车)

ssh-copy-id ly01 (输入yes,hadoop用户的密码)

ssh-copy-id ly02 (输入yes,hadoop用户的密码)

ssh-copy-id ly03 (输入yes,hadoop用户的密码)

可在节点1ssh 连接其他节点测试是否成功

安装配置hadoop

切换目录,rz上传hadoop文件并解压,重命名

[hadoop@ly01 ~]$ cd /opt/bigdata/

[hadoop@ly01 bigdata]$ rz

[hadoop@ly01 bigdata]$ tar -zxvf hadoop-3.3.6.tar.gz

[hadoop@ly01 bigdata]$ mv hadoop-3.3.6 hadoop

配置环境变量

[hadoop@ly01 bigdata]$ sudo vim /etc/profile

#修改为以下内容

export JAVA_HOME=/opt/bigdata/jdk

export HADOOP_HOME=/opt/bigdata/hadoop

export PATH=$PATH:$JAVA_HOME/bin:$HADOOP_HOME/sbin:$HADOOP_HOME/bin

#生效

[hadoop@ly01 bigdata]$ source /etc/profile

配置文件修改

将hadoop-env.sh mapred-env.sh yarn-env.sh 加入JAVA_HOME变量

[hadoop@ly01 bigdata]$ echo "export JAVA_HOME=/opt/bigdata/jdk" >> /opt/bigdata/hadoop/etc/hadoop/hadoop-env.sh

[hadoop@ly01 bigdata]$ echo "export JAVA_HOME=/opt/bigdata/jdk" >> /opt/bigdata/hadoop/etc/hadoop/mapred-env.sh

[hadoop@ly01 bigdata]$ echo "export JAVA_HOME=/opt/bigdata/jdk" >> /opt/bigdata/hadoop/etc/hadoop/yarn-env.sh

切换目录

[hadoop@ly01 bigdata]$ cd /opt/bigdata/hadoop/etc/hadoop

[hadoop@ly01 hadoop]$

节点名称按照自己的修改,文件目录不一样的话也要修改!!!

** core-site.xml修改**

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://ly01:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>file:/opt/bigdata/hadoop/tmp</value>

</property>

</configuration>

hdfs-site.xml修改

<configuration>

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/opt/bigdata/hadoop/tmp/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/opt/bigdata/hadoop/tmp/dfs/data</value>

</property>

</configuration>

mapred-site.xml修改

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

yarn-site.xml修改

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>ly01</value>

</property>

</configuration>

** workers修改**

#删除原有内容,添加节点名称

ly01

ly02

ly03

** 将节点1上的hadoop文件夹拷贝到另外节点2、节点3上、**

[hadoop@ly01 hadoop]$ scp -r /opt/bigdata/hadoop/ hadoop@ly02:/opt/bigdata/

[hadoop@ly01 hadoop]$ scp -r /opt/bigdata/hadoop/ hadoop@ly03:/opt/bigdata/

将节点1上的profile文件拷贝到另外节点2、节点3上,并到相应的机器上执行source

注:输入yes后输入root用户密码即可,如下

[hadoop@ly01 hadoop]$ sudo scp /etc/profile root@ly02:/etc

The authenticity of host 'ly02 (192.168.100.101)' can't be established.

ECDSA key fingerprint is SHA256:g8y2CV35sHBpQEFYDXbO3dosU8UF4bABd3O0O3rPgDw.

ECDSA key fingerprint is MD5:b0:ee:6e:25:e1:f2:f4:c9:3f:1a:e9:18:44:d2:a1:84.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'ly02,192.168.100.101' (ECDSA) to the list of known hosts.

root@ly02's password:

profile 100% 1961 1.1MB/s 00:00

[hadoop@ly01 hadoop]$ sudo scp /etc/profile root@ly03:/etc

The authenticity of host 'ly03 (192.168.100.102)' can't be established.

ECDSA key fingerprint is SHA256:g8y2CV35sHBpQEFYDXbO3dosU8UF4bABd3O0O3rPgDw.

ECDSA key fingerprint is MD5:b0:ee:6e:25:e1:f2:f4:c9:3f:1a:e9:18:44:d2:a1:84.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'ly03,192.168.100.102' (ECDSA) to the list of known hosts.

root@ly03's password:

profile 100% 1961 1.0MB/s 00:00

[hadoop@ly01 hadoop]$

#在节点2执行

[hadoop@ly02 ~]$ source /etc/profile

#在节点3执行

[hadoop@ly03 ~]$ source /etc/profile

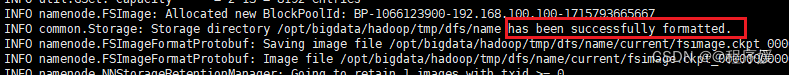

集群初始化

hadoop namenode -format

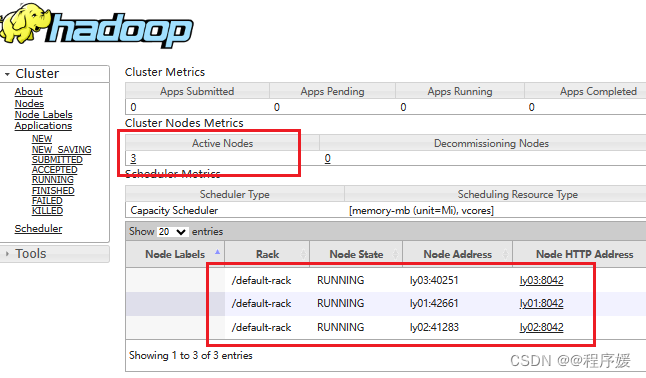

启动hadoop集群

start-yarn.sh

start-dfs.sh

jps查看进程

节点1(主节点)

[hadoop@ly01 hadoop]$ jps

2567 ResourceManager

3498 DataNode

3661 SecondaryNameNode

3390 NameNode

4334 NodeManager

4415 Jps

从节点(都是三个进程)

[hadoop@ly02 hadoop]$ jps

2761 Jps

2698 NodeManager

2493 DataNode

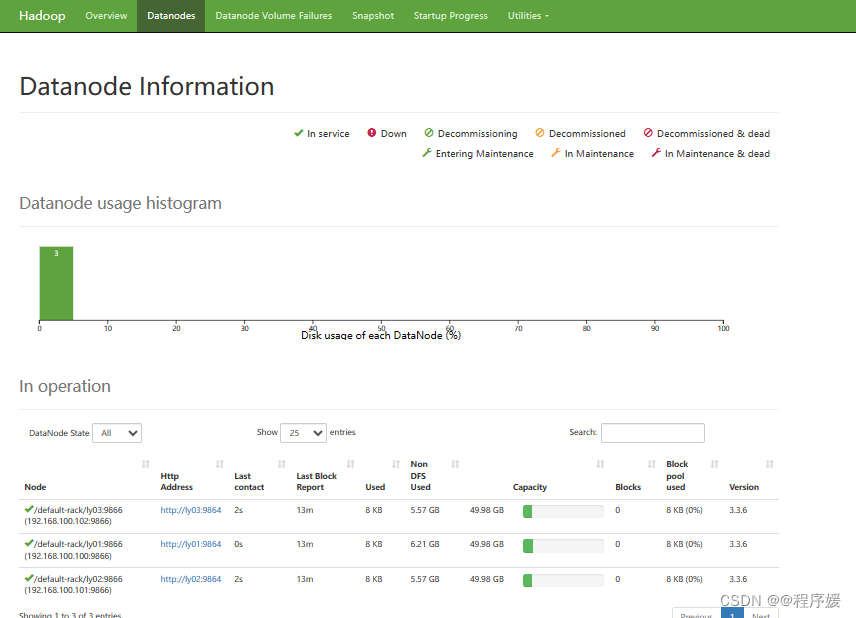

查看进程和web界面

192.168.100.100:8088

192.168.100.100:9870

版权归原作者 @程序媛 所有, 如有侵权,请联系我们删除。