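Introduction

1) Hadoop is a distributed-system infrastructure developed by the Apache Foundation.
2) It mainly addresses two problems: storing massive data sets and running analytical computation over them.

Hadoop HDFS provides distributed storage for massive data

Hadoop YARN provides resource management for the distributed cluster

Hadoop MapReduce provides distributed computation over massive data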

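Prerequisites

- Make sure you have completed the chapter on preparing the clustered environment

- That is: JDK installed, passwordless SSH set up, firewall disabled, hostname mapping configured, and the other preparatory steps done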
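The prerequisites above can be spot-checked before going further. The following is a minimal sketch, not part of the original setup: the function names `missing_hosts` and `missing_commands` are hypothetical, and it only covers hostname mapping and the presence of commands on `PATH` (it does not check the firewall or passwordless SSH).

```shell
# Sketch (hypothetical helpers): report cluster hostnames that are not mapped in a hosts file.
# Usage: missing_hosts HOSTS_FILE NAME...
missing_hosts() {
    hosts_file=$1
    shift
    for name in "$@"; do
        # Skip comment lines, then look for the name in any field after the IP address.
        if ! awk -v n="$name" '
            /^[[:space:]]*#/ { next }
            { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }' "$hosts_file"; then
            echo "$name"
        fi
    done
}

# Sketch: report required commands that are not on PATH.
# Usage: missing_commands CMD...
missing_commands() {
    for cmd in "$@"; do
        command -v "$cmd" >/dev/null 2>&1 || echo "$cmd"
    done
}
```

For example, `missing_hosts /etc/hosts node1 node2 node3` and `missing_commands java ssh` should both print nothing on a node that is ready.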

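Hadoop cluster roles

The following process roles appear in the Hadoop ecosystem:

- Hadoop HDFS management role: the Namenode process (only one is needed; a single manager is enough)

- Hadoop HDFS worker role: the Datanode process (several are needed; these are the workers, the more the better, one per machine)

- Hadoop YARN management role: the ResourceManager process (only one is needed; a single manager is enough)

- Hadoop YARN worker role: the NodeManager process (several are needed; these are the workers, the more the better, one per machine)

- Hadoop history server role: the HistoryServer process (only one is needed; a utility process does not need more)

- Hadoop proxy server role: the WebProxyServer process (only one is needed; a utility process does not need more)

- Zookeeper process: the QuorumPeerMain process (several are needed; these are Zookeeper's workers, the more the better)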

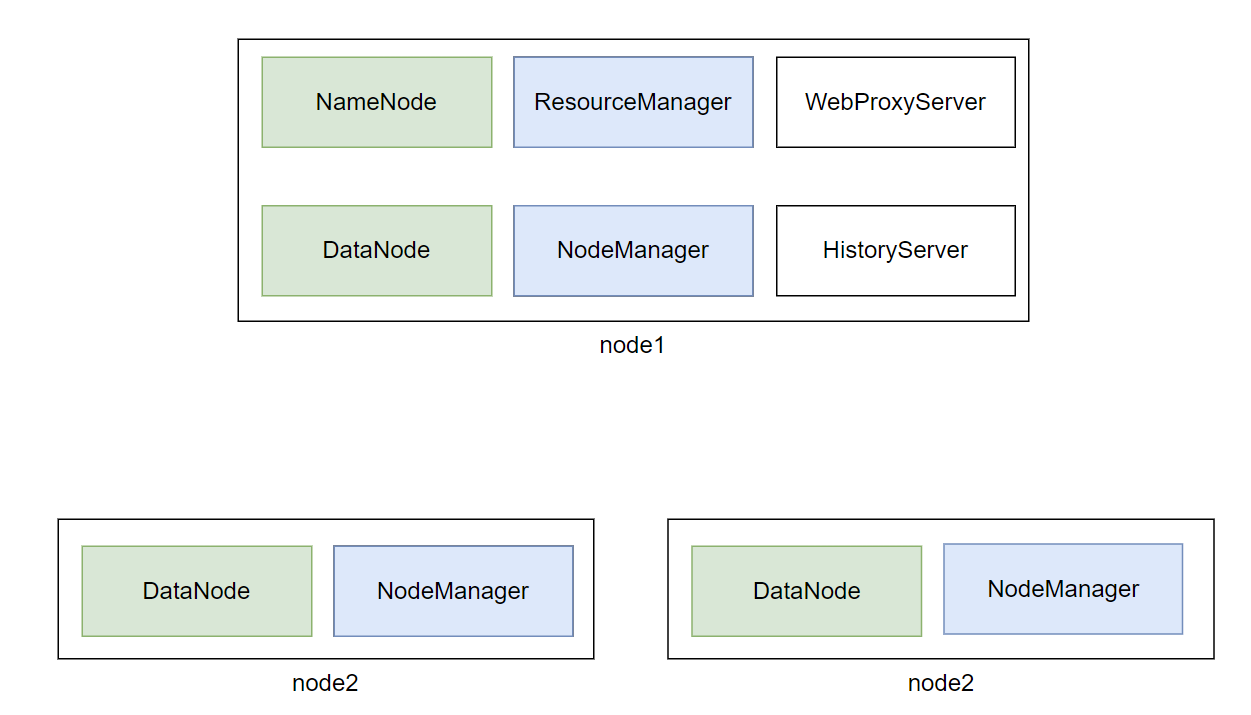

Role and node allocation

The roles are allocated as follows:

- node1: Namenode, Datanode, ResourceManager, NodeManager, HistoryServer, WebProxyServer, QuorumPeerMain

- node2: Datanode, NodeManager, QuorumPeerMain

- node3: Datanode, NodeManager, QuorumPeerMain
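Once the cluster is running, this allocation can be compared against the output of `jps` on each node. The sketch below encodes the table above; the function names are hypothetical. It also assumes the process names that `jps` normally prints, which differ slightly from the role names used here (`NameNode`, `DataNode`, `JobHistoryServer`, `WebAppProxyServer`).

```shell
# Sketch: print the jps process names expected on a node, following the allocation above.
expected_processes() {
    case $1 in
        node1) echo "NameNode DataNode ResourceManager NodeManager JobHistoryServer WebAppProxyServer QuorumPeerMain" ;;
        node2|node3) echo "DataNode NodeManager QuorumPeerMain" ;;
        *) return 1 ;;
    esac
}

# Read jps output ("<pid> <name>" per line) on stdin and print every expected
# process that is absent for the given node.
missing_processes() {
    node=$1
    running=$(awk '{ print $2 }')
    for proc in $(expected_processes "$node"); do
        echo "$running" | grep -qx "$proc" || echo "$proc"
    done
}
```

A possible use is `jps | missing_processes node1` on node1, or `ssh node2 jps | missing_processes node2` from another machine; empty output means every expected process was found.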

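Installation

Adjust virtual machine memory

As shown above, node1 carries most of the load, and node2 and node3 also run quite a few processes.

To keep the cluster stable, adjust the memory assigned to each virtual machine.

In VMware, set:

- node1 to 4 GB of memory or more

- node2 and node3 to 2 GB of memory or more

Big-data software is designed to run as a cluster, that is, across many servers.

Here we simulate the cluster with several virtual machines on a single computer, which puts considerable pressure on memory.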
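To confirm from inside a virtual machine that the memory setting took effect, the total can be read from `/proc/meminfo`. This is a minimal sketch with hypothetical function names. The thresholds in the usage note are assumptions: Linux usually reports a `MemTotal` somewhat below the configured size, so they sit a little under 4 GB and 2 GB.

```shell
# Sketch: print total memory in MB from a meminfo-style file (default: /proc/meminfo).
mem_total_mb() {
    awk '/^MemTotal:/ { print int($2 / 1024) }' "${1:-/proc/meminfo}"
}

# Succeed if total memory is at least MIN_MB.
# Usage: has_enough_memory MIN_MB [MEMINFO_FILE]
has_enough_memory() {
    total=$(mem_total_mb "$2")
    [ -n "$total" ] && [ "$total" -ge "$1" ]
}
```

For example, `has_enough_memory 3500 || echo "node1 needs more memory"` on node1, and the same with `1700` on node2 and node3.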

Zookeeper cluster deployment

Omitted.

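Hadoop cluster deployment

- Download the Hadoop package, unpack it, and create a symbolic link

      # 1. Download
      wget http://archive.apache.org/dist/hadoop/common/hadoop-3.3.0/hadoop-3.3.0.tar.gz
      # 2. Unpack
      # Make sure the directory /export/server exists
      tar -zxvf hadoop-3.3.0.tar.gz -C /export/server/
      # 3. Create the symbolic link
      ln -s /export/server/hadoop-3.3.0 /export/server/hadoop

- Edit the configuration file: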

hadoop-env.sh

> Hadoop has many configuration settings to edit, so work carefully.

cd into /export/server/hadoop/etc/hadoop; all the configuration files are in this directory.

Edit the hadoop-env.sh file.

> This file sets the environment variables that Hadoop uses.
>
> These variables are temporary and only take effect while Hadoop is running.
>
> To make them permanent, write them to /etc/profile.

    # Add at the top of the file:
    # Java installation path
    export JAVA_HOME=/export/server/jdk
    # Hadoop installation path
    export HADOOP_HOME=/export/server/hadoop
    # Hadoop HDFS configuration directory
    export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop
    # Hadoop YARN configuration directory
    export YARN_CONF_DIR=$HADOOP_HOME/etc/hadoop
    # Hadoop YARN log directory
    export YARN_LOG_DIR=$HADOOP_HOME/logs/yarn
    # Hadoop HDFS log directory
    export HADOOP_LOG_DIR=$HADOOP_HOME/logs/hdfs
    # Users that start each Hadoop daemon
    export HDFS_NAMENODE_USER=root
    export HDFS_DATANODE_USER=root
    export HDFS_SECONDARYNAMENODE_USER=root
    export YARN_RESOURCEMANAGER_USER=root
    export YARN_NODEMANAGER_USER=root
    export YARN_PROXYSERVER_USER=root

- Edit the configuration file:

core-site.xml: clear the file and fill in the following content.

    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <!--
      Licensed under the Apache License, Version 2.0 (the "License");
      you may not use this file except in compliance with the License.
      You may obtain a copy of the License at
        http://www.apache.org/licenses/LICENSE-2.0
      Unless required by applicable law or agreed to in writing, software
      distributed under the License is distributed on an "AS IS" BASIS,
      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
      See the License for the specific language governing permissions and
      limitations under the License. See accompanying LICENSE file.
    -->
    <!-- Put site-specific property overrides in this file. -->
    <configuration>
      <property>
        <name>fs.defaultFS</name>
        <value>hdfs://node1:8020</value>
        <description></description>
      </property>
      <property>
        <name>io.file.buffer.size</name>
        <value>131072</value>
        <description></description>
      </property>
    </configuration>

- Configure:

The hdfs-site.xml file

    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <!--
      Licensed under the Apache License, Version 2.0 (the "License");
      you may not use this file except in compliance with the License.
      You may obtain a copy of the License at
        http://www.apache.org/licenses/LICENSE-2.0
      Unless required by applicable law or agreed to in writing, software
      distributed under the License is distributed on an "AS IS" BASIS,
      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
      See the License for the specific language governing permissions and
      limitations under the License. See accompanying LICENSE file.
    -->
    <!-- Put site-specific property overrides in this file. -->
    <configuration>
      <property>
        <name>dfs.datanode.data.dir.perm</name>
        <value>700</value>
      </property>
      <property>
        <name>dfs.namenode.name.dir</name>
        <value>/data/nn</value>
        <description>Path on the local filesystem where the NameNode stores the namespace and transactions logs persistently.</description>
      </property>
      <property>
        <name>dfs.namenode.hosts</name>
        <value>node1,node2,node3</value>
        <description>List of permitted DataNodes.</description>
      </property>
      <property>
        <name>dfs.blocksize</name>
        <value>268435456</value>
        <description></description>
      </property>
      <property>
        <name>dfs.namenode.handler.count</name>
        <value>100</value>
        <description></description>
      </property>
      <property>
        <name>dfs.datanode.data.dir</name>
        <value>/data/dn</value>
      </property>
    </configuration>

- Configure:

The mapred-env.sh file

    # Add the following environment variables at the top of the file
    export JAVA_HOME=/export/server/jdk
    export HADOOP_JOB_HISTORYSERVER_HEAPSIZE=1000
    export HADOOP_MAPRED_ROOT_LOGGER=INFO,RFA

- Configure:

The mapred-site.xml file

    <?xml version="1.0"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <!--
      Licensed under the Apache License, Version 2.0 (the "License");
      you may not use this file except in compliance with the License.
      You may obtain a copy of the License at
        http://www.apache.org/licenses/LICENSE-2.0
      Unless required by applicable law or agreed to in writing, software
      distributed under the License is distributed on an "AS IS" BASIS,
      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
      See the License for the specific language governing permissions and
      limitations under the License. See accompanying LICENSE file.
    -->
    <!-- Put site-specific property overrides in this file. -->
    <configuration>
      <property>
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
        <description></description>
      </property>
      <property>
        <name>mapreduce.jobhistory.address</name>
        <value>node1:10020</value>
        <description></description>
      </property>
      <property>
        <name>mapreduce.jobhistory.webapp.address</name>
        <value>node1:19888</value>
        <description></description>
      </property>
      <property>
        <name>mapreduce.jobhistory.intermediate-done-dir</name>
        <value>/data/mr-history/tmp</value>
        <description></description>
      </property>
      <property>
        <name>mapreduce.jobhistory.done-dir</name>
        <value>/data/mr-history/done</value>
        <description></description>
      </property>
      <property>
        <name>yarn.app.mapreduce.am.env</name>
        <value>HADOOP_MAPRED_HOME=$HADOOP_HOME</value>
      </property>
      <property>
        <name>mapreduce.map.env</name>
        <value>HADOOP_MAPRED_HOME=$HADOOP_HOME</value>
      </property>
      <property>
        <name>mapreduce.reduce.env</name>
        <value>HADOOP_MAPRED_HOME=$HADOOP_HOME</value>
      </property>
    </configuration>

- Configure:

The yarn-env.sh file

    # Add the following environment variables at the top of the file
    export JAVA_HOME=/export/server/jdk
    export HADOOP_HOME=/export/server/hadoop
    export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop
    export YARN_CONF_DIR=$HADOOP_HOME/etc/hadoop
    export YARN_LOG_DIR=$HADOOP_HOME/logs/yarn
    export HADOOP_LOG_DIR=$HADOOP_HOME/logs/hdfs

- Configure:

The yarn-site.xml file

    <?xml version="1.0"?>
    <!--
      Licensed under the Apache License, Version 2.0 (the "License");
      you may not use this file except in compliance with the License.
      You may obtain a copy of the License at
        http://www.apache.org/licenses/LICENSE-2.0
      Unless required by applicable law or agreed to in writing, software
      distributed under the License is distributed on an "AS IS" BASIS,
      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
      See the License for the specific language governing permissions and
      limitations under the License. See accompanying LICENSE file.
    -->
    <configuration>
      <!-- Site specific YARN configuration properties -->
      <property>
        <name>yarn.log.server.url</name>
        <value>http://node1:19888/jobhistory/logs</value>
        <description></description>
      </property>
      <property>
        <name>yarn.web-proxy.address</name>
        <value>node1:8089</value>
        <description>proxy server hostname and port</description>
      </property>
      <property>
        <name>yarn.log-aggregation-enable</name>
        <value>true</value>
        <description>Configuration to enable or disable log aggregation</description>
      </property>
      <property>
        <name>yarn.nodemanager.remote-app-log-dir</name>
        <value>/tmp/logs</value>
        <description>HDFS directory where the application logs are moved on application completion.</description>
      </property>
      <property>
        <name>yarn.resourcemanager.hostname</name>
        <value>node1</value>
        <description></description>
      </property>
      <property>
        <name>yarn.resourcemanager.scheduler.class</name>
        <value>org.apache.hadoop.yarn.server.resourcemanager.scheduler.fair.FairScheduler</value>
        <description></description>
      </property>
      <property>
        <name>yarn.nodemanager.local-dirs</name>
        <value>/data/nm-local</value>
        <description>Comma-separated list of paths on the local filesystem where intermediate data is written.</description>
      </property>
      <property>
        <name>yarn.nodemanager.log-dirs</name>
        <value>/data/nm-log</value>
        <description>Comma-separated list of paths on the local filesystem where logs are written.</description>
      </property>
      <property>
        <name>yarn.nodemanager.log.retain-seconds</name>
        <value>10800</value>
        <description>Default time (in seconds) to retain log files on the NodeManager. Only applicable if log-aggregation is disabled.</description>
      </property>
      <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
        <description>Shuffle service that needs to be set for Map Reduce applications.</description>
      </property>
    </configuration>

- Edit the workers file

The complete contents of the file are:

    node1
    node2
    node3

- Distribute Hadoop to the other machines

      # Run on node1
      cd /export/server
      scp -r hadoop-3.3.0 node2:`pwd`/
      scp -r hadoop-3.3.0 node3:`pwd`/
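With more workers, typing one `scp` line per host gets error-prone. As a rough sketch, the copy commands could instead be generated from the workers file. The function name `distribute_cmds` is hypothetical, and it only prints the commands so they can be reviewed before anything is copied.

```shell
# Sketch: print one scp command per remote worker; the local host is skipped.
# Usage: distribute_cmds WORKERS_FILE LOCAL_HOST SRC_DIR
distribute_cmds() {
    workers_file=$1
    local_host=$2
    src_dir=$3
    parent=$(dirname "$src_dir")
    while IFS= read -r host || [ -n "$host" ]; do
        # Skip blank lines and comment lines.
        case $host in
            ''|'#'*) continue ;;
        esac
        # Skip the machine we are copying from.
        if [ "$host" = "$local_host" ]; then
            continue
        fi
        echo "scp -r $src_dir $host:$parent/"
    done < "$workers_file"
}
```

For example, `distribute_cmds /export/server/hadoop/etc/hadoop/workers node1 /export/server/hadoop-3.3.0` prints the two commands for node2 and node3; piping that output to `sh` would run them.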

- Run on node2 and node3

      # Create the symbolic link
      ln -s /export/server/hadoop-3.3.0 /export/server/hadoop

- Create the required directories

- On node1:

      mkdir -p /data/nn
      mkdir -p /data/dn
      mkdir -p /data/nm-log
      mkdir -p /data/nm-local

- On node2:

      mkdir -p /data/dn
      mkdir -p /data/nm-log
      mkdir -p /data/nm-local

- On node3:

      mkdir -p /data/dn
      mkdir -p /data/nm-log
      mkdir -p /data/nm-local

- Configure environment variables: on node1, node2, and node3, edit /etc/profile

      export HADOOP_HOME=/export/server/hadoop
      export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

Run source /etc/profile to make the change take effect.

- Format the NameNode; run this on node1

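      hadoop namenode -format

> The hadoop command is a program in $HADOOP_HOME/bin.
>
> Because the PATH environment variable has been configured, the hadoop command can be run from any directory.
>
> In Hadoop 3 this form still works but prints a deprecation warning; the current equivalent is hdfs namenode -format.

- Start the Hadoop HDFS cluster; running this on node1 is enough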

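      start-dfs.sh
      # To stop the cluster, run stop-dfs.sh instead

> start-dfs.sh is a program in $HADOOP_HOME/sbin.
>
> Because the PATH environment variable has been configured, start-dfs.sh can be run from any directory.

- Start the Hadoop YARN cluster; running this on node1 is enough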

      start-yarn.sh
      # To stop the cluster, run stop-yarn.sh instead

- Start the history server

      mapred --daemon start historyserver
      # To stop it, replace start with stop

- Start the web proxy server

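      yarn-daemon.sh start proxyserver
      # To stop it, replace start with stop
      # yarn-daemon.sh is deprecated in Hadoop 3 but still works; the current form is:
      # yarn --daemon start proxyserver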
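The four start commands above, and their stop counterparts, can be collected in one place. This is only a sketch: `cluster_cmds` is a hypothetical name, it prints the commands rather than running them, and stopping in the reverse of the start order is an assumption rather than something stated above.

```shell
# Sketch: print the cluster control commands for "start" or "stop".
# Assumption: services are stopped in the reverse of the order they were started.
cluster_cmds() {
    case $1 in
        start)
            echo "start-dfs.sh"
            echo "start-yarn.sh"
            echo "mapred --daemon start historyserver"
            echo "yarn-daemon.sh start proxyserver"
            ;;
        stop)
            echo "yarn-daemon.sh stop proxyserver"
            echo "mapred --daemon stop historyserver"
            echo "stop-yarn.sh"
            echo "stop-dfs.sh"
            ;;
        *)
            echo "usage: cluster_cmds start|stop" >&2
            return 1
            ;;
    esac
}
```

On node1, `cluster_cmds start | sh` would run the sequence, assuming `$HADOOP_HOME/bin` and `$HADOOP_HOME/sbin` are on `PATH` as configured earlier.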

Verify that the Hadoop cluster is running

- On node1, node2, and node3, use jps to check that all processes started successfully

- Verify HDFS: open http://node1:9870 in a browser. Then create a file test.txt with any content and run:

  ```
  hadoop fs -put test.txt /test.txt
  hadoop fs -cat /test.txt
  ```

- Verify YARN: open http://node1:8088 in a browser. Then run:

  ```
  # Create a file words.txt with the following content
  itheima itcast hadoop
  itheima hadoop hadoop
  itheima itcast
  # Upload the file to HDFS
  hadoop fs -put words.txt /words.txt
  # Run the following command to verify that YARN works
  hadoop jar /export/server/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.3.0.jar wordcount -Dmapred.job.queue.name=root.root /words.txt /output
  ```
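To judge whether the wordcount job produced the right answer, the same count can be computed locally and compared by eye. The sketch below uses a hypothetical function name, `local_wordcount`, and assumes the job writes tab-separated `word count` lines sorted by word, which is what the bundled example normally produces.

```shell
# Sketch: count words in a local file and print "word<TAB>count" lines sorted by word.
local_wordcount() {
    tr -s ' \t' '\n\n' < "$1" \
        | grep -v '^$' \
        | LC_ALL=C sort \
        | uniq -c \
        | awk '{ printf "%s\t%s\n", $2, $1 }'
}
```

Running `local_wordcount words.txt` on the sample file gives hadoop 3, itcast 2, and itheima 3. The job's result can be printed with `hadoop fs -cat` on the part file under /output (typically /output/part-r-00000) and compared against it.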

Copyright belongs to the original author, AixXiang. If there is any infringement, please contact us for removal.