Kubernetes High-Availability Cluster Binary Deployment (6): Adding Nodes to the Kubernetes Cluster

This part covers adding a worker node to the cluster.

1. Host preparation

1.1 Set the hostname

hostnamectl set-hostname k8s-worker2

hostname

1.2 Hostname and IP address resolution

The existing nodes in the cluster also need an entry that resolves the new node.

cat >> /etc/hosts << EOF
192.168.10.101 ha1
192.168.10.102 ha2
192.168.10.103 k8s-master1
192.168.10.104 k8s-master2
192.168.10.105 k8s-master3
192.168.10.106 k8s-worker1
192.168.10.107 k8s-worker2
EOF
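Because the existing nodes already hold most of these entries, appending the whole list again would duplicate them. The following is a minimal sketch of an idempotent alternative. The function name add_host_entry is hypothetical, and the hosts file is passed as a parameter so that the function can be tried on a copy first.

```shell
# add_host_entry FILE IP NAME
# Appends "IP NAME" to FILE unless a non-comment line already maps NAME.
add_host_entry() {
    hosts_file=$1
    host_ip=$2
    host_name=$3
    # Compare NAME against each whitespace-delimited field after the address,
    # so that k8s-worker1 does not count as a match for k8s-worker2.
    if [ -f "$hosts_file" ] && awk -v n="$host_name" '
        /^[[:space:]]*#/ { next }
        { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit (found ? 0 : 1) }' "$hosts_file"; then
        return 0
    fi
    printf '%s %s\n' "$host_ip" "$host_name" >> "$hosts_file"
}
```

On an existing node this could be called as add_host_entry /etc/hosts 192.168.10.107 k8s-worker2; running it a second time leaves the file unchanged.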

1.3 Host security settings

1.3.1 Disable the firewall

systemctl stop firewalld

systemctl disable firewalld

firewall-cmd --state

1.3.2 Disable SELinux

setenforce 0

sed -ri 's/SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

sestatus

1.4 Swap settings

swapoff -a

sed -ri 's/.*swap.*/#&/' /etc/fstab

echo "vm.swappiness=0" >> /etc/sysctl.conf

sysctl -p
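The sed command above prefixes every line containing "swap" with "#", so running it twice adds a second "#". As a hedged alternative, the sketch below comments out only entries whose filesystem type (third column) is swap and skips lines that are already comments. The function name comment_swap_entries is hypothetical, and the matching rule is narrower than the original sed expression.

```shell
# comment_swap_entries FILE
# Comments out fstab entries of type "swap"; repeated runs change nothing more.
comment_swap_entries() {
    fstab_file=$1
    tmp_file=$(mktemp) || return 1
    status=0
    awk '
        /^[[:space:]]*#/ { print; next }        # keep existing comments as they are
        $3 == "swap"     { print "#" $0; next } # disable active swap entries
        { print }' "$fstab_file" > "$tmp_file" \
        && cat "$tmp_file" > "$fstab_file" || status=1
    rm -f "$tmp_file"
    return "$status"
}
```

It can be tried on a copy first, for example cp /etc/fstab /tmp/fstab.test followed by comment_swap_entries /tmp/fstab.test.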

1.5 System time synchronization

Install the package:

yum -y install ntpdate

Schedule a cron job for time synchronization:

crontab -e

0 */1 * * * ntpdate time1.aliyun.com
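crontab -e opens an editor, which is awkward when preparing several nodes. A non-interactive variant can be sketched as follows; the helper append_unique_line is a hypothetical name, and it only filters text, so nothing is installed until its output is piped into crontab.

```shell
# append_unique_line LINE
# Copies stdin to stdout and appends LINE only if no identical line was seen.
# LINE is passed through the environment so awk does not interpret backslashes.
append_unique_line() {
    WANT="$1" awk '
        { print }
        $0 == ENVIRON["WANT"] { seen = 1 }
        END { if (!seen) print ENVIRON["WANT"] }'
}
```

One possible use is crontab -l 2>/dev/null | append_unique_line '0 */1 * * * ntpdate time1.aliyun.com' | crontab - which keeps existing entries and adds the synchronization job at most once.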

1.6 System tuning

Resource limit tuning:

ulimit -SHn 65535

cat <<EOF >> /etc/security/limits.conf
* soft nofile 655360
* hard nofile 131072
* soft nproc 655350
* hard nproc 655350
* soft memlock unlimited
* hard memlock unlimited
EOF
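A soft limit is not meant to exceed its hard limit (setrlimit rejects such a pair), so it can be worth checking the file after editing it. The sketch below is one way to do that; report_limit_conflicts is a hypothetical helper name, and it assumes the four-column "domain type item value" layout used above.

```shell
# report_limit_conflicts FILE
# Prints "<domain> <item>" for each pair whose numeric soft value is larger
# than its numeric hard value. Values such as "unlimited" are skipped.
report_limit_conflicts() {
    awk '
        /^[[:space:]]*#/ || NF < 4 { next }
        $4 !~ /^[0-9]+$/           { next }
        $2 == "soft" { soft[$1 " " $3] = $4 }
        $2 == "hard" { hard[$1 " " $3] = $4 }
        END {
            for (key in soft)
                if ((key in hard) && soft[key] + 0 > hard[key] + 0)
                    print key
        }' "$1"
}
```

Run as report_limit_conflicts /etc/security/limits.conf. With the values listed above it reports the nofile pair, because the soft value 655360 is larger than the hard value 131072.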

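1.7 Install the IPVS management tools and load the modules

Install these on the cluster nodes; the load balancer nodes do not need them.

yum -y install ipvsadm ipset sysstat conntrack libseccomp

Configure the IPVS modules on all nodes. In kernel 4.19 and later, nf_conntrack_ipv4 has been renamed to nf_conntrack; on kernels below 4.18, use nf_conntrack_ipv4: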

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack
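The choice between the two conntrack module names can be derived from the kernel release string. The sketch below shows one way to do it; conntrack_module is a hypothetical helper, and it assumes that everything older than 4.19 (including 4.18, which the note above leaves unspecified) still uses nf_conntrack_ipv4.

```shell
# conntrack_module KERNEL_RELEASE
# Prints nf_conntrack for 4.19 and later, nf_conntrack_ipv4 otherwise.
conntrack_module() {
    release=$1
    major=${release%%.*}          # text before the first dot
    rest=${release#*.}            # text after the first dot
    minor=${rest%%[!0-9]*}        # leading digits of the remainder
    if [ "$major" -gt 4 ] || { [ "$major" -eq 4 ] && [ "${minor:-0}" -ge 19 ]; }; then
        echo nf_conntrack
    else
        echo nf_conntrack_ipv4
    fi
}
```

On a node it could be used as modprobe -- "$(conntrack_module "$(uname -r)")".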

Create /etc/modules-load.d/ipvs.conf with the following content:

cat >/etc/modules-load.d/ipvs.conf <<EOF
ip_vs
ip_vs_lc
ip_vs_wlc
ip_vs_rr
ip_vs_wrr
ip_vs_lblc
ip_vs_lblcr
ip_vs_dh
ip_vs_sh
ip_vs_fo
ip_vs_nq
ip_vs_sed
ip_vs_ftp
nf_conntrack
ip_tables
ip_set
xt_set
ipt_set
ipt_rpfilter
ipt_REJECT
ipip
EOF

Enable module loading at boot:

systemctl enable --now systemd-modules-load.service

If enabling the service fails with a message like the following:

Job for systemd-modules-load.service failed because the control process exited with error code. See "systemctl status systemd-modules-load.service" and "journalctl -xe" for details.

Failed to find module 'ip_vs_fo'

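This is caused by the kernel version: the running kernel cannot find the ip_vs_fo module. As a workaround, remove ip_vs_fo from the file and run the command again.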
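Instead of removing entries by hand, the module list can be filtered down to what the running kernel provides. This is only a sketch: module_available and filter_available_modules are hypothetical helper names, and the check relies on modinfo returning a non-zero status for modules it cannot find.

```shell
# module_available NAME
# Succeeds when modinfo can find the module for the running kernel.
module_available() {
    modinfo "$1" >/dev/null 2>&1
}

# filter_available_modules [CHECK_CMD]
# Reads module names from stdin, one per line, and prints only those for
# which CHECK_CMD succeeds. CHECK_CMD defaults to module_available.
filter_available_modules() {
    check_cmd=${1:-module_available}
    while IFS= read -r module_name; do
        [ -n "$module_name" ] || continue      # skip blank lines
        if "$check_cmd" "$module_name"; then
            printf '%s\n' "$module_name"
        fi
    done
}
```

For example, filter_available_modules < /etc/modules-load.d/ipvs.conf > /tmp/ipvs.conf writes the filtered list to a separate file, which can then be reviewed and copied back. Redirecting the output to the same file that is being read would truncate it.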

1.8 Upgrade the Linux kernel

Install the new kernel on all nodes; the operating system must be rebooted to switch to it.

[root@localhost ~]# yum -y install perl

[root@localhost ~]# rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org

[root@localhost ~]# yum -y install https://www.elrepo.org/elrepo-release-7.0-4.el7.elrepo.noarch.rpm

[root@localhost ~]# yum --enablerepo="elrepo-kernel" -y install kernel-ml.x86_64

[root@localhost ~]# grub2-set-default 0

[root@localhost ~]# grub2-mkconfig -o /boot/grub2/grub.cfg

1.9 Linux kernel tuning

cat <<EOF > /etc/sysctl.d/k8s.conf
net.ipv4.ip_forward = 1
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
fs.may_detach_mounts = 1
vm.overcommit_memory=1
vm.panic_on_oom=0
fs.inotify.max_user_watches=89100
fs.file-max=52706963
fs.nr_open=52706963
net.netfilter.nf_conntrack_max=2310720
net.ipv4.tcp_keepalive_time = 600
net.ipv4.tcp_keepalive_probes = 3
net.ipv4.tcp_keepalive_intvl = 15
net.ipv4.tcp_max_tw_buckets = 36000
net.ipv4.tcp_tw_reuse = 1
net.ipv4.tcp_max_orphans = 327680
net.ipv4.tcp_orphan_retries = 3
net.ipv4.tcp_syncookies = 1
net.ipv4.tcp_max_syn_backlog = 16384
net.ipv4.ip_conntrack_max = 131072
net.ipv4.tcp_timestamps = 0
net.core.somaxconn = 16384
EOF

sysctl --system

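After the kernel has been configured on all nodes, reboot the servers and confirm that the kernel modules are still loaded after the reboot.

reboot

Check the result after the reboot: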

lsmod | grep --color=auto -e ip_vs -e nf_conntrack

1.10 Install other tools (optional)

yum install wget jq psmisc vim net-tools telnet yum-utils device-mapper-persistent-data lvm2 git lrzsz -y

2. Configure passwordless SSH login

Run this on the k8s-master1 node:

ssh-copy-id root@k8s-worker2

3. Obtain the Kubernetes packages

3.1 Obtain the packages

[root@k8s-master1 bin]# pwd

/data/k8s-work/kubernetes/server/bin

scp kubelet kube-proxy k8s-worker2:/usr/local/bin

[root@k8s-worker2 ~]# ls /usr/local/bin/kube*

/usr/local/bin/kubelet

/usr/local/bin/kube-proxy

3.2 Install and configure docker-ce

wget -O /etc/yum.repos.d/docker-ce.repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum -y install docker-ce

systemctl enable docker

systemctl start docker

cat <<EOF | sudo tee /etc/docker/daemon.json

{"exec-opts": ["native.cgroupdriver=systemd"],"registry-mirrors": ["https://8i185852.mirror.aliyuncs.com"]}

EOF

native.cgroupdriver must be configured; skipping this step causes kubelet to fail to start.

systemctl restart docker

3.3 Deploy kubelet

[root@k8s-worker2 ~]# mkdir -p /etc/kubernetes

[root@k8s-worker2 ~]# mkdir -p /etc/kubernetes/ssl

[root@k8s-worker2 ~]# mkdir -p /var/lib/kubelet

[root@k8s-worker2 ~]# mkdir -p /var/log/kubernetes

[root@k8s-master1 k8s-work]# pwd

/data/k8s-work

scp kubelet-bootstrap.kubeconfig kubelet.json k8s-worker2:/etc/kubernetes/

scp ca.pem k8s-worker2:/etc/kubernetes/ssl/

scp kubelet.service k8s-worker2:/usr/lib/systemd/system/

On the new node k8s-worker2, edit the kubelet.json file. Set "address" to the current host's address, and make sure "cgroupDriver" matches the Docker setting, otherwise kubelet will not start.

[root@k8s-worker2 ~]# vim /etc/kubernetes/kubelet.json

{
  "kind": "KubeletConfiguration",
  "apiVersion": "kubelet.config.k8s.io/v1beta1",
  "authentication": {
    "x509": {
      "clientCAFile": "/etc/kubernetes/ssl/ca.pem"
    },
    "webhook": {
      "enabled": true,
      "cacheTTL": "2m0s"
    },
    "anonymous": {
      "enabled": false
    }
  },
  "authorization": {
    "mode": "Webhook",
    "webhook": {
      "cacheAuthorizedTTL": "5m0s",
      "cacheUnauthorizedTTL": "30s"
    }
  },
  "address": "192.168.10.107",
  "port": 10250,
  "readOnlyPort": 10255,
  "cgroupDriver": "systemd",
  "hairpinMode": "promiscuous-bridge",
  "serializeImagePulls": false,
  "clusterDomain": "cluster.local.",
  "clusterDNS": ["10.96.0.2"]
}
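Since a mismatch between the Docker and kubelet cgroup drivers prevents kubelet from starting, it may help to compare the two files before enabling the service. The following is a rough sketch using sed only; the three function names are hypothetical, and the patterns assume the key and its value sit on the same line, as they do in the files shown here.

```shell
# docker_cgroup_driver FILE
# Prints the driver named by "native.cgroupdriver=<driver>" in daemon.json.
docker_cgroup_driver() {
    sed -n 's/.*native\.cgroupdriver=\([A-Za-z]*\).*/\1/p' "$1" | head -n 1
}

# kubelet_cgroup_driver FILE
# Prints the value of the "cgroupDriver" key in kubelet.json.
kubelet_cgroup_driver() {
    sed -n 's/.*"cgroupDriver"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' "$1" | head -n 1
}

# cgroup_drivers_match DOCKER_JSON KUBELET_JSON
# Succeeds only when both files name the same, non-empty driver.
cgroup_drivers_match() {
    docker_driver=$(docker_cgroup_driver "$1")
    kubelet_driver=$(kubelet_cgroup_driver "$2")
    [ -n "$docker_driver" ] && [ "$docker_driver" = "$kubelet_driver" ]
}
```

A possible check on the new node is cgroup_drivers_match /etc/docker/daemon.json /etc/kubernetes/kubelet.json || echo "cgroup driver mismatch".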

[root@k8s-worker2 ~]# systemctl daemon-reload

[root@k8s-worker2 ~]# systemctl enable --now kubelet

[root@k8s-worker2 ~]# systemctl status kubelet

# kubectl get nodes

NAME          STATUS     ROLES    AGE   VERSION
k8s-master1   Ready      <none>   41h   v1.21.10
k8s-master2   Ready      <none>   41h   v1.21.10
k8s-master3   Ready      <none>   41h   v1.21.10
k8s-worker1   Ready      <none>   41h   v1.21.10
k8s-worker2   NotReady   <none>   55s   v1.21.10
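A newly registered node typically shows NotReady for a while, as in the output above. To watch a single node without reading the whole table, the STATUS column can be extracted with a small filter. This is a sketch; node_status is a hypothetical helper that only parses text piped into it.

```shell
# node_status NAME
# Reads `kubectl get nodes` output on stdin and prints the STATUS column
# for NAME. Exits non-zero when the node is not listed.
node_status() {
    awk -v node="$1" '
        $1 == node { print $2; found = 1 }
        END { exit (found ? 0 : 1) }'
}
```

It could be combined with a polling loop such as until [ "$(kubectl get nodes | node_status k8s-worker2)" = "Ready" ]; do sleep 5; done.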

If startup fails, check the logs:

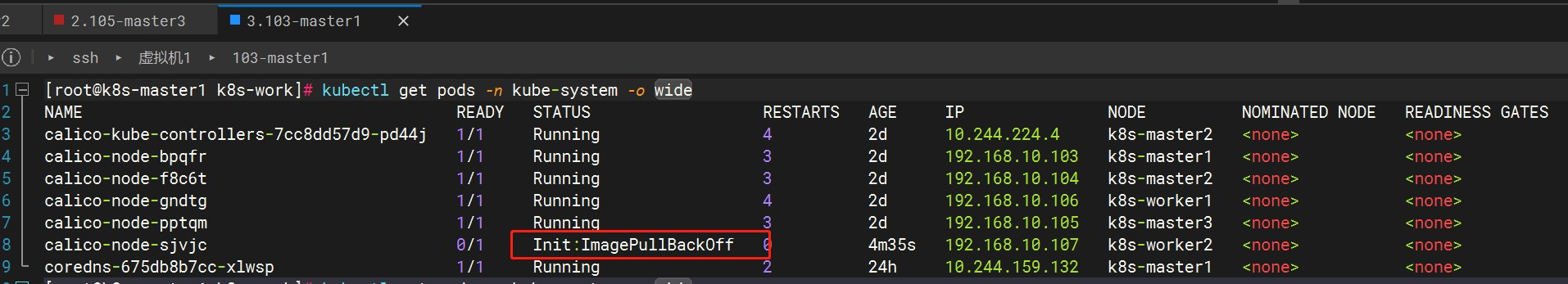

kubectl get pods -n kube-system -o wide

# Or:

less /var/log/messages

If an image fails to pull, retry a few times, or download the image locally, upload it to the server, and load it with docker load -i xxxx.

3.4 Deploy kube-proxy

[root@k8s-master1 k8s-work]# scp kube-proxy.kubeconfig kube-proxy.yaml k8s-worker2:/etc/kubernetes/

[root@k8s-master1 k8s-work]# scp kube-proxy.service k8s-worker2:/usr/lib/systemd/system/

[root@k8s-worker2 ~]# vim /etc/kubernetes/kube-proxy.yaml

apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 192.168.10.107 # this node's address
clientConnection:
  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
clusterCIDR: 10.244.0.0/16
healthzBindAddress: 192.168.10.107:10256 # this node's address
kind: KubeProxyConfiguration
metricsBindAddress: 192.168.10.107:10249 # this node's address
mode: "ipvs"
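The three address fields have to be changed together, which is easy to get wrong in an editor. If the copied file still carries another node's address, the substitution can be scripted. The sketch below assumes that old address is known (192.168.10.106 in the usage line is only an example), and replace_ip is a hypothetical helper name.

```shell
# replace_ip OLD_IP NEW_IP
# Copies stdin to stdout, replacing OLD_IP with NEW_IP wherever it appears
# as a complete address rather than as part of a longer number.
replace_ip() {
    new_ip=$2
    # Escape the dots so they match literally instead of "any character".
    old_pattern=$(printf '%s' "$1" | sed 's/\./\\./g')
    sed -E "s/(^|[^0-9.])${old_pattern}([^0-9.]|\$)/\1${new_ip}\2/g"
}
```

For example, replace_ip 192.168.10.106 192.168.10.107 < kube-proxy.yaml > kube-proxy.worker2.yaml writes the adjusted copy to a new file, leaving the port suffixes such as :10256 untouched.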

[root@k8s-worker2 ~]# mkdir -p /var/lib/kube-proxy

[root@k8s-worker2 ~]# systemctl daemon-reload

[root@k8s-worker2 ~]# systemctl enable --now kube-proxy

[root@k8s-worker2 ~]# systemctl status kube-proxy

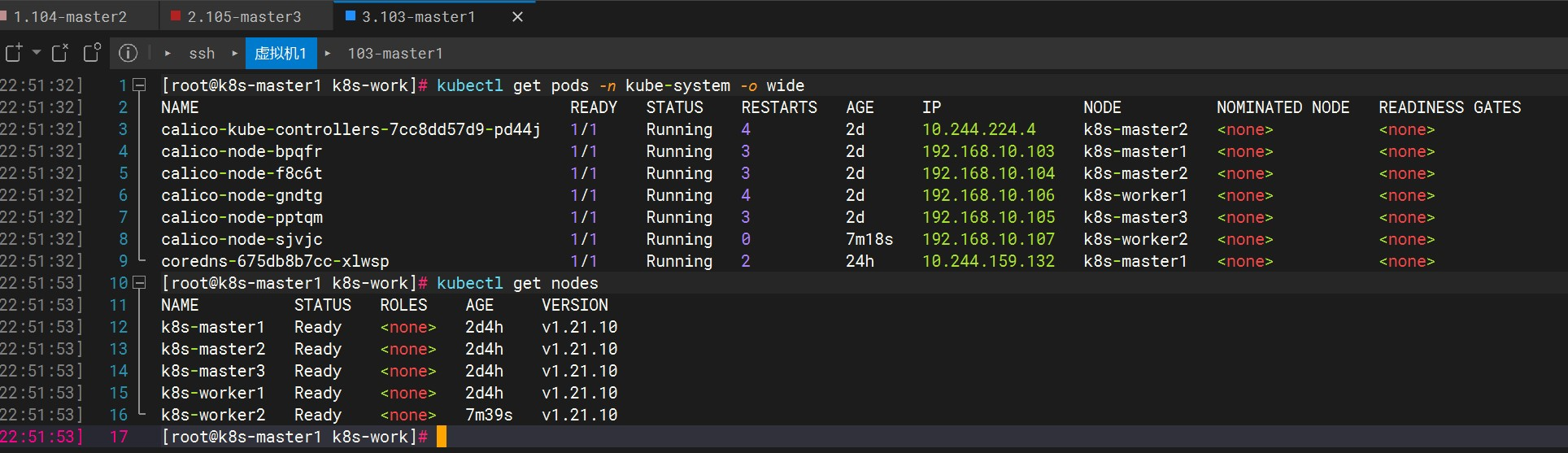

4. Verification

[root@k8s-master1 k8s-work]# kubectl get pods -n kube-system -o wide

NAME                                       READY   STATUS    RESTARTS   AGE     IP               NODE          NOMINATED NODE   READINESS GATES
calico-kube-controllers-7cc8dd57d9-pd44j   1/1     Running   4          2d      10.244.224.4     k8s-master2   <none>           <none>
calico-node-bpqfr                          1/1     Running   3          2d      192.168.10.103   k8s-master1   <none>           <none>
calico-node-f8c6t                          1/1     Running   3          2d      192.168.10.104   k8s-master2   <none>           <none>
calico-node-gndtg                          1/1     Running   4          2d      192.168.10.106   k8s-worker1   <none>           <none>
calico-node-pptqm                          1/1     Running   3          2d      192.168.10.105   k8s-master3   <none>           <none>
calico-node-sjvjc                          1/1     Running   0          7m18s   192.168.10.107   k8s-worker2   <none>           <none>
coredns-675db8b7cc-xlwsp                   1/1     Running   2          24h     10.244.159.132   k8s-master1   <none>           <none>
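The row that matters here is the calico-node pod running on k8s-worker2. To pick out the pods of a single node from this kind of listing, a filter along the following lines may help. It is a sketch: pods_on_node is a hypothetical helper, and it assumes the wide output format shown above, where no column value contains spaces before the NODE column.

```shell
# pods_on_node NODE
# Reads `kubectl get pods -o wide` output on stdin and prints "<pod> <status>"
# for each pod scheduled on NODE. Column positions are taken from the header
# row, using the first NODE column (the header also contains "NOMINATED NODE").
pods_on_node() {
    awk -v node="$1" '
        NR == 1 {
            for (i = 1; i <= NF; i++) {
                if ($i == "NAME"   && !name_col)   name_col = i
                if ($i == "STATUS" && !status_col) status_col = i
                if ($i == "NODE"   && !node_col)   node_col = i
            }
            next
        }
        node_col && $node_col == node { print $name_col, $status_col }'
}
```

For example: kubectl get pods -n kube-system -o wide | pods_on_node k8s-worker2.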

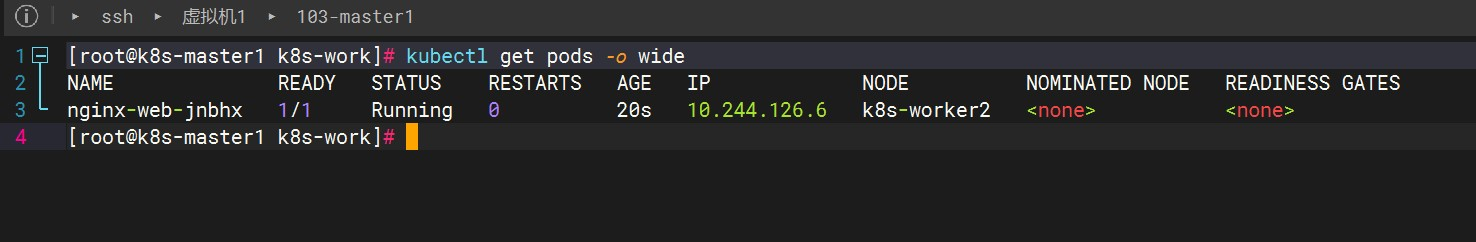

kubectl get nodes --show-labels

kubectl label nodes k8s-worker2 deploy.type=nginxapp

cat > nginx2.yaml << EOF
---
apiVersion: v1
kind: ReplicationController
metadata:
  name: nginx-web
spec:
  replicas: 1
  selector:
    name: nginx
  template:
    metadata:
      labels:
        name: nginx
    spec:
      nodeSelector:
        deploy.type: nginxapp # schedule onto nodes that carry this label
      containers:
        - name: nginx
          image: nginx:1.19.6
          ports:
            - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-service-nodeport
spec:
  ports:
    - port: 80
      targetPort: 80
      nodePort: 30001
      protocol: TCP
  type: NodePort
  selector:
    name: nginx
EOF

kubectl apply -f nginx2.yaml

# List the Pods in all namespaces

kubectl get pods -A

# Show a Pod's description

kubectl describe pod <podname> -n <namespace>

# Delete a Pod

kubectl delete pod <podname> -n <namespace>

Copyright belongs to the original author, 鱼找水需要时间. If there is any infringement, please contact us and we will remove it.