1. Preparation (run on all nodes)

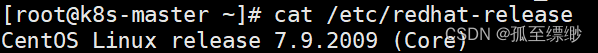

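1.1 Prepare the virtual machines

This is a local deployment, for reference only.

Three nodes, named k8s-node1, k8s-node2, and k8s-master.

Set the hostname and the hosts file

cat <<EOF | sudo tee -a /etc/hosts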

192.168.255.141 k8s-node1

192.168.255.142 k8s-node2

192.168.255.140 k8s-master

EOF

# Run the matching command on each node

sudo hostnamectl set-hostname k8s-node1

sudo hostnamectl set-hostname k8s-node2

sudo hostnamectl set-hostname k8s-master
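Appending to the hosts file with a heredoc adds duplicate lines if the step is run a second time. The following is a minimal sketch of an idempotent variant. The helper name add_host_entry and its optional file argument are illustrative assumptions, not part of the original procedure.

```shell
#!/bin/sh
# Append "IP NAME" to a hosts file only if NAME is not already listed.
# add_host_entry is a hypothetical helper; the file argument defaults to
# /etc/hosts and exists so the function can be tried on a scratch copy.
add_host_entry() {
    ip="$1"
    name="$2"
    file="${3:-/etc/hosts}"
    # Match the name as a whole field on a non-comment line.
    if awk -v n="$name" '
        !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$file"; then
        echo "$name already present in $file"
    else
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}
```

Run as root it could be called as `add_host_entry 192.168.255.141 k8s-node1`, once per node.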

1.2 Update yum

# The update can take a long time

sudo yum update -y

# Set up the repository

sudo yum install -y yum-utils

sudo yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

1.3 System settings

1.3.1 Disable the iptables and firewalld services

systemctl stop firewalld

systemctl disable firewalld

systemctl stop iptables

systemctl disable iptables

1.3.2 Disable SELinux

# Disable permanently (takes effect after a reboot)

sed -i 's/enforcing/disabled/' /etc/selinux/config

# Disable temporarily

setenforce 0

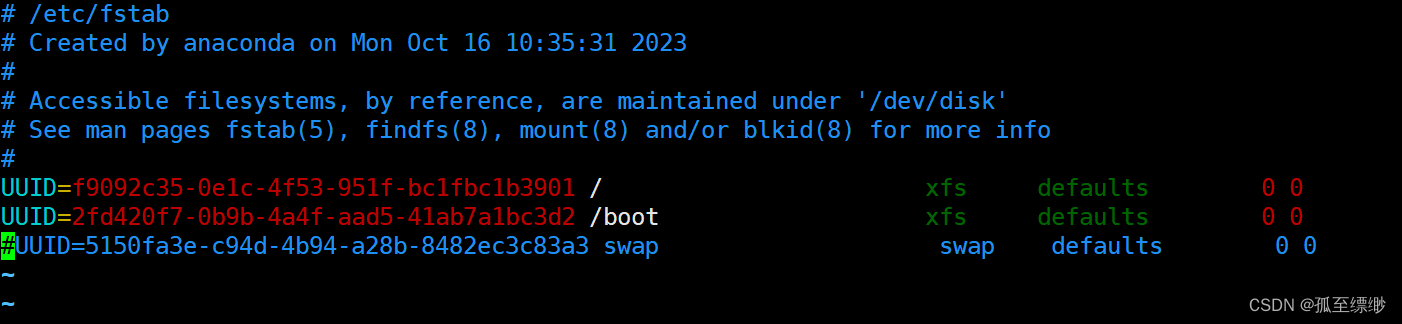
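The sed command only edits the config file, while setenforce changes the running state, so it can be useful to confirm what the file will apply at the next boot. A small sketch follows. The helper name selinux_config_mode is an assumption made for illustration. The running state can be read separately with getenforce.

```shell
#!/bin/sh
# Print the SELINUX= value from a config file (default /etc/selinux/config).
# selinux_config_mode is a hypothetical helper name.
selinux_config_mode() {
    file="${1:-/etc/selinux/config}"
    # Only the uncommented SELINUX= line counts; SELINUXTYPE= is ignored.
    sed -n 's/^SELINUX=\([a-z]*\).*/\1/p' "$file"
}
```

After the edit above, `selinux_config_mode` should print `disabled`.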

1.3.3 Disable the swap partition

# Disable temporarily

swapoff -a

# Disable permanently

vim /etc/fstab

Comment out the swap line, which looks like this:

/dev/mapper/xxx swap xxx

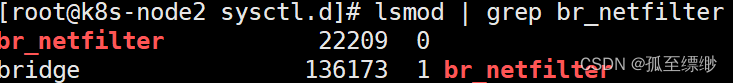
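Editing the fstab by hand on three nodes is easy to get wrong, so the same change can be scripted. This is a sketch only: the helper name disable_swap_entries is an assumption, and it relies on GNU sed's -i and -E options. Trying it on a copy of the file first is a sensible precaution.

```shell
#!/bin/sh
# Comment out every active swap entry in an fstab-style file.
# disable_swap_entries is a hypothetical helper; pass a copy of /etc/fstab
# first to preview the result before touching the real file.
disable_swap_entries() {
    file="${1:-/etc/fstab}"
    # Skip lines that are already comments; prefix "#" to lines that have
    # "swap" as a whole whitespace-delimited field.
    sed -i -E '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$file"
}
```

Running it twice is harmless, because lines that already start with `#` are left alone.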

1.3.4 Adjust kernel parameters for Kubernetes

cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.ipv4.ip_forward = 1

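EOF

# Run the following commands in order. br_netfilter must be loaded before the net.bridge.* keys can be set,

# and the file has to be named explicitly because a bare sysctl -p only reads /etc/sysctl.conf.

modprobe br_netfilter

sysctl -p /etc/sysctl.d/k8s.conf

lsmod | grep br_netfilter

Output: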

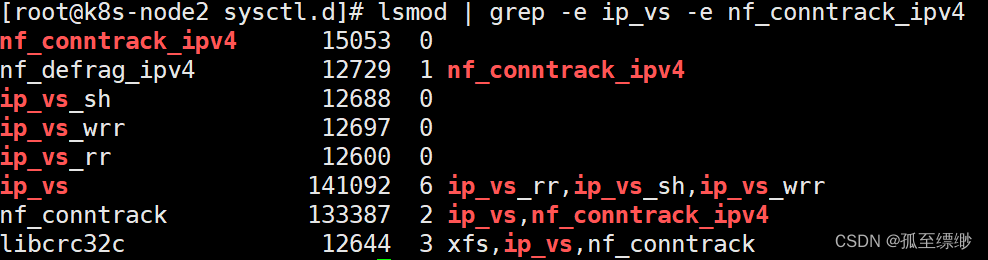
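To confirm the three kernel parameters are actually in effect, the values can be read back from /proc/sys. The sketch below assumes the helper names sysctl_path and check_k8s_sysctls, which are illustrative and not from the original text.

```shell
#!/bin/sh
# Map a sysctl key to its /proc/sys path and report keys that are not 1.
# sysctl_path and check_k8s_sysctls are hypothetical helper names.
sysctl_path() {
    printf '/proc/sys/%s\n' "$(printf '%s' "$1" | tr . /)"
}

check_k8s_sysctls() {
    rc=0
    for key in net.bridge.bridge-nf-call-iptables \
               net.bridge.bridge-nf-call-ip6tables \
               net.ipv4.ip_forward; do
        path=$(sysctl_path "$key")
        # The bridge entries only exist once br_netfilter is loaded.
        if [ ! -r "$path" ]; then
            echo "$key: missing (is br_netfilter loaded?)"
            rc=1
        elif [ "$(cat "$path")" != "1" ]; then
            echo "$key: expected 1, got $(cat "$path")"
            rc=1
        fi
    done
    return $rc
}
```

Calling `check_k8s_sysctls` prints nothing and returns 0 when all three values are 1.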

1.3.5 Configure IPVS

# Install ipset and ipvsadm

yum install ipset ipvsadm -y

# Write the modules to load into a script file

cat <<EOF > /etc/sysconfig/modules/ipvs.modules

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

EOF

# Make the script executable

chmod +x /etc/sysconfig/modules/ipvs.modules

# Run the script

/bin/bash /etc/sysconfig/modules/ipvs.modules

# Check that the modules loaded

lsmod | grep -e ip_vs -e nf_conntrack_ipv4
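Reading the lsmod output by eye works, but a scripted check makes it clearer which module, if any, failed to load. The following sketch compares a list of names against /proc/modules. The helper name missing_modules is an assumption for illustration.

```shell
#!/bin/sh
# Print each requested module that is absent from a /proc/modules-style list.
# missing_modules is a hypothetical helper; the first argument is the list
# file (normally /proc/modules), the rest are module names.
missing_modules() {
    list="$1"
    shift
    for mod in "$@"; do
        # The module name is the first field of each line.
        if ! awk -v m="$mod" '$1 == m { found = 1 } END { exit !found }' "$list"; then
            echo "$mod"
        fi
    done
}
```

For the modules in this section it could be called as `missing_modules /proc/modules ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4`. Empty output means everything is loaded.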


Reboot:

reboot

2. Install Docker and cri-dockerd (run on all nodes)

2.1 Install Docker

2.1.1 Remove old Docker versions (not needed on a freshly installed VM)

sudo yum remove docker \
    docker-client \
    docker-client-latest \
    docker-common \
    docker-latest \
    docker-latest-logrotate \
    docker-logrotate \
    docker-engine

2.1.2 Install Docker and its dependencies

sudo yum install -y docker-ce docker-ce-cli containerd.io docker-buildx-plugin docker-compose-plugin

2.1.3 Start Docker and enable it at boot

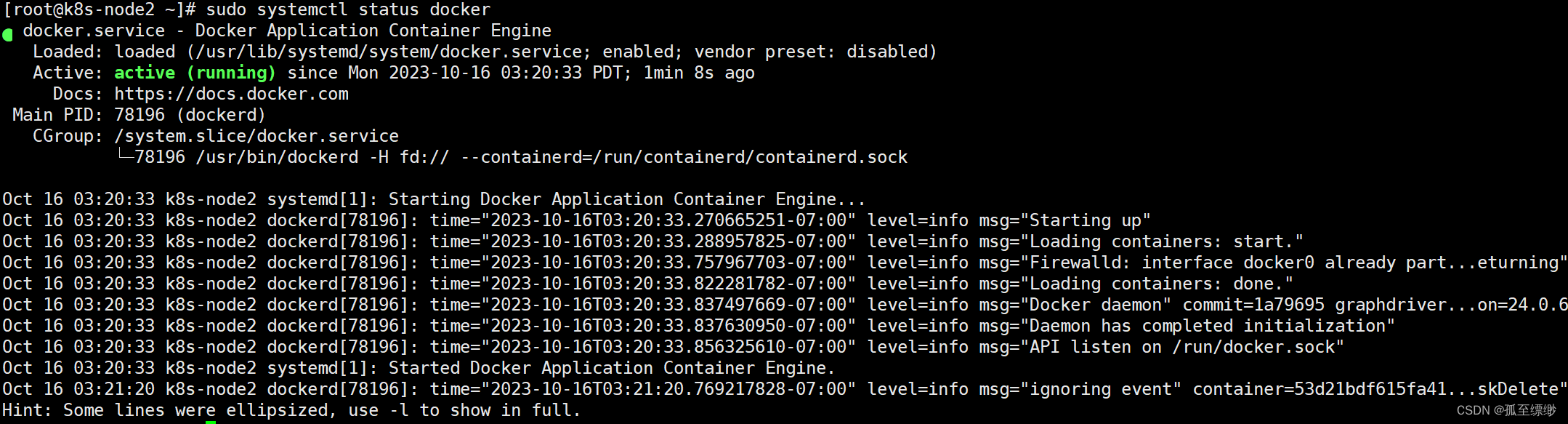

# Start Docker

sudo systemctl start docker

# Enable Docker at boot

sudo systemctl enable docker

# Verify

sudo systemctl status docker

2.2 Install cri-dockerd

Kubernetes 1.24 removed dockershim, so from that version on cri-dockerd is needed for Kubernetes to talk to Docker.

2.2.1 Download cri-dockerd

# If wget is not installed, run

sudo yum install -y wget

# Download

sudo wget https://github.com/Mirantis/cri-dockerd/releases/download/v0.3.4/cri-dockerd-0.3.4-3.el7.x86_64.rpm

# Install

sudo rpm -ivh cri-dockerd-0.3.4-3.el7.x86_64.rpm

# Reload systemd unit files

sudo systemctl daemon-reload

2.2.2 Configure a registry mirror

sudo tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://c12xt3od.mirror.aliyuncs.com"]

}

EOF

2.2.3 Edit the service file

Open the unit file and find line 10, which starts with ExecStart=:

vi /usr/lib/systemd/system/cri-docker.service

Change that line to:

ExecStart=/usr/bin/cri-dockerd --network-plugin=cni --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.7
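The same edit can be made without opening an editor, which helps when repeating it on every node. This is a sketch under stated assumptions: set_execstart is a hypothetical helper, it uses GNU sed's -i option, and it matches the ExecStart= line by prefix rather than by line number.

```shell
#!/bin/sh
# Replace the ExecStart= line of a systemd unit file in place.
# set_execstart is a hypothetical helper; the command must not contain
# "|", "&" or backslashes, which are special in the sed expression used here.
set_execstart() {
    unit="$1"
    cmd="$2"
    sed -i "s|^ExecStart=.*|ExecStart=${cmd}|" "$unit"
}
```

For this guide the call would be `set_execstart /usr/lib/systemd/system/cri-docker.service "/usr/bin/cri-dockerd --network-plugin=cni --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.7"`, followed by `systemctl daemon-reload`.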

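2.2.4 Enable and restart the Docker components

# Reload systemd unit files

sudo systemctl daemon-reload

# Restart Docker so it picks up the new daemon.json

sudo systemctl restart docker

# Enable cri-dockerd at boot

sudo systemctl enable cri-docker.socket cri-docker

# Start cri-dockerd

sudo systemctl start cri-docker.socket cri-docker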

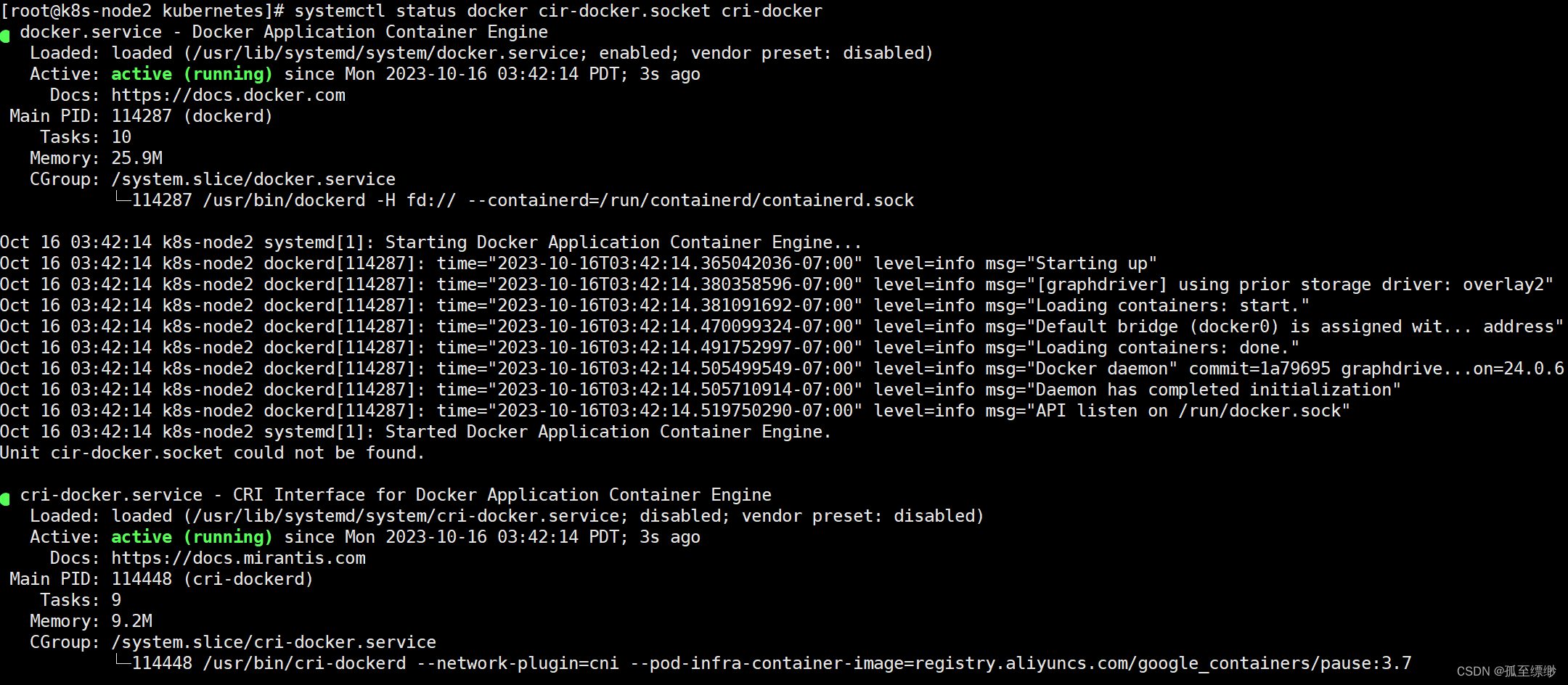

# Check the status of the Docker components

sudo systemctl status docker cri-docker.socket cri-docker


3. Install Kubernetes

3.1 Install kubectl (run on all nodes)

# The latest stable version at the time of writing was v1.28.2

# Download

curl -LO "https://dl.k8s.io/release/$(curl -L -s https://dl.k8s.io/release/stable.txt)/bin/linux/amd64/kubectl"

# Verify the checksum

curl -LO "https://dl.k8s.io/release/$(curl -L -s https://dl.k8s.io/release/stable.txt)/bin/linux/amd64/kubectl.sha256"

echo "$(cat kubectl.sha256)  kubectl" | sha256sum --check

# Install

sudo install -o root -g root -m 0755 kubectl /usr/local/bin/kubectl

# Test

kubectl version --client
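The checksum step compares the downloaded binary against a file that contains only the hex digest. The sketch below shows the same comparison written out explicitly, which can be reused for other downloads in this guide. The helper name verify_sha256 is an assumption for illustration.

```shell
#!/bin/sh
# Verify FILE against a checksum file that holds only the hex digest,
# which is the format of the kubectl.sha256 download.
# verify_sha256 is a hypothetical helper name.
verify_sha256() {
    file="$1"
    sumfile="$2"
    expected=$(tr -d '[:space:]' < "$sumfile")
    actual=$(sha256sum "$file" | awk '{ print $1 }')
    if [ "$expected" = "$actual" ]; then
        echo "$file: OK"
    else
        echo "$file: FAILED" >&2
        return 1
    fi
}
```

A call such as `verify_sha256 kubectl kubectl.sha256` returns non-zero on a mismatch, so it can gate the install step in a script.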

3.2 Install kubeadm (run on all nodes)

# Switch to a mirror in China

cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=1

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

exclude=kubelet kubeadm kubectl

EOF

# Install

sudo yum install -y kubeadm-1.28.2-0 kubelet-1.28.2-0 kubectl-1.28.2-0 --disableexcludes=kubernetes

# Enable at boot and start now

sudo systemctl enable --now kubelet

3.3 Install runc (run on all nodes)

# Download runc.amd64

sudo wget https://github.com/opencontainers/runc/releases/download/v1.1.9/runc.amd64

# Install

sudo install -m 755 runc.amd64 /usr/local/bin/runc

# Verify

runc -v

3.4 Deploy the cluster

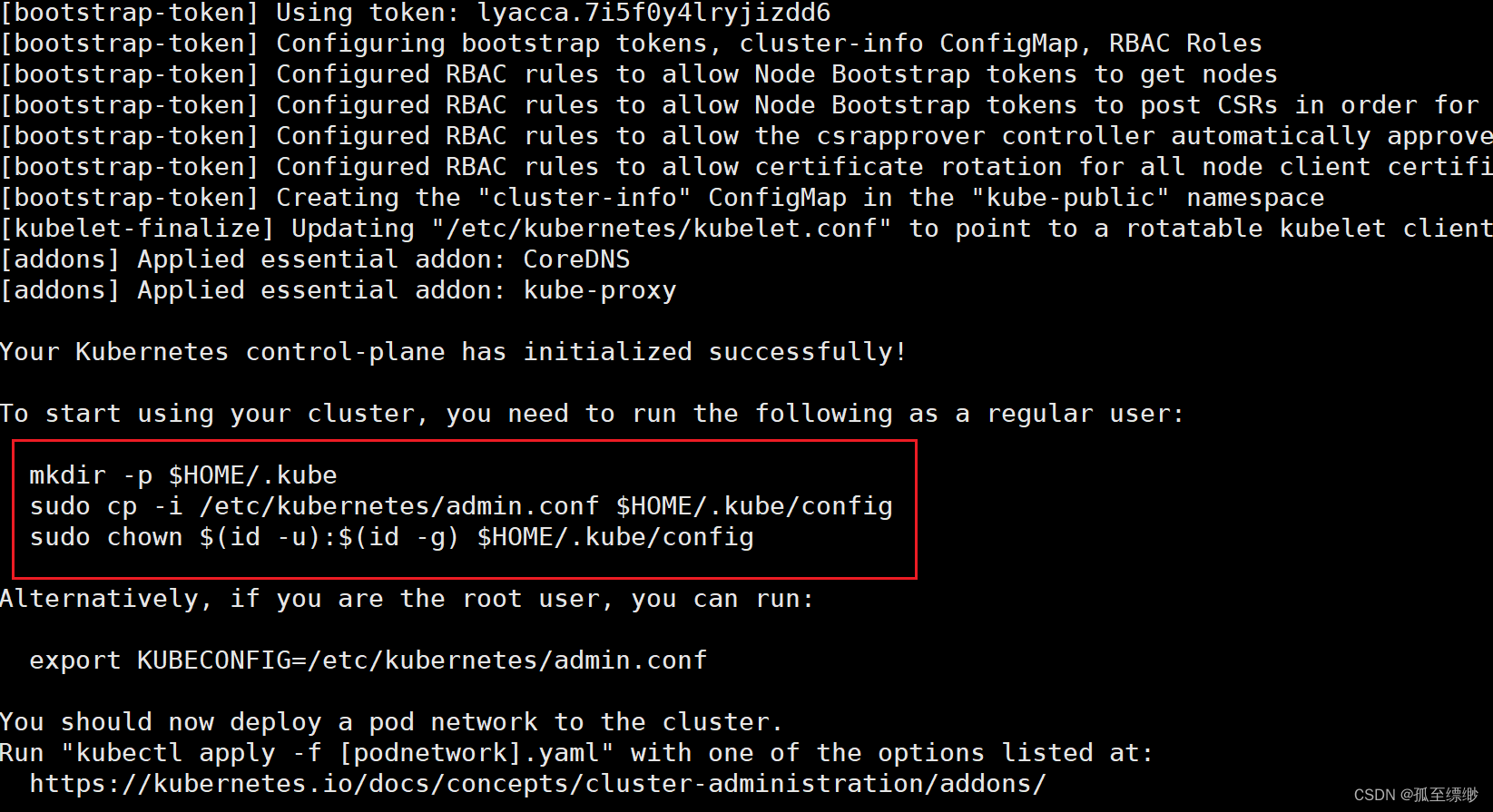

3.4.1 Initialize the cluster (run on the master node)

# Run kubeadm init

kubeadm init --node-name=k8s-master --image-repository=registry.aliyuncs.com/google_containers --cri-socket=unix:///var/run/cri-dockerd.sock --apiserver-advertise-address=192.168.255.140 --pod-network-cidr=10.244.0.0/16 --service-cidr=10.96.0.0/12

# Parameter to change

--apiserver-advertise-address # The address the API server advertises; I set it to the master node's IP

# After initialization succeeds, run the following commands

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

# On the master node, copy the config file to the worker nodes (so that kubectl can be used there)

scp /etc/kubernetes/admin.conf 192.168.255.141:/etc/kubernetes/

scp /etc/kubernetes/admin.conf 192.168.255.142:/etc/kubernetes/

Output:

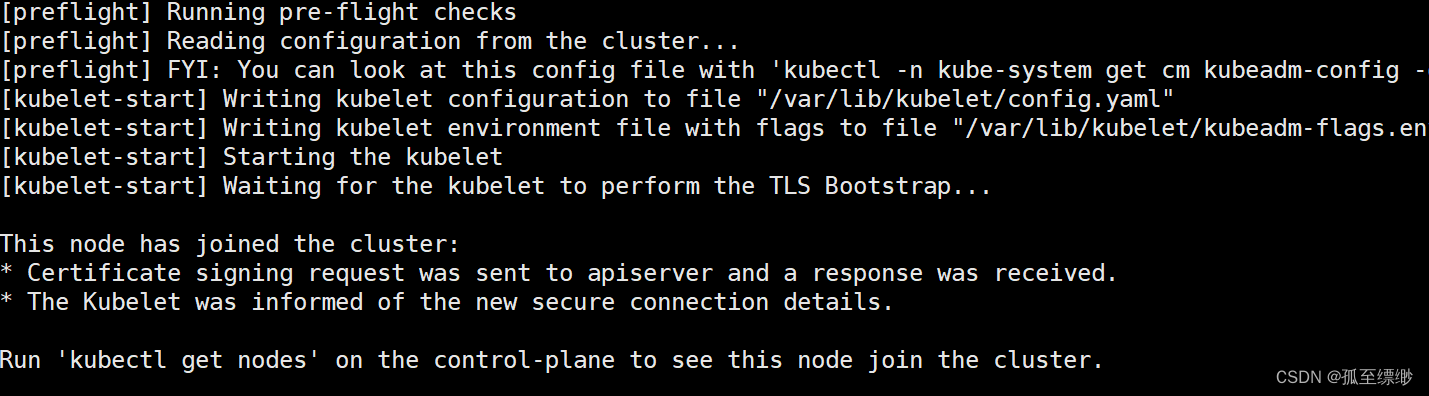

3.4.2 Join the worker nodes (run on the worker nodes)

# On the worker node, check that admin.conf has arrived

ls /etc/kubernetes/

admin.conf manifests

# Point KUBECONFIG at admin.conf; writing it to the profile makes it permanent

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

# Load it

source ~/.bash_profile

# --------------------------------- Join the cluster ---------------------------------

# 1. After kubeadm init succeeds on the master node, it prints a kubeadm join xxx xxx command; copy it to the worker node and run it.

# 2. If you did not save the kubeadm join command, or want to add a new node to the cluster,

# first get the token and discovery-token-ca-cert-hash on the master node.

# Get the token

kubeadm token list # List existing tokens

kubeadm token create # Run this if there is no token, to create a new one

# Get the discovery-token-ca-cert-hash

openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //'

# 3. Run kubeadm join on the worker node

# Substitute the token and discovery-token-ca-cert-hash you obtained before running it

kubeadm join 192.168.255.140:6443 --token y8v2nc.ie2ovh1kxqtgppbo --discovery-token-ca-cert-hash sha256:1fa593d1bc58653afaafc9ca492bde5b8e40e9adef055e8e939d4eb34fb436bf --cri-socket unix:///var/run/cri-dockerd.sock
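Since the token and hash are gathered separately, it can help to assemble the final command in one place and review it before running. The sketch below only prints the command. The helper name build_join_command is an assumption for illustration. As an alternative, `kubeadm token create --print-join-command` on the master prints a ready-made join command, to which the `--cri-socket` option still has to be appended in this setup.

```shell
#!/bin/sh
# Assemble a kubeadm join command line from its parts.
# build_join_command is a hypothetical helper; it only prints the command
# so it can be reviewed before being run on a worker node.
build_join_command() {
    endpoint="$1"   # API server address, e.g. 192.168.255.140:6443
    token="$2"      # from: kubeadm token create
    hash="$3"       # hex digest printed by the openssl pipeline
    socket="${4:-unix:///var/run/cri-dockerd.sock}"
    printf 'kubeadm join %s --token %s --discovery-token-ca-cert-hash sha256:%s --cri-socket %s\n' \
        "$endpoint" "$token" "$hash" "$socket"
}
```

The fourth argument defaults to the cri-dockerd socket used throughout this guide.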

3.4.3 Rejoin the cluster (run on the worker node)

# First run

kubeadm reset --cri-socket unix:///var/run/cri-dockerd.sock

# Then get the token and discovery-token-ca-cert-hash again, and finally run

kubeadm join 192.168.255.140:6443 --token y8v2nc.ie2ovh1kxqtgppbo --discovery-token-ca-cert-hash sha256:1fa593d1bc58653afaafc9ca492bde5b8e40e9adef055e8e939d4eb34fb436bf --cri-socket unix:///var/run/cri-dockerd.sock

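3.4.4 Install the network plugin: download it, then apply it

# Download; if the network is unreliable, copy the kube-flannel.yml shown further down instead

sudo wget https://github.com/flannel-io/flannel/releases/download/v0.22.3/kube-flannel.yml

# Apply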

kubectl apply -f kube-flannel.yml

Or create the file by hand and paste in the following content:

vi kube-flannel.yml

# kube-flannel.yml
apiVersion: v1
kind: Namespace
metadata:
  labels:
    k8s-app: flannel
    pod-security.kubernetes.io/enforce: privileged
  name: kube-flannel
---
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: flannel
  name: flannel
  namespace: kube-flannel
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    k8s-app: flannel
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
- apiGroups:
  - networking.k8s.io
  resources:
  - clustercidrs
  verbs:
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    k8s-app: flannel
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-flannel
---
apiVersion: v1
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
kind: ConfigMap
metadata:
  labels:
    app: flannel
    k8s-app: flannel
    tier: node
  name: kube-flannel-cfg
  namespace: kube-flannel
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  labels:
    app: flannel
    k8s-app: flannel
    tier: node
  name: kube-flannel-ds
  namespace: kube-flannel
spec:
  selector:
    matchLabels:
      app: flannel
      k8s-app: flannel
  template:
    metadata:
      labels:
        app: flannel
        k8s-app: flannel
        tier: node
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      containers:
      - args:
        - --ip-masq
        - --kube-subnet-mgr
        command:
        - /opt/bin/flanneld
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        image: docker.io/flannel/flannel:v0.22.3
        name: kube-flannel
        resources:
          requests:
            cpu: 100m
            memory: 50Mi
        securityContext:
          capabilities:
            add:
            - NET_ADMIN
            - NET_RAW
          privileged: false
        volumeMounts:
        - mountPath: /run/flannel
          name: run
        - mountPath: /etc/kube-flannel/
          name: flannel-cfg
        - mountPath: /run/xtables.lock
          name: xtables-lock
      hostNetwork: true
      initContainers:
      - args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        command:
        - cp
        image: docker.io/flannel/flannel-cni-plugin:v1.2.0
        name: install-cni-plugin
        volumeMounts:
        - mountPath: /opt/cni/bin
          name: cni-plugin
      - args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        command:
        - cp
        image: docker.io/flannel/flannel:v0.22.3
        name: install-cni
        volumeMounts:
        - mountPath: /etc/cni/net.d
          name: cni
        - mountPath: /etc/kube-flannel/
          name: flannel-cfg
      priorityClassName: system-node-critical
      serviceAccountName: flannel
      tolerations:
      - effect: NoSchedule
        operator: Exists
      volumes:
      - hostPath:
          path: /run/flannel
        name: run
      - hostPath:
          path: /opt/cni/bin
        name: cni-plugin
      - hostPath:
          path: /etc/cni/net.d
        name: cni
      - configMap:
          name: kube-flannel-cfg
        name: flannel-cfg
      - hostPath:
          path: /run/xtables.lock
          type: FileOrCreate
        name: xtables-lock
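The "Network" value in the manifest's net-conf.json has to match the --pod-network-cidr passed to kubeadm init (10.244.0.0/16 in this guide), otherwise pods will not get working addresses. A small sketch for checking that before applying the file follows. The helper names flannel_network and check_pod_cidr are assumptions for illustration.

```shell
#!/bin/sh
# Print the "Network" CIDR from the net-conf.json section of a flannel manifest.
# flannel_network and check_pod_cidr are hypothetical helper names.
flannel_network() {
    grep -o '"Network": *"[^"]*"' "$1" | cut -d'"' -f4
}

# Compare the manifest's CIDR with the one given to kubeadm init.
check_pod_cidr() {
    manifest="$1"
    expected="$2"
    actual=$(flannel_network "$manifest")
    if [ "$actual" != "$expected" ]; then
        echo "mismatch: manifest has $actual, cluster uses $expected" >&2
        return 1
    fi
}
```

With the values used here, `check_pod_cidr kube-flannel.yml 10.244.0.0/16` should return 0 silently.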

3.5 Test the Kubernetes cluster

# The commands below are normally run on the master node; they also work on a worker node if kubectl is usable there

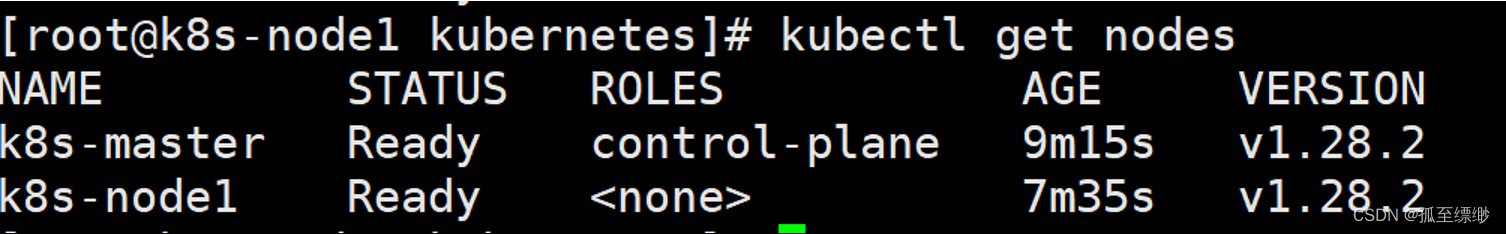

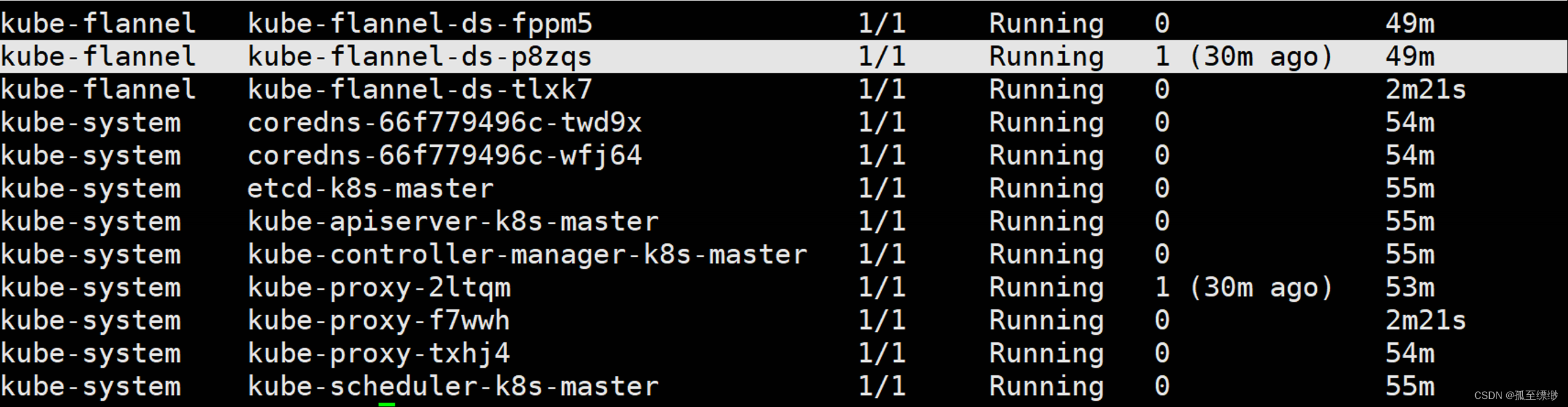

kubectl get nodes

kubectl get pod -A

Output:

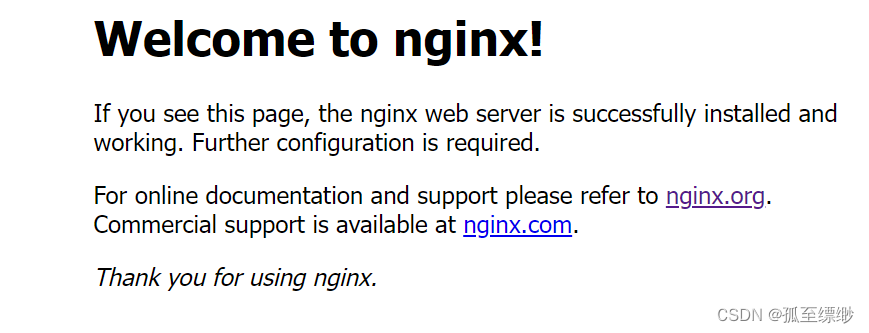

3.5.1 Test with nginx

vi nginx-deployment.yaml

kubectl apply -f nginx-deployment.yaml

# nginx-deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:latest
        ports:
        - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-service
spec:
  selector:
    app: nginx
  ports:
  - name: http
    port: 80
    targetPort: 80
    nodePort: 30080
  type: NodePort

Check the result:

[root@k8s-master k8s]# kubectl get pod,svc |grep nginx

pod/nginx-deployment-7c79c4bf97-4xzc9 1/1 Running 0 83s

pod/nginx-deployment-7c79c4bf97-lp4fn 1/1 Running 0 83s

pod/nginx-deployment-7c79c4bf97-vt8wh 1/1 Running 0 83s

service/nginx-service NodePort 10.97.154.241 <none> 80:30080/TCP 83s
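The listing above shows three pods in the Running state, matching the three replicas of the Deployment. That check can be scripted by counting the STATUS column, as in the following sketch. The helper name count_running is an assumption for illustration.

```shell
#!/bin/sh
# Count pods whose STATUS column is Running in "kubectl get pod" output
# read from standard input. count_running is a hypothetical helper name.
count_running() {
    awk '$3 == "Running" { n++ } END { print n + 0 }'
}
```

It could be fed with `kubectl get pod -l app=nginx --no-headers | count_running` and the result compared with the replica count.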

Visit http://192.168.255.140:30080/. If the nginx welcome page appears, the cluster is working.

Copyright belongs to the original author, 孤至缥缈.