Preface

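This article walks through building a Kubernetes 1.29.x cluster with kubeadm, and is meant to serve as a general deployment guide for later versions as well. Several container runtimes are available: containerd, CRI-O, and Docker Engine (via cri-dockerd). This guide uses containerd.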

See the containerd installation reference manual.

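containerd has a shorter call chain and fewer components, is more stable, supports the OCI standard, and uses fewer node resources. containerd is the recommended choice.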

Choose Docker as the runtime in the following cases:

You need docker in docker.

You need to run docker build/push/save/load and similar commands on the K8s nodes.

You need to call the Docker API.

You need docker compose or docker swarm.

1. Prepare three virtual machines

IP             Spec   OS          Hostname
192.168.31.7   2c4g   CentOS 7.9  k8s-master01
192.168.31.8   2c4g   CentOS 7.9  k8s-node01
192.168.31.9   2c4g   CentOS 7.9  k8s-node02

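2. Preparation before installing kubeadm

Reference, official documentation: Installing kubeadm

2.1 Kubernetes uses hostnames to tell cluster nodes apart, so no two hosts may share a hostname. Use hostnamectl (which updates /etc/hostname) to rename each host:

Name the master node k8s-master01 and the worker nodes k8s-node01 and k8s-node02. Run each command below on its corresponding machine.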

hostnamectl set-hostname k8s-master01

hostnamectl set-hostname k8s-node01

hostnamectl set-hostname k8s-node02

2.2 Set SELinux to permissive mode (effectively disabling it)

sudo setenforce 0
sudo sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

2.3 Disable the firewall

systemctl stop firewalld.service

systemctl disable firewalld.service

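2.4 Disable swap (swapping memory to disk degrades performance, and by default kubelet refuses to start while swap is enabled)

$ sudo swapoff -a

$ sudo sed -ri '/\sswap\s/s/^#?/#/' /etc/fstab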
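
The sed expression above can be tried on a copy of fstab before touching the real file. The following is a minimal sketch, assuming GNU sed (as shipped with CentOS); the function names `disable_swap_entries` and `active_swap_entries` are hypothetical helpers, not part of any tool.

```shell
# disable_swap_entries FILE
# Comment out every line of FILE that has "swap" as a whitespace-delimited field.
# "s/^#?/#/" replaces an optional leading "#" with exactly one "#",
# so running the function twice does not stack comment markers.
disable_swap_entries() {
    sed -ri '/\sswap\s/s/^#?/#/' "$1"
}

# active_swap_entries FILE
# Print the number of uncommented swap entries left in FILE (0 means none).
active_swap_entries() {
    grep -Ec '^[^#].*[[:space:]]swap[[:space:]]' "$1" || true
}
```

For example, `cp /etc/fstab /tmp/fstab.test && disable_swap_entries /tmp/fstab.test && active_swap_entries /tmp/fstab.test` shows what would change without modifying the system.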

2.5 Configure hostname resolution

cat >> /etc/hosts <<EOF
192.168.31.7 k8s-master01
192.168.31.8 k8s-node01
192.168.31.9 k8s-node02
EOF
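
Because `cat >>` appends unconditionally, running the step twice duplicates the entries. A minimal sketch of an idempotent alternative follows; `add_host_entry` is a hypothetical helper name, and the match treats the hostname as a regular expression, which is fine for the names used here.

```shell
# add_host_entry FILE IP NAME
# Append "IP NAME" to FILE only if no existing line already lists NAME
# as a whitespace-delimited hostname.
add_host_entry() {
    if ! grep -Eq "[[:space:]]$3([[:space:]]|\$)" "$1"; then
        printf '%s %s\n' "$2" "$3" >> "$1"
    fi
}
```

Usage on a node would look like `add_host_entry /etc/hosts 192.168.31.7 k8s-master01`, repeated for each of the three hosts.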

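2.6 Enable IPv4 forwarding and let iptables see bridged traffic

Create a file named /etc/modules-load.d/k8s.conf and write overlay and br_netfilter into it. These two kernel modules are required for containerd to run.

(overlay module: provides the overlay filesystem (OverlayFS). OverlayFS stacks layers on top of an existing filesystem to implement a union mount. It lets several directory trees be merged into a single logical filesystem with a layer hierarchy and priority, so files and directories from multiple layers appear together without actually being copied or moved.

br_netfilter module: supports Linux bridge networking and integrates it with the firewall (netfilter) subsystem. Bridging connects different network interfaces together for LAN communication and is implemented by the Linux kernel's bridge feature. With br_netfilter, traffic crossing a Linux bridge device can be filtered and NATed (network address translation) by netfilter.)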

cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf
overlay
br_netfilter
EOF

sudo modprobe overlay
sudo modprobe br_netfilter

# Set the required sysctl parameters; they persist across reboots
cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.ipv4.ip_forward = 1
EOF

# Apply the sysctl parameters without rebooting
sudo sysctl --system

# Confirm that the modules are loaded
lsmod | grep br_netfilter
lsmod | grep overlay

# Confirm that net.bridge.bridge-nf-call-iptables, net.bridge.bridge-nf-call-ip6tables
# and net.ipv4.ip_forward are set to 1 in your sysctl configuration
sysctl net.bridge.bridge-nf-call-iptables net.bridge.bridge-nf-call-ip6tables net.ipv4.ip_forward
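
Reading three values by eye is easy to get wrong across several nodes, so the final check can be turned into a pass/fail test. This is a small sketch that assumes the usual `key = value` output format of sysctl; `sysctl_all_enabled` is a hypothetical name.

```shell
# sysctl_all_enabled
# Read "key = value" lines on stdin (the format printed by sysctl) and
# succeed only if at least one line was read and every value equals 1.
# Keys with any other value are reported on stdout.
sysctl_all_enabled() {
    awk -F' = ' '
        { seen++ }
        $2 != "1" { print "not enabled: " $1; bad++ }
        END { exit (seen == 0 || bad > 0) }
    '
}
```

It is meant to be fed from the command above, for example `sysctl net.bridge.bridge-nf-call-iptables net.bridge.bridge-nf-call-ip6tables net.ipv4.ip_forward | sysctl_all_enabled && echo OK`.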

3. Install containerd

Package download location: containerd

1. Extract the package

# Extract into /usr/local
tar Czxvf /usr/local/ containerd-1.7.16-linux-amd64.tar.gz

2. Generate the default configuration file

mkdir -p /etc/containerd

containerd config default > /etc/containerd/config.toml

3. Manage containerd with systemd

wget -O /usr/lib/systemd/system/containerd.service https://raw.githubusercontent.com/containerd/containerd/main/containerd.service

systemctl daemon-reload

systemctl enable --now containerd

If the download above does not work, paste and run the following instead:

# Generate the systemd service file
cat <<EOF | tee /etc/systemd/system/containerd.service
[Unit]
Description=containerd container runtime
Documentation=https://containerd.io
After=network.target local-fs.target

[Service]
ExecStartPre=-/sbin/modprobe overlay
ExecStart=/usr/local/bin/containerd
Type=notify
Delegate=yes
KillMode=process
Restart=always
RestartSec=5
# Having non-zero Limit*s causes performance problems due to accounting overhead
# in the kernel. We recommend using cgroups to do container-local accounting.
LimitNPROC=infinity
LimitCORE=infinity
LimitNOFILE=1048576
# Comment TasksMax if your systemd version does not support it.
# Only systemd 226 and above support this version.
TasksMax=infinity
OOMScoreAdjust=-999

[Install]
WantedBy=multi-user.target
EOF

# Reload systemd and start containerd
systemctl daemon-reload
systemctl enable --now containerd

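Two parameters here are important:

Delegate: lets containerd and the runtime manage the cgroups of the containers they create. Without this option, systemd moves the processes into its own cgroups, and containerd can no longer read the containers' resource usage correctly.

KillMode: controls how processes are killed when containerd is stopped. By default, systemd looks up the service's cgroup and kills all of containerd's child processes as well. Setting it to process means only containerd itself is killed, so running containers survive a containerd restart. KillMode accepts the following values:

control-group (default): all processes in the service's control group are killed

process: only the main process is killed

mixed: the main process receives SIGTERM and the child processes receive SIGKILL

none: no process is killed; only the service's stop command is run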
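
To confirm that the installed unit file actually carries these two settings, the file can be inspected directly. The sketch below is only an illustration; `unit_value` and `check_containerd_unit` are hypothetical helper names, and the parsing assumes plain `Key=value` lines as in the unit shown earlier.

```shell
# unit_value FILE KEY
# Print the value of the last "KEY=value" line in a systemd unit file
# (prints nothing if KEY is not set).
unit_value() {
    sed -n "s/^$2=//p" "$1" | tail -n 1
}

# check_containerd_unit FILE
# Succeed only if the unit sets Delegate=yes and KillMode=process.
check_containerd_unit() {
    [ "$(unit_value "$1" Delegate)" = "yes" ] &&
        [ "$(unit_value "$1" KillMode)" = "process" ]
}
```

On a node this could be run as `check_containerd_unit /etc/systemd/system/containerd.service && echo OK`; `systemctl show containerd -p Delegate -p KillMode` reports the values systemd has actually loaded.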

4. Modify the default configuration file

# 1. Make the runtime use the systemd cgroup driver
sed -i '/SystemdCgroup/s/false/true/' /etc/containerd/config.toml

# 2. Restart containerd
systemctl restart containerd
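
Since a silent no-op from sed would leave kubelet (cgroupDriver: systemd) and containerd on different cgroup drivers, it can help to verify the edit. A minimal sketch, assuming GNU sed; both function names are hypothetical.

```shell
# enable_systemd_cgroup FILE
# Flip "SystemdCgroup = false" to "SystemdCgroup = true" in a containerd config.
enable_systemd_cgroup() {
    sed -i '/SystemdCgroup/s/false/true/' "$1"
}

# systemd_cgroup_enabled FILE
# Succeed if FILE contains a "SystemdCgroup = true" setting.
systemd_cgroup_enabled() {
    grep -Eq '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' "$1"
}
```

For example: `systemd_cgroup_enabled /etc/containerd/config.toml || echo "SystemdCgroup is not enabled"`.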

4. Install runc

runc is a CLI tool for spawning and running containers according to the OCI specification.

Download link

wget https://github.com/opencontainers/runc/releases/download/v1.1.12/runc.amd64

install -m 755 runc.amd64 /usr/local/sbin/runc

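5. Install CNI plugins

CNI (Container Network Interface) is a standard design and set of libraries that makes it easier to configure container networking whenever a container is created or destroyed. This step mainly installs the dependencies needed by nerdctl, the containerd client tool.

There are two client tools, crictl and nerdctl. nerdctl is recommended because its syntax matches the docker command.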

# wget https://github.com/containernetworking/plugins/releases/download/v1.4.1/cni-plugins-linux-amd64-v1.4.1.tgz
# mkdir -p /opt/cni/bin
[root@clouderamanager-15 containerd]# tar Cxzvf /opt/cni/bin cni-plugins-linux-amd64-v1.4.1.tgz
# ll /opt/cni/bin/
total 128528
-rwxr-xr-x 1 1001 127   4119661 Mar 12 18:56 bandwidth
-rwxr-xr-x 1 1001 127   4662227 Mar 12 18:56 bridge
-rwxr-xr-x 1 1001 127  11065251 Mar 12 18:56 dhcp
-rwxr-xr-x 1 1001 127   4306546 Mar 12 18:56 dummy
-rwxr-xr-x 1 1001 127   4751593 Mar 12 18:56 firewall
-rwxr-xr-x 1 root root  2856252 Feb 21  2020 flannel
-rwxr-xr-x 1 1001 127   4198427 Mar 12 18:56 host-device
-rwxr-xr-x 1 1001 127   3560496 Mar 12 18:56 host-local
-rwxr-xr-x 1 1001 127   4324636 Mar 12 18:56 ipvlan
-rw-r--r-- 1 1001 127     11357 Mar 12 18:56 LICENSE
-rwxr-xr-x 1 1001 127   3651038 Mar 12 18:56 loopback
-rwxr-xr-x 1 1001 127   4355073 Mar 12 18:56 macvlan
-rwxr-xr-x 1 root root 37545270 Feb 21  2020 multus
-rwxr-xr-x 1 1001 127   4095898 Mar 12 18:56 portmap
-rwxr-xr-x 1 1001 127   4476535 Mar 12 18:56 ptp
-rw-r--r-- 1 1001 127      2343 Mar 12 18:56 README.md
-rwxr-xr-x 1 root root  2641877 Feb 21  2020 sample
-rwxr-xr-x 1 1001 127   3861176 Mar 12 18:56 sbr
-rwxr-xr-x 1 1001 127   3120090 Mar 12 18:56 static
-rwxr-xr-x 1 1001 127   4381887 Mar 12 18:56 tap
-rwxr-xr-x 1 root root  7506830 Aug 18  2021 tke-route-eni
-rwxr-xr-x 1 1001 127   3743844 Mar 12 18:56 tuning
-rwxr-xr-x 1 1001 127   4319235 Mar 12 18:56 vlan
-rwxr-xr-x 1 1001 127   4008392 Mar 12 18:56 vrf

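5.1 Install nerdctl

nerdctl download link

[root@k8s-master01 ~]# tar xf nerdctl-1.7.6-linux-amd64.tar.gz
[root@k8s-master01 ~]# cp nerdctl /usr/local/bin/

See the help output for usage; it is essentially the same as docker. One difference: to see Kubernetes containers you must pass the namespace. The commands below list the namespaces and the containers in one of them. You will not see this output yet, because your cluster is not installed at this point; it is shown from an existing environment for illustration. Run the commands again after finishing this guide to see the same kind of output.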

[root@k8s-master01 ~]# nerdctl namespace ls
NAME       CONTAINERS    IMAGES    VOLUMES    LABELS
default    2             3         0
k8s.io     18            28        0

[root@k8s-master01 ~]# nerdctl -n k8s.io ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES
064fa2784341 registry.aliyuncs.com/google_containers/pause:3.9 "/pause" 55 minutes ago Up k8s://kube-system/kube-apiserver-k8s-master01
09c26f251e2b registry.aliyuncs.com/google_containers/pause:3.9 "/pause" 54 minutes ago Up k8s://kube-system/coredns-857d9ff4c9-pfv7g
1c76bb267dd7 registry.aliyuncs.com/google_containers/coredns:v1.11.1 "/coredns -conf /etc…" 54 minutes ago Up k8s://kube-system/coredns-857d9ff4c9-j2mpk/coredns
26056672e64f registry.aliyuncs.com/google_containers/pause:3.9 "/pause" 55 minutes ago Up k8s://kube-system/kube-controller-manager-k8s-master01
39c8b14c7900 docker.io/flannel/flannel:v0.25.1 "/opt/bin/flanneld -…" 55 minutes ago Up k8s://kube-flannel/kube-flannel-ds-4lvmj/kube-flannel
5a2179bd3586 registry.aliyuncs.com/google_containers/pause:3.9 "/pause" 55 minutes ago Up k8s://kube-system/kube-proxy-pn9nf
5b08a8bc55b3 registry.aliyuncs.com/google_containers/kube-apiserver:v1.29.4 "kube-apiserver --ad…" 55 minutes ago Up k8s://kube-system/kube-apiserver-k8s-master01/kube-apiserver
5c5cd79490e2 registry.aliyuncs.com/google_containers/kube-controller-manager:v1.29.4 "kube-controller-man…" 55 minutes ago Up k8s://kube-system/kube-controller-manager-k8s-master01/kube-controller-manager
6ac3186661e8 registry.aliyuncs.com/google_containers/etcd:3.5.12-0 "etcd --advertise-cl…" 55 minutes ago Up k8s://kube-system/etcd-k8s-master01/etcd
71dcba075ef1 registry.aliyuncs.com/google_containers/pause:3.9 "/pause" 55 minutes ago Up k8s://kube-flannel/kube-flannel-ds-4lvmj
832a79f84588 registry.aliyuncs.com/google_containers/pause:3.9 "/pause" 55 minutes ago Up k8s://kube-system/etcd-k8s-master01
88c5762186d3 registry.aliyuncs.com/google_containers/coredns:v1.11.1 "/coredns -conf /etc…" 54 minutes ago Up k8s://kube-system/coredns-857d9ff4c9-pfv7g/coredns
8da195124e98 registry.aliyuncs.com/google_containers/pause:3.9 "/pause" 54 minutes ago Up k8s://kube-system/coredns-857d9ff4c9-j2mpk
972e77d13a98 registry.aliyuncs.com/google_containers/pause:3.9 "/pause" 55 minutes ago Up k8s://kube-system/kube-scheduler-k8s-master01
c68d547746ad registry.aliyuncs.com/google_containers/kube-proxy:v1.29.4 "/usr/local/bin/kube…" 55 minutes ago Up k8s://kube-system/kube-proxy-pn9nf/kube-proxy
e2aec44d040f registry.aliyuncs.com/google_containers/kube-scheduler:v1.29.4 "kube-scheduler --au…" 55 minutes ago Up k8s://kube-system/kube-scheduler-k8s-master01/kube-scheduler

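6. Install kubeadm, kubelet and kubectl

Reference: official documentation

Install the following packages on every machine:

kubeadm: the command used to bootstrap the cluster.

kubelet: runs on every node in the cluster and starts Pods and containers.

kubectl: the command-line tool for talking to the cluster.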

The repository below is for version 1.29. To use a different version, change the v1.29 version number in both URLs.

cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://pkgs.k8s.io/core:/stable:/v1.29/rpm/
enabled=1
gpgcheck=1
gpgkey=https://pkgs.k8s.io/core:/stable:/v1.29/rpm/repodata/repomd.xml.key
exclude=kubelet kubeadm kubectl cri-tools kubernetes-cni
EOF
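
Because the minor version appears in two places in the repo file, a small template avoids updating one URL and forgetting the other. This is only a sketch built from the URL pattern shown above; `render_k8s_repo` is a hypothetical helper name.

```shell
# render_k8s_repo MINOR
# Print a kubernetes.repo file for the given minor version, e.g. v1.29.
# The same value is substituted into both baseurl and gpgkey.
render_k8s_repo() {
    minor="$1"
    cat <<EOF
[kubernetes]
name=Kubernetes
baseurl=https://pkgs.k8s.io/core:/stable:/${minor}/rpm/
enabled=1
gpgcheck=1
gpgkey=https://pkgs.k8s.io/core:/stable:/${minor}/rpm/repodata/repomd.xml.key
exclude=kubelet kubeadm kubectl cri-tools kubernetes-cni
EOF
}
```

For example, `render_k8s_repo v1.29 | sudo tee /etc/yum.repos.d/kubernetes.repo` reproduces the file above.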

yum clean all && yum makecache

If no version is specified, the latest version in the repository is installed. Run one of the two install commands below.

# Pin a specific version
yum install -y kubelet-1.29.4 kubeadm-1.29.4 kubectl-1.29.4 --disableexcludes=kubernetes

# Or install without pinning a version
yum install -y kubelet kubeadm kubectl --disableexcludes=kubernetes

systemctl enable --now kubelet

# Check the installed version
[root@k8s-master01 ~]# kubeadm version
kubeadm version: &version.Info{Major:"1", Minor:"29", GitVersion:"v1.29.4", GitCommit:"55019c83b0fd51ef4ced8c29eec2c4847f896e74", GitTreeState:"clean", BuildDate:"2024-04-16T15:05:51Z", GoVersion:"go1.21.9", Compiler:"gc", Platform:"linux/amd64"}
# This is version v1.29.4

6.1 Configure crictl

# Run on all nodes
cat <<EOF | tee /etc/crictl.yaml
runtime-endpoint: unix:///run/containerd/containerd.sock
EOF

[root@k8s-master01 ~]# crictl ps -a
CONTAINER IMAGE CREATED STATE NAME ATTEMPT POD ID POD

7. Initialize the cluster

1. Print the default init configuration to a YAML file

kubeadm config print init-defaults > kubeadm-config.yaml

2. Edit the default init configuration file

apiVersion: kubeadm.k8s.io/v1beta3
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: abcdef.0123456789abcdef
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.31.7 # address of the master
  bindPort: 6443
nodeRegistration:
  criSocket: unix:///var/run/containerd/containerd.sock
  imagePullPolicy: IfNotPresent
  name: k8s-master01 # name of the master node
  taints: # taint the node so Pods prefer not to run on the control plane
  - effect: PreferNoSchedule
    key: node-role.kubernetes.io/master
---
apiServer:
  timeoutForControlPlane: 4m0s
apiVersion: kubeadm.k8s.io/v1beta3
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
controllerManager: {}
dns: {}
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: registry.aliyuncs.com/google_containers # mirror inside China, speeds up image pulls
kind: ClusterConfiguration
kubernetesVersion: 1.29.4 # Kubernetes version to install; must match the version installed above
networking:
  podSubnet: 10.244.0.0/16 # Pod network CIDR; this is Flannel's default
  dnsDomain: cluster.local
  serviceSubnet: 10.96.0.0/12
scheduler: {}
---
apiVersion: kubelet.config.k8s.io/v1beta1
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 0s
    enabled: true
  x509:
    clientCAFile: /etc/kubernetes/pki/ca.crt
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 0s
    cacheUnauthorizedTTL: 0s
cgroupDriver: systemd
clusterDNS:
- 10.96.0.10
clusterDomain: cluster.local
containerRuntimeEndpoint: ""
cpuManagerReconcilePeriod: 0s
evictionPressureTransitionPeriod: 0s
fileCheckFrequency: 0s
healthzBindAddress: 127.0.0.1
healthzPort: 10248
httpCheckFrequency: 0s
imageMaximumGCAge: 0s
imageMinimumGCAge: 0s
kind: KubeletConfiguration
logging:
  flushFrequency: 0
  options:
    json:
      infoBufferSize: "0"
  verbosity: 0
memorySwap: {}
nodeStatusReportFrequency: 0s
nodeStatusUpdateFrequency: 0s
rotateCertificates: true
runtimeRequestTimeout: 0s
shutdownGracePeriod: 0s
shutdownGracePeriodCriticalPods: 0s
staticPodPath: /etc/kubernetes/manifests
streamingConnectionIdleTimeout: 0s
syncFrequency: 0s
volumeStatsAggPeriod: 0s
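
The kubernetesVersion field has to agree with the kubeadm package that was installed, and a mismatch is easy to miss after copying a config between clusters. Below is a minimal sketch of a pre-flight comparison; `config_k8s_version` and `same_version` are hypothetical helper names, and the parsing assumes the field sits at the start of a line as in the file above.

```shell
# config_k8s_version FILE
# Print the kubernetesVersion value from a kubeadm config file,
# without a leading "v" and without any trailing comment.
config_k8s_version() {
    sed -n 's/^kubernetesVersion:[[:space:]]*v\{0,1\}\([0-9.]*\).*/\1/p' "$1"
}

# same_version A B
# Succeed if two version strings are equal once a leading "v" is ignored.
same_version() {
    [ "${1#v}" = "${2#v}" ]
}
```

On the master this could be used as `same_version "$(config_k8s_version kubeadm-config.yaml)" "$(kubeadm version -o short)" || echo "version mismatch"`.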

3. List the images that will be pulled

[root@k8s-master01 ~]# kubeadm config images list --config kubeadm-config.yaml

registry.aliyuncs.com/google_containers/kube-apiserver:v1.29.4

registry.aliyuncs.com/google_containers/kube-controller-manager:v1.29.4

registry.aliyuncs.com/google_containers/kube-scheduler:v1.29.4

registry.aliyuncs.com/google_containers/kube-proxy:v1.29.4

registry.aliyuncs.com/google_containers/coredns:v1.11.1

registry.aliyuncs.com/google_containers/pause:3.9

registry.aliyuncs.com/google_containers/etcd:3.5.12-0

4. Pull the images in advance

kubeadm config images pull --config kubeadm-config.yaml

5. Initialize the cluster

kubeadm init --config kubeadm-config.yaml

Note: if initialization fails, see 5.1 below for troubleshooting.

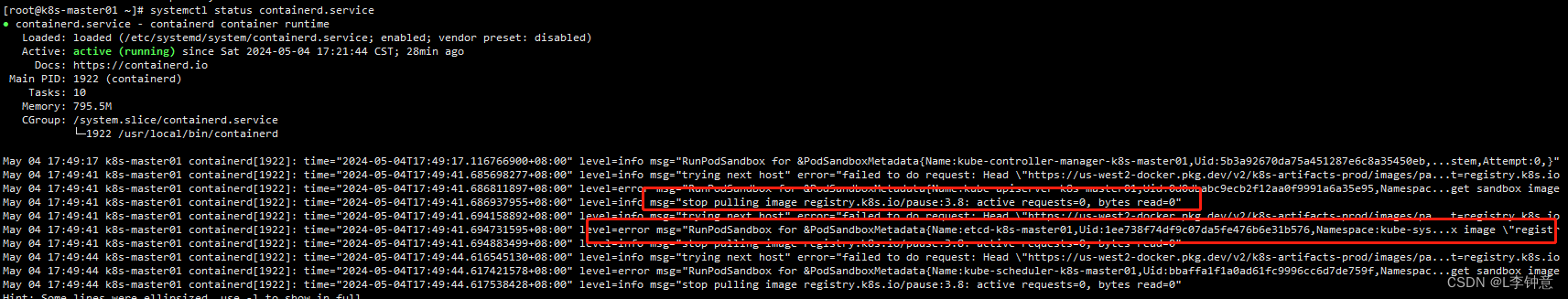

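5.1 An error you will most likely hit

The node keeps requesting registry.k8s.io/pause:3.8, that is, version 3.8 of the pause (sandbox) image from the registry.k8s.io repository. This differs from the version listed from the config file above (pause:3.9), and the repository is not the Aliyun mirror. The pause image is pulled by the container runtime according to its own configuration, so it is not affected by imageRepository: registry.aliyuncs.com/google_containers.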

If your initialization fails for the same reason, fix it as follows:

# Tag the already-pulled image under the name the node is asking for
ctr -n k8s.io i tag registry.aliyuncs.com/google_containers/pause:3.9 registry.k8s.io/pause:3.8

# Then initialize again
kubeadm init --config kubeadm-config.yaml --ignore-preflight-errors=all

This resolves the problem in most cases.

Run the following on the other nodes as well:

crictl pull registry.aliyuncs.com/google_containers/pause:3.9 && ctr -n k8s.io i tag registry.aliyuncs.com/google_containers/pause:3.9 registry.k8s.io/pause:3.8
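
An alternative to retagging, not used by the original steps, is to change the image containerd asks for. To the best of my knowledge the containerd 1.7 default configuration sets it through the `sandbox_image` key in /etc/containerd/config.toml; treat that key location as an assumption and check your own file first. The helper names below are hypothetical.

```shell
# get_sandbox_image FILE
# Print the current sandbox_image value from a containerd config file.
get_sandbox_image() {
    sed -n 's/^[[:space:]]*sandbox_image[[:space:]]*=[[:space:]]*"\(.*\)".*/\1/p' "$1"
}

# set_sandbox_image FILE IMAGE
# Rewrite the sandbox_image line so containerd pulls IMAGE for pod sandboxes.
# Indentation of the original line is preserved.
set_sandbox_image() {
    sed -i "s#^\([[:space:]]*sandbox_image[[:space:]]*=[[:space:]]*\).*#\1\"$2\"#" "$1"
}
```

A possible use is `set_sandbox_image /etc/containerd/config.toml registry.aliyuncs.com/google_containers/pause:3.9` followed by `systemctl restart containerd` on every node.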

6. Configure kubectl to access the cluster

1. After a successful initialization, the following information is printed:

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

  export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.31.7:6443 --token abcdef.0123456789abcdef \
  --discovery-token-ca-cert-hash sha256:3fa473a5e9d923a3fc5ddbecab79bc939c62835cd0f74cee6770b833b124b65c

2. Configure kubectl as instructed

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

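3. Access the cluster

# List the nodes
[root@k8s-master01 ~]# kubectl get nodes
NAME STATUS ROLES AGE VERSION
k8s-master01 NotReady control-plane 1m v1.29.4

Notice that the master node's status is NotReady. This is because no network plugin is installed yet, so the cluster's internal network is not working.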

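8. Install the Flannel network plugin

Kubernetes defines the CNI standard, and many network plugins implement it. This guide uses Flannel, one of the most common; its documentation is in its GitHub repository.

# Download the YAML so it can be edited if needed
wget https://github.com/flannel-io/flannel/releases/latest/download/kube-flannel.yml

Because podSubnet was set to 10.244.0.0/16 earlier, which is the same network Flannel uses by default, the network in the YAML does not need to be changed.

Now install Flannel: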

$ kubectl apply -f kube-flannel.yml

namespace/kube-flannel created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds created

Check again and the node should be Ready. If it is not, check whether the Flannel Pods have started with kubectl get pod -n kube-flannel

[root@k8s-master01 ~]# kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8s-master01 Ready control-plane 15m v1.29.4 192.168.31.7 <none> CentOS

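9. Join the other nodes

Run the command below on the other nodes. It is the join command printed at the end of the successful initialization.

kubeadm join 192.168.31.7:6443 --token abcdef.0123456789abcdef \
  --discovery-token-ca-cert-hash sha256:3fa473a5e9d923a3fc5ddbecab79bc939c62835cd0f74cee6770b833b124b65c

If you have lost the command or it has scrolled out of view, regenerate it with:

kubeadm token create --print-join-command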

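To add another master node, follow the steps below. Note that kubeadm only accepts a control-plane join when the cluster has a stable controlPlaneEndpoint set in its ClusterConfiguration, which the configuration file shown earlier does not set.

# On the new master node
mkdir -p /etc/kubernetes/pki/etcd && mkdir -p ~/.kube/

# On the original master node; 192.168.31.6 is the new master node (the root user is assumed)
scp /etc/kubernetes/admin.conf root@192.168.31.6:/etc/kubernetes/
scp /etc/kubernetes/pki/ca.* root@192.168.31.6:/etc/kubernetes/pki/
scp /etc/kubernetes/pki/sa.* root@192.168.31.6:/etc/kubernetes/pki/
scp /etc/kubernetes/pki/front-proxy-ca.* root@192.168.31.6:/etc/kubernetes/pki/
scp /etc/kubernetes/pki/etcd/ca.* root@192.168.31.6:/etc/kubernetes/pki/etcd/

# Take the join command generated by kubeadm above and run it on the new master.
# The only difference from a worker node is the extra --control-plane flag.
kubeadm join 192.168.31.7:6443 \
  --token 2ofltv.hzljc5fvenqbbcxe \
  --discovery-token-ca-cert-hash sha256:d149574aa882ea03ed53075b3849818dd1200732ad4d98630c12bd9d7cfd5f96 \
  --control-plane

# Remember to substitute your own token and hash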
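
Since the control-plane join differs from the worker join only by one flag, it can be derived from the output of `kubeadm token create --print-join-command` instead of being typed by hand. The following is a small sketch; `control_plane_join` and `join_flag_value` are hypothetical helper names, and the join command is assumed to be a single line of space-separated words.

```shell
# control_plane_join "JOIN COMMAND"
# Print the worker join command with --control-plane appended,
# after trimming any trailing whitespace.
control_plane_join() {
    printf '%s\n' "$1" | sed 's/[[:space:]]*$/ --control-plane/'
}

# join_flag_value FLAG "JOIN COMMAND"
# Print the word that follows FLAG in the join command, e.g. the token.
join_flag_value() {
    printf '%s\n' "$2" |
        awk -v flag="$1" '{ for (i = 1; i < NF; i++) if ($i == flag) print $(i + 1) }'
}
```

For example, `control_plane_join "$(kubeadm token create --print-join-command)"` prints the command to run on the new master.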

After the nodes have joined, check the node list:

[root@k8s-master01 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master01 Ready control-plane 23m v1.29.4

k8s-master02 Ready control-plane 13m v1.29.4

k8s-node01 Ready <none> 14m v1.29.4

k8s-node02 Ready <none> 14m v1.29.4
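
With more nodes, scanning the STATUS column by eye gets tedious. The sketch below filters the node list for anything that is not plainly Ready; `not_ready_nodes` is a hypothetical name, and a status such as Ready,SchedulingDisabled is deliberately reported too.

```shell
# not_ready_nodes
# Read "kubectl get nodes --no-headers" output on stdin and print the
# name of every node whose STATUS column is not exactly "Ready".
not_ready_nodes() {
    awk '$2 != "Ready" { print $1 }'
}
```

It is meant to be used as `kubectl get nodes --no-headers | not_ready_nodes`; empty output means every node is Ready.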

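This completes the installation.

Copyright belongs to the original author, L李钟意. If there is any infringement, please contact us for removal.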